WO2025006042A2 - Method for color correction based on ground truth color chart - Google Patents

Method for color correction based on ground truth color chart

- Publication number

- WO2025006042A2 (PCT/US2024/025609)

- Authority

- WO

- WIPO (PCT)

- Prior art keywords

- color

- biological tissue

- domain

- tissue includes

- cie

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/90—Determination of colour characteristics

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B5/00—Measuring for diagnostic purposes; Identification of persons

- A61B5/0033—Features or image-related aspects of imaging apparatus, e.g. for MRI, optical tomography or impedance tomography apparatus; Arrangements of imaging apparatus in a room

- A61B5/004—Features or image-related aspects of imaging apparatus, e.g. for MRI, optical tomography or impedance tomography apparatus; Arrangements of imaging apparatus in a room adapted for image acquisition of a particular organ or body part

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B5/00—Measuring for diagnostic purposes; Identification of persons

- A61B5/0059—Measuring for diagnostic purposes; Identification of persons using light, e.g. diagnosis by transillumination, diascopy, fluorescence

- A61B5/0077—Devices for viewing the surface of the body, e.g. camera, magnifying lens

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B5/00—Measuring for diagnostic purposes; Identification of persons

- A61B5/145—Measuring characteristics of blood in vivo, e.g. gas concentration or pH-value ; Measuring characteristics of body fluids or tissues, e.g. interstitial fluid or cerebral tissue

- A61B5/1455—Measuring characteristics of blood in vivo, e.g. gas concentration or pH-value ; Measuring characteristics of body fluids or tissues, e.g. interstitial fluid or cerebral tissue using optical sensors, e.g. spectral photometrical oximeters

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/0002—Inspection of images, e.g. flaw detection

- G06T7/0012—Biomedical image inspection

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B2560/00—Constructional details of operational features of apparatus; Accessories for medical measuring apparatus

- A61B2560/02—Operational features

- A61B2560/0242—Operational features adapted to measure environmental factors, e.g. temperature, pollution

- A61B2560/0247—Operational features adapted to measure environmental factors, e.g. temperature, pollution for compensation or correction of the measured physiological value

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61B—DIAGNOSIS; SURGERY; IDENTIFICATION

- A61B5/00—Measuring for diagnostic purposes; Identification of persons

- A61B5/44—Detecting, measuring or recording for evaluating the integumentary system, e.g. skin, hair or nails

- A61B5/441—Skin evaluation, e.g. for skin disorder diagnosis

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/10—Image acquisition modality

- G06T2207/10024—Color image

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20081—Training; Learning

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/30—Subject of image; Context of image processing

- G06T2207/30004—Biomedical image processing

- G06T2207/30041—Eye; Retina; Ophthalmic

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N1/00—Scanning, transmission or reproduction of documents or the like, e.g. facsimile transmission; Details thereof

- H04N1/46—Colour picture communication systems

- H04N1/56—Processing of colour picture signals

- H04N1/60—Colour correction or control

- H04N1/603—Colour correction or control controlled by characteristics of the picture signal generator or the picture reproducer

- H04N1/6033—Colour correction or control controlled by characteristics of the picture signal generator or the picture reproducer using test pattern analysis

-

- H—ELECTRICITY

- H04—ELECTRIC COMMUNICATION TECHNIQUE

- H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION

- H04N9/00—Details of colour television systems

- H04N9/64—Circuits for processing colour signals

Definitions

- the present disclosure generally relates to color correction in bioimaging and in particular to color correction based on a predetermined ground truth color chart, and further determining concentration of a molecule in a sample based on the color corrected bioimaging.

- BACKGROUND This section introduces aspects that may help facilitate a better understanding of the disclosure. Accordingly, these statements are to be read in this light and are not to be understood as admissions about what is or is not prior art.

- a photograph can provide diagnostic information beyond a mere visual representation. The primary challenge is to achieve color accuracy, which refers to the ability to define standardized colors of a sample and accurately detect them across diverse photo acquisition settings.

- Photographs acquired using a digital camera exhibit detrimental variations in colors, depending on device models, light conditions, and file formats.

- Color accuracy in medical imaging, color consistency in machine vision, and color constancy in human perception are distinct yet interrelated aspects of color science and management. The importance of color consistency is well recognized in machine vision and general photography, which focuses on color correction and color reproduction. Color constancy in human perception primarily focuses on achieving identical perceptual response.

- the onboard cameras of mobile devices have been extensively used for medical photography, including telemedicine and mobile health (mHealth) applications.

- color undersampling in the conventional color charts fundamentally reduces the degree of color accuracy.

- Color correction and calibration computations with a limited number of reference colors are prone to random and systematic errors (e.g., Gaussian, impulse, photon, or speckle noise) in a variety of photo acquisitions. Incorporating more reference colors allows us to use advanced machine learning techniques that can outperform the conventional computations.

- regression-based color correction computations (also known as lookup table and interpolation) commonly used in machine vision require manual fine-tuning, depending on photo acquisition settings.

- In digital photography, color appearance is considerably affected by a variety of factors, settings, and conditions.

- FIG.1A provides representative digital photos of biological tissue (e.g., palpebral conjunctiva) captured under four white-light illumination conditions: LEDs with color temperatures of 3000 K, 4300 K, and 5800 K, and fluorescent tube light.

- Color accuracy in diagnostic photography refers to the ability to detect the absolute colors across various photo acquisition settings.

- a digital trichromatic camera three-color image sensor

- RGB red-green-blue

- spectral response functions also known as the spectral sensitivity

- model-specific RGB spectral response functions lead to device-dependent color mapping of several popular low- and high-end smartphones with ANDROID and iOS operating systems including APPLE iPHONE 12 PRO, APPLE iPHONE SE, SAMSUNG GALAXY S21, and SAMSUNG GALAXY A52.

- the RGB spectral responses of smartphone cameras exhibit significant model-specific variations as shown in FIGs. 1C and 1D, resulting in notoriously device-dependent RGB color values. From an illumination standpoint, different types of white light sources have distinct spectral profiles in otherwise grossly white-appearing light conditions as shown in FIGs.1E and 1F.

- Neural network-based color recovery can overcome this intrinsic limitation.

- a neural network architecture allows the incorporation of possible polynomial expansion terms, and the weights of the connections between the nodes across the layers of the network can be determined specifically for a given scene or sample. After scene/sample-specific training, the algorithm takes the RGB values acquired from each pixel as the input and returns the CIE XYZ values under CIE illuminant E as the output.

- a neural network algorithm enables fully automated and high-fidelity color recovery under diverse acquisition scenarios.

- the reference colors in the color chart serve as training data for a particular scene or sample in which the chart is juxtaposed with the sample of interest.

- a neural network-based color recovery algorithm has three advantages. First, given a relatively small amount of training data, the proposed network is concise and consists of only three layers: input, hidden, and output layers. Second, sigmoid activation functions are employed because they are highly suitable for shallow networks, as supported by the universal approximation theorem. Third, the hidden layer mimics all possible terms of the RGB polynomial (root-polynomial) expansions. This hidden layer design completely avoids the manual selection of appropriate expansion terms for a specific scene or sample.

- the neural network algorithm can recover absolute colors under CIE illuminant E with high fidelity in a fully automated manner.

- the absolute CIE XYZ (under CIE illuminant E) and acquired RGB values of the reference colors in the color chart served as a training dataset for the neural network, which was specific to a photo.

- the network architecture mimicked regression-based color corrections that include higher-degree RGB polynomial expansions.

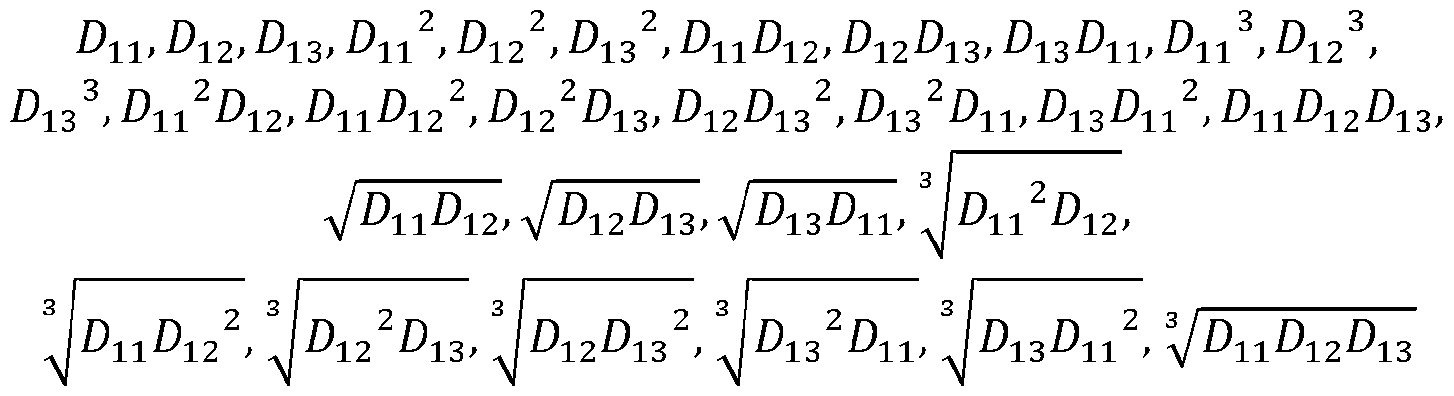

- the hidden layer can capture the nonlinearity between the CIE XYZ and RGB values with 29 nodes, which are equivalent to all possible 29 terms of RGB polynomial expansions.
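The text does not list the 29 terms. One reading that yields exactly 29, offered here as an assumption rather than as the authors' stated construction, is the union of the RGB monomials of degree 1 to 3 (19 terms) and the root-polynomial terms up to degree 3 (13 terms), which share the three linear terms. The sketch below counts them by representing each term as a triple of exponents; the function names are illustrative only.

```python
from fractions import Fraction
from itertools import product

def monomials(max_degree):
    """Exponent triples (i, j, k) for r^i g^j b^k with 1 <= i + j + k <= max_degree."""
    return {
        (Fraction(i), Fraction(j), Fraction(k))
        for i, j, k in product(range(max_degree + 1), repeat=3)
        if 1 <= i + j + k <= max_degree
    }

def root_polynomials(max_degree):
    """Each degree-d monomial taken to the power 1/d, so the exponents sum to 1."""
    terms = set()
    for d in range(1, max_degree + 1):
        for i, j, k in product(range(d + 1), repeat=3):
            if i + j + k == d:
                terms.add((Fraction(i, d), Fraction(j, d), Fraction(k, d)))
    return terms

poly3 = monomials(3)            # r, g, b, r^2, rg, ..., rgb
root3 = root_polynomials(3)     # r, g, b, sqrt(rg), ..., cbrt(rgb)
all_terms = poly3 | root3       # set union removes the shared linear terms

print(len(poly3), len(root3), len(all_terms))
```

Under this reading the two families overlap only in r, g, and b, which is why 19 + 13 - 3 gives the 29 hidden nodes.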

- Batch normalization is applied to the hidden layer for efficient and accurate training.

- a sigmoid activation function is used after batch normalization.

- the sigmoid function exhibits superior performance compared to other activation functions in shallow neural networks, as supported by the universal approximation theorem.

- the network is trained with the root mean square error over a maximum of 500 epochs.

- the Adam optimizer with an initial learning rate of 5 × 10⁻⁴ and a mini-batch size of 30 is used.

- Therefore, there is an unmet need for a novel method that can use a ground truth color chart and correct colors in captured images to true colors.
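The network configuration described in this section (three inputs, a 29-node hidden layer with batch normalization followed by a sigmoid, three outputs, root mean square error) can be sketched as a single forward pass over one mini-batch of 30 samples. This is a minimal NumPy illustration, not the authors' implementation: the weight scale, the random placeholder data, and all function names are assumptions, and the Adam update itself is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_params(n_in=3, n_hidden=29, n_out=3):
    """Random weights; the 0.1 scale is an arbitrary choice for this sketch."""
    return {
        "W1": rng.normal(0.0, 0.1, (n_in, n_hidden)),
        "b1": np.zeros(n_hidden),
        "gamma": np.ones(n_hidden),   # batch-norm scale
        "beta": np.zeros(n_hidden),   # batch-norm shift
        "W2": rng.normal(0.0, 0.1, (n_hidden, n_out)),
        "b2": np.zeros(n_out),
    }

def forward(params, rgb, eps=1e-5):
    """Linear -> batch normalization -> sigmoid -> linear, on one mini-batch."""
    z = rgb @ params["W1"] + params["b1"]
    z_hat = (z - z.mean(axis=0)) / np.sqrt(z.var(axis=0) + eps)
    h = 1.0 / (1.0 + np.exp(-(params["gamma"] * z_hat + params["beta"])))
    return h @ params["W2"] + params["b2"]

def rmse(pred, target):
    """Root mean square error over all samples and channels."""
    return float(np.sqrt(np.mean((pred - target) ** 2)))

batch_rgb = rng.random((30, 3))   # placeholder acquired RGB values
batch_xyz = rng.random((30, 3))   # placeholder reference XYZ values
params = init_params()
pred = forward(params, batch_rgb)
print(pred.shape, rmse(pred, batch_xyz))
```

Batch normalization is applied to the pre-activations here so that the sigmoid follows it, matching the order stated in the text.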

- SUMMARY A method for correcting colors in a captured image is disclosed.

- the method includes receiving a ground truth color chart having a plurality of reference color patches, capturing an image based on a first color domain from a scene or sample of interest juxtaposed with the received ground truth color chart, the captured image thereby including the plurality of reference color patches, obtaining ground truth color values associated with each of the reference color patches in a second color domain, generating a scene or sample-specific dataset using the plurality of color values of the reference color patches in the ground truth color chart in the first color domain and the second color domain as the input and output, respectively, training a neural network of color correction with the scene or sample-specific dataset, and applying the trained neural network to convert the portion of the scene or sample of interest outside the ground truth color chart in the captured image in the first color domain to thereby generate an output image based on the second color domain.

- the first color domain is the same as the second color domain.

- the first color domain is different than the second color domain.

- the ground truth color values based on the second color domain associated with each of the reference color patches are defined under International Commission on Illumination (CIE) illuminant E.

- CIE International Commission on Illumination

- spatial nonuniformity of illumination is corrected in the captured image.

- the scene or sample of interest is from a biological tissue.

- the biological tissue includes palpebral conjunctiva.

- the biological tissue includes skin.

- the biological tissue includes teeth.

- the biological tissue includes tongue.

- the biological tissue includes eye.

- the biological tissue includes bulbar conjunctiva.

- the biological tissue includes urine.

- the biological tissue includes whole blood.

- the biological tissue includes eye tears.

- the biological tissue includes nailbed.

- the biological tissue includes external eye.

- the biological tissue includes external saliva.

- the biological tissue includes external stool.

- the biological tissue includes external body fluid.

- the biological tissue includes biological solutions.

- each of the first or second color domains includes RGB.

- each of the first or second color domains includes CIE XYZ.

- each of the first or second color domains includes CIE YUV.

- each of the first or second color domains includes CIE UVW.

- each of the first or second color domains includes CIE RGB.

- each of the first or second color domains includes CIE LAB.

- each of the first or second color domains includes CIE LUV.

- each of the first or second color domains includes HSL.

- each of the first or second color domains includes HSV.

- each of the first or second color domains includes HCL.

- each of the first or second color domains includes LMS.

- the neural network is a multilayer perceptron.

- the neural network includes one or more hidden layers which employ one of sigmoid activation function or sigmoid linear unit activation function.

- the neural network includes connections defined by weights which weights are initialized by sampling from a predetermined distribution.

- Another method of determining concentration of a molecule in a sample from a captured image includes establishing a color-corrected image (output image) from a captured image from a scene or sample of interest, the output image provides color corrections from a first color domain to a second color domain, the established output image associated with color values of the sample in the second color domain, and using color values of the output image of the sample in the second color domain to determine the concentration of a molecule of interest.

- the above method further includes determining full spectral values from the associated color values of the output image in the second color domain, when a priori spectral data are available.

- the determined concentration of the molecule of interest establishes blood hemoglobin.

- blood hemoglobin concentration is determined based on the calculated full spectral values and a spectrum-to-concentration model as compared to a predetermined population spectral values and associated population blood hemoglobin concentration values using partial least squares regression.

- the above method further includes receiving a color-to-concentration model expressing concentration of the molecule based on colors in the second color domain, and applying the color-to-concentration model to the output image to thereby obtain concentration of the molecule from the output image using least squares regression.

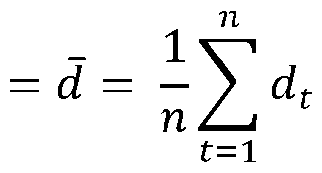

- the first variable of the model represents the first color value of the second color domain

- the second variable represents the second color value of the second color domain

- the third variable represents the third color value of the second color domain

- p represents the number of polynomial expansion terms

- the model coefficients are determined using a priori subject population molecule concentration data vs.
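The color-to-concentration model above is described as a polynomial in the three color values whose coefficients are fitted by least squares. Since the equation image is not reproduced here, the sketch below assumes a degree-2 expansion (nine terms plus an intercept) purely for illustration; the coefficients and data are synthetic, and the function names are hypothetical.

```python
import numpy as np

def expand(xyz):
    """Assumed degree-2 expansion: 1, X, Y, Z, X^2, Y^2, Z^2, XY, YZ, XZ."""
    X, Y, Z = xyz[:, 0], xyz[:, 1], xyz[:, 2]
    return np.column_stack(
        [np.ones(len(xyz)), X, Y, Z, X * X, Y * Y, Z * Z, X * Y, Y * Z, X * Z]
    )

def fit(xyz, concentration):
    """Least-squares estimate of the model coefficients."""
    coef, *_ = np.linalg.lstsq(expand(xyz), concentration, rcond=None)
    return coef

def predict(coef, xyz):
    """Apply the fitted model to color-corrected values."""
    return expand(xyz) @ coef

# Synthetic, noise-free population data generated from made-up coefficients.
rng = np.random.default_rng(0)
train_xyz = rng.random((40, 3))
true_coef = np.array([12.0, -3.0, 1.5, 0.5, 0.8, -0.2, 0.1, 0.4, -0.6, 0.3])
train_conc = expand(train_xyz) @ true_coef

coef = fit(train_xyz, train_conc)
print(np.round(coef, 3))
```

With noise-free synthetic data the fit recovers the generating coefficients; with real population data the residual would reflect measurement noise and model mismatch.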

- the first color domain is the same as the second color domain.

- the first color domain is different than the second color domain.

- the second color domain is defined under International Commission on Illumination (CIE) illuminant E.

- CIE International Commission on Illumination

- the scene or sample of interest is from a biological tissue.

- the biological tissue includes palpebral conjunctiva.

- the biological tissue includes skin.

- the biological tissue includes teeth.

- the biological tissue includes tongue.

- the biological tissue includes eye.

- the biological tissue includes bulbar conjunctiva.

- the biological tissue includes urine.

- the biological tissue includes whole blood.

- the biological tissue includes eye tears.

- the biological tissue includes nailbed.

- the biological tissue includes external eye.

- the biological tissue includes external saliva.

- the biological tissue includes external stool.

- the biological tissue includes external body fluid.

- the biological tissue includes biological solutions.

- each of the first or second color domains includes RGB.

- each of the first or second color domains includes CIE XYZ.

- each of the first or second color domains includes CIE YUV.

- each of the first or second color domains includes CIE UVW.

- each of the first or second color domains includes CIE RGB.

- each of the first or second color domains includes CIE LAB.

- each of the first or second color domains includes CIE LUV.

- each of the first or second color domains includes HSL.

- each of the first or second color domains includes HSV.

- each of the first or second color domains includes HCL.

- each of the first or second color domains includes LMS.

- FIG.1A provides representative digital photos of biological tissue (e.g., palpebral conjunctiva) captured under four white-light illumination conditions: LEDs with color temperatures of 3000 K, 4300 K, and 5800 K, and fluorescent tube light.

- FIG.1B provides model-specific RGB spectral response functions which lead to device-dependent color mapping of several popular low- and high-end smartphones with ANDROID and iOS operating systems including APPLE iPHONE 12 PRO, APPLE iPHONE SE, SAMSUNG GALAXY S21, and SAMSUNG GALAXY A52.

- FIGs.1C and 1D provide device-dependent RGB color values.

- FIGs.1E and 1F provide different types of white light sources which have distinct spectral profiles in otherwise grossly white-appearing light conditions.

- FIG.1G provides diverse spectral characteristics of light sources which fundamentally limit color management (e.g., white balancing), resulting in light condition-specific RGB color values.

- FIGs.1H and 1I provide degree of color compression (FIG.1H: schematic of file compressions) significantly influenced by the image file format (FIG.1I: DNG, JPEG, and MP4).

- FIG.2 is a flowchart that describes the methodology of the present disclosure at a high level.

- FIG.3 is an example of a ground truth color chart.

- FIG.4 is a flowchart depicting alternative processes for determining concentration from a corrected image, according to the present disclosure.

- FIG.5 is an example of a scene of interest with the ground truth color chart of FIG.3 juxtaposed therein.

- FIG.6 provides blood hemoglobin quantification results using the ground truth color chart of FIG.5.

- DETAILED DESCRIPTION

- the term “about” can allow for a degree of variability in a value or range, for example, within 10%, within 5%, or within 1% of a stated value or of a stated limit of a range.

- the term “substantially” can allow for a degree of variability in a value or range, for example, within 90%, within 95%, or within 99% of a stated value or of a stated limit of a range.

- a novel method is disclosed herein that can use a ground truth color chart and correct colors in captured images to true colors. Towards this end, a machine learning methodology is provided that can be trained based on a ground truth color chart juxtaposed near an image of a tissue of interest against spectral and colorimetric information of the ground truth color chart.

- the machine learning methodology may be configured to receive inputs in one of many domains, including RGB, CIE XYZ, CIE RGB, CIE YUV, CIE UVW, CIE LAB, CIE LUV, HSL, HSV, HCL, and LMS; and then provide an output in the same or different domains selected from the above list of domains.
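Several of the listed domains are related by standard closed-form conversions. The sketch below shows two of them using only the Python standard library: RGB to HSV and HSL via `colorsys`, and CIE XYZ to CIE LAB using the standard CIELAB formula. The white point default of (1, 1, 1) is an assumption that corresponds to equal-energy illuminant E with Y normalized to 1; the helper name `xyz_to_lab` is illustrative.

```python
import colorsys

def xyz_to_lab(xyz, white=(1.0, 1.0, 1.0)):
    """CIE XYZ -> CIE LAB relative to the given white point (default: illuminant E, Y = 1)."""
    delta = 6.0 / 29.0

    def f(t):
        # Cube root above the threshold, linear segment below it.
        return t ** (1.0 / 3.0) if t > delta ** 3 else t / (3.0 * delta ** 2) + 4.0 / 29.0

    fx, fy, fz = (f(v / w) for v, w in zip(xyz, white))
    return (116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz))

red = (1.0, 0.0, 0.0)
print(colorsys.rgb_to_hsv(*red))       # hue, saturation, value
print(colorsys.rgb_to_hls(*red))       # hue, lightness, saturation (note the HLS order)
print(xyz_to_lab((1.0, 1.0, 1.0)))     # the white point itself
```

Note that `colorsys` returns HSL components in hue, lightness, saturation order, so the tuple needs reordering if an H, S, L layout is expected downstream.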

- the ground truth color chart received by the method of the present disclosure incorporates a sufficient number of reference color patches, thus allowing the use of advanced machine learning techniques that can outperform conventional color correction computations.

- the machine learning approach of the present disclosure differs from regression-based color correction computations, which are commonly used in machine vision systems and which require manual fine-tuning, depending on photo acquisition settings.

- the present methodology offers a machine learning methodology that includes a training phase based on the ground truth color chart which is juxtaposed next to a scene or tissue of interest after which the trained setup can quickly and effectively correct colors in the remainder of the captured image in the above-described domains.

- the present methodology describes a comprehensive method that can realize high fidelity of color accuracy in diagnostic photography through tissue-specific ground truth color chart and provide true color recovery.

- the methodology described herein is centered around peripheral perfusion and blood hemoglobin, however, the same methodology can be applied to any scene or tissue of interest.

- a color chart of biological tissue is thus provided that is highly sensitive and accurate to tissue and other biological content such as blood hemoglobin.

- the method includes receiving a ground truth color chart having a plurality of reference color patches.

- An example of such a ground truth color chart is shown in FIG.3.

- the method shown in FIG.2 also includes capturing an image based on a first color domain from the scene of interest while having the received ground truth color chart juxtaposed with the scene of interest. Therefore, the captured image thereby includes the plurality of reference color patches.

- An example of such a scene of interest with the ground truth color chart juxtaposed therein is shown in FIG.5.

- the method shown in FIG.2 indicates obtaining ground truth color values based on a second color domain for each of the reference color patches (hereinafter referred to as GTCPi, where i has a value between 1 and n, where n represents the number of reference color patches).

- the ground truth color values may be provided (i.e., a priori known) or determined by applying the ground truth color chart to a spectrometer.

- the obtained ground truth color values of each color patch and the captured color patch are provided to an untrained neural network.
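Pairing each captured patch with its ground truth value amounts to building a small input/output table. A minimal sketch follows, assuming the patch locations in the captured image are already known as bounding boxes; the source does not describe patch detection, and the toy image, the boxes, and the ground truth numbers are placeholders rather than measured data.

```python
import numpy as np

def patch_means(image, boxes):
    """Average the pixels inside each (row0, row1, col0, col1) box; returns an (n, 3) array."""
    return np.array(
        [image[r0:r1, c0:c1].reshape(-1, 3).mean(axis=0) for r0, r1, c0, c1 in boxes]
    )

# Toy 4x8 image holding two flat patches.
image = np.zeros((4, 8, 3))
image[:, :4] = [0.8, 0.2, 0.1]
image[:, 4:] = [0.1, 0.2, 0.9]
boxes = [(0, 4, 0, 4), (0, 4, 4, 8)]           # hypothetical patch locations

captured = patch_means(image, boxes)           # network input (first color domain)
ground_truth = np.array([[0.35, 0.22, 0.03],   # placeholder GTCP values (second color domain)
                         [0.20, 0.12, 0.80]])
dataset = list(zip(captured, ground_truth))    # one (input, target) pair per patch
print(len(dataset), captured)
```

Averaging over each patch is one simple choice that also suppresses pixel-level noise; individual patch pixels could be used as separate samples instead.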

- a training phase of the neural network commences by using the plurality of reference color patches in the captured image and GTCPi.

- the neural network determines output color values for each of the plurality of color patches based on the second color domain (NNCPi) and the associated input to the neural network.

- the training phase involves establishing an error signal associated with a difference between NNCPi and GTCPi. This iterative process is repeated until the established error signal reaches a steady state below a threshold. Once the error signal reaches this state, the neural network is deemed as being trained.

- the method as shown in FIG.2 applies the trained neural network to the captured image to thereby generate an output image based on the second color domain with corrected colors.
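The training and application steps of FIG.2 can be sketched end to end as follows. This is a simplified illustration under stated assumptions, not the disclosed implementation: it uses plain full-batch gradient descent on the mean squared error instead of Adam, omits batch normalization, stops as soon as the RMSE drops below a threshold, and trains on toy targets generated with the standard linear sRGB to XYZ (D65) matrix in place of real chart values. The names `train` and `correct` are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train(rgb, xyz, hidden=29, lr=0.1, max_epochs=500, threshold=1e-3, seed=0):
    """Fit a 3-hidden-3 network; returns the parameters and the RMSE history."""
    rng = np.random.default_rng(seed)
    W1 = rng.normal(0.0, 0.5, (3, hidden)); b1 = np.zeros(hidden)
    W2 = rng.normal(0.0, 0.1, (hidden, 3)); b2 = np.zeros(3)
    history = []
    for _ in range(max_epochs):
        h = sigmoid(rgb @ W1 + b1)
        pred = h @ W2 + b2                      # NNCP_i
        err = pred - xyz                        # error signal against GTCP_i
        history.append(float(np.sqrt(np.mean(err ** 2))))
        if history[-1] < threshold:
            break
        g_pred = 2.0 * err / err.size           # gradient of the mean squared error
        g_z = (g_pred @ W2.T) * h * (1.0 - h)   # backpropagate through the sigmoid
        W2 -= lr * (h.T @ g_pred); b2 -= lr * g_pred.sum(axis=0)
        W1 -= lr * (rgb.T @ g_z); b1 -= lr * g_z.sum(axis=0)
    return (W1, b1, W2, b2), history

def correct(params, pixels):
    """Apply the trained network to an (n, 3) array of pixels."""
    W1, b1, W2, b2 = params
    return sigmoid(pixels @ W1 + b1) @ W2 + b2

rng = np.random.default_rng(1)
M = np.array([[0.4124, 0.3576, 0.1805],
              [0.2126, 0.7152, 0.0722],
              [0.0193, 0.1192, 0.9505]])       # used only to make toy targets
patch_rgb = rng.random((24, 3))                # stand-in for captured patch colors
patch_xyz = patch_rgb @ M.T                    # stand-in for ground truth values

params, history = train(patch_rgb, patch_xyz)
image = rng.random((4, 5, 3))                  # stand-in for the rest of the photo
corrected = correct(params, image.reshape(-1, 3)).reshape(image.shape)
print(len(history), history[0], history[-1])
```

Because the network is trained per photo on the patches visible in that photo, `train` would be called once for every captured image before `correct` is applied to its remaining pixels.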

- the first color domain is the same as the second color domain.

- the first color domain is different from the second color domain.

- the scene of interest is from a biological tissue.

- the tissue includes palpebral conjunctiva.

- the tissue includes skin.

- the tissue includes teeth.

- the tissue includes tongue.

- the tissue includes eye.

- the tissue includes bulbar conjunctiva.

- the tissue includes urine.

- the tissue includes whole blood.

- the tissue includes eye tears.

- the tissue includes nailbed.

- the tissue includes external eye.

- the tissue includes saliva.

- the tissue includes stool.

- the tissue includes body fluid.

- the tissue includes biological solutions.

- the predetermined domain is RGB.

- the first color domain is CIE XYZ.

- the first color domain is CIE RGB.

- the first color domain is CIE YUV.

- the first color domain is CIE UVW.

- the first color domain is CIE LAB.

- the first color domain is CIE LUV.

- the first color domain is HSL.

- the first color domain is HSV.

- the first color domain is HCL.

- the first color domain is LMS.

- the second color domain is CIE XYZ.

- the second color domain is CIE RGB.

- the second color domain is CIE YUV.

- the second color domain is CIE UVW.

- the second color domain is CIE LAB.

- the second color domain is CIE LUV.

- the second color domain is HSL.

- the second color domain is HSV.

- the second color domain is HCL.

- the second color domain is LMS.

- the input layer of the neural network has three nodes of three-color components.

- the output layer of the neural network has three nodes of three-color components.

- the neural network has a hidden layer.

- the hidden layer has a plurality of nodes to incorporate a 2nd order of parameters having at least four terms drawn from the second-order expansion terms of the first, second, and third color values of the first color domain (the individual terms are not legible in this copy).

- the hidden layer employs one of sigmoid activation function and sigmoid linear unit activation function.

- the hidden layer has a plurality of nodes to incorporate a 3rd order of parameters having at least four terms drawn from the third-order expansion terms of the first, second, and third color values of the first color domain (the individual terms are not legible in this copy).

- the hidden layer employs one of sigmoid activation function and sigmoid linear unit activation function.

- the hidden layer has a plurality of nodes to incorporate a 4th order of parameters having at least four terms drawn from the fourth-order expansion terms of the first, second, and third color values of the first color domain (the individual terms are not legible in this copy).

- the hidden layer employs one of sigmoid activation function and sigmoid linear unit activation function.

- the present disclosure has described color correction based on a ground truth color chart. However, once colors are corrected in an image, the present disclosure provides a process by which concentration of a substance can be ascertained from an image based on the flowchart provided in FIG.4. This figure shows the steps for the use of color-corrected color values of a sample of interest (not from the ground truth color chart) for colorimetric diagnostic applications. Accordingly, two different approaches are described for obtaining the biological concentration of the sample using color-corrected color values in the second color domain (e.g.

- CIE XYZ values under CIE Illuminant E serve as a frontend input to achieve diagnostic color accuracy with a single-shot photo.

- Color-corrected CIE XYZ values of the sample allow achieving diagnostic-level color accuracy, which is not affected by ambient light conditions or smartphone/device models. Accordingly, the present disclosure provides steps when the spectral learning (i.e., spectral reconstruction) is possible, such that the concentration of the sample can be computed in two steps: 1) spectral learning and 2) partial least squares regression (or principal component analysis).

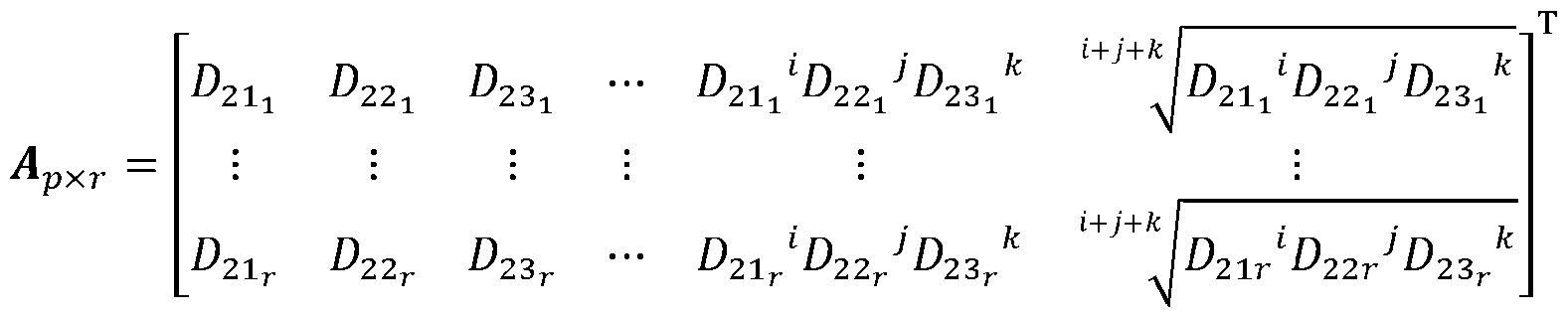

- the full spectral values from the tristimulus color values of the sample are determined in a second color domain (e.g. the color corrected CIE XYZ values of the sample), when relevant spectral data are available.

- the first color value of the second color domain is the color-corrected CIE X value of the sample

- the second color value of the second color domain is the color-corrected CIE Y value of the sample

- the third color value of the second color domain is the color-corrected CIE Z value of the sample.
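The spectral learning step maps three tristimulus values to a full spectrum using a priori spectral data. The source does not specify the algorithm, so the sketch below assumes the simplest option, a linear map fitted by least squares. All curves are made-up Gaussian bumps: `cmf` is a stand-in for color matching functions and is not the CIE tables, and the a priori spectra are assumed to be mixtures of three basis spectra so that an exact linear reconstruction exists.

```python
import numpy as np

wavelengths = np.linspace(400.0, 700.0, 31)    # nm, 10 nm steps

def bump(center, width):
    """Gaussian-shaped curve over the wavelength grid."""
    return np.exp(-0.5 * ((wavelengths - center) / width) ** 2)

# Made-up sensitivity curves standing in for color matching functions.
cmf = np.column_stack([bump(600, 40), bump(550, 40), bump(450, 30)])   # (31, 3)
# Made-up basis spectra for the a priori spectral data.
basis = np.vstack([bump(620, 60), bump(540, 50), bump(460, 40)])       # (3, 31)

rng = np.random.default_rng(0)
train_spectra = rng.random((50, 3)) @ basis    # (50, 31) a priori spectra
train_xyz = train_spectra @ cmf                # (50, 3) matching tristimulus values

# Least-squares map T such that spectrum is approximately xyz @ T.
T, *_ = np.linalg.lstsq(train_xyz, train_spectra, rcond=None)

test_spectrum = np.array([0.2, 0.5, 0.3]) @ basis
reconstructed = (test_spectrum @ cmf) @ T
print(float(np.max(np.abs(reconstructed - test_spectrum))))
```

The reconstruction is exact here only because the toy spectra span three dimensions; real tissue spectra would be recovered approximately, with accuracy depending on how well the a priori data represent the sample.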

- the second color domain can be CIE XYZ. This method allows determining a biological concentration from the determined full spectral data of the sample in the second color domain.

- Hgb represents the concentration of blood hemoglobin

- coefficients $c_0, c_1, c_2, c_3, \ldots, c_N$ are determined using a priori subject population blood hemoglobin concentration data vs. spectral intensity data across a range of wavelengths ($\lambda_1, \lambda_2, \lambda_3, \ldots, \lambda_N$).

- the concentration of the sample can be computed directly by using the CIE XYZ values of the sample.

- CIE XYZ values of the sample e.g. urine strips or finger prick strips

- Color-corrected CIE XYZ values of the sample of interest, juxtaposed with the color chart, can be utilized to return the concentration of the sample.

- a mathematical model can be directly formulated to compute the concentration, using the CIE XYZ values of the sample.

- this method includes determining concentrations of the sample compared with reference color patches in a color chart (e.g.

- the second color domain can be CIE XYZ.

- This method may also include determining a biological concentration from the determined color values of the sample in the second color domain (e.g., the color-corrected CIE XYZ values of the sample).

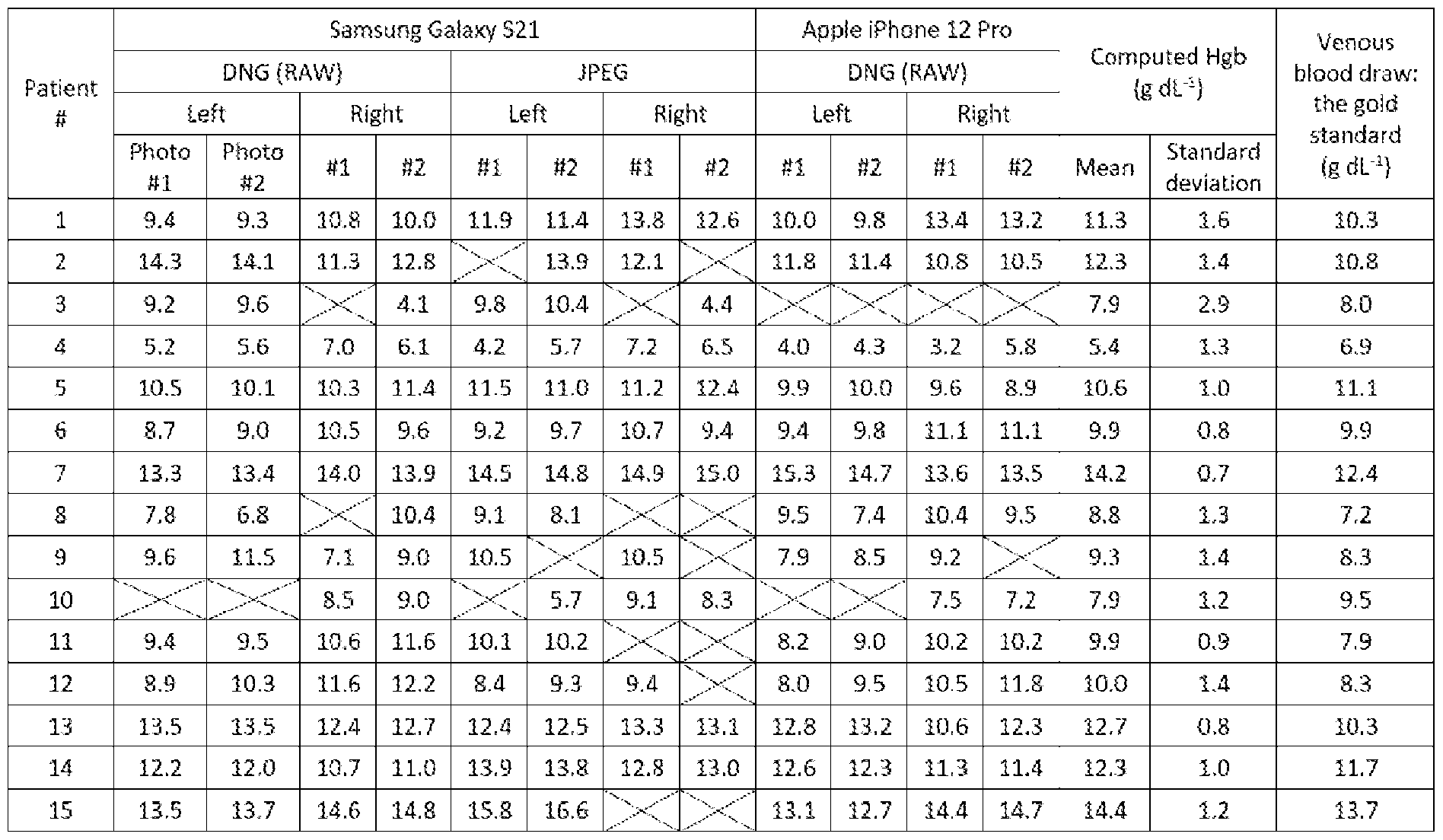

- the clinical data include photos of the palpebral conjunctiva with the ground truth color chart and clinical laboratory blood hemoglobin results of venous blood draws as the gold standard, from fifteen SCD patients aged from 14 to 73 years (FIG.5).

- the clinical photos are acquired under mixed light conditions of sunlight, fluorescent tube, and white-light LED in indoor settings (FIG.5), immediately before or after venous blood draws.

- FIG.6 and TABLE 1 show the blood hemoglobin quantification results using the ground truth color chart.

- the scatter plot and the error bar represent the mean and the standard deviation, respectively.

- CIE XYZ values input

- a mathematical model can be formulated to predict the concentration (output), using the CIE XYZ values (input).

- the World Health Organization (WHO) Haemoglobin Colour Scale (or Tallquist Haemoglobin Scale) is a diagnostic method for estimating blood hemoglobin concentration from a drop of blood. It comprises six reference color patches representing blood hemoglobin levels at 4.7, 6.3, 7.8, 9.4, 10.9, 12.5, 14.1, and 15.6 g dL⁻¹. A drop of blood smeared onto filter paper is compared with the WHO Haemoglobin Colour Scale. Estimation is achieved by matching the blood sample with the color standards through circular apertures placed in the center of each color standard. However, the original clinical utility of this method is limited due to the error-prone subjective nature of color comparisons made with the naked eye.
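The direct color-to-concentration approach described in the excerpts above can be illustrated with a minimal sketch. It assumes a second-order polynomial expansion of the color-corrected CIE XYZ values and an ordinary least squares fit with NumPy. The function names (`expand_xyz`, `fit_color_to_concentration`, `predict_concentration`), the choice of expansion terms, and the synthetic calibration data are illustrative assumptions and are not taken from the disclosure.

```python
import numpy as np

def expand_xyz(xyz):
    """Second-order polynomial expansion of XYZ rows (assumed term set)."""
    xyz = np.atleast_2d(np.asarray(xyz, dtype=float))
    X, Y, Z = xyz[:, 0], xyz[:, 1], xyz[:, 2]
    return np.column_stack([np.ones_like(X), X, Y, Z,
                            X * Y, X * Z, Y * Z, X**2, Y**2, Z**2])

def fit_color_to_concentration(xyz, concentration):
    """Least squares estimate of the model coefficients."""
    design = expand_xyz(xyz)
    target = np.asarray(concentration, dtype=float)
    coeffs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coeffs

def predict_concentration(coeffs, xyz):
    """Apply the fitted model to one or more XYZ triplets."""
    return expand_xyz(xyz) @ coeffs

# Synthetic calibration set (hypothetical values, for illustration only).
rng = np.random.default_rng(0)
train_xyz = rng.uniform(0.1, 0.9, size=(40, 3))
true_coeffs = np.array([2.0, 10.0, -4.0, 3.0, 0.0, 0.0, 0.0, 1.5, 0.0, 0.0])
train_conc = expand_xyz(train_xyz) @ true_coeffs

coeffs = fit_color_to_concentration(train_xyz, train_conc)

# Color-corrected XYZ of a new sample (hypothetical reading).
sample_xyz = np.array([0.4, 0.3, 0.2])
estimate = predict_concentration(coeffs, sample_xyz)[0]
```

In practice the calibration pairs would come from reference measurements (for example, reference color patches with known concentrations) rather than from a synthetic model, and the set of expansion terms would be chosen for the assay at hand.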

Abstract

A method for correcting colors in a captured image, which includes receiving a ground truth color chart having a plurality of reference color patches, capturing an image based on a first color domain from a scene or sample of interest juxtaposed with the ground truth color chart, obtaining ground truth color values associated with each of the reference color patches in a second color domain, generating a scene or sample-specific dataset using the plurality of color values of the reference color patches in the ground truth color chart in the first and second color domains as the input and output, training a neural network of color correction with the dataset, and applying the trained neural network to convert portion of the scene or sample of interest outside the ground truth color chart in the first color domain to thereby generate an output image based on the second color domain.

Description

METHOD FOR COLOR CORRECTION BASED ON GROUND TRUTH COLOR CHART CROSS-REFERENCE TO RELATED APPLICATIONS [0001] The present non-provisional patent application is related to and claims the priority benefit of U.S. Provisional Patent Application Serial 63524318, filed June 30, 2023, and of U.S. Provisional Patent Application Serial 63524352, filed June 30, 2023, the contents of each of which are hereby incorporated by reference in their entirety into the present disclosure. STATEMENT REGARDING GOVERNMENT FUNDING [0002] This invention was made with government support under R01EB033788 awarded by the National Institutes of Health. The government has certain rights in the invention. TECHNICAL FIELD [0003] The present disclosure generally relates to color correction in bioimaging and in particular to color correction based on a predetermined ground truth color chart, and further determining concentration of a molecule in a sample based on the color corrected bioimaging. BACKGROUND [0004] This section introduces aspects that may help facilitate a better understanding of the disclosure. Accordingly, these statements are to be read in this light and are not to be understood as admissions about what is or is not prior art. [0005] A photograph can provide diagnostic information beyond a mere visual representation. The primary challenge is to achieve color accuracy, which is referred to as the ability to define standardized colors of a sample and accurately detect them across diverse photo acquisition

settings. Photographs acquired using a digital camera exhibit detrimental variations in colors, depending on device models, light conditions, and file formats. Color accuracy in medical imaging, color consistency in machine vision, and color constancy in human perception are distinct yet interrelated aspects of color science and management. The importance of color consistency is well recognized in machine vision and general photography, which focuses on color correction and color reproduction. Color constancy in human perception primarily focuses on achieving identical perceptual response. However, few studies have sought to realize color accuracy in medical imaging. Recently, the onboard cameras of mobile devices have been extensively used for medical photography, including telemedicine and mobile health (mHealth) applications. Healthcare professionals now consider the acquisition of photos using mobile devices (i.e., smartphones and tablets) to be a mandatory part of healthcare practice because diagnostic photography plays a key role in a variety of digital health applications. As a result, there is an urgent need for implementing color accuracy in diagnostic photography. [0006] Reproducing original colors is challenging in photography, printing, and electronic displays because of intrinsic color distortions during image acquisition, processing, and output. The existing color calibration or correction methods using the conventional color charts are inadequate to achieve high fidelity of color accuracy for diagnostic purposes. First, generating a universal color gamut that can exactly reproduce all colors is challenging. Optimizing a unique color gamut is necessary, but identifying relevant yet subtle color derivatives is not straightforward without spectroscopic analyses, which enable augmentation of homogeneous data for ideal gamut determination. Second, color undersampling in the conventional color charts fundamentally reduces the degree of color accuracy. 
Color correction and calibration computations with a limited number of reference colors are prone to random and systematic errors (e.g., Gaussian, impulse, photon, or speckle noise) in a variety of photo acquisitions. Incorporating more reference colors allows us to use advanced machine learning techniques that can outperform the conventional computations. Third, regression-based color correction computations (also known as lookup table and interpolation) commonly used in machine vision require manual fine-tuning, depending on photo acquisition settings.

[0007] In digital photography, color appearance is considerably affected by a variety of factors, settings, and conditions. Reference is made to FIG.1A, which provides representative digital photos of biological tissue (e.g., palpebral conjunctiva) captured under four different white-light illumination conditions, including LEDs with color temperatures of 3000 K, 4300 K, and 5800 K as well as fluorescent tube light. Color accuracy in diagnostic photography refers to the ability to detect the absolute colors across various photo acquisition settings. From a device standpoint, a digital trichromatic camera (three-color image sensor) has unique red-green-blue (RGB) spectral response functions (also known as the spectral sensitivity) as a function of the wavelength of light λ. Referring to FIG.1B, model-specific RGB spectral response functions lead to device-dependent color mapping of several popular low- and high-end smartphones with ANDROID and iOS operating systems including APPLE iPHONE 12 PRO, APPLE iPHONE SE, SAMSUNG GALAXY S21, and SAMSUNG GALAXY A52. Notably, the RGB spectral responses of smartphone cameras exhibit significant model-specific variations as shown in FIGs. 1C and 1D, resulting in notoriously device-dependent RGB color values. From an illumination standpoint, different types of white light sources have distinct spectral profiles in otherwise grossly white-appearing light conditions as shown in FIGs.1E and 1F. Diverse spectral characteristics of light sources fundamentally limit color management (e.g., white balancing), resulting in light condition-specific RGB color values as shown in FIG.1G. From file format and bit depth (color depth) standpoints, the degree of color compression is significantly influenced by the image file format as shown in FIGs.1H and 1I. JPEG, the most commonly used file format (8-bit depth), uses lossy compression to reduce file size. RAW (also known as DNG) minimizes data compression and rendering. 
Recent high-end smartphones provide Pro Mode or ProRAW for 10-bit depth in each RGB channel. In telemedicine settings (FIG.1H), live, two-way video conferencing between a healthcare provider and patient introduces additional diversity in file formats (e.g., MP4). Overall, diverse photo acquisition scenarios pose a fundamental challenge for color accuracy in diagnostic photography. [0008] Color correction is critical when the images are being used for diagnostic purposes. For example, suppose images are acquired from a biological tissue and those images are being used

to make diagnostic decisions. Without color correction, wrong diagnostic decisions may be made that are detrimental to the outcome of the diagnostic process. [0009] The primary drawback of regression-based color corrections (lookup table and interpolation) is their limited adaptability across diverse photo acquisition settings, resulting in a lack of generalizability. To enhance color correction performance beyond the simple 3×3 linear color correction, the higher-degree RGB polynomial (or root-polynomial) expansion terms are frequently incorporated. However, the impact of polynomial expansions on color correction is scene/sample-specific, and their effectiveness is not consistently guaranteed. Consequently, an appropriate set of polynomial expansions must be selected manually and empirically for each photo acquisition. [0010] Neural network-based color recovery can overcome this intrinsic limitation. A neural network architecture allows the incorporation of possible polynomial expansion terms, and the weights of the connections between the nodes across the layers of the network can be determined specifically for a given scene or sample. After scene/sample-specific training, the algorithm takes the RGB values acquired from each pixel as the input and returns the CIE XYZ values under CIE illuminant E as the output. [0011] Specifically, a neural network algorithm enables fully automated and high-fidelity color recovery under diverse acquisition scenarios. The reference colors in the color chart serve as training data for a particular scene or sample in which the chart is juxtaposed with the sample of interest. Importantly, a neural network-based color recovery algorithm has three advantages. First, given a relatively small amount of training data, the proposed network is concise and consists of only three layers: input, hidden, and output layers. 
Second, sigmoid activation functions are employed because they are well suited to shallow networks, as supported by the universal approximation theorem. Third, the hidden layer mimics all possible terms of the RGB polynomial (root-polynomial) expansions. This hidden layer design completely avoids the manual selection of appropriate expansion terms for a specific scene or sample. [0012] The neural network algorithm can recover absolute colors under CIE illuminant E with high fidelity in a fully automated manner. The absolute CIE XYZ (under CIE illuminant E) and

acquired RGB values of the reference colors in the color chart served as a training dataset for the neural network, which was specific to a photo. [0013] Importantly, the network architecture mimicked regression-based color corrections that include higher-degree RGB polynomial expansions. For example, the hidden layer can capture the nonlinearity between the CIE XYZ and RGB values with 29 nodes, which are equivalent to all possible 29 terms of RGB polynomial expansions. The input layer has three nodes corresponding to the RGB values acquired using a digital camera (x_(3×1)=[R,G,B]^T), whereas the output layer with three nodes returns the recovered CIE XYZ values (y_(3×1)=[X,Y,Z]^T) under a specific photo acquisition setting. Batch normalization is applied to the hidden layer for efficient and accurate training. A sigmoid activation function is used after batch normalization. The sigmoid function exhibits superior performance compared to other activation functions in shallow neural networks, as supported by the universal approximation theorem. The network is trained with the root mean square error over a maximum of 500 epochs. The Adam optimizer with an initial learning rate of 5×10⁻⁴ and a mini-batch size of 30 is used. [0014] Therefore, there is an unmet need for a novel method that can use a ground truth color chart and correct colors in captured images to true colors. SUMMARY [0015] A method for correcting colors in a captured image is disclosed. 
The method includes receiving a ground truth color chart having a plurality of reference color patches, capturing an image based on a first color domain from a scene or sample of interest juxtaposed with the received ground truth color chart, the captured image thereby including the plurality of reference color patches, obtaining ground truth color values associated with each of the reference color patches in a second color domain, generating a scene or sample-specific dataset using the plurality of color values of the reference color patches in the ground truth color chart in the first color domain and the second color domain as the input and output, respectively, training a neural network of color correction with the scene or sample-specific dataset, and applying the trained neural network to convert portion of the scene or sample of interest outside the ground truth

color chart in the captured image in the first color domain to thereby generate an output image based on the second color domain. [0016] In the above method, the first color domain is the same as the second color domain. [0017] In the above method, the first color domain is different than the second color domain. [0018] In the above method, the ground truth color values based on the second color domain associated with each of the reference color patches are defined under International Commission on Illumination (CIE) illuminant E. [0019] In the above method, spatial nonuniformity of illumination is corrected in the captured image. [0020] In the above method, the scene or sample of interest is from a biological tissue. [0021] In the above method, the biological tissue includes palpebral conjunctiva. [0022] In the above method, the biological tissue includes skin. [0023] In the above method, the biological tissue includes teeth. [0024] In the above method, the biological tissue includes tongue. [0025] In the above method, the biological tissue includes eye. [0026] In the above method, the biological tissue includes bulbar conjunctiva. [0027] In the above method, the biological tissue includes urine. [0028] In the above method, the biological tissue includes whole blood. [0029] In the above method, the biological tissue includes eye tears. [0030] In the above method, the biological tissue includes nailbed. [0031] In the above method, the biological tissue includes external eye. [0032] In the above method, the biological tissue includes external saliva. [0033] In the above method, the biological tissue includes external stool. [0034] In the above method, the biological tissue includes external body fluid. [0035] In the above method, the biological tissue includes biological solutions. [0036] In the above method, each of the first or second color domains includes RGB. [0037] In the above method, each of the first or second color domains includes CIE XYZ. 
[0038] In the above method, each of the first or second color domains includes CIE YUV. [0039] In the above method, each of the first or second color domains includes CIE UVW.

[0040] In the above method, each of the first or second color domains includes CIE RGB. [0041] In the above method, each of the first or second color domains includes CIE LAB. [0042] In the above method, each of the first or second color domains includes CIE LUV. [0043] In the above method, each of the first or second color domains includes HSL. [0044] In the above method, each of the first or second color domains includes HSV. [0045] In the above method, each of the first or second color domains includes HCL. [0046] In the above method, each of the first or second color domains includes LMS. [0047] In the above method, the neural network is a multilayer perceptron. [0048] In the above method, the neural network includes one or more hidden layers which employ one of sigmoid activation function or sigmoid linear unit activation function. [0049] In the above method, the neural network includes connections defined by weights which weights are initialized by sampling from a predetermined distribution. [0050] Another method of determining concentration of a molecule in a sample from a captured image is disclosed. The method includes establishing a color-corrected image (output image) from a captured image from a scene or sample of interest, the output image provides color corrections from a first color domain to a second color domain, the established output image associated with color values of the sample in the second color domain, and using color values of the output image of the sample in the second color domain to determine the concentration of a molecule of interest. [0051] The above method further includes determining full spectral values from the associated color values of the output image in the second color domain, when a priori spectral data are available. 
[0052] In the above method, the full spectral values are calculated from

$$S_{N\times r} = T_{N\times p}\,\hat{X}_{p\times r},$$

wherein N represents number of wavelengths of spectral values based on a predetermined resolution, r represents number of a priori spectral data, $T_{N\times p}$ represents a transformation matrix to transform the determined color values of the output image in the second color domain to full spectral values, where p represents number of polynomial expansion terms, and $\hat{X}_{p\times r}$ represents an expanded matrix of the determined color values of the output image in the second color domain represented by:

$$\hat{X}_{p\times r} = \begin{bmatrix} X_1 & X_2 & \cdots & X_r \\ Y_1 & Y_2 & \cdots & Y_r \\ Z_1 & Z_2 & \cdots & Z_r \\ \vdots & \vdots & & \vdots \end{bmatrix}$$

wherein X represents first color value of the second color domain, Y represents second color value of the second color domain, and Z represents third color value of the second color domain (the subscript indexes the r a priori spectral data, and the remaining rows hold the higher-order polynomial expansion terms). [0053] In the above method, the determined concentration of the molecule of interest establishes blood hemoglobin. [0054] In the above method, blood hemoglobin concentration is determined based on the calculated full spectral values and a spectrum-to-concentration model as compared to a predetermined population spectral values and associated population blood hemoglobin concentration values using partial least squares regression. [0055] In the above method, the spectrum-to-concentration model is:

$$\mathrm{Hgb} = c_0 + c_1 I(\lambda_1) + c_2 I(\lambda_2) + c_3 I(\lambda_3) + \cdots + c_N I(\lambda_N)$$

and coefficients $c_0, c_1, c_2, c_3, \ldots, c_N$ are determined using a priori subject population blood hemoglobin concentration data vs. spectral intensity data across a range of wavelengths ($\lambda_1, \lambda_2, \lambda_3, \ldots, \lambda_N$). [0056] The above method further includes receiving a color-to-concentration model expressing concentration of the molecule based on colors in the second color domain, and applying the color-to-concentration model to the output image to thereby obtain concentration of the molecule from the output image using least squares regression.
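The two-step route (spectral learning, then a spectrum-to-concentration model) can be sketched as follows. This is a minimal illustration under stated assumptions: the transformation matrix is estimated with a Moore-Penrose pseudo-inverse, which is one common solver and is not confirmed by the disclosure; the expansion terms in `expand_colors`, the function names, the synthetic spectra, and the model coefficients `c0` and `c` are all hypothetical. The disclosure determines the coefficients by partial least squares regression on population data; here they are simply taken as given.

```python
import numpy as np

def expand_colors(xyz):
    """Polynomial terms of XYZ stacked as rows, shape (p, r). Term set is assumed."""
    xyz = np.atleast_2d(np.asarray(xyz, dtype=float))
    X, Y, Z = xyz.T
    return np.vstack([X, Y, Z, X * Y, X * Z, Y * Z, X**2, Y**2, Z**2])

def learn_transformation(spectra, xyz):
    """Estimate the (N x p) transformation from a priori spectra (N x r)."""
    return spectra @ np.linalg.pinv(expand_colors(xyz))

def reconstruct_spectrum(T, xyz):
    """Full spectral values for one or more color-corrected XYZ triplets."""
    return T @ expand_colors(xyz)

def hemoglobin_from_spectrum(spectrum, c0, c):
    """Linear spectrum-to-concentration model: c0 + sum_i c_i * I(lambda_i)."""
    return float(c0 + c @ spectrum)

# Synthetic a priori data (hypothetical): N = 31 wavelengths, r = 50 samples.
rng = np.random.default_rng(1)
n_wavelengths, n_samples = 31, 50
train_xyz = rng.uniform(0.1, 0.9, size=(n_samples, 3))
T_true = rng.normal(size=(n_wavelengths, 9))
train_spectra = T_true @ expand_colors(train_xyz)

T_est = learn_transformation(train_spectra, train_xyz)

# New sample: color-corrected XYZ -> reconstructed spectrum -> concentration.
sample_xyz = np.array([0.4, 0.3, 0.2])
spectrum = reconstruct_spectrum(T_est, sample_xyz)[:, 0]
c0, c = 1.0, rng.normal(scale=0.1, size=n_wavelengths)  # placeholder coefficients
hgb = hemoglobin_from_spectrum(spectrum, c0, c)
```

With noiseless synthetic data and more samples than expansion terms, the estimated matrix matches the generating one; with measured spectra the fit would only be approximate.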

[0057] In the above method, the color-concentration model is:

$$\text{Concentration} = c_0 + c_1 X + c_2 Y + c_3 Z + \cdots + c_p\,X^{l} Y^{m} Z^{n}$$

wherein the exponents l, m, and n are set by the degree of expansion, X represents the first color value of the second color domain, Y represents the second color value of the second color domain, Z represents the third color value of the second color domain, p represents number of polynomial expansion terms, and coefficients $c_0, c_1, c_2, c_3, \ldots, c_p$ are determined using a priori subject population molecule concentration data vs. color values in the second color domain. [0058] In the above method, the first color domain is the same as the second color domain. [0059] In the above method, the first color domain is different than the second color domain. [0060] In the above method, the second color domain is defined under International Commission on Illumination (CIE) illuminant E. [0061] In the above method, the scene or sample of interest is from a biological tissue. [0062] In the above method, the biological tissue includes palpebral conjunctiva. [0063] In the above method, the biological tissue includes skin. [0064] In the above method, the biological tissue includes teeth. [0065] In the above method, the biological tissue includes tongue. [0066] In the above method, the biological tissue includes eye. [0067] In the above method, the biological tissue includes bulbar conjunctiva. [0068] In the above method, the biological tissue includes urine. [0069] In the above method, the biological tissue includes whole blood. [0070] In the above method, the biological tissue includes eye tears. [0071] In the above method, the biological tissue includes nailbed. [0072] In the above method, the biological tissue includes external eye. [0073] In the above method, the biological tissue includes external saliva. [0074] In the above method, the biological tissue includes external stool.

[0075] In the above method, the biological tissue includes external body fluid. [0076] In the above method, the biological tissue includes biological solutions. [0077] In the above method, each of the first or second color domains includes RGB. [0078] In the above method, each of the first or second color domains includes CIE XYZ. [0079] In the above method, each of the first or second color domains includes CIE YUV. [0080] In the above method, each of the first or second color domains includes CIE UVW. [0081] In the above method, each of the first or second color domains includes CIE RGB. [0082] In the above method, each of the first or second color domains includes CIE LAB. [0083] In the above method, each of the first or second color domains includes CIE LUV. [0084] In the above method, each of the first or second color domains includes HSL. [0085] In the above method, each of the first or second color domains includes HSV. [0086] In the above method, each of the first or second color domains includes HCL. [0087] In the above method, each of the first or second color domains includes LMS. BRIEF DESCRIPTION OF FIGURES [0088] FIG.1A provides representative digital photos of biological tissue (e.g., palpebral conjunctiva) captured under four different white-light illumination conditions, including LEDs with color temperatures of 3000 K, 4300 K, and 5800 K as well as fluorescent tube light. [0089] FIG.1B provides model-specific RGB spectral response functions which lead to device-dependent color mapping of several popular low- and high-end smartphones with ANDROID and iOS operating systems including APPLE iPHONE 12 PRO, APPLE iPHONE SE, SAMSUNG GALAXY S21, and SAMSUNG GALAXY A52. [0090] FIGs.1C and 1D provide device-dependent RGB color values.

[0091] FIGs.1E and 1F provide different types of white light sources which have distinct spectral profiles in otherwise grossly white-appearing light conditions. [0092] FIG.1G provides diverse spectral characteristics of light sources which fundamentally limit color management (e.g., white balancing), resulting in light condition-specific RGB color values. [0093] FIGs.1H and 1I provide degree of color compression (FIG.1H: schematic of file compressions) significantly influenced by the image file format (FIG.1I: DNG, JPEG, and MP4). [0094] FIG.2 is a flowchart that describes the methodology of the present disclosure at a high level. [0095] FIG.3 is an example of a ground truth color chart. [0096] FIG.4 is a flowchart depicting alternative processes for determining concentration from a corrected image, according to the present disclosure. [0097] FIG.5 is an example of a scene of interest with the ground truth color chart of FIG.3 juxtaposed therein. [0098] FIG.6 provides blood hemoglobin quantification results using the ground truth color chart of FIG.5. DETAILED DESCRIPTION [0099] For the purposes of promoting an understanding of the principles in the present disclosure, reference will now be made to the embodiments illustrated in the drawings, and specific language will be used to describe the same. It will nevertheless be understood that no limitation of the scope of this disclosure is thereby intended. [00100] In the present disclosure, the term “about” can allow for a degree of variability in a value or range, for example, within 10%, within 5%, or within 1% of a stated value or of a stated limit of a range.

[00101] In the present disclosure, the term “substantially” can allow for a degree of variability in a value or range, for example, within 90%, within 95%, or within 99% of a stated value or of a stated limit of a range. [00102] A novel method is disclosed herein that can use a ground truth color chart and correct colors in captured images to true colors. Towards this end, a machine learning methodology is provided that can be trained based on a ground truth color chart juxtaposed near an image of a tissue of interest against spectral and colorimetric information of the ground truth color chart. The machine learning methodology may be configured to receive inputs in one of many domains, including RGB, CIE XYZ, CIE RGB, CIE YUV, CIE UVW, CIE LAB, CIE LUV, HSL, HSV, HCL, and LMS; and then provide an output in the same or different domains selected from the above list of domains. [00103] The ground truth color chart received by the method of the present disclosure incorporates a sufficient number of reference color patches thus allowing to use advanced machine learning techniques that can outperform conventional color correction computations. The machine learning approach of the present disclosure operates unlike a regression-based color correction computation methodology in which such methods are commonly used in machine vision systems and which require manual fine-tuning, depending on photo acquisition settings. In contrast, the present methodology offers a machine learning methodology that includes a training phase based on the ground truth color chart which is juxtaposed next to a scene or tissue of interest after which the trained setup can quickly and effectively correct colors in the remainder of the captured image in the above-described domains. 
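The scene-specific training phase can be sketched with a small NumPy multilayer perceptron. The sketch follows the figures given in the Background (three RGB inputs, a 29-node sigmoid hidden layer, three CIE XYZ outputs, Adam with a learning rate of 5×10⁻⁴, mini-batches of 30, up to 500 epochs), but several parts are assumptions: batch normalization is omitted for brevity, the loss gradient is taken on the mean squared error while the root mean square error is reported, the weight initialization spread is arbitrary, and the chart data are synthetic (120 hypothetical patches mapped through a stand-in gamma and matrix that do not represent CIE illuminant E ground truth).

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def init_params(rng, n_in=3, n_hidden=29, n_out=3):
    # Weights sampled from a normal distribution (the spread 0.5 is an assumption).
    return {"W1": rng.normal(0.0, 0.5, (n_in, n_hidden)), "b1": np.zeros(n_hidden),
            "W2": rng.normal(0.0, 0.5, (n_hidden, n_out)), "b2": np.zeros(n_out)}

def forward(params, rgb):
    hidden = sigmoid(rgb @ params["W1"] + params["b1"])
    return hidden @ params["W2"] + params["b2"], hidden

def rmse(params, rgb, xyz):
    pred, _ = forward(params, rgb)
    return float(np.sqrt(np.mean((pred - xyz) ** 2)))

def gradients(params, rgb, xyz):
    # Backpropagation of the mean squared error through both layers.
    pred, hidden = forward(params, rgb)
    d_out = 2.0 * (pred - xyz) / pred.size
    d_hidden = (d_out @ params["W2"].T) * hidden * (1.0 - hidden)
    return {"W1": rgb.T @ d_hidden, "b1": d_hidden.sum(axis=0),
            "W2": hidden.T @ d_out, "b2": d_out.sum(axis=0)}

def train(params, rgb, xyz, epochs=500, batch_size=30, lr=5e-4, seed=0):
    # Hand-written Adam update with the usual default moment decay rates.
    rng = np.random.default_rng(seed)
    m = {k: np.zeros_like(p) for k, p in params.items()}
    v = {k: np.zeros_like(p) for k, p in params.items()}
    beta1, beta2, eps, step = 0.9, 0.999, 1e-8, 0
    for _ in range(epochs):
        order = rng.permutation(len(rgb))
        for start in range(0, len(rgb), batch_size):
            idx = order[start:start + batch_size]
            grads = gradients(params, rgb[idx], xyz[idx])
            step += 1
            for k in params:
                m[k] = beta1 * m[k] + (1 - beta1) * grads[k]
                v[k] = beta2 * v[k] + (1 - beta2) * grads[k] ** 2
                m_hat = m[k] / (1 - beta1 ** step)
                v_hat = v[k] / (1 - beta2 ** step)
                params[k] -= lr * m_hat / (np.sqrt(v_hat) + eps)
    return params

# Synthetic chart: captured RGB (first domain) and reference XYZ (second domain).
rng = np.random.default_rng(0)
chart_rgb = rng.uniform(0.0, 1.0, size=(120, 3))
stand_in_matrix = np.array([[0.4124, 0.3576, 0.1805],
                            [0.2126, 0.7152, 0.0722],
                            [0.0193, 0.1192, 0.9505]])
chart_xyz = (chart_rgb ** 2.2) @ stand_in_matrix.T

params = init_params(rng)
initial_rmse = rmse(params, chart_rgb, chart_xyz)
params = train(params, chart_rgb, chart_xyz)
final_rmse = rmse(params, chart_rgb, chart_xyz)

# Apply the trained network to pixels outside the chart (hypothetical pixels).
sample_pixels = rng.uniform(0.0, 1.0, size=(4, 3))
corrected, _ = forward(params, sample_pixels)
```

Because the network is retrained for every photo, the training set is only as large as the number of chart patches, which is why a shallow network and a small learning rate are plausible choices here.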
[00104] Thus, the present methodology describes a comprehensive method that can realize high fidelity of color accuracy in diagnostic photography through tissue-specific ground truth color chart and provide true color recovery. The methodology described herein is centered around peripheral perfusion and blood hemoglobin, however, the same methodology can be applied to any scene or tissue of interest. A color chart of biological tissue is thus provided that is highly sensitive and accurate to tissue and other biological content such as blood hemoglobin. After receiving the physical and spectral characterization of the ground truth color chart, the machine learning algorithm for gamut-learned color recovery is discussed using deep learning,

which enables high fidelity of color accuracy in diverse settings. Using a series of diagnostic color scales, tissue phantoms, and whole blood-mimicking samples, we extensively compare the proposed method with the conventional regression-based color correction approaches. We further demonstrate the feasibility of an mHealth application that can noninvasively predict blood hemoglobin levels from single-shot photographs of easily accessible peripheral tissue across various photo acquisition settings. Referring to FIG.2, a flowchart is presented that describes the methodology of the present disclosure at a high level. The flowchart in FIG.2, thus represents a method for correcting colors in a captured image from a scene of interest. The method includes receiving a ground truth color chart having a plurality of reference color patches. An example of such a ground truth color chart is shown in FIG.3. The method shown in FIG.2 also includes capturing an image based on a first color domain from the scene of interest while having the received ground truth color chart juxtaposed with the scene of interest. Therefore, the captured image thereby includes the plurality of reference color patches. An example of such a scene of interest with the ground truth color chart juxtaposed therein is shown in FIG.5. Next, the method shown in FIG.2 indicates obtaining ground truth color values based on a second color domain for each of the reference color patches (hereinafter referred to as GTCPi, where i has a value between 1 to n, where n represents the number of reference color patches). The ground truth color values may be provided (i.e., a priori known) or determined by applying the ground truth color chart to a spectrometer. The obtained ground truth color values of each color patch and the captured color patch are provided to an untrained neural network. 
A training phase of the neural network commences by using the plurality of reference color patches in the captured image and GTCPi. The training of the neural network includes running an epoch for each of the color patches (i.e., i = 1, 2, 3, …, n). For each iteration, the training phase includes inputting each of the captured plurality of reference color patches (first color domain) to the neural network at its input layer. The neural network then determines output color values for each of the plurality of color patches based on the second color domain (NNCPi) and the associated input to the neural network. Next, the training phase involves establishing an error signal associated with a difference between NNCPi and GTCPi. This iterative process is repeated until the established error signal reaches a steady state below a threshold. Once the error signal

reaches this state, the neural network is deemed as being trained. Next, the method as shown in FIG.2 applies the trained neural network to the captured image to thereby generate an output image based on the second color domain with corrected colors. [00105] In FIG.2, according to one embodiment, the first color domain, is the same as the second color domain. [00106] In FIG.2, according to one embodiment, the first color domain, according to one embodiment, is different as the second color domain. [00107] In FIG.2, according to one embodiment, the scene of interest is from a biological tissue. [00108] In FIG.2, according to one embodiment, the tissue includes palpebral conjunctiva. [00109] In FIG.2, according to one embodiment, the tissue includes skin. [00110] In FIG.2, according to one embodiment, the tissue includes teeth. [00111] In FIG.2, according to one embodiment, the tissue includes tongue. [00112] In FIG.2, according to one embodiment, the tissue includes eye. [00113] In FIG.2, according to one embodiment, the tissue includes bulbar conjunctiva. [00114] In FIG.2, according to one embodiment, the tissue includes urine. [00115] In FIG.2, according to one embodiment, the tissue includes whole blood. [00116] In FIG.2, according to one embodiment, the tissue includes eye tears. [00117] In FIG.2, according to one embodiment, the tissue includes nailbed [00118] In FIG.2, according to one embodiment, the tissue includes external eye. [00119] In FIG.2, according to one embodiment, the tissue includes saliva. [00120] In FIG.2, according to one embodiment, the tissue includes stool. [00121] In FIG.2, according to one embodiment, the tissue includes body fluid. [00122] In FIG.2, according to one embodiment, the tissue includes biological solutions. [00123] In FIG.2, according to one embodiment, the predetermined domain is RGB. [00124] In FIG.2, according to one embodiment, the first color domain is CIE XYZ. 
[00125] In FIG.2, according to one embodiment, the first color domain is CIE RGB. [00126] In FIG.2, according to one embodiment, the first color domain is CIE YUV.

[00127] In FIG.2, according to one embodiment, the first color domain is CIE UVW.
[00128] In FIG.2, according to one embodiment, the first color domain is CIE LAB.
[00129] In FIG.2, according to one embodiment, the first color domain is CIE LUV.
[00130] In FIG.2, according to one embodiment, the first color domain is HSL.
[00131] In FIG.2, according to one embodiment, the first color domain is HSV.
[00132] In FIG.2, according to one embodiment, the first color domain is HCL.
[00133] In FIG.2, according to one embodiment, the first color domain is LMS.
[00134] In FIG.2, according to one embodiment, the second color domain is CIE XYZ.
[00135] In FIG.2, according to one embodiment, the second color domain is CIE RGB.
[00136] In FIG.2, according to one embodiment, the second color domain is CIE YUV.
[00137] In FIG.2, according to one embodiment, the second color domain is CIE UVW.
[00138] In FIG.2, according to one embodiment, the second color domain is CIE LAB.
[00139] In FIG.2, according to one embodiment, the second color domain is CIE LUV.
[00140] In FIG.2, according to one embodiment, the second color domain is HSL.
[00141] In FIG.2, according to one embodiment, the second color domain is HSV.
[00142] In FIG.2, according to one embodiment, the second color domain is HCL.
[00143] In FIG.2, according to one embodiment, the second color domain is LMS.
[00144] In FIG.2, according to one embodiment, the input layer of the neural network has three nodes of three-color components.
[00145] In FIG.2, according to one embodiment, the output layer of the neural network has three nodes of three-color components.
[00146] In FIG.2, according to one embodiment, the neural network has a hidden layer.
[00147] In FIG.2, according to one embodiment, the hidden layer has a plurality of nodes to incorporate a 2nd order of parameters having at least four of these terms: $C_1$, $C_2$, $C_3$, $C_1^2$, $C_2^2$, $C_3^2$, $C_1C_2$, $C_2C_3$, $C_3C_1$, wherein $C_1$ represents first color value of the first color domain, $C_2$ represents second color value of the first color domain, and $C_3$ represents third color value of the first color domain.
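The second-order term list above is a polynomial expansion of the three color components. As a minimal sketch, and not the disclosed implementation, the Python helper below generates every monomial from first order up to a chosen order. The function name `polynomial_terms`, the use of NumPy, and the column ordering are assumptions made for illustration only.

```python
from itertools import combinations_with_replacement

import numpy as np


def polynomial_terms(colors, order=2):
    """Expand (n, 3) color triples into all monomials of degree 1..order.

    No constant term is included. Column order follows itertools, which
    is an arbitrary choice for this sketch.
    """
    colors = np.atleast_2d(np.asarray(colors, dtype=float))
    columns = []
    for degree in range(1, order + 1):
        # Each index tuple such as (0, 0, 2) stands for C1 * C1 * C3.
        for idx in combinations_with_replacement(range(3), degree):
            columns.append(np.prod(colors[:, list(idx)], axis=1))
    return np.column_stack(columns)
```

Under these assumptions, a single color triple expands to 9 terms at second order, 19 at third order, and 34 at fourth order.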

[00148] In FIG.2, according to one embodiment, the hidden layer employs one of sigmoid activation function and sigmoid linear unit activation function.
[00149] In FIG.2, according to one embodiment, the hidden layer has a plurality of nodes to incorporate a 3rd order of parameters having at least four of these terms: $C_1$, $C_2$, $C_3$, $C_1^2$, $C_2^2$, $C_3^2$, $C_1C_2$, $C_2C_3$, $C_3C_1$, $C_1^3$, $C_2^3$, $C_3^3$, $C_1C_2^2$, $C_1^2C_2$, $C_2C_3^2$, $C_2^2C_3$, $C_3C_1^2$, $C_3^2C_1$, $C_1C_2C_3$, wherein $C_1$ represents first color value of the first color domain, $C_2$ represents second color value of the first color domain, and $C_3$ represents third color value of the first color domain.
[00150] In FIG.2, according to one embodiment, the hidden layer employs one of sigmoid activation function and sigmoid linear unit activation function.
[00151] In FIG.2, according to one embodiment, the hidden layer has a plurality of nodes to incorporate a 4th order of parameters having at least four of these terms: $C_1$, $C_2$, $C_3$, $C_1^2$, $C_2^2$, $C_3^2$, $C_1C_2$, $C_2C_3$, $C_3C_1$, $C_1^3$, $C_2^3$, $C_3^3$, and the mixed third-order and fourth-order products of $C_1$, $C_2$, and $C_3$, wherein $C_1$ represents first color value of the first color domain, $C_2$ represents second color value of the first color domain, and $C_3$ represents third color value of the first color domain.
[00152] In FIG.2, according to one embodiment, the hidden layer employs one of sigmoid activation function and sigmoid linear unit activation function.
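The training procedure of FIG.2 (three input nodes, one hidden layer with a sigmoid activation, three output nodes, iteration until the error signal settles below a threshold) can be illustrated with a small NumPy sketch. This is an assumption-laden illustration rather than the disclosed network: the hidden-layer width, learning rate, threshold, plain full-batch gradient descent, and the name `train_color_network` are all hypothetical choices.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def train_color_network(captured, ground_truth, hidden=9, lr=0.2,
                        threshold=1e-4, tol=1e-9, max_epochs=5000, seed=0):
    """Fit a 3 -> hidden -> 3 network mapping captured patches to GTCP values.

    captured, ground_truth: (n, 3) arrays, one row per reference patch.
    Returns a correction function and the per-epoch error history.
    """
    rng = np.random.default_rng(seed)
    W1 = rng.normal(0.0, 0.5, (3, hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(0.0, 0.5, (hidden, 3))
    b2 = np.zeros(3)
    history = []
    for _ in range(max_epochs):
        h = sigmoid(captured @ W1 + b1)      # hidden-layer activations
        out = h @ W2 + b2                    # NNCP_i for every patch
        err = out - ground_truth             # error signal vs. GTCP_i
        loss = float(np.mean(err ** 2))
        history.append(loss)
        # Stop once the error is below the threshold and no longer changing.
        if loss < threshold and len(history) > 1 \
                and abs(history[-2] - loss) < tol:
            break
        # Backpropagate the mean-squared error.
        g_out = 2.0 * err / err.size
        g_h = (g_out @ W2.T) * h * (1.0 - h)
        W2 -= lr * (h.T @ g_out)
        b2 -= lr * g_out.sum(axis=0)
        W1 -= lr * (captured.T @ g_h)
        b1 -= lr * g_h.sum(axis=0)

    def correct(pixels):
        """Apply the trained network to (m, 3) pixel values."""
        return sigmoid(pixels @ W1 + b1) @ W2 + b2

    return correct, history
```

To correct a whole image of shape (height, width, 3) under the same assumptions, the pixels can be flattened with `image.reshape(-1, 3)`, passed to `correct`, and reshaped back to the original dimensions.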

[00153] So far, the present disclosure has described color correction based on a ground truth color chart. However, once colors are corrected in an image, the present disclosure provides a process by which concentration of a substance can be ascertained from an image based on the flowchart provided in FIG.4. This figure shows the steps for the use of color-corrected color values of a sample of interest (not from the ground truth color chart) for colorimetric diagnostic applications. Accordingly, two different approaches are described for obtaining the biological concentration of the sample using color-corrected color values in the second color domain (e.g. CIE XYZ values under CIE Illuminant E). In both cases, color-corrected CIE XYZ values of the sample serve as a frontend input to achieve diagnostic color accuracy with a single-shot photo. Color-corrected CIE XYZ values of the sample allow diagnostic-level color accuracy that is not affected by ambient light conditions or smartphone/device models. Accordingly, the present disclosure provides steps for the case in which spectral learning (i.e., spectral reconstruction) is possible, such that the concentration of the sample can be computed in two steps: 1) spectral learning and 2) partial least squares regression (or principal component analysis). [00154] In the case of spectral learning, color-corrected CIE XYZ values of the sample are used to reconstruct the full spectral information. Spectral learning addresses an ill-posed challenge. Depending on the availability of existing data, several machine learning approaches can be used for solving this underdetermined problem, including compressed sensing or sparsity regularization (L1 norm minimization), least squares regression (L2 norm minimization), and deep learning.
Among these approaches, statistical learning using fixed-design linear regression with polynomial expansions offers a highly stable inverse calculation that can transform CIE XYZ values to high-resolution spectral data owing to the nature of L2 norm minimization.
[00155] In the first approach of spectral learning with CIE XYZ values, the full spectral values are determined from the tristimulus color values of the sample in a second color domain (e.g. the color-corrected CIE XYZ values of the sample), when relevant spectral data are available. The full spectral values are obtained from:

$$\mathbf{S}_{N \times r} = \mathbf{T}_{N \times p}\,\mathbf{X}_{p \times r},$$

wherein N represents number of wavelengths of spectral values based on a predetermined resolution, r represents the number of existing spectral data, $\mathbf{T}_{N \times p}$ represents a transformation matrix to transform the tristimulus color values of the sample to full spectral values, where p represents number of polynomial expansion terms, and $\mathbf{X}_{p \times r}$ represents the expanded tristimulus color values of the sample represented by:

$$\mathbf{X}_{p \times r} = \begin{bmatrix} X_1 & Y_1 & Z_1 & \cdots & X_1^{i} Y_1^{j} Z_1^{k} \\ \vdots & \vdots & \vdots & & \vdots \\ X_r & Y_r & Z_r & \cdots & X_r^{i} Y_r^{j} Z_r^{k} \end{bmatrix}^{T}$$

wherein $X$ represents the first color value (the color-corrected CIE X values of the sample) of the second color domain, $Y$ represents the second color value (the color-corrected CIE Y values of the sample) of the second color domain, $Z$ represents the third color value (the color-corrected CIE Z values of the sample) of the second color domain, and i, j, and k denote the exponents of the highest-order expansion term. The second color domain can be CIE XYZ. This method allows determining a biological concentration from the determined full spectral data of the sample in the second color domain. In this method, the biological concentration may be blood hemoglobin, which is determined by partial least squares regression based on the determined spectral values as:

$$\mathrm{Hgb} = \beta_0 + \beta_1 I(\lambda_1) + \beta_2 I(\lambda_2) + \beta_3 I(\lambda_3) + \cdots + \beta_N I(\lambda_N)$$

wherein Hgb represents the concentration of blood hemoglobin, $I(\lambda_n)$ represents the spectral intensity at wavelength $\lambda_n$, and coefficients $\beta_0, \beta_1, \beta_2, \beta_3, \ldots, \beta_N$ are determined using a priori subject population blood hemoglobin concentration data vs. spectral intensity data across a range of wavelengths ($\lambda_1, \lambda_2, \lambda_3, \ldots, \lambda_N$).
[00156] However, if spectral learning (i.e., spectral reconstruction) is not possible, the concentration of the sample can be computed directly by using the CIE XYZ values of the sample.
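The fixed-design least-squares step of the spectral learning approach can be sketched as follows. This is a hedged illustration, not the disclosed implementation: it assumes the existing spectra are stacked as columns of an N-by-r array and that the polynomial-expanded tristimulus values are already available as a p-by-r array. The function names `fit_spectral_transform` and `reconstruct_spectra` are hypothetical.

```python
import numpy as np


def fit_spectral_transform(spectra, expanded_colors):
    """Estimate the N x p transformation matrix by L2-norm minimization.

    spectra:          (N, r) existing spectral data, one column per datum.
    expanded_colors:  (p, r) polynomial-expanded tristimulus values.
    Solves expanded_colors.T @ T.T ~= spectra.T in the least-squares sense.
    """
    T_transposed, *_ = np.linalg.lstsq(expanded_colors.T, spectra.T,
                                       rcond=None)
    return T_transposed.T


def reconstruct_spectra(T, expanded_colors):
    """Map (p, m) expanded color values of new samples to (N, m) spectra."""
    return T @ expanded_colors
```

With the transformation matrix fitted once from existing data, each new color-corrected sample only requires a single matrix product to obtain its estimated spectrum, which can then feed the hemoglobin regression described above.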

[00157] Typically, this is the case where reference color patches in the color chart (e.g. urine strips or finger prick strips) contain the corresponding concentration information of interest to estimate. Color-corrected CIE XYZ values of the sample of interest, juxtaposed with the color chart, can be utilized to return the concentration of the sample. In such instances, a mathematical model can be directly formulated to compute the concentration, using the CIE XYZ values of the sample. Thus, this method includes determining concentrations of the sample compared with reference color patches in a color chart (e.g. existing color chart for urine strips or finger prick strips), from the tristimulus color values of the sample in the second color domain. In this method, the second color domain can be CIE XYZ. This method may also include determining a biological concentration from the determined color values of the sample in the second color domain (e.g., the color-corrected CIE XYZ values of the sample). Accordingly, the biological parameter can be the sample concentration determined based on a least squares regression that is based on a polynomial expansion of the determined color values of the sample expressed as:

$$\mathrm{Concentration} = \beta_0 + \beta_1 X + \beta_2 Y + \beta_3 Z + \cdots + \beta_p X^{i} Y^{j} Z^{k}$$

wherein $X$ represents the first color value (the color-corrected CIE X values of the sample) of the second color domain, $Y$ represents the second color value (the color-corrected CIE Y values of the sample) of the second color domain, $Z$ represents the third color value (the color-corrected CIE Z values of the sample) of the second color domain, i, j, and k denote the exponents of the highest-order expansion term, p represents number of polynomial expansion terms, and coefficients $\beta_0, \beta_1, \beta_2, \beta_3, \ldots, \beta_p$ are determined from the determined color values and associated concentrations of the reference color patches in the existing color chart (e.g. urine strips or finger prick strips).
[00158] We apply the gamut-learned color recovery method to a clinical laboratory application. The color-corrected values serve as the frontend input of a diagnostic algorithm to compute a concentration of interest. As a test model system relying on peripheral tissue, we take advantage of an mHealth application that noninvasively predicts blood hemoglobin levels from digital photos of the palpebral conjunctiva. We analyze data from a pilot human study (clinicaltrials.gov NCT05045820) that aims to improve the quality of sickle cell disease (SCD) patient care by minimizing iatrogenic blood losses caused by frequent blood draws. The clinical data include photos of the palpebral conjunctiva with the ground truth color chart and clinical laboratory blood hemoglobin results of venous blood draws as the gold standard, from fifteen SCD patients aged from 14 to 73 years (FIG.5).
[00159] We focus on reproducibility (inter-measurement reliability), which is the variation among different users, devices, and settings when the same patient is assessed. The clinical photos are acquired under mixed light conditions of sunlight, fluorescent tube, and white-light LED in indoor settings (FIG.5), immediately before or after venous blood draws. For inter-blood hemoglobin assessments (reproducibility), we employ a substantial dataset of 156 photos from both the left and right eyelids in the RAW (i.e., DNG) and JPEG file formats, using SAMSUNG GALAXY S21 and APPLE iPHONE 12PRO.
[00160] FIG.6 and TABLE 1 show the blood hemoglobin quantification results using the ground truth color chart. The scatter plot and the error bar represent the mean and the standard deviation, respectively. The linear correlation between the computed blood hemoglobin levels and clinical laboratory results returns a high correlation coefficient of 0.87 with narrow 95% limits of agreement (95% LOA) of [-2.51, 2.30] g dL⁻¹ and bias of -0.11 g dL⁻¹ in the Bland-Altman plot. Importantly, the ranges of 95% LOA are highly comparable to those of capillary blood sampling (e.g., finger prick) tests.
A pooled standard deviation of the computed blood hemoglobin levels captures the reproducibility, returning a value of 1.26 g dL⁻¹. Overall, these results demonstrate that the proposed gamut-learned color recovery method enables simple, reliable, consistent, and reproducible color accuracy for diagnostic bioassays and bioimaging.
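The agreement statistics reported above (bias, 95% limits of agreement, and pooled standard deviation) follow standard definitions, which the sketch below illustrates. It assumes the conventional Bland-Altman limits of bias ± 1.96 sample standard deviations and a pooled standard deviation weighted by degrees of freedom; the disclosure does not state which exact formulas were used, and the function names are hypothetical.

```python
import numpy as np


def bland_altman(predicted, reference):
    """Return the bias and 95% limits of agreement between two methods."""
    diff = np.asarray(predicted, dtype=float) - np.asarray(reference,
                                                            dtype=float)
    bias = diff.mean()
    sd = diff.std(ddof=1)                 # sample standard deviation
    return bias, (bias - 1.96 * sd, bias + 1.96 * sd)


def pooled_std(groups):
    """Pooled standard deviation over repeated measurements per patient.

    groups: iterable of 1-D sequences, one per patient, each holding the
    repeated hemoglobin estimates for that patient (at least two values).
    """
    groups = [np.asarray(g, dtype=float) for g in groups]
    weighted_var = sum((len(g) - 1) * g.var(ddof=1) for g in groups)
    dof = sum(len(g) - 1 for g in groups)
    return float(np.sqrt(weighted_var / dof))
```

In this sketch, `predicted` would hold the photo-based hemoglobin estimates and `reference` the clinical laboratory values, both in g dL⁻¹, while `groups` would collect the repeated estimates for each patient across devices, file formats, and eyelids.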