CN110930495A - Multi-unmanned aerial vehicle cooperation-based ICP point cloud map fusion method, system, device and storage medium - Google Patents

Multi-unmanned aerial vehicle cooperation-based ICP point cloud map fusion method, system, device and storage medium Download PDFInfo

- Publication number

- CN110930495A CN110930495A CN201911155928.6A CN201911155928A CN110930495A CN 110930495 A CN110930495 A CN 110930495A CN 201911155928 A CN201911155928 A CN 201911155928A CN 110930495 A CN110930495 A CN 110930495A

- Authority

- CN

- China

- Prior art keywords

- point cloud

- point

- transformation

- registration

- icp

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Links

Images

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T15/00—3D [Three Dimensional] image rendering

- G06T15/005—General purpose rendering architectures

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/30—Determination of transform parameters for the alignment of images, i.e. image registration

-

- G—PHYSICS

- G09—EDUCATION; CRYPTOGRAPHY; DISPLAY; ADVERTISING; SEALS

- G09B—EDUCATIONAL OR DEMONSTRATION APPLIANCES; APPLIANCES FOR TEACHING, OR COMMUNICATING WITH, THE BLIND, DEAF OR MUTE; MODELS; PLANETARIA; GLOBES; MAPS; DIAGRAMS

- G09B29/00—Maps; Plans; Charts; Diagrams, e.g. route diagram

- G09B29/003—Maps

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/10—Image acquisition modality

- G06T2207/10028—Range image; Depth image; 3D point clouds

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- Theoretical Computer Science (AREA)

- General Physics & Mathematics (AREA)

- Mathematical Physics (AREA)

- Business, Economics & Management (AREA)

- Educational Administration (AREA)

- Educational Technology (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Computer Graphics (AREA)

- Processing Or Creating Images (AREA)

Abstract

The invention provides an ICP point cloud map fusion method, system, device and storage medium based on multi-unmanned aerial vehicle cooperation, wherein the ICP point cloud map fusion method comprises the following steps: the extraction step comprises: extracting key points from two data sets according to the same key point selection standard, wherein the two data sets are two point cloud maps with overlapped areas and are respectively marked as a point cloud P and a point cloud Q, the point cloud P is a target point cloud, and the point cloud Q is a reference point cloud; a calculation step: respectively calculating feature descriptors of all selected key points; the processing steps are as follows: estimating the corresponding relation of the coordinate positions of the feature descriptors in the two data sets by combining the coordinate positions of the feature descriptors and the coordinate positions of the feature descriptors; a registration step: and estimating rigid body transformation by using the corresponding relation, and completely registering. The invention has the beneficial effects that: the method of the invention can improve the convergence rate of the ICP algorithm with higher precision, and obtains very good technical effect.

Description

Technical Field

The invention relates to the technical field of unmanned aerial vehicles, in particular to an ICP point cloud map fusion method, system and device based on multi-unmanned aerial vehicle cooperation and a storage medium.

Background

1. Cooperative SLAM technology with multiple unmanned planes:

under unknown environment, unmanned aerial vehicle can estimate self position and gesture, is the key technology that can effectively accomplish the task. When the unmanned aerial vehicle runs at low altitude and low speed, the information such as position and speed required by flight can be obtained by using methods such as a Global Positioning System (GPS) and an Inertial Navigation System (INS), so that the flight requirement in the city is met. However, the GPS and the INS have limitations in use, the error range of the GPS is 3 to 10 meters, and the GPS cannot work normally due to weak signals in indoor environments and severe environments such as mountains and canyons. Simultaneously, INS can cause the gradual accumulation of error along with unmanned aerial vehicle's continuous motion. In this case, the estimation of the position and attitude of the drone in an unknown environment can be solved by using Simultaneous Localization and Mapping (SLAM). The pose estimation is a key link for realizing intelligent flight such as autonomous flight and autonomous navigation of the unmanned aerial vehicle.

In the current research combining unmanned aerial vehicles and SLAMs, a single unmanned aerial vehicle is mainly used as a research object, and no mature research result exists for Cooperative SLAMs (CSLAMs) of multiple unmanned aerial vehicles, wherein CSLAMs refer to multiple unmanned aerial vehicles flying in the environment at the same time, and the building of status and maps is performed through mutual cooperation, so that the SLAM algorithm is combined with a multi-unmanned aerial vehicle system. By the CSLAM, the map building precision can be improved, the map building speed can be improved, the coverage range of the unmanned aerial vehicle in the environment in unit time can be enlarged, and the CSLAM has important significance in large-scale search application of unknown environments.

Under the complex environment, because the sensor sight is sheltered from or the factors such as flight airspace restriction, only use single unmanned aerial vehicle can't maintain the continuous tracking to the target in the region of large range, need rely on the cooperation of many unmanned aerial vehicles to accomplish the task. Compared with a single unmanned aerial vehicle, the unmanned aerial vehicle cooperative task execution system has the following advantages that:

(1) the quality of task completion can be improved through matching;

(2) the time for completing the tasks can be shortened and the overall efficiency can be improved by executing the tasks in parallel;

(3) the ability of executing tasks can be improved by sharing resources and information;

(4) the success probability of the tasks can be increased through the proportion of task allocation, and the robustness is improved.

At present, the application in the field of many unmanned aerial vehicles gradually develops from the direction of single-machine independent task completion to the direction of multi-machine cooperation. A large number of projects are developed and researched aiming at the fields of unmanned aerial vehicle cooperative investigation, unknown environment map construction, target tracking, positioning, networking formation control and the like.

2. Point cloud fusion technology:

the three-dimensional point cloud data registration technology is to fuse point cloud data collected under different visual angles, and to unify local point cloud data under different coordinate systems to one coordinate system through rigid transformation to obtain complete point cloud data of a measured object or a scene. If the division is performed from the perspective of high or low registration accuracy, the division can be divided into coarse registration and precise registration. The rough registration is to roughly register the point cloud data of two arbitrary positions to make the point cloud data approximately in the same position, so as to provide a good initial value for accurate registration. Common methods for coarse registration are: label method, turntable method, principal component analysis method, curvature analysis method, and the like. And the accurate registration is to perform accurate matching on the point cloud data after the coarse registration, and solve a translation matrix and a rotation matrix to obtain a complete point cloud data model.

At present, the most widely used in the fusion registration algorithm is the Iterative Closest Point (ICP) algorithm proposed in 1992, and although the ICP algorithm can meet the requirements of our registration task in many cases, certain problems still exist. Firstly, the point having the greatest influence on the ICP algorithm is that the algorithm depends on a better initial registration result, and if the initial registration position is worse, the algorithm is easily trapped in local optimization or infinite iteration, thereby greatly influencing the convergence speed of the algorithm and increasing the time complexity. In addition, the ICP algorithm selects a simple Euclidean distance sum to measure the registration error of the cloud corresponding point pair of the point to be registered, and optimization processing is carried out.

Therefore, on the basis of the ICP algorithm, many improved methods are proposed to accommodate many registration cases. Hans Martin Kjer et al propose an algorithm for sampling registration based on curvature. The congratulation and the like realize the rapid registration of the point cloud by introducing the idea of neighborhood characteristics.

However, these commonly used registration algorithms are usually accompanied by a large number of repeated matrix operations, such as the iterative operations found in conventional ICP algorithms. If the traditional serial registration mode is still adopted, the registration efficiency of the whole algorithm is influenced, and the registration real-time performance is further influenced. Therefore, as GPU parallel technology and CUDA (Computer Unified device architecture) programmable models continue to develop, GPUs are added to the algorithm to achieve parallel acceleration of the conventional algorithm. Choi et al use the CUDA programmable architecture of the GPU to accelerate the traditional ICP algorithm, and transfer a large amount of matrix operations in the algorithm to the GPU for completion, thereby realizing real-time image registration and improving the convergence speed of the algorithm.

The defects of the background art are as follows:

(1) technical defect of single unmanned plane

The problem faced by visual SLAM is that, with the continuous operation of drones, positioning drift can accumulate gradually, causing large-area distortion of maps. Although the loop detection of the rear end single machine can effectively relieve the accumulation of errors. However, in order to increase the number of times of loop return, a large amount of loop movement of the unmanned aerial vehicle is caused, the number of turns is increased, and meanwhile the coverage area in unit time is also remarkably reduced. Therefore, how to adopt the cooperation of multiple drones to complete SLAM is necessary.

In recent years, with the requirement that an intelligent agent needs to be more accurately positioned and construct a high-precision three-dimensional map, the three-dimensional SLAM algorithm of a single unmanned aerial vehicle cannot meet the requirement, particularly large-scale three-dimensional reconstruction, a large amount of time is consumed for a single machine to complete a task, and a large accumulated error is generated, so that the accuracy of positioning and mapping is greatly reduced. Therefore, the multi-unmanned plane three-dimensional SLAM algorithm framework becomes a good solution. The multi-unmanned aerial vehicle SLAM system is a coupled multi-agent system, key information is shared mutually by utilizing the cooperation among all unmanned aerial vehicles, the process of multi-unmanned aerial vehicle cooperative positioning and integral global three-dimensional point cloud map construction is realized in a communication mode, the efficiency and the precision requirement of three-dimensional reconstruction are improved in such a mode, and the requirement of complex tasks is met.

In the existing research, the cooperation of multiple unmanned aerial vehicles does not have mature and systematic research results, and the multi-machine cooperation is mainly divided into a centralized type and a distributed type in the research field of robots. More researches stay in multi-machine cooperation based on Kalman filtering as rear-end optimization, along with the movement of the unmanned aerial vehicle, the calculated amount of a scheme using filtering as rear-end processing rises exponentially, and the method is not suitable for being applied to engineering.

(2) Technical aspect of point cloud fusion

Although ICP algorithms can in many cases fulfill the requirements of our registration task, certain problems still exist. Firstly, the point having the greatest influence on the ICP algorithm is that the algorithm depends on a better initial registration result, and if the initial registration position is worse, the algorithm is easily trapped in local optimization or infinite iteration, thereby greatly influencing the convergence speed of the algorithm and increasing the time complexity. In addition, the ICP algorithm selects a simple Euclidean distance sum to measure the registration error of the cloud corresponding point pair of the point to be registered, and optimization processing is carried out.

Disclosure of Invention

The invention provides an ICP point cloud map fusion method based on multi-unmanned aerial vehicle cooperation, which comprises the following steps of:

the extraction step comprises: firstly, extracting key points from two data sets according to the same key point selection standard, wherein the two data sets are two point cloud maps with overlapped areas and are respectively marked as a point cloud P and a point cloud Q, wherein the point cloud P is a target point cloud, and the point cloud Q is a reference point cloud;

a calculation step: respectively calculating feature descriptors of all selected key points;

the processing steps are as follows: estimating the corresponding relation of the coordinate positions of the feature descriptors in the two data sets by combining the coordinate positions of the feature descriptors and the coordinate positions of the feature descriptors;

a registration step: and estimating rigid body transformation by utilizing the corresponding relation, and completely registering, so that the point cloud Q is unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and is fused into a point cloud map.

As a further refinement of the present invention, in the processing step, the corresponding point pair is preliminarily estimated using a SAC-IA algorithm.

As a further improvement of the invention, a judging step is further included between the processing step and the registering step,

a judging step: judging whether the data has noise, if so, removing the wrong corresponding point pairs which have influence on the registration;

in the registration step, the rigid body transformation is estimated by using the residual correct corresponding relation, and the complete registration is carried out, so that the point clouds Q are unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and a point cloud map is fused.

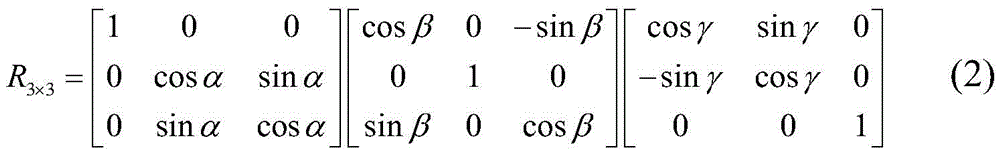

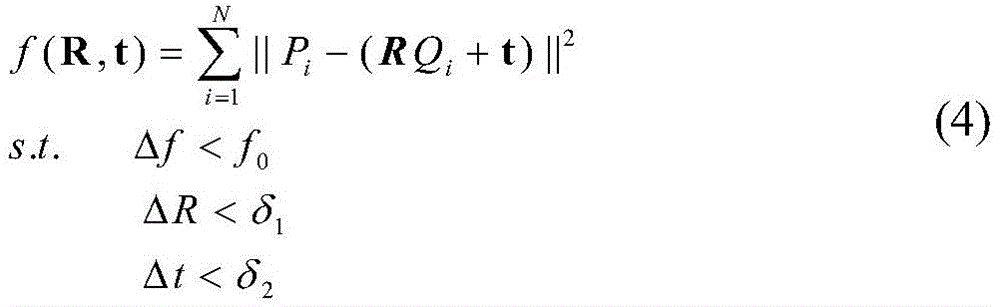

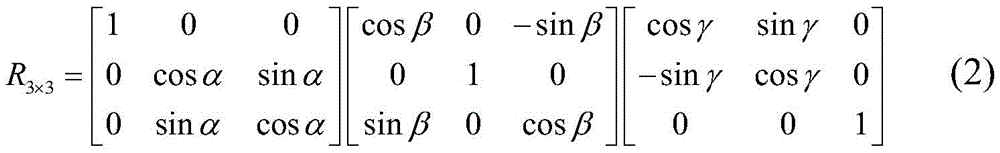

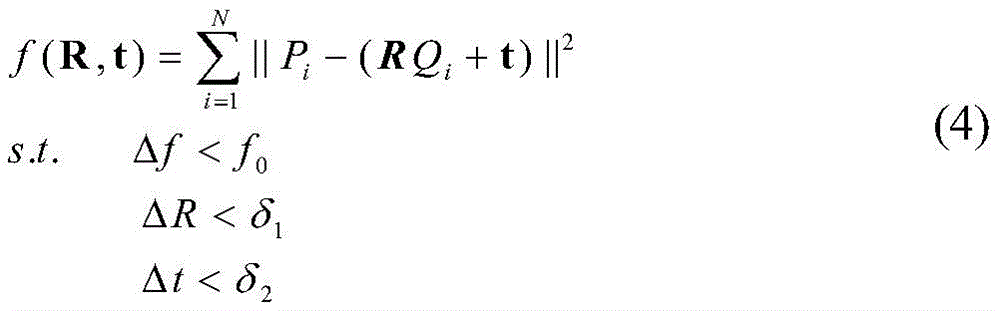

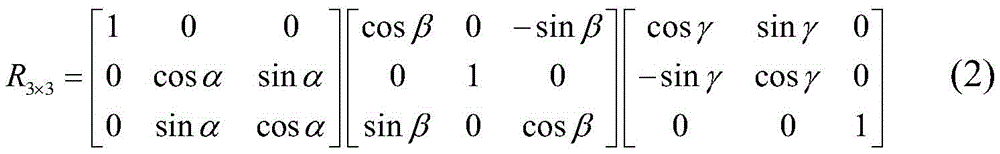

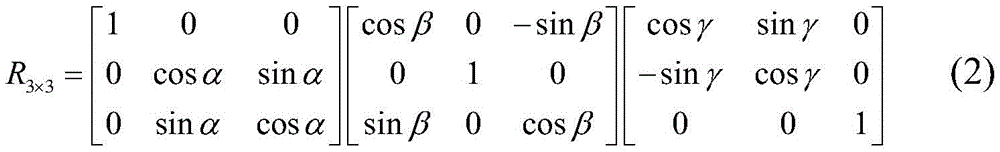

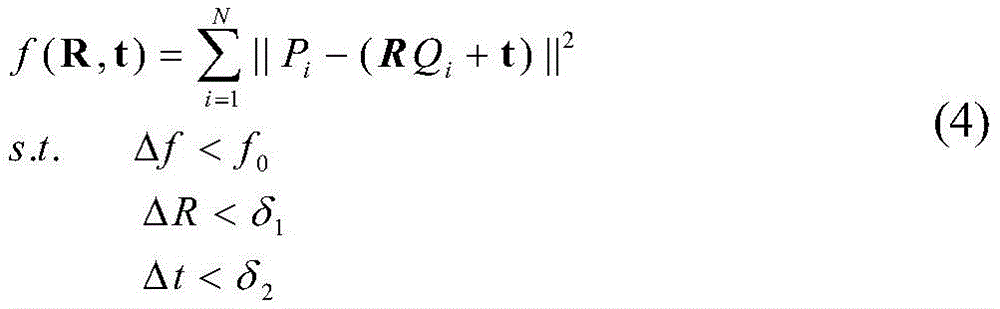

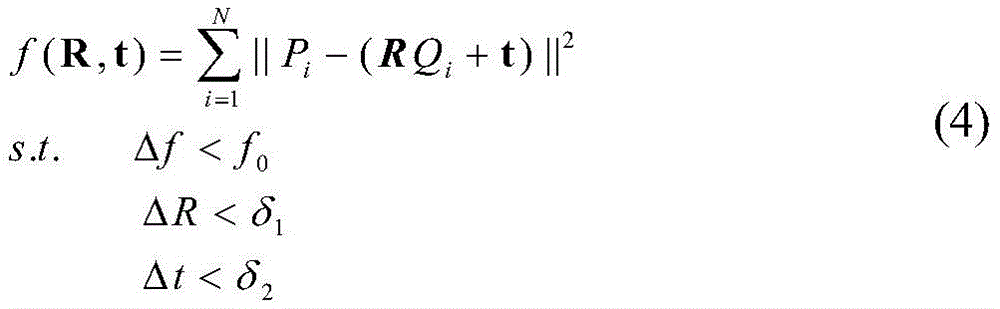

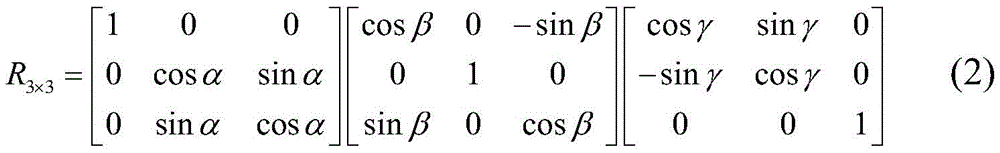

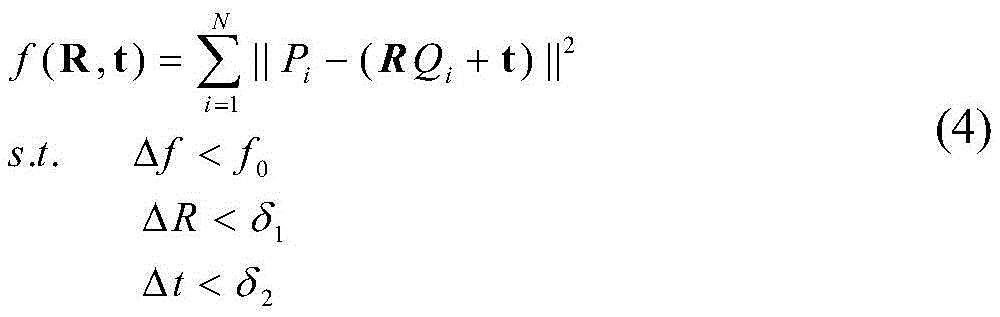

As a further improvement of the present invention, in the registration step, when the point cloud P and the point cloud Q under two different coordinate systems are subjected to coordinate transformation, rigid body transformation is realized by the following formula

qi′(x′,y′,z′)=Rqi(x,y,z)+t (1)

Wherein R is a rotation matrix, t is a translation matrix, respectively expressed as

t3×1=[txtytz]T(3)

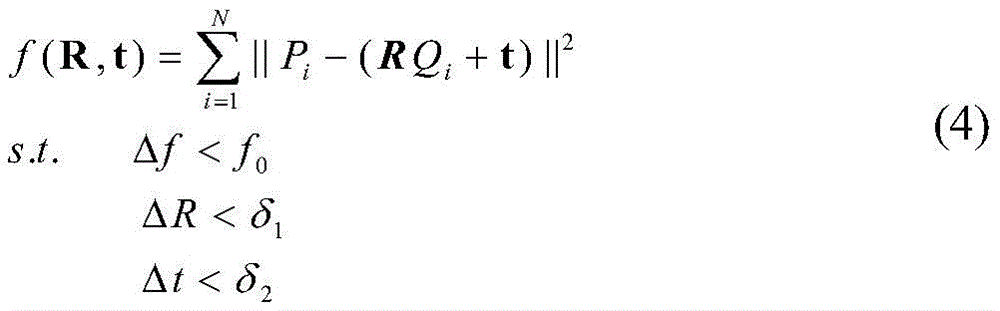

Wherein α, β and gamma represent the rotation angles of the points along the x, y and z axes, respectively, and tx、ty、tzRespectively representing the translation amounts of the points along the x, y and z axes; point cloud P is the target point cloud, and P is { P ═ Pi|Pi∈R3I is 1,2, …, N, the number of point clouds is N, the point cloud Q is the reference point cloud, Q is Q ═ Qi|Qi∈R3,i=1,2, …, M, the number of point clouds is M, the rotation transformation matrix is R, the translation transformation vector is t, and f (R, t) represents the error between the reference point set Q and the target point set P under the transformation matrix (R, t)

The problem of solving the optimal transformation matrix is converted into solving the optimal solution (R, t) that satisfies min (f (R, t)).

The invention also discloses an ICP point cloud map fusion system based on multi-unmanned aerial vehicle cooperation, which comprises the following steps:

an extraction module: the method comprises the steps of firstly, extracting key points from two data sets according to the same key point selection standard, wherein the two data sets are two point cloud maps with overlapped areas and respectively marked as a point cloud P and a point cloud Q, wherein the point cloud P is a target point cloud, and the point cloud Q is a reference point cloud;

a calculation module: respectively calculating the feature descriptors of all the selected key points;

a processing module: the coordinate positions of the feature descriptors in the two data sets are combined, the corresponding relation of the feature descriptors and the coordinate positions of the feature descriptors is estimated on the basis of the similarity of the feature descriptors and the coordinate positions of the feature descriptors, and corresponding point pairs are;

a registration module: and the method is used for estimating rigid body transformation by utilizing the corresponding relation and completely registering, so that the point cloud Q is unified to a coordinate system which is the same as the point cloud P through coordinate transformation and is fused into a point cloud map.

As a further refinement of the present invention, in the processing step, the corresponding point pair is preliminarily estimated using a SAC-IA algorithm.

As a further improvement of the invention, a judgment module is further included between the processing module and the registration module,

a judging module: the method comprises the steps of judging whether data is noisy or not, and if the data is noisy, removing corresponding point pairs of errors which have influence on registration;

in the registration module, the rigid body transformation is estimated by using the residual correct corresponding relation, and the complete registration is carried out, so that the point clouds Q are unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and a point cloud map is fused.

As a further improvement of the invention, in the registration module, when the point cloud P and the point cloud Q under two different coordinate systems are subjected to coordinate transformation, rigid body transformation is realized by the following formula

qi′(x′,y′,z′)=Rqi(x,y,z)+t (1)

Wherein R is a rotation matrix, t is a translation matrix, respectively expressed as

t3×1=[txtytz]T(3)

Wherein α, β and gamma represent the rotation angles of the points along the x, y and z axes, respectively, and tx、ty、tzRespectively representing the translation amounts of the points along the x, y and z axes; point cloud P is the target point cloud, and P is { P ═ Pi|Pi∈R3I is 1,2, …, N, the number of point clouds is N, the point cloud Q is the reference point cloud, Q is Q ═ Qi|Qi∈R3I is 1,2, …, M, the number of point clouds is M, the rotation transformation matrix is R, the translation transformation vector is t, and f (R, t) represents the error between the reference point set Q and the target point set P under the transformation matrix (R, t)

The problem of solving the optimal transformation matrix is converted into solving the optimal solution (R, t) that satisfies min (f (R, t)).

The invention also provides an ICP point cloud map fusion device based on multi-unmanned aerial vehicle cooperation, which comprises the following components: a memory, a processor and a computer program stored on the memory, the computer program being configured to implement the steps of the ICP point cloud map fusion method of the invention when invoked by the processor.

The invention also provides a computer readable storage medium storing a computer program configured to, when invoked by a processor, implement the steps of the ICP point cloud map fusion method of the invention.

The invention has the beneficial effects that: the method is based on a multi-unmanned aerial vehicle cooperation mode, after the local point cloud map is obtained, the fused global map is obtained by applying the improved ICP point cloud fusion algorithm, and for a large-scale point cloud data map, the method can improve the convergence speed of the ICP algorithm while having higher precision, and a very good technical effect is obtained.

Drawings

FIG. 1 is a flow chart of the overall implementation of ICPI-based map fusion of the present invention;

FIG. 2 is a time diagram for each iteration;

FIG. 3 is a graph of evaluation fusion errors;

fig. 4 is a flow chart of a method of the present invention.

Detailed Description

The method aims at the defects of the limitation of the existing single unmanned plane SLAM algorithm and the respective existence of distributed centralization. The invention discloses an ICP point cloud map fusion method based on multi-unmanned aerial vehicle cooperation, which is based on the design of a centralized algorithm by combining a multi-unmanned aerial vehicle system and a visual SLAM.

The following is a detailed description:

1. brief introduction of the problem:

the point cloud registration comprises registration of a manual registration relying instrument and automatic registration, the automatic registration technology of the point cloud calculates dislocation between two point clouds by using a computer through a certain algorithm or a statistical rule, so that the effect of automatic registration of the two point clouds is achieved, the essence is that coordinate system transformation is carried out on data point clouds measured in different coordinate systems to obtain an integral data model, and the key of the problem is how to obtain parameters R (rotation matrix) and T (translation vector) of the coordinate transformation, so that the distance of three-dimensional data measured at two visual angles after the coordinate transformation is minimum, and the current registration algorithm can be divided into integral registration and local registration according to the process. There is a separate registration module in the PCL that implements the registration-related underlying data structure, and classical registration algorithms, such as ICP.

The registration process of the three-dimensional point cloud can be mainly divided into two stages: initial registration (coarse registration) and precise registration. In general, the point clouds generated by measurement are located in respective coordinate systems, and for two point clouds which are far from the corresponding initial positions, initial registration is firstly used to enable the two point clouds with overlarge initial distances to be close to each other, so that a foundation is laid for next registration. And then, the positions of the two point clouds are closer by adopting accurate registration, so that the error of the overlapped part is further reduced, and a larger-scale point cloud is spliced. The purpose of initial registration is to reduce the initial positions of two point clouds and reduce the difficulty of accurate registration. The purpose of accurate registration is to splice two point clouds into a larger-scale point cloud, and lay a foundation for three-dimensional reconstruction. The algorithms used in these two phases are different from the purpose.

2. Mathematical modeling:

first, several definitions of concepts related to the present invention are given:

suppose we have now obtained two point cloud maps with overlapping areas, which are respectively marked as a point cloud P and a point cloud Q, where P is a target point cloud and Q is a reference point cloud, and we aim to unify the point cloud Q under a coordinate system the same as the point cloud P through coordinate transformation to form one point cloud map.

When the coordinates of the points P and Q in two different coordinate systems are transformed, the rigid body transformation can be realized by the following formula

qi′(x′,y′,z′)=Rqi(x,y,z)+t (1)

Wherein R is a rotation matrix, t is a translation matrix, which can be respectively expressed as

t3×1=[txtytz]T(3)

Wherein α, β and gamma represent the rotation angles of the points along the x, y and z axes, respectively, and tx、ty、tzRepresenting the amount of translation of the point along the x, y, z axes, respectively.

Six unknowns α, β, gamma, t are involved in the rigid body transformationx、ty、tzTherefore, at least six linear equations need to be determined, that is, at least 3 corresponding point pairs need to be found in the overlapping region of the point clouds to be matched, and the point pairs cannot be collinear, so that the parameter estimation of the rigid matrix can be completed.

Assuming a given point cloud P as the target point cloud, using P ═ Pi|Pi∈R3I is 1,2, …, N, the number of point clouds is N, given point cloud Q as the reference point cloud, Q is Q ═ { Q ″i|Qi∈R3I is 1,2, …, M, and the number of point clouds is M. Let the rotation transformation matrix be R and the translation transformation vector be t, and f (R, t) denote the error between the reference point set Q and the target point set P under the transformation matrix (R, t)

The problem of solving the optimal transformation matrix can be transformed into solving the optimal solution (R, t) that satisfies min (f (R, t)). Least square method, singular value decomposition, orthogonal matrix method, dual four-element method and the like can be selected for solving.

Aiming at the point cloud map obtained by each unmanned aerial vehicle, when the size of the map is large, the number of point clouds is increased sharply, and the time complexity for point cloud registration is increased greatly, so that the operations of sampling and filtering the initial point clouds, extracting key points and the like are required, and the number of operable point clouds is reduced.

3. Describing a point cloud map fusion algorithm based on ICP-improved (ICPI):

the invention aims to research point cloud fusion, and a local three-dimensional point cloud map reconstructed by different unmanned aerial vehicles by utilizing a SLAM algorithm is fused to obtain a global point cloud map of an environment.

Aiming at the point cloud map obtained by each unmanned aerial vehicle, when the size of the map is large, the number of point clouds is increased sharply, and the time complexity for point cloud registration is increased greatly, so that the operations of sampling and filtering the initial point clouds, extracting key points and the like are required, and the number of operable point clouds is reduced.

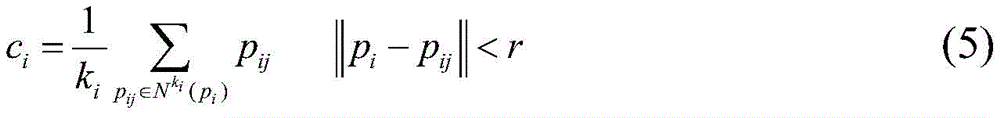

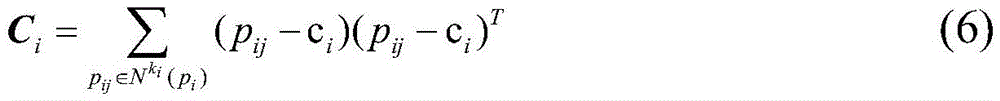

When a matched characteristic point pair is searched, a k nearest neighborhood point is searched by using a kd-tree searching method, for the condition that the density degree of point clouds of the point clouds is different, a neighborhood searching radius r is set, and only k within the distance of r from the neighborhood searching radius r is selectediPoints such that relatively few points are selected in sparse areas and more points are selected in dense areas, with k for each pointiThe central point c can be obtained from the point set formed by the nearest points of each neighborhoodi

Wherein a given set of three-dimensional pointsAnd ki nearest neighbor points of each point piFurther, solving the incidence matrix Ci

Computing the eigenvalues of the matrix { λ }0,λ1,λ2},λ0≤λ1≤λ2If pi satisfies the following expression, it is regarded as a feature point

Wherein epsilon1And ε2Is a set threshold.

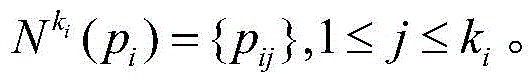

As shown in fig. 4, the specific implementation steps are as follows:

Through the expression of the problems, the process of solving the transformation parameters R and t is needed to fuse point cloud maps under different coordinate systems into a unified coordinate system. Firstly, filtering and drying point cloud data, then searching key points and describing the key points, and after the above work is finished, obtaining transformation parameters R and t by using initial fusion, and then optimizing by accurate fusion.

The pseudo-code description of the algorithm is as in table 1:

TABLE 1 map fusion algorithm based on ICPI

The overall execution flow of map fusion based on ICPI is shown in figure 1.

Experimental results and performance analysis:

in an experiment, the method of the invention is used for reconstructing a plurality of point cloud sub-map global fusion maps obtained by SLAM technology, and experimental equipment comprises the following steps: ubuntu 16.04 LTS, Intel core i5-7500 CPU @3.4 GHz.

TABLE 2 Experimental results of initial fusion

In table 2, we can see that the error of the SAC-IA initial fusion result based on the kd-tree is small (the smaller Score indicates the smaller fusion error), the obtained initial rotation and translation matrix is more accurate, and a good fusion standard is ensured for the accurate fusion of the back end.

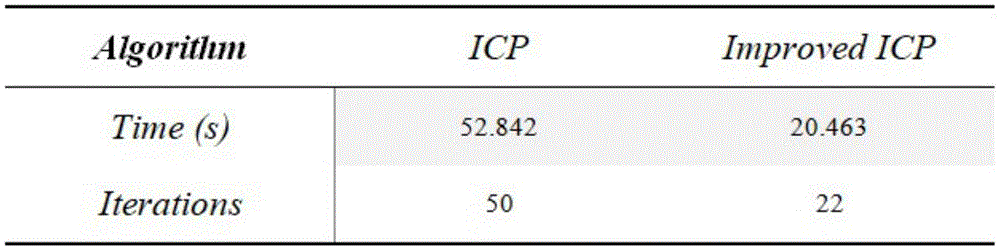

TABLE 3 exact fusion results

After the initial fusion, we performed an exact fusion using the modified ICP algorithm. The fusion result of the algorithm and the fusion comparison with the traditional algorithm give specific comparison data through experiments, and the comparison result shows that the algorithm has great advantages, so that the iteration times can be greatly reduced, and the time cost of the algorithm is reduced.

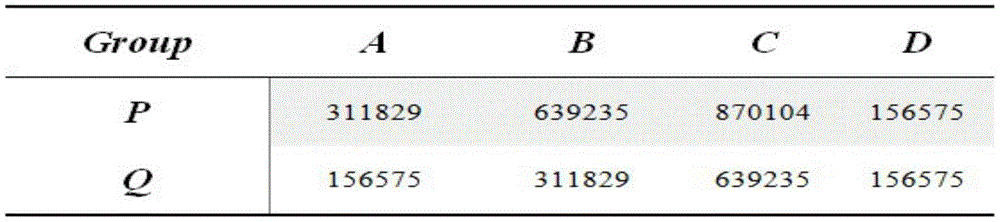

TABLE 4 Point cloud data set

The fusion result analysis of different point sets is carried out, a map fusion result graph is obtained through experiments, and a table 4 shows four different sub-point cloud maps, wherein data represent the data size of the points in the point clouds. The result shows that when the point cloud data is increased, the method still has good fusion effect.

The results of the evaluation are given in fig. 2 and 3, where a + Improved represents the experiment of the a group data based on the modified ICP algorithm, and other expressions are similar. From experimental result analysis, compared with the traditional fusion mode, the method provided by the invention has the advantages that the iteration times are reduced, the convergence rate is shortened, and a map fusion result meeting the error requirement can be obtained.

The invention also discloses an ICP point cloud map fusion system based on multi-unmanned aerial vehicle cooperation, which comprises the following steps:

an extraction module: the method comprises the steps of firstly, extracting key points from two data sets according to the same key point selection standard, wherein the two data sets are two point cloud maps with overlapped areas and respectively marked as a point cloud P and a point cloud Q, wherein the point cloud P is a target point cloud, and the point cloud Q is a reference point cloud;

a calculation module: respectively calculating the feature descriptors of all the selected key points;

a processing module: the coordinate positions of the feature descriptors in the two data sets are combined, the corresponding relation of the feature descriptors and the coordinate positions of the feature descriptors is estimated on the basis of the similarity of the feature descriptors and the coordinate positions of the feature descriptors, and corresponding point pairs are;

a registration module: and the method is used for estimating rigid body transformation by utilizing the corresponding relation and completely registering, so that the point cloud Q is unified to a coordinate system which is the same as the point cloud P through coordinate transformation and is fused into a point cloud map.

In the processing step, the corresponding point pairs are preliminarily estimated using the SAC-IA algorithm.

A judging module is further included between the processing module and the registration module,

a judging module: the method comprises the steps of judging whether data is noisy or not, and if the data is noisy, removing corresponding point pairs of errors which have influence on registration;

in the registration module, the rigid body transformation is estimated by using the residual correct corresponding relation, and the complete registration is carried out, so that the point clouds Q are unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and a point cloud map is fused.

In the registration module, when the point cloud P and the point cloud Q under two different coordinate systems are subjected to coordinate transformation, rigid body transformation is realized through the following formula

qi′(x′,y′,z′)=Rqi(x,y,z)+t (1)

Wherein R is a rotation matrix, t is a translation matrix, respectively expressed as

t3×1=[txtytz]T(3)

Wherein α, β and gamma represent the rotation angles of the points along the x, y and z axes, respectively, and tx、ty、tzRespectively representing the translation amounts of the points along the x, y and z axes; point cloud P is the target point cloud, and P is { P ═ Pi|Pi∈R3,i=1,2, …, N, where the number of point clouds is N, point cloud Q is the reference point cloud, and Q is { Q ═ Q }i|Qi∈R3I is 1,2, …, M, the number of point clouds is M, the rotation transformation matrix is R, the translation transformation vector is t, and f (R, t) represents the error between the reference point set Q and the target point set P under the transformation matrix (R, t)

The problem of solving the optimal transformation matrix is converted into solving the optimal solution (R, t) that satisfies min (f (R, t)).

The invention also discloses an ICP point cloud map fusion device based on multi-unmanned aerial vehicle cooperation, which comprises the following components: a memory, a processor and a computer program stored on the memory, the computer program being configured to implement the steps of the ICP point cloud map fusion method of the invention when invoked by the processor.

The invention also discloses a computer readable storage medium storing a computer program configured to implement the steps of the ICP point cloud map fusion method of the invention when invoked by a processor.

The invention provides a multi-unmanned aerial vehicle-based cooperation mode aiming at the real-time positioning and map construction under the unknown environment of multiple unmanned aerial vehicles, and a fused global map is obtained by applying an improved ICP point cloud fusion algorithm after a local point cloud map is obtained.

Aiming at the defects of low convergence speed of the traditional initial fusion and ICP algorithm, the invention provides an improvement of the algorithm based on a kd-tree closest point search mode. According to the density degree of the point cloud, the feature points can be effectively extracted and the nearest neighbor points can be searched. And meanwhile, an included angle threshold value of the normal vector is also set to remove wrong matching point pairs, so that the time cost of the algorithm in the direction of wrong iteration is avoided. Finally, the invention utilizes the intelligent agent carrying the SLAM algorithm to construct the three-dimensional point cloud map of the indoor environment for carrying out the fusion experiment. The result shows that for a large-scale point cloud data map, the method can improve the convergence speed of the ICP algorithm while having higher precision, and the research of the method has certain application value.

The foregoing is a more detailed description of the invention in connection with specific preferred embodiments and it is not intended that the invention be limited to these specific details. For those skilled in the art to which the invention pertains, several simple deductions or substitutions can be made without departing from the spirit of the invention, and all shall be considered as belonging to the protection scope of the invention.

Claims (10)

1. An ICP point cloud map fusion method based on multi-unmanned aerial vehicle cooperation is characterized by comprising the following steps of:

the extraction step comprises: firstly, extracting key points from two data sets according to the same key point selection standard, wherein the two data sets are two point cloud maps with overlapped areas and are respectively marked as a point cloud P and a point cloud Q, wherein the point cloud P is a target point cloud, and the point cloud Q is a reference point cloud;

a calculation step: respectively calculating feature descriptors of all selected key points;

the processing steps are as follows: estimating the corresponding relation of the coordinate positions of the feature descriptors in the two data sets by combining the coordinate positions of the feature descriptors and the coordinate positions of the feature descriptors;

a registration step: and estimating rigid body transformation by utilizing the corresponding relation, and completely registering, so that the point cloud Q is unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and is fused into a point cloud map.

2. An ICP point cloud map fusion method according to claim 1, wherein in the processing step, the corresponding point pairs are preliminarily estimated using a SAC-IA algorithm.

3. The ICP point cloud map fusion method of claim 1, further comprising a step of determining between said processing step and said registering step,

a judging step: judging whether the data has noise, if so, removing the wrong corresponding point pairs which have influence on the registration;

in the registration step, the rigid body transformation is estimated by using the residual correct corresponding relation, and the complete registration is carried out, so that the point clouds Q are unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and a point cloud map is fused.

4. The ICP point cloud map fusion method according to claim 1, wherein in the registration step, when the point cloud P and the point cloud Q under two different coordinate systems are subjected to coordinate transformation, rigid body transformation is realized by the following formula

qi′(x′,y′,z′)=Rqi(x,y,z)+t (1)

Wherein R is a rotation matrix, t is a translation matrix, respectively expressed as

t3×1=[txtytz]T(3)

Wherein α, β, γ represent rotations of a point along the x, y, z axes, respectivelyAngle of rotation, tx、ty、tzRespectively representing the translation amounts of the points along the x, y and z axes; point cloud P is the target point cloud, and P is { P ═ Pi|Pi∈R3I is 1,2, …, N, the number of point clouds is N, the point cloud Q is the reference point cloud, Q is Q ═ Qi|Qi∈R3I is 1,2, …, M, the number of point clouds is M, the rotation transformation matrix is R, the translation transformation vector is t, and f (R, t) represents the error between the reference point set Q and the target point set P under the transformation matrix (R, t)

The problem of solving the optimal transformation matrix is converted into solving the optimal solution (R, t) that satisfies min (f (R, t)).

5. The utility model provides a ICP point cloud map fusion system based on cooperation of many unmanned aerial vehicles which characterized in that includes:

an extraction module: the method comprises the steps of firstly, extracting key points from two data sets according to the same key point selection standard, wherein the two data sets are two point cloud maps with overlapped areas and respectively marked as a point cloud P and a point cloud Q, wherein the point cloud P is a target point cloud, and the point cloud Q is a reference point cloud;

a calculation module: respectively calculating the feature descriptors of all the selected key points;

a processing module: the coordinate positions of the feature descriptors in the two data sets are combined, the corresponding relation of the feature descriptors and the coordinate positions of the feature descriptors is estimated on the basis of the similarity of the feature descriptors and the coordinate positions of the feature descriptors, and corresponding point pairs are;

a registration module: and the method is used for estimating rigid body transformation by utilizing the corresponding relation and completely registering, so that the point cloud Q is unified to a coordinate system which is the same as the point cloud P through coordinate transformation and is fused into a point cloud map.

6. An ICP point cloud map fusion system according to claim 5, wherein: in the processing step, the corresponding point pairs are preliminarily estimated using the SAC-IA algorithm.

7. An ICP point cloud map fusion system according to claim 5, wherein: a judging module is further included between the processing module and the registration module,

a judging module: the method comprises the steps of judging whether data is noisy or not, and if the data is noisy, removing corresponding point pairs of errors which have influence on registration;

in the registration module, the rigid body transformation is estimated by using the residual correct corresponding relation, and the complete registration is carried out, so that the point clouds Q are unified to a coordinate system which is the same as the point cloud P through coordinate transformation, and a point cloud map is fused.

8. The ICP point cloud map fusion system of claim 5, wherein in the registration module, when the point cloud P and the point cloud Q under two different coordinate systems are subjected to coordinate transformation, rigid body transformation is realized through the following formula

qi′(x′,y′,z′)=Rqi(x,y,z)+t (1)

Wherein R is a rotation matrix, t is a translation matrix, respectively expressed as

t3×1=[txtytz]T(3)

Wherein α, β and gamma represent the rotation angles of the points along the x, y and z axes, respectively, and tx、ty、tzRespectively representing the translation amounts of the points along the x, y and z axes; point cloud P is the target point cloud, and P is { P ═ Pi|Pi∈R3I is 1,2, …, N, the number of point clouds is N, the point cloud Q is the reference point cloud, Q is Q ═ Qi|Qi∈R3I is 1,2, …, M, the number of point clouds is M, the rotation transformation matrix is R, the translation transformation vector is t, and f (R, t) represents the error between the reference point set Q and the target point set P under the transformation matrix (R, t)

The problem of solving the optimal transformation matrix is converted into solving the optimal solution (R, t) that satisfies min (f (R, t)).

9. The utility model provides a ICP point cloud map fusion device based on cooperation of many unmanned aerial vehicles which characterized in that: the method comprises the following steps: a memory, a processor and a computer program stored on the memory, the computer program being configured to carry out the steps of the ICP point cloud map fusion method of any one of claims 1-4 when invoked by the processor.

10. A computer-readable storage medium characterized by: the computer readable storage medium stores a computer program configured to, when invoked by a processor, implement the steps of the ICP point cloud map fusion method of any one of claims 1-4.

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201911155928.6A CN110930495A (en) | 2019-11-22 | 2019-11-22 | Multi-unmanned aerial vehicle cooperation-based ICP point cloud map fusion method, system, device and storage medium |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201911155928.6A CN110930495A (en) | 2019-11-22 | 2019-11-22 | Multi-unmanned aerial vehicle cooperation-based ICP point cloud map fusion method, system, device and storage medium |

Publications (1)

| Publication Number | Publication Date |

|---|---|

| CN110930495A true CN110930495A (en) | 2020-03-27 |

Family

ID=69851657

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |

|---|---|---|---|

| CN201911155928.6A Pending CN110930495A (en) | 2019-11-22 | 2019-11-22 | Multi-unmanned aerial vehicle cooperation-based ICP point cloud map fusion method, system, device and storage medium |

Country Status (1)

| Country | Link |

|---|---|

| CN (1) | CN110930495A (en) |

Cited By (22)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN111553410A (en) * | 2020-04-27 | 2020-08-18 | 哈尔滨工程大学 | Point cloud identification method based on key point local curved surface feature histogram and spatial relationship |

| CN111580554A (en) * | 2020-05-13 | 2020-08-25 | 东南大学 | Indoor unmanned aerial vehicle formation flying method based on frame-by-frame identification and generation of original point cloud |

| CN111652085A (en) * | 2020-05-14 | 2020-09-11 | 东莞理工学院 | Object recognition method based on the combination of 2D and 3D features |

| CN111815684A (en) * | 2020-06-12 | 2020-10-23 | 武汉中海庭数据技术有限公司 | Space multivariate feature registration optimization method and device based on unified residual error model |

| CN111915661A (en) * | 2020-07-24 | 2020-11-10 | 广州大学 | Point cloud registration method, system and computer readable storage medium based on RANSAC algorithm |

| CN112000130A (en) * | 2020-09-07 | 2020-11-27 | 哈尔滨工业大学 | Unmanned aerial vehicle's multimachine cooperation high accuracy is built and is drawn positioning system |

| CN112161635A (en) * | 2020-09-22 | 2021-01-01 | 中山大学 | Cooperative graph building method based on minimum loop detection |

| CN112183285A (en) * | 2020-09-22 | 2021-01-05 | 合肥科大智能机器人技术有限公司 | 3D point cloud map fusion method and system for transformer substation inspection robot |

| CN112435166A (en) * | 2020-11-27 | 2021-03-02 | 广东电网有限责任公司肇庆供电局 | Unmanned aerial vehicle flight strip splicing method and equipment, storage medium and processing device |

| CN113160287A (en) * | 2021-03-17 | 2021-07-23 | 华中科技大学 | Complex component point cloud splicing method and system based on feature fusion |

| CN113192114A (en) * | 2021-07-01 | 2021-07-30 | 四川大学 | Blade multi-field point cloud registration method based on overlapping features and local distance constraint |

| CN113506368A (en) * | 2021-07-13 | 2021-10-15 | 阿波罗智能技术(北京)有限公司 | Map data fusion method, map data fusion device, electronic device, map data fusion medium, and program product |

| CN113706591A (en) * | 2021-07-30 | 2021-11-26 | 华东理工大学 | Point cloud-based surface weak texture satellite three-dimensional reconstruction method |

| CN114511673A (en) * | 2022-01-26 | 2022-05-17 | 哈尔滨工程大学 | Improved ICP-based seabed local environment preliminary construction method |

| CN114549601A (en) * | 2022-02-11 | 2022-05-27 | 中国科学院精密测量科学与技术创新研究院 | Landslide multi-temporal TLS point cloud precise registration method considering point pair reliability |

| CN114742141A (en) * | 2022-03-30 | 2022-07-12 | 成都九洲迪飞科技有限责任公司 | Multi-source information data fusion studying and judging method based on ICP point cloud |

| CN114861014A (en) * | 2022-04-25 | 2022-08-05 | 中国第一汽车股份有限公司 | Data processing method, data processing device and computer readable storage medium |

| CN115082547A (en) * | 2022-07-27 | 2022-09-20 | 深圳市华汉伟业科技有限公司 | Profile measuring method based on point cloud data and storage medium |

| WO2022227096A1 (en) * | 2021-04-30 | 2022-11-03 | 深圳市大疆创新科技有限公司 | Point cloud data processing method, and device and storage medium |

| CN116630424A (en) * | 2023-05-30 | 2023-08-22 | 深圳海星智驾科技有限公司 | Tray detection method, device, computer equipment and storage medium |

| CN118229776A (en) * | 2024-02-06 | 2024-06-21 | 中国科学院空天信息创新研究院 | Point cloud map lightweight and matching auxiliary positioning method, system, device and medium |

| CN119848764A (en) * | 2024-12-24 | 2025-04-18 | 华中科技大学 | Cross-scale data fusion method, device, equipment and storage medium based on characteristic region |

Citations (4)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN106296693A (en) * | 2016-08-12 | 2017-01-04 | 浙江工业大学 | Based on 3D point cloud FPFH feature real-time three-dimensional space-location method |

| CN106780459A (en) * | 2016-12-12 | 2017-05-31 | 华中科技大学 | A kind of three dimensional point cloud autoegistration method |

| CN107818598A (en) * | 2017-10-20 | 2018-03-20 | 西安电子科技大学昆山创新研究院 | A kind of three-dimensional point cloud map amalgamation method of view-based access control model correction |

| CN108830902A (en) * | 2018-04-19 | 2018-11-16 | 江南大学 | A kind of workpiece identification at random and localization method based on points cloud processing |

-

2019

- 2019-11-22 CN CN201911155928.6A patent/CN110930495A/en active Pending

Patent Citations (4)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN106296693A (en) * | 2016-08-12 | 2017-01-04 | 浙江工业大学 | Based on 3D point cloud FPFH feature real-time three-dimensional space-location method |

| CN106780459A (en) * | 2016-12-12 | 2017-05-31 | 华中科技大学 | A kind of three dimensional point cloud autoegistration method |

| CN107818598A (en) * | 2017-10-20 | 2018-03-20 | 西安电子科技大学昆山创新研究院 | A kind of three-dimensional point cloud map amalgamation method of view-based access control model correction |

| CN108830902A (en) * | 2018-04-19 | 2018-11-16 | 江南大学 | A kind of workpiece identification at random and localization method based on points cloud processing |

Non-Patent Citations (1)

| Title |

|---|

| HAO LI ET.AL: "A ICP-Improved Point Cloud Maps Fusion Algorithm with Multi-UAV Collaboration" * |

Cited By (35)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN111553410A (en) * | 2020-04-27 | 2020-08-18 | 哈尔滨工程大学 | Point cloud identification method based on key point local curved surface feature histogram and spatial relationship |

| CN111553410B (en) * | 2020-04-27 | 2022-10-28 | 哈尔滨工程大学 | Point cloud recognition method based on key point local surface feature histogram and spatial relationship |

| CN111580554A (en) * | 2020-05-13 | 2020-08-25 | 东南大学 | Indoor unmanned aerial vehicle formation flying method based on frame-by-frame identification and generation of original point cloud |

| CN111652085A (en) * | 2020-05-14 | 2020-09-11 | 东莞理工学院 | Object recognition method based on the combination of 2D and 3D features |

| CN111815684A (en) * | 2020-06-12 | 2020-10-23 | 武汉中海庭数据技术有限公司 | Space multivariate feature registration optimization method and device based on unified residual error model |

| CN111815684B (en) * | 2020-06-12 | 2022-08-02 | 武汉中海庭数据技术有限公司 | Space multivariate feature registration optimization method and device based on unified residual error model |

| CN111915661A (en) * | 2020-07-24 | 2020-11-10 | 广州大学 | Point cloud registration method, system and computer readable storage medium based on RANSAC algorithm |

| CN111915661B (en) * | 2020-07-24 | 2023-08-08 | 广州大学 | Point cloud registration method, system and computer readable storage medium based on RANSAC algorithm |

| CN112000130A (en) * | 2020-09-07 | 2020-11-27 | 哈尔滨工业大学 | Unmanned aerial vehicle's multimachine cooperation high accuracy is built and is drawn positioning system |

| CN112183285A (en) * | 2020-09-22 | 2021-01-05 | 合肥科大智能机器人技术有限公司 | 3D point cloud map fusion method and system for transformer substation inspection robot |

| CN112161635A (en) * | 2020-09-22 | 2021-01-01 | 中山大学 | Cooperative graph building method based on minimum loop detection |

| CN112161635B (en) * | 2020-09-22 | 2022-07-05 | 中山大学 | A Cooperative Mapping Method Based on Minimum Loop Closure Detection |

| CN112183285B (en) * | 2020-09-22 | 2022-07-12 | 合肥科大智能机器人技术有限公司 | 3D point cloud map fusion method and system for transformer substation inspection robot |

| CN112435166A (en) * | 2020-11-27 | 2021-03-02 | 广东电网有限责任公司肇庆供电局 | Unmanned aerial vehicle flight strip splicing method and equipment, storage medium and processing device |

| CN113160287A (en) * | 2021-03-17 | 2021-07-23 | 华中科技大学 | Complex component point cloud splicing method and system based on feature fusion |

| CN113160287B (en) * | 2021-03-17 | 2022-04-22 | 华中科技大学 | Complex component point cloud splicing method and system based on feature fusion |

| WO2022227096A1 (en) * | 2021-04-30 | 2022-11-03 | 深圳市大疆创新科技有限公司 | Point cloud data processing method, and device and storage medium |

| CN113192114A (en) * | 2021-07-01 | 2021-07-30 | 四川大学 | Blade multi-field point cloud registration method based on overlapping features and local distance constraint |

| CN113192114B (en) * | 2021-07-01 | 2021-09-03 | 四川大学 | Blade multi-field point cloud registration method based on overlapping features and local distance constraint |

| CN113506368A (en) * | 2021-07-13 | 2021-10-15 | 阿波罗智能技术(北京)有限公司 | Map data fusion method, map data fusion device, electronic device, map data fusion medium, and program product |

| CN113706591A (en) * | 2021-07-30 | 2021-11-26 | 华东理工大学 | Point cloud-based surface weak texture satellite three-dimensional reconstruction method |

| CN113706591B (en) * | 2021-07-30 | 2024-03-19 | 华东理工大学 | Point cloud-based three-dimensional reconstruction method for surface weak texture satellite |

| CN114511673A (en) * | 2022-01-26 | 2022-05-17 | 哈尔滨工程大学 | Improved ICP-based seabed local environment preliminary construction method |

| CN114511673B (en) * | 2022-01-26 | 2022-12-09 | 哈尔滨工程大学 | A Preliminary Construction Method of Seabed Local Environment Based on Improved ICP |

| CN114549601A (en) * | 2022-02-11 | 2022-05-27 | 中国科学院精密测量科学与技术创新研究院 | Landslide multi-temporal TLS point cloud precise registration method considering point pair reliability |

| CN114549601B (en) * | 2022-02-11 | 2023-03-28 | 中国科学院精密测量科学与技术创新研究院 | Landslide multi-temporal TLS point cloud fine registration method considering point pair reliability |

| CN114742141A (en) * | 2022-03-30 | 2022-07-12 | 成都九洲迪飞科技有限责任公司 | Multi-source information data fusion studying and judging method based on ICP point cloud |

| CN114861014A (en) * | 2022-04-25 | 2022-08-05 | 中国第一汽车股份有限公司 | Data processing method, data processing device and computer readable storage medium |

| CN115082547B (en) * | 2022-07-27 | 2022-11-15 | 深圳市华汉伟业科技有限公司 | Profile measuring method based on point cloud data and storage medium |

| CN115082547A (en) * | 2022-07-27 | 2022-09-20 | 深圳市华汉伟业科技有限公司 | Profile measuring method based on point cloud data and storage medium |

| CN116630424A (en) * | 2023-05-30 | 2023-08-22 | 深圳海星智驾科技有限公司 | Tray detection method, device, computer equipment and storage medium |

| CN118229776A (en) * | 2024-02-06 | 2024-06-21 | 中国科学院空天信息创新研究院 | Point cloud map lightweight and matching auxiliary positioning method, system, device and medium |

| CN118229776B (en) * | 2024-02-06 | 2025-05-02 | 中国科学院空天信息创新研究院 | Point cloud map lightweight and matching auxiliary positioning method, system, device and medium |

| CN119848764A (en) * | 2024-12-24 | 2025-04-18 | 华中科技大学 | Cross-scale data fusion method, device, equipment and storage medium based on characteristic region |

| CN119848764B (en) * | 2024-12-24 | 2025-09-30 | 华中科技大学 | Cross-scale data fusion method, device, equipment and storage medium based on characteristic region |

Similar Documents

| Publication | Publication Date | Title |

|---|---|---|

| CN110930495A (en) | Multi-unmanned aerial vehicle cooperation-based ICP point cloud map fusion method, system, device and storage medium | |

| CN114526745B (en) | Drawing construction method and system for tightly coupled laser radar and inertial odometer | |

| CN113587933B (en) | An indoor mobile robot positioning method based on branch and bound algorithm | |

| CN113168717A (en) | Point cloud matching method and device, navigation method and equipment, positioning method and laser radar | |

| Cai et al. | Mobile robot localization using gps, imu and visual odometry | |

| CN108759833A (en) | A kind of intelligent vehicle localization method based on priori map | |

| CN115775242A (en) | A matching-based point cloud map quality assessment method | |

| CN114219022B (en) | Multi-sensor multi-target tracking method combining cluster analysis and particle swarm optimization algorithm | |

| CN109974693A (en) | UAV positioning method, device, computer equipment and storage medium | |

| CN113960614B (en) | A method for constructing elevation maps based on frame-map matching | |

| CN112444246B (en) | Laser fusion positioning method in high-precision digital twin scene | |

| CN116679314A (en) | Method and system for synchronous mapping and positioning of 3D laser radar based on fusion of point cloud intensity | |

| CN113920180B (en) | Point cloud registration optimization method based on normal distribution transformation hypothesis verification | |

| CN118067108A (en) | Construction and positioning method and system based on laser radar-inertial navigation-vision fusion | |

| CN113554705A (en) | A robust localization method for lidar in changing scenes | |

| CN118501847A (en) | Ping Miandian cloud clustering voxel-based three-dimensional normal distribution transformation positioning method, device, medium and product | |

| CN118310531A (en) | Cross-scene positioning method and system for robot with coarse-to-fine point cloud registration | |

| CN117213470B (en) | A multi-machine fragment map aggregation and update method and system | |

| JP2023533140A (en) | How to improve location estimation accuracy for self-driving cars | |

| Xue et al. | Visual-marker-based localization for flat-variation scene | |

| CN117889861A (en) | A robot vision global positioning method guided by laser ranging | |

| CN117930254A (en) | Unmanned aerial vehicle laser radar repositioning method and system based on structured information assistance | |

| CN117687006A (en) | External parameter calibration method and device from laser radar to inertial measurement unit | |

| CN117191023A (en) | Relative positioning method of unmanned system cluster based on extended Kalman filter | |

| CN117109568B (en) | Inertial/multi-dimensional vision joint positioning method |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| PB01 | Publication | ||

| PB01 | Publication | ||

| SE01 | Entry into force of request for substantive examination | ||

| SE01 | Entry into force of request for substantive examination | ||

| RJ01 | Rejection of invention patent application after publication |

Application publication date: 20200327 |

|

| RJ01 | Rejection of invention patent application after publication |