CN110245678B - An Image Matching Method Based on Heterogeneous Siamese Region Selection Network - Google Patents

- Publication number

- CN110245678B (application CN201910376172.1A)

- Authority

- CN

- China

- Prior art keywords

- map

- network

- feature

- heterogeneous

- convolution

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/24—Classification techniques

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Data Mining & Analysis (AREA)

- Evolutionary Computation (AREA)

- Life Sciences & Earth Sciences (AREA)

- Artificial Intelligence (AREA)

- General Engineering & Computer Science (AREA)

- Computing Systems (AREA)

- Software Systems (AREA)

- Molecular Biology (AREA)

- Computational Linguistics (AREA)

- Biophysics (AREA)

- Biomedical Technology (AREA)

- Mathematical Physics (AREA)

- General Health & Medical Sciences (AREA)

- Health & Medical Sciences (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Bioinformatics & Computational Biology (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Evolutionary Biology (AREA)

- Multimedia (AREA)

- Image Analysis (AREA)

Abstract

The invention discloses an image matching method based on a heterogeneous Siamese region selection network, belonging to the field of computer vision. The network comprises a heterogeneous Siamese network and a region matching network connected in series. The heterogeneous Siamese network extracts the feature map of the template image and the feature map of the image to be matched; the region matching network obtains a region matching result from these two feature maps. The heterogeneous Siamese network comprises sub-network A and sub-network B connected in parallel; each sub-network consists of a feature extraction module, a feature fusion module and a max pooling module connected in series. The modules of the two sub-networks are identical except that the convolution kernels of the first convolutional layer of the feature extraction module differ. By applying the heterogeneous Siamese region selection network to image matching, the invention accepts template images and images to be matched of non-fixed scale, makes full use of multi-layer image features, effectively improves the performance of the matching method, and increases the matching success rate and speed.

Description

Technical Field

The invention belongs to the field of computer vision and, more particularly, relates to an image matching method based on a heterogeneous Siamese region selection network.

Background

Image matching is the process of searching one image (or a batch of images) for images or image regions (sub-images) similar to a given scene region image. The known scene region image is usually called the template image, and a sub-image of the searched image that may correspond to it is called the scene region image to be matched. Image matching finds correspondences between two or more images of the same scene taken at different times or from different viewpoints; applications include target or scene recognition, recovering 3D structure from multiple images, stereo correspondence and motion tracking.

At present, most image matching algorithms use only shallow hand-crafted features, such as grayscale and gradient features. Because of changes in capture time, capture angle and natural environment, as well as defects and noise of the sensor itself, captured images exhibit grayscale distortion and geometric distortion, and the scene can change considerably between images. The template image and the image to be matched therefore differ to some degree, and shallow features often fail. As a result, template preparation currently requires a great deal of manual labor, the procedure is complicated, and efficiency is low. In addition, most current deep neural networks require large amounts of data and often cannot recognize sample types that are rare in the training set, that is, the few-shot and one-shot cases.

Summary of the Invention

In view of the defects of the prior art, the object of the invention is to solve the technical problems that prior-art image matching algorithms can hardly adapt to changes in imaging viewpoint and scale, have weak scene adaptability and anti-interference capability, and have a low matching success rate.

To achieve the above object, in a first aspect, an embodiment of the invention provides a heterogeneous Siamese region selection network comprising a heterogeneous Siamese network and a region matching network connected in series;

the heterogeneous Siamese network is used to extract the feature map of the template image and the feature map of the image to be matched;

the region matching network is used to obtain a region matching result from the feature map of the template image and the feature map of the image to be matched;

the heterogeneous Siamese network comprises sub-network A and sub-network B connected in parallel; each sub-network consists of a feature extraction module, a feature fusion module and a max pooling module connected in series; the two sub-networks differ only in the convolution kernels of the first convolutional layer of the feature extraction module, and all other modules are identical.

Specifically, the feature extraction module extracts the feature map of the input image, the feature fusion module fuses the convolutional features of the last three layers of the feature extraction module, and the max pooling module normalizes the scale of the fused features.

Specifically, the feature extraction module replaces the second layer of ResNet18 with a convolution.

Specifically, the feature extraction module replaces the last layer of ResNet18 with a convolutional layer.

Specifically, the region matching network comprises a feature division module, a classification module and a position regression module; the classification module and the position regression module are connected in parallel, operate in parallel, and are connected in series after the feature division module;

the feature division module comprises a first convolution, a second convolution, a third convolution and a fourth convolution;

the first convolution extracts the template classification feature map from the feature map of the template image;

the second convolution extracts the template position feature map from the feature map of the template image;

the third convolution extracts the classification feature map of the image to be matched from the feature map of the image to be matched;

the fourth convolution extracts the position feature map of the image to be matched from the feature map of the image to be matched;

the classification module uses the template classification feature map as a convolution kernel, convolves it with the classification feature map of the image to be matched, and outputs the matching class;

the position regression module uses the template position feature map as a convolution kernel, convolves it with the position feature map of the image to be matched, and outputs the matched position.

In a second aspect, an embodiment of the invention provides an image matching method based on the heterogeneous Siamese region selection network of the first aspect, comprising the following steps:

S1. Train the heterogeneous Siamese region selection network with training samples, where each training sample is a pair consisting of a template image and an image to be matched, and its label is the position of the region in the image to be matched that corresponds to the template image;

S2. Input the sample under test into the trained heterogeneous Siamese region selection network and output its matching result.

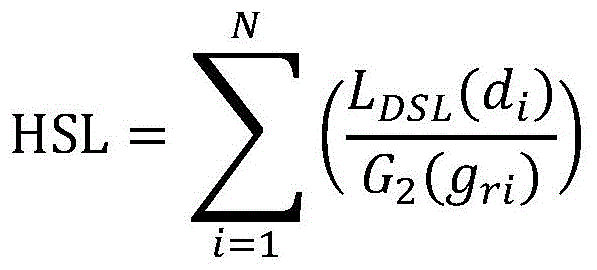

Specifically, the total loss function during training is HCE + HSL;

$$d = |t - t^*| = |(x_o - x_G) + (y_o - y_G) + (w_o - w_G) + (h_o - h_G)|$$

where HCE is the classification loss, HSL is the position regression loss, $p$ is the predicted probability that the sample class is positive, $p^*$ is the corresponding label, $N$ is the total number of samples, $t$ is the sample position output by the network, and $t^*$ is the corresponding label, i.e., the actual position of the sample; $x$ and $y$ denote the horizontal and vertical coordinates, and $w$ and $h$ the width and length.

Specifically, if the intersection-over-union (IOU) between the output position and the actual label is greater than 0.7, then $p^* = 1$; otherwise $p^* = 0$.

Specifically, after step S1 and before step S2, the heterogeneous Siamese region selection network can further be optimized using the samples under test, as follows:

The pairs of template image and image to be matched in the test sample set are input into the network, and the IOU between each output and its label is computed. The IOU measures the probability of a successful match, which is used to evaluate the performance of the image matching network and to decide whether to continue training it.

In a third aspect, an embodiment of the invention provides a computer-readable storage medium storing a computer program which, when executed by a processor, implements the image matching method based on the heterogeneous Siamese region selection network of the second aspect.

In general, compared with the prior art, the above technical solutions conceived by the invention have the following beneficial effects:

1. The invention applies the heterogeneous Siamese region selection network to image matching and improves the network model to address problems found in practical use. The algorithm accepts template images and images to be matched of non-fixed scale and makes full use of multi-layer image feature information. This effectively improves the anti-interference capability of the matching method, adapts to changes in imaging viewpoint and scale, increases the matching success rate and speed, reduces the demands matching places on template quality, and supports matching under few-shot and one-shot conditions, which can greatly reduce labor costs in practice.

2. For training the network, the invention proposes a new loss function, namely an added balanced loss consisting of two terms: the balanced cross-entropy loss HCE and the balanced regression loss HSL. These two terms markedly improve the matching success rate of the network and effectively speed up its convergence.

Description of the Drawings

Figure 1 is a schematic diagram of training samples provided by an embodiment of the invention;

Figure 2 is a schematic diagram of test samples provided by an embodiment of the invention;

Figure 3 is a schematic diagram of the structure of the heterogeneous Siamese region selection network provided by an embodiment of the invention;

Figure 4 is a flowchart of an image matching method based on the heterogeneous Siamese region selection network provided by an embodiment of the invention;

Figure 5(a) is a template image under test provided by an embodiment of the invention;

Figure 5(b) is the matching result for a sample under test provided by an embodiment of the invention.

Detailed Description

To make the objects, technical solutions and advantages of the invention clearer, the invention is described in further detail below with reference to the drawings and embodiments. It should be understood that the specific embodiments described here serve only to explain the invention and do not limit it.

Sample generation

(1) Prepare $n$ different scenes $\{P_1, \ldots, P_i, \ldots, P_n\}$. Each scene $P_i$ contains several visible-light images $\{P_{i1}, \ldots, P_{ij}, \ldots, P_{iM}\}$, where $P_{ij}$ is the $j$-th image of scene $P_i$.

(2) For each image of the same scene, manually box-select regions $\{P_{ij1}, \ldots, P_{ijk}, \ldots, P_{ijs}\}$ in the scene, where $P_{ijk}$ denotes the $k$-th region of the $j$-th image of scene $P_i$. Across different images of the same scene, the regions differ in size, brightness, angle and so on, and undergo some deformation.

(3) From the visible-light images $\{P_{i1}, \ldots, P_{ij}, \ldots, P_{iM}\}$ of the same scene $P_i$, randomly select one image and crop its selected region $k$ as the template image; randomly select another image as the image to be matched, and mark the position of the region corresponding to $k$ in the image to be matched as the label (ground truth).

In the label corresponding to region $k$ of the $j$-th image of scene $P_i$, $x_G$ is the horizontal coordinate of the center of the region corresponding to $k$ in image $P_{ij}$, $y_G$ is the vertical coordinate of that center, $w_G$ is the width of the region, and $h_G$ is its length. All of these coordinates are pixel coordinates.

(4) The template image and its corresponding image to be matched are used as the network input in the form of an image pair.

This operation is repeated over all scenes $\{P_1, P_2, P_3, \ldots, P_n\}$. Some scenes are selected as the training sample set and the remaining scenes as the test sample set. To verify in testing how well the network adapts to few-shot and one-shot cases, the sample categories of the training set and the test set do not overlap: the training set contains none of the test set's categories. For example, if the test set contains aircraft and ships, the training set contains neither. Some of the training samples are shown in Figure 1, including the categories driver, car and pedestrian. Some of the test samples are shown in Figure 2, including the categories building, rabbit and aircraft.
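The pairing and split described in steps (3) and (4) can be sketched as follows. This is a minimal illustration, not the patent's implementation: the dictionary layout (`name`, `regions`, `category`) and the function names are hypothetical, and it assumes both images of a pair share at least one annotated region index.

```python
import random

def make_pair(scene_images, rng):
    """Build one (template, image-to-be-matched, label) sample from one scene.

    scene_images: list of dicts {"name": str, "regions": {k: (x, y, w, h)}}
    (hypothetical layout; boxes are center x, center y, width, length in pixels).
    """
    # Pick two different images of the same scene.
    template_img, search_img = rng.sample(scene_images, 2)
    # Choose a region index k that is annotated in both images.
    shared = sorted(set(template_img["regions"]) & set(search_img["regions"]))
    k = rng.choice(shared)
    return {
        "template": (template_img["name"], template_img["regions"][k]),  # crop box
        "search": search_img["name"],
        "label": search_img["regions"][k],  # ground-truth box in the search image
    }

def split_by_category(scenes, test_categories):
    """Split scenes so that training and test categories do not overlap."""
    train = [s for s in scenes if s["category"] not in test_categories]
    test = [s for s in scenes if s["category"] in test_categories]
    return train, test
```

Splitting by category rather than by image is what keeps the test set free of classes seen during training, which is the condition the text relies on to probe few-shot and one-shot behaviour.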

Heterogeneous Siamese Region Selection Network

As shown in Figure 3, the heterogeneous Siamese region selection network comprises a heterogeneous Siamese network and a region matching network connected in series. The heterogeneous Siamese network extracts the feature map of the template image and the feature map of the image to be matched, and the region matching network obtains the image matching result from these two feature maps. The network is only suitable for images from sensors in the same spectral band, for example a visible-light template image with a visible-light image to be matched; it is not suitable for cross-band matching, for example a SAR template image with a visible-light image to be matched.

The heterogeneous Siamese network comprises sub-network A and sub-network B connected in parallel, and each sub-network comprises a feature extraction module, a feature fusion module and a max pooling module connected in series. Because the template image and the image to be matched differ in imaging viewpoint and image scale, their features differ considerably, and extracting features with identical convolution kernels gives mediocre results. The convolution kernels of the first convolutional layer of the two sub-networks' feature extraction modules therefore differ, while all other modules are identical.

The feature extraction module extracts the feature map of the input image, the fusion module fuses the convolutional features of the last three layers of the feature extraction module, and the max pooling module normalizes the scale of the fused features.

In this embodiment of the invention, the feature extraction module uses a residual network structure, preferably ResNet18. Further, the second layer of ResNet18 is changed from a max pooling layer (downsampling) to a convolution, so that the network learns a suitable sampling kernel automatically; the last layer of ResNet18 is changed from a fully connected layer to a convolutional layer followed by the max pooling module, so that the network accepts input images of different sizes; and the parameters of the last three layers of ResNet18 are modified so that the feature maps of these three layers have exactly the same size. The modified network is called ResNet18v2.

In the feature fusion module, fusing multi-layer convolutional feature maps gives better results for multi-scale region matching. Let the last three feature layers of network A be Conv_A1, Conv_A2, Conv_A3 and the last three feature layers of network B be Conv_B1, Conv_B2, Conv_B3; the fused features Conv_A and Conv_B are then:

where $w_1 = 2$, $w_2 = 4$, $w_3 = 6$.
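The fusion equation is not reproduced in this text, so the sketch below rests on an assumption: that the three same-sized feature maps are combined as an element-wise weighted sum with the stated weights 2, 4 and 6. The function name `fuse_features` and the nested-list representation of a single-channel feature map are hypothetical, and the actual fusion rule may differ (for example, it may include normalization).

```python
def fuse_features(layers, weights=(2.0, 4.0, 6.0)):
    """Element-wise weighted sum of same-shaped 2-D feature maps.

    ASSUMPTION: weighted-sum fusion; the source does not show the formula.
    layers: sequence of 2-D lists with identical shapes, e.g. the last
    three feature layers of one sub-network.
    """
    if len(layers) != len(weights):
        raise ValueError("need exactly one weight per feature layer")
    rows, cols = len(layers[0]), len(layers[0][0])
    fused = [[0.0] * cols for _ in range(rows)]
    for layer, w in zip(layers, weights):
        for r in range(rows):
            for c in range(cols):
                fused[r][c] += w * layer[r][c]
    return fused
```

An element-wise combination of this kind is only possible because the last three layers were modified to produce feature maps of identical size.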

Block max pooling is applied to the feature maps so that the network can accept template images and images to be matched of arbitrary size, and to increase processing speed. Block max pooling means using max pooling to obtain a fixed-size feature map from feature maps of different sizes.
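One way to obtain a fixed-size output from inputs of varying size is to divide the input into an `out_h` by `out_w` grid of bins and take the maximum in each bin. The sketch below does this for a single-channel map stored as nested lists; the bin boundaries (floor for the start, ceiling for the end) are an assumption chosen so that every bin is non-empty, and the source does not specify how its blocks are laid out.

```python
import math

def block_max_pool(fmap, out_h, out_w):
    """Max-pool a 2-D feature map of any size down to out_h x out_w."""
    in_h, in_w = len(fmap), len(fmap[0])
    pooled = []
    for i in range(out_h):
        # Row range of bin i: [floor(i*H/out_h), ceil((i+1)*H/out_h))
        r0 = (i * in_h) // out_h
        r1 = math.ceil((i + 1) * in_h / out_h)
        row = []
        for j in range(out_w):
            c0 = (j * in_w) // out_w
            c1 = math.ceil((j + 1) * in_w / out_w)
            row.append(max(fmap[r][c]
                           for r in range(r0, r1)
                           for c in range(c0, c1)))
        pooled.append(row)
    return pooled
```

For example, a 4x4 map and a 3x5 map both reduce to 2x2, so the downstream matching layers always see inputs of the same shape.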

The region matching network comprises a feature division module, a classification module and a position regression module. The classification module and the position regression module are connected in parallel, operate in parallel, and are connected in series after the feature division module.

The feature division module comprises a first, second, third and fourth convolution. The first convolution extracts the template classification feature map from the feature map of the template image, the second convolution extracts the template position feature map from the feature map of the template image, the third convolution extracts the classification feature map of the image to be matched, and the fourth convolution extracts the position feature map of the image to be matched.

The classification module uses the template classification feature map as a convolution kernel, convolves it with the classification feature map of the image to be matched, and outputs the matching class, which is one of two classes: match or no match.

The position regression module uses the template position feature map as a convolution kernel, convolves it with the position feature map of the image to be matched, and outputs the matched position.
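Using the template feature map as a convolution kernel amounts to sliding it over the feature map of the image to be matched and recording a similarity score at each offset. The sketch below shows this for a single channel with plain nested lists; it is a simplification under stated assumptions (one channel, no padding, stride 1, and "convolution" taken in the deep-learning sense of cross-correlation without kernel flipping). The function names are hypothetical.

```python
def cross_correlate(search, kernel):
    """Slide `kernel` (template features) over `search` and return the response map."""
    sh, sw = len(search), len(search[0])
    kh, kw = len(kernel), len(kernel[0])
    response = []
    for i in range(sh - kh + 1):
        row = []
        for j in range(sw - kw + 1):
            row.append(sum(search[i + a][j + b] * kernel[a][b]
                           for a in range(kh)
                           for b in range(kw)))
        response.append(row)
    return response

def best_location(response):
    """Return the (row, col) offset with the highest response."""
    _, i, j = max((v, i, j)
                  for i, row in enumerate(response)
                  for j, v in enumerate(row))
    return i, j
```

In the network described above, the classification branch and the position regression branch each apply this kind of operation to their own pair of feature maps.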

Balanced loss function = classification loss HCE + position regression loss HSL

Classification loss HCE

Consider the simple binary cross-entropy loss:

where $x$ is the raw class output of the HES-RPN network, $p$ is the positive-class probability output by the network, with $p \in [0, 1]$, and $p^*$ is the corresponding label, with value 0 or 1. If the IOU between the output position and the actual label is greater than 0.7, then $p^* = 1$; otherwise $p^* = 0$.

Its gradient (derivative) with respect to $x$ is:
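The two equations referred to here are not reproduced in this text. For reference, the standard binary cross-entropy and its derivative are given below, under the assumption (not stated in the source) that $p$ is obtained from $x$ by the sigmoid function:

$$L_{CE}(p, p^*) = -p^* \log p - (1 - p^*) \log (1 - p), \qquad p = \frac{1}{1 + e^{-x}}$$

$$\frac{\partial L_{CE}}{\partial x} = p - p^*$$

Under that assumption the magnitude of the gradient for sample $i$ is $|p_i - p_i^*|$, which lies in $[0, 1]$.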

A gradient norm can then be defined for each sample $i$. Specifically, the range of the gradient norm is divided into a number of unit regions. For a sample whose gradient norm is $g$, its density is defined as the number of samples falling in its unit region divided by the length $\varepsilon$ of that unit region:

$\alpha$ is initially 1 and gradually rises to 2 as training proceeds. $k$ denotes sample $k$, $N$ is the total number of samples, and $\varepsilon$ is a small number, typically 0.01. The reciprocal of the gradient density is the weight by which a sample's loss is multiplied, giving the new classification loss:
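The density and loss equations are not reproduced in this text, so the following is only a sketch of the density-weighting idea as the prose describes it, not the patent's HCE. Assumptions, all labeled in the code: the gradient norm is $|p - p^*|$ (which holds for sigmoid outputs), the unit regions are equal-width bins of length `eps`, the weighted losses are averaged over the samples, and the role of $\alpha$ is omitted because the text does not show how it enters the formula.

```python
import math

def bce(p, label, tiny=1e-12):
    """Binary cross-entropy of one prediction p in [0, 1] against a 0/1 label."""
    q = p if label == 1 else 1.0 - p
    return -math.log(max(q, tiny))

def gradient_density(probs, labels, eps=0.01):
    """Density per sample: count of samples in its unit region divided by eps."""
    norms = [abs(p - y) for p, y in zip(probs, labels)]  # ASSUMED gradient norm
    last_bin = math.ceil(1.0 / eps) - 1
    bins = [min(int(g / eps), last_bin) for g in norms]  # ASSUMED equal-width bins
    counts = {}
    for b in bins:
        counts[b] = counts.get(b, 0) + 1
    return [counts[b] / eps for b in bins]

def density_weighted_bce(probs, labels, eps=0.01):
    """Mean of per-sample losses, each multiplied by 1 / density (alpha omitted)."""
    densities = gradient_density(probs, labels, eps)
    weighted = [bce(p, y) / d for p, y, d in zip(probs, labels, densities)]
    return sum(weighted) / len(weighted)
```

Samples whose gradient norms crowd into the same unit region receive a smaller weight, so a large population of similar (typically easy) samples does not dominate the update.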

Position regression loss HSL

The loss traditionally used for the regression branch is Smooth L1, a piecewise function:

When the deviation between sample and label is large, that is, when $|x| \ge 1$, the derivative of the Smooth L1 function is constant at 1 and its effect on the network parameter update is the same for all such samples, so the difficulty of individual samples cannot be distinguished. To solve this problem, the DSL loss is introduced:
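The piecewise equation is not reproduced in this text. The sketch below uses the commonly cited form of Smooth L1 (quadratic for $|x| < 1$, linear otherwise), which is assumed to be the one the passage refers to, and shows why its derivative cannot separate large deviations from very large ones.

```python
def smooth_l1(x):
    """Common form of Smooth L1: 0.5*x^2 if |x| < 1, else |x| - 0.5."""
    ax = abs(x)
    return 0.5 * x * x if ax < 1.0 else ax - 0.5

def smooth_l1_grad(x):
    """Derivative of smooth_l1: x inside (-1, 1), otherwise the sign of x."""
    if abs(x) < 1.0:
        return x
    return 1.0 if x > 0 else -1.0
```

A deviation of 2 and a deviation of 10 both yield a derivative of 1, so both samples push the parameters equally hard; this is the limitation the passage describes.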

$$d = |t - t^*| = |(x_o - x_G) + (y_o - y_G) + (w_o - w_G) + (h_o - h_G)|$$

$r$ marks the position regression loss, to distinguish it from the classification loss. The new position regression loss HSL is then expressed as:

where

$\beta$ is initially 1 and gradually rises to 1.5 as training proceeds. $u$ is a small number, typically 0.02. $t$ is the sample position output by the network: $x_o$ is the horizontal coordinate of the center of the region output by the heterogeneous Siamese region selection network in the image to be matched $P_{NM}$, $y_o$ is the vertical coordinate of that center, $w_o$ is the width of the output region, and $h_o$ is its length. $t^*$ is the corresponding label, i.e., the actual position of the sample.
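The deviation $d$ as written takes the absolute value of the sum of the four signed differences, not the sum of their absolute values. A short sketch makes the consequence visible; the function name and the `(x, y, w, h)` tuple layout are hypothetical.

```python
def position_deviation(output_box, label_box):
    """d = |(x_o - x_G) + (y_o - y_G) + (w_o - w_G) + (h_o - h_G)|.

    Boxes are (center x, center y, width, length) in pixels.
    """
    return abs(sum(o - g for o, g in zip(output_box, label_box)))
```

With this definition, `position_deviation((10, 10, 4, 4), (12, 9, 4, 5))` is 2, while `position_deviation((1, 0, 0, 0), (0, 1, 0, 0))` is 0: errors of opposite sign in different coordinates cancel. Whether that cancellation is intended is not stated in the text.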

As shown in Figure 4, an image matching method based on the heterogeneous Siamese region selection network comprises the following steps:

S1. Train the heterogeneous Siamese region selection network with training samples, where each training sample is a pair consisting of a template image and an image to be matched, and its label is the position of the region in the image to be matched that corresponds to the template image;

S2. Input the sample under test into the trained heterogeneous Siamese region selection network and output its matching result.

Image matching with the network: select any two images, of any category, as the template image and the image to be matched, and input them as an image pair into the trained heterogeneous Siamese region selection network to obtain the matching result.

Figure 5(a) is the template image under test, and Figure 5(b) is the matching result for the sample under test.

The same data set was matched using the heterogeneous Siamese region selection network of the invention and the prior-art methods SiameseFC, SiamRPN, SiamRPN++, grayscale cross-correlation and HOG matching; the matching accuracy and running time are shown in Table 1.

Table 1

The running time is the time required to complete one match with a template image of size 127×127 and an image to be matched of size 512×512.

After step S1 and before step S2, the heterogeneous Siamese region selection network can further be optimized using the samples under test.

The pairs of template image and image to be matched in the test sample set are input into the network, and the IOU between each output and its label is computed. The IOU measures the probability of a successful match, which is used to evaluate the performance of the image matching network and to decide whether to continue training it.

The IOU between the network output and the actual label is computed as follows:

$$oarea = w_o \cdot h_o$$

$$garea = w_G \cdot h_G$$
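Only the two area terms survive in this text; the remaining IOU equations are not reproduced. The sketch below fills in the usual intersection-over-union computation for axis-aligned boxes given as center coordinates, width and length, which matches how $x_o, y_o, w_o, h_o$ and $x_G, y_G, w_G, h_G$ are defined. The function name is hypothetical, and treating $w$ as the horizontal extent and $h$ as the vertical extent is an assumption.

```python
def iou_center(box_o, box_g):
    """IOU of two axis-aligned boxes given as (center x, center y, width, length)."""
    xo, yo, wo, ho = box_o
    xg, yg, wg, hg = box_g
    # Overlap along each axis, clamped at zero when the boxes do not intersect.
    inter_w = min(xo + wo / 2, xg + wg / 2) - max(xo - wo / 2, xg - wg / 2)
    inter_h = min(yo + ho / 2, yg + hg / 2) - max(yo - ho / 2, yg - hg / 2)
    inter = max(inter_w, 0.0) * max(inter_h, 0.0)
    oarea = wo * ho  # area of the network output box
    garea = wg * hg  # area of the ground-truth box
    union = oarea + garea - inter
    return inter / union if union > 0 else 0.0
```

The same function serves both thresholds mentioned in the text: above 0.7 to assign a positive label during training, and above 0.3 to count a test match as successful.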

An image whose IOU is greater than 0.3 is regarded as successfully matched. The matching success rate is the ratio of successfully matched images to all images; the higher the success rate, the better the matching performance.

Suppose the test set scenes contain $T$ images in total, of which $A$ images have an IOU greater than 0.3; the matching success rate SR is then:

$$SR = \frac{A}{T}$$

As training proceeds, if SR no longer rises, network performance is no longer improving and training stops; otherwise training continues.
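The evaluation loop described above can be sketched as follows. The function names are hypothetical, and the stopping rule is one simple reading of "SR no longer rises" (stop as soon as the latest SR does not exceed the best earlier value); the source does not say whether any patience or tolerance is used.

```python
def success_rate(ious, threshold=0.3):
    """Fraction of test images whose IOU is strictly greater than the threshold."""
    if not ious:
        return 0.0
    return sum(1 for v in ious if v > threshold) / len(ious)

def should_stop(sr_history):
    """True when the latest SR does not improve on the best earlier SR."""
    if len(sr_history) < 2:
        return False
    return sr_history[-1] <= max(sr_history[:-1])
```

For instance, `success_rate([0.1, 0.5, 0.31, 0.3])` is 0.5, since an IOU of exactly 0.3 does not count as a success.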

The above are only preferred specific embodiments of the present application, and the scope of protection of the present application is not limited to them. Any change or substitution that a person skilled in the art could readily conceive within the technical scope disclosed by the present application shall fall within its scope of protection. The scope of protection of the present application shall therefore be determined by the scope of protection of the claims.

Claims (8)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201910376172.1A CN110245678B (en) | 2019-05-07 | 2019-05-07 | An Image Matching Method Based on Heterogeneous Siamese Region Selection Network |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201910376172.1A CN110245678B (en) | 2019-05-07 | 2019-05-07 | An Image Matching Method Based on Heterogeneous Siamese Region Selection Network |

Publications (2)

| Publication Number | Publication Date |

|---|---|

| CN110245678A (en) | 2019-09-17 |

| CN110245678B (en) | 2021-10-08 |

Family

ID=67883642

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201910376172.1A (Active) CN110245678B (en) | 2019-05-07 | 2019-05-07 | An Image Matching Method Based on Heterogeneous Siamese Region Selection Network |

Country Status (1)

| Country | Link |

|---|---|

| CN (1) | CN110245678B (en) |

Families Citing this family (14)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN110807793B (en) * | 2019-09-29 | 2022-04-22 | 南京大学 | Target tracking method based on twin network |

| CN110705479A (en) * | 2019-09-30 | 2020-01-17 | 北京猎户星空科技有限公司 | Model training method, target recognition method, device, equipment and medium |

| US11625834B2 (en) * | 2019-11-08 | 2023-04-11 | Sony Group Corporation | Surgical scene assessment based on computer vision |

| CN111428875A (en) * | 2020-03-11 | 2020-07-17 | 北京三快在线科技有限公司 | Image recognition method and device and corresponding model training method and device |

| CN111401384B (en) * | 2020-03-12 | 2021-02-02 | 安徽南瑞继远电网技术有限公司 | Transformer equipment defect image matching method |

| CN111489361B (en) * | 2020-03-30 | 2023-10-27 | 中南大学 | Real-time visual target tracking method based on deep feature aggregation of twin network |

| CN111784644A (en) * | 2020-06-11 | 2020-10-16 | 上海布眼人工智能科技有限公司 | Printing defect detection method and system based on deep learning |

| CN112150467A (en) * | 2020-11-26 | 2020-12-29 | 支付宝(杭州)信息技术有限公司 | Method, system and device for determining quantity of goods |

| CN112785371A (en) * | 2021-01-11 | 2021-05-11 | 上海钧正网络科技有限公司 | Shared device position prediction method, device and storage medium |

| CN113705731A (en) * | 2021-09-23 | 2021-11-26 | 中国人民解放军国防科技大学 | End-to-end image template matching method based on twin network |

| CN114708578B (en) * | 2022-04-01 | 2025-08-22 | 南京地平线机器人技术有限公司 | Lip movement detection method, device, readable storage medium and electronic device |

| CN115202477A (en) * | 2022-07-07 | 2022-10-18 | 合肥安达创展科技股份有限公司 | AR (augmented reality) view interaction method and system based on heterogeneous twin network |

| CN115330876B (en) * | 2022-09-15 | 2023-04-07 | 中国人民解放军国防科技大学 | Target template graph matching and positioning method based on twin network and central position estimation |

| CN115861595B (en) * | 2022-11-18 | 2024-05-24 | 华中科技大学 | A multi-scale domain adaptive heterogeneous image matching method based on deep learning |

Citations (4)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| WO2017219515A1 (en) * | 2016-06-22 | 2017-12-28 | 中兴通讯股份有限公司 | Method for photographing focusing and electronic device |

| CN108388927A (en) * | 2018-03-26 | 2018-08-10 | 西安电子科技大学 | Small sample polarization SAR terrain classification method based on the twin network of depth convolution |

| CN109446889A (en) * | 2018-09-10 | 2019-03-08 | 北京飞搜科技有限公司 | Object tracking method and device based on twin matching network |

| CN109712121A (en) * | 2018-12-14 | 2019-05-03 | 复旦大学附属华山医院 | A kind of method, equipment and the device of the processing of medical image picture |

Family Cites Families (1)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| US9953217B2 (en) * | 2015-11-30 | 2018-04-24 | International Business Machines Corporation | System and method for pose-aware feature learning |


Non-Patent Citations (2)

| Title |

|---|

| High Performance Visual Tracking with Siamese Region Proposal Network;Bo Li et al.;《2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition》;20181217;第8971-8980页 * |

| 基于小样本学习的目标匹配研究;柳青林;《中国优秀硕士学位论文全文数据库 信息科技辑》;20190215;I138-1627 * |

Also Published As

| Publication number | Publication date |

|---|---|

| CN110245678A (en) | 2019-09-17 |

Similar Documents

| Publication | Title | Publication Date |

|---|---|---|

| CN110245678B (en) | An Image Matching Method Based on Heterogeneous Siamese Region Selection Network | |

| CN112884064B (en) | A method of target detection and recognition based on neural network | |

| CN110827251B (en) | Power transmission line locking pin defect detection method based on aerial image | |

| CN111428748B (en) | HOG feature and SVM-based infrared image insulator identification detection method | |

| CN108416307B (en) | Method, device and equipment for detecting pavement cracks of aerial images | |

| CN109034184B (en) | Grading ring detection and identification method based on deep learning | |

| CN109829914A (en) | The method and apparatus of testing product defect | |

| CN115294473A (en) | Insulator fault identification method and system based on target detection and instance segmentation | |

| CN107945204A (en) | A kind of Pixel-level portrait based on generation confrontation network scratches drawing method | |

| CN109558806A (en) | The detection method and system of high score Remote Sensing Imagery Change | |

| CN107133943A (en) | A kind of visible detection method of stockbridge damper defects detection | |

| CN108647655A (en) | Foreign object detection method for power lines in low-altitude aerial images based on light convolutional neural network | |

| CN109684922A (en) | A kind of recognition methods based on the multi-model of convolutional neural networks to finished product dish | |

| CN116363532A (en) | Traffic target detection method for UAV images based on attention mechanism and reparameterization | |

| CN110245711A (en) | The SAR target identification method for generating network is rotated based on angle | |

| CN111652273B (en) | Deep learning-based RGB-D image classification method | |

| CN113128308B (en) | Pedestrian detection method, device, equipment and medium in port scene | |

| CN112258490A (en) | Low-emissivity coating intelligent damage detection method based on optical and infrared image fusion | |

| CN113569981A (en) | A power inspection bird's nest detection method based on single-stage target detection network | |

| CN111199255A (en) | Small target detection network model and detection method based on dark net53 network | |

| CN108932712A (en) | A kind of rotor windings quality detecting system and method | |

| CN114694042A (en) | Disguised person target detection method based on improved Scaled-YOLOv4 | |

| CN110796716B (en) | An Image Colorization Method Based on Multiple Residual Networks and Regularized Transfer Learning | |

| CN111126173B (en) | High-precision face detection method | |

| CN114092441A (en) | A product surface defect detection method and system based on dual neural network |

Legal Events

| Code | Title | Description | Date |

|---|---|---|---|

| PB01 | Publication | ||

| SE01 | Entry into force of request for substantive examination | ||

| GR01 | Patent grant | ||

| GR01 | Patent grant |