Attorney Docket No. 2010358-0329

SYSTEMS AND METHODS FOR AUTOMATED CANCER STAGING AND RISK PREDICTION

CROSS-REFERENCE TO RELATED APPLICATIONS

[0001] This application claims priority to and benefit from U.S. Provisional Application No. 63/654,279, filed May 31, 2024, U.S. Non-Provisional Application No. 18/667,972, filed May 17, 2024, U.S. Provisional Application No. 63/606,794, filed December 6, 2023, and U.S. Provisional Application No. 63/540,339, filed September 25, 2023, the contents of each of which are incorporated by reference herein in their entirety. This application also claims priority to and benefit from U.S. Application No. 18/667,945, filed May 17, 2024, and U.S. Provisional Application No. 63/606,824, filed December 6, 2023, the contents of each of which are hereby incorporated by reference in their entirety. This application is related to U.S. Provisional Application No. 63/350,211, filed June 8, 2022, U.S. Provisional Application No. 63/458,031, filed April 7, 2023, U.S. Provisional Application No. 63/461,486, filed April 24, 2023, and U.S. Patent Application No. 18/207,246, filed June 8, 2023, the contents of each of which are hereby incorporated by reference in their entirety. This application is also related to U.S. Patent Application No. 16/734,609, filed January 6, 2020, and U.S. Patent Application No. 17/762,796, filed March 23, 2022, the contents of each of which are hereby incorporated by reference in their entirety.

FIELD

[0002] This invention relates generally to systems and methods for the creation, analysis, and/or presentation of medical image data. More particularly, in certain embodiments, the invention relates to systems and methods for automated analysis of medical images to identify and/or characterize cancerous lesions and/or to determine a prognosis or risk for a subject.

BACKGROUND

[0003] Prostate-specific membrane antigen (PSMA)-targeted positron emission tomography (PET) has recently been used for staging of patients with prostate cancer.
In particular, the PSMA binding agents

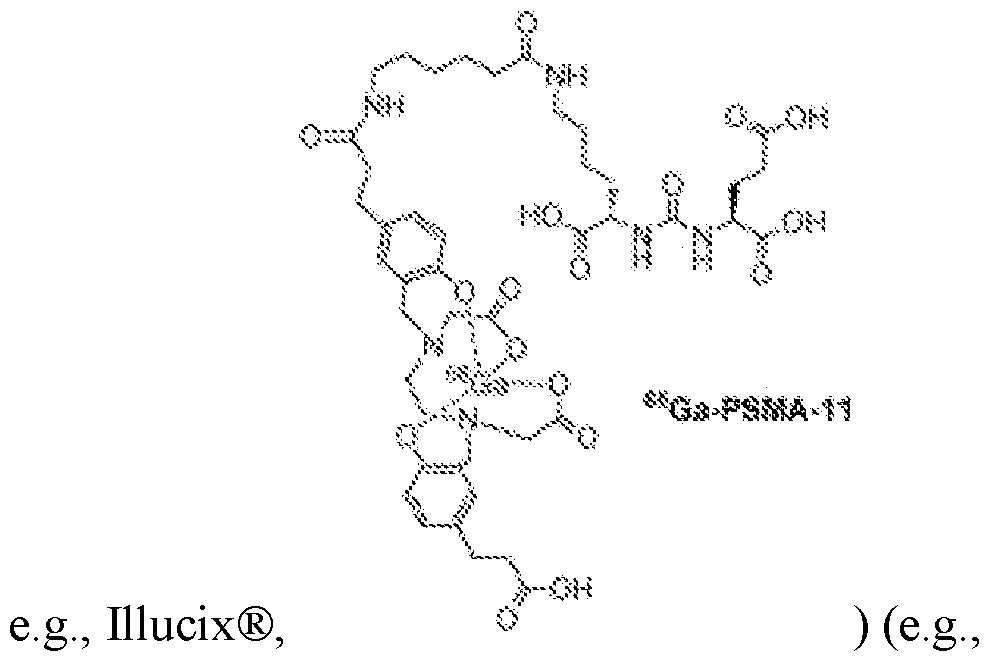

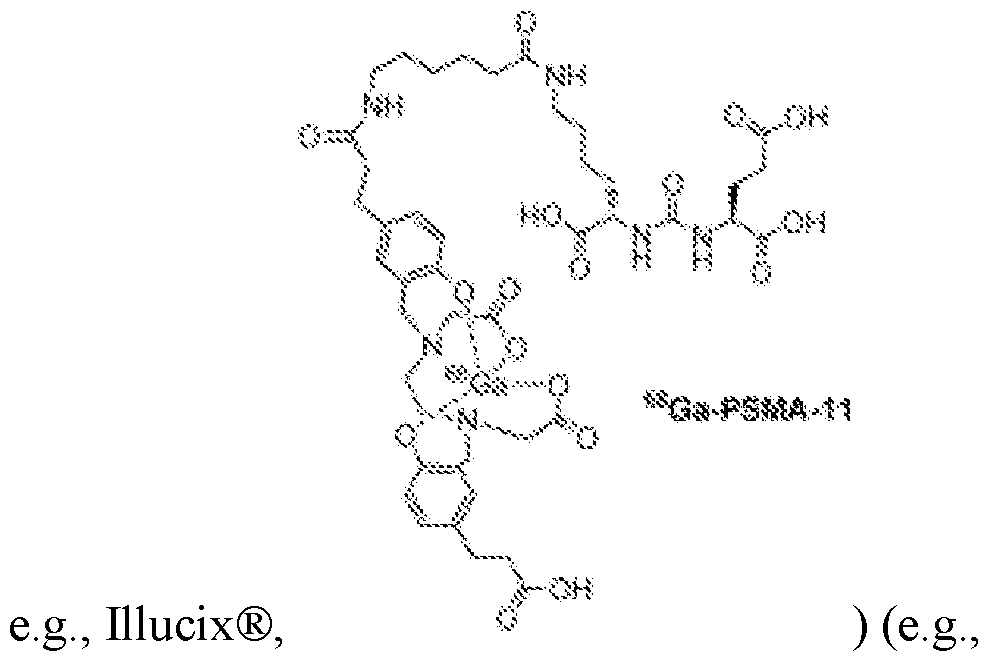

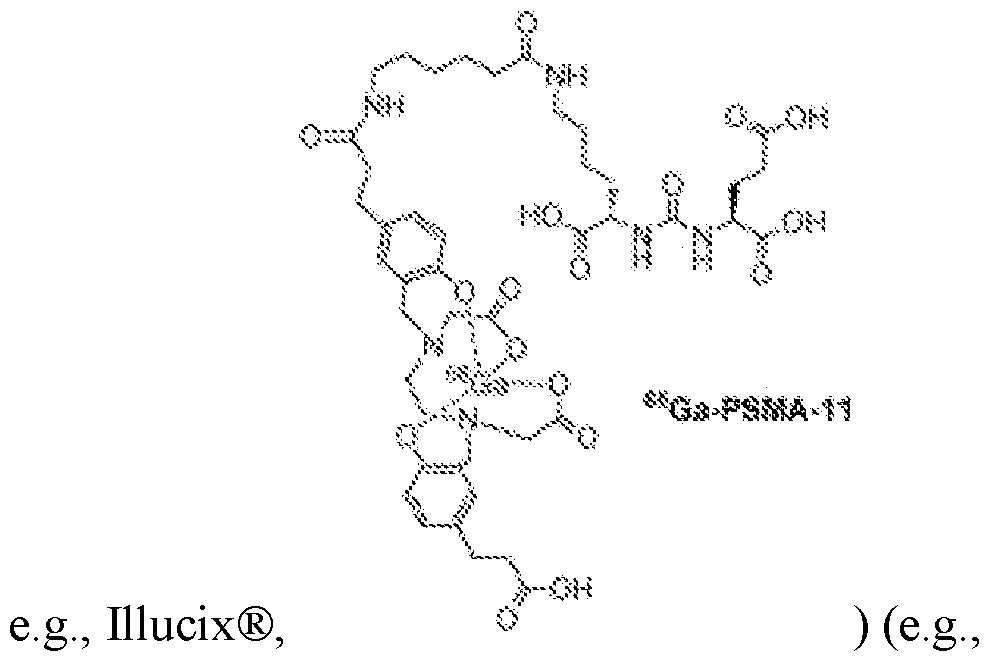

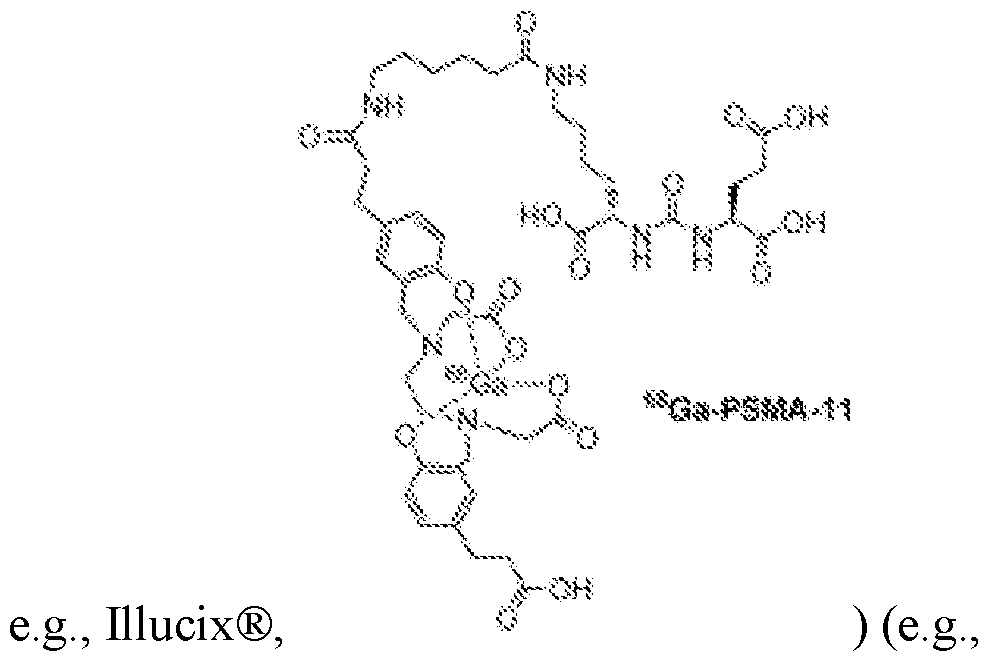

68Ga-PSMA-11 (gallium (

68Ga) gozetotide, e.g., Illucix®) and [

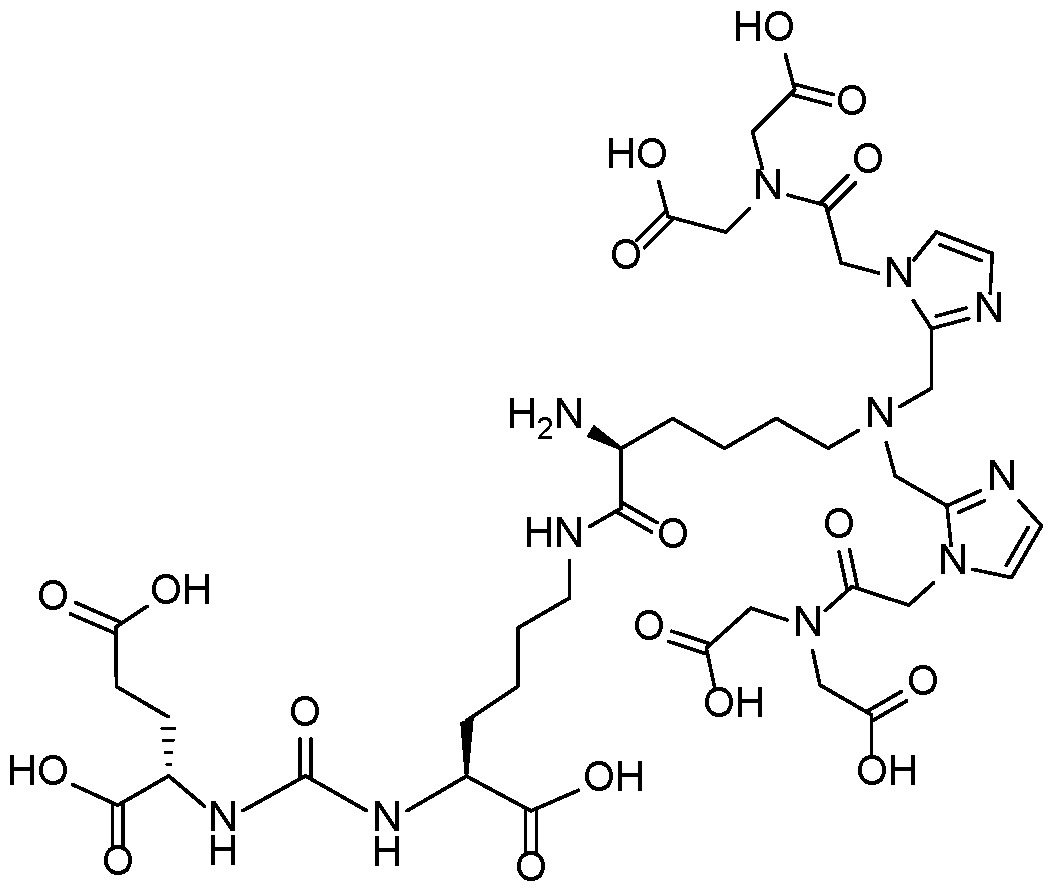

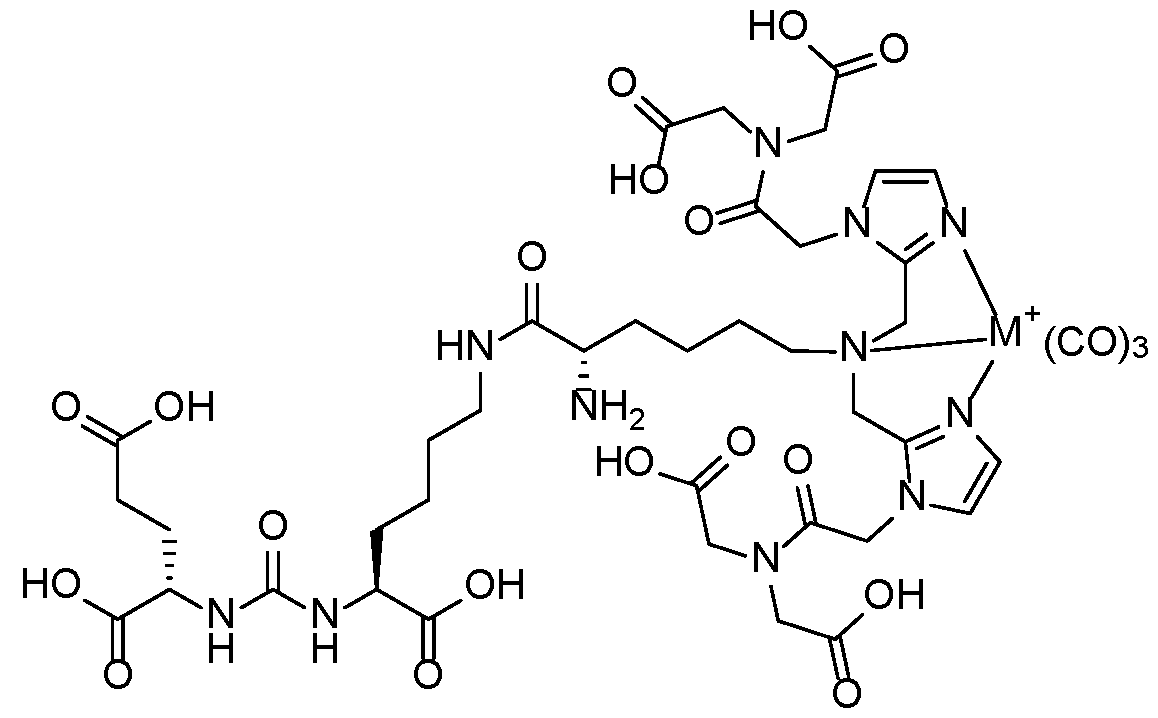

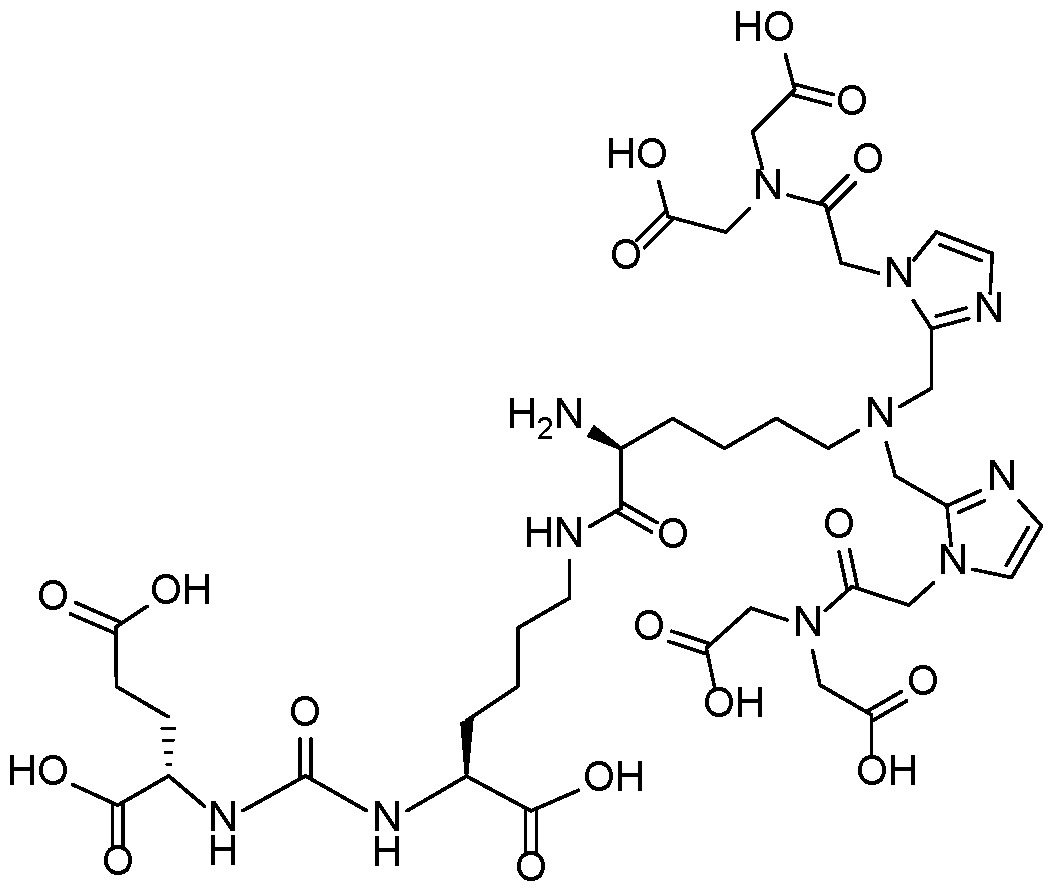

18F]DCFPyL (piflufolastat F 18, e.g., PYLARIFY®) were approved by the - 1 - 12297548v2

Attorney Docket No.2010358-0329 U.S. Food and Drug Administration in 2020 and 2021, respectively, and there is a growing body of evidence that supports integration of PSMA-PET into clinical guidelines. [0004] Existing reporting guidelines, and approaches for risk assessment provide frameworks that can guide physicians in their assessment(s) and supply them with a standardized format in which to report their results. Still, assessments made, even within a framework supplied by clinical guidelines, involve time-consuming and error prone manual evaluation of images and leave room for subjective judgement calls that limit reproducibility and leave the door open for human error, inter-operator variability, and the like. [0005] For example, patterns of PSMA expression in the prostate can be characterized from PSMA-PET images using a scoring system referred to as a PRIMARY score, described, for example, in Ceci et al., “The EANM Standardized Reporting Guidelines v1.0 for PSMA- PET,” Eur J Nucl Med Mol Imaging 2021; 48: 1626-38, Seifert et al., “Second Version of the Prostate Cancer Molecular Imaging Standardized Evaluation Framework Including Response Evaluation for Clinical Trials (PROMISE V2),” European Urology 83 (2023) pp.405-412, and Emmet et al., “The PRIMARY Score: Using Intraprostatic 68Ga-PSMA PET/CT Patterns to Optimize Prostate Cancer Diagnosis,” The Journal of Nuclear Medicine 63 (2022) pp.1644-1650, the texts of which are incorporated herein by reference in their entireties. [0006] The PRIMARY score system takes into account uptake locations within the prostate, the peak standardized uptake value (SUV) of uptake regions of interest, the SUV value of the liver and aorta, and the shape of uptakes, and assigns a numerical grade from 1-5, where 1 indicates least intense cancer and 5 indicates most intense cancer. 
Staging via the PRIMARY scoring system offers utility across a range of indications, including, for example, the staging of high-risk patients, identification and/or prediction of cancer recurrence, estimation of the risk of metastases, tracking of disease progression, evaluation of suitability for radioligand therapy, and assessment of treatment efficacy.

[0007] There is a need for systems and methods that provide more reproducible and standardized reporting of PSMA-PET staging results to support wider integration of PSMA-PET into clinical guidelines.

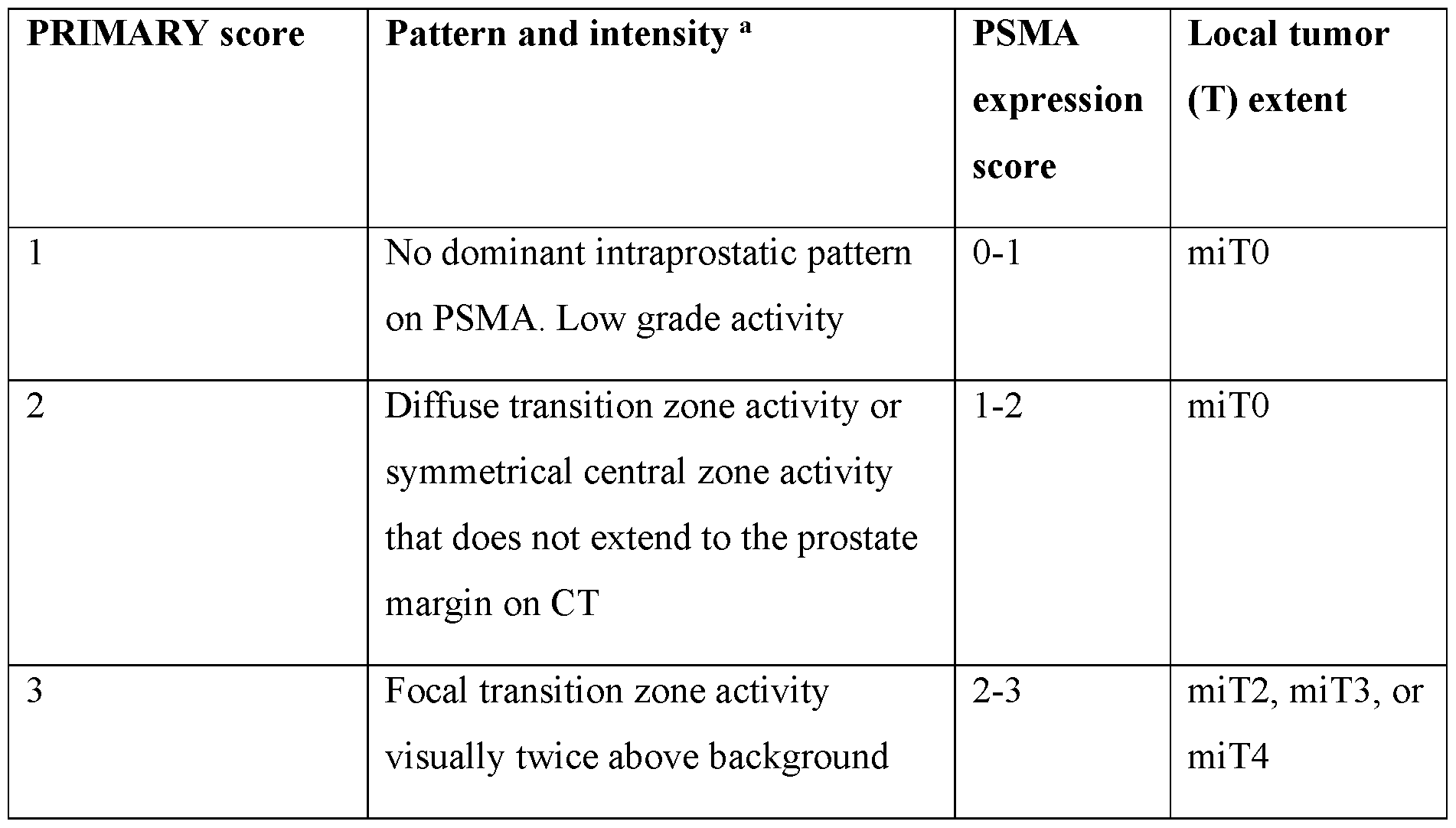

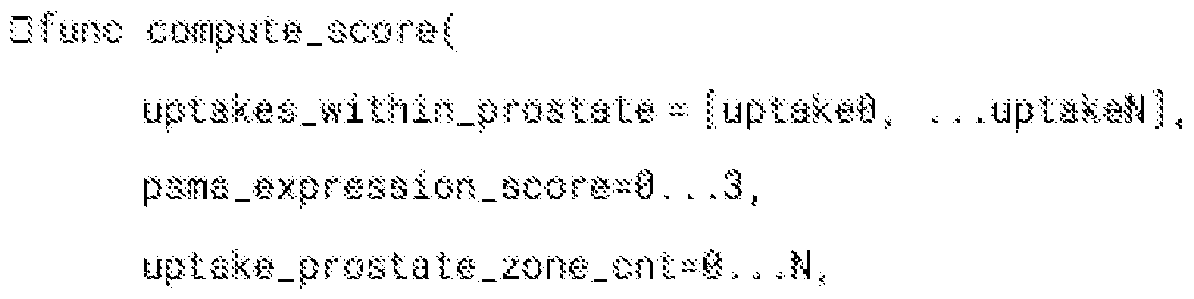

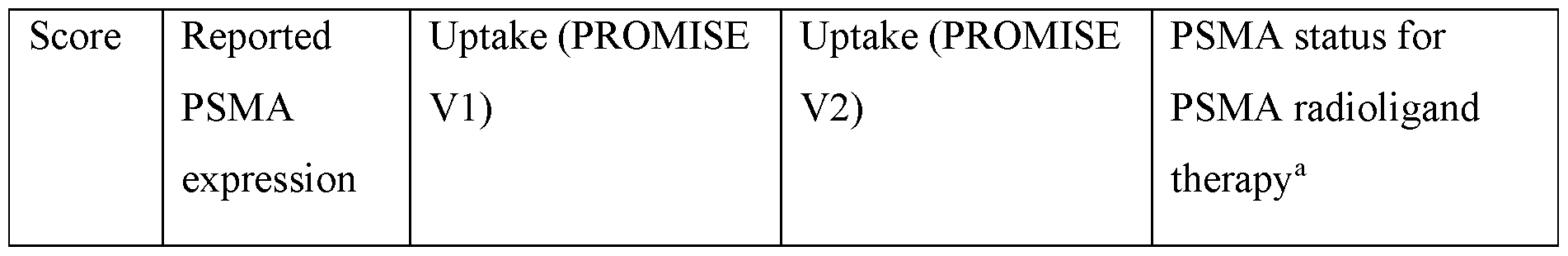

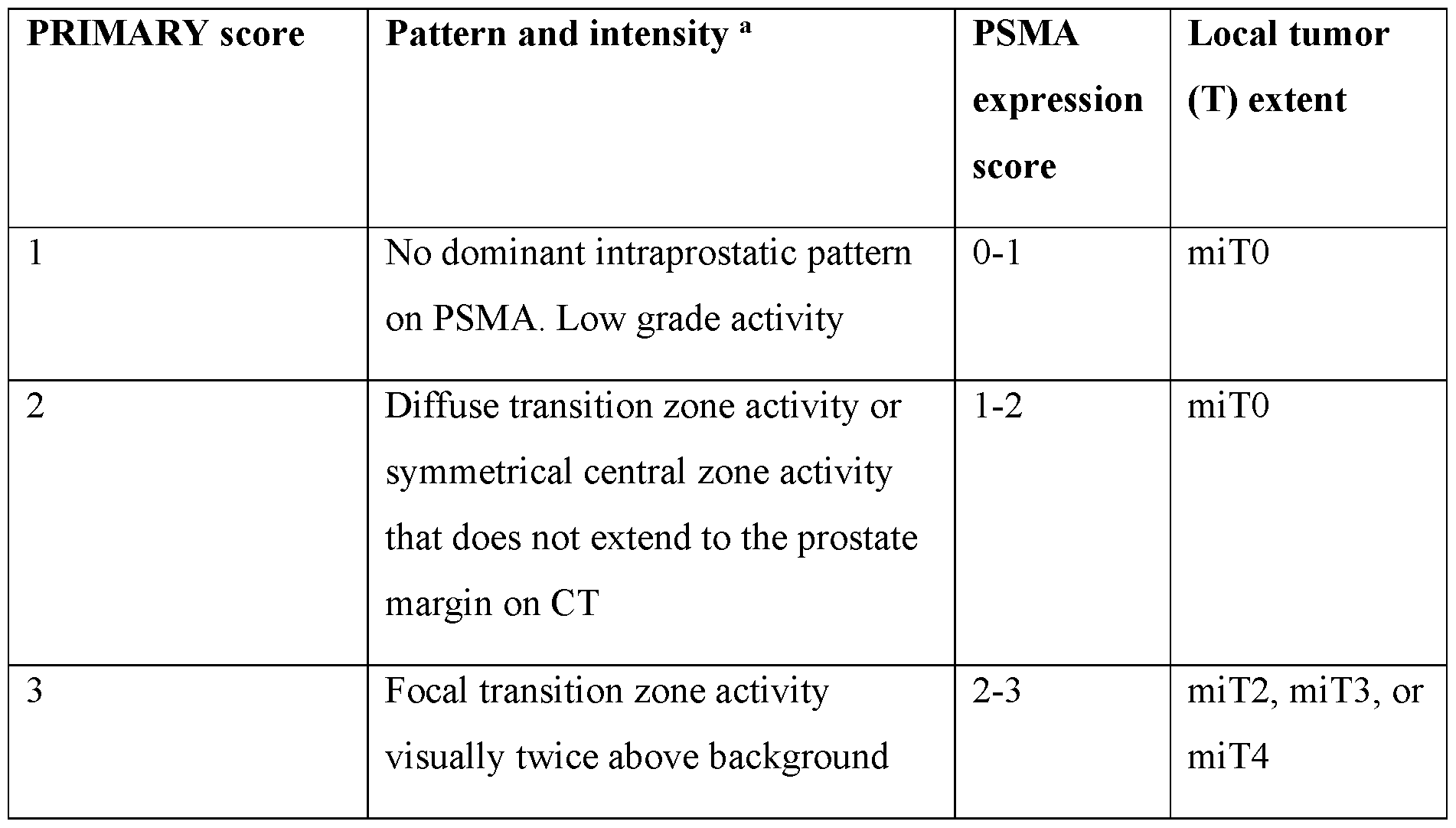

SUMMARY

[0008] Presented herein are systems and methods for accurate and automated cancer staging and/or risk prediction. In certain embodiments, cancer staging and/or risk prediction technologies of the present disclosure utilize machine learning techniques to, for example, accurately identify image features indicative of cancerous lesions, such as hotspots resulting from high levels of radiopharmaceutical uptake in tumors. Additionally or alternatively, in certain embodiments, systems and methods of the present disclosure include machine learning techniques that generate predictions of how a disease will evolve, such as whether cancer that appears localized has already metastasized or will eventually metastasize.

[0009] For example, in certain embodiments, automated cancer staging technologies presented herein include systems and methods for the automated determination of a prostate cancer staging score (e.g., a PRIMARY score) for a subject. In certain embodiments, the systems and methods employ a machine learning model (e.g., one or more convolutional neural networks, CNNs) to analyze three-dimensional (3D) images obtained via both a functional imaging modality and an anatomical imaging modality. In addition to identifying regions of PSMA binding agent uptake (hotspots), the techniques described herein accurately and automatically associate specific prostate zones with each hotspot and use this information in the determination of the staging score.

[0010] Examples of the functional imaging modality include PET, SPECT (single-photon emission computed tomography), and MRI (magnetic resonance imaging). Examples of the anatomical imaging modality include computed tomography (CT), X-ray, and MRI. In particular embodiments, a PSMA binding agent is administered to the subject prior to obtaining the functional image (e.g., a 3D PSMA-PET image is obtained).
The CT image is used to locate the prostate and/or other organs (e.g., liver and aorta) within the PSMA-PET image, and techniques described herein are used to identify uptake regions (hotspots) and, for each uptake region, identify one or more corresponding prostate zones (e.g., central, fibromuscular, peripheral, transition, and/or ureter zones). The identified and localized hotspots are then used to determine the prostate cancer staging score (e.g., the PRIMARY score) in an automated, reproducible way.

[0011] Additionally or alternatively, in certain embodiments, the present disclosure provides systems and methods for predicting the presence and/or risk of metastases in a subject based on medical image data that reflects the presence of localized disease. In particular, among other things, metastatic disease prediction technologies of the present disclosure leverage artificial neural networks (ANNs) to analyze image data that is associated with, and reflects the presence of, localized disease, such as images of regions about a single primary tumor and/or one or more lesions confined to a single tissue region or organ where cancer was first detected. Among other things, technologies described herein make use of the insight that, while such images of localized disease may not include conventional or express hallmarks of metastatic disease, such as the presence of hotspots dispersed outside the primary organ and/or tumor, they may nonetheless reflect patterns and features, such as particular intensity patterns and/or hotspot features, that are indicative of (e.g., correlate with) the presence and/or risk of metastases. While such patterns, and their relationship to and implications for whether a particular subject has or will develop metastatic disease, may escape conventional image analysis methods and/or review by human professionals, such as physicians, radiologists, and the like, ANN technologies of the present disclosure can be trained and used to generate predictions of whether a subject has or will develop metastases, i.e., one or more cancerous lesions outside of a primary tumor and/or site (e.g., organ or tissue region) where cancer was originally detected.
[0012] In one aspect, the invention is directed to a method for automated determination of a prostate cancer staging score for a subject, the method comprising: (a) receiving, by a processor of a computing device, a 3D functional image of the subject (e.g., a 3D PET, SPECT, or MRI scan); (b) determining, by the processor, a prostate volume within the 3D functional image, said prostate volume identifying a region of the 3D functional image corresponding to a prostate of the subject; (c) localizing (e.g., detecting and/or segmenting), by the processor, one or more uptake regions within the 3D functional image, each determined to represent a lesion or potential lesion within the prostate of the subject or a vicinity thereof; (d) determining, by the processor, for each particular uptake region of the one or more uptake regions: (i) values of one or more uptake region intensity metrics, each corresponding to a measure of intensity within and/or characteristic of the particular uptake region [e.g., SUVmax, SUVmean, SUVpeak, etc.; e.g., a lesion index (e.g., a PSMA expression score)]; and (ii) a set of assigned prostate zones identifying, for the particular uptake region, one or more spatial zones (e.g., sub-regions) within or about the prostate of the subject which the particular uptake region is associated with (e.g., within which at least a portion of a lesion or potential lesion represented by the particular uptake region is determined to be likely to be located; e.g., from which radiopharmaceutical uptake and - 4 - 12297548v2

Attorney Docket No.2010358-0329 radiation therefrom is determined to have produced the particular uptake region in the 3D functional image); and (e) determining, by the processor, the prostate cancer staging score based at least in part on (i) the values of the one or more intensity metrics and (ii) the set of assigned prostate zones determined for the one or more uptake regions. [0013] In certain embodiments, the set of assigned prostate zones identified for each of the one or more uptake regions is selected from a set of possible prostate zones, said set of possible prostate zones comprising one or more of (A), (B), and (C) as follows: (A) a central zone surrounding ejaculatory ducts and comprising about 25% of a prostate total mass, (B) a transition zone comprising a portion of the prostate surrounding a urethra, and (C) a peripheral zone situated toward a back of the prostate and comprising a majority of prostate tissue. [0014] In certain embodiments, the set of possible prostate zones further comprises a fibromuscular zone and/or a ureter zone. [0015] In certain embodiments, the one or more uptake regions are hotspots. [0016] In certain embodiments, the 3D functional image is a three-dimensional (3D) positron emission tomography (PET) image of the subject obtained following administration to the subject of a radiopharmaceutical comprising a prostate-specific membrane antigen (PSMA) binding agent. [0017] In certain embodiments, the PSMA binding agent comprises [

18F]DCFPyL. [0018] In certain embodiments, the PSMA binding agent comprises

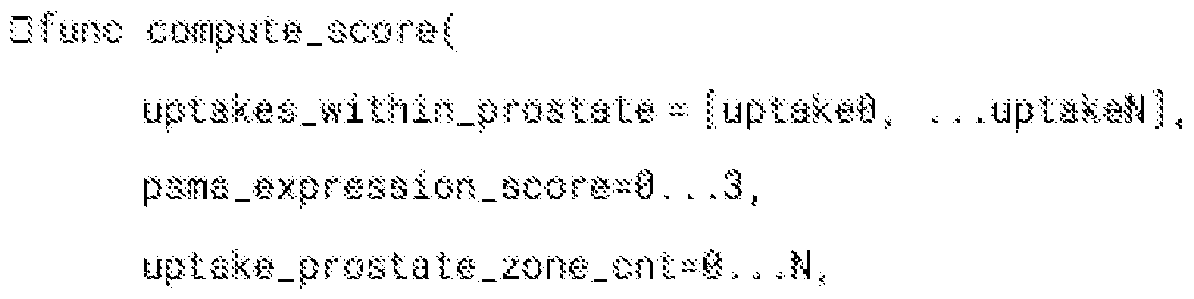

68Ga-PSMA-11. [0019] In certain embodiments, the one or more uptake region intensity metrics comprise a peak uptake region intensity. [0020] In certain embodiments, the method comprises determining, by the processor, for each particular uptake region of the one or more uptake regions, a corresponding uptake classification label indicative of whether the particular uptake region is focal or diffuse and, at step (e) using the uptake classification labels determined for the one or more uptake regions to determine the prostate cancer staging score. [0021] In certain embodiments, determining the set of assigned prostate zones for each particular uptake region of the one or more uptake regions comprises, (i) sorting a list of prostate zones in descending order starting from a zone in which a peak of the particular - 5 - 12297548v2

Attorney Docket No.2010358-0329 uptake region is located and ending in a zone with a least number voxels of the uptake region, and (ii) identifying whether the uptake region extends outside the prostate. [0022] In certain embodiments, the method comprises localizing, by the processor, within the 3D functional image, a liver volume and/or an aorta volume; and determining, by the processor, one or more liver reference intensities for a liver and/or one or more aorta reference intensities for an aorta, each corresponding to a measure of intensity within and/or characteristic of uptake in the liver volume and/or the aorta volume, respectively. [0023] In certain embodiments, the method comprises determining a lesion index value based on (i) the one or more uptake region intensity metrics and (ii) the one or more uptake intensity metrics for the liver and/or the one or more uptake intensity metrics for the aorta. [0024] In certain embodiments, the method comprises, at step (b): using one or more machine learning module(s) implementing convolutional neural networks (CNNs) to segment a 3D anatomical image (e.g., a CT, X-ray, or MRI image) and generate a 3D segmentation map that identifies a 3D boundary of a prostate representation within the 3D anatomical image; and transferring the 3D segmentation map to the 3D functional image to localize the prostate volume therein. [0025] In certain embodiments, the method comprises, at step (c), localizing the one or more uptake regions within the 3D functional image using one or more machine learning module(s). 
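The zone-ordering step of [0021] can be illustrated with a short NumPy sketch. It assumes, hypothetically, that an integer zone label map (0 meaning "no zone") has already been produced on the same voxel grid as the functional image, for example by fitting a prostate zone model to the prostate segmentation; the function name `assign_prostate_zones`, its arguments, and the labeling convention are illustrative and are not taken from the disclosure. Breaking ties in voxel count by label order is also an assumption.

```python
import numpy as np


def assign_prostate_zones(hotspot_mask, zone_map, prostate_mask, intensity, zone_names):
    """Order the zones touched by one hotspot and flag extra-prostatic extension.

    hotspot_mask, prostate_mask: boolean 3D arrays on the functional-image grid.
    zone_map: integer 3D array on the same grid; 0 means "no zone" and label k >= 1
        refers to zone_names[k - 1] (a hypothetical labeling convention).
    intensity: 3D array (e.g., SUV) used to locate the hotspot peak.
    """
    # Voxel index of the hotspot's intensity peak (voxels outside the hotspot are ignored).
    masked = np.where(hotspot_mask, intensity, -np.inf)
    peak_index = np.unravel_index(np.argmax(masked), intensity.shape)
    peak_zone = int(zone_map[peak_index])

    # Count hotspot voxels per zone label, skipping the "no zone" label 0.
    labels, counts = np.unique(zone_map[hotspot_mask], return_counts=True)
    voxel_counts = {int(lab): int(cnt) for lab, cnt in zip(labels, counts) if lab != 0}

    # Peak zone first, then the remaining zones by descending voxel count
    # (Python's sort is stable, so ties keep ascending label order).
    ordered = sorted(voxel_counts, key=lambda z: (z != peak_zone, -voxel_counts[z]))

    # The hotspot extends outside the prostate if any of its voxels lie outside the mask.
    extends_outside = bool(np.any(hotspot_mask & ~prostate_mask))
    return [zone_names[z - 1] for z in ordered], extends_outside
```

Putting the peak zone first even when another zone holds more hotspot voxels mirrors the ordering described above; every remaining zone is ranked purely by how many hotspot voxels it contains.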
[0026] In another aspect, the invention is directed to a system for automated determination of a prostate cancer staging score for a subject, the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) receive a 3D functional image of the subject (e.g., a 3D PET, SPECT, or MRI scan); (b) determine a prostate volume within the 3D functional image, said prostate volume identifying a region of the 3D functional image corresponding to a prostate of the subject; (c) localize (e.g., detect and/or segment) one or more uptake regions within the 3D functional image, each determined to represent a lesion or potential lesion within the prostate of the subject or a vicinity thereof; (d) determine, for each particular uptake region of the one or more uptake regions: (i) values of one or more uptake region intensity metrics, each corresponding to a measure of intensity within and/or characteristic of the particular uptake region [e.g., SUVmax, SUVmean, SUVpeak, etc.; e.g., a lesion index (e.g., a PSMA expression score)]; and (ii) a set of assigned prostate zones identifying, for the particular uptake region, one or more spatial zones (e.g., sub-regions) within or about the prostate of the subject with which the particular uptake region is associated (e.g., within which at least a portion of a lesion or potential lesion represented by the particular uptake region is determined to be likely to be located; e.g., from which radiopharmaceutical uptake and radiation therefrom are determined to have produced the particular uptake region in the 3D functional image); and (e) determine the prostate cancer staging score based at least in part on (i) the values of the one or more uptake region intensity metrics and (ii) the set of assigned prostate zones determined for the one or more uptake regions.

[0027] In certain embodiments, the set of assigned prostate zones identified for each of the one or more uptake regions is selected from a set of possible prostate zones, said set of possible prostate zones comprising one or more of (A), (B), and (C) as follows: (A) a central zone surrounding the ejaculatory ducts and comprising about 25% of the total prostate mass, (B) a transition zone comprising a portion of the prostate surrounding the urethra, and (C) a peripheral zone situated toward the back of the prostate and comprising a majority of prostate tissue.

[0028] In certain embodiments, the set of possible prostate zones further comprises a fibromuscular zone and/or a ureter zone.

[0029] In certain embodiments, the one or more uptake regions are hotspots.

[0030] In certain embodiments, the 3D functional image is a three-dimensional (3D) positron emission tomography (PET) image of the subject obtained following administration to the subject of a radiopharmaceutical comprising a prostate-specific membrane antigen (PSMA) binding agent.
[0031] In certain embodiments, the instructions cause the processor to, at step (b): use one or more machine learning module(s), implementing convolutional neural networks (CNNs), to segment a 3D anatomical image (e.g., a CT, X-ray, or MRI image) and generate a 3D segmentation map that identifies a 3D boundary of a prostate representation within the 3D anatomical image; and transfer the 3D segmentation map to the 3D functional image to localize the prostate volume therein. [0032] In another aspect, the invention is directed to a method for automated determination of a prostate cancer staging score (e.g., a PRIMARY score) for a subject, the method comprising: (a) receiving, by a processor of a computing device, a first image of the - 7 - 12297548v2

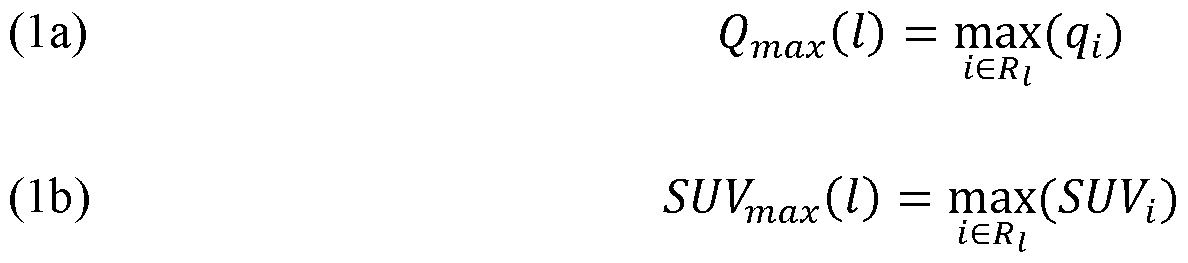

Attorney Docket No.2010358-0329 subject obtained using a functional imaging modality (e.g., a 3D PET, SPECT, or MRI scan) and a second image of the subject obtained using an anatomical imaging modality (e.g., a CT, X-ray, or MRI image) {e.g., receiving a combined 3D PET/CT image (e.g., a PSMA-PET/CT image) that comprises the first image and the second image}; (b) converting, by the processor, the first image into an SUV image whose intensity values correspond to standardized uptake values (SUV), thereby obtaining an SUV-converted first image; (c) localizing, by the processor, volumes of interest (VOIs) in the second image corresponding to one or more organs of the subject (e.g., a prostate, a liver, and an aorta) (e.g., using the second image to obtain one or more organ segmentation masks corresponding to one or more organs of the subject) and determining corresponding organ volumes in the SUV-converted first image (e.g., regions in the SUV-converted first image corresponding to the prostate, the liver, and/or the aorta); (d) localizing, by the processor, in the SUV-converted first image, one or more uptake regions (e.g., hotspots) corresponding to lesions (or potential lesions) in the prostate of the subject and determining, by the processor, values of an SUV uptake metric (e.g., and/or peak intensity value and/or peak intensity location) for each of the one or more uptake regions (e.g., and, optionally, determining, by the processor, whether each said uptake region is focal or diffuse), thereby determining one or more values of the SUV uptake metric; (e) for each of the one or more uptake regions, identifying, by the processor, one or more prostate zones (e.g., central, fibromuscular, peripheral, transition, and/or ureter zones) corresponding to said uptake region (e.g., by fitting a 3D prostate clinical model to the prostate segmentation mask); (f) determining, by the processor, a PSMA expression score using the SUV-converted first image (e.g., comparing a 
highest uptake peak within the prostate with aorta and/or liver SUV mean values); and (g) determining, by the processor, the prostate cancer staging score (e.g., the PRIMARY score) based at least on (i) the one or more values of the SUV uptake metric, (ii) the prostate zones identified for each of the one or more uptake regions (e.g., and, optionally, the determination of whether each said uptake region is focal or diffuse), and (iii) the PSMA expression score. [0033] In certain embodiments, the first image is a three-dimensional (3D) positron emission tomography (PET) image of the subject obtained following administration to the subject of a radiopharmaceutical comprising a prostate-specific membrane antigen (PSMA) binding agent. - 8 - 12297548v2

Attorney Docket No.2010358-0329 [0034] In certain embodiments, the PSMA binding agent comprises [

18F]DCFPyL (piflufolastat F 18,

wherein the method comprises receiving a combined 3D PSMA-PET/CT image that comprises the first image and the second image). [0035] In certain embodiments, the PSMA binding agent comprises

68Ga-PSMA-11 (gallium (

68Ga) gozetotide,

wherein the method comprises receiving a combined 3D PSMA-PET/CT image that comprises the first image and the second image). [0036] In certain embodiments, the SUV value determined for each of the one or more uptake regions at step (d) is a peak SUV value. [0037] In certain embodiments, the method comprises, determining, by the processor, for each particular uptake region of the one or more uptake regions, a corresponding uptake classification label indicative of whether the particular uptake region is focal or diffuse and, at step (g) using the uptake classification labels determined for the one or more uptake regions to determine the prostate cancer staging score. [0038] In certain embodiments, the one or more prostate zones identified for each of the one or more uptake regions are selected from a (e.g., static, finite) set of (e.g., 10 or fewer, e.g., 5 or fewer) possible prostate zones (e.g., as in an enumerated data type) (e.g., wherein the set of possible prostate zones comprises a central zone, a transition zone, and a peripheral zone; e.g., wherein the set of possible prostate zones comprises a central zone, a transition zone, and a peripheral zone, a fibromuscular zone, and an ureter zone). - 9 - 12297548v2
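To make the inputs of step (g) concrete, the following Python sketch maps per-region peak SUV values, ordered zone lists, and focal/diffuse labels to a 1-5 grade, using an enumerated zone type as in [0038]. The rule set is a simplified reading of the published PRIMARY criteria (Emmett et al.): 5 if any region's SUV exceeds a threshold, 4 for focal peripheral-zone uptake, 3 for focal transition-zone uptake, 2 for diffuse transition- or central-zone uptake, and 1 otherwise. The default threshold of 12 was reported for 68Ga-PSMA-11 and may not carry over to other agents; any intensity condition attached to focal uptake is assumed to be folded into the `focal` flag already. The class names, the use of the first (peak) zone, and the omission of the PSMA expression score are assumptions for illustration, not the claimed scoring logic.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Zone(Enum):
    CENTRAL = "central"
    TRANSITION = "transition"
    PERIPHERAL = "peripheral"
    FIBROMUSCULAR = "fibromuscular"
    URETER = "ureter"


@dataclass
class UptakeRegion:
    peak_suv: float
    zones: List[Zone]  # ordered: zone containing the peak first
    focal: bool        # True = focal, False = diffuse


def primary_zone(region: UptakeRegion) -> Optional[Zone]:
    """Zone containing the region's peak, or None if no zone was assigned."""
    return region.zones[0] if region.zones else None


def primary_score(regions: List[UptakeRegion], suv_threshold: float = 12.0) -> int:
    """Simplified PRIMARY-style grade (1-5); see the assumptions stated above."""
    # Score 5: very intense uptake anywhere in the prostate.
    if any(r.peak_suv > suv_threshold for r in regions):
        return 5
    # Score 4: focal uptake whose peak lies in the peripheral zone.
    if any(r.focal and primary_zone(r) is Zone.PERIPHERAL for r in regions):
        return 4
    # Score 3: focal uptake whose peak lies in the transition zone.
    if any(r.focal and primary_zone(r) is Zone.TRANSITION for r in regions):
        return 3
    # Score 2: diffuse uptake centred in the transition or central zone.
    if any(not r.focal and primary_zone(r) in (Zone.TRANSITION, Zone.CENTRAL) for r in regions):
        return 2
    # Score 1: no dominant intraprostatic pattern.
    return 1
```

Checking the rules from the highest grade down means a single intense or focal peripheral-zone region determines the result regardless of how many lower-grade regions accompany it.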

[0039] In certain embodiments, identifying the one or more prostate zones (e.g., central, fibromuscular, peripheral, transition, and/or ureter zones) corresponding to each of the one or more uptake regions in the SUV-converted first image comprises, for each uptake region, (i) sorting a list of prostate zones in descending order, starting from the zone in which the hotspot peak is located (e.g., the location of the intensity peak for the uptake region) and ending with the zone containing the fewest hotspot voxels, and (ii) identifying whether the uptake region extends outside the prostate.

[0040] In certain embodiments, the method comprises: localizing, by the processor, within the SUV-converted first image, a liver volume (e.g., corresponding to a liver within the subject) and/or an aorta volume (e.g., corresponding to an aorta, or portion thereof, within the subject) [e.g., the liver volume and/or aorta volume within the SUV-converted first image corresponding to (e.g., having been localized by mapping, to the SUV-converted first image,) a liver segmentation mask and/or an aorta segmentation mask determined from the second image]; and determining, by the processor, a liver reference SUV value and/or an aorta reference SUV value based on SUV values of voxels of the SUV-converted first image within the liver volume and/or the aorta volume, respectively.

[0041] In certain embodiments, the method comprises determining the PSMA expression score based on (i) the one or more values of the SUV uptake metric and (ii) the liver reference SUV value and/or the aorta reference SUV value.

[0042] In certain embodiments, the method comprises, at step (c), localizing the VOIs in the second image using one or more machine learning module(s) [e.g., one or more convolutional neural networks (CNNs)].
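Paragraphs [0040] and [0041] can be sketched as follows: each reference value is taken as the mean SUV inside the liver or aorta mask, and the highest uptake peak in the prostate is compared against both. The three-level mapping used here (0 below the aorta reference, 1 between aorta and liver, 2 at or above liver) is a hypothetical simplification; the PROMISE miPSMA expression score additionally uses a parotid-gland reference for its highest level, which this section does not describe. The function names and the handling of an empty region list are illustrative assumptions.

```python
import numpy as np


def reference_suv(suv, organ_mask):
    """Mean SUV of the voxels inside a reference-organ mask (e.g., liver or aorta)."""
    values = suv[organ_mask]
    if values.size == 0:
        raise ValueError("reference organ mask is empty")
    return float(values.mean())


def psma_expression_score(peak_suvs, aorta_ref, liver_ref):
    """Hypothetical 0-2 expression score from the highest prostate uptake peak.

    0: below the aorta (blood-pool) reference
    1: at or above the aorta reference but below the liver reference
    2: at or above the liver reference
    """
    if not peak_suvs:
        return 0  # no uptake regions: treated as no expression (an assumption)
    highest = max(peak_suvs)
    if highest < aorta_ref:
        return 0
    if highest < liver_ref:
        return 1
    return 2
```

In practice the organ masks are often eroded before averaging to reduce partial-volume effects at organ boundaries; that refinement is omitted here.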
[0043] In certain embodiments, the method comprises, at step (d), localizing the one or more uptake regions in the SUV-converted first image using one or more machine learning module(s) (e.g., one or more CNNs).

[0044] In another aspect, the invention is directed to a method for automated determination of a prostate cancer staging score (e.g., a PRIMARY score) for a subject, the method comprising: (a) receiving, by a processor of a computing device, data comprising a first image of the subject obtained using a functional imaging modality (e.g., a 3D PET, SPECT, or MRI scan) and a second image of the subject obtained using an anatomical imaging modality (e.g., a CT, X-ray, or MRI image) {e.g., receiving a combined 3D PET/CT image (e.g., a PSMA-PET/CT image) that comprises the first image and the second image}; (b) using the received data to localize, by the processor, one or more uptake regions (e.g., hotspots) in the first image (e.g., an SUV-converted first image) corresponding to lesions (or potential lesions) in the prostate and to identify one or more prostate zones (e.g., central, fibromuscular, peripheral, transition, and/or ureter zones) corresponding to each said uptake region (e.g., by fitting a 3D prostate clinical model to the prostate segmentation mask); and (c) determining, by the processor, the prostate cancer staging score (e.g., the PRIMARY score) based at least on the localized one or more uptake regions and their identified prostate zones.

[0045] In certain embodiments, the first image is a three-dimensional (3D) positron emission tomography (PET) image of the subject obtained following administration to the subject of a radiopharmaceutical comprising a prostate-specific membrane antigen (PSMA) binding agent.

[0046] In certain embodiments, the PSMA binding agent comprises [18F]DCFPyL (piflufolastat F 18), wherein the method comprises receiving a combined 3D PSMA-PET/CT image that comprises the first image and the second image.

[0047] In certain embodiments, the PSMA binding agent comprises 68Ga-PSMA-11 (gallium (68Ga) gozetotide), wherein the method comprises receiving a combined 3D PSMA-PET/CT image that comprises the first image and the second image.

[0048] In certain embodiments, the method comprises, at step (b), localizing the one or more uptake regions in the first image using one or more machine learning module(s) (e.g., one or more CNNs).

[0049] In another aspect, the invention is directed to a system for automated determination of a prostate cancer staging score (e.g., a PRIMARY score) for a subject, the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) receive a first image of the subject obtained using a functional imaging modality (e.g., a 3D PET, SPECT, or MRI scan) and a second image of the subject obtained using an anatomical imaging modality (e.g., a CT, X-ray, or MRI image) {e.g., receive a combined 3D PET/CT image (e.g., a PSMA-PET/CT image) that comprises the first image and the second image}; (b) convert the first image into an SUV image whose intensity values correspond to standardized uptake values (SUV), thereby obtaining an SUV-converted first image; (c) localize volumes of interest (VOIs) in the second image corresponding to one or more organs of the subject (e.g., a prostate, a liver, and an aorta) (e.g., using the second image to obtain one or more organ segmentation masks corresponding to one or more organs of the subject) and determine corresponding organ volumes in the SUV-converted first image (e.g., regions in the SUV-converted first image corresponding to the prostate, the liver, and/or the aorta); (d) localize, in the SUV-converted first image, one or more uptake regions (e.g., hotspots) corresponding to lesions (or potential lesions) in the prostate of the subject and determine values of an SUV uptake metric (e.g., a peak intensity value and/or a peak intensity location) for each of the one or more uptake regions (e.g., and, optionally, determine whether each said uptake region is focal or diffuse), thereby determining one or more values of the SUV uptake metric; (e) for each of the one or more uptake regions, identify one or more prostate zones (e.g., central, fibromuscular, peripheral, transition, and/or ureter zones) corresponding to said uptake region (e.g., by fitting a 3D prostate clinical model to the prostate segmentation mask); (f) determine a PSMA expression score using the SUV-converted first image (e.g., by comparing the highest uptake peak within the prostate with mean SUV values of the aorta and/or liver); and (g) determine the prostate cancer staging score (e.g., the PRIMARY score) based at least on (i) the one or more values of the SUV uptake metric, (ii) the prostate zones identified for each of the one or more uptake regions (e.g., and, optionally, the determination of whether each said uptake region is focal or diffuse), and (iii) the PSMA expression score.
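Step (b), converting the functional image into SUV units, is commonly done with body-weight SUV: the voxel activity concentration divided by the injected activity per gram of body weight, that is, SUV = C × W / (A₀ × 2^(−Δt / T½)). The sketch below assumes the image is already in Bq/mL and decay-corrected to a reference time (typically the acquisition start), so the injected activity A₀ is decayed over the interval Δt from injection to that same reference time; it also assumes a tissue density of 1 g/mL. The function name and arguments are hypothetical, and the disclosure does not state which SUV normalization is used.

```python
import math

import numpy as np


def to_suv_bw(activity_bq_per_ml, injected_bq, weight_kg, elapsed_s, half_life_s):
    """Convert an activity-concentration image (Bq/mL) to body-weight SUV.

    elapsed_s: time from injection to the image's decay-correction reference time.
    half_life_s: physical half-life of the radionuclide.
    Assumes a tissue density of 1 g/mL, so the resulting SUV is dimensionless.
    """
    if injected_bq <= 0 or weight_kg <= 0 or half_life_s <= 0:
        raise ValueError("dose, weight, and half-life must be positive")
    # Injected activity decayed to the image reference time.
    decayed_bq = injected_bq * math.exp(-math.log(2.0) * elapsed_s / half_life_s)
    weight_g = weight_kg * 1000.0
    # SUV = concentration / (activity per gram of body weight).
    return np.asarray(activity_bq_per_ml, dtype=float) * weight_g / decayed_bq
```

For an 18F-labelled agent the physical half-life is about 109.8 minutes (roughly 6,586 s); for example, a voxel at 5,000 Bq/mL in a 70 kg subject injected with 350 MBq, evaluated at injection time, has an SUV of 1.0.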

[0050] In another aspect, the invention is directed to a system for automated determination of a prostate cancer staging score (e.g., a PRIMARY score) for a subject, the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) receive data comprising a first image of the subject obtained using a functional imaging modality (e.g., a 3D PET, SPECT, or MRI scan) and a second image of the subject obtained using an anatomical imaging modality (e.g., a CT, X-ray, or MRI image) {e.g., receive a combined 3D PET/CT image (e.g., a PSMA-PET/CT image) that comprises the first image and the second image}; (b) use the received data to localize one or more uptake regions (e.g., hotspots) in the first image (e.g., an SUV-converted first image) corresponding to lesions (or potential lesions) in the prostate and to identify one or more prostate zones (e.g., central, fibromuscular, peripheral, transition, and/or ureter zones) corresponding to each said uptake region (e.g., by fitting a 3D prostate clinical model to the prostate segmentation mask); and (c) determine the prostate cancer staging score (e.g., the PRIMARY score) based at least on the localized one or more uptake regions and their identified prostate zones.
[0051] In another aspect, the invention is directed to a method for automatically processing one or more medical images of a subject and using the processed image(s) to automatically predict a presence and/or a risk of metastases, the method comprising: (a) receiving, by a processor of a computing device, one or more medical images of a prostate of the subject, wherein the one or more medical image(s) comprise a 3D functional image acquired following administration to the subject of an imaging agent; (b) automatically identifying, by the processor, within the 3D functional image, a prostate volume corresponding to the prostate of the subject; (c) automatically identifying, by the processor, one or more hotspots within the prostate volume (e.g., said one or more hotspots corresponding to localized regions of high intensity relative to their surroundings and representing lesions or potential lesions within the subject); and (d) predicting, by the processor, using a neural network, (i) a presence of metastases in the subject, and/or (ii) a risk of metastases in the subject, said predicting based at least in part on the automatically identified prostate volume and the automatically identified one or more hotspot(s) within the prostate volume, wherein the neural network receives at least two channels of input, the at least two channels of input comprising: (A) a prostate intensity channel comprising intensities of voxels located within the prostate volume [e.g., a cuboid image region comprising a segmented prostate volume (e.g., and a small buffer about the segmented prostate, e.g., approximately 1, 5, 10, 25 voxels; e.g., approximately 1 mm, 2 mm, 5 mm, 10 mm)]; and (B) a hotspot mask channel comprising a mask identifying the one or more hotspots [e.g., the hotspot mask channel comprising a hotspot mask/map cropped to (e.g., intersected with) a cuboid region of the same size as the prostate intensity channel]. [0052] In certain embodiments, the one or more medical images comprise a 3D anatomical image (e.g., a CT image) and a 3D functional image (e.g., a PET image). [0053] In certain embodiments, the one or more medical images comprise a PET image and/or a PET/CT image [e.g., obtained following administration to the subject of an imaging agent comprising a PSMA binding agent (e.g., PyL)]. [0054] In certain embodiments, the one or more medical images are obtained within six (6) months or less (e.g., three months or less) from an initial diagnosis and/or pathological assessment. [0055] In certain embodiments, the one or more medical images are obtained prior to treatment. [0056] In certain embodiments, the one or more medical images are localized around the prostate volume [e.g., comprising a pelvic region (e.g., having been acquired at a single bed position); e.g., and wherein the one or more medical images are or comprise one or more SPECT and/or SPECT/CT images (e.g., having been obtained following administration to the subject of a PSMA binding agent)]. [0057] In certain embodiments, the one or more medical images comprise a 3D anatomical image co-aligned with the 3D functional image, and wherein step (b) comprises identifying an anatomical volume of interest (VOI) representing a prostate within the 3D anatomical image and using the anatomical VOI to identify the prostate volume within the 3D functional image.
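As a non-limiting sketch of how the two input channels of [0051] might be assembled, the following Python code crops a cuboid around the segmented prostate (plus a voxel buffer) and intersects the hotspot mask with the same cuboid so that both channels share one shape. The function names, the channels-first layout, and the default 5-voxel buffer (one of the example values listed in [0051]) are assumptions for illustration; a buffer specified in millimetres would additionally require the voxel spacing to convert it to voxels.

```python
import numpy as np


def bounding_box(mask: np.ndarray, buffer_vox: int) -> tuple:
    """Cuboid (tuple of slices) enclosing `mask`, padded by `buffer_vox`
    voxels on every side and clipped to the image bounds."""
    coords = np.argwhere(mask)
    if coords.size == 0:
        raise ValueError("prostate mask is empty")
    lo = np.maximum(coords.min(axis=0) - buffer_vox, 0)
    hi = np.minimum(coords.max(axis=0) + buffer_vox + 1, mask.shape)
    return tuple(slice(int(a), int(b)) for a, b in zip(lo, hi))


def build_input_channels(pet_suv, prostate_mask, hotspot_mask, buffer_vox=5):
    """Stack (A) a prostate intensity channel and (B) a hotspot mask channel,
    both cropped to the same cuboid around the segmented prostate.

    Voxels outside the cuboid are discarded, so the network never sees them.
    Returns a float32 array of shape (2, z, y, x), channels first.
    """
    box = bounding_box(prostate_mask, buffer_vox)
    intensity = pet_suv[box].astype(np.float32)
    # Intersect the hotspot map with the same cuboid so both channels align.
    hotspots = hotspot_mask[box].astype(np.float32)
    return np.stack([intensity, hotspots], axis=0)
```

For a 40 x 40 x 40 volume whose prostate mask spans indices 15 to 24, 18 to 21, and 10 to 29 along the three axes, a 5-voxel buffer yields an input of shape (2, 20, 14, 30). Cropping a tight cuboid rather than resampling to a fixed size keeps native voxel intensities; a fixed network input size would require an additional padding or resampling step, which is not shown.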
[0058] In certain embodiments, the method comprises using a first machine learning model (e.g., an anatomical segmentation model) to (i) identify the prostate volume within the 3D functional image and/or (ii) identify the anatomical VOI within the 3D anatomical image. [0059] In certain embodiments, the method comprises using a second machine learning model to automatically identify the one or more hotspots. [0060] In certain embodiments, the neural network does not receive, as input, intensities of voxels located outside the prostate volume of the image(s) [e.g., outside a cuboid image region comprising a segmented prostate volume (e.g., and a small buffer about the segmented prostate, e.g., approximately 1, 5, 10, 25 voxels; e.g., approximately 1 mm, 2 mm, 5 mm, 10 mm)]. [0061] In certain embodiments, the neural network generates, as output, a likelihood value representing a likelihood (e.g., as determined by the neural network) that the subject has or will develop metastases [e.g., a risk that the subject has synchronous metastases, and/or a risk that the subject will develop metachronous metastases (e.g., six months or more following a time at which the one or more medical images were obtained; e.g., following curative-intent therapy (e.g., surgery, chemotherapy, radiation, or combinations thereof))]. [0062] In certain embodiments, step (d) comprises using one or more measured features (e.g., PSA score, pathologic grade, percent positive cores, uptake peak value) (e.g., as input, alongside neural network output, to a classifier) to predict presence and/or risk of metastases in the subject. [0063] In certain embodiments, step (d) comprises using one or more computed features (e.g., a PRIMARY score and/or a PSMA expression score) (e.g., as input, alongside neural network output, to a classifier) to predict presence and/or risk of metastases in the subject. [0064] In certain embodiments, the one or more medical images are or comprise one or more 3D functional images acquired following administration to the subject of an imaging agent. [0065] In certain embodiments, the imaging agent is or comprises a PSMA binding agent (e.g., PyL; e.g., PSMA-11). [0066] In certain embodiments, the medical images do not include any graphical representation(s) of metastases outside the prostate volume [e.g., no representations of suspect regions (e.g., graphical representations of potential lesions (e.g., hotspots)) outside of the prostate volume].
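Paragraphs [0062] and [0063] describe supplying measured and computed features to a classifier alongside the neural network output. One simple, non-limiting way to realize such a fused classifier is logistic regression over a feature vector whose first entry is the network's likelihood value; the Python sketch below assumes that design. The class name, the feature ordering, the standardization step, and the gradient-descent hyperparameters are hypothetical, and other classifiers could equally be used.

```python
import numpy as np


class FusedLogisticClassifier:
    """Logistic-regression fusion of a CNN output with tabular features.

    Column 0 of X is assumed to hold the CNN's metastasis likelihood; the
    remaining columns hold measured or computed features (e.g., PSA,
    pathologic grade, percent positive cores, uptake peak value, PRIMARY
    score). Feature choice, ordering, and the model itself are assumptions.
    """

    def __init__(self, lr=0.1, n_iter=2000, l2=1e-3):
        self.lr, self.n_iter, self.l2 = lr, n_iter, l2

    def _standardize(self, X):
        return (X - self.mean_) / self.std_

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        y = np.asarray(y, dtype=float)
        self.mean_ = X.mean(axis=0)
        self.std_ = X.std(axis=0) + 1e-8  # avoid division by zero
        Z = self._standardize(X)
        self.w_ = np.zeros(Z.shape[1])
        self.b_ = 0.0
        for _ in range(self.n_iter):
            p = 1.0 / (1.0 + np.exp(-(Z @ self.w_ + self.b_)))
            # Gradient of mean binary cross-entropy plus an L2 weight penalty.
            grad_w = Z.T @ (p - y) / len(y) + self.l2 * self.w_
            grad_b = np.mean(p - y)
            self.w_ -= self.lr * grad_w
            self.b_ -= self.lr * grad_b
        return self

    def predict_proba(self, X):
        """Fused probability of metastases for each row of X."""
        Z = self._standardize(np.asarray(X, dtype=float))
        return 1.0 / (1.0 + np.exp(-(Z @ self.w_ + self.b_)))
```

Standardizing each column keeps features with very different ranges (a probability in [0, 1] next to a PSA value) on a comparable scale during gradient descent; the learned weights then indicate, under this linear model, how much each input contributes relative to the image-based score.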
[0067] In certain embodiments, the method comprises, at step (d), predicting a risk of metastases (e.g., a risk that the subject will develop metachronous metastases). [0068] In certain embodiments, the method comprises, at step (d), predicting a presence of metastases (e.g., predicting a presence of synchronous metastases).

[0069] In certain embodiments, the neural network is a trained neural network, having been trained [e.g., to generate, as output, a metastases score representing the prediction of (i) the presence of metastases (e.g., the presence of synchronous metastases) in the subject and/or (ii) the risk of metastases (e.g., the risk of metachronous metastases)] using a plurality of example images, each obtained from a particular subject and comprising a graphical representation of suspect regions within a prostate region of the particular subject, said plurality of example images comprising: (A) a plurality of positive example images obtained for subjects known to have (e.g., synchronous) metastases; and (B) a plurality of negative example images obtained for subjects having localized disease (e.g., without metastases). [0070] In certain embodiments, the plurality of positive example images are images obtained for subjects having synchronous metastases, and wherein step (d) comprises using the neural network to predict the risk of metastases (e.g., metachronous metastases) for the subject.
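The following Python sketch illustrates, under stated assumptions, how training pairs for the positive/negative scheme of [0069] might be assembled: images from subjects with synchronous metastases are labelled 1 and images from subjects with localized disease are labelled 0. The `Example` record, its status strings, and the inverse-frequency class weighting are hypothetical additions included only to show how a class imbalance could be handled; they are not part of the described embodiments. The weighted binary cross-entropy shown is one common loss for such a binary target.

```python
from dataclasses import dataclass

import numpy as np

# Hypothetical status strings mapped to binary training targets.
LABELS = {"synchronous_metastases": 1, "localized": 0}


@dataclass
class Example:
    channels: np.ndarray  # e.g. (2, z, y, x): prostate intensity + hotspot mask
    status: str           # one of the LABELS keys


def to_training_arrays(examples):
    """Stack example inputs and map each subject's status to a 0/1 target."""
    X = np.stack([e.channels for e in examples]).astype(np.float32)
    y = np.array([LABELS[e.status] for e in examples], dtype=np.float32)
    return X, y


def class_weights(y):
    """Inverse-frequency per-example weights (an assumed imbalance remedy)."""
    n_pos = float(y.sum())
    n_neg = float(len(y)) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need both positive and negative examples")
    return np.where(y == 1, len(y) / (2 * n_pos), len(y) / (2 * n_neg))


def weighted_bce(p, y, w, eps=1e-7):
    """Mean per-example-weighted binary cross-entropy for predictions p."""
    p = np.clip(p, eps, 1 - eps)
    return float(np.mean(-w * (y * np.log(p) + (1 - y) * np.log(1 - p))))
```

With three localized examples and one synchronous-metastases example, the weights come out as 2/3 for each negative and 2.0 for the positive, so both classes contribute equally to the loss in aggregate.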
[0071] In certain embodiments, the subject is or has been determined to have localized prostate cancer, with observable lesions {e.g., as determined via pathological assessment; e.g., as determined based on analysis of the one or more medical images [e.g., and identification of one or more suspect regions meeting one or more criteria (e.g., having a minimum size, intensity, etc.)]} confined to a primary tumor volume comprising (e.g., and/or about) the prostate of the subject [e.g., comprising the prostate and a surrounding buffer/margin (e.g., approximately 1, 5, 10, 25 voxels; e.g., approximately 1 mm, 2 mm, 5 mm, 10 mm)] and wherein step (d) comprises predicting, as the risk of metastases, a likelihood that the subject will develop one or more observable lesions outside the primary tumor volume (e.g., outside the prostate and/or its surrounding buffer/margin) (e.g., thereby generating a quantitative prediction of risk that the localized disease will develop into metastatic disease). [0072] In certain embodiments, step (c) comprises automatically identifying the one or more hotspot(s) within the prostate volume (e.g., and/or the surrounding buffer/margin), but not identifying any hotspot(s) outside of the prostate volume and/or a surrounding buffer/margin. [0073] In certain embodiments, no hotspot(s) are identified outside of the prostate volume (e.g., and/or a surrounding buffer/margin thereof).
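Paragraphs [0071] to [0073] refer to a primary tumor volume made up of the prostate and a surrounding buffer/margin, with hotspots identified only within that volume. A minimal Python sketch is shown below, assuming a cuboid (box-shaped) margin given in millimetres and a hotspot label map on the same grid as the prostate mask. The helper names, the rounding-up of the margin to whole voxels, and the rule that a hotspot is kept if any of its voxels touches the margin-expanded volume are illustrative assumptions.

```python
import numpy as np


def buffer_in_voxels(buffer_mm, spacing_mm):
    """Per-axis margin in voxels (rounded up) for a margin given in mm."""
    return tuple(int(np.ceil(buffer_mm / s)) for s in spacing_mm)


def dilate_cuboid(mask, radii):
    """Binary dilation with a cuboid structuring element of half-widths
    `radii` (one per axis), computed as a separable running OR per axis."""
    out = mask.astype(bool)
    for axis, r in enumerate(radii):
        if r == 0:
            continue
        pad = [(0, 0)] * out.ndim
        pad[axis] = (r, r)
        padded = np.pad(out, pad)  # zero (False) padding along this axis
        acc = np.zeros_like(out)
        n = out.shape[axis]
        for offset in range(2 * r + 1):
            idx = [slice(None)] * out.ndim
            idx[axis] = slice(offset, offset + n)
            acc |= padded[tuple(idx)]  # OR over the window [i - r, i + r]
        out = acc
    return out


def hotspots_in_primary_volume(hotspot_labels, prostate_mask, spacing_mm,
                               buffer_mm=5.0):
    """IDs of labelled hotspots touching the prostate plus its margin."""
    radii = buffer_in_voxels(buffer_mm, spacing_mm)
    primary = dilate_cuboid(prostate_mask, radii)
    ids = np.unique(hotspot_labels[primary])
    return sorted(int(i) for i in ids if i != 0)
```

A cuboid margin is used here because it is separable and cheap to compute without additional libraries; a spherical margin (for example, via a Euclidean distance transform) would follow the anatomy more closely at the corners.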

[0074] In certain embodiments, step (d) comprises generating, by the neural network, a likelihood value (e.g., as the likelihood that the subject will develop one or more observable lesions outside the primary tumor volume) representing a risk that lesions will spread outside the primary tumor region (e.g., outside the prostate) within a particular period of time [e.g., within 6 months (synchronous metastasis) or after more than 6 months (metachronous metastasis)]. [0075] In certain embodiments, step (d) comprises predicting a risk that the subject will develop metachronous metastases. [0076] In certain embodiments, the method comprises, at step (d), predicting a presence of metastases in or involving local lymph in the subject. [0077] In certain embodiments, the method comprises, at step (d), predicting a risk of metastases in or involving local lymph for the subject. [0078] In certain embodiments, the method comprises, at step (d), predicting a presence of metastases of one or more molecular imaging TNM (miTNM) lesion type classes in the subject. [0079] In certain embodiments, the method comprises predicting a presence of distant lymph node metastases (miMa) in the subject. [0080] In certain embodiments, the method comprises predicting a presence of one or more particular sub-classes of distant lymph node metastases (miMa) in the subject, said sub-classes of distant lymph node metastases (miMa) selected from the group consisting of retroperitoneal (RP), supradiaphragmatic (SD), and other extrapelvic (OE). [0081] In certain embodiments, the method comprises, at step (d), predicting a risk of metastases of one or more molecular imaging TNM (miTNM) lesion type classes for the subject. [0082] In certain embodiments, the method comprises predicting a risk of distant lymph node metastases (miMa) for the subject.
[0083] In certain embodiments, the method comprises predicting a risk of one or more particular sub-classes of distant lymph node metastases (miMa) for the subject, said sub-classes of distant lymph node metastases (miMa) selected from the group consisting of retroperitoneal (RP), supradiaphragmatic (SD), and other extrapelvic (OE).
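The 6-month window in [0074] can be illustrated by a small labelling helper that classifies a subject's first recorded metastasis relative to the date of the baseline image. In this non-limiting Python sketch, the use of calendar-month arithmetic, the treatment of a detection falling exactly at the 6-month mark as synchronous, and the `"none_observed"` label (whose meaning in practice depends on the length of follow-up) are assumptions; the function names are hypothetical.

```python
import calendar
from datetime import date
from typing import Optional


def add_months(d: date, months: int) -> date:
    """Calendar-month addition, clamping the day to the target month's
    length (e.g. Aug 31 + 6 months -> Feb 28 or Feb 29)."""
    m = d.month - 1 + months
    year, month = d.year + m // 12, m % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def metastasis_label(imaging_date: date,
                     first_metastasis_date: Optional[date],
                     window_months: int = 6) -> str:
    """Label a subject as synchronous, metachronous, or none observed."""
    if first_metastasis_date is None:
        return "none_observed"  # interpretation depends on follow-up length
    if first_metastasis_date < imaging_date:
        raise ValueError("metastasis recorded before the baseline image")
    if first_metastasis_date <= add_months(imaging_date, window_months):
        return "synchronous"  # within the window (boundary inclusive, assumed)
    return "metachronous"
```

For example, with a baseline image on 15 January 2023, a first metastasis recorded on 15 July 2023 is labelled synchronous under this sketch, whereas one recorded on 16 July 2023 is labelled metachronous.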

[0084] In another aspect, the invention is directed to a system for automatically processing one or more medical images (e.g., 3D images) of a subject and using the processed image(s) to automatically predict a presence and/or a risk of metastases (e.g., to automatically predict whether localized disease has developed or will develop into metastatic cancer), the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) automatically identify, within a 3D functional image acquired following administration to the subject of an imaging agent, a prostate volume corresponding to a prostate of the subject; (b) automatically identify one or more hotspots within the prostate volume (e.g., said one or more hotspots corresponding to localized regions of high intensity relative to their surroundings and representing lesions or potential lesions within the subject); and (c) predict, using a neural network, (i) a presence of metastases in the subject, and/or (ii) a risk of metastases in the subject, said predicting based at least in part on the automatically identified prostate volume and the automatically identified one or more hotspots within the prostate volume, wherein the neural network receives at least two channels of input, the at least two channels of input comprising: (A) a prostate intensity channel comprising intensities of voxels located within the prostate volume; and (B) a hotspot mask channel comprising a mask identifying the one or more hotspots.
[0085] In another aspect, the invention is directed to a method for automatically processing medical images of a subject presenting with localized prostate cancer and using the processed image(s) to automatically predict a risk that the subject will develop metastases, the method comprising: (a) receiving, by a processor of a computing device, a medical image of the subject, comprising a graphical representation of a prostate of the subject; (b) automatically identifying, by the processor, within the medical image, a prostate volume corresponding to the prostate of the subject; (c) automatically identifying, by the processor, one or more hotspots within the prostate volume (e.g., said one or more hotspots corresponding to localized regions of high intensity relative to their surroundings and representing lesions or potential lesions within the subject); and (d) predicting, by the processor, using a neural network, a risk of metastases in the subject based at least in part on the prostate volume and the automatically identified one or more hotspots within the prostate volume, wherein the neural network is a trained neural network, having been trained using a plurality of example images, each obtained from a particular individual and comprising a graphical representation of hotspots within a prostate region of the particular individual, said plurality of example images comprising: (A) a plurality of positive example images obtained for individuals having synchronous metastases; and (B) a plurality of negative example images obtained for individuals having localized disease. [0086] In certain embodiments, the subject is or has been determined to have localized prostate cancer, with observable lesions confined to a primary tumor volume comprising and/or surrounding the prostate of the subject, and wherein step (d) comprises predicting, as the risk of metastases, a likelihood that the subject will develop one or more observable lesions outside the primary tumor volume. [0087] In certain embodiments, step (c) comprises automatically identifying the one or more hotspot(s) within the prostate volume, but not identifying any hotspot(s) outside of the prostate volume and/or a surrounding buffer/margin. [0088] In certain embodiments, no hotspot(s) are identified outside of the prostate volume. [0089] In certain embodiments, step (d) comprises generating, by the neural network, a likelihood value representing a risk that lesions will spread outside the prostate region within a particular period of time. [0090] In certain embodiments, the neural network receives at least two channels of input, the at least two channels of input comprising: (A) a prostate intensity channel comprising intensities of voxels located within the prostate volume; and (B) a hotspot mask channel comprising a mask identifying the one or more hotspot(s).
[0091] In another aspect, the invention is directed to a system for automatically processing medical images of a subject presenting with localized prostate cancer and using the processed image(s) to automatically predict a risk that the subject will develop metastases, the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) receive a medical image of the subject, comprising a graphical representation of a prostate of the subject; (b) automatically identify, within the medical image, a prostate volume corresponding to the prostate of the subject; (c) automatically identify one or more hotspots within the prostate volume, said one or more hotspots corresponding to localized regions of high intensity relative to their surroundings and representing lesions or potential lesions within the subject; and (d) predict, using a neural network, a risk of metastases in the subject based at least in part on the prostate volume and the automatically identified one or more hotspots within the prostate volume, wherein the neural network is a trained neural network, having been trained using a plurality of example images, each obtained from a particular individual and comprising a graphical representation of hotspots within a prostate region of the particular individual, said plurality of example images comprising: (A) a plurality of positive example images obtained for individuals having synchronous metastases; and (B) a plurality of negative example images obtained for individuals having localized disease. [0092] In another aspect, the invention is directed to a method for automatically processing one or more medical images (e.g., 3D images) of a subject and using the processed image(s) to automatically predict presence and/or risk of metastases (e.g., to automatically predict whether localized disease has developed or will develop into metastatic cancer), the method comprising: (a) receiving, by a processor of a computing device, one or more medical images of a primary tumor region of the subject {e.g., a PET/CT image obtained with a PSMA-targeted imaging agent, e.g., [18F]DCFPyL (PyL)}; (b) automatically identifying, by the processor, a volume of the image(s) corresponding to the primary tumor region within the subject (e.g., segmenting a volume representing the primary tumor region); (c) automatically identifying, by the processor, one or more suspect regions (e.g., hotspots) within the volume corresponding to the primary tumor region; and (d) predicting, by the processor, using a neural network (e.g., a convolutional neural network) (e.g., wherein both the automatically identified primary tumor volume and the automatically identified hotspots are used as inputs of the neural network), (i) a presence of metastases in the subject (e.g., the presence of synchronous metastases) (e.g., predicting whether localized disease has developed into metastatic cancer), and/or (ii) a risk of metastases (e.g., the risk of metachronous metastases) (e.g., predicting whether localized disease will develop into metastatic cancer). [0093] In certain embodiments, the primary tumor region is or comprises one or more organs of the subject (e.g., one or both breasts of the subject; e.g., a colon of the subject; e.g., an esophagus of the subject; e.g., one or both lungs of the subject; e.g., one or both ovaries of the subject; e.g., a pancreas of the subject). [0094] In certain embodiments, the method comprises, at step (d), predicting a presence of metastases in or involving local lymph in the subject.

[0095] In certain embodiments, the method comprises, at step (d), predicting a risk of metastases in or involving local lymph for the subject. [0096] In certain embodiments, the method comprises, at step (d), predicting presence of metastases of one or more molecular imaging TNM (miTNM) lesion type classes. [0097] In certain embodiments, the method comprises predicting presence of distant lymph node metastases (miMa) in the subject. [0098] In certain embodiments, the method comprises predicting presence of one or more particular sub-classes of distant lymph node metastases (miMa) in the subject, said sub-classes of distant lymph node metastases (miMa) selected from the group consisting of retroperitoneal (RP), supradiaphragmatic (SD), and other extrapelvic (OE). [0099] In certain embodiments, the method comprises, at step (d), predicting risk of metastases of one or more molecular imaging TNM (miTNM) lesion type classes for the subject. [0100] In certain embodiments, the method comprises predicting risk of distant lymph node metastases (miMa) for the subject. [0101] In certain embodiments, the method comprises predicting risk of one or more particular sub-classes of distant lymph node metastases (miMa) for the subject, said sub-classes of distant lymph node metastases (miMa) selected from the group consisting of retroperitoneal (RP), supradiaphragmatic (SD), and other extrapelvic (OE).
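Where the network predicts several miMa sub-classes (RP, SD, OE) at once, one possible output design, assumed here for illustration only, is a multi-label head with an independent sigmoid per sub-class, since a subject may have metastases in more than one sub-class. The non-limiting Python sketch below decodes such outputs; the output ordering, the 0.5 threshold, the `decode_mima_outputs` name, and the rule that miMa is present whenever any sub-class is present are hypothetical choices.

```python
import numpy as np

# Hypothetical ordering of the network's miMa sub-class outputs.
MIMA_SUBCLASSES = ("RP", "SD", "OE")


def decode_mima_outputs(logits, threshold=0.5):
    """Convert one logit per miMa sub-class into probabilities and calls.

    Each sub-class gets its own sigmoid (multi-label), so the probabilities
    need not sum to 1 and several sub-classes can be called present together.
    """
    logits = np.asarray(logits, dtype=float)
    if logits.shape != (len(MIMA_SUBCLASSES),):
        raise ValueError("expected one logit per sub-class")
    probs = 1.0 / (1.0 + np.exp(-logits))
    calls = {name: bool(p >= threshold) for name, p in zip(MIMA_SUBCLASSES, probs)}
    return {
        "probabilities": dict(zip(MIMA_SUBCLASSES, probs.tolist())),
        "present": calls,
        "miMa_present": any(calls.values()),
    }
```

A softmax over the three sub-classes would instead force a single sub-class per subject, which is why independent sigmoids are used in this sketch.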
[0102] In another aspect, the invention is directed to a system for automatically processing one or more medical images (e.g., 3D images) of a subject and using the processed image(s) to automatically predict presence and/or risk of metastases (e.g., to automatically predict whether localized disease has developed or will develop into metastatic cancer), the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) receive one or more medical images of a primary tumor region of the subject {e.g., a PET/CT image obtained with a PSMA-targeted imaging agent, e.g., [18F]DCFPyL (PyL)}; (b) automatically identify a volume of the image(s) corresponding to the primary tumor region within the subject (e.g., segmenting a volume representing the primary tumor region); (c) automatically identify one or more suspect regions (e.g., hotspots) within the volume corresponding to the primary tumor region; and (d) predict, using a neural network (e.g., a convolutional neural network) (e.g., wherein both the automatically identified primary tumor volume and the automatically identified hotspots are used as inputs of the neural network), (i) a presence of metastases in the subject (e.g., predicting whether localized disease has developed into metastatic cancer), and/or (ii) a risk of metastases (e.g., predicting whether localized disease will develop into metastatic cancer). [0103] In certain embodiments, the primary tumor region is or comprises one or more organs of the subject (e.g., one or both breasts of the subject; e.g., a colon of the subject; e.g., an esophagus of the subject; e.g., one or both lungs of the subject; e.g., one or both ovaries of the subject; e.g., a pancreas of the subject). [0104] In certain embodiments, at step (d), the instructions cause the processor to predict a presence of metastases in or involving local lymph in the subject. [0105] In certain embodiments, at step (d), the instructions cause the processor to predict a risk of metastases in or involving local lymph for the subject. [0106] In certain embodiments, at step (d), the instructions cause the processor to predict presence of metastases of one or more molecular imaging TNM (miTNM) lesion type classes. [0107] In certain embodiments, the instructions cause the processor to predict presence of distant lymph node metastases (miMa) in the subject. [0108] In certain embodiments, the instructions cause the processor to predict presence of one or more particular sub-classes of distant lymph node metastases (miMa) in the subject, said sub-classes of distant lymph node metastases (miMa) selected from the group consisting of retroperitoneal (RP), supradiaphragmatic (SD), and other extrapelvic (OE). [0109] In certain embodiments, at step (d), the instructions cause the processor to predict risk of metastases of one or more molecular imaging TNM (miTNM) lesion type classes for the subject.
[0110] In certain embodiments, the instructions cause the processor to predict risk of distant lymph node metastases (miMa) for the subject. [0111] In certain embodiments, the instructions cause the processor to predict risk of one or more particular sub-classes of distant lymph node metastases (miMa) for the subject, said sub-classes of distant lymph node metastases (miMa) selected from the group consisting of retroperitoneal (RP), supradiaphragmatic (SD), and other extrapelvic (OE). [0112] In another aspect, the invention is directed to a method for automatically processing one or more medical images (e.g., 3D images) of a subject and using the processed image(s) to automatically predict a presence and/or a risk of metastases (e.g., to automatically predict whether a localized disease has developed or will develop into metastatic cancer), the method comprising: (a) receiving, by a processor of a computing device, one or more medical images of a prostate of the subject {e.g., a PET/CT image obtained with a PSMA-targeted imaging agent, e.g., [18F]DCFPyL (PyL)}; (b) automatically identifying, by the processor, a prostate volume of the image(s) corresponding to the prostate of the subject (e.g., segmenting the prostate); (c) automatically identifying, by the processor, one or more suspect regions (e.g., hotspots) within the prostate volume; and (d) predicting, by the processor, using a neural network (e.g., a convolutional neural network) (e.g., wherein both the automatically identified prostate volume and the automatically identified hotspots are used as inputs of the neural network), (i) a presence of metastases in the subject (e.g., predicting whether localized disease has developed into metastatic cancer), and/or (ii) a risk of metastases in the subject (e.g., predicting whether localized disease will develop into metastatic cancer), said predicting based at least in part on the automatically identified prostate volume and the automatically identified one or more suspect regions.
[0113] In another aspect, the invention is directed to a system for automatically processing one or more medical images (e.g., 3D images) of a subject and using the processed image(s) to automatically predict a presence and/or a risk of metastases (e.g., to automatically predict whether a localized disease has developed or will develop into metastatic cancer), the system comprising: a processor of a computing device; and memory having instructions stored thereon, wherein the instructions, when executed by the processor, cause the processor to: (a) automatically identify a prostate volume of one or more medical image(s) {e.g., a PET/CT image obtained with a PSMA-targeted imaging agent, e.g., [18F]DCFPyL (PyL)}, said automatically identified prostate volume corresponding to a prostate of the subject (e.g., segmenting the prostate); (b) automatically identify one or more suspect regions (e.g., hotspots) within the prostate volume; and (c) predict, using a neural network (e.g., a convolutional neural network) (e.g., wherein both the automatically identified prostate volume and the automatically identified hotspots are used as inputs of the neural network), (i) a presence of metastases in the subject (e.g., predicting whether localized disease has developed into metastatic cancer), and/or (ii) a risk of metastases in the subject (e.g., predicting whether localized disease will develop into metastatic cancer), said predicting based at least in part on the automatically identified prostate volume and the automatically identified one or more suspect regions. [0114] Features of embodiments described with respect to one aspect of the invention may be applied with respect to another aspect of the invention. BRIEF DESCRIPTION OF THE DRAWING [0115] The foregoing and other objects, aspects, features, and advantages of the present disclosure will become more apparent and better understood by referring to the following description taken in conjunction with the accompanying drawing, in which: [0116] FIG.1 is an image cube (e.g., a set of PET images) showing a prostate, cropped from a full-size PET image, and indicating a number of slices in every dimension, according to an illustrative embodiment. [0117] FIG.2 is a set of three images showing corresponding slices of a CT image, a PET image, and a PET/CT fusion, obtained from a 3D PET/CT scan, according to an illustrative embodiment. [0118] FIG.3 is a diagram illustrating an example process for segmenting an anatomical image and identifying anatomical boundaries in a co-aligned functional image, according to an illustrative embodiment. [0119] FIG.4A is a diagram illustrating an example process for segmenting and classifying hotspots, according to an illustrative embodiment. [0120] FIG.4B is a screenshot of a graphical user interface showing segmented anatomical regions corresponding to arms and a skull of a subject, according to an illustrative embodiment. [0121] FIG.4C is a block flow diagram of an example process for detecting hotspots in a high uptake organ, according to an illustrative embodiment. [0122] FIG.5A is a schematic showing an approach for computing lesion index values, according to an illustrative embodiment.

[0123] FIG.5B is a schematic showing another approach for computing lesion index values, according to an illustrative embodiment. [0124] FIG.6 is a block flow diagram of an example process for automated determination of a prostate cancer staging score, according to an illustrative embodiment. [0125] FIG.7A is a block flow diagram of an example process for determining a machine-learning based metastases prediction via analysis of one or more medical images, according to an illustrative embodiment. [0126] FIG.7B is a block flow diagram illustrating inputs and outputs of a neural network for generating metastases predictions based on image data input channels, according to an illustrative embodiment. [0127] FIG.7C is a block flow diagram illustrating inputs and outputs of a fused model that combines neural network-based analysis of image data with measured and computed subject attributes, such as clinicopathological data, via a classifier to generate metastases predictions, according to an illustrative embodiment. [0128] FIG.8A is a block flow diagram of an example process for determining a machine-learning based metastases prediction via analysis of one or more medical images of localized prostate cancer, according to an illustrative embodiment. [0129] FIG.8B is a block flow diagram illustrating inputs and outputs of a neural network for generating metastases predictions based on prostate image data input channels, according to an illustrative embodiment. [0130] FIG.8C is a block flow diagram illustrating inputs and outputs of a fused model that combines neural network-based analysis of prostate image data with measured and computed patient attributes, such as clinicopathological data, via a classifier to generate metastases predictions, according to an illustrative embodiment. [0131] FIG.9 is a block diagram of an exemplary cloud computing environment, used in certain embodiments.
[0132] FIG.10 is a block diagram of an example computing device and an example mobile computing device, used in certain embodiments. [0133] FIG.11 is a block flow diagram of an illustrative method for automated determination of a PRIMARY score for prostate cancer staging, according to an illustrative embodiment.

[0134] FIG.12A is an example image of prostate-located uptake regions (hotspots) that is classified as “diffuse”, according to an illustrative embodiment. [0135] FIG.12B is an example image of prostate-located uptake regions (hotspots) that is classified as “focal”, according to an illustrative embodiment. [0136] FIG.13 is a graphical representation of a three-dimensional (3D) clinical prostate model used to identify one or more zones within the prostate in which each uptake region (hotspot) is located, according to an illustrative embodiment. [0137] FIG.14 is a graphical representation of the clinical prostate model fit to a prostate segmentation mask in the CT image, according to an illustrative embodiment. [0138] FIG.15 is a compilation of images depicting an example automated computation of a PRIMARY score, according to an illustrative embodiment. [0139] FIG.16 is a set of illustrative images showing input channels for a two-channel neural network model, according to an illustrative embodiment. [0140] FIG.17A is a diagram of an example CNN model architecture for performing binary classification, according to an illustrative embodiment. [0141] FIG.17B is a diagram of an example fused model combining a CNN model with patient attributes, according to an illustrative embodiment. [0142] FIG.18A is an example PET image of a prostate region, according to an illustrative embodiment. [0143] FIG.18B is an example 3D hotspot mask, according to an illustrative embodiment. [0144] FIG.18C is an image showing the example PET image shown in FIG.18A overlaid with the hotspot mask shown in FIG.18B, according to an illustrative embodiment. [0145] FIG.19 is a schematic showing an example process for creation and evaluation of models for predicting metastases, according to an illustrative embodiment.
[0146] FIG.20A is an image showing an attention map for an X gradient explainer computed for a CNN model that was trained on, and receives, as input, a single input channel comprising a prostate PET image, according to an illustrative embodiment. [0147] FIG.20B is an image showing the attention map of FIG.20A overlaid on an input PET image, according to an illustrative embodiment.

[0148] FIG.21A is an image showing an attention map for an X gradient explainer computed for a CNN model that was trained on, and receives as input, two input channels: one comprising a prostate PET image and a second comprising a hotspot mask, according to an illustrative embodiment.

[0149] FIG.21B is an image showing a portion of a prostate PET image used as input to a two-input-channel CNN model, according to an illustrative embodiment.

[0150] FIG.21C is an image showing a hotspot mask used as input to a two-input-channel CNN model, according to an illustrative embodiment.

[0151] FIG.21D is an image showing the attention map of FIG.21A, the PET image of FIG.21B, and the hotspot mask of FIG.21C overlaid on each other, according to an illustrative embodiment.

[0152] FIG.22A is a plot showing receiver operating characteristic (ROC) curves for three metastases prediction models, used in certain embodiments.

[0153] FIG.22B is a plot showing precision-recall curves for three metastases prediction models, used in certain embodiments.

[0154] FIG.22C is a plot showing receiver operating characteristic (ROC) curves for three metastases prediction models, used in certain embodiments.

[0155] FIG.23 is a matrix table showing predictive contributions of various input features, according to an illustrative embodiment.

[0156] FIG.24 is a graph showing four box-and-whisker plots for certain metastases prediction models, according to certain embodiments.

[0157] FIG.25 is a graph showing power curves for various sample sizes, according to an illustrative embodiment.

[0158] Features and advantages of the present disclosure will become more apparent from the detailed description of certain embodiments set forth below, particularly when taken in conjunction with the figures, in which like reference characters identify corresponding elements throughout. In the figures, like reference numbers generally indicate identical, functionally similar, and/or structurally similar elements.
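
The two-channel input described for FIG.16, FIG.21B, and FIG.21C (a prostate PET image portion plus a hotspot mask) can be sketched with NumPy as below. This is a minimal illustration under stated assumptions, not the preprocessing of the disclosure: the channel-first (C, D, H, W) layout, the scaling of PET intensities by the crop maximum, and the function name `make_two_channel_input` are hypothetical choices made for this example.

```python
import numpy as np


def make_two_channel_input(pet_crop, hotspot_mask):
    """Stack a PET sub-volume and its hotspot mask into a (2, D, H, W) array.

    Hypothetical preprocessing for illustration only: channel 0 holds PET
    intensities scaled to [0, 1] by the crop maximum, channel 1 holds the
    binarized hotspot mask.
    """
    pet = np.asarray(pet_crop, dtype=np.float32)
    mask = np.asarray(hotspot_mask)
    if pet.shape != mask.shape:
        raise ValueError("PET crop and hotspot mask must have the same shape")
    pet_max = float(pet.max())
    if pet_max > 0:  # avoid division by zero for an all-zero crop
        pet = pet / pet_max
    mask = (mask > 0).astype(np.float32)  # any non-zero voxel counts as hotspot
    return np.stack([pet, mask], axis=0)  # channel-first layout


# Toy 4 x 4 x 4 example: a bright 2 x 2 x 2 "hotspot" in an otherwise dim crop.
pet = np.full((4, 4, 4), 1.0)
pet[1:3, 1:3, 1:3] = 8.0
mask = (pet > 4.0).astype(np.uint8)
x = make_two_channel_input(pet, mask)
print(x.shape)  # (2, 4, 4, 4)
```

For a single-channel model such as the one of FIG.20A, only the PET channel would be used (e.g., `x[:1]`); a batch dimension would typically be prepended before passing either array to a CNN.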

CERTAIN DEFINITIONS

[0159] In order for the present disclosure to be more readily understood, certain terms are first defined below. Additional definitions for the following terms and other terms are set forth throughout the specification.

[0160] A, an: The articles “a” and “an” are used herein to refer to one or to more than one (i.e., at least one) of the grammatical object of the article. By way of example, “an element” means one element or more than one element. Thus, in this specification and the appended claims, the singular forms “a,” “an,” and “the” include plural references unless the context clearly dictates otherwise. Thus, for example, reference to a pharmaceutical composition comprising “an agent” includes reference to two or more agents.

[0161] About, approximately: As used in this application, the terms “about” and “approximately” are used as equivalents. Any numerals used in this application with or without about/approximately are meant to cover any normal fluctuations appreciated by one of ordinary skill in the relevant art. In certain embodiments, the term “approximately” or “about” refers to a range of values that fall within 25%, 20%, 19%, 18%, 17%, 16%, 15%, 14%, 13%, 12%, 11%, 10%, 9%, 8%, 7%, 6%, 5%, 4%, 3%, 2%, 1%, or less in either direction (greater than or less than) of the stated reference value unless otherwise stated or otherwise evident from the context (except where such number would exceed 100% of a possible value).

[0162] First, second, etc.: It should be understood that any reference to an element herein using a designation such as “first,” “second,” and so forth does not limit the quantity or order of those elements, unless such limitation is explicitly stated. Rather, these designations may be used herein as a convenient method of distinguishing between two or more elements or instances of an element. Thus, a reference to first and second elements does not mean that only two elements may be employed or that the first element must precede the second element in some manner. In addition, unless stated otherwise, a set of elements may comprise one or more elements.

[0163] Administering: As used herein, “administering” an agent means introducing a substance (e.g., an imaging agent) into a subject. In general, any route of administration may be utilized including, for example, parenteral (e.g., intravenous), oral, topical, subcutaneous, peritoneal, intraarterial, inhalation, vaginal, rectal, nasal, introduction into the cerebrospinal fluid, or instillation into body compartments.
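
One arithmetic reading of the “about” range in [0161] can be sketched as follows. The function names and the `max_possible` parameter are hypothetical, and capping the upper bound at `max_possible` is only one interpretation of the exception for values that would exceed 100% of a possible value.

```python
def about_range(reference, pct, max_possible=None):
    """Return the (low, high) interval covered by "about <reference>".

    pct is the tolerance in percent (e.g., 10 for 10%). If max_possible is
    given (hypothetical parameter), the upper bound is capped at that value.
    """
    if pct < 0:
        raise ValueError("pct must be non-negative")
    delta = abs(reference) * pct / 100  # tolerance applies in either direction
    low, high = reference - delta, reference + delta
    if max_possible is not None:
        high = min(high, max_possible)
    return low, high


def is_about(value, reference, pct, max_possible=None):
    """True if value lies within the "about" interval of reference."""
    low, high = about_range(reference, pct, max_possible)
    return low <= value <= high


print(about_range(50, 10))                    # (45.0, 55.0)
print(about_range(95, 10, max_possible=100))  # (85.5, 100)
```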

[0164] 3D, three-dimensional: As used herein, “3D” or “three-dimensional” with reference to an “image” means conveying information about three dimensions. A 3D image may be rendered as a dataset in three dimensions and/or may be displayed as a set of two-dimensional representations, or as a three-dimensional representation. In certain embodiments, a 3D image is represented as voxel (e.g., volumetric pixel) data.

[0165] Image: As used herein, an “image”, for example, a three-dimensional (3D) image of a subject, includes any visual representation, such as a photo, a video frame, or streaming video, as well as any electronic, digital, or mathematical analogue of a photo (e.g., a digital image), video frame, or streaming video, displayed or stored in memory (e.g., a digital image may, but need not, be displayed for visual inspection). Any apparatus described herein, in certain embodiments, includes a display for displaying an image or any other result produced by the processor. Any method described herein, in certain embodiments, includes a step of displaying an image or any other result produced via the method. In certain embodiments, an image is a 3D image, conveying information that varies with position within a 3D volume. Such images may, for example, be represented digitally as a 3D matrix (e.g., an N × M × L matrix) with each voxel of a 3D image represented by an element of a 3D matrix. Other representations are also contemplated and included; for example, a 3D matrix may be reshaped as a vector (e.g., a 1 × K size vector, where K is a total number of voxels) by stitching each row or column end to end.
Examples of images include medical images, such as bone-scan images (also referred to as scintigraphy images), computed tomography (CT) images, magnetic resonance images (MRIs), optical images (e.g., bright-field microscopy images, fluorescence images, reflection or transmission images, etc.), positron emission tomography (PET) images, single-photon emission computed tomography (SPECT) images, ultrasound images, x-ray images, and the like. In certain embodiments, a medical image is or comprises a nuclear medicine image, produced from radiation emitted from within a subject being imaged. In certain embodiments, a medical image is or comprises an anatomical image (e.g., a 3D anatomical image) conveying information regarding the location and extent of anatomical structures, such as internal organs, bones, soft tissue, and blood vessels, within a subject. Examples of anatomical images include, without limitation, x-ray images, CT images, MRIs, and ultrasound images. In certain embodiments, a medical image is or comprises a functional image (e.g., a 3D functional image) conveying information relating to physiological activities within specific organs and/or tissue, such as metabolism, blood flow, regional chemical composition, absorption, etc. Examples of functional images include, without limitation, nuclear medicine images, such as PET images and SPECT images, as well as other functional imaging modalities, such as functional MRI (fMRI), which measures small changes in blood flow for use in assessing brain activity.

[0166] Radionuclide: As used herein, “radionuclide” refers to a moiety comprising a radioactive isotope of at least one element. Exemplary suitable radionuclides include, but are not limited to, those described herein. In some embodiments, a radionuclide is one used in positron emission tomography (PET). In some embodiments, a radionuclide is one used in single-photon emission computed tomography (SPECT). In some embodiments, a non-limiting list of radionuclides includes
99mTc,

111In,

64Cu,

67Ga,

68Ga,

186Re,

188Re,

153Sm,

177Lu,

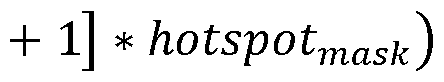

67Cu,

123I,

124I,

125I,

126I,

131I ,

11C,

13N,

15O,

18F,

153Sm,

166Ho,

177Lu,

149Pm,

90Y,

213Bi,

103Pd,

109Pd,

159Gd,

140La,

198Au,

199Au,

169Yb,

175Yb,

165Dy,

166Dy,

105Rh,

111Ag,

89Zr,

225Ac,

82Rb,

75Br,

76Br,

77Br,

80Br,

80mBr,

82Br,

83Br,

211At and

192Ir. [0167] Radiopharmaceutical: As used herein, the term “radiopharmaceutical” refers to a compound comprising a radionuclide. In certain embodiments, radiopharmaceuticals are used for diagnostic and/or therapeutic purposes. In certain embodiments, radiopharmaceuticals include small molecules that are labeled with one or more radionuclide(s), antibodies that are labeled with one or more radionuclide(s), and antigen-binding portions of antibodies that are labeled with one or more radionuclide(s). [0168] Machine learning module: Certain embodiments described herein make use of (e.g., include) software instructions that include one or more machine learning module(s), also referred to herein as artificial intelligence software. As used herein, the term “machine learning module” refers to a computer implemented process (e.g., function) that implements one or more specific machine learning algorithms in order to determine, for a given input (such as an image (e.g., a 2D image; e.g., a 3D image), dataset, and the like) one or more output values. For example, a machine learning module may receive as input a 3D image of a subject (e.g., a CT image; e.g., an MRI), and for each voxel of the image, determine a value that represents a likelihood that the voxel lies within a region of the 3D image that corresponds to a representation of a particular organ or tissue of the subject. In certain embodiments, two or more machine learning modules may be combined and implemented as a single module and/or a single software application. In certain embodiments, two or more machine learning modules may also be implemented separately, e.g., as separate software applications. A machine learning module may be software and/or hardware. For example, a machine learning module may be implemented entirely as software, or certain functions of a - 30 - 12297548v2

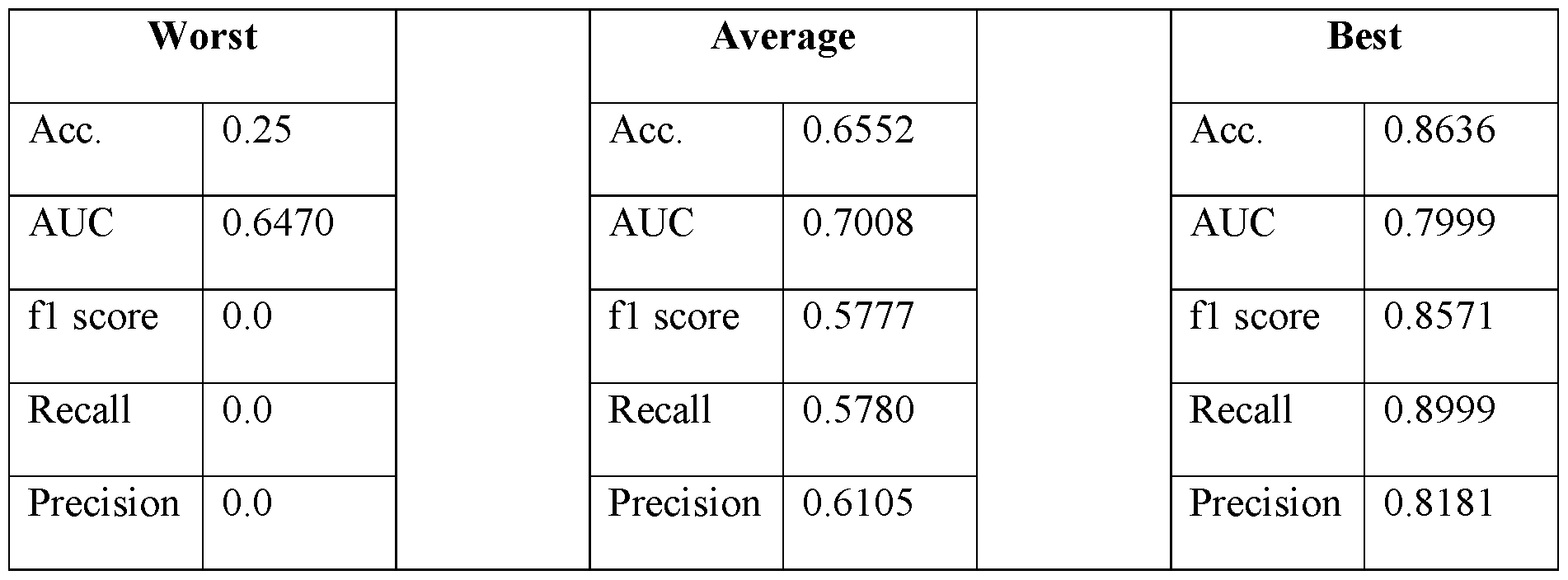

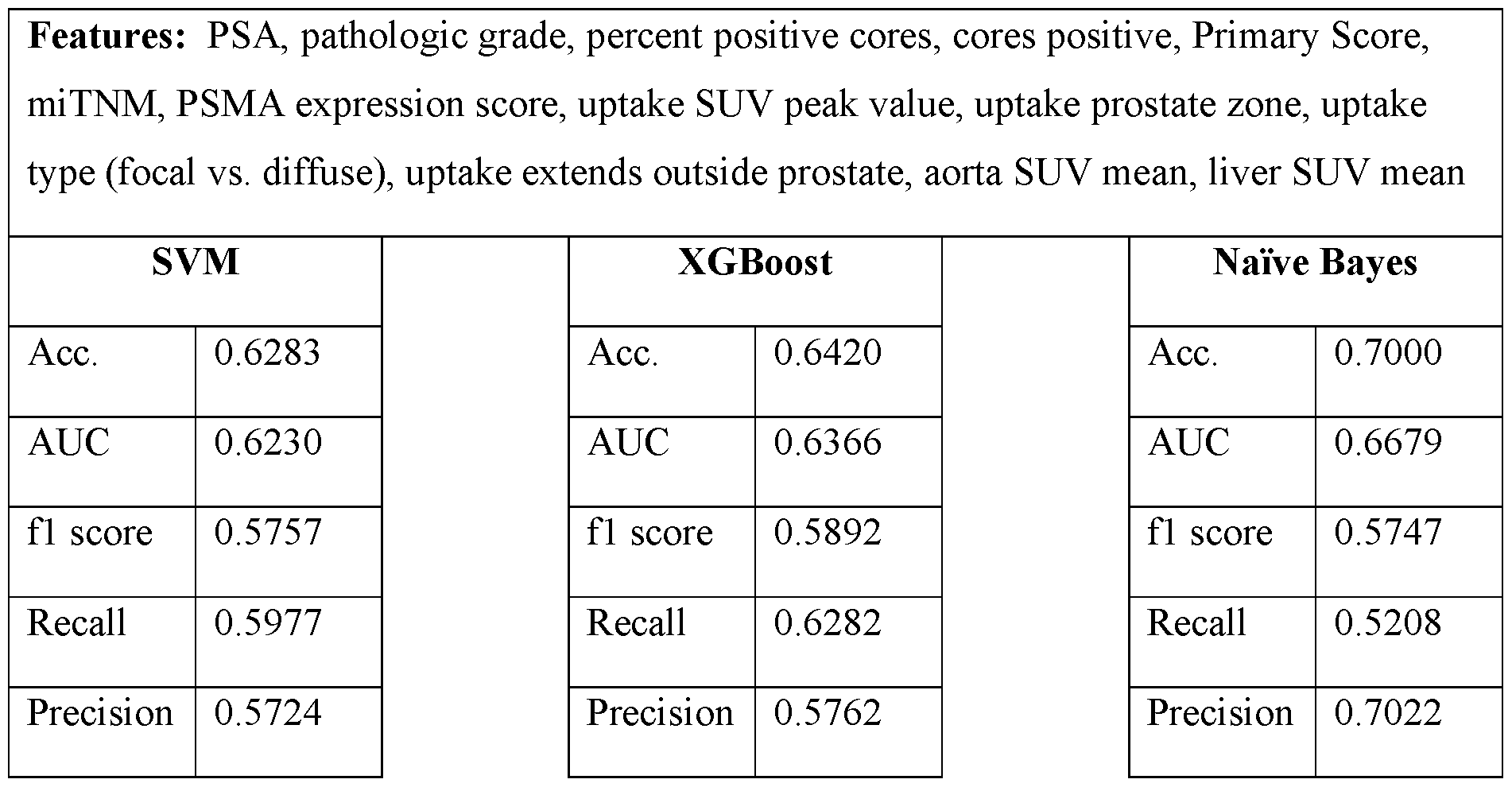

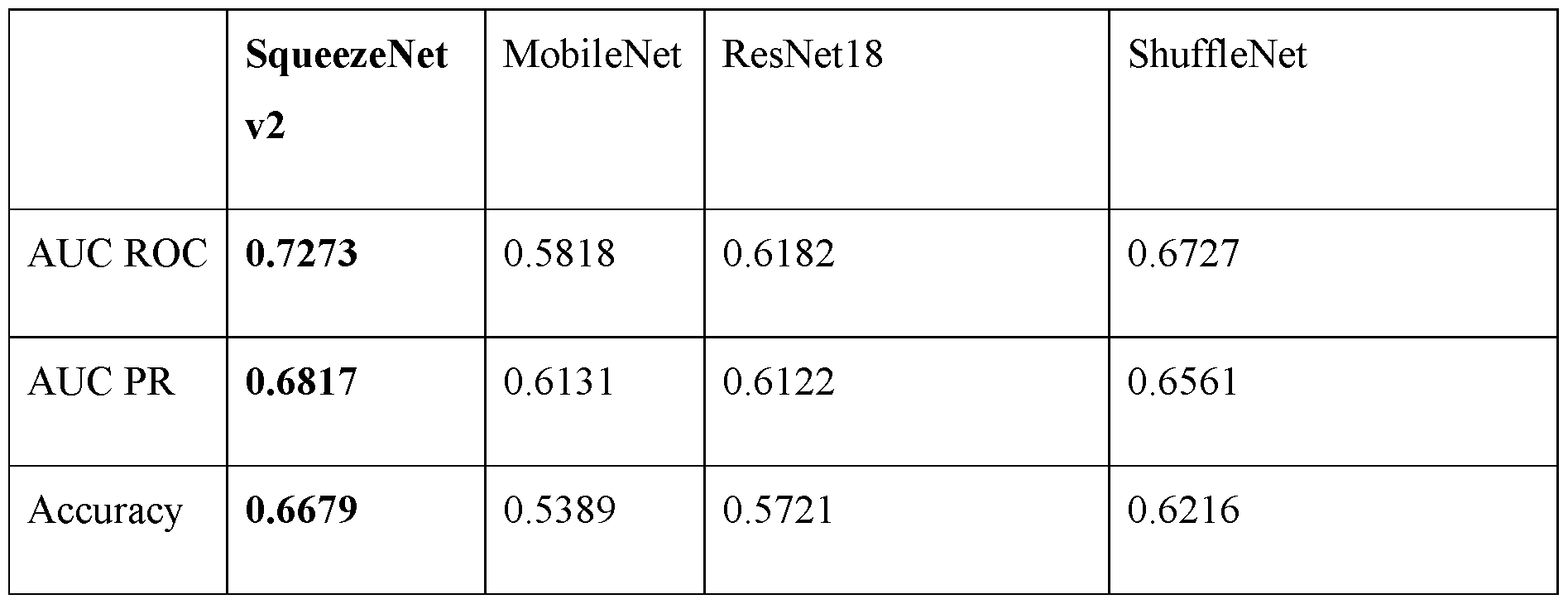

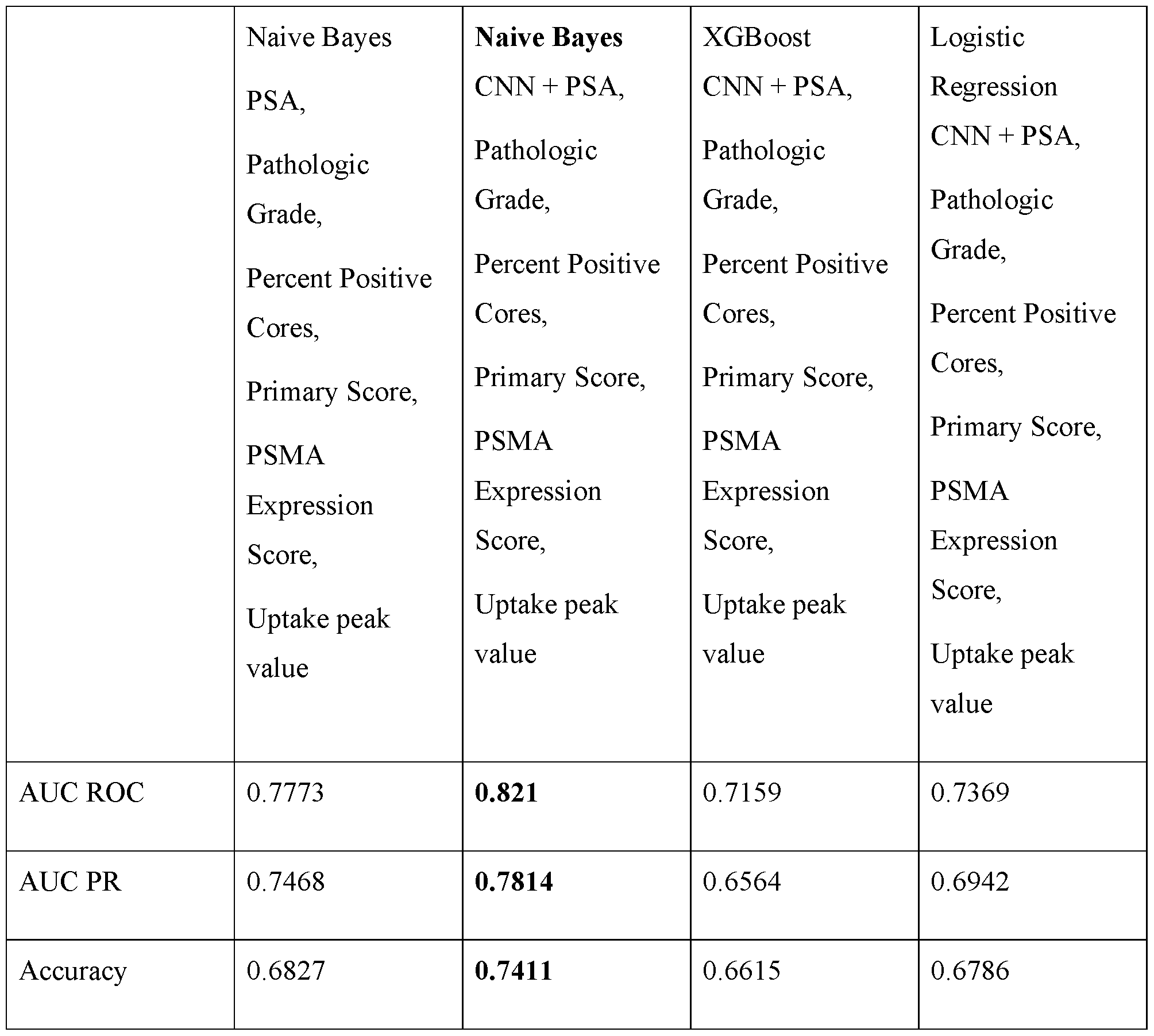

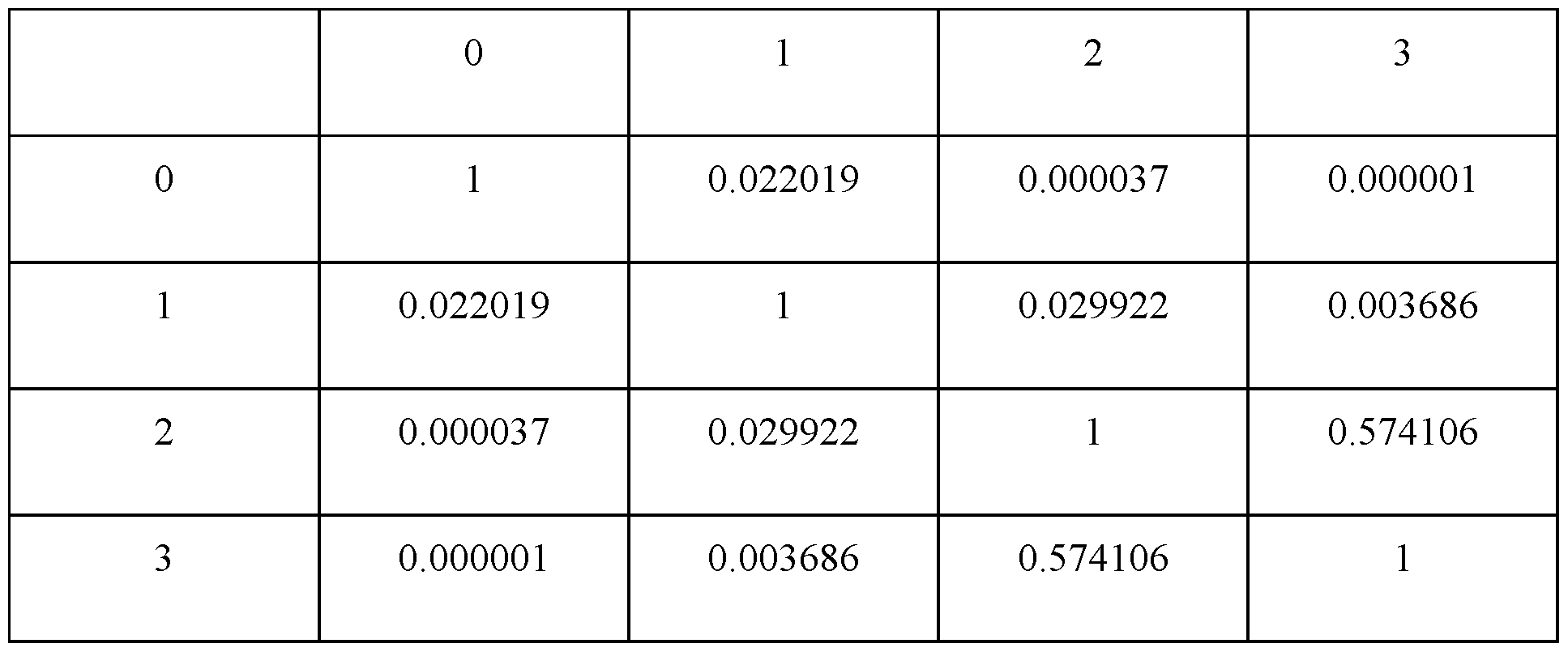

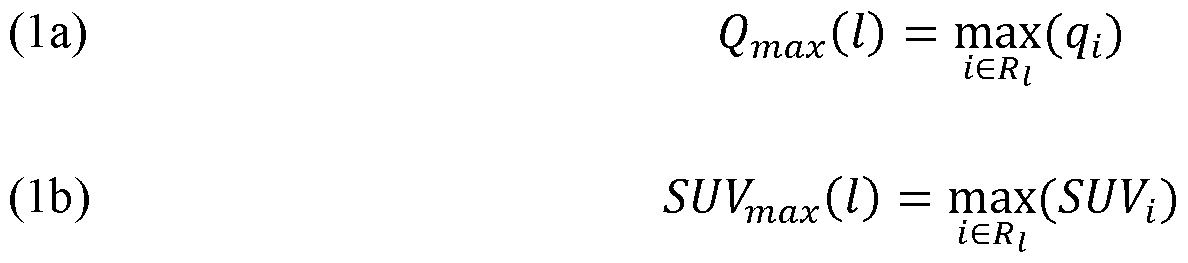

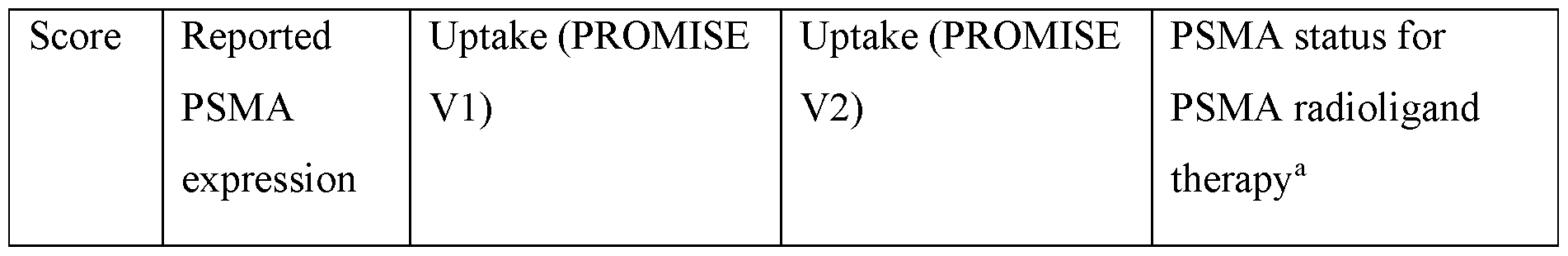

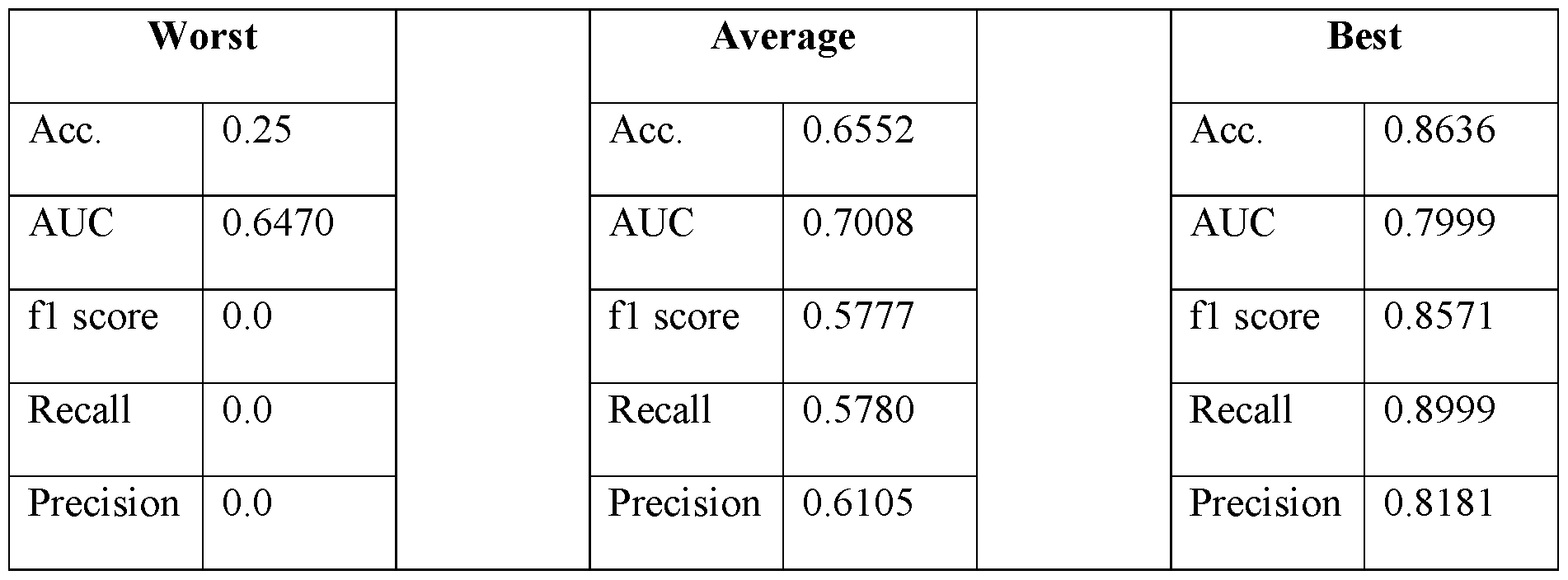

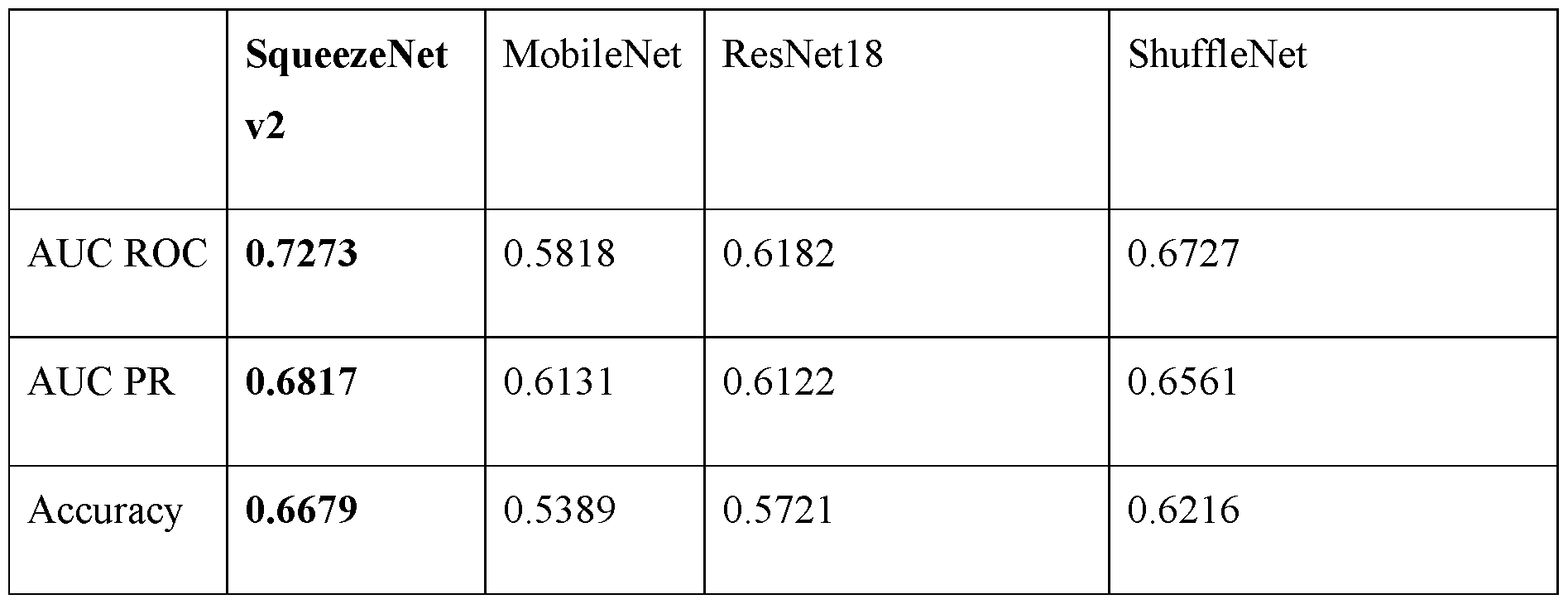

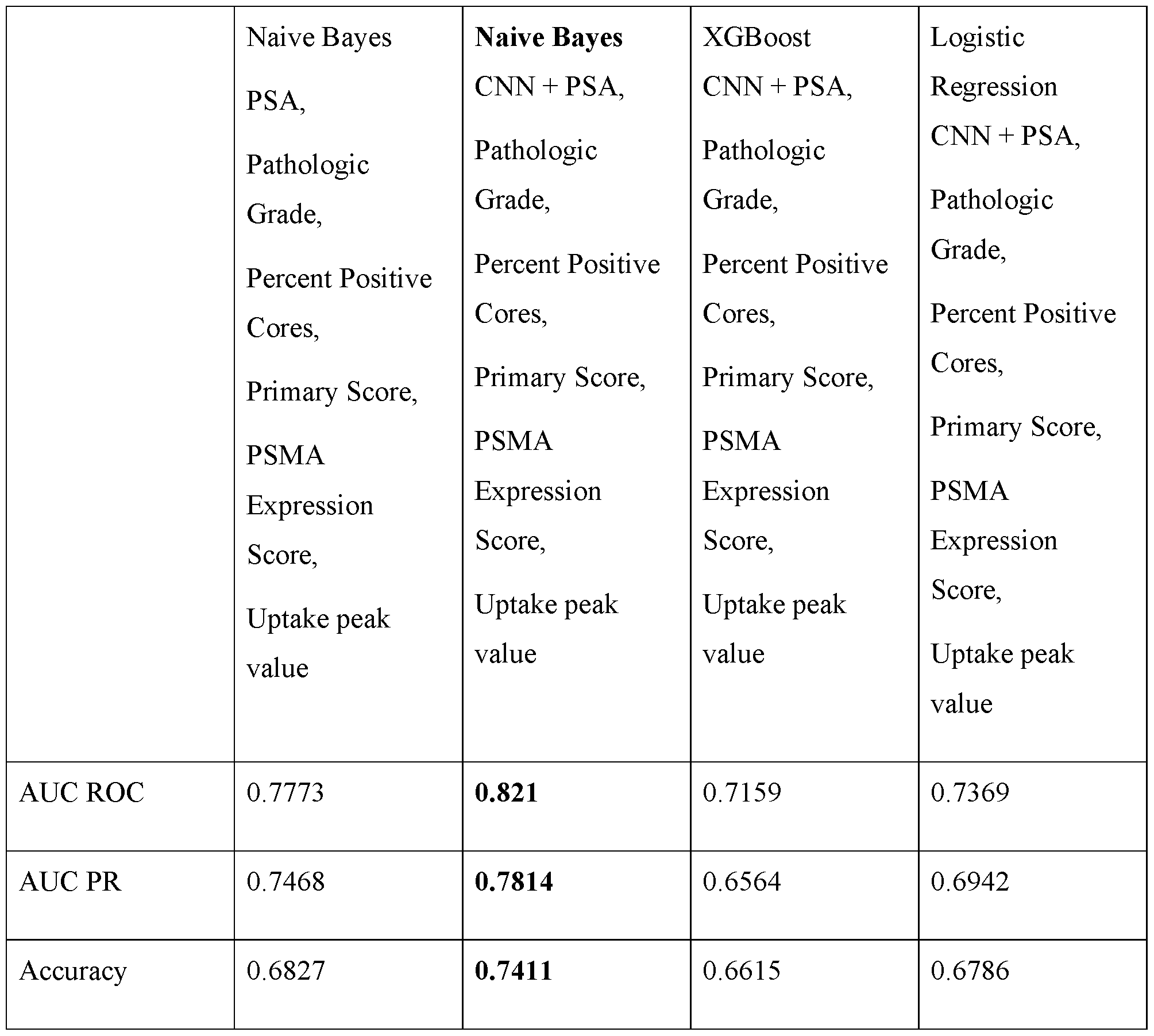

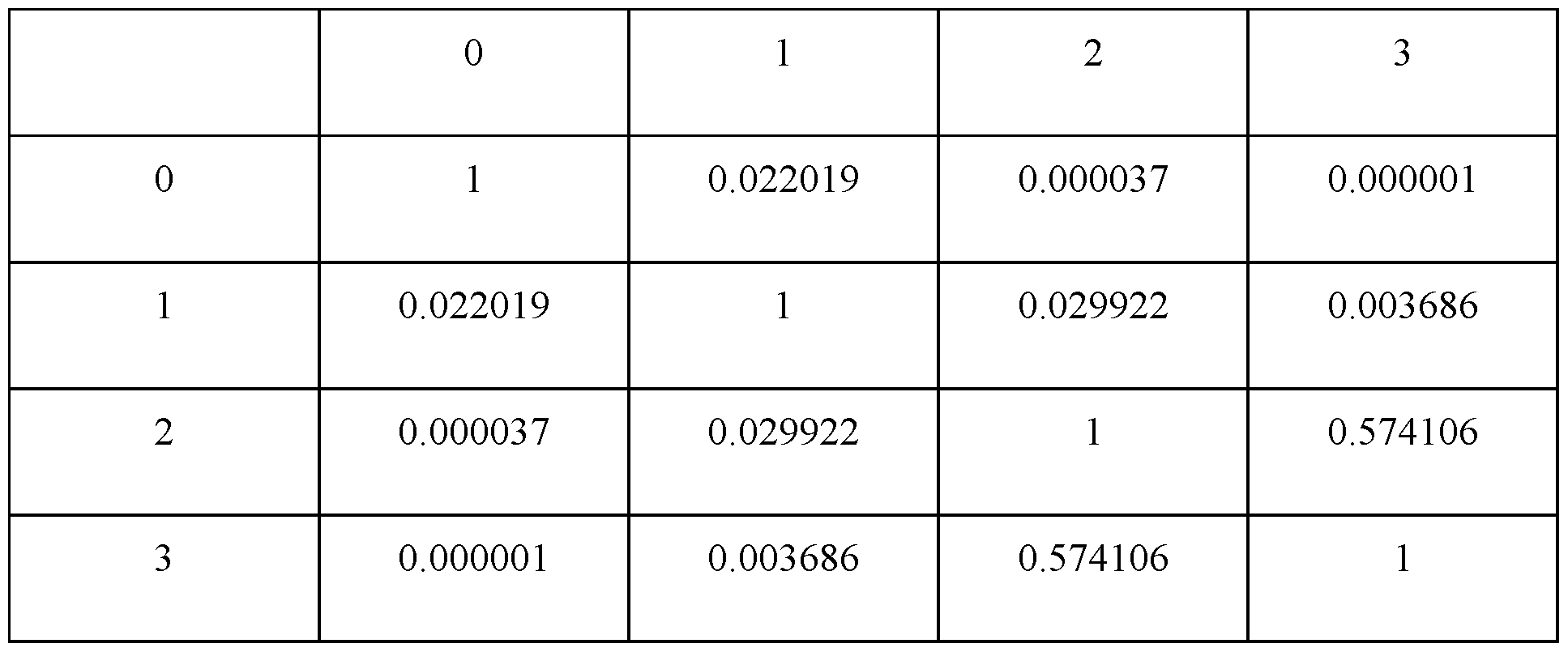

CNN module may be carried out via specialized hardware (e.g., via an application-specific integrated circuit (ASIC)).

[0169] Map: As used herein, the term “map” is understood to mean a visual display, or any data representation that may be interpreted for visual display, which contains spatially correlated information. For example, a three-dimensional map of a given volume may include a dataset of values of a given quantity that varies in three spatial dimensions throughout the volume. A three-dimensional map may be displayed in two dimensions (e.g., on a two-dimensional screen or on a two-dimensional printout).

[0170] Metachronous metastases: As used herein, the term “metachronous metastases” refers to metastases within a patient that are not detected and/or do not appear until after a particular time interval following initial diagnosis and/or detection of cancer.

[0171] Occult metastases: As used herein, the term “occult metastases” refers to metastases that are present within a patient but not detected during initial pathological examination. In certain embodiments, for example, occult metastases may be undetectable via conventional imaging. For example, a patient may have existing metastases that are not yet large enough to give rise to observable suspect regions in CT, MRI, or nuclear medicine images (e.g., as hotspots).

[0172] Segmentation map: As used herein, the term “segmentation map” refers to a computer representation that identifies one or more 2D or 3D regions determined by segmenting an image. In certain embodiments, a segmentation map distinguishably identifies multiple different (e.g., segmented) regions, allowing them to be individually and distinguishably accessed and operated upon and/or used for operating on, for example, one or more images.

[0173] Subject: As used herein, a “subject” means a human or other mammal (e.g., rodent (mouse, rat, hamster), pig, cat, dog, horse, primate, rabbit, and the like). The term “subject” is used herein interchangeably with the term “patient”.

[0174] Synchronous metastases: As used herein, the term “synchronous metastases” refers to metastases within a patient that co-exist with the primary cancer tumor at a time of initial diagnosis and/or detection of cancer. In certain embodiments, an initial diagnosis and/or detection of cancer is a detection of a primary tumor and/or one or more lesions within a particular (e.g., single, isolated) organ or tissue region, such as a prostate, breast, liver, lung, colon, or rectum.
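
The matrix and vector representations of an image in [0165], and the segmentation map of [0172], can be illustrated with a small NumPy sketch. The toy 2 × 3 × 4 volume, the label values, and the helper names `flatten_image` and `region_masks` are hypothetical. The flattening uses NumPy's default row-major order, which is one way of stitching rows end to end.

```python
import numpy as np


def flatten_image(volume):
    """Reshape an N x M x L voxel matrix into a 1 x K row vector (K = N*M*L)."""
    volume = np.asarray(volume)
    # NumPy's default C (row-major) order concatenates rows end to end,
    # so the last axis varies fastest along the vector.
    return volume.reshape(1, volume.size)


def region_masks(segmentation_map):
    """Return one boolean mask per non-zero label of an integer segmentation map."""
    labels = np.unique(segmentation_map)
    return {int(lbl): segmentation_map == lbl for lbl in labels if lbl != 0}


# Toy 2 x 3 x 4 "image" whose voxel values are simply 0..23.
image = np.arange(24, dtype=np.float32).reshape(2, 3, 4)
vector = flatten_image(image)  # shape (1, 24); K = 24 voxels

# Hypothetical segmentation map: 0 = background, 1 and 2 = two regions.
seg = np.zeros(image.shape, dtype=np.int32)
seg[0, :, :2] = 1
seg[1, 1:, 2:] = 2

# Each region can be accessed individually and used to operate on the image,
# e.g., to compute a mean voxel intensity within that region.
masks = region_masks(seg)
region_means = {lbl: float(image[m].mean()) for lbl, m in masks.items()}
print(vector.shape, region_means)  # (1, 24) {1: 4.5, 2: 20.5}
```

Because the reshape is lossless, `vector.reshape(image.shape)` recovers the original 3D matrix; storing labels as integers in a single array is one simple way to keep multiple segmented regions distinguishable.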
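
The distinction between synchronous metastases ([0174]) and metachronous metastases ([0170]) turns on when a metastasis is detected relative to initial diagnosis. The sketch below assumes, as a hypothetical operationalization, that a metastasis detected within a chosen window after diagnosis is treated as synchronous. The source refers only to “a particular time interval”, so `interval_days` is a placeholder parameter with no default, and the function name is likewise hypothetical. Occult metastases, being undetected, fall outside this date-based rule.

```python
from datetime import date


def classify_metastasis_timing(diagnosis_date: date, detection_date: date,
                               interval_days: int) -> str:
    """Return "synchronous" or "metachronous" for a detected metastasis.

    interval_days is a hypothetical, study-specific window: detections no later
    than diagnosis_date + interval_days are treated as synchronous.
    """
    if interval_days < 0:
        raise ValueError("interval_days must be non-negative")
    elapsed = (detection_date - diagnosis_date).days
    if elapsed < 0:
        raise ValueError("detection_date precedes diagnosis_date")
    return "synchronous" if elapsed <= interval_days else "metachronous"


print(classify_metastasis_timing(date(2023, 1, 1), date(2023, 2, 1), interval_days=180))  # synchronous
print(classify_metastasis_timing(date(2023, 1, 1), date(2024, 1, 1), interval_days=180))  # metachronous
```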