CN116229075A - Data processing method, device, computer equipment and storage medium - Google Patents

Data processing method, device, computer equipment and storage medium

- Publication number

- CN116229075A (application CN202310233340.8A)

- Authority

- CN

- China

- Prior art keywords

- image

- style

- color

- background

- description

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/20—Image preprocessing

- G06V10/26—Segmentation of patterns in the image field; Cutting or merging of image elements to establish the pattern region, e.g. clustering-based techniques; Detection of occlusion

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F16/00—Information retrieval; Database structures therefor; File system structures therefor

- G06F16/50—Information retrieval; Database structures therefor; File system structures therefor of still image data

- G06F16/53—Querying

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F16/00—Information retrieval; Database structures therefor; File system structures therefor

- G06F16/50—Information retrieval; Database structures therefor; File system structures therefor of still image data

- G06F16/58—Retrieval characterised by using metadata, e.g. metadata not derived from the content or metadata generated manually

- G06F16/583—Retrieval characterised by using metadata, e.g. metadata not derived from the content or metadata generated manually using metadata automatically derived from the content

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/74—Image or video pattern matching; Proximity measures in feature spaces

- G06V10/761—Proximity, similarity or dissimilarity measures

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/77—Processing image or video features in feature spaces; using data integration or data reduction, e.g. principal component analysis [PCA] or independent component analysis [ICA] or self-organising maps [SOM]; Blind source separation

- G06V10/774—Generating sets of training patterns; Bootstrap methods, e.g. bagging or boosting

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/82—Arrangements for image or video recognition or understanding using pattern recognition or machine learning using neural networks

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y02—TECHNOLOGIES OR APPLICATIONS FOR MITIGATION OR ADAPTATION AGAINST CLIMATE CHANGE

- Y02D—CLIMATE CHANGE MITIGATION TECHNOLOGIES IN INFORMATION AND COMMUNICATION TECHNOLOGIES [ICT], I.E. INFORMATION AND COMMUNICATION TECHNOLOGIES AIMING AT THE REDUCTION OF THEIR OWN ENERGY USE

- Y02D10/00—Energy efficient computing, e.g. low power processors, power management or thermal management

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Evolutionary Computation (AREA)

- Databases & Information Systems (AREA)

- Health & Medical Sciences (AREA)

- Artificial Intelligence (AREA)

- Computing Systems (AREA)

- General Health & Medical Sciences (AREA)

- Software Systems (AREA)

- General Engineering & Computer Science (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Multimedia (AREA)

- Data Mining & Analysis (AREA)

- Medical Informatics (AREA)

- Library & Information Science (AREA)

- Life Sciences & Earth Sciences (AREA)

- Biomedical Technology (AREA)

- Biophysics (AREA)

- Computational Linguistics (AREA)

- Molecular Biology (AREA)

- Mathematical Physics (AREA)

- Information Retrieval, Db Structures And Fs Structures Therefor (AREA)

Abstract

The invention discloses a data processing method, device, computer equipment and storage medium. The method includes: obtaining the desired image style and desired content description that a user gives for a desired image; generating an image based on the desired content description and the desired image style to obtain an initial generated image, and performing semantic extraction on the desired content description to obtain key image information; performing image content recognition on the initial generated image to obtain content recognition data, and performing style recognition on the initial generated image to obtain a recognized image style; and, when the recognized image style is consistent with the desired image style and the content recognition data matches the key image information, outputting the initial generated image as the target retrieval image corresponding to the desired image. The invention lets users retrieve more satisfactory image data, which improves the image retrieval effect, and also makes image data acquisition more flexible.

Description

Technical field

The invention relates to the technical field of artificial intelligence, and in particular to a data processing method, device, computer equipment and storage medium.

Background

In recent years, with the rapid development of the Internet, quickly finding the information users need has become a key problem in the use and management of big data, which has given rise to artificial intelligence systems built on Internet big data. In such systems, people usually find useful information through retrieval, for example text retrieval and image retrieval.

Taking image retrieval as an example, the retrieval methods in common use are retrieval based on text keywords and retrieval based on an input image. In keyword-based retrieval, the user enters query text and the system matches it against the text labels of the image data to obtain the image retrieval result. In image-based retrieval, the user inputs a reference image and the system computes the similarity between the reference image and the image data to obtain the image retrieval result. However, both methods can only provide users with image data that already exists in the database, and both require extensive manual annotation of, or computation over, the image data set. This limits the flexibility of image data acquisition and makes it difficult to obtain satisfactory results, so the image retrieval effect is poor.

Summary of the invention

The invention provides a data processing method, device, computer equipment and storage medium to solve the problems that existing image retrieval can only provide users with image data that already exists, that image data acquisition is inflexible, and that the image retrieval effect is poor.

A data processing method is provided, including:

obtaining image description information that a user gives for a desired image, the image description information including a desired image style and a desired content description;

generating an image based on the desired content description and the desired image style to obtain an initial generated image, and performing semantic extraction on the desired content description to obtain the key image information corresponding to the desired content description;

performing image content recognition on the initial generated image to obtain content recognition data, and performing style recognition on the initial generated image to obtain a recognized image style;

determining whether the recognized image style is consistent with the desired image style, and determining whether the content recognition data matches the key image information;

if the recognized image style is consistent with the desired image style and the content recognition data matches the key image information, outputting the initial generated image as the target retrieval image corresponding to the desired image.
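The steps above amount to a generate-then-verify loop. The following is a minimal Python sketch of that control flow only. The callables `generate`, `extract_key_info`, `recognize_content`, `recognize_style` and `content_matches` are hypothetical placeholders for the models the method relies on; none of these names come from the patent, and comparing styles with `==` is an assumed stand-in for the consistency check.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class ImageDescription:
    """User input: desired style plus a free-text content description."""
    style: str
    content: str


def retrieve_by_generation(
    desc: ImageDescription,
    generate: Callable[[str, str], Any],          # hypothetical image generator
    extract_key_info: Callable[[str], Any],       # hypothetical semantic extractor
    recognize_content: Callable[[Any], Any],      # hypothetical content recognizer
    recognize_style: Callable[[Any], str],        # hypothetical style classifier
    content_matches: Callable[[Any, Any], bool],  # hypothetical matcher
) -> Optional[Any]:
    """Return the generated image only if it passes both checks, else None."""
    image = generate(desc.content, desc.style)
    key_info = extract_key_info(desc.content)
    recognized = recognize_content(image)
    style = recognize_style(image)
    # Assumed consistency check: exact equality of style labels.
    if style == desc.style and content_matches(recognized, key_info):
        return image
    return None
```

Passing the models in as callables keeps the sketch independent of any particular generator or recognizer.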

Optionally, the key image information includes multiple desired elements of the desired image, and determining whether the content recognition data matches the key image information includes:

performing constituent element recognition on the content recognition data to obtain multiple constituent elements of the initial generated image;

matching the multiple desired elements against the multiple constituent elements one by one to obtain a matching result;

if the matching result is a complete match, determining that the content recognition data matches the key image information;

if the matching result is an incomplete match, determining that the content recognition data does not match the key image information.
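One way to read the one-by-one element check is as multiset equality over element labels. The sketch below assumes that elements are compared by label string and that any leftover element on either side makes the match incomplete; the patent does not say how two elements are compared, so both points are assumptions, as is the function name.

```python
from collections import Counter
from typing import Iterable


def elements_fully_match(expected: Iterable[str], recognized: Iterable[str]) -> bool:
    """True when the labels pair up one-to-one with nothing left over on either side."""
    # Counter equality compares labels together with their multiplicities.
    return Counter(expected) == Counter(recognized)
```

For example, `elements_fully_match(["cat", "sun"], ["sun", "cat"])` holds regardless of order, while a missing or duplicated element yields an incomplete match.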

Optionally, the key image information also includes the position and color of each desired element, and matching the multiple desired elements against the multiple constituent elements one by one to obtain a matching result includes:

determining whether the multiple constituent elements can be matched one-to-one with the multiple desired elements;

if the multiple constituent elements can be matched one-to-one with the multiple desired elements, determining the position and color of each constituent element based on the content recognition data;

determining whether the position and color of each constituent element match the position and color of the corresponding desired element;

if the position and color of each constituent element match the position and color of the corresponding desired element, determining that the matching result is a complete match;

if the multiple constituent elements cannot be matched one-to-one with the multiple desired elements, or the position or color of any constituent element does not match the position or color of the corresponding desired element, determining that the matching result is an incomplete match.

Optionally, determining whether the position and color of each constituent element match the position and color of the corresponding desired element includes:

calculating the distance between the position of each constituent element and the position of the corresponding desired element to obtain the position distance of each constituent element;

comparing the color of each constituent element with the color of the corresponding desired element to obtain the color difference of each constituent element;

determining whether the position distance of each constituent element is less than a preset distance, and determining whether the color difference of each constituent element is less than a preset color difference value;

if the position distance of every constituent element is less than the preset distance and the color difference of every constituent element is less than the preset color difference value, determining that the position and color of each constituent element match the position and color of the corresponding desired element.
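The two threshold checks can be sketched as follows. This is an illustration under stated assumptions rather than the patent's implementation: positions are taken to be normalized (x, y) centres, colors are RGB triples, both differences are Euclidean distances, and the `Element` type, the function name and the default thresholds are all hypothetical. A perceptual color metric could replace the RGB distance without changing the structure.

```python
import math
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class Element:
    label: str
    position: Tuple[float, float]  # assumed: normalized (x, y) centre
    color: Tuple[int, int, int]    # assumed: RGB, 0-255 per channel


def position_and_color_match(
    recognized: Sequence[Element],
    expected: Sequence[Element],
    max_distance: float = 0.1,     # hypothetical preset distance
    max_color_diff: float = 60.0,  # hypothetical preset color difference
) -> bool:
    """Pairs are assumed to be aligned already: recognized[i] corresponds to expected[i]."""
    if len(recognized) != len(expected):
        return False
    for got, want in zip(recognized, expected):
        distance = math.dist(got.position, want.position)
        color_diff = math.dist(got.color, want.color)
        # Both values must be strictly below their presets for every element.
        if distance >= max_distance or color_diff >= max_color_diff:
            return False
    return True
```

A single element that fails either threshold makes the whole result a mismatch, which mirrors the "every constituent element" wording of the condition.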

Optionally, the key image information also includes a desired background description, and after the multiple desired elements are matched against the multiple constituent elements one by one to obtain the matching result, the method further includes:

if the matching result is a complete match, performing background recognition on the content recognition data to obtain an image background description of the initial generated image;

determining the background similarity between the desired background description and the image background description;

if the background similarity is greater than or equal to a preset background similarity, determining that the content recognition data matches the key image information;

if the background similarity is less than the preset background similarity, determining that the content recognition data does not match the key image information.

Optionally, the desired background description includes a desired background color and a desired background pattern, the image background description includes a recognized background color and a recognized background pattern, and determining the background similarity between the desired background description and the image background description includes:

calculating the background color similarity between the desired background color and the recognized background color, and calculating the pattern similarity between the desired background pattern and the recognized background pattern;

calculating the background similarity based on the background color similarity and the pattern similarity.
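The text does not give a formula for combining the two scores. The sketch below assumes a weighted average, an RGB-distance-based color similarity, and a pattern similarity supplied by some other component as a number in [0, 1]. The function names, the weight and the preset of 0.8 are hypothetical.

```python
import math
from typing import Tuple

RGB = Tuple[int, int, int]
MAX_RGB_DISTANCE = math.dist((0, 0, 0), (255, 255, 255))


def color_similarity(a: RGB, b: RGB) -> float:
    """Map Euclidean RGB distance to [0, 1], where 1.0 means identical colors."""
    return 1.0 - math.dist(a, b) / MAX_RGB_DISTANCE


def background_similarity(color_sim: float, pattern_sim: float, color_weight: float = 0.5) -> float:
    """Weighted average of the two scores; the weight is an assumed parameter."""
    return color_weight * color_sim + (1.0 - color_weight) * pattern_sim


def background_matches(similarity: float, preset: float = 0.8) -> bool:
    """Match when the score is greater than or equal to the preset similarity."""
    return similarity >= preset
```

The `>=` comparison follows the "greater than or equal to" condition stated for the preset background similarity.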

Optionally, generating an image based on the desired content description and the desired image style to obtain the initial generated image includes:

obtaining a target image generation model produced by training a generative adversarial network through deep learning on a model sample data set, where each historical image sample in the model sample data set has a corresponding sample content description vector and sample style vector, and the generative adversarial network is trained on the sample content description vectors and sample style vectors of multiple historical image samples to obtain the target image generation model;

performing semantic feature extraction on the desired content description to obtain an image description vector, and performing semantic feature extraction on the desired image style to obtain a desired image style vector;

inputting the desired image style vector and the image description vector into the target image generation model for image generation, and taking the image output by the target image generation model as the initial generated image.

A data processing device is provided, including:

an acquisition module, configured to obtain image description information that a user gives for a desired image, the image description information including a desired image style and a desired content description;

a generation module, configured to generate an image based on the desired content description and the desired image style to obtain an initial generated image, and to perform semantic extraction on the desired content description to obtain the key image information corresponding to the desired content description;

a recognition module, configured to perform image content recognition on the initial generated image to obtain content recognition data, and to perform style recognition on the initial generated image to obtain a recognized image style;

a determination module, configured to determine whether the recognized image style is consistent with the desired image style, and to determine whether the content recognition data matches the key image information;

an output module, configured to output the initial generated image as the target retrieval image corresponding to the desired image if the recognized image style is consistent with the desired image style and the content recognition data matches the key image information.

A computer device is provided, including a memory, a processor, and a computer program stored in the memory and executable on the processor, characterized in that the processor implements the steps of the above data processing method when executing the computer program.

A computer-readable storage medium is provided. The computer-readable storage medium stores a computer program, and the steps of the above data processing method are implemented when the computer program is executed by a processor.

In one solution provided by the above data processing method, device, computer equipment and storage medium, image description information that a user gives for a desired image is obtained, the image description information including a desired image style and a desired content description. An image is generated based on the desired content description and the desired image style to obtain an initial generated image, and semantic extraction is performed on the desired content description to obtain the corresponding key image information. Image content recognition is then performed on the initial generated image to obtain content recognition data, and style recognition is performed on it to obtain a recognized image style. It is then determined whether the recognized image style is consistent with the desired image style and whether the content recognition data matches the key image information. If both conditions hold, the initial generated image is output as the target retrieval image corresponding to the desired image. In the invention, when a user searches for a desired image, an initial generated image is produced from the desired image style and desired content description the user entered, and it is output as an image retrieval result once it is confirmed to conform to the user's image description information. This lets users retrieve more satisfactory image data, which improves the image retrieval effect, and also makes image data acquisition more flexible.

Description of drawings

To illustrate the technical solutions of the embodiments of the invention more clearly, the drawings used in the description of the embodiments are briefly introduced below. The drawings described below are only some embodiments of the invention, and a person of ordinary skill in the art can obtain other drawings from them without creative effort.

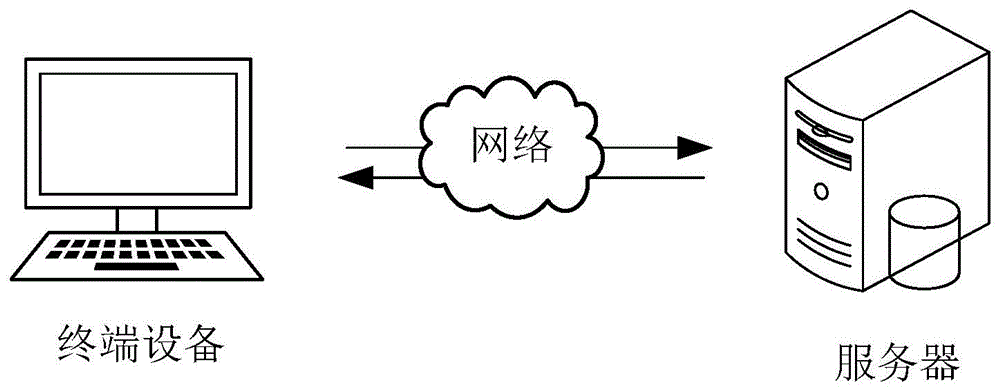

Fig. 1 is a schematic diagram of an application environment of the data processing method in an embodiment of the invention;

Fig. 2 is a schematic flowchart of the data processing method in an embodiment of the invention;

Fig. 3 is a schematic flowchart of an implementation of step S20 in Fig. 2;

Fig. 4 is a schematic flowchart of an implementation of step S40 in Fig. 2;

Fig. 5 is a schematic flowchart of another implementation of step S40 in Fig. 2;

Fig. 6 is a schematic structural diagram of the data processing device in an embodiment of the invention;

Fig. 7 is a schematic structural diagram of the computer device in an embodiment of the invention.

Detailed description

The technical solutions in the embodiments of the invention are described clearly and completely below with reference to the drawings. The described embodiments are some, not all, of the embodiments of the invention. All other embodiments that a person of ordinary skill in the art obtains from these embodiments without creative effort fall within the scope of protection of the invention.

The data processing method provided by the embodiments of the invention can be applied in the application scenario shown in Fig. 1, in which a server and a terminal device communicate over a network. The server may be the back-end server of a preset editing platform, that is, a platform that provides design functions for visual digital products such as H5 pages or banners. When a user designing a visual digital product needs to retrieve an image, the user can enter image description information for the desired image through the terminal device. The image description information includes the desired image style and the desired content description of the desired image, so that the server can perform image generation and retrieval tasks based on it, generate a suitable image for the user, and return the generated image together with the matched images to the user's terminal device as the image retrieval result, from which the user can choose.

After obtaining the image description information, the server generates an image based on the desired content description and the desired image style to obtain an initial generated image, and at the same time performs semantic extraction on the desired content description to obtain the corresponding key image information. The server then performs image content recognition on the initial generated image to obtain content recognition data, and performs style recognition on it to obtain a recognized image style. It determines whether the recognized image style is consistent with the desired image style and whether the content recognition data matches the key image information. If both conditions hold, the initial generated image is output as the target retrieval image corresponding to the desired image. In this embodiment, when a user searches for a desired image, an initial generated image is produced from the desired image style and desired content description the user entered, and it is output as an image retrieval result once it is confirmed to conform to the user's image description information. This lets users retrieve more satisfactory image data, which improves the image retrieval effect, and also makes image data acquisition more flexible.

The terminal device may be, but is not limited to, a personal computer, notebook computer, smartphone, tablet or similar device. The server may be implemented as a standalone server or as a server cluster composed of multiple servers.

In one embodiment, as shown in Fig. 2, a data processing method is provided. Taking its application to the server in Fig. 1 as an example, the method includes the following steps:

S10: Obtain image description information that a user gives for a desired image, the image description information including a desired image style and a desired content description.

When a user needs to search for an image, the user enters keywords for the desired image (as text or voice) through the terminal device, the image search runs on this description, and the user then picks a suitable image from the retrieval results. In traditional retrieval, the results returned by the server are usually images that already exist in the database. This limits the flexibility of image data acquisition, yields unsatisfactory retrieval results, and makes it hard to find an image the user is satisfied with.

To solve this problem, this embodiment handles a user's image retrieval need as follows. For example, when a user editing an H5 page, poster or event page on the preset editing platform needs to add an image, or replace an existing one with an image they are satisfied with, the user can search for images with the platform's existing search engine. During the search, the user enters the image description information for the desired image into the platform's search engine through the terminal device. The platform's server obtains this image description information, which includes the user's desired image style and desired content description, and generates an image from it. At the same time, the server performs database image matching in the traditional way: it extracts the key fields from the image description information and matches them against the labels of the existing (historical) images in the database to obtain the matched images. The generated image and the matched images are later returned to the user together as the image retrieval result, which makes image data acquisition more flexible and improves the image retrieval effect.
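The traditional branch and the merge step can be sketched as below. This is an illustrative reading only: the patent does not define how key fields are matched against labels or how results are ordered, so the overlap-count ranking, the "at least one shared keyword" rule, placing the generated image first, and the names `match_by_tags` and `build_results` are all assumptions.

```python
from typing import Dict, List, Optional, Set


def match_by_tags(keywords: Set[str], image_tags: Dict[str, Set[str]]) -> List[str]:
    """Return ids of stored images whose tags share at least one keyword, best overlap first."""
    scored = [(len(keywords & tags), image_id) for image_id, tags in image_tags.items()]
    # Sort by descending overlap, then by id so the order is deterministic.
    ranked = sorted(scored, key=lambda s: (-s[0], s[1]))
    return [image_id for overlap, image_id in ranked if overlap > 0]


def build_results(generated_id: Optional[str], matched_ids: List[str]) -> List[str]:
    """Put the verified generated image (if any) ahead of the database matches."""
    return ([generated_id] if generated_id is not None else []) + matched_ids
```

When the generated image fails verification, `generated_id` would be `None` and the user still receives the database matches.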

In this embodiment, the image description information is the user's description of the image to be generated (the desired image). It includes the user's desired image style and desired content description for the desired image. The desired content description can cover the image elements (the constituent elements of the image), the image background (which can include background graphics, background texture, background color and so on), and the color and position of the image elements. The image description information is natural language information and can be either text or speech.

S20: Perform image generation based on the desired content description and the desired image style to obtain an initial generated image, and perform semantic extraction on the desired content description to obtain key image information corresponding to the desired content description.

After obtaining the user's image description information for the desired image, that is, the desired image style and the desired content description, the server performs image generation based on both to obtain an initial generated image. This takes into account not only the image content but also the influence of the image style on the generation result, so that the initial generated image satisfies the user's requirements as far as possible. In this embodiment, a pre-trained target image generation model may be used to perform the image generation task based on the desired content description and the desired image style, thereby obtaining the initial generated image. For example, the target image generation model may be a model based on a variational autoencoder: the desired content description is encoded into a feature vector, the desired image style is encoded into a feature vector, and the target image generation model then performs pixel mapping in a high-dimensional space on the two feature vectors to obtain the initial generated image. In other embodiments, the target image generation model may also be another model that generates images from text, which is not described further here.

At the same time, the server performs semantic extraction on the desired content description to obtain the corresponding key image information. The key image information includes the constituent elements of the desired image, the image background, and the color and position of the constituent elements. Richer key image information captures more detail about the desired image, so that the model can later work at a finer image granularity and generate better images.

Performing semantic extraction on the desired content description to obtain the corresponding key image information includes: obtaining the editing data from the user's editing interface on the preset editing platform, and obtaining the user profile and the user's historical behavior data on the preset editing platform; and, while the server performs semantic extraction on the desired content description, performing semantic association based on a knowledge graph, the user's editing data on the editing interface, the user profile, and the user's historical behavior data to obtain the key image information corresponding to the desired content description. That is, during semantic extraction, if the server detects that the user has editing data on the preset editing platform (such as a poster or H5 work currently being edited), it can obtain that editing data and then perform semantic association based on the knowledge graph, the editing data, the user profile, and the historical behavior data, thereby obtaining more complete key image information.

Semantic association based on the knowledge graph intelligently expands the desired content description from the graph perspective, which enriches the user's intent, increases the accuracy of the desired content description, and in turn improves the accuracy of the key image information. Adding the user profile and historical behavior data on top of the knowledge graph further expands the desired content description and brings the recognized user intent closer to the user's behavioral preferences, which improves the accuracy of the key image information and thus the image generation effect. Adding the user's editing data on the editing interface on top of the knowledge graph not only further expands the desired content description but also brings the recognized user intent into line with the user's current editing needs, so that the subsequently generated image better meets the user's needs.

S30: Perform image content recognition on the initial generated image to obtain content recognition data, and perform style recognition on the initial generated image to obtain an image recognition style.

After performing image generation based on the desired content description and the desired image style to obtain the initial generated image, the server performs image content recognition on the initial generated image to obtain content recognition data; the server also performs style recognition on the initial generated image to obtain the image recognition style.

In this embodiment, a pre-trained style recognition model may be used to perform style recognition on the initial generated image, so that its image recognition style is obtained quickly while recognition accuracy is maintained. Likewise, a pre-trained content recognition model may be used to recognize image content such as constituent elements, element colors, element positions, and background in the initial generated image, so that accurate and diverse content recognition data is obtained quickly.

S40: Determine whether the image recognition style is consistent with the desired image style, and determine whether the content recognition data matches the key image information.

After the content recognition data and the image recognition style of the initial generated image have been obtained, the server determines whether the image recognition style is consistent with the desired image style and whether the content recognition data matches the key image information. This confirms whether the initial generated image satisfies the image description information input by the user, that is, whether it meets the user's description requirements.

S50: If the image recognition style is consistent with the desired image style and the content recognition data matches the key image information, output the initial generated image as the target retrieval image corresponding to the desired image.

If, after these two determinations, the image recognition style is consistent with the desired image style and the content recognition data matches the key image information, then the image style of the initial generated image is the style required by the image description information input by the user, and its image content is the content required by that information. In other words, the initial generated image meets the user's description requirements. The initial generated image is then output as the target retrieval image corresponding to the desired image, so that the target retrieval image satisfies the user's description requirements and is closer to the user's desired image, which improves the image generation effect of the target retrieval image.

At the same time, the server also performs database image matching in the conventional manner: it extracts keywords from the image description information and matches them against the tags of existing images (historical images) in the database to obtain matched images. After outputting the initial generated image as the target retrieval image corresponding to the desired image, the server returns it to the user together with the images matched in the database as the image retrieval result, which improves the flexibility of image data acquisition and the image retrieval effect.
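The keyword-to-tag matching and the merging of results described above can be sketched as follows. This is only an illustration under assumptions: `StoredImage`, `match_by_tags`, and `build_retrieval_result` are hypothetical names, and ranking by the number of shared tags is an assumed choice that the description does not specify.

```python
from dataclasses import dataclass, field


@dataclass
class StoredImage:
    """A historical image in the database together with its tags (hypothetical type)."""
    image_id: str
    tags: set[str] = field(default_factory=set)


def match_by_tags(keywords: set[str], database: list[StoredImage]) -> list[StoredImage]:
    """Return stored images sharing at least one tag with the keywords.

    Ordering by overlap count is an assumption made for this sketch.
    """
    scored = [(len(keywords & img.tags), img) for img in database]
    hits = [(score, img) for score, img in scored if score > 0]
    # sort() is stable, so images with equal scores keep their database order.
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [img for _, img in hits]


def build_retrieval_result(generated_id: str, matched: list[StoredImage]) -> list[str]:
    """Return the generated image first, followed by the matched historical images."""
    return [generated_id] + [img.image_id for img in matched]


database = [
    StoredImage("a", {"cat", "red"}),
    StoredImage("b", {"dog"}),
    StoredImage("c", {"cat", "red", "poster"}),
]
matched = match_by_tags({"cat", "red", "poster"}, database)
result = build_retrieval_result("gen-1", matched)
```

Placing the generated image first is likewise an assumption; the description only states that both kinds of image are returned together.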

If the image recognition style of the initial generated image is determined to be inconsistent with the desired image style, the image style of the initial generated image is not the style required by the image description information input by the user, and the initial generated image does not meet the user's description requirements. The initial generated image is then of poor quality, and the server regenerates a new initial generated image from the image description information input by the user, repeating until a new initial generated image meets the user's description requirements, at which point that image is output as the target retrieval image corresponding to the desired image.

Alternatively, if the content recognition data of the initial generated image is determined not to match the key image information, the image content of the initial generated image is not the content required by the image description information input by the user. Examples include mismatched image elements (an element in the desired content description is missing, or an element not in the desired content description is present), a mismatched position or color for the same element, or a mismatched image background. The initial generated image is again of poor quality, and the server regenerates a new initial generated image in the same way until one meets the user's description requirements, and outputs it as the target retrieval image corresponding to the desired image. Regenerating the image whenever the image recognition style is inconsistent with the desired image style, or the content recognition data does not match the key image information, safeguards the image generation effect.
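The regenerate-until-accepted behaviour can be sketched as a simple loop. This is a minimal sketch, not the claimed implementation: `generate_until_accepted`, `generate`, and `is_acceptable` are hypothetical names, and the `max_attempts` cap is an added assumption (the description only says generation repeats until the requirements are met).

```python
from typing import Callable, Optional, TypeVar

Image = TypeVar("Image")


def generate_until_accepted(
    generate: Callable[[], Image],
    is_acceptable: Callable[[Image], bool],
    max_attempts: int = 5,
) -> Optional[Image]:
    """Generate candidates until one passes the style and content checks.

    `generate` stands in for steps S20 (image generation) and `is_acceptable`
    for steps S30-S40 (recognition and comparison). Returns None if no
    candidate is accepted within `max_attempts` (an assumed safeguard).
    """
    for _ in range(max_attempts):
        candidate = generate()
        if is_acceptable(candidate):
            return candidate  # S50: output as the target retrieval image
    return None


# Toy usage: strings stand in for images; only the third candidate passes.
attempts = iter(["wrong-style", "missing-element", "ok"])
accepted = generate_until_accepted(lambda: next(attempts), lambda img: img == "ok")
rejected = generate_until_accepted(lambda: "bad", lambda img: False, max_attempts=3)
```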

In this embodiment, when the user performs image retrieval, the user's image description information for the desired image is obtained, the image description information including a desired image style and a desired content description. Image generation is performed based on the desired content description and the desired image style to obtain an initial generated image, and semantic extraction is performed on the desired content description to obtain the corresponding key image information. Image content recognition is then performed on the initial generated image to obtain content recognition data, and style recognition is performed on it to obtain an image recognition style. It is then determined whether the image recognition style is consistent with the desired image style and whether the content recognition data matches the key image information; if both conditions hold, the initial generated image is output as the target retrieval image corresponding to the desired image. In the present invention, when the user searches for a desired image, an initial generated image is generated from the desired image style and desired content description input by the user, and once it is determined to conform to the user's image description information, it is output as an image retrieval result. This not only lets the user retrieve more satisfactory image retrieval data and improves the image retrieval effect, but also improves the flexibility of image data acquisition.

In an embodiment, as shown in FIG. 3, step S20, that is, performing image generation based on the desired content description and the desired image style to obtain an initial generated image, specifically includes the following steps:

S21: Obtain a target image generation model obtained by performing deep learning on a generative adversarial network based on a model sample data set.

After obtaining the user's image description information for the desired image, the server also obtains a pre-trained target image generation model, so that the model can be called to generate an image based on the desired image style and the desired content description. The target image generation model is obtained by performing deep learning on a generative adversarial network based on a model sample data set. The model sample data set includes a plurality of historical image samples, each of which has a corresponding sample content description vector and sample style vector.

During model training, the generative adversarial network undergoes deep learning training based on the sample content description vectors and sample style vectors of the plurality of historical image samples, yielding the target image generation model. The generative adversarial network may be a general neural network structure comprising a generation module and a discrimination module. During training, the sample content description vector and sample style vector of a historical image sample are used as the input of the generation module, so that the generation module generates an image from them; the discrimination module judges the authenticity of the image generated by the generation module, and a loss function is computed based on that historical image sample. Once the loss function converges, the generation module is output as the target image generation model.

S22: Perform semantic feature extraction on the desired content description to obtain an image description vector, and perform semantic feature extraction on the desired image style to obtain a desired image style vector.

After the pre-trained target image generation model has been obtained, semantic feature extraction is performed on the desired content description to obtain an image description vector, and on the desired image style to obtain a desired image style vector.

When performing semantic feature extraction on the desired content description and/or the desired image style, semantic association may first be performed based on the knowledge graph, the user's editing data on the editing interface, the user profile, and the user's historical behavior data, to obtain a semantically expanded desired content description and/or desired image style. Feature encoding is then performed on the expanded desired content description and/or desired image style to obtain the image description vector and the desired image style vector, respectively.

Semantic association based on the knowledge graph intelligently expands the desired content description from the graph perspective, which enriches the user's intent, increases the diversity of the desired content description, and in turn improves the accuracy of the image description vector and the desired image style vector, so that subsequent image generation is improved. Adding the user profile and historical behavior data on top of the knowledge graph further expands the desired content description and brings the recognized user intent closer to the user's behavioral preferences, which further improves the accuracy of the corresponding vectors and thus the image generation effect. Adding the user's editing data on the editing interface on top of the knowledge graph not only further expands the desired content description but also brings the recognized user intent into line with the user's current editing needs, so that the subsequently generated image better meets the user's needs.

S23: Input the desired image style vector and the image description vector into the target image generation model for image generation, and take the image output by the target image generation model as the initial generated image.

After the image description vector and the desired image style vector have been obtained, they are input into the target image generation model for image generation, and the image output by the target image generation model is taken as the initial generated image.

In this embodiment, a target image generation model obtained by performing deep learning on a generative adversarial network based on a model sample data set is obtained; semantic feature extraction is performed on the desired content description to obtain an image description vector and on the desired image style to obtain a desired image style vector; and the desired image style vector and the image description vector are input into the target image generation model, whose output image is taken as the initial generated image. This specifies the concrete steps of generating the initial generated image from the desired content description and the desired image style. A high-precision target image generation model is trained with a generative adversarial network on the sample content description vectors and sample style vectors of a plurality of historical image samples, and the model is then called directly for image generation, which is simple and fast and yields high image quality.

In a preferred embodiment, the sample content description vector of a historical image sample includes the semantic vectors of the sample's constituent elements and the position vectors and color vectors of those elements. The generative adversarial network includes a generation module, a style transfer network, and a discrimination module. The style transfer network and the discrimination module assist the generation module in generating images, reducing the gap between the generated image and the historical image sample in image style and in the position and color of image elements.

During model training, the semantic vectors, position vectors, and color vectors of the constituent elements of a historical image sample are input into the generation module to generate an image. The style transfer network is then introduced to render the image based on the sample style vector, so that the image produced by the generation module carries image style information. Finally, the discrimination module measures the difference between the generated image and the historical image sample, which guides the generation module toward images that are closer to the historical image sample in image style, constituent elements, position, and color. After the loss function converges, the converged generation module and style transfer network are output as the target image generation model. This structure enables the target image generation model to mine image information at a fine granularity when generating images, so that the generated images perform well in both visual quality and image granularity.

Correspondingly, when performing semantic feature extraction on the desired content description to obtain the image description vector, the semantic vector (text vector) of each desired element of the desired image, together with the position vector and color vector of each desired element, is extracted from the desired content description. That is, the image description vector may include the semantic vectors, position vectors, and color vectors of the desired elements. Once the target image generation model has been trained, the desired image style vector and the semantic vectors, position vectors, and color vectors of the desired elements are input into the target image generation model for image generation, and the image output by the model is taken as the initial generated image corresponding to the desired image. The resulting image performs well in both visual quality and image granularity, which improves the generation effect of the initial generated image.

In an embodiment, the key image information includes a plurality of desired elements of the desired image. As shown in FIG. 4, step S40, that is, determining whether the content recognition data matches the key image information, specifically includes the following steps:

S41: Perform constituent element recognition on the content recognition data to obtain a plurality of constituent elements of the initial generated image;

S42: Match the plurality of desired elements one by one against the plurality of constituent elements to obtain a matching result;

S43: If the element matching result is a complete match, determine that the content recognition data matches the key image information;

S44: If the element matching result is an incomplete match, determine that the content recognition data does not match the key image information.

In this embodiment, the key image information includes a plurality of desired elements of the desired image. After image content recognition has been performed on the initial generated image to obtain the content recognition data, constituent element recognition is performed on the content recognition data to obtain a plurality of constituent elements of the initial generated image. The plurality of desired elements are then matched one by one against the plurality of constituent elements to obtain a matching result. If the element matching result is a complete match, the set of constituent elements of the initial generated image coincides exactly with the set of desired elements of the desired image, the constituent elements of the initial generated image agree with the user's desired image, and the content recognition data is determined to match the key image information. If the element matching result is an incomplete match, the two sets do not coincide exactly, the constituent elements of the initial generated image do not agree with the user's desired image, and the content recognition data is determined not to match the key image information.

For example, suppose the desired elements are element A, element B, and element C, while the constituent elements of the initial generated image are element A, element B, and element D. The set of constituent elements of the initial generated image does not coincide exactly with the set of desired elements of the desired image, so the content recognition data is determined not to match the key image information. If the constituent elements of the initial generated image are also element A, element B, and element C, the content recognition data is determined to match the key image information.
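The example above amounts to a set-equality test. The following minimal sketch, with the hypothetical helper names `elements_match` and `element_differences`, reproduces it and also reports which elements are missing or extra; representing elements as plain strings is an assumption made for illustration.

```python
def elements_match(desired: set[str], recognized: set[str]) -> bool:
    """Complete match (S43) only when both element sets coincide exactly."""
    return desired == recognized


def element_differences(desired: set[str], recognized: set[str]) -> dict[str, set[str]]:
    """Report desired elements that are missing and recognized elements that are extra."""
    return {"missing": desired - recognized, "extra": recognized - desired}


desired = {"A", "B", "C"}
mismatch = elements_match(desired, {"A", "B", "D"})   # incomplete match (S44)
match = elements_match(desired, {"A", "B", "C"})      # complete match (S43)
diff = element_differences(desired, {"A", "B", "D"})
```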

In this embodiment, constituent element recognition is performed on the content recognition data to obtain a plurality of constituent elements of the initial generated image, and the plurality of desired elements are matched one by one against them to obtain a matching result. If the element matching result is a complete match, the content recognition data is determined to match the key image information; if it is an incomplete match, the content recognition data is determined not to match the key image information. This refines the concrete steps of determining whether the content recognition data matches the key image information. Matching the constituent elements of the images one by one ensures the accuracy of the matching result and provides a basis for judgment in subsequent data processing.

In other embodiments, the key image information may further include one or more of the position and color of each desired element and the background of the desired image. Correspondingly, the content recognition data of the initial generated image also includes one or more of the position and color of each constituent element and the background of the initial generated image. When the initial generated image is matched against the user's desired image, not only the image elements themselves but also this additional information is matched, which refines the matching granularity and thereby increases the accuracy of the matching result.

In an embodiment, take as an example the case where the key image information further includes the position and color of each desired element. Step S42, that is, matching the plurality of desired elements one by one against the plurality of constituent elements to obtain a matching result, specifically includes the following steps:

S421: Determine whether the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence;

S422: If the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence, determine the position and color of each constituent element based on the content recognition data;

S423: Determine whether the position and color of each constituent element match the position and color of the corresponding expected element;

S424: If the position and color of every constituent element match the position and color of the corresponding expected element, determine that the matching result is a complete match;

S425: If the position or color of any constituent element does not match the position or color of the corresponding expected element, determine that the matching result is an incomplete match.

After constituent-element recognition is performed on the content recognition data and the multiple constituent elements of the initially generated image are obtained, it is determined whether the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence. If they cannot, that is, if the set of constituent elements of the initially generated image does not completely coincide with the set of expected elements of the expected image, the constituent elements of the initially generated image do not conform to the elements expected by the user, and it is determined that the content recognition data does not match the key image information.

If the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence, that is, if the set of constituent elements of the initially generated image completely coincides with the set of expected elements of the expected image, the constituent elements of the initially generated image conform to the elements expected by the user, and the other information must then be checked. Because the key image information in this embodiment includes the multiple expected elements together with the position and color of each expected element, the position and color of each constituent element must also be determined based on the content recognition data. It is then determined whether the position and color of each constituent element match the position and color of the corresponding expected element. If the position and color of every constituent element match those of the corresponding expected element, the matching result is determined to be a complete match; if the position or color of any constituent element does not match that of the corresponding expected element, the matching result is determined to be an incomplete match.
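The flow of steps S421 to S425 can be sketched as follows. This is a minimal illustration rather than the claimed implementation: the `Element` record, the use of element names as dictionary keys (which assumes each element name is unique within an image), the `"complete"` / `"incomplete"` return strings, and the pluggable `attrs_match` predicate are all assumptions introduced for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class Element:
    """Hypothetical record for one recognized or expected element."""
    position: Tuple[float, float]  # assumed (x, y) coordinates
    color: Tuple[int, int, int]    # assumed 8-bit RGB


def exact_match(generated: Element, expected: Element) -> bool:
    # Simplest possible predicate: position and color must be identical.
    return generated == expected


def match_result(
    expected: Dict[str, Element],
    generated: Dict[str, Element],
    attrs_match: Callable[[Element, Element], bool] = exact_match,
) -> str:
    # S421: the two element sets must coincide completely.
    if set(expected) != set(generated):
        return "incomplete"
    # S422/S423: compare position and color of each pair of elements.
    for name, exp in expected.items():
        if not attrs_match(generated[name], exp):
            return "incomplete"  # S425: any mismatch fails the match
    return "complete"            # S424: every element matched
```

Passing a different `attrs_match` predicate (for example one based on distance and color-difference thresholds) changes how strictly position and color are compared without changing the overall flow.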

For example, suppose the multiple expected elements are element A and element B, and the multiple constituent elements of the initially generated image are also element A and element B. If the color and position of element A in the expected image are the same as the color and position of element A in the initially generated image, and the color and position of element B in the expected image are the same as the color and position of element B in the initially generated image, the matching result is determined to be a complete match. If either the color or the position of element A (or B) in the expected image differs from that of element A (or B) in the initially generated image, for instance when the color and position of element A are the same in both images but the color or position of element B differs, the matching result is determined to be an incomplete match.

In this embodiment, the key image information further includes the position and color of each expected element. It is determined whether the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence; if they can, the position and color of each constituent element are determined based on the content recognition data, and it is determined whether the position and color of each constituent element match the position and color of the corresponding expected element. If the position and color of every constituent element match those of the corresponding expected element, the matching result is determined to be a complete match. If the multiple constituent elements and the multiple expected elements cannot be matched in one-to-one correspondence, or the position or color of any constituent element does not match that of the corresponding expected element, the matching result is determined to be an incomplete match. This clarifies the specific steps of matching the multiple expected elements one by one with the multiple constituent elements to obtain the matching result. Element matching covers not only the elements themselves but also their color and position, which makes the matching finer-grained and thereby increases the accuracy of the matching result.

In addition, the matching result is determined to be a complete match only when the position and color of every constituent element both match the position and color of the corresponding expected element. This improves the precision of the matching result, which improves the accuracy of the target retrieval image output subsequently and, in turn, the retrieval experience of the user. In other embodiments, when the positions and colors of the multiple constituent elements in the initially generated image are matched against the positions and colors of the corresponding expected elements, the matching result may be determined to be a complete match as long as the proportion of successfully matched constituent elements is greater than a preset ratio (for example, 80%). This approach relaxes the requirement on image precision: provided that the initially generated image basically satisfies the user's description, target retrieval images that meet the requirement can be obtained quickly, which increases the number and diversity of target retrieval images and gives the user more images to choose from.
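The relaxed, ratio-based variant can be illustrated with a short sketch. The function name `ratio_match`, the list-of-booleans input, and the handling of an empty list are assumptions made for the example; only the "greater than a preset ratio (for example, 80%)" rule comes from the description.

```python
from typing import Sequence


def ratio_match(element_matched: Sequence[bool], min_ratio: float = 0.8) -> bool:
    """Return True when the share of matched elements exceeds min_ratio.

    element_matched holds one flag per constituent element, True if its
    position and color matched the corresponding expected element.
    """
    if not element_matched:
        # Assumption: with no elements to compare, report no match.
        return False
    matched_share = sum(element_matched) / len(element_matched)
    # Strictly greater than the preset ratio, as stated in the text.
    return matched_share > min_ratio
```

Note that with a strict comparison, four matches out of five (exactly 80%) does not pass a threshold of 80%; whether the boundary should be inclusive is a design choice the description leaves open.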

In an embodiment, step S423, that is, determining whether the position and color of each constituent element match the position and color of the corresponding expected element, specifically includes the following steps:

S4231: Calculate the distance between the position of each constituent element and the position of the corresponding expected element to obtain the position distance of each constituent element.

In this embodiment, the key image information includes the multiple expected elements and the position and color of each expected element. When constituent-element recognition is performed on the content recognition data, the multiple constituent elements of the initially generated image are obtained together with the position and color of each constituent element. After it is determined that the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence, the distance between the position of each constituent element and the position of the corresponding expected element is calculated to obtain the position distance of each constituent element. The larger the position distance, the greater the deviation between the position of the constituent element and the position of the corresponding expected element; the smaller the position distance, the smaller the deviation, meaning the two positions are close.

The position distance may be calculated with the Euclidean distance, which gives the shortest straight-line distance between two positions and is simple and intuitive. In other embodiments, other distance measures may be used, such as the cosine distance or the standardized Euclidean distance.
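As a small illustration of step S4231, the Euclidean position distance could be computed as below. Representing a position as an `(x, y)` coordinate pair and the function name `position_distance` are assumptions for the example; `math.dist` is the Python standard-library function (Python 3.8 and later) that returns the Euclidean distance between two points.

```python
import math
from typing import Sequence


def position_distance(generated_pos: Sequence[float],
                      expected_pos: Sequence[float]) -> float:
    """Euclidean (straight-line) distance between two positions."""
    # math.dist computes sqrt(sum((a - b) ** 2)) over the coordinates.
    return math.dist(generated_pos, expected_pos)
```

For instance, an element generated at `(3, 4)` when it was expected at `(0, 0)` has a position distance of 5.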

S4232: Calculate the similarity between the color of each constituent element and the color of the corresponding expected element, expressed as the color difference of each constituent element.

After it is determined that the multiple constituent elements and the multiple expected elements can be matched in one-to-one correspondence, the similarity between the color of each constituent element and the color of the corresponding expected element is also calculated to obtain the color difference of each constituent element. The color difference may be computed with a method based on three-dimensional space, typically as follows: convert the RGB color to a normalized HSV color, map the HSV color to a three-dimensional coordinate point, and then calculate the distance between the three-dimensional coordinate points of the two colors, taking that distance as the color difference between the two colors. The closer the two colors are, the closer the coordinate distance is to 0; conversely, the further apart the two colors are, the closer the coordinate distance is to 1. HSV (Hue, Saturation, Value) is a color space built on the intuitive properties of color, also known as the hexcone model; its color parameters are hue (H), saturation (S), and value (V, brightness).
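One possible reading of this color-difference computation is sketched below. The description does not specify how an HSV color is mapped to a three-dimensional point or how the distance is normalized, so the cone mapping `(s*v*cos(2πh), s*v*sin(2πh), v)` and the division by 2 (the largest possible distance inside that cone) are assumptions chosen so the result falls in the range 0 to 1. `colorsys.rgb_to_hsv` is the Python standard-library conversion and expects and returns values in the range 0 to 1.

```python
import colorsys
import math
from typing import Tuple

RGB = Tuple[int, int, int]  # assumed 8-bit channels, 0..255


def hsv_point(rgb: RGB) -> Tuple[float, float, float]:
    """Map an RGB color to a 3D point inside a unit HSV cone (assumed mapping)."""
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    angle = 2.0 * math.pi * h   # hue as an angle around the cone axis
    radius = s * v              # saturation shrinks toward black at the apex
    return (radius * math.cos(angle), radius * math.sin(angle), v)


def color_difference(rgb_a: RGB, rgb_b: RGB) -> float:
    """Distance between two colors in the HSV cone, scaled to 0..1."""
    # Two fully saturated, opposite hues at full value are 2 apart,
    # which is the largest distance in this cone, so divide by 2.
    return math.dist(hsv_point(rgb_a), hsv_point(rgb_b)) / 2.0
```

Under these assumptions identical colors give 0, while pure red against pure cyan (opposite hues) gives a value of about 1.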

S4233: Determine whether the position distance of each constituent element is less than a preset distance, and determine whether the color difference of each constituent element is less than a preset color difference value.

The smaller the position distance of a constituent element, the closer its position is to the position expected by the user, and the higher the image precision; the smaller the color difference, the closer the color of the constituent element is to the element color expected by the user, and the higher the image precision. After the position distance and the color difference of each constituent element are obtained, it is determined whether the position distance of each constituent element is less than the preset distance and whether the color difference of each constituent element is less than the preset color difference value.

S4234: If the position distance of every constituent element is less than the preset distance, and the color difference of every constituent element is less than the preset color difference value, determine that the position and color of each constituent element match the position and color of the corresponding expected element.
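The threshold checks of steps S4233 and S4234 can be sketched as a single predicate. The function name, the parallel-list inputs, and the threshold values in the usage note are hypothetical; the description only requires that every position distance be below the preset distance and every color difference be below the preset color difference value.

```python
from typing import Sequence


def positions_and_colors_match(
    position_distances: Sequence[float],
    color_differences: Sequence[float],
    preset_distance: float,
    preset_color_difference: float,
) -> bool:
    """True only if every element passes both threshold checks."""
    # S4233: compare each element's position distance with the preset distance.
    positions_ok = all(d < preset_distance for d in position_distances)
    # S4233: compare each element's color difference with the preset value.
    colors_ok = all(c < preset_color_difference for c in color_differences)
    # S4234: both conditions must hold for every constituent element.
    return positions_ok and colors_ok
```

With illustrative thresholds of 20 for distance and 0.1 for color difference, two elements with distances `[5.0, 12.0]` and color differences `[0.02, 0.05]` would be considered matched, whereas raising any single distance to 25 would make the check fail.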