CN116071290A - A method and system for visual inspection of industrial products - Google Patents


- Publication number

- CN116071290A (application CN202211098674.0A)

- Authority

- CN

- China

- Prior art keywords

- image

- sub

- images

- classification

- features


- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending


Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/0002—Inspection of images, e.g. flaw detection

- G06T7/0004—Industrial image inspection

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

- G06N3/084—Backpropagation, e.g. using gradient descent

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/20—Image preprocessing

- G06V10/26—Segmentation of patterns in the image field; Cutting or merging of image elements to establish the pattern region, e.g. clustering-based techniques; Detection of occlusion

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/764—Arrangements for image or video recognition or understanding using pattern recognition or machine learning using classification, e.g. of video objects

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/77—Processing image or video features in feature spaces; using data integration or data reduction, e.g. principal component analysis [PCA] or independent component analysis [ICA] or self-organising maps [SOM]; Blind source separation

- G06V10/7715—Feature extraction, e.g. by transforming the feature space, e.g. multi-dimensional scaling [MDS]; Mappings, e.g. subspace methods

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/77—Processing image or video features in feature spaces; using data integration or data reduction, e.g. principal component analysis [PCA] or independent component analysis [ICA] or self-organising maps [SOM]; Blind source separation

- G06V10/80—Fusion, i.e. combining data from various sources at the sensor level, preprocessing level, feature extraction level or classification level

- G06V10/806—Fusion, i.e. combining data from various sources at the sensor level, preprocessing level, feature extraction level or classification level of extracted features

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/82—Arrangements for image or video recognition or understanding using pattern recognition or machine learning using neural networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20021—Dividing image into blocks, subimages or windows

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20081—Training; Learning

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20084—Artificial neural networks [ANN]

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/30—Subject of image; Context of image processing

- G06T2207/30108—Industrial image inspection

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Evolutionary Computation (AREA)

- Multimedia (AREA)

- General Health & Medical Sciences (AREA)

- Software Systems (AREA)

- Computing Systems (AREA)

- Health & Medical Sciences (AREA)

- Artificial Intelligence (AREA)

- Databases & Information Systems (AREA)

- Medical Informatics (AREA)

- Biomedical Technology (AREA)

- Life Sciences & Earth Sciences (AREA)

- Quality & Reliability (AREA)

- Biophysics (AREA)

- Computational Linguistics (AREA)

- Data Mining & Analysis (AREA)

- Molecular Biology (AREA)

- General Engineering & Computer Science (AREA)

- Mathematical Physics (AREA)

- Image Analysis (AREA)

Abstract

The invention relates to an industrial visual inspection method and system in the field of industrial product inspection. The image to be inspected is divided into overlapping sub-images. This reduces the influence of image size on inspection accuracy, and it also gives the sub-images sequential correlation. The classification feature of the current sub-image is obtained by fusing the image features of the current sub-image with the classification feature of the previous sub-image. As a result, the current sub-image's own image features are retained while correlation with the preceding and following sub-images is captured. Applying an attention mechanism to the classification features increases soft attention on defective regions. The method detects defects within a single sub-image as well as defects that span sub-images. It achieves fast and accurate defect detection in industrial product images without position labeling, and it reduces the model's requirement for dense data labeling.

Description

Technical Field

The disclosure relates to industrial product inspection, and in particular to a visual inspection method and system for industrial products.

Background

In a traditional factory, defect detection for industrial products relies on visual judgment by human inspectors. This requires workers to be familiar with every defect type of a product and is physically demanding. Because manual inspection is slow, a large amount of labor is needed to complete the quality inspection of industrial products. Moreover, traditional manual inspection does not use a computer, so data such as defect counts cannot be compiled, and manufacturers cannot obtain an accurate picture of the manufacturing process. With the continuing development of artificial intelligence, intelligent defect detection for industrial products has become a development direction that receives wide attention from factories.

Existing industrial product inspection techniques follow two main approaches.

The first approach extracts and examines image features of the industrial product with an object detection method, for example using the Fast R-CNN algorithm to extract features and determine defects. However, this approach requires large-scale defect annotation of the data, and its long detection time cannot meet the speed requirements of industrial inspection.

The second approach extracts and examines image features with machine vision and pattern recognition methods. A preprocessing module first separates the inspection region from the background using Otsu thresholding and morphological processing. The product object is then analyzed using gray-level projection distribution anomaly detection and time-frequency domain template matching. However, this method must perform template matching separately for similar products of different shapes, so its applicability is poor.

Disclosure of Invention

With respect to the prior art, the technical problems solved by the invention include at least the following:

(1) Existing methods place high demands on the dataset: a dataset with high-precision annotations is needed to train a model that detects the specific location of defects in an image.

(2) Defect detection efficiency of existing models is low.

(3) Data at different scales cannot be processed flexibly.

(4) Traditional image processing methods require a separate template library to be built for each industrial product.

To solve these technical problems, the invention aims to:

(1) Eliminate the need for position labeling of defects in industrial images, reducing the model's requirement for dense data labeling.

(2) Infer the location of a defect in an image from the model, even on images without fine labels.

(3) Reduce the detection model's requirements on the aspect ratio of input data, so that changes to the captured image size made by the detection device have less impact on the accuracy of detection results.

(4) Achieve high detection speed and high detection accuracy.

To achieve the above objectives, the technical solution of the invention is as follows.

In a first aspect, the present invention provides an industrial visual inspection method comprising the following steps:

dividing an image to be inspected of an industrial product into a plurality of sub-images such that adjacent sub-images overlap by a set length, which gives the sub-images sequential correlation;

obtaining, using a preset first model, the image features of the plurality of sub-images corresponding to the image to be inspected;

obtaining, using a preset second model, the classification features of the sub-images based on their image features, wherein the classification features comprise the image features and the sequence correlation features of the sub-images, and obtaining soft attention from each classification feature using an attention mechanism;

and obtaining a fused feature based on the soft attention and the classification features belonging to the same image to be inspected, and classifying the product.

In this technical solution, the image to be inspected is divided into overlapping sub-images. This reduces the influence of image size on the accuracy of detection results, and it also gives the sub-images sequential correlation.

Each sub-image is input into the first model to obtain its image features. The image features of each sub-image are then input into the second model, which derives the sub-image's classification features from them. These classification features contain both the image features of the sub-image itself and its sequence correlation features with the previous sub-image. The attention mechanism increases attention on defective regions.

The method can detect defects of industrial products within a sub-image as well as defects that span sub-images. It achieves fast and accurate defect detection in product images without position labeling, and it reduces the model's requirement for dense data labeling.

In this technical solution, the sub-images are obtained with a sliding window. Each sub-image therefore meets the input size requirement of the model without distorting the product image, since distortion would otherwise affect defect detection accuracy. Specifically, denoting the image to be inspected as $x$, the $t$-th sub-image $x_t$ can be expressed as:

$$x_t = x\left[1 + (d-b)(t-1) : d + (d-b)(t-1)\right]$$

where $d$ is the window length, $b$ is the overlap length between adjacent windows, and the colon denotes cropping from image $x$ the pixel positions from $1 + (d-b)(t-1)$ to $d + (d-b)(t-1)$.
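The following is a minimal Python/NumPy sketch of the sliding-window split. It makes these assumptions:

- The image is stored as an array of shape (height, length[, channels]).
- The window slides along axis 1.
- The function name `sliding_window_crops` is hypothetical.

Python slices are 0-based and half-open, so the 1-based inclusive range in the formula becomes `[(d-b)(t-1), (d-b)(t-1)+d)`.

```python
import numpy as np


def sliding_window_crops(x: np.ndarray, d: int, b: int) -> list:
    """Split image x along axis 1 (its length) into windows of length d
    that overlap by b pixels: x_t = x[1+(d-b)(t-1) : d+(d-b)(t-1)] (1-based).
    """
    if not 0 <= b < d:
        raise ValueError("overlap b must satisfy 0 <= b < d")
    stride = d - b
    length = x.shape[1]
    if length < d:
        raise ValueError("image is shorter than the window length d")
    # Number of complete windows; a trailing remainder is dropped (assumption).
    num_windows = (length - d) // stride + 1
    crops = []
    for t in range(1, num_windows + 1):
        start = stride * (t - 1)  # 0-based equivalent of 1 + (d-b)(t-1)
        crops.append(x[:, start:start + d])
    return crops
```

For a 1600 × 400 pixel image with `d=400` and `b=200`, this sketch returns 7 crops of 400 × 400 pixels. Pixels at the end of an image that do not fill a whole window are simply dropped here. The source does not say how such a remainder is handled.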

In the above technical solution, the first model is a residual network model, so that image features usable for classification can be extracted by stacking relatively small modules.
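To illustrate what a residual module computes, here is a minimal NumPy sketch of a basic residual block. It computes $y = \mathrm{ReLU}(F(x) + x)$, where $F$ is two 3 × 3 convolutions with a ReLU between them, in the style of ResNet34's basic block. The sketch omits batch normalization and downsampling, and the function names are hypothetical. A real first model would use a deep-learning framework rather than explicit loops.

```python
import numpy as np


def conv3x3_same(x: np.ndarray, w: np.ndarray) -> np.ndarray:
    """3x3 cross-correlation (what DL frameworks call convolution),
    stride 1, zero padding 1.
    x: (C_in, H, W); w: (C_out, C_in, 3, 3) -> (C_out, H, W)."""
    _, h, wd = x.shape
    padded = np.pad(x, ((0, 0), (1, 1), (1, 1)))  # zero padding
    out = np.zeros((w.shape[0], h, wd))
    for i in range(3):
        for j in range(3):
            # Input window shifted by kernel offset (i, j): (C_in, H, W)
            patch = padded[:, i:i + h, j:j + wd]
            # Sum over input channels for every output channel
            out += np.einsum("oc,chw->ohw", w[:, :, i, j], patch)
    return out


def relu(z: np.ndarray) -> np.ndarray:
    return np.maximum(z, 0.0)


def basic_residual_block(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """y = ReLU(F(x) + x), with F = conv -> ReLU -> conv (no batch norm)."""
    residual = conv3x3_same(relu(conv3x3_same(x, w1)), w2)
    return relu(residual + x)  # identity shortcut
```

The identity shortcut is what lets many such blocks be stacked. If the convolution weights are zero, the block simply passes its (rectified) input through.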

In the above technical solution, the second model is an RNN, a long short-term memory network (LSTM), or a gated recurrent unit (GRU), which helps extract the sequence features of the sub-image sequence.

In the above technical solution, the soft attention is calculated by the following formula:

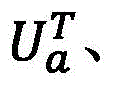

where $a_t$ denotes the soft attention of the $t$-th sub-image, and the classification features satisfy:

$$h_t = \sigma\left(W_e f_t + W_h h_{t-1}\right)$$

$$h_0 = 0$$

where $W_a$ is the soft attention matrix, $h_j$ is the classification feature of the $j$-th sub-image, and $h_t$ and $h_{t-1}$ are the classification features of the $t$-th and $(t-1)$-th sub-images. $f_t$ is the image feature of the $t$-th sub-image, with $t = 1, 2, \dots, T$ and $T$ the total number of sub-images. $\sigma$ is the ReLU function. $W_e$ is the extraction matrix that extracts a sub-image's classification feature from its image feature, and $W_h$ is the sequence correlation matrix between the $(t-1)$-th and $t$-th sub-images.
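The recurrence for $h_t$ can be sketched in NumPy as below. The soft attention formula itself survives only as an image. The attention function here therefore assumes a common form, $a_t = \exp(w_a^\top h_t) / \sum_j \exp(w_a^\top h_j)$ with a learned vector $w_a$. This is an illustrative assumption, not the patent's exact expression, and the function names are hypothetical.

```python
import numpy as np


def relu(z: np.ndarray) -> np.ndarray:
    return np.maximum(z, 0.0)


def classification_features(f: np.ndarray, W_e: np.ndarray, W_h: np.ndarray) -> np.ndarray:
    """h_t = ReLU(W_e f_t + W_h h_{t-1}) with h_0 = 0.

    f:   (T, D_f) image features of the T sub-images (first-model output)
    W_e: (D_h, D_f) extraction matrix
    W_h: (D_h, D_h) sequence correlation matrix
    returns (T, D_h) classification features
    """
    h_prev = np.zeros(W_h.shape[0])  # h_0 = 0
    hs = []
    for f_t in f:
        h_prev = relu(W_e @ f_t + W_h @ h_prev)
        hs.append(h_prev)
    return np.stack(hs)


def soft_attention(h: np.ndarray, w_a: np.ndarray) -> np.ndarray:
    """ASSUMED form: a_t = exp(w_a . h_t) / sum_j exp(w_a . h_j)."""
    scores = h @ w_a
    scores = scores - scores.max()  # shift for numerical stability
    e = np.exp(scores)
    return e / e.sum()
```

With 7 sub-images, 512-dimensional image features, and 256-dimensional classification features, `classification_features` returns a (7, 256) array. `soft_attention` then returns 7 positive weights that sum to 1.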

In the above technical solution, obtaining the fused feature based on the soft attention and the classification features belonging to the same image to be inspected, and classifying the industrial product, comprises:

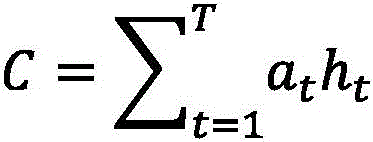

denoting the fused feature as $C$:

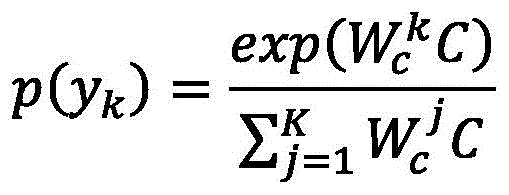

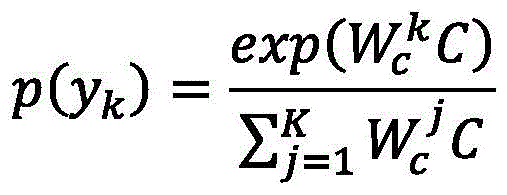

based on the fused feature, calculating the probability of each category to which the industrial product may belong:

taking the category with the maximum probability as the category of the industrial product in the image to be inspected;

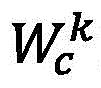

where $K$ is the number of industrial product categories and $W_c$ is the classification matrix, whose $k$-th row corresponds to class $k$. $a_t$ is the soft attention of the $t$-th sub-image, $h_t$ is the classification feature of the $t$-th sub-image, $T$ is the total number of sub-images, and $p(y_k)$ is the probability of belonging to the $k$-th class.

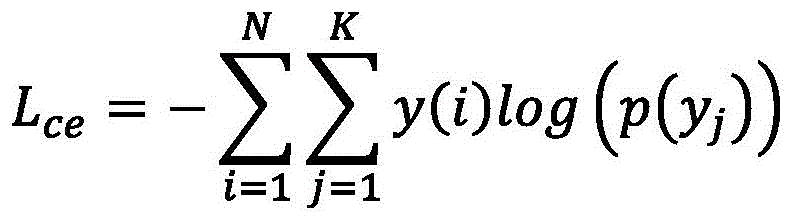
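The fusion and classification formulas are likewise only images. Assuming the usual attention-weighted sum $C = \sum_{t=1}^{T} a_t h_t$ and a softmax over the rows of $W_c$, the fusion and classification steps can be sketched as follows. Both forms are assumptions consistent with the listed symbols, and the function names are hypothetical.

```python
import numpy as np


def fuse(a: np.ndarray, h: np.ndarray) -> np.ndarray:
    """ASSUMED fusion: C = sum_t a_t h_t. a: (T,), h: (T, D_h) -> (D_h,)."""
    return a @ h


def class_probabilities(C: np.ndarray, W_c: np.ndarray) -> np.ndarray:
    """ASSUMED softmax: p(y_k) = exp(W_c[k] . C) / sum_k' exp(W_c[k'] . C).
    W_c: (K, D_h), one row per class."""
    logits = W_c @ C
    logits = logits - logits.max()  # numerical stability
    e = np.exp(logits)
    return e / e.sum()


def classify(a: np.ndarray, h: np.ndarray, W_c: np.ndarray, labels):
    """Return the label with maximum probability, plus all probabilities."""
    p = class_probabilities(fuse(a, h), W_c)
    return labels[int(np.argmax(p))], p
```

For the two-class case described later, `labels` would be something like `("defect-free", "defective")`, so $K = 2$ and `W_c` has two rows.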

In the above technical solution, the first and second models update their parameters through back-propagation of the loss value, which reduces training time and improves the stability of both models. The total loss value is calculated as:

$$L = \lambda L_{reg} + L_{ce}$$

where $L$ is the total loss value, $\lambda$ is the regularization coefficient with an initial value of 0.1, $L_{ce}$ is the standard cross-entropy, and $L_{reg}$ is the regularization term.

In the above technical solution, one way to obtain the regularization term is to take the square of the L2 norm of the parameter vectors of the first and second models.

In the above technical solution, the images input to the first model during training are augmented. The augmentation comprises random flipping, random occlusion, and random rotation. In implementation, any one of these, or any combination, may be used. Data augmentation provides generic feature information so that the model can be trained on a small amount of data.
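A minimal NumPy sketch of the three augmentations follows. It assumes horizontal and vertical flips, rotations restricted to multiples of 90° (so square sub-images keep their shape), and occlusion implemented as zeroing one random rectangle. The flip probabilities, the `max_occlusion` limit, and the function name are hypothetical choices, not values from the source.

```python
import numpy as np


def augment(img: np.ndarray, rng: np.random.Generator, max_occlusion: float = 0.25) -> np.ndarray:
    """Random flip, random rotation, and random occlusion of an (H, W[, C]) image.
    Assumptions: 50% chance for each flip, rotation by k*90 degrees,
    occlusion = one zeroed rectangle up to max_occlusion of each side."""
    out = img.copy()  # never modify the caller's image
    if rng.random() < 0.5:
        out = np.flip(out, axis=1)  # horizontal flip
    if rng.random() < 0.5:
        out = np.flip(out, axis=0)  # vertical flip
    out = np.rot90(out, k=int(rng.integers(0, 4)), axes=(0, 1))  # random rotation
    out = np.ascontiguousarray(out)
    h, w = out.shape[:2]
    oh = int(rng.integers(1, max(2, int(h * max_occlusion) + 1)))
    ow = int(rng.integers(1, max(2, int(w * max_occlusion) + 1)))
    y = int(rng.integers(0, h - oh + 1))
    x = int(rng.integers(0, w - ow + 1))
    out[y:y + oh, x:x + ow] = 0  # random occlusion (masking)
    return out
```

Because the augmentation is applied only during training, inference code would pass sub-images to the first model unchanged.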

In a second aspect, the present invention provides an industrial visual inspection system that applies any of the above methods to industrial visual inspection, in order to improve the efficiency of product defect inspection. The system comprises an external integration subsystem, a classification detector, and a database.

The external integration subsystem is configured to call a message center system and receive its feedback. The message center system is configured to start the machine control system, and the machine control system is configured to start the motor, the camera, and the conveyor belt carrying the industrial product, so as to acquire the image to be inspected of the industrial product.

The classification detector is configured to:

- divide an image to be inspected of an industrial product into a plurality of sub-images such that adjacent sub-images overlap by a set length;
- obtain, using a preset first model, the image features of the sub-images corresponding to the image to be inspected;
- obtain, using a preset second model, the classification features of the sub-images based on their image features, and obtain soft attention from each classification feature using an attention mechanism;
- obtain a fused feature based on the soft attention and the classification features belonging to the same image to be inspected, and classify the product.

The database is configured to store the images to be inspected and their classification results.

With this system, image data at different scales can be processed flexibly, a well-performing model can be trained without high-precision labeling, and the efficiency of defect detection for industrial products is improved.

In a third aspect, the present invention provides a computer-readable storage medium storing a computer program that can be loaded by a processor to execute any of the methods described above.

Drawings

To illustrate the technical solutions of the embodiments of the present application more clearly, the drawings used in describing the embodiments are briefly introduced below. The drawings described below show only some embodiments of the present application. A person skilled in the art may derive other drawings from them without inventive effort.

FIG. 1 is a block diagram of a detection system in one embodiment;

FIG. 2 is a schematic diagram of a detection flow in one embodiment;

FIG. 3 is a diagram of the internal architecture of the detection system in one embodiment;

FIG. 4 is a schematic structural diagram of an electronic device in one embodiment.

Detailed Description

The embodiments of the present application are described below clearly and completely with reference to the accompanying drawings. The described embodiments are only some, not all, of the embodiments of the present application. All other embodiments obtained by a person of ordinary skill in the art from this disclosure without inventive effort fall within the scope of the present disclosure.

The method can be implemented as a classification detector that determines whether an industrial product is defective. The detector is integrated into a quality inspection system, shown in FIG. 1.

When the system operates, as shown in FIG. 2, a worker enters information about the product to be inspected and starts the machine, which also initializes the front-end display. The message center system sends the product information to the quality inspection system. The quality inspection system then obtains inspection information, such as the material, type, size, and color of the industrial product, from an external information system. Meanwhile, the message center system controls the machine control system and the industrial camera to photograph the industrial products.

The machine control system includes a motor. After receiving a photographing signal, the motor drives the conveyor belt at a preset speed, so that the belt and the products pass in turn through the photographing area of the industrial camera in the inspection machine. After the motor has moved the conveyor belt by a preset length, it pauses, and the message center system signals the industrial camera to take a photograph. Repeating this cycle photographs multiple products and collects their image data.

The industrial images are sent to the intelligent model scheduling engine of the quality inspection system. The engine selects an appropriate model from the multi-instance fusion learning framework to perform quality inspection and obtain an inspection result. The quality inspection algorithm returns the detection result to the message center system, which then controls the machine to record the result or to stop.
The quality inspection system sends the images to be inspected and their classification results to an external storage system, which stores them in databases. To facilitate retrieval and management, two databases are provided: an image database that stores the images to be inspected, and a result database that stores their classification results. The external storage system displays the detection results on the front end.

The operations of the above flow need not be performed in the order described; they may be performed in reverse order or concurrently. Furthermore, one or more other operations may be added to the flow, or one or more operations may be removed from it.
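The two-database layout can be sketched with Python's built-in `sqlite3` module. The table names, the columns, and the use of SQLite are all hypothetical. The source states only that one database stores images and the other stores classification results. A shared image identifier is assumed as the link between them.

```python
import sqlite3


def open_stores():
    """Create two separate in-memory databases (hypothetical schema):
    one for images to be inspected, one for classification results."""
    image_db = sqlite3.connect(":memory:")
    result_db = sqlite3.connect(":memory:")
    image_db.execute("CREATE TABLE images (image_id TEXT PRIMARY KEY, data BLOB)")
    result_db.execute(
        "CREATE TABLE results (image_id TEXT PRIMARY KEY, label TEXT, defect_block INTEGER)"
    )
    return image_db, result_db


def store(image_db, result_db, image_id, image_bytes, label, defect_block=None):
    """Write the raw image and its classification result; each `with` commits."""
    with image_db:
        image_db.execute("INSERT INTO images VALUES (?, ?)", (image_id, image_bytes))
    with result_db:
        result_db.execute(
            "INSERT INTO results VALUES (?, ?, ?)", (image_id, label, defect_block)
        )
```

Keeping results in their own database means result queries, such as defect counts, never touch the large image blobs.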

The multi-instance fusion learning framework integrates algorithms for inspecting various industrial products, including the classification detector of the invention. This meets different inspection requirements for the same type of industrial product, as well as specific inspection requirements for different types of industrial products.

The classification detector is used to detect defects in industrial products. Its overall detection flow, shown in FIG. 3, comprises the following steps:

Step 1: Preprocess the captured industrial image. Preprocessing involves a data acquisition module, an image block generator, and a data enhancement module.

Step 1-1: the data acquisition module acquires an image to be detected from an external storage system.

Step 1-2: The data acquisition module passes the image to be inspected to the image block generator. The main purpose of the image block generator is to reduce the effect of differing aspect ratios on product defect detection and classification. It applies a sliding window to the image to be inspected, splitting and sampling the image with a fixed step size. Denoting the image to be inspected as $x$, the window length as $d$, and the window overlap length as $b$, the $t$-th sub-image $x_t$ can be expressed as:

$$x_t = x\left[1 + (d-b)(t-1) : d + (d-b)(t-1)\right]$$

where the colon denotes cropping from image $x$ the pixel positions from $1 + (d-b)(t-1)$ to $d + (d-b)(t-1)$.

For example, take an image of 1600 × 400 pixels (length × width). Sampling with a step of 200 pixels and a window length of 400 pixels yields 7 sub-images of 400 × 400 pixels, and adjacent sub-images overlap by 200 pixels. Sliding-window sampling thus changes the unbalanced 4:1 aspect ratio of the original image into a balanced 1:1 aspect ratio. In other embodiments, the balanced aspect ratio may be 2:1.
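The count of 7 sub-images follows from the window formula. Assume that any trailing pixels that do not fill a whole window are discarded, and let $\ell$ be the image length. The number of sub-images and the pixel range of the last window are then:

$$T = \left\lfloor \frac{\ell - d}{d - b} \right\rfloor + 1 = \frac{1600 - 400}{400 - 200} + 1 = 7$$

$$x_7 = x\left[1 + 200 \times 6 : 400 + 200 \times 6\right] = x[1201 : 1600]$$

So the last window ends exactly at the image boundary, and no pixels are discarded in this example.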

Step 1-3: The data enhancement module is used mainly in the training stage of the defect detector. In the training stage, data augmentation operations such as random flipping, random occlusion, and random rotation are applied to the image blocks. This lets the defect detector be trained on a small amount of data while learning generic feature information. In the detection stage, this module is skipped and the data is passed directly to the first model.

Step 2: The first model extracts image features from the sub-images of the image to be inspected. The second model then extracts the classification features of the sub-images from those image features, and soft attention is obtained from each classification feature using an attention mechanism.

In practice, the first model may be a residual network, and the second model may be an RNN, a long short-term memory network (LSTM), or a gated recurrent unit (GRU). To accelerate training and improve the stability of the two models, the first model can be pre-trained on the ImageNet-1K dataset. The pre-trained parameters are then used to initialize the first model before it is trained together with the second model.

The first and second models are trained with a dynamic learning rate, which controls how quickly their parameters are updated. The model parameters are updated by back-propagating the loss value. The total loss value is calculated as:

$$L = \lambda L_{reg} + L_{ce}$$

where $L$ is the total loss value, $\lambda$ is the regularization coefficient with an initial value of 0.1, and $L_{reg}$ is the regularization term. $L_{ce}$ is the standard cross-entropy, calculated as follows:

where $N$ is the number of images $x^{(i)}$ to be inspected, $K$ is the number of industrial product categories, $y^{(i)}$ is the true label of the input sample $x^{(i)}$, and $p(y_j)$ is the probability of belonging to the $j$-th class.
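Below is a NumPy sketch of the total loss. The source cross-entropy formula did not survive extraction, so the sketch assumes the standard multi-class cross-entropy averaged over the $N$ samples, $L_{ce} = -\frac{1}{N}\sum_{i} \log p(y^{(i)})$. It uses the squared L2 norm of all parameters of both models as $L_{reg}$. The function names are hypothetical.

```python
import numpy as np


def cross_entropy(probs: np.ndarray, labels: np.ndarray) -> float:
    """ASSUMED standard form: L_ce = -(1/N) * sum_i log p(y^(i)).
    probs: (N, K) predicted class probabilities; labels: (N,) int class indices."""
    n = probs.shape[0]
    eps = 1e-12  # guards against log(0)
    return float(-np.mean(np.log(probs[np.arange(n), labels] + eps)))


def l2_regularizer(param_arrays) -> float:
    """L_reg: squared L2 norm of all parameters of the first and second models."""
    return float(sum(np.sum(p ** 2) for p in param_arrays))


def total_loss(probs, labels, params, lam: float = 0.1) -> float:
    """L = lambda * L_reg + L_ce, with lambda initialised to 0.1 as in the text."""
    return lam * l2_regularizer(params) + cross_entropy(probs, labels)
```

For two samples predicted with probability 0.5 each, the cross-entropy term is $\ln 2$. With parameters summing to a squared norm of 9, the total is $0.9 + \ln 2$.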

The training process is further optimized with the Adam optimizer. The learning rate is initialized to 0.001 and is adjusted by a factor of 0.5 whenever the rate of change of the error meets a set condition.

In another embodiment of the dynamic learning rate, the learning rate is increased by a first set step size each time a first set number of training iterations is reached. After a second set number of training iterations is reached, it is decreased by a second set step size until it reaches a second reference learning rate.

Either way, the dynamic learning rate controls how quickly the parameters of the first and second models are updated.
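Both dynamic learning-rate embodiments can be sketched as plain functions, under these assumptions:

- The "set condition" is taken to be a relative error change below a threshold.
- The 0.5 factor is read as multiplying the rate by 0.5.
- Every step size and iteration count in `warmup_then_decay` is a hypothetical placeholder.
- Both function names are hypothetical.

```python
def plateau_schedule(lr: float, prev_err: float, curr_err: float,
                     threshold: float = 1e-3, factor: float = 0.5) -> float:
    """Embodiment 1 (sketch): multiply lr by `factor` when the relative change
    in error falls below `threshold` (ASSUMED form of the 'set condition')."""
    if prev_err > 0 and abs(prev_err - curr_err) / prev_err < threshold:
        return lr * factor
    return lr


def warmup_then_decay(step: int, base_lr: float = 1e-3, n1: int = 100, up: float = 1e-4,
                      n2: int = 1000, down: float = 2e-4, floor: float = 1e-4) -> float:
    """Embodiment 2 (sketch, all constants hypothetical):
    before step n2, add `up` after every n1 steps; from step n2 on, subtract
    `down` every n1 steps, never going below `floor` (the second reference rate)."""
    if step < n2:
        return base_lr + up * (step // n1)  # warm-up phase
    peak = base_lr + up * ((n2 - 1) // n1)  # last warm-up value
    return max(floor, peak - down * ((step - n2) // n1 + 1))  # decay phase
```

In PyTorch, the first embodiment corresponds closely to `torch.optim.lr_scheduler.ReduceLROnPlateau` with `factor=0.5`. The sketch above keeps the logic framework-free.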

When the classification features are obtained, the original image features should not be lost, and correlation features with the preceding and following sub-images should be captured. To achieve this, the classification feature $h_t$ of the $t$-th sub-image can be expressed as:

$$h_t = \sigma\left(W_e f_t + W_h h_{t-1}\right)$$

where $h_{t-1}$ is the classification feature of the $(t-1)$-th sub-image and $f_t$ is the image feature of the $t$-th sub-image, with $t = 1, 2, \dots, T$ and $T$ the total number of sub-images. $\sigma$ is the ReLU function. $W_e$ is the extraction matrix that extracts a sub-image's classification feature from its image feature, and $W_h$ is the sequence correlation matrix between the $(t-1)$-th and $t$-th sub-images. For $t = 1$, $h_0 = 0$.

With classification features obtained this way, every sub-image except the first carries sequence correlation features from the previous sub-image. Alternatively, the classification feature may contain sequence correlation features between the current ($t$-th) sub-image and the following ($(t+1)$-th) sub-image.

In other words, the classification feature in the invention is extracted from two sources: the image feature of the current sub-image, and its sequence correlation with the previous or next sub-image. If there is no previous or next sub-image, the feature is extracted from the image feature of the current sub-image alone. Classification features obtained this way are more sensitive to abrupt changes in defective regions.
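The forward rule and the "next sub-image" variant can be sketched with one function. The source gives no separate formula for the reverse direction, so the sketch assumes it mirrors the forward equation: $h_t = \sigma(W_e f_t + W_h h_{t+1})$ with $h_{T+1} = 0$. The function name is hypothetical.

```python
import numpy as np


def directional_features(f: np.ndarray, W_e: np.ndarray, W_h: np.ndarray,
                         reverse: bool = False) -> np.ndarray:
    """Forward:  h_t = ReLU(W_e f_t + W_h h_{t-1}), h_0 = 0.
    Reverse (ASSUMED mirror): h_t = ReLU(W_e f_t + W_h h_{t+1}), h_{T+1} = 0.
    f: (T, D_f) image features -> (T, D_h) classification features."""
    T = f.shape[0]
    order = range(T - 1, -1, -1) if reverse else range(T)
    h = np.zeros((T, W_h.shape[0]))
    carry = np.zeros(W_h.shape[0])  # zero state for the missing neighbour
    for t in order:
        carry = np.maximum(W_e @ f[t] + W_h @ carry, 0.0)
        h[t] = carry
    return h
```

In both directions, the sub-image at the sequence boundary depends only on its own image feature. This matches the rule that a sub-image with no neighbour uses its own features alone.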

The classification features of the sub-images obtained from one image to be inspected are then processed by the attention mechanism. The mechanism gives more weight to defect targets and thereby improves defect detection accuracy. The soft attention corresponding to the $t$-th sub-image is denoted $a_t$:

$$h_t = \sigma\left(W_e f_t + W_h h_{t-1}\right)$$

$$h_0 = 0$$

where $W_a$ is the soft attention matrix, $h_j$ is the classification feature of the $j$-th sub-image, and $h_t$ and $h_{t-1}$ are the classification features of the $t$-th and $(t-1)$-th sub-images. $f_t$ is the image feature of the $t$-th sub-image, with $t = 1, 2, \dots, T$ and $T$ the total number of sub-images. $\sigma$ is the ReLU function. $W_e$ is the extraction matrix that extracts a sub-image's classification feature from its image feature, and $W_h$ is the sequence correlation matrix between the $(t-1)$-th and $t$-th sub-images.

Step 3-1: Denote the fused feature as $C$; then:

where $a_t$ is the soft attention of the $t$-th sub-image, $h_t$ is the classification feature of the $t$-th sub-image, and $T$ is the total number of sub-images.

Step 3-2: Based on the fused feature, calculate the probability of each category to which the product may belong:

where $K$ is the number of product categories, $W_c$ is the classification matrix (its $k$-th row corresponds to class $k$), and $p(y_k)$ is the probability of belonging to the $k$-th class.

Step 3-3: Take the category with the maximum probability as the category of the product in the image to be inspected. In the detection stage, the detection result is output. If the product is defect-free, a defect-free result is output directly; if it is defective, the position of the sub-image block containing the defect is output.
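The source does not state how the defective sub-image block is identified. One plausible rule, shown here purely as an assumption, reports the sub-image with the largest soft attention $a_t$. It then maps that index back to pixel positions with the window formula $[1 + (d-b)(t-1),\ d + (d-b)(t-1)]$. The function name is hypothetical.

```python
import numpy as np


def defect_block_location(a: np.ndarray, d: int, b: int):
    """HYPOTHETICAL localisation rule: pick the sub-image with the largest
    soft attention and return its 1-based index t and its 1-based inclusive
    pixel range [1 + (d-b)(t-1), d + (d-b)(t-1)] along the image length."""
    t = int(np.argmax(a)) + 1
    start = 1 + (d - b) * (t - 1)
    end = d + (d - b) * (t - 1)
    return t, (start, end)
```

With $d = 400$ and $b = 200$, a peak in attention at the third sub-image points to pixels 401 to 800. That is the region that would be boxed on the original image.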

In this embodiment, there are only two classification categories: "defective" and "defect-free".

If the detection result is "defect-free", the quality inspection system increments the inspection count shown on the front-end display and proceeds to defect detection on the next image.

If the detection result is "defective", a "defective" signal is sent to the message center system, and the defect count prompted or shown on the front-end display is incremented. At the same time, the defective sub-image block region identified in the detection result is marked on the original image, for example with a red box. The marked original image is then shown on the front-end display, or the defective image is saved to the external storage system for later manual analysis to determine whether the model misjudged.

When the message center system receives the defect signal, it stops the motor, stops the industrial camera from photographing, and suspends the inspection system. The defective part is then exposed outside the machine for a worker to handle.
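The response logic can be sketched as a small state object, with these stand-ins:

- The class, its fields, and the string labels are all hypothetical.
- `running = False` stands in for the message center stopping the motor and camera.
- `review_queue` stands in for the marked images kept for manual analysis.

```python
from dataclasses import dataclass, field


@dataclass
class InspectionStation:
    """HYPOTHETICAL controller mirroring the defect-handling flow."""
    inspected: int = 0        # count shown for defect-free results
    defects: int = 0          # defect count on the front-end display
    running: bool = True      # False = motor and camera stopped
    review_queue: list = field(default_factory=list)

    def handle(self, image_id: str, label: str, defect_range=None) -> None:
        if label == "defect-free":
            self.inspected += 1  # increment count, continue with next image
            return
        # Defective: count it, keep the marked region for manual review,
        # and stop the line so a worker can remove the part.
        self.defects += 1
        self.review_queue.append((image_id, defect_range))
        self.running = False
```

A real system would send these events through the message center system rather than mutate local fields. The sketch only shows the branching.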

In other embodiments, the classification may include more categories, subdivided as required.

In the following example, a specific industrial inductor is used as the inspection object, but the detection method of the present invention is not limited to inductors and can be applied to other industrial products. Two groups of inductor images were obtained, each image measuring 1600 × 400 pixels; in one group the adhesive surface of the inductor is blue, and in the other it is purple. The image data are shown in Table 1 and the defect types in Table 2.

Table 1

Table 2

The first model is a ResNet34 residual neural network, and the second model is an RNN. The two groups of images are divided into overlapping sub-images by a sliding window with window length d = w = 400 and window overlap length b = 200, giving 7 sub-images in total. The residual unit consists of two convolutional layers with 3 × 3 kernels and 64 kernels per layer; the extracted image feature has dimension 512 and the soft attention has dimension 256. Since the detection result is either "defect-free" or "defective", the number of product categories is K = 2. The dynamic learning rate starts at 0.001 and is adjusted by a factor of 0.5 when the rate of change of the error satisfies the set condition.
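The overlapping split of claim 2 can be sketched with NumPy slicing. Claim 2 writes the window in 1-based, inclusive form, $x_t = x[1 + (d-b)(t-1) : d + (d-b)(t-1)]$; in 0-based, half-open NumPy slicing this becomes columns `(d-b)*(t-1)` up to `(d-b)*(t-1) + d`. With $d = 400$ and $b = 200$ the stride is 200, so a 1600-pixel length yields $(1600 - 400)/200 + 1 = 7$ sub-images, matching the count above. Splitting along the width axis and dropping any trailing remainder that does not fill a whole window are assumptions of this sketch; `split_overlapping` is a hypothetical name.

```python
import numpy as np


def split_overlapping(x: np.ndarray, d: int, b: int) -> list:
    """Split x along axis 1 (the image length) into windows of length d
    overlapping by b. Any trailing remainder shorter than a full stride
    is dropped in this sketch."""
    stride = d - b
    if stride <= 0:
        raise ValueError("overlap b must be smaller than window length d")
    length = x.shape[1]
    T = (length - d) // stride + 1  # number of full windows
    # 0-based form of claim 2: x[:, (d-b)(t-1) : (d-b)(t-1) + d] for t = 1..T
    return [x[:, stride * (t - 1): stride * (t - 1) + d] for t in range(1, T + 1)]


img = np.zeros((400, 1600, 3), dtype=np.uint8)  # one 1600 x 400 inductor image
subs = split_overlapping(img, d=400, b=200)
print(len(subs), subs[0].shape)
```

Each pair of neighboring windows shares 200 columns, which is what lets a defect lying on a window boundary appear whole in at least one sub-image.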

Based on the data sets of groups 1 and 2, the classification detection method of the present invention was compared with Faster R-CNN and YOLO-V5, as shown in Table 3. F1, AUC and MCA are machine learning model performance evaluation indices, while Params (the number of model parameters) and FLOPs (the number of floating-point operations) are used as indices of method complexity. As Table 3 shows, the method of the present invention outperforms Faster R-CNN and YOLO-V5 while requiring fewer parameters and less computation.
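For reference, F1 for the binary defect-free/defective task can be computed as below. The text does not expand MCA; the sketch assumes it means mean class accuracy (the average of per-class accuracies), which is a common reading but is not confirmed by the source. Treating label 1 as "defective" is also an assumption.

```python
def binary_f1(y_true, y_pred, positive=1):
    """F1 score for one positive class (assumed here: 1 = defective)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def mean_class_accuracy(y_true, y_pred):
    """ASSUMED meaning of MCA: average over classes of per-class accuracy."""
    per_class = []
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        per_class.append(sum(1 for i in idx if y_pred[i] == c) / len(idx))
    return sum(per_class) / len(per_class)


y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 1]
print(binary_f1(y_true, y_pred), mean_class_accuracy(y_true, y_pred))
```

Unlike plain accuracy, both measures stay informative when defective samples are rare.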

Table 3

Next, the method is compared with the following variants, again using the machine learning model performance evaluation indices and the method complexity indices:

(1) Vanilla Instance Learning (VIL): the overlapping-segmentation step of the method is replaced by directly resizing the image to be detected, with the remaining steps unchanged. For the 1600 × 400 pixel image in the example above, VIL directly resizes it to a 400 × 400 pixel image.

(2) Multi-instance Learning (MILs): the second model of the method of the invention is replaced with a two-layer multilayer perceptron (MLP).

(3) MIFL without the attention mechanism (woAttn).

(4) MIFL without the RNN (woRNN).

(5) MIFL on a reduced-resolution image dataset (LMIFL).

Table 4

From the above, it can be seen that:

(1) MILs outperform VIL on every index except FLOPs, because MILs use overlapping segmentation and therefore extract image features from undistorted images, whereas resizing distorts the image. In terms of Params, VIL and MILs are nearly identical: VIL uses global image features, while MILs use a larger classification matrix because the features of all sub-images must be concatenated for the decision. However, the FLOPs of VIL are much lower than those of MILs.

(2) woAttn fuses the sub-image features with the RNN instead of simply concatenating them as MILs do; the comparison between the two shows that fusing the individual sub-image features is important.

(3) Comparing MILs, woAttn and woRNN shows that the attention mechanism also plays an important role in aggregation, making defect detection more accurate.

(4) Table 4 shows that feature fusion and soft attention reinforce each other, so MIFL, which combines both, performs well.

(5) Comparing LMIFL with MIFL shows that raising the resolution of the imaging system as far as possible improves the defect recognition rate.
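The Params and FLOPs observations in item (1) follow from simple counting. This back-of-the-envelope sketch assumes both variants share the same backbone (so backbone weights count equally in both), treats each classifier as a single linear layer on the backbone features (the hidden size of the MILs two-layer MLP is not given), and ignores biases. The function name `vil_vs_mils` is hypothetical.

```python
def vil_vs_mils(T: int = 7, feat_dim: int = 512, K: int = 2) -> dict:
    """Rough cost comparison under the simplifications stated above."""
    return {
        # VIL classifies one 512-d global feature of the resized image ...
        "vil_classifier_weights": K * feat_dim,
        # ... while MILs classify T concatenated 512-d sub-image features
        "mils_classifier_weights": K * T * feat_dim,
        # the shared backbone runs once per image for VIL (one 400 x 400 input)
        # and once per 400 x 400 sub-image for MILs
        "backbone_passes_vil": 1,
        "backbone_passes_mils": T,
    }


costs = vil_vs_mils()
extra_weights = costs["mils_classifier_weights"] - costs["vil_classifier_weights"]
flops_ratio = costs["backbone_passes_mils"] / costs["backbone_passes_vil"]
print(extra_weights, flops_ratio)
```

A few thousand extra classifier weights are negligible next to a residual backbone with millions of parameters, which is why Params barely differ, whereas running the backbone seven times per image multiplies its floating-point cost by roughly seven.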

Finally, the generalization ability of the method of the invention was further examined. The data sets of group 1 and group 2 were combined into a new group and compared with group 1. The comparison results are shown in Table 5.

Table 5

As can be seen from Table 5, the larger the amount of training data, the better the method generalizes. Because the different colors give the inputs different modes, the generalization of the method can be improved without sacrificing performance, thereby improving detection efficiency.

In summary, the method of the invention obtains a plurality of sub-images by overlapping segmentation of the image to be detected. On the one hand, this reduces the influence of image size on the accuracy of the detection result; on the other hand, it gives the sub-images sequential correlation. The classification feature of the current sub-image is obtained by fusing the image features of the current sub-image with the classification feature of the previous sub-image, so that the image features of the current sub-image are not lost while the correlation between successive sub-images in the sequence is captured. Applying an attention mechanism to the classification features increases the attention paid to defective regions. When applied to defect detection, the method can detect not only defects of industrial products within a single sub-image but also defects that span sub-images. It achieves fast and accurate detection without requiring position annotations of the defects in the product images, which lowers the annotation requirements of the model.

The industrial visual inspection device provided in another embodiment can perform the above method embodiment; its implementation principle and technical effects are similar and are not repeated here.

Fig. 4 is a schematic structural diagram of an electronic device 300 suitable for implementing embodiments of the present application.

As shown in fig. 4, the electronic device 300 includes a Central Processing Unit (CPU) 301 that can perform various appropriate actions and processes according to a program stored in a Read Only Memory (ROM) 302 or a program loaded from a storage section 308 into a Random Access Memory (RAM) 303. In the RAM 303, various programs and data required for the operation of the device 300 are also stored. The CPU 301, ROM 302, and RAM 303 are connected to each other through a bus 304. An input/output (I/O) interface 305 is also connected to bus 304.

The following components are connected to the I/O interface 305: an input section 306 including a keyboard, a mouse, and the like; an output section 307 including a cathode ray tube (CRT) or liquid crystal display (LCD), a speaker, and the like; a storage section 308 including a hard disk or the like; and a communication section 309 including a network interface card such as a LAN card or a modem. The communication section 309 performs communication processing via a network such as the Internet. A drive 310 is also connected to the I/O interface 305 as needed. A removable medium 311, such as a magnetic disk, an optical disk, a magneto-optical disk, or a semiconductor memory, is mounted on the drive 310 as needed, so that a computer program read from it can be installed into the storage section 308 as needed.

In particular, according to embodiments of the present disclosure, the process described above with reference to Fig. 1 may be implemented as a computer software program. For example, embodiments of the present disclosure include a computer program product comprising a computer program tangibly embodied on a machine-readable medium, the computer program comprising program code for performing the industrial visual inspection method described above. In such an embodiment, the computer program may be downloaded and installed from a network via the communication section 309 and/or installed from the removable medium 311.

The flowcharts and block diagrams in the figures illustrate the architecture, functionality, and operation of possible implementations of systems, methods and computer program products according to various embodiments of the present invention. In this regard, each block in the flowchart or block diagrams may represent a module, segment, or portion of code, which comprises one or more executable instructions for implementing the specified logical function(s). It should also be noted that, in some alternative implementations, the functions noted in the block may occur out of the order noted in the figures. For example, two blocks shown in succession may, in fact, be executed substantially concurrently, or the blocks may sometimes be executed in the reverse order, depending upon the functionality involved. It will also be noted that each block of the block diagrams and/or flowchart illustration, and combinations of blocks in the block diagrams and/or flowchart illustration, can be implemented by special purpose hardware-based systems which perform the specified functions or acts, or combinations of special purpose hardware and computer instructions.

The units or modules described in the embodiments of the present application may be implemented in software or in hardware. The described units or modules may also be provided in a processor. The names of these units or modules do not, in certain cases, constitute a limitation on the units or modules themselves.

The system, apparatus, module or unit set forth in the above embodiments may be implemented in particular by a computer chip or entity, or by a product having a certain function. One typical implementation is a computer. In particular, the computer may be, for example, a personal computer, a notebook computer, a mobile phone, a smart phone, a personal digital assistant, a media player, a navigation device, an email device, a game console, a tablet computer, a wearable device, or a combination of any of these devices.

As another aspect, the present application also provides a storage medium, which may be the storage medium contained in the apparatus of the foregoing embodiment, or a stand-alone storage medium not incorporated into the device. The storage medium stores one or more programs used by one or more processors to perform the industrial visual inspection method described in the present application.

Storage media, including permanent and non-permanent, removable and non-removable media, may implement information storage by any method or technology. The information may be computer-readable instructions, data structures, program modules, or other data. Examples of computer storage media include, but are not limited to, phase-change memory (PRAM), static random access memory (SRAM), dynamic random access memory (DRAM), other types of random access memory (RAM), read-only memory (ROM), electrically erasable programmable read-only memory (EEPROM), flash memory or other memory technology, compact disc read-only memory (CD-ROM), digital versatile discs (DVD) or other optical storage, magnetic cassettes, magnetic tape or magnetic disk storage or other magnetic storage devices, or any other non-transmission medium that can be used to store information accessible by a computing device. As defined herein, computer-readable media do not include transitory computer-readable media (transmission media), such as modulated data signals and carrier waves.

It should be noted that the terms "comprises," "comprising," and any other variation thereof are intended to cover a non-exclusive inclusion, such that a process, method, article, or apparatus that comprises a list of elements includes not only those elements but also other elements not expressly listed or inherent to such process, method, article, or apparatus. Without further limitation, an element defined by the phrase "comprising a ..." does not exclude the presence of other identical elements in the process, method, article, or apparatus that comprises the element.

In this specification, each embodiment is described progressively; identical or similar parts of the embodiments may be referred to one another, and each embodiment focuses on its differences from the others. In particular, the system embodiments are substantially similar to the method embodiments and are therefore described relatively briefly; for relevant details, refer to the description of the method embodiments.

Claims (10)

1. A method for visual inspection of an industrial product, the method comprising the following steps:

dividing an image to be detected of an industrial product into a plurality of sub-images, with adjacent sub-images overlapping by a set length, so that the sub-images are sequentially correlated;

acquiring, by a preset first model, image features of the plurality of sub-images corresponding to the image to be detected;

obtaining, by a preset second model, classification features of the sub-images based on their image features, the classification features comprising the image features and the sequence-correlation features of the sub-images, and obtaining soft attention from each classification feature by an attention mechanism;

and obtaining a fusion feature based on the soft attention and the classification features belonging to the same image to be detected, and classifying the product.

2. The method according to claim 1, wherein the sub-images are acquired by means of a sliding window;

the image to be detected is denoted $x$, and the $t$-th sub-image $x_t$ can be expressed as:

$$x_t = x\left[1 + (d-b)(t-1) : d + (d-b)(t-1)\right]$$

wherein $d$ is the window length, $b$ is the overlap length of the windows, and the colon denotes cropping from image $x$ the pixels from position $1 + (d-b)(t-1)$ to position $d + (d-b)(t-1)$.

3. The method of claim 1, wherein the first model is a residual network model, and the second model is an RNN, a long short-term memory network (LSTM), or a gated recurrent unit (GRU).

4. The method of claim 1, wherein the soft attention is calculated as follows:

the soft attention corresponding to the $t$-th sub-image is denoted $a_t$:

$$h_t = \sigma(W_e f_t + W_h h_{t-1})$$

$$h_0 = 0$$

wherein $W_a$ is the soft attention matrix, $h_j$ is the classification feature of the $j$-th sub-image, $h_t$ is the classification feature of the $t$-th sub-image, $h_{t-1}$ is the classification feature of the $(t-1)$-th sub-image, $f_t$ is the image feature of the $t$-th sub-image, $t = 1, 2, \ldots, T$, $T$ is the total number of sub-images, $\sigma$ is the ReLU function, $W_e$ is the extraction matrix that extracts the classification feature of a sub-image from its image feature, and $W_h$ is the sequence-correlation matrix between the $(t-1)$-th and $t$-th sub-images.

5. The method of claim 1, wherein obtaining the fusion feature based on the soft attention and the classification features belonging to the same image to be detected, and classifying the product, comprises:

letting the fusion feature be $C$, then:

calculating, based on the fusion feature, the probability of the category to which the industrial product belongs:

taking the category with the maximum probability as the classification of the industrial product in the image to be detected;

wherein $K$ is the number of industrial product categories, $W_c$ is the classification matrix whose $k$-th row corresponds to class $k$, $a_t$ is the soft attention of the $t$-th sub-image, $h_t$ is the classification feature of the $t$-th sub-image, $T$ is the total number of sub-images, and $p(y_k)$ is the probability of belonging to the $k$-th class.

6. The method of claim 1, wherein the first model and the second model update their parameters by back-propagating a loss value, the loss value being calculated by the following formula:

$$L = \lambda L_{reg} + L_{ce}$$

wherein $L$ is the loss value, $\lambda$ is the regularization coefficient, $L_{ce}$ is the standard cross-entropy, and $L_{reg}$ is the regularization term.

7. The method of claim 6, wherein the regularization term is the square of the L2 norm of the parameter vectors of the first model and the second model.

8. The method of claim 1, wherein the images input to the first model during the training phase are subjected to augmentation, the augmentation comprising random flipping, random occlusion, and random rotation.

9. An industrial visual inspection system, characterized by comprising an external integration subsystem, a classification detector, and a database;

the external integration subsystem is configured to call a message center system and receive feedback from it; the message center system is configured to control the start-up of a machine system; and the machine system is configured to control the start-up of a motor, a camera, and the conveyor belt carrying the industrial product, so as to acquire the image to be detected of the industrial product;

the classification detector is configured to: divide the image to be detected of an industrial product into a plurality of sub-images, with adjacent sub-images overlapping by a set length; acquire, by a preset first model, image features of the plurality of sub-images corresponding to the image to be detected; obtain, by a preset second model, classification features of the sub-images based on their image features, and obtain soft attention from each classification feature by an attention mechanism; and obtain a fusion feature based on the soft attention and the classification features belonging to the same image to be detected, and classify the product;

the database is configured to store the images to be detected and their classification results.

10. A computer-readable storage medium, characterized by storing a computer program that can be loaded by a processor to perform the method according to any one of claims 1 to 8.
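The training loss of claims 6 and 7 can be sketched for a single sample in NumPy. This is an illustrative sketch, not the authors' code: the value of $\lambda$ is not given in the text, so the default `lam=1e-4` is a hypothetical placeholder, and the model parameters are treated as flat arrays. Summing the squared norms of the two parameter vectors equals the squared L2 norm of their concatenation, so either reading of claim 7 gives the same value.

```python
import numpy as np


def cross_entropy(p: np.ndarray, y: int, eps: float = 1e-12) -> float:
    """Standard cross-entropy for one sample: -log p(y); eps guards log(0)."""
    return float(-np.log(p[y] + eps))


def l2_squared(*param_vectors) -> float:
    """Squared L2 norm of the concatenated parameter vectors (claim 7)."""
    return float(sum(np.sum(np.asarray(v, dtype=float) ** 2) for v in param_vectors))


def total_loss(p, y, theta_first, theta_second, lam=1e-4):
    """Claim 6: L = lambda * L_reg + L_ce (lam is a hypothetical default)."""
    return lam * l2_squared(theta_first, theta_second) + cross_entropy(p, y)


# Example: predicted probabilities for (defect-free, defective), true class 1
p = np.array([0.25, 0.75])
L = total_loss(p, 1, np.array([1.0, 2.0]), np.array([2.0]), lam=0.01)
print(L)
```

In a deep learning framework the same penalty is often applied through the optimizer's weight decay instead of being added to the loss explicitly; with plain SGD the two are equivalent up to a factor of 2 in $\lambda$.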

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202211098674.0A CN116071290A (en) | 2022-09-08 | 2022-09-08 | A method and system for visual inspection of industrial products |


Publications (1)

| Publication Number | Publication Date |
|---|---|
| CN116071290A true CN116071290A (en) | 2023-05-05 |

Family ID: 86173779

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CN202211098674.0A Pending CN116071290A (en) | A method and system for visual inspection of industrial products | 2022-09-08 | 2022-09-08 |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN116071290A (en) |

Citations (6)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US20190138786A1 (en) * | 2017-06-06 | 2019-05-09 | Sightline Innovation Inc. | System and method for identification and classification of objects |
| CN110186938A (en) * | 2019-06-28 | 2019-08-30 | 笪萨科技(上海)有限公司 | Two-sided defect analysis equipment and defects detection and analysis system |
| CN113743180A (en) * | 2021-05-06 | 2021-12-03 | 西安电子科技大学 | CNNKD-based radar HRRP small sample target identification method |
| WO2022067606A1 (en) * | 2020-09-30 | 2022-04-07 | 中国科学院深圳先进技术研究院 | Method and system for detecting abnormal behavior of pedestrian, and terminal and storage medium |
| CN114693624A (en) * | 2022-03-23 | 2022-07-01 | 腾讯科技(深圳)有限公司 | Image detection method, device and equipment and readable storage medium |
| CN114881994A (en) * | 2022-05-25 | 2022-08-09 | 鞍钢集团北京研究院有限公司 | Product defect detection method, device and storage medium |

- 2022-09-08: CN CN202211098674.0A, CN116071290A, active, Pending


Non-Patent Citations (3)

| Title |
|---|
| WEN SU等: "Soft Regression of Monocular Depth Using Scale-Semantic Exchange Network", 《IEEE ACCESS》, vol. 8, 18 June 2020 (2020-06-18) * |
| 石昊南: "基于弱监督学习的工业半成品缺陷检测方法研究", 《全国优秀硕士学位论文数据集》, 15 March 2025 (2025-03-15) * |
| 程睿: "基于通道和空间注意力的深度多示例算法研究", 《全国优秀硕士学位论文数据集》, 15 July 2022 (2022-07-15) * |


Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |