CN115131648A - On-line handwritten Chinese text line recognition method based on real-time updating of local feature map - Google Patents

On-line handwritten Chinese text line recognition method based on real-time updating of local feature map

- Publication number

- CN115131648A (application number CN202210823282.XA)

- Authority

- CN

- China

- Prior art keywords

- real

- time

- image

- stroke

- feature map

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V30/00—Character recognition; Recognising digital ink; Document-oriented image-based pattern recognition

- G06V30/10—Character recognition

- G06V30/18—Extraction of features or characteristics of the image

- G06V30/1801—Detecting partial patterns, e.g. edges or contours, or configurations, e.g. loops, corners, strokes or intersections

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

- G06V10/44—Local feature extraction by analysis of parts of the pattern, e.g. by detecting edges, contours, loops, corners, strokes or intersections; Connectivity analysis, e.g. of connected components

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/764—Arrangements for image or video recognition or understanding using pattern recognition or machine learning using classification, e.g. of video objects

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/70—Arrangements for image or video recognition or understanding using pattern recognition or machine learning

- G06V10/82—Arrangements for image or video recognition or understanding using pattern recognition or machine learning using neural networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V30/00—Character recognition; Recognising digital ink; Document-oriented image-based pattern recognition

- G06V30/10—Character recognition

- G06V30/19—Recognition using electronic means

- G06V30/191—Design or setup of recognition systems or techniques; Extraction of features in feature space; Clustering techniques; Blind source separation

- G06V30/19173—Classification techniques

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V30/00—Character recognition; Recognising digital ink; Document-oriented image-based pattern recognition

- G06V30/10—Character recognition

- G06V30/32—Digital ink

- G06V30/333—Preprocessing; Feature extraction

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V30/00—Character recognition; Recognising digital ink; Document-oriented image-based pattern recognition

- G06V30/10—Character recognition

- G06V30/32—Digital ink

- G06V30/36—Matching; Classification

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Multimedia (AREA)

- Evolutionary Computation (AREA)

- Artificial Intelligence (AREA)

- General Health & Medical Sciences (AREA)

- Health & Medical Sciences (AREA)

- Computing Systems (AREA)

- Software Systems (AREA)

- Databases & Information Systems (AREA)

- Medical Informatics (AREA)

- Biomedical Technology (AREA)

- Mathematical Physics (AREA)

- General Engineering & Computer Science (AREA)

- Molecular Biology (AREA)

- Data Mining & Analysis (AREA)

- Computational Linguistics (AREA)

- Biophysics (AREA)

- Life Sciences & Earth Sciences (AREA)

- Character Discrimination (AREA)

Abstract

Description

Technical field

The invention relates to the technical field of computer vision and document image analysis, and in particular to an online handwritten Chinese text line recognition method based on real-time updating of local feature maps.

Background

Handwritten text recognition is an important part of optical character recognition (OCR) and has long been a focus of research. Compared with printed document text recognition, online handwritten Chinese text line recognition is more complex: writing styles vary widely and the character set is large. Most recognition methods in current use are image-based encoder-decoder methods, which have achieved some success. For Chinese text, however, and for long text lines in particular, the generated image is so large that smooth real-time recognition of online handwriting is hard to achieve. If recognition is triggered only after the whole sentence has been written, the user must perform a click and then wait for a short time, which users find hard to tolerate. To avoid stuttering in practice, applications often restrict recognition to single characters or short text, which limits the practicality of the system.

Most current real-time recognition methods perform text line recognition with trajectory-based over-segmentation, mainly using LSTM. Because online handwritten Chinese text contains many long text lines, the trajectory sequences are very long, and with LSTM it is difficult to over-segment accurately or to reach good recognition accuracy. With the development of deep learning, image-based segmentation-free recognition methods have in recent years come to dominate text line recognition models and have certain advantages for long text lines. However, to retain complete information, online handwritten text line recognition must generate a large text line image. Running an image-based text line recognition method in real time then takes a long time, which degrades the writing experience and hinders the deployment of real-time recognition in practical settings such as embedded devices.

Summary of the invention

The invention proposes an online handwritten Chinese text line recognition method based on real-time updating of local feature maps. The method is substantially faster, depends little on image size, is scalable and robust, and can be used in a variety of resource-constrained embedded devices, giving it high practical value.

The invention adopts the following technical solution.

An online handwritten Chinese text line recognition method based on real-time updating of local feature maps, used for real-time recognition of handwritten text, comprises the following steps:

Step S1: initialize the real-time recognition model.

Step S2: when stroke input starts, take the pen tip touching the writing surface of the handwriting device as the start marker.

Step S3: collect the trajectory of the online handwritten Chinese text line, record the coordinate point sequence of each stroke, and take pen-up as the end marker of a stroke.

Step S4: crop the local image corresponding to the newly input stroke and preprocess it.

Step S5: compute the CNN features of the local image corresponding to the newly input stroke and write them over the corresponding region of the previous feature map, so that the local features of the new stroke are updated in real time.

Step S6: decode with CTC combined with an n-gram language model to update the recognition result, achieving real-time recognition of online handwritten Chinese text lines.

In step S1, the real-time recognition model comprises a deep convolutional neural network encoder, an RNN decoder, and a CTC and language-model transcription layer. At initialization, a text line image of preset height and width with all pixel values zero is created and fed into the handwritten text line recognition model, yielding the global output feature map of every convolutional and pooling layer in the model. These maps are used for the subsequent real-time feature updates.

The height and width of the text line image match the actual handwriting scenario. Text is written from left to right, out-of-order (delayed) strokes are allowed, and the size of the handwritten characters matches the size of the text line image.

In step S1, the real-time recognition model extracts image features with a network based on CRNN, in which all pooling layers are average pooling and every convolutional layer except the first two is followed by a batch normalization layer.

The real-time recognition model extracts features with 7 convolutional layers and 4 pooling layers. The number of convolution kernels in a layer equals its number of output feature maps; for the 7 convolutional layers these are {64, 128, 256, 256, 256, 512, 512}. Finally, a global average pooling layer pools the feature map to a height of 1 while leaving the width unchanged.
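The layer description above can be written down as a small configuration table. The sketch below only records what the text states (channel counts, batch normalization after every convolution except the first two, four average-pooling layers). The names `ConvSpec`, `CHANNELS`, `CONV_LAYERS` and `NUM_POOL_LAYERS` are hypothetical, and the 3×3 kernel size for every layer is an assumption carried over from the kernel size assumed in step S4. The positions and strides of the pooling layers are not given in the text, so they are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConvSpec:
    out_channels: int  # number of kernels = number of output feature maps
    kernel_size: int   # assumed 3x3 for every layer (see step S4)
    batch_norm: bool   # True when a batch normalization layer follows


# Channel counts of the 7 convolutional layers, as listed in the text.
CHANNELS = [64, 128, 256, 256, 256, 512, 512]

# Every convolutional layer except the first two is followed by batch norm.
CONV_LAYERS = [
    ConvSpec(out_channels=c, kernel_size=3, batch_norm=(i >= 2))
    for i, c in enumerate(CHANNELS)
]

# Four average-pooling layers; their positions and strides are not specified.
NUM_POOL_LAYERS = 4
```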

The RNN part of the real-time recognition model is a two-layer stacked bidirectional LSTM with 256 hidden units. Final decoding uses CTC with beam search, which brings in the language model.

In step S3, the trajectory of the online handwritten Chinese text line is collected, and the coordinate point sequence of each stroke is recorded as (x1, y1), (x2, y2), …, (xn, yn), with pen-up as the end marker of a stroke.
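Steps S2 and S3 amount to a small event handler: pen-down opens a stroke, each sampled point is appended, and pen-up closes it. A minimal sketch follows; the class `StrokeRecorder`, its method names, and the helper `x_bounds` are hypothetical and not taken from the patent. `x_bounds` returns the left and right x-axis boundaries that step S4 denotes L and R.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Point = Tuple[int, int]


@dataclass
class StrokeRecorder:
    """Collects strokes as coordinate sequences (x1, y1), ..., (xn, yn)."""
    strokes: List[List[Point]] = field(default_factory=list)
    _current: Optional[List[Point]] = None

    def pen_down(self, x: int, y: int) -> None:
        # Pen tip touches the writing surface: a new stroke starts.
        self._current = [(x, y)]

    def pen_move(self, x: int, y: int) -> None:
        # Points are only recorded while a stroke is open.
        if self._current is not None:
            self._current.append((x, y))

    def pen_up(self) -> List[Point]:
        # Pen-up marks the end of the stroke; return the finished stroke.
        stroke, self._current = self._current, None
        if stroke:
            self.strokes.append(stroke)
        return stroke or []


def x_bounds(stroke: List[Point]) -> Tuple[int, int]:
    """Left and right x-axis boundaries (L, R) of a stroke."""
    xs = [x for x, _ in stroke]
    return min(xs), max(xs)
```

Each call to `pen_up` would then trigger steps S4 to S6 for the stroke it returns.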

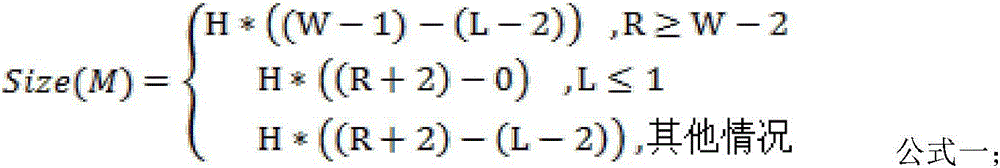

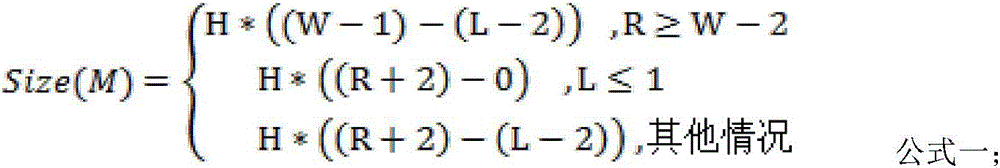

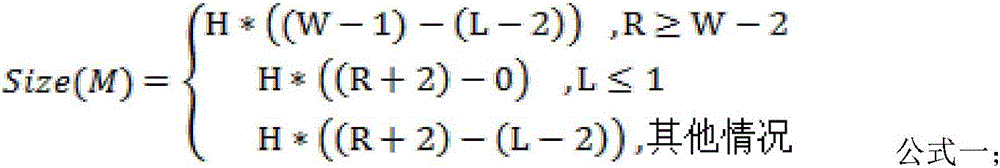

In step S4, the local image corresponding to the newly input stroke is cropped and preprocessed. Specifically, after the user inputs a new stroke, the left and right x-axis boundaries of the stroke are determined and denoted L and R. With a 3×3 convolution kernel, 2 columns of pixels must be added on each side of the boundary, so the size of the cropped local image M is:

where H and W are the height and width of the image. The first two rows on the right-hand side of Formula 1 are the first and second cases, in which the stroke boundary lies at the left or right edge of the original image. The third row is the third case, in which the local image cropped after a stroke is written is the rectangular region obtained by extending the left and right boundaries by 2 columns each.

In the first case the stroke is at the left edge of the original image (L ≤ 1): the right boundary is extended by 2 columns and the left boundary is extended to the first column on the left, so the width is ((W-1)-(L-2)).

In the second case the stroke is at the right edge of the original image (R ≥ W-2): the left boundary is extended by 2 columns and the right boundary is extended to the last column on the right, so the width is ((R+2)-0).
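The cropping rule can be sketched as a clamped column range: extend the stroke boundaries by 2 columns on each side and stop at the image edges. This follows the boundary extension described in words above and is a sketch under assumptions, not the patent's formula: columns are taken to be 0-based and inclusive, the image is a list of rows, and the function names `crop_columns` and `crop` are hypothetical. Clamping takes effect exactly when L ≤ 1 or R ≥ W-2, which matches the two edge conditions in the text.

```python
def crop_columns(L, R, W, margin=2):
    """Inclusive (left, right) column indices of the local image.

    L, R: left and right x boundaries of the new stroke (0-based, inclusive).
    W: width of the full text line image.
    """
    if not 0 <= L <= R <= W - 1:
        raise ValueError("expected 0 <= L <= R <= W - 1")
    left = max(0, L - margin)        # clamps when L <= 1
    right = min(W - 1, R + margin)   # clamps when R >= W - 2
    return left, right


def crop(image, L, R, margin=2):
    """Cut the full-height local image M out of a row-major image."""
    W = len(image[0])
    left, right = crop_columns(L, R, W, margin)
    return [row[left:right + 1] for row in image]
```

The cropped image always keeps the full height H; only the column range depends on the stroke.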

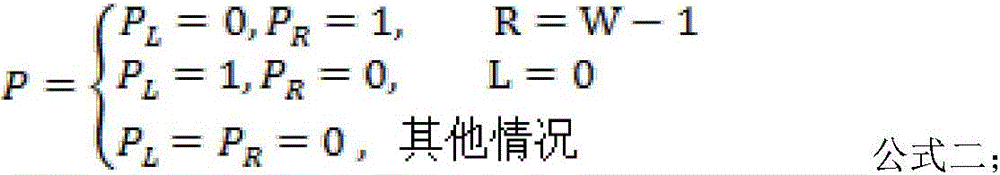

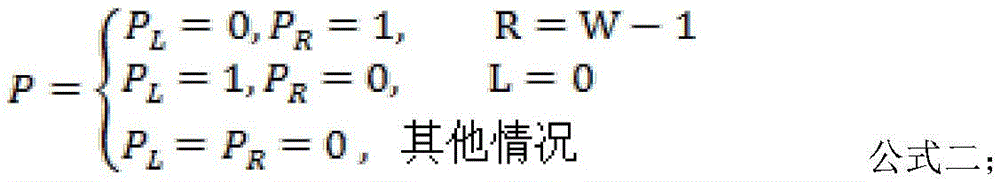

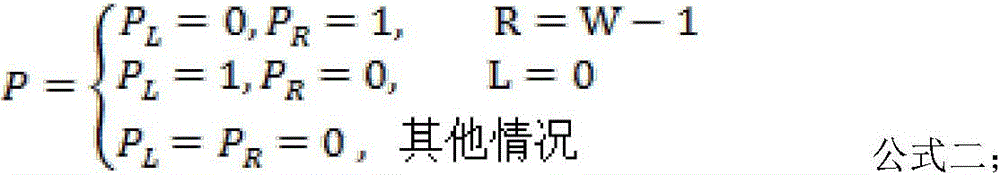

After cropping, the local image M is padded as follows: 1 row of pixels is padded on each of the top and bottom of M to reproduce the padding required when convolving the original image; if M lies at the left or right side of the original image, it is padded according to the case, expressed by the formula:

In the formula, PL and PR are the padding on the left and right sides. If the local image is not at the left or right side of the original image, its left and right sides have already been extended by 2 columns and no padding is needed, i.e. PL = PR = 0. If the local image is at the left or right side of the original image, for example the left side (L = 0), one column of all-zero pixels must be padded on the left to reproduce the padding of the original image.
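To illustrate why the crop margin and the edge padding suffice for one 3×3 layer, the sketch below recomputes only the affected columns of a cached single-channel feature map and writes them back, which is the replacement idea of step S5 restricted to a single layer. It is a pure-Python illustration, not the patent's implementation: the function names, the single channel, the missing bias term, and the 0-based inclusive column indices are all assumptions. The rule PL = 1 when L ≤ 1 and PR = 1 when R ≥ W-2 is inferred from the edge conditions stated in the text.

```python
def conv3x3_same(img, k):
    """Full 3x3 cross-correlation with 1-pixel zero padding on all sides."""
    H, W = len(img), len(img[0])
    out = [[0.0] * W for _ in range(H)]
    for y in range(H):
        for x in range(W):
            s = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < H and 0 <= xx < W:
                        s += k[dy + 1][dx + 1] * img[yy][xx]
            out[y][x] = s
    return out


def side_padding(L, R, W):
    """(PL, PR): one zero column only where the crop touches an image edge."""
    return (1 if L <= 1 else 0), (1 if R >= W - 2 else 0)


def local_update(feat, img, k, L, R):
    """Recompute the columns of feat affected by a change in img[:, L..R].

    feat is the cached output of conv3x3_same for the previous image and is
    patched in place. Returns the inclusive range of columns written.
    """
    H, W = len(img), len(img[0])
    left, right = max(0, L - 2), min(W - 1, R + 2)   # crop with 2-column margin
    PL, PR = side_padding(L, R, W)
    width = (right - left + 1) + PL + PR
    # 1 zero row on top and bottom, PL / PR zero columns on the sides.
    padded = [[0.0] * width]
    for row in img:
        padded.append([0.0] * PL + row[left:right + 1] + [0.0] * PR)
    padded.append([0.0] * width)
    out_w = width - 2              # valid 3x3 convolution shrinks width by 2
    first_col = left - PL + 1      # feat column receiving patch output column 0
    for y in range(H):
        for j in range(out_w):
            s = 0.0
            for dy in range(3):
                for dx in range(3):
                    s += k[dy][dx] * padded[y + dy][j + dx]
            feat[y][first_col + j] = s
    return first_col, first_col + out_w - 1
```

After a stroke changes columns L to R of `img`, calling `local_update(feat, img, k, L, R)` should leave `feat` equal to `conv3x3_same(img, k)` while touching only columns L-1 to R+1 (clipped to the image).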

In step S5, only the CNN features of the local image corresponding to the newly input stroke are computed, and the new features are written over the previous feature map, so that the local features of the new stroke are updated in real time. The specific method is:

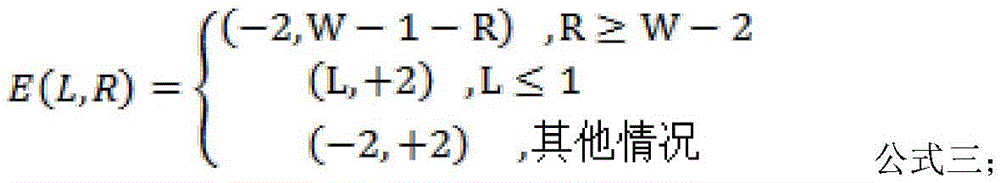

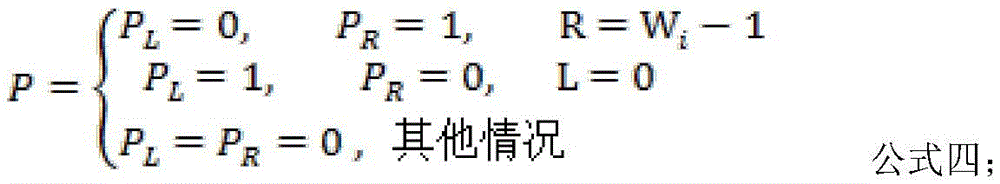

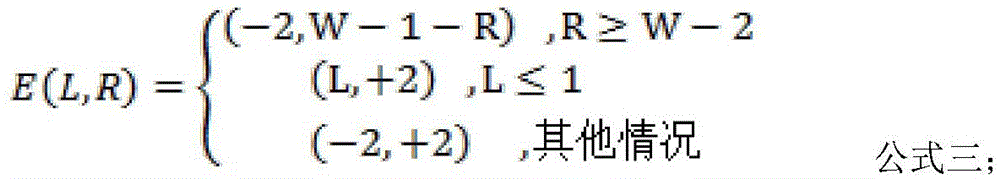

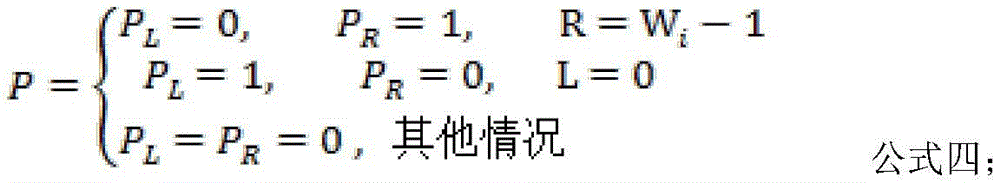

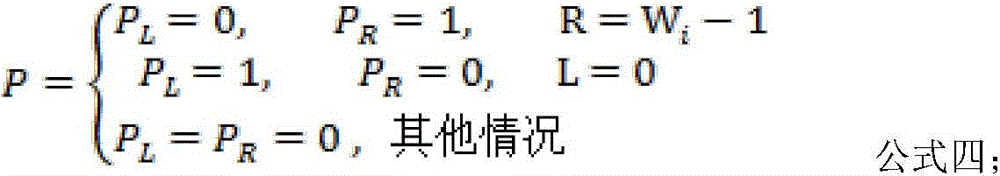

A local convolution is applied to the cropped local image, and the network iteratively convolves, pools, and updates the feature map of every layer. No padding is used during pooling; the pixels to be extended and the padding during convolution are, respectively:

Wi denotes the width of the feature map of layer i, and E(L, R) denotes the pixels to be extended. When E(L, R) is (-2, +2), i.e. 2 columns are added on each of the left and right sides, the corresponding pixel values of the original feature map are copied onto the local feature map to be updated, and the iterative convolution and feature-map update continue with the next layer.

When extending the feature-map pixels, divisibility by the pooling stride must be considered. Specifically, when the pooling stride is 2 and the current L is not divisible by 2, L%2 columns must be added on the left. Generalizing to stride S, with the left boundary at column L, L%S columns must be added on the left; likewise, for the right side of the local feature, with the right boundary at column R, S-L%S columns must be added on the right.
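The stride alignment can be sketched in one dimension. The left boundary is moved left by L % S columns as stated above. For the right boundary this sketch assumes 0-based inclusive indices and extends R to the last column of its pooling window, i.e. by S - 1 - R % S columns; that expression is an indexing assumption made here and is not the one given in the text. The function names are hypothetical, pooling is non-overlapping (window width equal to stride S), and the width W is assumed to be divisible by S.

```python
def align_to_pool(L, R, S, W):
    """Expand the inclusive column range [L, R] to whole pooling windows."""
    if W % S != 0:
        raise ValueError("sketch assumes W divisible by S")
    if not 0 <= L <= R < W:
        raise ValueError("expected 0 <= L <= R < W")
    left = L - L % S               # extend left by L % S columns
    right = R + (S - 1 - R % S)    # extend right to the end of R's window
    return left, right


def pooled_range(L, R, S, W):
    """Inclusive range of pooled output columns that must be recomputed."""
    left, right = align_to_pool(L, R, S, W)
    return left // S, right // S


def avg_pool_row(row, S):
    """Non-overlapping 1-D average pooling with window and stride S."""
    return [sum(row[i:i + S]) / S for i in range(0, len(row), S)]
```

For example, with S = 2 a changed range of columns 5 to 8 is expanded to columns 4 to 9, so that pooled columns 2 to 4 are recomputed from complete windows.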

In step S6, decoding with CTC combined with an n-gram language model updates the recognition result, achieving real-time recognition of online handwritten Chinese text lines.
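As a reference point for the CTC part of step S6, the sketch below shows CTC best-path (greedy) decoding: take the highest-scoring label in each frame, merge consecutive repeats, then drop blanks. This is a deliberately simpler technique than the beam search with an n-gram language model that the method uses, and it is shown only to make the CTC collapsing rule concrete. The function names and the choice of index 0 for the blank label are assumptions.

```python
def ctc_collapse(path, blank=0):
    """Merge consecutive repeated labels, then remove blanks."""
    out = []
    prev = None
    for label in path:
        if label != prev and label != blank:
            out.append(label)
        prev = label
    return out


def ctc_greedy_decode(frame_scores, blank=0):
    """Best-path decoding: argmax per frame followed by CTC collapsing.

    frame_scores: one list of per-label scores for each time step
    (each column of the height-1 feature sequence).
    """
    path = [max(range(len(f)), key=f.__getitem__) for f in frame_scores]
    return ctc_collapse(path, blank)
```

A blank between two identical labels keeps them as two separate characters, which is how CTC represents doubled characters.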

The invention proposes a real-time recognition method for online handwritten Chinese text lines based on real-time updating of local feature maps. To recognize online handwritten Chinese text lines, CRNN is modified to extract and decode image features, and real-time recognition of online handwritten Chinese text lines is achieved at inference time. The method achieves excellent results in two different types of handwriting scenarios with a substantial speed-up. It depends little on image size, is scalable and robust, and can be used in a variety of resource-constrained embedded devices, giving it high practical value.

The recognition speed of the invention is substantially improved, and the invention depends little on image size, is scalable and robust, and can be used in a variety of resource-constrained embedded devices, giving it high practical value.

Compared with the prior art, the invention also has the following beneficial effects:

(1) It achieves online handwritten Chinese text line recognition.

(2) It achieves real-time recognition of online handwritten Chinese text lines.

(3) The proposed method is highly extensible and can be applied to other network architectures and even to additional features such as path signature (iterated-integral) features.

Description of drawings

The invention is described in further detail below with reference to the accompanying drawings and specific embodiments:

Figure 1 is a flowchart of the method of the invention;

Figure 2 is a schematic diagram of the overall network structure for online handwritten text line recognition;

Figure 3 is a schematic diagram of the local update region;

Figure 4 is a schematic diagram of an implementation example of the method.

Detailed description

As shown in the figures, the online handwritten Chinese text line recognition method based on real-time updating of local feature maps, used for real-time recognition of handwritten text, comprises the following steps:

Step S1: initialize the real-time recognition model.

Step S2: when stroke input starts, take the pen tip touching the writing surface of the handwriting device as the start marker.

Step S3: collect the trajectory of the online handwritten Chinese text line, record the coordinate point sequence of each stroke, and take pen-up as the end marker of a stroke.

Step S4: crop the local image corresponding to the newly input stroke and preprocess it.

Step S5: compute the CNN features of the local image corresponding to the newly input stroke and write them over the corresponding region of the previous feature map, so that the local features of the new stroke are updated in real time.

Step S6: decode with CTC combined with an n-gram language model to update the recognition result, achieving real-time recognition of online handwritten Chinese text lines.

In step S1, the real-time recognition model comprises a deep convolutional neural network encoder, an RNN decoder, and a CTC and language-model transcription layer. At initialization, a text line image of preset height and width with all pixel values zero is created and fed into the handwritten text line recognition model, yielding the global output feature map of every convolutional and pooling layer in the model. These maps are used for the subsequent real-time feature updates.

The height and width of the text line image match the actual handwriting scenario. Text is written from left to right, out-of-order (delayed) strokes are allowed, and the size of the handwritten characters matches the size of the text line image.

In step S1, the real-time recognition model extracts image features with a network based on CRNN, in which all pooling layers are average pooling and every convolutional layer except the first two is followed by a batch normalization layer.

The real-time recognition model extracts features with 7 convolutional layers and 4 pooling layers. The number of convolution kernels in a layer equals its number of output feature maps; for the 7 convolutional layers these are {64, 128, 256, 256, 256, 512, 512}. Finally, a global average pooling layer pools the feature map to a height of 1 while leaving the width unchanged.

The RNN part of the real-time recognition model is a two-layer stacked bidirectional LSTM with 256 hidden units. Final decoding uses CTC with beam search, which brings in the language model.

In step S3, the trajectory of the online handwritten Chinese text line is collected, and the coordinate point sequence of each stroke is recorded as (x1, y1), (x2, y2), …, (xn, yn), with pen-up as the end marker of a stroke.

In step S4, the local image corresponding to the newly input stroke is cropped and preprocessed. Specifically, after the user inputs a new stroke, the left and right x-axis boundaries of the stroke are determined and denoted L and R. With a 3×3 convolution kernel, 2 columns of pixels must be added on each side of the boundary, so the size of the cropped local image M is:

where H and W are the height and width of the image. The first two rows on the right-hand side of Formula 1 are the first and second cases, in which the stroke boundary lies at the left or right edge of the original image. The third row is the third case, in which the local image cropped after a stroke is written is the rectangular region obtained by extending the left and right boundaries by 2 columns each.

In the first case the stroke is at the left edge of the original image (L ≤ 1): the right boundary is extended by 2 columns and the left boundary is extended to the first column on the left, so the width is ((W-1)-(L-2)).

In the second case the stroke is at the right edge of the original image (R ≥ W-2): the left boundary is extended by 2 columns and the right boundary is extended to the last column on the right, so the width is ((R+2)-0).

After cropping, the local image M is padded as follows: 1 row of pixels is padded on each of the top and bottom of M to reproduce the padding required when convolving the original image; if M lies at the left or right side of the original image, it is padded according to the case, expressed by the formula:

In the formula, PL and PR are the padding on the left and right sides. If the local image is not at the left or right side of the original image, its left and right sides have already been extended by 2 columns and no padding is needed, i.e. PL = PR = 0. If the local image is at the left or right side of the original image, for example the left side (L = 0), one column of all-zero pixels must be padded on the left to reproduce the padding of the original image.

In step S5, only the CNN features of the local image corresponding to the newly input stroke are computed, and the new features are written over the previous feature map, so that the local features of the new stroke are updated in real time. The specific method is:

A local convolution is applied to the cropped local image, and the network iteratively convolves, pools, and updates the feature map of every layer. No padding is used during pooling; the pixels to be extended and the padding during convolution are, respectively:

Wi denotes the width of the feature map of layer i, and E(L, R) denotes the pixels to be extended. When E(L, R) is (-2, +2), i.e. 2 columns are added on each of the left and right sides, the corresponding pixel values of the original feature map are copied onto the local feature map to be updated, and the iterative convolution and feature-map update continue with the next layer.

When extending the feature-map pixels, divisibility by the pooling stride must be considered. Specifically, when the pooling stride is 2 and the current L is not divisible by 2, L%2 columns must be added on the left. Generalizing to stride S, with the left boundary at column L, L%S columns must be added on the left; likewise, for the right side of the local feature, with the right boundary at column R, S-L%S columns must be added on the right.

In step S6, decoding with CTC combined with an n-gram language model updates the recognition result, achieving real-time recognition of online handwritten Chinese text lines.

In this example, step S1 initializes an all-black text line image with a height of 128 and a width of 2400.

In this example, CTC (connectionist temporal classification) is the text recognition decoding algorithm, and N-gram is a language model commonly used in large-vocabulary continuous speech recognition; for Chinese it may be called a Chinese language model (CLM).
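To make the role of the n-gram model concrete, the sketch below trains a character bigram model with add-one smoothing and uses it to rescore candidate transcriptions by adding a weighted language-model log-probability to each candidate's CTC log-probability. Everything here is an illustrative assumption rather than the patent's decoder: the class `BigramLM`, the function `rescore`, the weight `alpha`, the toy corpus, and the choice of rescoring a finished candidate list instead of integrating the model into beam search.

```python
import math
from collections import Counter


class BigramLM:
    """Character bigram model with add-one (Laplace) smoothing."""

    def __init__(self, corpus):
        self.context = Counter()   # how often each character appears as context
        self.bigrams = Counter()   # counts of (previous, next) character pairs
        self.vocab = set()
        for sentence in corpus:
            chars = ["<s>"] + list(sentence)
            self.vocab.update(chars)
            for a, b in zip(chars, chars[1:]):
                self.context[a] += 1
                self.bigrams[(a, b)] += 1

    def log_prob(self, text):
        chars = ["<s>"] + list(text)
        v = len(self.vocab)
        return sum(
            math.log((self.bigrams[(a, b)] + 1) / (self.context[a] + v))
            for a, b in zip(chars, chars[1:])
        )


def rescore(candidates, lm, alpha=0.5):
    """Pick the candidate maximizing ctc_log_prob + alpha * lm_log_prob.

    candidates: list of (text, ctc_log_prob) pairs, e.g. from beam search.
    """
    return max(candidates, key=lambda c: c[1] + alpha * lm.log_prob(c[0]))[0]
```

With a model trained on sentences containing 今天, a visually similar but implausible candidate such as 今夭 is penalized by the language model even when its CTC score is slightly higher.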

Claims (9)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202210823282.XA CN115131648A (en) | 2022-07-13 | 2022-07-13 | On-line handwritten Chinese text line recognition method based on real-time updating of local feature map |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202210823282.XA CN115131648A (en) | 2022-07-13 | 2022-07-13 | On-line handwritten Chinese text line recognition method based on real-time updating of local feature map |

Publications (1)

| Publication Number | Publication Date |
|---|---|
| CN115131648A (en) | 2022-09-30 |

Family

ID=83383494

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202210823282.XA (Pending) CN115131648A (en) | 2022-07-13 | 2022-07-13 | On-line handwritten Chinese text line recognition method based on real-time updating of local feature map |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN115131648A (en) |

Citations (6)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US20140363083A1 (en) * | 2013-06-09 | 2014-12-11 | Apple Inc. | Managing real-time handwriting recognition |
| CN110298343A (en) * | 2019-07-02 | 2019-10-01 | 哈尔滨理工大学 | A kind of hand-written blackboard writing on the blackboard recognition methods |
| CN110619325A (en) * | 2018-06-20 | 2019-12-27 | 北京搜狗科技发展有限公司 | Text recognition method and device |
| CN113420760A (en) * | 2021-06-22 | 2021-09-21 | 内蒙古师范大学 | Handwritten Mongolian detection and identification method based on segmentation and deformation LSTM |
| JP2021179848A (en) * | 2020-05-14 | 2021-11-18 | キヤノン株式会社 | Image processing system, image processing method, and program |
| CN114612911A (en) * | 2022-01-26 | 2022-06-10 | 哈尔滨工业大学(深圳) | Stroke-level handwritten character sequence recognition method, device, terminal and storage medium |

-

2022

- 2022-07-13 CN CN202210823282.XA patent/CN115131648A/en active Pending

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |