CN114926468A - Ultrasonic image quality control method, ultrasonic device, and storage medium - Google Patents

- Publication number

- CN114926468A; CN202210868362.7A

- Authority

- CN

- China

- Prior art keywords

- image

- ultrasonic image

- processed

- reconstructed

- qualified

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

- G06T7/0012—Biomedical image inspection

- G06T3/4053—Scaling of whole images or parts thereof, e.g. expanding or contracting based on super-resolution, i.e. the output image resolution being higher than the sensor resolution

- G06T2207/10132—Ultrasound image

- G06T2207/30168—Image quality inspection

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Theoretical Computer Science (AREA)

- Health & Medical Sciences (AREA)

- General Health & Medical Sciences (AREA)

- Medical Informatics (AREA)

- Nuclear Medicine, Radiotherapy & Molecular Imaging (AREA)

- Radiology & Medical Imaging (AREA)

- Quality & Reliability (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Ultra Sonic Diagnosis Equipment (AREA)

Abstract

The invention discloses an ultrasonic image quality control method, ultrasonic equipment and a storage medium. The method comprises the following steps: acquiring an ultrasonic image to be processed; performing quality detection on the ultrasonic image to be processed, and judging whether the ultrasonic image to be processed is a qualified ultrasonic image; if the ultrasonic image to be processed is an unqualified ultrasonic image, performing super-resolution processing on the ultrasonic image to be processed to obtain a reconstructed ultrasonic image; carrying out consistency judgment on the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image; and if the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image have consistency, determining that the reconstructed ultrasonic image is the qualified ultrasonic image with improved quality. The method can effectively guarantee the image quality of the reconstructed ultrasonic image and is beneficial to improving the accuracy of the auxiliary diagnosis result by means of the ultrasonic image.

Description

Technical Field

The present invention relates to the field of ultrasound imaging technologies, and in particular, to an ultrasound image quality control method, an ultrasound device, and a storage medium.

Background

Quality control of ultrasound images is one of the main tasks of ultrasound departments in medical institutions. At present it is carried out mainly by spot checks, and the quality evaluation of ultrasound images depends mainly on the personal experience of doctors, which easily leads to human error in the image acquisition stage or the diagnosis and treatment stage. Computer technology is therefore urgently needed to perform quality control on acquired ultrasound images, so as to improve the accuracy of auxiliary diagnosis results obtained by means of ultrasound images.

At present, there are two main ways of implementing quality control of ultrasound images by means of computer technology. In the first, artificial intelligence technology is used to analyze key-part parameters in a standard section of the ultrasound image to perform image quality control. In the second, an ultrasound quality control management platform is established using computer technology to control the quality of ultrasound images. Neither method can achieve large-scale, automatic quality control: the first is limited because it cannot effectively score the definition of a standard section of the ultrasound image, and the second does not provide a reliable quality control screening scheme from a clinical perspective. As a result, neither method can guarantee the image quality of the ultrasound image, and ultrasound images of low quality affect the accuracy of auxiliary diagnosis results.

Disclosure of Invention

The embodiment of the invention provides an ultrasonic image quality control method, ultrasonic equipment and a storage medium, which are used for solving the problem that the quality control of the existing ultrasonic image cannot guarantee the image quality of the ultrasonic image.

An ultrasound image quality control method, comprising:

acquiring an ultrasonic image to be processed;

performing quality detection on the ultrasonic image to be processed, and judging whether the ultrasonic image to be processed is a qualified ultrasonic image;

if the ultrasonic image to be processed is an unqualified ultrasonic image, performing super-resolution processing on the ultrasonic image to be processed to obtain a reconstructed ultrasonic image;

carrying out consistency judgment on the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image;

and if the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image have consistency, determining that the reconstructed ultrasonic image is the qualified ultrasonic image with improved quality.

An ultrasound image quality control apparatus comprising:

the ultrasonic image acquisition module is used for acquiring an ultrasonic image to be processed;

the quality detection module is used for carrying out quality detection on the ultrasonic image to be processed and judging whether the ultrasonic image to be processed is a qualified ultrasonic image;

the super-resolution processing module is used for performing super-resolution processing on the ultrasonic image to be processed to obtain a reconstructed ultrasonic image if the ultrasonic image to be processed is an unqualified ultrasonic image;

the consistency judgment module is used for carrying out consistency judgment on the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image;

and the qualified ultrasonic image acquisition module is used for determining that the reconstructed ultrasonic image is the qualified ultrasonic image with improved quality if the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image are consistent.

A computer device comprising a memory, a processor and a computer program stored in the memory and executable on the processor, the processor implementing the ultrasound image quality control method when executing the computer program.

A computer-readable storage medium, in which a computer program is stored which, when being executed by a processor, carries out the above-mentioned ultrasound image quality control method.

According to the ultrasonic image quality control method, the ultrasonic equipment and the storage medium, the quality of the ultrasonic image to be processed is detected, when the ultrasonic image to be processed is an unqualified ultrasonic image, the ultrasonic image to be processed is subjected to super-resolution processing, and a reconstructed ultrasonic image is obtained, so that the image quality of the reconstructed ultrasonic image is improved; and then, consistency judgment is carried out on the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image, and when the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image are consistent, the reconstructed ultrasonic image is determined to be the qualified ultrasonic image with improved quality, so that the image quality of the reconstructed ultrasonic image can be effectively guaranteed, and the accuracy of the auxiliary diagnosis result by means of the ultrasonic image can be improved.

Drawings

In order to illustrate the technical solutions of the embodiments of the present invention more clearly, the drawings used in the description of the embodiments are briefly introduced below. The drawings described below show only some embodiments of the present invention, and those skilled in the art can obtain other drawings from them without inventive labor.

FIG. 1 is a schematic view of an ultrasound apparatus in an embodiment of the present invention;

FIG. 2 is a flow chart of the method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 3 is another flow chart of the method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 4 is another flow chart of the method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 5 is another flow chart of the method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 6 is another flow chart of a method for controlling quality of an ultrasound image according to an embodiment of the present invention;

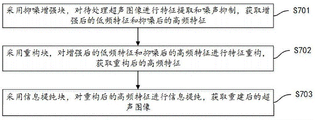

FIG. 7 is another flow chart of a method for controlling quality of an ultrasound image according to an embodiment of the present invention;

FIG. 8 is another flow chart of a method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 9 is another flow chart of a method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 10 is another flow chart of a method for controlling the quality of an ultrasound image according to an embodiment of the present invention;

FIG. 11 is a schematic diagram of an ultrasound image quality control apparatus according to an embodiment of the present invention;

FIG. 12 is an exemplary view of an ultrasound image data set, FIG. 12a is a blurred ultrasound image, FIG. 12b is a noisy ultrasound image, and FIG. 12c is a normal ultrasound image;

FIG. 13 is a comparison of the NS-LESRCNN model and the LESRCNN model in accordance with an embodiment of the present invention;

FIG. 14 is a schematic diagram of the NS-IEEB module of the NS-LESRCNN model shown in FIG. 13;

FIG. 15 is a comparison graph of super-resolution model test results formed by testing the LESRCNN model and the NS-LESRCNN model, wherein the test indexes in the test results are PSNR/SSIM.

Detailed Description

The technical solutions in the embodiments of the present invention will be clearly and completely described below with reference to the drawings in the embodiments of the present invention, and it is obvious that the described embodiments are some, not all, embodiments of the present invention. All other embodiments, which can be derived by a person skilled in the art from the embodiments given herein without making any creative effort, shall fall within the protection scope of the present invention.

The method for controlling the quality of an ultrasound image provided by the embodiment of the invention can be applied to ultrasound equipment, and as shown in fig. 1, the ultrasound equipment comprises a main controller, an ultrasound probe connected with the main controller, a beam forming processor, an image processor and a display screen. The main controller is a controller of the ultrasonic equipment, and is connected with other functional modules in the ultrasonic equipment, including but not limited to an ultrasonic probe, a beam forming processor, an image processor, a display screen and the like, and is used for controlling the work of each functional module.

An ultrasound probe is a device that transmits and receives ultrasonic waves. In this example, in order to ensure that ultrasound images at different angles have a larger transverse scanning coverage, that is, a larger overlapping range, the ultrasound probe generally comprises a plurality of strip-shaped piezoelectric transducers of the same size arranged at equal intervals (each single piezoelectric transducer is called an array element); alternatively, a plurality of piezoelectric transducers are arranged in a two-dimensional array, that is, the array elements form a two-dimensional matrix. A piezoelectric transducer in the ultrasound probe converts the voltage pulses applied to it into mechanical vibration, so that ultrasonic waves are emitted outward. As the ultrasonic waves propagate in media such as human tissue, they generate echo analog signals such as reflected waves and scattered waves. Each piezoelectric transducer converts the echo analog signals into echo electric signals, which are amplified, converted into echo digital signals by analog-to-digital conversion, and then sent to the beam forming processor.

The beam forming processor is connected with the ultrasound probe and is used for receiving the echo digital signals sent by the ultrasound probe, performing beam forming on the echo digital signals of one or more channels to obtain one or more echo synthesis signals, and sending the echo synthesis signals to the image processor.

The image processor is connected with the beam forming processor and is used for receiving the echo synthesis signals sent by the beam forming processor, performing image synthesis, spatial compounding and other image processing operations on the echo synthesis signals, and sending the processed ultrasound image to the display screen so that the display screen displays it. In this example, before sending the ultrasound image to the display screen, the image processor may also perform quality control on the ultrasound image, so that only qualified ultrasound images screened by the quality control are sent to the display screen. This guarantees the image quality of the displayed ultrasound image and, in turn, the accuracy of auxiliary diagnosis results obtained by means of the ultrasound image.

In an embodiment, as shown in fig. 2, an ultrasound image quality control method is provided, which is described by taking an application of the method in an image processor as an example, and includes the following steps:

S201: acquiring an ultrasonic image to be processed;

S202: performing quality detection on the ultrasound image to be processed, and judging whether the ultrasound image to be processed is a qualified ultrasound image;

S203: if the ultrasonic image to be processed is an unqualified ultrasonic image, performing super-resolution processing on the ultrasonic image to be processed to obtain a reconstructed ultrasonic image;

S204: carrying out consistency judgment on the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image;

S205: if the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image have consistency, determining the reconstructed ultrasonic image as the qualified ultrasonic image with improved quality.

The ultrasound image to be processed refers to an ultrasound image which needs to be processed.

As an example, in step S201, the image processor may acquire an ultrasound image to be processed input by the user. The ultrasonic image to be processed is an ultrasonic image which needs quality control. That is, the ultrasound image to be processed is an ultrasound image for which quality evaluation is required to determine whether quality improvement processing is required.

The qualified ultrasonic image is an ultrasonic image with qualified image quality, the concept opposite to the qualified ultrasonic image is an unqualified ultrasonic image, and the unqualified ultrasonic image is an ultrasonic image with unqualified image quality.

As an example, in step S202, after the image processor acquires the ultrasound image to be processed, it may use a preset image quality evaluation algorithm to perform quality detection on the ultrasound image to be processed, and determine from the quality detection result whether the ultrasound image to be processed is a qualified ultrasound image. The image quality evaluation algorithm here refers to a preset algorithm for analyzing image quality. In this example, the image quality evaluation algorithm may be, but is not limited to, a no-reference (NR) image quality evaluation algorithm. A no-reference image quality evaluation algorithm is suited to scenarios in which no reference image is available for comparison, and can compute the image quality of the ultrasound image to be processed directly. When a no-reference image quality evaluation algorithm is used for quality detection of the ultrasound image to be processed, the no-reference evaluation indexes used for image quality detection in the field of natural images can serve as a reference.

The reconstructed ultrasonic image is an ultrasonic image obtained by performing super-resolution processing on the ultrasonic image to be processed.

As an example, in step S203, when the image processor performs quality detection and determines that the ultrasound image to be processed is an unqualified ultrasound image, the image quality of the ultrasound image to be processed does not meet the preset quality standard. In this case, a preset super-resolution model is used to perform super-resolution processing on the ultrasound image to be processed to obtain a reconstructed ultrasound image. In this example, the super-resolution model may be an existing super-resolution model or an independently constructed one, as long as it can perform super-resolution processing so that the reconstructed ultrasound image has a higher resolution and thus improved image quality.

As an example, in step S204, since the ultrasound image has consistency differences besides the image quality, and these consistency differences may affect the accuracy of the auxiliary diagnosis result of the ultrasound image, after the reconstructed ultrasound image is obtained, the image processor needs to perform consistency judgment on the image characteristics of the reconstructed ultrasound image and the preset image characteristics of the qualified ultrasound image, so as to determine whether the reconstructed ultrasound image meets the preset consistency standard.

As an example, in step S205, when the image processor determines that the image characteristics of the reconstructed ultrasound image are consistent with the image characteristics of the qualified ultrasound image, the image style of the reconstructed ultrasound image is consistent with the preset image style of the qualified ultrasound image. The reconstructed ultrasound image is then determined to be a qualified ultrasound image with improved quality and is output to the display screen for display, which guarantees the image quality of the displayed ultrasound image and improves the accuracy of auxiliary diagnosis results obtained by means of the ultrasound image. In other words, the reconstructed ultrasound image has a higher resolution and an image style consistent with that of the qualified ultrasound image, so its image quality can be guaranteed.

In the embodiment, quality detection is performed on the ultrasonic image to be processed, when the ultrasonic image to be processed is an unqualified ultrasonic image, super-resolution processing is performed on the ultrasonic image to be processed, and a reconstructed ultrasonic image is obtained, so that the image quality of the reconstructed ultrasonic image is improved; and then, consistency judgment is carried out on the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image, and when the image characteristics of the reconstructed ultrasonic image and the image characteristics of the qualified ultrasonic image are consistent, the reconstructed ultrasonic image is determined to be the qualified ultrasonic image with improved quality, so that the image quality of the reconstructed ultrasonic image can be effectively guaranteed, and the accuracy of the auxiliary diagnosis result by means of the ultrasonic image can be improved.
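The control flow of steps S201 to S205 can be sketched as follows. This is a minimal illustration, not the patented implementation: the callables `is_qualified`, `super_resolve` and `is_consistent` are hypothetical placeholders for the quality detection, super-resolution model and consistency judgment, and the `"rejected"` outcome for an inconsistent reconstruction is an assumption, since this section does not state what happens in that case.

```python
from dataclasses import dataclass
from typing import Callable, Optional

import numpy as np


@dataclass
class QualityControlResult:
    image: Optional[np.ndarray]  # image to display, or None if nothing passed
    status: str                  # "qualified", "improved" or "rejected"


def quality_control(
    image: np.ndarray,
    is_qualified: Callable[[np.ndarray], bool],       # hypothetical S202 check
    super_resolve: Callable[[np.ndarray], np.ndarray],  # hypothetical S203 model
    is_consistent: Callable[[np.ndarray], bool],      # hypothetical S204 check
) -> QualityControlResult:
    # S202: quality detection on the image to be processed.
    if is_qualified(image):
        return QualityControlResult(image, "qualified")
    # S203: the image is unqualified, so reconstruct it by super-resolution.
    reconstructed = super_resolve(image)
    # S204/S205: accept the reconstruction only if its features are consistent
    # with those of qualified images.
    if is_consistent(reconstructed):
        return QualityControlResult(reconstructed, "improved")
    # Assumption: an inconsistent reconstruction is simply not displayed.
    return QualityControlResult(None, "rejected")
```

Passing the three stages in as callables keeps the sketch independent of any particular quality metric or super-resolution model.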

In an embodiment, as shown in fig. 3, after step S201, that is, after the ultrasound image to be processed is acquired, the ultrasound image quality control method further includes:

S301: carrying out fault detection on an ultrasonic image to be processed to obtain a fault detection result;

S302: if the fault detection result is that the fault detection is passed, performing quality detection on the ultrasonic image to be processed, and judging whether the ultrasonic image to be processed is a qualified ultrasonic image.

As an example, in step S301, after acquiring the ultrasound image to be processed, the image processor needs to perform fault detection on the ultrasound image to be processed by using a preset device fault detection logic to acquire a fault detection result. The fault detection result is used for reflecting whether the ultrasonic image to be processed is the ultrasonic image acquired by the ultrasonic equipment with the fault.

In this example, ultrasound devices with a device fault may be identified through a preliminary investigation, and the image feature distribution of the ultrasound images acquired by such devices is determined as the fault histogram distribution. Corresponding device fault detection logic is configured based on the fault histogram distribution and written into a memory of the ultrasound device. The preset device fault detection logic can then be invoked as needed to perform fault detection on the ultrasound image to be processed and obtain a fault detection result.

As an example, in step S302, when the fault detection result is that the fault detection is passed, the image processor determines that the ultrasound device that acquired the ultrasound image to be processed has no device fault; that is, the ultrasound image to be processed was acquired by a normal ultrasound device, meaning one without a device fault. The ultrasound image to be processed therefore contains no interference information caused by a device fault, and step S202 may be executed: a preset image quality evaluation algorithm is used to perform quality detection on the ultrasound image to be processed and to judge whether it is a qualified ultrasound image.

In this example, when the fault detection result is that the fault detection is not passed, the image processor determines that the ultrasound device that acquired the ultrasound image to be processed has a device fault; that is, the ultrasound image to be processed was acquired by a faulty ultrasound device, meaning one with a device fault. The ultrasound image to be processed therefore contains interference information caused by the device fault. In this case, device fault prompt information can be output directly without executing the subsequent processing steps, which saves computing resources. The device fault prompt information is used to indicate that the ultrasound device that acquired the ultrasound image to be processed has a fault.

In this embodiment, a fault detection is performed on the ultrasound image to be processed first to determine whether an ultrasound device that acquires the ultrasound image to be processed has a device fault, and a fault detection result is obtained, so as to determine whether a subsequent processing step needs to be performed according to the fault detection result, thereby avoiding performing subsequent quality control on the ultrasound image to be processed that has the device fault, and saving computational resources.
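The gating behaviour of steps S301 and S302 can be sketched as below. The function and parameter names are hypothetical: `detect_fault` stands in for the preset device fault detection logic and is assumed to return a short fault description or `None`, and `run_quality_detection` stands in for step S202.

```python
from typing import Callable, Optional, Tuple

import numpy as np


def gate_on_fault(
    image: np.ndarray,
    detect_fault: Callable[[np.ndarray], Optional[str]],
    run_quality_detection: Callable[[np.ndarray], bool],
) -> Tuple[str, Optional[bool]]:
    """Run quality detection only when fault detection passes.

    Returns a message and the quality verdict (None if detection was skipped).
    """
    fault = detect_fault(image)  # S301
    if fault is not None:
        # Fault detection failed: emit a prompt and skip later steps,
        # which is where the saving in computing resources comes from.
        return f"Device fault suspected: {fault}", None
    # S302: fault detection passed, continue with quality detection (S202).
    return "fault detection passed", run_quality_detection(image)
```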

In an embodiment, as shown in fig. 4, step S301, performing fault detection on the ultrasound image to be processed, and acquiring a fault detection result includes:

S401: extracting the characteristics of the ultrasonic image to be processed to obtain a target histogram of the ultrasonic image to be processed;

S402: carrying out fault detection on the target histogram of the ultrasonic image to be processed to obtain a fault detection result.

As an example, in step S401, after acquiring the ultrasound image to be processed, the image processor may perform histogram extraction on the ultrasound image to be processed, and acquire a target histogram of the ultrasound image to be processed. The target histogram refers to a feature map obtained by extracting a histogram of the ultrasonic image to be processed. In this example, the histogram extraction can be performed by using the prior art, and the extraction process is simple and convenient.
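Histogram extraction as described for step S401 can be done with a single NumPy call. The sketch below assumes an 8-bit grayscale image (values 0 to 255) and normalizes the counts so that images of different sizes are comparable; both choices are assumptions, as the text does not specify bit depth or normalization.

```python
import numpy as np


def target_histogram(image: np.ndarray, bins: int = 256) -> np.ndarray:
    """Return the normalized gray-level histogram of an 8-bit grayscale image."""
    # One bin per gray level when bins=256; the last bin includes value 255.
    counts, _ = np.histogram(image.ravel(), bins=bins, range=(0, 256))
    total = counts.sum()
    # Guard against an empty image to avoid division by zero.
    return counts / total if total > 0 else counts.astype(float)
```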

As an example, in step S402, after the image processor extracts the target histogram corresponding to the ultrasound image to be processed, the image processor may perform fault detection on the target histogram corresponding to the ultrasound image to be processed to obtain a fault detection result.

In this example, the fault detection of the target histogram is specifically used to analyze whether the target histogram matches with a preset fault histogram distribution; if the target histogram is matched with the preset fault histogram distribution, determining that the ultrasonic image to be processed corresponding to the target histogram has interference information formed by equipment faults, and acquiring a fault detection result with the equipment faults; if the target histogram is not matched with the preset fault histogram distribution, the ultrasonic image to be processed corresponding to the target histogram is determined to have no interference information caused by equipment faults, and a fault detection result without the equipment faults can be obtained. The fault histogram distribution is determined by counting and analyzing a histogram of an ultrasonic image acquired by the fault ultrasonic equipment in advance.

In this example, as can be seen from a large amount of data analysis, when there is an equipment failure or there is no equipment failure in the ultrasound equipment, there is a great difference in the target histogram of the ultrasound image acquired by the ultrasound equipment. Generally, the histogram of the ultrasound image acquired by the normal ultrasound device tends to be normally distributed, and the histogram of the ultrasound image acquired by the failed ultrasound device has a peak or an extreme value at two ends, or has a sharp peak, so that the image processor can analyze the target histogram of the ultrasound image to be processed by extracting the target histogram to determine whether the device failure exists.
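One simple way to test a target histogram for the shapes described above (mass piled up at either end, or a single sharp peak) is sketched below. The function name and all three threshold values are illustrative assumptions, not values from the patent; in practice they would need to be calibrated against histograms from known normal and faulty devices.

```python
import numpy as np


def looks_like_fault(
    hist: np.ndarray,
    edge_bins: int = 4,             # hypothetical: bins counted as an "end"
    edge_mass_limit: float = 0.30,  # hypothetical: max share allowed at one end
    peak_limit: float = 0.50,       # hypothetical: max share in a single bin
) -> bool:
    """Heuristically flag histograms with end peaks or a single sharp peak."""
    hist = np.asarray(hist, dtype=float)
    total = hist.sum()
    if total <= 0:
        raise ValueError("histogram must contain at least one count")
    hist = hist / total
    left_mass = hist[:edge_bins].sum()    # share of pixels near black
    right_mass = hist[-edge_bins:].sum()  # share of pixels near white
    sharpest = hist.max()                 # share held by the tallest bin
    return bool(
        left_mass > edge_mass_limit
        or right_mass > edge_mass_limit
        or sharpest > peak_limit
    )
```

A roughly bell-shaped histogram centered in the gray range passes this check, while a histogram with half of its mass at each end does not.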

Table 1. Fault analysis and comparison table

As shown in Table 1, when the ultrasound device has a device fault, the ultrasound images it acquires may show image representations such as partial black and white color blocks, damaged image quality, band or stripe noise, scattered white noise, or black stripe interference. Extracting and summarizing the histograms of the ultrasound images corresponding to each image representation shows the fault histogram distribution and fault cause associated with each one:

(1) If there are partial black and white color blocks in the ultrasound image, a fault histogram distribution with peaks at both ends is formed, mainly caused by a probe fault, damage to the pulse/receiving circuit, or a switch circuit fault.

(2) If the image quality of the ultrasound image is damaged, a fault histogram distribution that is uniform with extreme values at both ends is formed, mainly caused by a probe fault, damage to the pulse/receiving circuit, or a switch circuit fault.

(3) If the ultrasound image has band or stripe noise, a fault histogram distribution with a peak at the left end is formed, mainly caused by a probe fault or power frequency interference.

(4) If scattered white noise exists in the ultrasound image, a fault histogram distribution that tends toward a normal distribution but is concentrated is formed, mainly caused by a probe fault or power frequency interference.

(5) If black stripe interference exists in the ultrasound image, a fault histogram distribution with a peak at the right end is formed, mainly caused by a probe fault or damage to the pulse/receiving circuit.
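The five cases above can be held in a small lookup structure so that a detected histogram shape maps to its likely causes. The class, constant and function names are hypothetical; the symptom, shape and cause strings are taken from the description of Table 1.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class FaultPattern:
    image_symptom: str
    histogram_shape: str
    likely_causes: Tuple[str, ...]


FAULT_PATTERNS = (
    FaultPattern("partial black and white color blocks", "peaks at both ends",
                 ("probe fault", "pulse/receiving circuit damage",
                  "switch circuit fault")),
    FaultPattern("damaged image quality",
                 "uniform with extreme values at both ends",
                 ("probe fault", "pulse/receiving circuit damage",
                  "switch circuit fault")),
    FaultPattern("band or stripe noise", "peak at left end",
                 ("probe fault", "power frequency interference")),
    FaultPattern("scattered white noise", "near-normal but concentrated",
                 ("probe fault", "power frequency interference")),
    FaultPattern("black stripe interference", "peak at right end",
                 ("probe fault", "pulse/receiving circuit damage")),
)


def likely_causes(histogram_shape: str) -> Tuple[str, ...]:
    """Return the causes listed for a histogram shape, or () if unknown."""
    for pattern in FAULT_PATTERNS:
        if pattern.histogram_shape == histogram_shape:
            return pattern.likely_causes
    return ()
```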

In an embodiment, as shown in fig. 5, step S202, that is, performing quality detection on the ultrasound image to be processed and judging whether the ultrasound image to be processed is a qualified ultrasound image, includes:

S501: performing quality detection on the ultrasonic image to be processed using a Brenner gradient function, a Laplacian gradient function and a Vollath function, respectively, to obtain a Brenner gradient measured value, a Laplacian gradient measured value and a Vollath gradient measured value;

S502: if the Brenner gradient measured value, the Laplacian gradient measured value and the Vollath gradient measured value all meet their corresponding target gradient thresholds, determining that the ultrasonic image to be processed is a qualified ultrasonic image;

S503: if at least one of the Brenner gradient measured value, the Laplacian gradient measured value and the Vollath gradient measured value does not meet its corresponding target gradient threshold, determining that the ultrasonic image to be processed is an unqualified ultrasonic image.

As an example, in step S501, the image processor performs quality detection on the ultrasound image to be processed by using the Brenner gradient function, the Laplacian gradient function, and the Vollath function, respectively, to obtain a Brenner gradient measured value output by the Brenner gradient function, a Laplacian gradient measured value output by the Laplacian gradient function, and a Vollath gradient measured value output by the Vollath function. In this example, the Brenner gradient measured value is the gradient measured value with which the Brenner gradient function evaluates whether the image contour is obvious; generally, the larger the Brenner gradient measured value, the more obvious the image contour. The Laplacian gradient measured value is the gradient measured value with which the Laplacian gradient function evaluates whether noise interference is small; generally, the smaller the Laplacian gradient measured value, the smaller the noise interference. The Vollath gradient measured value is the gradient measured value with which the Vollath function evaluates the sharpness of the image; generally, the larger the Vollath gradient measured value, the sharper the image.
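The three measures can be sketched with NumPy as follows. This section does not give the exact formulas, so the sketch assumes commonly used textbook definitions: the Brenner gradient as the sum of squared differences between pixels two columns apart, the Laplacian measure as the sum of absolute responses to the 4-neighbour Laplacian kernel, and the Vollath function in its lag-1 minus lag-2 autocorrelation form. The function names are illustrative only.

```python
import numpy as np

def brenner(img):
    """Brenner gradient: sum of squared differences between pixels two columns apart."""
    img = np.asarray(img, dtype=np.float64)
    diff = img[:, 2:] - img[:, :-2]
    return float(np.sum(diff ** 2))

def laplacian(img):
    """Sum of absolute 4-neighbour Laplacian responses over interior pixels."""
    img = np.asarray(img, dtype=np.float64)
    lap = (img[:-2, 1:-1] + img[2:, 1:-1]      # pixels above and below
           + img[1:-1, :-2] + img[1:-1, 2:]    # pixels left and right
           - 4.0 * img[1:-1, 1:-1])            # centre pixel
    return float(np.sum(np.abs(lap)))

def vollath(img):
    """Vollath measure: row autocorrelation at lag 1 minus autocorrelation at lag 2."""
    img = np.asarray(img, dtype=np.float64)
    lag1 = np.sum(img[:, :-1] * img[:, 1:])
    lag2 = np.sum(img[:, :-2] * img[:, 2:])
    return float(lag1 - lag2)

# Example: an 8 x 8 image with a single vertical step edge.
edge = np.zeros((8, 8))
edge[:, 4:] = 1.0
values = (brenner(edge), laplacian(edge), vollath(edge))
```

On a perfectly flat image both `brenner` and `laplacian` return 0, which matches the intuition that no contour and no noise are present.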

Wherein the target gradient threshold is a preset threshold for evaluating whether the qualified quality standard is reached. Since in this example, the Brenner gradient measured value, the Laplacian gradient measured value and the Vollath gradient measured value need to be analyzed in comparison, the target gradient threshold includes the Brenner gradient threshold, the Laplacian gradient threshold and the Vollath gradient threshold.

As an example, in step S502, after acquiring the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value corresponding to the ultrasound image to be processed, the image processor may compare the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value with their corresponding target gradient thresholds, respectively, and if the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value all conform to their corresponding target gradient thresholds, determine that the ultrasound image to be processed is a qualified ultrasound image. In this example, the image processor compares the Brenner gradient measured value with the Brenner gradient threshold, compares the Laplacian gradient measured value with the Laplacian gradient threshold, and compares the Vollath gradient measured value with the Vollath gradient threshold, and if the Brenner gradient measured value is greater than the Brenner gradient threshold, the Laplacian gradient measured value is less than the Laplacian gradient threshold, and the Vollath gradient measured value is greater than the Vollath gradient threshold, the ultrasound image to be processed is determined to satisfy the predetermined criterion, and the ultrasound image to be processed is determined to be a qualified ultrasound image.

As an example, in step S503, after acquiring the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value corresponding to the ultrasound image to be processed, the image processor may compare the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value with their corresponding target gradient thresholds, respectively, and determine that the ultrasound image to be processed is an unqualified ultrasound image if at least one of the three measured values does not meet its corresponding target gradient threshold. In this example, the image processor compares the Brenner gradient measured value with the Brenner gradient threshold, the Laplacian gradient measured value with the Laplacian gradient threshold, and the Vollath gradient measured value with the Vollath gradient threshold; if the Brenner gradient measured value is not greater than the Brenner gradient threshold, or the Laplacian gradient measured value is not less than the Laplacian gradient threshold, or the Vollath gradient measured value is not greater than the Vollath gradient threshold, the ultrasound image to be processed is determined not to meet the preset standard and is determined to be an unqualified ultrasound image.
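The comparison logic of steps S502 and S503 can be written compactly. The sketch below is illustrative: the `GradientThresholds` container and the function name `is_qualified` are hypothetical, and the threshold values in the example are placeholders rather than values from the patent.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class GradientThresholds:
    """Preset target gradient thresholds (values are chosen by the implementer)."""
    brenner: float
    laplacian: float
    vollath: float

def is_qualified(brenner_value, laplacian_value, vollath_value, thresholds):
    """Qualified only if Brenner and Vollath exceed, and Laplacian stays below, their thresholds."""
    return (brenner_value > thresholds.brenner
            and laplacian_value < thresholds.laplacian
            and vollath_value > thresholds.vollath)

# Example with placeholder numbers.
t = GradientThresholds(brenner=10.0, laplacian=2.0, vollath=3.0)
ok = is_qualified(20.0, 1.0, 5.0, t)        # all three conditions hold
not_ok = is_qualified(20.0, 2.0, 5.0, t)    # Laplacian value is not less than its threshold
```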

TABLE 2 image quality evaluation index comparison table

As an example, as shown in fig. 12, the ultrasound images to be processed received by the image processor may be blurred ultrasound images (as in fig. 12 a), noise ultrasound images (as in fig. 12 b), and qualified ultrasound images (as in fig. 12 c). A blurred ultrasound image is an ultrasound image whose definition does not reach the preset definition standard. A noise ultrasound image is an ultrasound image with more noise information, and a qualified ultrasound image is an ultrasound image with less noise information whose definition reaches the preset definition standard. As can be seen from the noise ultrasound image, the presence of noise gives the ultrasound image sharper contours and makes its high-frequency features more obvious. As can be seen from the image quality evaluation index comparison table shown in table 2, among all the no-reference image evaluation indexes, gradient-related functions such as the Laplacian gradient function and the Brenner gradient function can effectively analyze the noise interference distributed in the ultrasound image according to the contour characteristics. In addition, in a noise ultrasound image without significant contours, such as one with salt-and-pepper noise, the noise is scattered across the ultrasound image, and the Laplacian gradient function suits this scene better than the Brenner gradient function. Therefore, the Laplacian gradient function is more suitable for evaluating noise ultrasound images, and the Brenner gradient function is more suitable for evaluating blurred ultrasound images.
The variance function, the gray variance product function, the energy gradient function and the like evaluate the image from the perspective of definition. Although the indexes of the variance function and the gray variance product function can also represent characteristic values of pixel gradients, the overall performance of these functions is not as good as that of the Laplacian gradient function and the Brenner gradient function. The Vollath function is mainly suitable for text noise analysis; it can combine mean difference and image gradient information to screen and supplement the recognition result of the Brenner gradient function, which helps ensure the accuracy of the recognized blurred ultrasound images. The entropy function is mainly based on the camera focusing principle for natural images: the higher the definition of an image, the more information it contains and the larger its entropy value, so the entropy function can be used to evaluate image quality.

In this embodiment, the Brenner gradient function, the Laplacian gradient function, and the Vollath function are used to perform quality detection on the ultrasound image to be processed, and whether the ultrasound image to be processed is a blurred ultrasound image, a noise ultrasound image, or a qualified ultrasound image can be quickly and accurately identified according to the evaluation results of the three quality evaluation indexes, so as to ensure the image quality of the qualified ultrasound image.

In an embodiment, as shown in fig. 6, in step S103, performing super-resolution processing on the ultrasound image to be processed to obtain a reconstructed ultrasound image, the method includes:

s601: acquiring an unqualified type corresponding to an ultrasonic image to be processed;

s602: if the unqualified type is the fuzzy ultrasonic image, performing super-resolution processing on the ultrasonic image to be processed by adopting an LESRCNN model to obtain a reconstructed ultrasonic image;

s603: if the unqualified type is a noise ultrasonic image, performing noise suppression and super-resolution processing on the ultrasonic image to be processed by adopting an NS-LESRCNN model to obtain a reconstructed ultrasonic image;

the NS-LESRCNN model is formed by replacing an enhanced block of the LESRCNN model with a noise suppression enhanced block, and the noise suppression enhanced block performs noise suppression in the feature extraction process.
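The branching in steps S601 to S603 amounts to a dispatch on the unqualified type. In the minimal sketch below, the two models are passed in as plain callables; the function name `reconstruct`, the string labels `"blurred"` and `"noise"`, and the stand-in lambdas are hypothetical placeholders, not the actual LESRCNN or NS-LESRCNN networks.

```python
def reconstruct(image, unqualified_type, lesrcnn, ns_lesrcnn):
    """Route an unqualified image to the model that matches its unqualified type."""
    if unqualified_type == "blurred":
        return lesrcnn(image)        # super-resolution only
    if unqualified_type == "noise":
        return ns_lesrcnn(image)     # noise suppression plus super-resolution
    raise ValueError(f"unknown unqualified type: {unqualified_type!r}")

# Example with stand-in callables instead of trained models.
result = reconstruct([1, 2, 3], "noise",
                     lesrcnn=lambda x: ("LESRCNN", x),
                     ns_lesrcnn=lambda x: ("NS-LESRCNN", x))
```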

As an example, in step S601, when the ultrasound image to be processed is an unqualified ultrasound image, the image processor needs to acquire the unqualified type corresponding to the ultrasound image to be processed. The unqualified type can be determined from the quality detection result obtained when quality detection is performed on the ultrasound image to be processed.

In this example, when the Brenner gradient function, the Laplacian gradient function, and the Vollath function are used to perform quality detection on the ultrasound image to be processed, if at least one of the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value does not meet the corresponding target gradient threshold, the ultrasound image to be processed is determined to be an unqualified ultrasound image. Specifically, if the Brenner gradient measured value is not greater than the Brenner gradient threshold, the ultrasound image to be processed can be determined to be a blurred ultrasound image; or, if the Brenner gradient measured value is greater than the Brenner gradient threshold and the Vollath gradient measured value is not greater than the Vollath gradient threshold, the ultrasound image to be processed can also be determined to be a blurred ultrasound image, so that the Vollath function supplements the identification result of the Brenner gradient function; or, if the Laplacian gradient measured value is not less than the Laplacian gradient threshold, the ultrasound image to be processed is determined to be a noise ultrasound image.
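The rules for deriving the unqualified type can be sketched as a small decision function. The text joins the three rules with "or" and does not say which applies when an image fails both a blur check and the noise check; the sketch assumes, as a labeled simplification, that the blur checks are evaluated first. The function name and string labels are hypothetical.

```python
def classify_image(brenner_value, laplacian_value, vollath_value,
                   brenner_threshold, laplacian_threshold, vollath_threshold):
    """Return 'blurred', 'noise', or 'qualified' (blur checks first: an assumption)."""
    if brenner_value <= brenner_threshold:
        return "blurred"   # contour not obvious enough
    if vollath_value <= vollath_threshold:
        return "blurred"   # Vollath supplements the Brenner result
    if laplacian_value >= laplacian_threshold:
        return "noise"     # too much noise interference
    return "qualified"

# Example with placeholder thresholds (10, 2, 3).
label = classify_image(20.0, 5.0, 5.0, 10.0, 2.0, 3.0)
```

In this example the Brenner and Vollath checks pass while the Laplacian value is not below its threshold, so the image is labeled as a noise ultrasound image.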

As an example, in step S602, when the unqualified type of the ultrasound image to be processed is a blurred ultrasound image, this indicates that the ultrasound image to be processed has low definition but little noise information. In this case, the LESRCNN model may be used to perform super-resolution processing on the ultrasound image to be processed to obtain the reconstructed ultrasound image, ensuring that the reconstructed ultrasound image has good image quality and thereby improving image quality.

In the prior art, a light-weighted blind Super-Resolution model (LESRCNN) may be used to perform Super-Resolution processing on an ultrasound Image, and a network architecture of the LESRCNN model is shown as the following 1, and includes an Enhancement Block (Information Extraction and Enhancement Block, hereinafter abbreviated as IEEB module), a Reconstruction Block (Reconstruction Block, hereinafter abbreviated as RB module), and an Information Refinement Block (Information Refinement Block, hereinafter abbreviated as IRB module).

In a specific embodiment, performing super-resolution processing on an ultrasound image to be processed by using an LESRCNN model to obtain a reconstructed ultrasound image includes:

(1) An enhancement block is adopted to extract features of the ultrasound image to be processed, obtaining the enhanced low-frequency features and the high-frequency features without noise suppression. In this example, the IEEB module performs feature extraction on the input ultrasound image by using a heterogeneous structure (formed by 3 × 3 and 1 × 1 convolutions), extracts the enhanced low-frequency features and the high-frequency features without noise suppression, and inputs both to the RB module through the corresponding feature channels. The low-frequency features refer to the features corresponding to the background of the ultrasound image. The high-frequency features without noise suppression refer to the high-frequency features that are extracted by the IEEB module and have not been subjected to noise suppression. The high-frequency features are features distinguished from the low-frequency features and include not only texture features but also noise features.

(2) And performing feature reconstruction on the enhanced low-frequency features and the high-frequency features without noise suppression by adopting a reconstruction block to obtain the reconstructed high-frequency features. In this example, the RB module is used to perform upsampling processing on the received enhanced low-frequency features and the high-frequency features without noise suppression, obtain the reconstructed high-frequency features, and input the reconstructed high-frequency features into the IRB module for processing.

(3) And adopting an information purification block to purify the information of the reconstructed high-frequency characteristics to obtain the reconstructed ultrasonic image. In this example, the IRB module optimizes the received reconstructed high-frequency features and restores image details related to the high-frequency features to ensure the super-resolution performance of the model.

As an example, in step S603, when the unqualified type of the ultrasound image to be processed is a noise ultrasound image, this indicates that the ultrasound image to be processed has low definition and more noise information. In this case, the NS-LESRCNN model may be used to perform noise suppression and super-resolution processing on the ultrasound image to be processed to obtain the reconstructed ultrasound image. In this example, the NS-LESRCNN model is formed by replacing the enhancement block of the LESRCNN model with a noise suppression enhancement block, which is a module that performs noise suppression during feature extraction.

In this example, when the unqualified type of the ultrasound image to be processed is a noise ultrasound image, the ultrasound image to be processed contains more noise information. If the LESRCNN model were still used, the IEEB module would extract the enhanced low-frequency features and the high-frequency features without noise suppression from the ultrasound image to be processed. The high-frequency features without noise suppression include not only texture features reflecting image details but also noise features caused by noise interference, and these noise features may make textures in the super-resolved ultrasound image too sharp and degrade its image quality. Therefore, the enhancement block of the LESRCNN model needs to be replaced with a noise suppression enhancement block to obtain the NS-LESRCNN model. The noise suppression enhancement block of the NS-LESRCNN model suppresses noise information during feature extraction, especially during high-frequency feature extraction, so that the extracted noise-suppressed high-frequency features, which contain less noise information, are input into the reconstruction block and the information purification block for super-resolution processing. In this way, the NS-LESRCNN model performs both noise suppression and super-resolution processing on the ultrasound image to be processed and obtains a reconstructed ultrasound image in which noise is suppressed, thereby improving image quality.

In this embodiment, when the ultrasound image to be processed is determined to be a blurred ultrasound image, the LESRCNN model is used to perform super-resolution processing on it; when the ultrasound image to be processed is determined to be a noise ultrasound image, the NS-LESRCNN model is used to perform noise suppression and super-resolution processing on it. As a result, the reconstructed ultrasound image has a higher resolution and its noise is suppressed, thereby improving image quality. The noise suppression enhancement block of the NS-LESRCNN model takes the interference caused by image noise into account when transmitting shallow image features, so that, when super-resolution processing is performed on a noisy ultrasound image, the result can meet the perceptual requirements of sonographers and reach the level of advanced super-resolution algorithms in the industry on the quantitative indexes (SSIM and PSNR).

In one embodiment, the NS-LESRCNN model comprises a noise suppression enhancement block, a reconstruction block and an information purification block;

as shown in fig. 7, in step S603, the noise suppression and super-resolution processing are performed on the ultrasound image to be processed by using the NS-LESRCNN model, and the reconstructed ultrasound image is obtained, which includes:

s701: a noise suppression enhancement block is adopted to perform feature extraction and noise suppression on the ultrasonic image to be processed, and enhanced low-frequency features and noise-suppressed high-frequency features are obtained;

s702: performing feature reconstruction on the enhanced low-frequency features and the noise-suppressed high-frequency features by adopting a reconstruction block to obtain reconstructed high-frequency features;

s703: and adopting an information purification block to purify the information of the reconstructed high-frequency characteristics to obtain a reconstructed ultrasonic image.

As shown in fig. 13, the network architecture of the Lightweight Noise Suppression blind Super-Resolution model (Noise Suppression-Lightweight Image Super-Resolution with Enhanced CNN, abbreviated as NS-LESRCNN) includes a Noise Suppression Enhancement Block (Information Extraction and Enhancement Block for Noise Suppression, hereinafter abbreviated as NS-IEEB module). Here, Lightweight Image Super-Resolution with Enhanced CNN refers to the lightweight blind super-resolution model, that is, the LESRCNN model.

As an example, in step S701, the image processor uses an NS-IEEB module in an NS-LESRCNN model to perform feature extraction and noise suppression on the ultrasound image to be processed, obtain enhanced low-frequency features and noise-suppressed high-frequency features, and input the enhanced low-frequency features and noise-suppressed high-frequency features into an RB module for processing. The low-frequency features refer to features corresponding to the background of the ultrasound image. The high-frequency characteristic after noise suppression refers to the high-frequency characteristic which is extracted by the NS-IEEB module and subjected to noise suppression. In this example, the denoised high-frequency features are a concept opposite to the non-denoised high-frequency features output by the IEEB module, and the denoised high-frequency features also include texture features and noise features, but since the NS-IEEB module performs noise suppression in the feature extraction process, the noise features in the extracted denoised high-frequency features are much smaller than the noise features of the non-denoised high-frequency features.

As shown in fig. 13, the improved NS-LESRCNN model includes 17-layer convolved noise suppression enhancement blocks, 1-layer reconstruction blocks, and 5-layer information refinement blocks. And (3) performing feature extraction and feature enhancement on the low-resolution ultrasonic image to be processed by adopting 17 layers of convolution noise suppression enhancement blocks, and performing thinning processing on the extracted features to reduce the calculation amount. The feature extraction here means that feature extraction is performed on the ultrasound image to be processed by using the 3 × 3 convolution layer and the 1 × 1 convolution layer; the feature enhancement here means to adopt the residual error module in the NS-IEEB module shown in fig. 14 to superimpose the extracted features so as to achieve the purpose of feature enhancement; the feature refinement here means that the enhanced low-frequency features corresponding to the background are continuously reduced through a plurality of convolution operations, so as to implement reduction and refinement of the line width. In this example, since the image matrix of the ultrasound image to be processed becomes sparse after a plurality of convolution and activation processes, the amount of subsequent calculation is reduced, and meanwhile, in order to avoid losing the texture features of the refined image matrix, the noise-suppressed high-frequency features extracted by the NS-IEEB module need to be sent to the RB module through the NS-IEEB channel. In contrast, the IEEB module in the LESRCNN model sends the extracted high frequency features that are not noise-suppressed to the RB module through the IEEB channel. 
In this example, the denoised high-frequency features extracted by the NS-IEEB module or the non-denoised high-frequency features extracted by the IEEB module include all information of the ultrasound image to be processed, including both texture features and noise features, but the noise features in the denoised high-frequency features are much smaller than those in the non-denoised high-frequency features.

In this example, as shown in fig. 13, the IEEB module of the improved LESRCNN model includes a plurality of convolution units, each of which includes one 3 × 3 convolution layer, one ReLU activation layer, and one 1 × 1 convolution layer; the high-frequency features output by the convolution unit, especially those output by the 3 × 3 convolution layer, are transmitted to the RB module through the IEEB channel. The improved NS-IEEB module of the NS-LESRCNN model likewise includes a plurality of convolution units, each of which includes one 3 × 3 convolution layer, one ReLU activation layer, and one 1 × 1 convolution layer; the high-frequency features output by the convolution unit are transmitted to the RB module through the NS-IEEB channel, which adopts the structure shown in FIG. 14, so that the NS-IEEB module can realize both feature enhancement and noise suppression. In this example, the convolution kernel sizes are as noted above, and the number of channels is 64. The feature map size of the input ultrasound image to be processed is kept unchanged by means of padding: Padding = 0 for a 1 × 1 convolution operation and Padding = 1 for a 3 × 3 convolution operation. Therefore, the network does not need to adjust the image size of the tensor during feature transfer when changing the image channels. In the improved NS-LESRCNN model, the sub-modules of the convolutional neural network are generally connected in series, and some of the feature maps in fig. 9 are combined by addition through the transmission channels; these feature maps include the feature maps corresponding to the high-frequency features and the feature maps corresponding to the low-frequency features.
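That these padding choices keep the feature map size unchanged follows from the standard convolution output-size formula, out = (in + 2 × padding − kernel) / stride + 1. The short check below assumes a stride of 1, which this section does not state explicitly; the function name is illustrative.

```python
def conv_output_size(size, kernel, padding, stride=1):
    """Spatial output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1

# 3 x 3 convolution with Padding = 1 and 1 x 1 convolution with Padding = 0
# both preserve a 64-pixel side length when the stride is 1.
same_3x3 = conv_output_size(64, kernel=3, padding=1)
same_1x1 = conv_output_size(64, kernel=1, padding=0)
shrunk = conv_output_size(64, kernel=3, padding=0)   # without padding the map shrinks
```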

As an example, in step S702, the image processor performs feature reconstruction on the received enhanced low-frequency feature and the noise-suppressed high-frequency feature by using an RB module in the NS-LESRCNN model, obtains the reconstructed high-frequency feature, and inputs the reconstructed high-frequency feature into the IRB module for processing. In this example, the RB module is used to perform feature reconstruction on the enhanced low-frequency features and the noise-suppressed high-frequency features, which not only avoids noise interference, but also avoids loss of image detail information such as texture features, and is helpful to ensure the image quality of the finally obtained reconstructed ultrasound image.

As an example, in step S703, the image processor uses the IRB module in the NS-LESRCNN model to optimize the received reconstructed high-frequency features and restore the image details related to the high-frequency features, obtaining the reconstructed ultrasound image and ensuring the super-resolution performance of the model.

In this embodiment, the noise suppression enhancing block in the NS-LESRCNN model is used to perform feature extraction and noise suppression on the ultrasound image to be processed, and obtain the enhanced low-frequency feature and the noise-suppressed high-frequency feature, so as to implement noise suppression in the feature extraction process of the ultrasound image to be processed, avoid sharp textures such as contours in the reconstructed ultrasound image caused by noise interference, ensure that the reconstructed ultrasound image has good image quality, and achieve the purpose of improving image quality.

In an embodiment, as shown in fig. 8, step S702, that is, performing feature reconstruction on the enhanced low-frequency feature and the noise-suppressed high-frequency feature by using a reconstruction block, to obtain a reconstructed high-frequency feature, includes:

s801: acquiring a target amplification scale;

s802: respectively performing up-sampling processing on the enhanced low-frequency features and the noise-suppressed high-frequency features based on the target amplification scale to obtain low-frequency up-sampling features and high-frequency up-sampling features;

s803: and performing feature reconstruction on the low-frequency up-sampling feature and the high-frequency up-sampling feature to obtain the reconstructed high-frequency feature.

As an example, in step S801, the image processor needs to first acquire the target magnification scale after acquiring the enhanced low-frequency feature and the noise-suppressed high-frequency feature. The target enlargement scale herein refers to a scale for up-sampling an image feature of the ultrasound image to be processed, and the target enlargement scale may be a preset default value or a scale determined according to an image size and image quality of the ultrasound image to be processed.

The low-frequency upsampling feature refers to a feature obtained by performing upsampling processing on the enhanced low-frequency feature. The high-frequency up-sampling feature refers to a feature obtained after up-sampling processing is performed on the high-frequency feature after noise suppression.

As an example, in step S802, after obtaining the target amplification scale, the image processor may perform upsampling processing on the enhanced low-frequency features based on the target amplification scale to obtain the low-frequency upsampling features, and may perform upsampling processing on the noise-suppressed high-frequency features based on the same target amplification scale to obtain the high-frequency upsampling features. In this example, the enhanced low-frequency features and the noise-suppressed high-frequency features are upsampled based on the same target amplification scale, so that the low-frequency upsampling features and the high-frequency upsampling features have the same image size, which facilitates the subsequent feature reconstruction.

In this example, the image processor is provided with a sub-pixel convolution layer, in which the enhanced low-frequency features and the noise-suppressed high-frequency features are each upsampled based on the target amplification scale. The sub-pixel convolution layer integrates the two operations of pointwise convolution and group convolution (Pointwise Group Convolution) and also provides a mechanism for inter-group information exchange (Channel Shuffle), thereby reducing the computation of the model while keeping its accuracy unchanged.
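For illustration, the rearrangement step commonly used in sub-pixel convolution layers (often called pixel shuffle) can be sketched in NumPy. The sketch covers only the rearrangement of channels into spatial positions for a scale factor r; it does not include the convolution that precedes it, nor the group convolution and channel shuffle mentioned above. The channel ordering is an assumption that follows the convention used by common deep learning frameworks.

```python
import numpy as np

def pixel_shuffle(x, r):
    """Rearrange a (C*r*r, H, W) feature map into (C, H*r, W*r)."""
    x = np.asarray(x)
    c_in, h, w = x.shape
    if c_in % (r * r) != 0:
        raise ValueError("channel count must be divisible by r*r")
    c = c_in // (r * r)
    y = x.reshape(c, r, r, h, w)       # split channels into (c, i, j)
    y = y.transpose(0, 3, 1, 4, 2)     # reorder axes to (c, h, i, w, j)
    return y.reshape(c, h * r, w * r)  # interleave i with h and j with w

# Example: four 1 x 1 channels become one 2 x 2 map for r = 2.
out = pixel_shuffle(np.arange(4).reshape(4, 1, 1), 2)
```

Applying the same `r` to both the low-frequency and the high-frequency features yields outputs of identical spatial size, which is what the subsequent feature reconstruction requires.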

As an example, in step S803, after acquiring the low-frequency upsampling feature and the high-frequency upsampling feature corresponding to the same target amplification scale, the image processor may perform feature addition on the low-frequency upsampling feature and the high-frequency upsampling feature, and then perform activation processing on the added features to acquire the reconstructed high-frequency feature, as shown in fig. 13. In this example, feature reconstruction operations such as feature addition and activation processing are performed on the low-frequency upsampling feature and the high-frequency upsampling feature, so that the obtained reconstructed high-frequency feature includes both the low-frequency feature and the high-frequency feature with higher resolution.
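The feature addition and activation in step S803 can be sketched as follows. The sketch assumes ReLU as the activation, consistent with the ReLU activation layers mentioned for the convolution units, although this paragraph does not name the activation; the function name is hypothetical.

```python
import numpy as np

def fuse_features(low_up, high_up):
    """Add two upsampled feature maps of equal shape, then apply ReLU (assumed activation)."""
    low_up = np.asarray(low_up, dtype=np.float64)
    high_up = np.asarray(high_up, dtype=np.float64)
    if low_up.shape != high_up.shape:
        raise ValueError("features must share the same shape before addition")
    return np.maximum(low_up + high_up, 0.0)

# Example: negative sums are clipped to zero by the activation.
fused = fuse_features([[1.0, -2.0]], [[0.5, 1.0]])
```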

In the embodiment, the enhanced low-frequency features and the noise-suppressed high-frequency features are subjected to up-sampling processing based on the same target amplification scale, so that the low-frequency up-sampling features and the high-frequency up-sampling features have the same image size, and a technical basis is provided for feature reconstruction of the low-frequency up-sampling features and the high-frequency up-sampling features; and then, performing feature reconstruction on the low-frequency up-sampling feature and the high-frequency up-sampling feature, so that the obtained reconstructed high-frequency feature contains more image detail information.

In one embodiment, as shown in fig. 9, the step S801 of obtaining the target magnification scale includes:

s901: acquiring image size and quality detection values of an ultrasonic image to be processed;

s902: and determining a target magnification scale according to the image size and the quality detection value of the ultrasonic image to be processed.

The image size of the ultrasound image to be processed refers to the size of the ultrasound image to be processed. The quality detection value of the ultrasound image to be processed refers to a detection value determined after quality detection is performed on the ultrasound image to be processed. In this example, the quality detection value is a gradient value determined by performing quality detection on the ultrasound image to be processed by using the target gradient function. The objective gradient function herein refers to a gradient function for implementing mass detection, and includes, but is not limited to, Brenner gradient function, Laplacian gradient function, and Vollath function.

As an example, in step S901, the image processor may acquire an image size and a quality detection value of an input ultrasound image to be processed according to the ultrasound image to be processed. In this example, since the detection processing has been performed by using the target gradient function, such as the Brenner gradient function, the Laplacian gradient function, and the Vollath function, in the step S202, during the quality detection process of the ultrasound image to be processed, the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value determined in the quality detection process can be directly determined as the quality detection value, and the quality detection value represents the image quality of the ultrasound image to be processed.

As an example, in step S902, after the image size and the quality detection value of the ultrasound image to be processed are acquired, the image processor may query a preset magnification scale comparison table according to the image size and the quality detection value, and determine from that table the target magnification scale corresponding to the image size and the quality detection value. For example, when the image size is small, up-sampling is performed with a large target magnification scale; alternatively, when the image size is large, that is, when the image size meets the preset requirement, an ultrasound image to be processed that has a low quality detection value may be up-sampled with a small target magnification scale.
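The table lookup of step S902 can be sketched as below. The source does not disclose the contents of the magnification scale comparison table, so the thresholds `MIN_SIDE_PX` and `QUALITY_THRESHOLD`, the bucket names, and every scale value are hypothetical placeholders chosen only to reproduce the two rules stated above.

```python
from typing import Tuple

# Hypothetical values: the source does not publish the comparison table.
MIN_SIDE_PX = 512          # assumed "preset size requirement"
QUALITY_THRESHOLD = 100.0  # assumed boundary between low and high quality detection values

# Assumed comparison table: (size bucket, quality bucket) -> target magnification scale.
SCALE_TABLE = {
    ("small", "low"): 4,   # small image: large scale (rule stated in the text)
    ("small", "high"): 4,  # small image: large scale (rule stated in the text)
    ("large", "low"): 2,   # size meets requirement, low quality: small scale
    ("large", "high"): 2,  # not specified by the source; assumed equal to the small scale
}


def target_magnification_scale(image_size: Tuple[int, int], quality_value: float) -> int:
    """Look up the target magnification scale from image size (height, width) and quality value."""
    height, width = image_size
    size_bucket = "small" if min(height, width) < MIN_SIDE_PX else "large"
    quality_bucket = "low" if quality_value < QUALITY_THRESHOLD else "high"
    return SCALE_TABLE[(size_bucket, quality_bucket)]
```

A dictionary keyed by buckets keeps the rule set in one editable place, which is one plausible reading of a "comparison table"; a real table could equally be keyed by finer size and quality ranges.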

For example, in the testing process, a normal reference image is down-sampled by a factor of 2 or by a factor of 4 to obtain the corresponding blurred reference image; noise interference information is then added to the 2-times or 4-times down-sampled blurred reference image to obtain the corresponding noise reference image. The normal reference image, the blurred reference image, and the noise reference image are used as model inputs and are fed into an LESRCNN model and an NS-LESRCNN model respectively for testing, and the test results for the two test indexes PSNR and SSIM are shown in FIG. 15:

(1) The normal reference image is input into the LESRCNN model and the NS-LESRCNN model and is up-sampled with target magnification scales of 2 times and 4 times respectively; the model scores for the two test indexes PSNR and SSIM are shown in row 1.

(2) The noise reference image is input into the LESRCNN model and the NS-LESRCNN model; the noise reference image corresponding to the 2-times down-sampling is up-sampled with a target magnification scale of 2 times, and the noise reference image corresponding to the 4-times down-sampling is up-sampled with a target magnification scale of 4 times; the model scores for the two test indexes PSNR and SSIM are shown in rows 2 and 3.

(3) The blurred reference image is input into the LESRCNN model and the NS-LESRCNN model; the blurred reference image corresponding to the 2-times down-sampling is up-sampled with a target magnification scale of 2 times, and the blurred reference image corresponding to the 4-times down-sampling is up-sampled with a target magnification scale of 4 times; the model scores are shown in rows 4 and 5.

As can be seen by comparing the test results shown in FIG. 15, on the premise that the image size meets the preset size requirement, when up-sampling is performed with a smaller target magnification scale, the ultrasound image obtained by super-resolution processing of the noise reference image achieves a higher model score, is closer to the normal reference image, and has higher image quality.
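The preparation of the test inputs and the PSNR index can be sketched as follows. This is only an illustration under stated assumptions: block averaging stands in for the unspecified down-sampling method, additive Gaussian noise stands in for the unspecified "noise interference information", and all function names are hypothetical. SSIM and the LESRCNN and NS-LESRCNN models themselves are not reproduced here.

```python
import numpy as np


def downsample(img: np.ndarray, factor: int) -> np.ndarray:
    """Down-sample a grayscale image by block averaging (assumed method); crops to a multiple of factor."""
    h, w = img.shape
    h2, w2 = h - h % factor, w - w % factor
    blocks = img[:h2, :w2].astype(np.float64).reshape(
        h2 // factor, factor, w2 // factor, factor)
    return blocks.mean(axis=(1, 3))


def add_gaussian_noise(img: np.ndarray, sigma: float, seed: int = 0) -> np.ndarray:
    """Add zero-mean Gaussian noise (assumed noise model) and clip to the 8-bit range."""
    rng = np.random.default_rng(seed)
    noisy = img.astype(np.float64) + rng.normal(0.0, sigma, img.shape)
    return np.clip(noisy, 0.0, 255.0)


def psnr(reference: np.ndarray, test: np.ndarray, max_value: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    diff = reference.astype(np.float64) - test.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / mse))
```

In such a test, the super-resolved output of each model would be compared against the normal reference image with `psnr`, and a higher value indicates a reconstruction closer to the reference.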

In this embodiment, the target magnification scale is determined adaptively according to the image size of the ultrasound image to be processed and the quality detection value representing its image quality (including, but not limited to, the Brenner gradient measured value, the Laplacian gradient measured value, and the Vollath gradient measured value). Thus, when the image size is small, up-sampling can be performed with a large target magnification scale; alternatively, when the image size is large, that is, when the image size meets the preset requirement, an ultrasound image to be processed that has a low quality detection value may be up-sampled with a small target magnification scale. In other words, when the image size meets the preset requirement, an ultrasound image to be processed with lower image quality is up-sampled with a smaller target magnification scale, because experiments show that the reconstructed ultrasound image finally formed after up-sampling with the smaller target magnification scale is closer to the reference ultrasound image.

In an embodiment, as shown in fig. 10, the step S204 of performing consistency judgment on the image features of the reconstructed ultrasound image and the image features of the qualified ultrasound image includes:

S1001: acquiring clustered data clusters, wherein the clustered data clusters comprise a qualified data cluster and an unqualified data cluster;

S1002: performing dimensionality reduction on the reconstructed ultrasound image to obtain reconstructed image features;

S1003: calculating, based on the reconstructed image features, the Hamming distance to the qualified data cluster and the Hamming distance to the unqualified data cluster respectively, to determine a qualified Hamming distance and an unqualified Hamming distance;

S1004: if the qualified Hamming distance is smaller than the unqualified Hamming distance, determining that the image features of the reconstructed ultrasound image are consistent with the image features of the qualified ultrasound image;

wherein the qualified data cluster is formed by clustering the qualified image features obtained by dimensionality reduction of qualified ultrasound images, and the unqualified data cluster is formed by clustering the unqualified image features obtained by dimensionality reduction of unqualified ultrasound images.

A clustered data cluster refers to a data cluster formed, before the current moment, by clustering based on image features. The qualified data cluster is the data cluster formed by clustering the qualified image features obtained by dimensionality reduction of qualified ultrasound images. The unqualified data cluster is the data cluster formed by clustering the unqualified image features obtained by dimensionality reduction of unqualified ultrasound images. As an example, the unqualified data clusters include a noise data cluster and a fuzzy data cluster, where the noise data cluster refers to a data cluster formed by clustering the noise image features obtained by dimensionality reduction of noise ultrasound images, and the fuzzy data cluster refers to a data cluster formed by clustering the fuzzy image features obtained by dimensionality reduction of fuzzy ultrasound images.

As an example, in step S1001, the image processor may acquire the clustered data clusters formed by clustering in advance. In this example, the clustering performed by the image processor before the current moment proceeds as follows: (1) Training ultrasound images are acquired from an ultrasound image data set, where a training ultrasound image may be a high-resolution ultrasound image. Each training ultrasound image carries a quality label; the quality labels may be a qualified label and an unqualified label, or may be a qualified label, a noise label, and a fuzzy label. (2) Dimensionality reduction is performed on all training ultrasound images carrying quality labels to obtain the training image features corresponding to each training ultrasound image. In this example, feature extraction may be performed on the training ultrasound image by using a convolutional neural network model such as, but not limited to, ResNet50, so as to convert the width x height x 3 image into a 1 x 1000 feature array, so that the one-dimensional training image features can be used for subsequent clustering. (3) All training image features carrying quality labels are clustered to obtain at least two clustered data clusters. For example, when the quality labels are the qualified label and the unqualified label, the clustered data clusters formed include a qualified data cluster and an unqualified data cluster. When the quality labels are the qualified label, the noise label, and the fuzzy label, the clustered data clusters formed include a qualified data cluster, a noise data cluster, and a fuzzy data cluster, where the noise data cluster and the fuzzy data cluster are unqualified data clusters.
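Sub-step (3) above can be illustrated with the sketch below. The source does not name a clustering algorithm, so this example makes a simplifying assumption: because every training image feature already carries a quality label, features are grouped by label and each cluster is summarized by its mean feature vector. The function name `build_cluster_centers` and the use of a mean as the cluster representative are hypothetical.

```python
from typing import Dict, Sequence

import numpy as np


def build_cluster_centers(features: np.ndarray,
                          labels: Sequence[str]) -> Dict[str, np.ndarray]:
    """Group 1-D training image features by quality label and return one centre per label.

    features: array of shape (num_images, feature_length), e.g. (N, 1000).
    labels:   one quality label per row, e.g. "qualified", "noise", "fuzzy".
    """
    labels_arr = np.asarray(labels)
    centers = {}
    for label in np.unique(labels_arr):
        # Mean feature vector of all training images carrying this label (assumed representative).
        centers[str(label)] = features[labels_arr == label].mean(axis=0)
    return centers
```

For instance, with the labels "qualified", "noise", and "fuzzy", the returned dictionary holds three centres, of which the "noise" and "fuzzy" entries play the role of the unqualified data clusters.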

As an example, in step S1002, after acquiring the reconstructed ultrasound image, the image processor may perform dimensionality reduction on the reconstructed ultrasound image by using a convolutional neural network model such as, but not limited to, ResNet50, to extract one-dimensional reconstructed image features. In this example, the ResNet50 convolutional neural network model converts the reconstructed ultrasound image from width x height x 3 into a 1 x 1000 feature array, thereby obtaining one-dimensional reconstructed image features that can be used for distance calculation.

As an example, in step S1003, after obtaining the reconstructed image features, the image processor may apply a Hamming distance formula to the reconstructed image features and the image features corresponding to the qualified data cluster, and determine the resulting Hamming distance as the qualified Hamming distance. Correspondingly, the Hamming distance formula may be applied to the reconstructed image features and the image features corresponding to the unqualified data cluster, and the resulting Hamming distance is determined as the unqualified Hamming distance. Understandably, when the unqualified data clusters include a noise data cluster and a fuzzy data cluster, the unqualified Hamming distance includes a noise Hamming distance and a fuzzy Hamming distance.

As an example, in step S1004, after calculating the qualified Hamming distance and the unqualified Hamming distance, the image processor may compare the two. When the qualified Hamming distance is smaller than the unqualified Hamming distance, the reconstructed image features are closer to the qualified image features of the qualified data cluster, and it can therefore be determined that the image style of the reconstructed ultrasound image is consistent with the image style of the qualified ultrasound image. Correspondingly, when the qualified Hamming distance is not smaller than the unqualified Hamming distance, the reconstructed image features are closer to the unqualified image features of the unqualified data cluster, and it is therefore determined that the image style of the reconstructed ultrasound image is inconsistent with the image style of the qualified ultrasound image. Understandably, when the unqualified data clusters include a noise data cluster and a fuzzy data cluster, and the unqualified Hamming distance includes a noise Hamming distance and a fuzzy Hamming distance, the reconstructed image features are determined to be closer to the qualified data cluster only when the qualified Hamming distance is smaller than both the noise Hamming distance and the fuzzy Hamming distance; in that case, the image features of the reconstructed ultrasound image and of the qualified ultrasound image are judged to be consistent, and the image styles of the two are thereby determined to be consistent.
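Steps S1003 and S1004 can be sketched as follows. The Hamming distance is defined on binary codes, and the source does not state how the real-valued 1 x 1000 feature arrays are binarized, so this example assumes each vector is thresholded at its own median; it also assumes each data cluster is represented by a single feature vector. The names `binarize`, `hamming_distance`, and `is_consistent` are hypothetical.

```python
from typing import Dict

import numpy as np


def binarize(feature: np.ndarray) -> np.ndarray:
    """Assumed binarization: 1 where a value exceeds the vector's own median, else 0."""
    return (feature > np.median(feature)).astype(np.uint8)


def hamming_distance(a: np.ndarray, b: np.ndarray) -> int:
    """Number of positions at which the two binarized feature vectors differ."""
    return int(np.count_nonzero(binarize(a) != binarize(b)))


def is_consistent(reconstructed: np.ndarray,
                  qualified_center: np.ndarray,
                  unqualified_centers: Dict[str, np.ndarray]) -> bool:
    """Return True if the qualified Hamming distance is smaller than every unqualified one."""
    qualified_distance = hamming_distance(reconstructed, qualified_center)
    return all(
        qualified_distance < hamming_distance(reconstructed, center)
        for center in unqualified_centers.values()
    )
```

Passing a dictionary such as `{"noise": ..., "fuzzy": ...}` as `unqualified_centers` covers the case in which the qualified Hamming distance must be smaller than both the noise Hamming distance and the fuzzy Hamming distance; a single-entry dictionary covers the two-cluster case.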

In this embodiment, the Hamming distances between the reconstructed image features, obtained by dimensionality reduction of the reconstructed ultrasound image, and the pre-clustered qualified data cluster and unqualified data cluster are calculated, and the qualified Hamming distance and the unqualified Hamming distance are determined respectively. When the qualified Hamming distance is smaller than the unqualified Hamming distance, the reconstructed image features are determined to be closer to the qualified image features in the qualified data cluster, which indicates that the image style of the reconstructed ultrasound image is closer to the image style of the qualified ultrasound image. The reconstructed ultrasound image is then determined to meet the preset consistency standard, so that the image features of the reconstructed ultrasound image and of the qualified ultrasound image are determined to be consistent, and the reconstructed ultrasound image is determined to be a qualified ultrasound image with improved quality.

It should be understood that the sequence numbers of the steps in the foregoing embodiments do not imply an execution order; the execution order of each process should be determined by its function and inherent logic, and should not constitute any limitation on the implementation of the embodiments of the present invention.

In an embodiment, an ultrasound image quality control apparatus is provided, where the ultrasound image quality control apparatus corresponds to the ultrasound image quality control method in the above embodiment one to one. As shown in fig. 11, the ultrasound image quality control apparatus includes a to-be-processed ultrasound image acquisition module 1101, a quality detection module 1102, a super-resolution processing module 1103, a consistency determination module 1104, and a qualified ultrasound image acquisition module 1105. The detailed description of each functional module is as follows:

a to-be-processed ultrasound image acquisition module 1101 for acquiring a to-be-processed ultrasound image;

the quality detection module 1102 is configured to perform quality detection on the ultrasound image to be processed, and determine whether the ultrasound image to be processed is a qualified ultrasound image;

the super-resolution processing module 1103 is configured to, if the ultrasound image to be processed is an unqualified ultrasound image, perform super-resolution processing on the ultrasound image to be processed, and acquire a reconstructed ultrasound image;

a consistency judgment module 1104, configured to perform consistency judgment on the image characteristics of the reconstructed ultrasound image and the image characteristics of the qualified ultrasound image;