CN114239755A - Intelligent identification method for color steel tile buildings along railway based on deep learning

- Publication number

- CN114239755A (application no. CN202210173638.X)

- Authority

- CN

- China

- Prior art keywords

- color steel

- point

- deep learning

- detection frame

- centerpoint

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Classifications

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/21—Design or setup of recognition systems or techniques; Extraction of features in feature space; Blind source separation

- G06F18/214—Generating training patterns; Bootstrap methods, e.g. bagging or boosting

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/70—Determining position or orientation of objects or cameras

- G06T7/73—Determining position or orientation of objects or cameras using feature-based methods

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/10—Image acquisition modality

- G06T2207/10028—Range image; Depth image; 3D point clouds

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20081—Training; Learning

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- Computer Vision & Pattern Recognition (AREA)

- General Physics & Mathematics (AREA)

- Data Mining & Analysis (AREA)

- Artificial Intelligence (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Bioinformatics & Computational Biology (AREA)

- Evolutionary Biology (AREA)

- Evolutionary Computation (AREA)

- Life Sciences & Earth Sciences (AREA)

- General Engineering & Computer Science (AREA)

- Image Analysis (AREA)

Abstract

The invention relates to a deep-learning-based method for intelligently identifying color steel tile buildings along railway lines. The method combines a lidar carried by an unmanned aerial vehicle (UAV) with imagery: the images are used for intelligent instance segmentation of color steel houses, the point cloud data scanned by the lidar are used for deep learning, the distance between each color steel house and the railway is obtained algorithmically, and the house is judged to be a violation building or not. Without requiring multi-temporal images or introducing a change detection algorithm, a single efficient algorithm completes the intelligent identification of violation color steel houses.

Description

Technical Field

The invention relates to the technical field of unmanned aerial vehicle aerial photography, and in particular to a deep-learning-based method for intelligently identifying color steel tile buildings along railway lines.

Background

An illegal building is one that violates laws and regulations on urban planning and land management, either by lacking planning permission for the construction land or by changing the planning permission of a construction project without authorization. Color steel tile houses are widely used as factory warehouses and as temporary buildings on construction sites. Such buildings are not only suspected of illegal and disorderly construction; their insecurely fixed color steel tile materials can also be blown away by strong wind, creating safety hazards and disrupting railway traffic. Detecting color steel tile houses along railway tracks by efficient, information-based means is therefore of great significance.

UAV aerial photography is developing rapidly, and many Chinese cities have begun to use UAV aerial photographs to detect illegal color steel tile buildings. The approach mainly adopted at present combines UAV imagery with change detection technology, and the detected changes are finally judged by manual interpretation. Although this avoids field surveys by workers, the railway network is so large that fully manual interpretation of massive volumes of UAV aerial pictures or videos remains inefficient, and it also strains the eyesight of the interpreters.

In recent years, deep-learning-based object detection has made breakthrough progress in many fields. Combining deep-learning object detection with UAV aerial photography to intelligently detect illegal color steel tile buildings in UAV images therefore offers clear technical advantages and is feasible. Deep learning extracts the features of large numbers of color steel tile buildings in UAV aerial images; the model is trained iteratively, its parameters are continuously tuned and optimized, and the accuracy of the detection model improves until the expected detection performance is reached.

Although deep learning has been applied to urban management, few research results apply it to detecting illegal color steel tile buildings. The main challenge is as follows: because non-technical factors such as railway line management are involved, intelligent detection cannot reach 100% accuracy, so a manual checking step remains indispensable. Detection of illegal color steel tiles therefore emphasizes recall: precision is improved while a specified recall is guaranteed. To address this, an intelligent detection method suited to illegal color steel tiles along railways is developed by optimizing and combining deep learning methods on the acquired UAV image library.
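The recall-first criterion can be made concrete as a choice of confidence threshold. The sketch below is illustrative and not part of the claimed method: it assumes each detection already carries a confidence score and a flag saying whether it matched a ground-truth color steel house, and the function `select_threshold` and its parameters are hypothetical. It returns the threshold with the highest precision among those that still meet the required recall.

```python
from typing import List, Optional, Tuple


def select_threshold(scores: List[float], is_tp: List[bool], num_gt: int,
                     min_recall: float) -> Optional[Tuple[float, float, float]]:
    """Return (threshold, precision, recall) with the highest precision whose recall >= min_recall.

    scores: confidence of each detection; is_tp: whether that detection matched a ground-truth
    house; num_gt: number of ground-truth houses. Returns None if no threshold reaches min_recall.
    """
    if num_gt <= 0:
        raise ValueError("num_gt must be positive")
    if len(scores) != len(is_tp):
        raise ValueError("scores and is_tp must have the same length")
    # Accepting every detection with score >= t is the same as taking a prefix of this ranking.
    ranked = sorted(zip(scores, is_tp), key=lambda pair: pair[0], reverse=True)
    best: Optional[Tuple[float, float, float]] = None
    tp = fp = 0
    for k, (score, hit) in enumerate(ranked):
        if hit:
            tp += 1
        else:
            fp += 1
        # A threshold cannot separate equal scores, so evaluate only at the end of a tie group.
        if k + 1 < len(ranked) and ranked[k + 1][0] == score:
            continue
        precision = tp / (tp + fp)
        recall = tp / num_gt
        if recall >= min_recall and (best is None or precision > best[1]):
            best = (score, precision, recall)
    return best


# Hypothetical example: five detections, four real houses, recall of at least 75% required.
print(select_threshold([0.9, 0.8, 0.7, 0.6, 0.5], [True, True, False, True, False], 4, 0.75))
# (0.6, 0.75, 0.75)
```

Fixing a recall floor first and only then maximizing precision suits a workflow with a mandatory manual check: the check can discard false alarms, but it cannot recover houses the detector missed.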

At present, no intelligent recognition system for color steel tiles along railways is available on the market. Identifying illegal buildings along railways falls within railway inspection and is an important area that cannot be neglected. In actual operation, color steel tile houses within the railway safety protection zone are made of light materials; in bad weather such as typhoons they are easily blown off by strong wind onto the railway, seriously affecting railway operational safety. Many safety accidents have occurred in recent years, causing power failures, service interruptions and serious losses over large sections of railway. Detection and identification of color steel tiles along railway lines is therefore necessary. In 2019, the total operating mileage of China's railways was 139,000 kilometers, of which high-speed railways accounted for 35,000 kilometers. By the end of 2020, total railway mileage was expected to reach 146,000 kilometers, covering about 99% of cities with a population of 200,000 or more, including about 39,000 kilometers of high-speed railway (intercity railways included), continuing to lead the world. With mileage growing this rapidly, the market demand is also enormous.

The prior art CN110427441A discloses a method for detecting and managing hidden dangers in the external environment of a railway based on air-space-ground integration technology. It mainly relies on detection by space remote sensing, supplemented by targeted manual rechecking and problem handling. This achieves a high degree of automation from data acquisition to output of detection results, improves operational efficiency and informatization, and enables fine-grained management and analysis of detection results as well as closed-loop management from hidden-danger discovery, on-site rechecking and information entry to OA processing of hidden dangers. CN112396128A discloses an automatic labeling method for risk-source samples in the external environment along a railway, comprising: (1) collating DOM data and DLG acquisition results within the area and checking their quality; (2) extracting and screening information on the target elements related to risk sources; (3) checking and repairing topology errors in vector polygon elements; (4) color-balancing the digital orthoimage; (5) computing a regular tile grid over the digital orthoimage and cutting and outputting sample data; (6) analyzing and processing the spatial extent of sample labeling; (7) correcting the spatial position of the regular sample vector polygons; (8) generating the labeling result and an intermediate result; (9) checking the labeled data and repairing it locally; and (10) sorting and uniformly outputting the sample database data. Its advantages are that large-format digital orthoimage data can be converted into a deep learning sample library rapidly and in batches, sample labeling efficiency is effectively improved, the offset between vector acquisition results and object boundaries in the image can be corrected automatically from the sample data, and sample labeling precision is improved.

Referring to Fig. 1, CN112307873A discloses an automatic illegal-building identification method based on a fully convolutional network (FCN). Feature point coordinates are computed with the SURF algorithm for photos taken by a UAV, and a homography matrix is computed from these coordinates. The old image is preprocessed and transformed by the homography so that its feature points coincide with those of the new image, and change detection is performed on real-scene roofs by image algebra. When a pixel difference satisfying the length and width requirements is detected, the suspicious result image is passed to a subsequent FCN framework for identification; if the returned identification image contains a region of the specified pixel color, that region is an illegal building. This realizes automatic identification.

However, the above prior-art solutions have the following disadvantages. In CN110427441A, the method for detecting and managing hidden dangers in the external environment of a railway based on air-space-ground integration technology, both classification and change detection use traditional algorithms; these are simple, but their results are not as good as those of deep neural networks, so combining traditional algorithms with deep learning to process the images could be considered. In CN112396128A, the method for automatically labeling risk-source samples in the external environment along a railway from high-resolution remote-sensing aerial survey data and vector acquisition results, a multi-temporal comparison algorithm is unnecessary for UAV aerial images for cost reasons; such an algorithm is not part of intelligent identification itself, which leaves room for improvement. Training on and transforming the old images takes considerable time, and the two inconsistent data sets make training more difficult. The automatic identification method for violation buildings based on a fully convolutional network in CN112307873A shares these multi-temporal drawbacks; in addition, the SURF algorithm it uses to extract common feature points is not the best in efficiency or speed, leaving room for algorithmic improvement with a current, more efficient state-of-the-art algorithm. How to overcome these deficiencies of the prior art is an urgent problem in this technical field.

Disclosure of Invention

To overcome the defects of the prior art, the invention provides a deep-learning-based method for intelligently identifying color steel tile buildings along a railway, which adopts the following technical scheme:

A method for intelligently identifying color steel tile buildings along a railway based on deep learning comprises the following steps:

S1, acquiring original image data and point cloud data along the railway with a camera and a lidar carried by an unmanned aerial vehicle, and storing the original image data and point cloud data on a server;

S2, reading the original image data and point cloud data from a data processing server;

S3, slicing the original image data with a Swin Transformer backbone to form feature maps, while feeding the point cloud data into a CenterPoint network framework for training, and recording the relative distance between each color steel house and the railway;

S4, the Swin Transformer backbone passing through four stages, in each of which the feature dimension of each batch of partitioned feature maps is changed into a three-dimensional feature map by linear embedding, which is then fed into a Swin Transformer Block and down-sampled;

S5, after iterative training, passing the detected suspicious results to a detection module for detection, and comparing the results obtained by CenterPoint and Swin Transformer to obtain accurate position information of illegal buildings.

Further, in step S2, the data processing specifically includes: annotating the outlines of color steel houses in the original image data with the LabelImg annotation software, and solving and registering the point cloud data to generate a three-dimensional colored point cloud.

Further, in step S3, feeding the point cloud data into the CenterPoint network framework for training specifically includes setting the pre-training hyperparameters as follows: for the first 200 epochs, unfrozen training is performed with a learning rate of 0.001; unfrozen training then continues with a learning rate of 0.00001 for 5000 iterations. A total of 5500 pictures containing color steel tiles are used for training, 500 color steel tile pictures for validation, and 100 random images for testing.
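A minimal sketch of the stated schedule and data split as plain configuration. The text does not say which framework consumes these values, so the `TrainingPhase` structure and the `learning_rate_at` helper are hypothetical, and the hand-over from the first phase to the second right after epoch 200 is an assumption.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TrainingPhase:
    """One phase of the pre-training schedule (hypothetical structure)."""
    learning_rate: float
    duration: int  # how long the phase lasts
    unit: str      # "epoch" or "iteration", as stated in the text


# Values stated in the text: 200 epochs at 1e-3, then 5000 iterations at 1e-5.
SCHEDULE: List[TrainingPhase] = [
    TrainingPhase(learning_rate=1e-3, duration=200, unit="epoch"),
    TrainingPhase(learning_rate=1e-5, duration=5000, unit="iteration"),
]

# Data split stated in the text.
DATA_SPLIT: Dict[str, int] = {"train": 5500, "val": 500, "test": 100}


def learning_rate_at(epoch: int) -> float:
    """Phase-1 rate inside the first 200 epochs, phase-2 rate afterwards (assumed hand-over)."""
    first, second = SCHEDULE
    return first.learning_rate if epoch < first.duration else second.learning_rate


def split_fractions(split: Dict[str, int]) -> Dict[str, float]:
    """Share of all images assigned to each subset (6100 images in total for DATA_SPLIT)."""
    total = sum(split.values())
    return {name: count / total for name, count in split.items()}


print(learning_rate_at(0), learning_rate_at(200), split_fractions(DATA_SPLIT))
```

With this split, roughly 90% of the 6100 images are used for training.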

Further, in the first stage, the CenterPoint network framework uses CenterPoint to detect the center point of the detection box of each three-dimensional target and regresses the size, orientation and velocity of the box;

in the second stage, the CenterPoint network framework uses a refinement module: for each first-stage detection box, the point features at the box center are used to regress the box score and perform refinement.

Further, in step S4, the downsampling process specifically includes:

the picture size is set to 224 × 224 and the window size to 7 × 7, i.e., each window always holds 7 × 7 patches. The patch size is not fixed; it grows with each patch merging operation, until finally the whole picture contains a single window, i.e., 7 × 7 patches.
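The arithmetic behind this down-sampling can be checked with a short sketch. It assumes the standard Swin setting of 4 × 4-pixel patches at the first stage (the same 4 × 4 starting size used in the detailed description) and that each patch merging doubles the patch edge; the function name `swin_stage_layout` is illustrative.

```python
from typing import List, Tuple


def swin_stage_layout(image_size: int = 224, patch_size: int = 4, window_size: int = 7,
                      num_stages: int = 4) -> List[Tuple[int, int, int, int]]:
    """Return (stage, patch_edge_px, patches_per_side, windows_per_side) for each stage.

    Each window always holds window_size x window_size patches; between stages, patch
    merging doubles the patch edge and halves the number of patches per side.
    """
    layout = []
    patch_px = patch_size
    for stage in range(1, num_stages + 1):
        if image_size % patch_px:
            raise ValueError("image size is not a multiple of the patch size")
        patches_per_side = image_size // patch_px
        if patches_per_side % window_size:
            raise ValueError("patch grid cannot be split into whole windows")
        layout.append((stage, patch_px, patches_per_side, patches_per_side // window_size))
        patch_px *= 2  # patch merging: each 2 x 2 group of patches becomes one patch
    return layout


for stage, patch_px, patches, windows in swin_stage_layout():
    print(f"stage {stage}: {patch_px}x{patch_px}-px patches, {patches}x{patches} patches, "
          f"{windows}x{windows} windows")
```

For a 224 × 224 input this gives 8, 4, 2 and finally 1 window per side, matching the single 7 × 7-patch window described above.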

Further, using CenterPoint to detect the center point of the detection box of a three-dimensional target and to regress the size, orientation and velocity of the box specifically includes: the input of the CenterPoint network is lidar point cloud data; CenterPoint offers two backbone implementations, VoxelNet and PointPillars; and the output of the CenterPoint network is a class-based heatmap together with the size, rotation angle and velocity of each target.

Further, the heatmap regression specifically includes: for an input image of arbitrary size W × H × 3, a heatmap of size W/R × H/R × K is generated, where R is the output stride and K is the number of detected classes. Each element of the heatmap is 0 or 1: a value of 1 means the corresponding image location is the center of a detection box, and a value of 0 means it is background.
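A minimal sketch of the 0/1 target described here, assuming box centers are given in input-image pixels and that a center falls into output cell (⌊x/R⌋, ⌊y/R⌋). The function name and the class-first storage order (K × H/R × W/R rather than W/R × H/R × K) are illustrative choices.

```python
from typing import List, Tuple


def binary_center_heatmap(width: int, height: int, stride: int, num_classes: int,
                          centers: List[Tuple[float, float, int]]) -> List[List[List[int]]]:
    """Return a heatmap indexed [class][row][col] with shape K x (H/R) x (W/R).

    centers: (x, y, class_id) of each box center in input-image pixels. The output cell
    that contains a center is set to 1; every other cell (background) stays 0.
    """
    out_w, out_h = width // stride, height // stride
    heatmap = [[[0] * out_w for _ in range(out_h)] for _ in range(num_classes)]
    for x, y, cls in centers:
        col, row = int(x // stride), int(y // stride)
        if 0 <= col < out_w and 0 <= row < out_h:  # ignore centers outside the image
            heatmap[cls][row][col] = 1
    return heatmap


hm = binary_center_heatmap(width=16, height=8, stride=4, num_classes=2, centers=[(5.0, 6.0, 1)])
print(sum(v for cls in hm for row in cls for v in row))  # 1
```

Almost every cell of such a target is 0, which is the imbalance the Gaussian radius in the detailed description is meant to soften.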

Further, using the refinement module specifically includes: for each first-stage detection box, the point features at the box center are used to regress the box score and perform refinement. Based on the first-stage detection boxes and the backbone feature map, the point feature at the center of each face of a detection box is extracted from the feature map and fed into a fully connected network to obtain the detection confidence and the refined detection box.

Further, because the centers of the top and bottom faces of a detection box project onto the same point in the bird's-eye view (BEV), the point features at the centers of the four outward-facing side faces in the BEV are in practice selected as inputs to the fully connected network; each point feature is extracted from the backbone's BEV feature map by bilinear interpolation.
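The sampling step can be sketched as follows, assuming the box is already expressed in BEV feature-map cell coordinates (the conversion from metres via voxel size and network stride is omitted) and using a single-channel map for brevity; in practice the same interpolation is applied to every channel. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def bev_face_centers(cx: float, cy: float, length: float, width: float,
                     yaw: float) -> List[Tuple[float, float]]:
    """BEV positions of the four side-face centers of a box rotated by yaw (radians).

    The top and bottom face centers both project onto (cx, cy), so they add no new point.
    """
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    # Offsets in the box frame: front, back, left and right face centers.
    offsets = [(length / 2, 0.0), (-length / 2, 0.0), (0.0, width / 2), (0.0, -width / 2)]
    return [(cx + dx * cos_y - dy * sin_y, cy + dx * sin_y + dy * cos_y) for dx, dy in offsets]


def bilinear_sample(feature_map: Sequence[Sequence[float]], x: float, y: float) -> float:
    """Bilinearly interpolate a single-channel map at continuous (x = column, y = row).

    Coordinates are clamped to the map, so points just outside it take edge values.
    """
    h, w = len(feature_map), len(feature_map[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    top = feature_map[y0][x0] * (1 - ax) + feature_map[y0][x1] * ax
    bottom = feature_map[y1][x0] * (1 - ax) + feature_map[y1][x1] * ax
    return top * (1 - ay) + bottom * ay


# Toy 4 x 4 map whose value at (x, y) is 4*y + x; the box is in cell coordinates.
fmap = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
points = bev_face_centers(1.5, 1.5, length=2.0, width=1.0, yaw=0.0)
features = [bilinear_sample(fmap, px, py) for px, py in points]
print(features)
```

The sampled values would then be concatenated and passed to the fully connected network; the box center can be sampled the same way if it is also used as an input.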

Further, in step S5, comparing the results obtained by CenterPoint and Swin Transformer specifically includes:

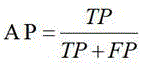

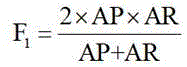

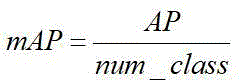

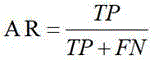

setting comparison indices, including average precision (AP), mean average precision (mAP), average recall (AR), precision, detection time, frames per second (FPS) and F1 score, whose mathematical expressions are as follows:

where AR, the average recall, is the proportion of all positive samples in the test set that are correctly identified; TP is the number of correctly classified positive samples, TN the number of correctly classified negative samples, FP the number of negative samples incorrectly classified as positive, and FN the number of positive samples incorrectly classified as negative; precision is the proportion of correctly classified positive samples among the samples identified as positive; AP evaluates a detector on a single class, and mAP averages AP over the classes, so that the performance of a multi-class detector can be evaluated;

thereby obtaining accurate position information of illegal buildings, registered with the position information output by the GPS.
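The expressions referred to above survive only as images. For reference, the conventional definitions consistent with the quantities defined in the text are given here; they are the standard forms, not a transcription of the original images. AR as defined in the text (correctly identified positives over all positives) coincides with recall; $P(R)$ is precision as a function of recall, $K$ is the number of classes, and $N_{\text{frames}}$ and $t_{\text{total}}$ (symbols introduced here) are the number of processed frames and the total processing time.

$$\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad \mathrm{AR} = \frac{TP}{TP + FN}, \qquad F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{AR}}{\mathrm{Precision} + \mathrm{AR}}$$

$$AP = \int_0^1 P(R)\,\mathrm{d}R, \qquad mAP = \frac{1}{K}\sum_{k=1}^{K} AP_k, \qquad FPS = \frac{N_{\text{frames}}}{t_{\text{total}}}$$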

The technical scheme of the invention achieves the following beneficial effects: without requiring multi-temporal images or introducing a change detection algorithm, a single efficient algorithm completes the intelligent identification of violation color steel houses. The lidar carried by the UAV is combined with imagery: the images are used for intelligent instance segmentation of color steel houses, the point cloud data scanned by the lidar are used for deep learning, the distance between each color steel house and the railway is obtained algorithmically, and the house is judged to be a violation building or not.

Drawings

Fig. 1 is a block diagram of a violation building identification method disclosed in the prior art.

Fig. 2 is a flow chart of the method of the present invention.

Fig. 3 is a network flow chart of CenterPoint according to the present invention.

Detailed Description

The invention is further described below with reference to the accompanying drawings. The following examples are only for illustrating the technical solutions of the present invention more clearly, and the protection scope of the present invention is not limited thereby. It should be noted that the following detailed description is exemplary and is intended to provide further explanation of the disclosure.

Unless defined otherwise, all technical and scientific terms used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this application belongs. It is noted that the terminology used herein is for the purpose of describing particular embodiments only and is not intended to be limiting of example embodiments according to the present application. As used herein, the singular forms "a", "an" and "the" are intended to include the plural forms as well, and it should be understood that when the terms "comprises" and/or "comprising" are used in this specification, they specify the presence of stated features, steps, operations, devices, components, and/or combinations thereof, unless the context clearly indicates otherwise.

Referring to Fig. 2, the deep-learning-based method for intelligently identifying color steel tile buildings along a railway comprises the following steps:

Step 1: the camera and lidar carried by the UAV acquire images and point cloud data along the railway and store them on the server, forming a color steel shed roof data set and a point cloud data set corresponding to the orthoimages.

Step 2: the images and point cloud data are read from the data processing server and processed as follows: the outlines of color steel houses in the original image data are annotated with the LabelImg annotation software, and the point cloud data are solved and registered to generate a three-dimensional colored point cloud.

Step 3: the Swin Transformer backbone slices the original image data into feature maps, while the point cloud data are fed into the CenterPoint network framework for training. Training uses a computer with a GTX 1080 Ti GPU and 32 GB of memory, with Python 3.6.9, PyTorch 1.4.0, torchvision 0.5.0, NumPy 1.19.1 and OpenCV 3.4.6. The pre-training hyperparameters are set as follows: for the first 200 epochs, unfrozen training is performed with a learning rate of 0.001; unfrozen training then continues with a learning rate of 0.00001 for 5000 iterations. A total of 5500 pictures containing color steel tiles are used for training, 500 color steel tile pictures for validation, and 100 random images for testing. The relative distance between each color steel house and the railway is recorded.
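The text does not specify how the house-to-railway distance is computed. As one possible sketch, assuming both the house points (for example from the registered point cloud) and the railway centerline are given in the same planar metric coordinate system, the distance can be taken as the minimum point-to-segment distance. The helper names and the `safety_distance_m` threshold are hypothetical, and no specific regulatory distance is implied.

```python
import math
from typing import Iterable, Sequence, Tuple

Point = Tuple[float, float]


def point_segment_distance(p: Point, a: Point, b: Point) -> float:
    """Euclidean distance from point p to segment ab in a planar metric coordinate system."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:  # degenerate segment: a and b coincide
        return math.hypot(px - ax, py - ay)
    # Parameter of the orthogonal projection of p onto line ab, clamped to the segment.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_len_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def house_to_railway_distance(house_points: Iterable[Point], centerline: Sequence[Point]) -> float:
    """Smallest distance from any house point to the railway centerline polyline."""
    if len(centerline) < 2:
        raise ValueError("centerline needs at least two vertices")
    segments = list(zip(centerline[:-1], centerline[1:]))
    return min(point_segment_distance(p, a, b) for p in house_points for a, b in segments)


def is_candidate_violation(distance_m: float, safety_distance_m: float) -> bool:
    """Hypothetical rule: flag a house closer to the line than an assumed safety distance."""
    return distance_m < safety_distance_m


# Toy data in metres: a straight 100 m track along the x-axis and three roof points.
track = [(0.0, 0.0), (100.0, 0.0)]
roof = [(10.0, 5.0), (20.0, 8.0), (15.0, 6.5)]
d = house_to_railway_distance(roof, track)
print(d, is_candidate_violation(d, safety_distance_m=10.0))  # 5.0 True
```

A real deployment would read the centerline from survey or GIS data in a projected coordinate system so that coordinate differences are in metres.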

Step 4: the Swin Transformer passes through four stages. In each stage, the feature dimension of each batch of partitioned feature maps is changed into a three-dimensional feature map by linear embedding, which is then fed into a Swin Transformer Block and down-sampled. The down-sampling proceeds as follows:

assume the picture size is 224 × 224 and the window size is fixed at 7 × 7. Each window always holds 7 × 7 patches, but the patch size is not fixed; it changes with each patch merging operation. For example, if the patch size is 4 × 4, it becomes 8 × 8 after patch merging: neighbouring patches are pieced together in groups of 2 × 2, which is equivalent to enlarging each patch by 2 × 2 and yields 8 × 8 patches (four windows of the previous stage thus merge into one).

After this series of operations the number of patches decreases, until finally the whole picture contains only one window of 7 × 7 patches. Down-sampling can therefore be viewed as reducing the number of patches while increasing their size.
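The 2 × 2 piecing-together can be sketched on a plain nested-list feature grid. This is an illustration under assumptions: the channel concatenation order is a free choice here, and the learned LayerNorm and linear projection that follow patch merging in Swin Transformer are left out.

```python
from typing import List

Grid = List[List[List[float]]]  # H x W x C feature map stored row-major


def patch_merge(grid: Grid) -> Grid:
    """Concatenate every 2 x 2 block of neighbouring patches along the channel axis.

    H x W x C (H, W even) -> H/2 x W/2 x 4C. Swin Transformer follows this with a linear
    layer reducing 4C to 2C; that learned projection is omitted here.
    """
    h, w = len(grid), len(grid[0])
    if h % 2 or w % 2:
        raise ValueError("grid height and width must be even")
    merged: Grid = []
    for r in range(0, h, 2):
        row = []
        for c in range(0, w, 2):
            # Channel order: top-left, bottom-left, top-right, bottom-right.
            row.append(grid[r][c] + grid[r + 1][c] + grid[r][c + 1] + grid[r + 1][c + 1])
        merged.append(row)
    return merged


# A 56 x 56 grid of 1-channel patches (stage 1 for a 224 x 224 input with 4 x 4-px patches).
grid: Grid = [[[0.0] for _ in range(56)] for _ in range(56)]
for _ in range(3):  # three merges lead into stages 2, 3 and 4
    grid = patch_merge(grid)
print(len(grid), len(grid[0]), len(grid[0][0]))  # 7 7 64
```

Because the 4C to 2C projection is omitted, channels grow fourfold per merge in this sketch rather than doubling as in Swin Transformer; the spatial result, a single 7 × 7 grid, matches the text.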

In the first stage, CenterPoint detects the center point of the detection box of each three-dimensional target and regresses the size, orientation and velocity of the box. The implementation details are as follows. The input of the network is lidar point cloud data. The 3D encoder of the network uses an existing network model; CenterPoint offers two backbone implementations, VoxelNet and PointPillars. The output of the network is a class-based heatmap together with the size, rotation angle and velocity of each target. Heatmap regression: for an image of arbitrary size W × H × 3, a heatmap of size W/R × H/R × K is generated, where K is the number of detected classes. Each element of the heatmap is 0 or 1: a value of 1 means the corresponding image location is the center of a detection box, and 0 means background. A heatmap generated this way, however, is almost entirely background. The solution is to set the Gaussian radius as σ = max(f(w, l), τ), where w and l are the width and length of the box, τ = 2 is the minimum Gaussian radius, and f is the Gaussian radius function of CenterNet.

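A sketch of the Gaussian target, treating the text's σ = max(f(w, l), τ) as the splat radius in heatmap cells. The radius function follows the three-case form widely reused from CornerNet/CenterNet-style code; the overlap value 0.1, the integer truncation and the convention sigma = (2 · radius + 1) / 6 are assumptions, not values stated in the text. Function names are hypothetical.

```python
import math
from typing import List


def centernet_gaussian_radius(height: float, width: float, min_overlap: float) -> float:
    """Gaussian radius f in the three-case form used by CornerNet/CenterNet-style code.

    height and width are the box size in heatmap cells; min_overlap is the IoU a box
    shifted within the radius should keep. Its value here is an assumption.
    """
    b1 = height + width
    c1 = width * height * (1 - min_overlap) / (1 + min_overlap)
    r1 = (b1 + math.sqrt(b1 ** 2 - 4 * c1)) / 2
    b2 = 2 * (height + width)
    c2 = (1 - min_overlap) * width * height
    r2 = (b2 + math.sqrt(b2 ** 2 - 16 * c2)) / 2
    a3 = 4 * min_overlap
    b3 = -2 * min_overlap * (height + width)
    c3 = (min_overlap - 1) * width * height
    r3 = (b3 + math.sqrt(b3 ** 2 - 4 * a3 * c3)) / 2
    return min(r1, r2, r3)


def target_radius(box_w: float, box_l: float, min_overlap: float = 0.1, tau: int = 2) -> int:
    """sigma = max(f(w, l), tau) from the text, with tau = 2 as the minimum radius."""
    return max(int(centernet_gaussian_radius(box_l, box_w, min_overlap)), tau)


def draw_gaussian(heatmap: List[List[float]], cx: int, cy: int, radius: int) -> None:
    """Splat a 2D Gaussian with peak 1.0 at (cx, cy), keeping the element-wise maximum.

    sigma = (2 * radius + 1) / 6 is the CenterNet-style convention, assumed here.
    """
    sigma = (2 * radius + 1) / 6.0
    h, w = len(heatmap), len(heatmap[0])
    for y in range(max(0, cy - radius), min(h, cy + radius + 1)):
        for x in range(max(0, cx - radius), min(w, cx + radius + 1)):
            value = math.exp(-((x - cx) ** 2 + (y - cy) ** 2) / (2 * sigma ** 2))
            heatmap[y][x] = max(heatmap[y][x], value)


hm = [[0.0] * 9 for _ in range(9)]
r = target_radius(box_w=0.5, box_l=0.5)  # tiny box: the minimum radius tau = 2 applies
draw_gaussian(hm, cx=4, cy=4, radius=r)
print(r, hm[4][4])  # 2 1.0
```

The minimum radius τ guarantees that even very small boxes contribute a few non-zero cells around their center instead of a single isolated 1.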
In the second stage, a refinement module is designed: for each first-stage detection box, the point features at the box center are used to regress the box score and perform refinement. Based on the first-stage detection boxes and the backbone feature map, the point feature at the center of each face of a detection box is extracted from the feature map and fed into a fully connected network to obtain the detection confidence and the refined detection box. Specifically, because the centers of the top and bottom faces of a detection box project onto the same point in the bird's-eye view, the point features at the centers of the four outward-facing side faces in the bird's-eye view are in practice selected as inputs to the fully connected network. Each point feature is extracted from the backbone's bird's-eye-view feature map by bilinear interpolation.

Step 5: after iterative training, the detected suspicious results (a suspicious result is a test image set in which the recognition rate of color steel tile building structures exceeds 70%) are passed to the detection module for detection, and the results obtained by CenterPoint and Swin Transformer are compared. The comparison indices include average precision (AP), mean average precision (mAP), average recall (AR), precision, detection time, frames per second (FPS) and F1 score.

Strictly speaking, model size also matters in some applications, so it can serve as an additional evaluation dimension alongside the indices above. Their mathematical expressions and the quantities involved are as follows.

Here AR, the average recall, is the proportion of all positive samples in the test set that are correctly identified as positive; TP is the number of correctly classified positive samples and TN the number of correctly classified negative samples. FP is the number of negative samples misclassified as positive, and FN the number of positive samples misclassified as negative. Precision is the proportion of correctly classified positive samples among the samples identified as positive.

AP evaluates a detector on a single class, and mAP averages AP over the classes, so the performance of a multi-class detector can be evaluated.
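A minimal sketch of how these indices can be computed from counts and from a ranked list of detections. The all-point interpolated AP used here is one common convention (the text does not state which AP variant is used), and the function names are illustrative.

```python
from typing import Dict, List, Tuple


def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision = TP/(TP+FP), recall = TP/(TP+FN), F1 = their harmonic mean."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def average_precision(recalls: List[float], precisions: List[float]) -> float:
    """All-point interpolated AP: area under the precision envelope of the PR curve.

    recalls/precisions are cumulative values for detections sorted by descending
    confidence, so recalls are non-decreasing.
    """
    r = [0.0] + list(recalls) + [1.0]
    p = [0.0] + list(precisions) + [0.0]
    # Replace each precision by the maximum precision reached at any higher recall.
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])
    return sum((r[i + 1] - r[i]) * p[i + 1] for i in range(len(r) - 1))


def mean_average_precision(ap_per_class: Dict[str, float]) -> float:
    """mAP: unweighted mean of the per-class AP values."""
    return sum(ap_per_class.values()) / len(ap_per_class)


def frames_per_second(num_frames: int, total_seconds: float) -> float:
    """FPS: processed frames divided by total processing time."""
    return num_frames / total_seconds


print(precision_recall_f1(tp=8, fp=2, fn=2))
print(average_precision([0.5, 1.0], [1.0, 0.5]))  # 0.75
```

With a single class (color steel tile buildings), mAP reduces to the AP of that class.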

Finally, accurate position information of illegal buildings, registered with the position information output by the GPS, is obtained.

In step 4, the Shifted Window based Self-Attention mechanism improves the computational efficiency of the Swin Transformer:

to improve model efficiency, self-attention is computed within local windows that partition the image evenly without overlap. Suppose each window contains M × M patches; for an image of h × w patches with C channels, the computational complexities of a global MSA module and of a window-based W-MSA module are:

$$\Omega(\mathrm{MSA}) = 4hwC^2 + 2(hw)^2C$$

$$\Omega(\text{W-MSA}) = 4hwC^2 + 2M^2hwC$$

The former is quadratic in the number of patches hw, whereas the latter is linear in hw when M is fixed (7 by default). Global self-attention is generally unaffordable for large hw, whereas window-based self-attention is scalable.
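Plugging numbers into the two complexity expressions shows the difference in scaling. The stage-1 setting below (56 × 56 patches, C = 96, M = 7) is an assumption corresponding to a 224 × 224 input with 4 × 4-pixel patches and the Swin-T channel width; the function names are illustrative.

```python
def msa_complexity(h: int, w: int, c: int) -> int:
    """Omega(MSA) = 4hwC^2 + 2(hw)^2 C for global self-attention over h x w patches."""
    return 4 * h * w * c * c + 2 * (h * w) ** 2 * c


def wmsa_complexity(h: int, w: int, c: int, m: int = 7) -> int:
    """Omega(W-MSA) = 4hwC^2 + 2 M^2 hw C for self-attention inside M x M windows."""
    return 4 * h * w * c * c + 2 * m * m * h * w * c


# Assumed stage-1 setting: 56 x 56 patches (224 x 224 input, 4 x 4-px patches), C = 96.
for size in (56, 112):
    full, windowed = msa_complexity(size, size, 96), wmsa_complexity(size, size, 96)
    print(f"{size}x{size}: MSA {full:,}  W-MSA {windowed:,}  ratio {full / windowed:.1f}")
```

Doubling the side length multiplies the W-MSA cost by exactly 4, while the quadratic (hw)² term makes global MSA grow much faster.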

In step 4, the principal contributions of CenterPoint are as follows:

Representing targets as points simplifies three-dimensional object detection. Unlike targets in images, three-dimensional targets in a point cloud do not follow any particular orientation, so box-based detectors have difficulty enumerating all orientations or fitting an axis-aligned detection box to a rotated object. The center-based approach does not have this problem: a point has no intrinsic orientation, which greatly reduces the search space while preserving the rotational invariance of the target.

The center-based method also simplifies tracking, since it needs no additional motion model (e.g., Kalman filtering); the tracking computation time is negligible, adding only about 1 millisecond on top of detection.
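A minimal sketch of detection-only tracking in this spirit: each current center is moved back by its predicted velocity times the frame interval and matched greedily to the closest previous track center. The tuple layout, the `max_dist` gate and the function name are illustrative assumptions; track creation and deletion are omitted.

```python
import math
from typing import Dict, List, Tuple

Detection = Tuple[float, float, float, float]  # (x, y, vx, vy) in BEV metres and metres/second


def greedy_center_match(detections: List[Detection], prev_centers: Dict[int, Tuple[float, float]],
                        dt: float, max_dist: float) -> Dict[int, int]:
    """Match current detections to previous track IDs by closest back-projected center.

    Each detection center is moved back by velocity * dt; candidate pairs within max_dist
    are accepted from the smallest distance upward, each detection and track used once.
    Returns {detection_index: track_id}; unmatched detections would start new tracks.
    """
    candidates = []
    for i, (x, y, vx, vy) in enumerate(detections):
        px, py = x - vx * dt, y - vy * dt  # predicted position in the previous frame
        for track_id, (tx, ty) in prev_centers.items():
            dist = math.hypot(px - tx, py - ty)
            if dist <= max_dist:
                candidates.append((dist, i, track_id))
    candidates.sort()
    matched: Dict[int, int] = {}
    used_tracks = set()
    for _, i, track_id in candidates:
        if i not in matched and track_id not in used_tracks:
            matched[i] = track_id
            used_tracks.add(track_id)
    return matched


prev = {1: (0.0, 0.0), 2: (10.0, 0.0)}
dets = [(10.5, 0.0, 1.0, 0.0), (1.0, 0.0, 1.0, 0.0)]
print(greedy_center_match(dets, prev, dt=0.5, max_dist=2.0))  # {0: 2, 1: 1}
```

Because matching reduces to distance comparisons between a handful of centers, its cost is tiny compared with the detection network itself.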

A refinement module based on point features serves as the second stage of the network. It preserves prediction performance while being faster than most existing refinement methods. The rationale is that a "tracking-by-detection" multi-target tracking pipeline is very sensitive to false predictions in the detection stage; predicting the bbox score in the second stage reduces the false predictions produced by CenterPoint's first stage, improving detection quality and, in turn, the tracking results.

Centrpoint method first stage:

FIG. 3 is a flow chart of a network of CenterPoint with the input of the network being the radar point cloud data. The 3D encoder part of the network uses the existing network model, and the CenterPoint provides two backbone network implementation modes, namely VoxelNet and PointPillar.

The network outputs a class-specific heatmap together with the size, rotation angle, and velocity of each object. The heatmap is generated in a manner similar to CenterNet.
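A CenterNet-style heatmap places a 2D Gaussian peak at each object center in the channel of its class, and detections are later read back as local maxima. The sketch below assumes a fixed Gaussian width `sigma` (CenterNet and CenterPoint derive the radius from the object size instead) and a 3 × 3 local-maximum test with a hypothetical threshold of 0.3; it illustrates the idea rather than reproducing either codebase.

```python
import numpy as np


def draw_center_heatmap(shape, centers, sigma=2.0):
    """Render one 2D Gaussian peak per object center.

    shape   : (num_classes, H, W) of the output heatmap
    centers : list of (class_id, row, col) in feature-map cell coordinates
    sigma   : Gaussian standard deviation in cells (fixed here; assumption)
    """
    _, H, W = shape
    heatmap = np.zeros(shape, dtype=np.float32)
    rows = np.arange(H, dtype=np.float32)[:, None]
    cols = np.arange(W, dtype=np.float32)[None, :]
    for cls, r, c in centers:
        g = np.exp(-((rows - r) ** 2 + (cols - c) ** 2) / (2.0 * sigma**2))
        # Overlapping objects of the same class keep the element-wise maximum.
        np.maximum(heatmap[cls], g, out=heatmap[cls])
    return heatmap


def extract_peaks(heatmap, threshold=0.3):
    """Return (class, row, col, score) for cells that are 3x3 local maxima above threshold."""
    _, H, W = heatmap.shape
    padded = np.pad(heatmap, ((0, 0), (1, 1), (1, 1)), mode="constant", constant_values=-np.inf)
    neighbours = [padded[:, dr:dr + H, dc:dc + W] for dr in range(3) for dc in range(3)]
    local_max = np.max(np.stack(neighbours), axis=0)
    keep = (heatmap == local_max) & (heatmap > threshold)
    return [(int(k), int(r), int(c), float(heatmap[k, r, c])) for k, r, c in zip(*np.nonzero(keep))]
```

The 3 × 3 local-maximum test plays the role of non-maximum suppression: each Gaussian contributes exactly one peak, so each object yields one center.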

CenterPoint, second stage:

Given the first-stage detection boxes and the backbone feature map, the authors extract a point feature at the center of each face of the detection box from the feature map and feed these features into a fully connected network (panel (d) of the first figure) to obtain a detection confidence score and a refined detection box. Specifically, because the centers of the top and bottom faces of the detection box coincide in the bird's-eye view (BEV), the features actually selected as input to the fully connected network are those at the BEV projections of the centers of the four outward-facing side faces (the projections of these four points are shown in panel (c) of the first figure). In the actual extraction, each point feature is obtained from the backbone's BEV feature map by bilinear interpolation.
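The second-stage feature extraction can be sketched as follows. The code computes the BEV projections of the centers of a box's four side faces from its center, size and heading, bilinearly samples a (C, H, W) BEV feature map at those points, and concatenates the samples into the vector that would be fed to the fully connected network. The `to_pixel` mapping from world coordinates to feature-map coordinates and all function names are hypothetical, and the fully connected network itself is omitted.

```python
import math
from typing import Callable, Tuple

import numpy as np


def side_face_centers_bev(cx, cy, length, width, yaw):
    """BEV projections of the centers of the box's front, back, left and right faces."""
    c, s = math.cos(yaw), math.sin(yaw)
    offsets = [(length / 2, 0.0), (-length / 2, 0.0), (0.0, width / 2), (0.0, -width / 2)]
    return [(cx + c * dx - s * dy, cy + s * dx + c * dy) for dx, dy in offsets]


def bilinear_sample(feature_map: np.ndarray, x: float, y: float) -> np.ndarray:
    """Bilinearly interpolate a (C, H, W) map at continuous coords (x = column, y = row)."""
    _, H, W = feature_map.shape
    x = min(max(x, 0.0), W - 1.0)  # clamp to the valid sampling range
    y = min(max(y, 0.0), H - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, W - 1), min(y0 + 1, H - 1)
    wx, wy = x - x0, y - y0
    top = (1 - wx) * feature_map[:, y0, x0] + wx * feature_map[:, y0, x1]
    bottom = (1 - wx) * feature_map[:, y1, x0] + wx * feature_map[:, y1, x1]
    return (1 - wy) * top + wy * bottom


def second_stage_input(
    feature_map: np.ndarray,
    box: Tuple[float, float, float, float, float],
    to_pixel: Callable[[float, float], Tuple[float, float]],
) -> np.ndarray:
    """Concatenate the sampled features of the four face centers into one input vector."""
    feats = [bilinear_sample(feature_map, *to_pixel(px, py)) for px, py in side_face_centers_bev(*box)]
    return np.concatenate(feats)
```

Bilinear interpolation is needed because the face centers of a rotated box almost never fall exactly on feature-map cell centers.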

The invention provides a deep-learning-based intelligent identification method for illegal color steel tile buildings along high-speed railways. The invention has the following characteristics:

1. Railway images and point cloud data along the line are collected by a camera and a LiDAR carried by an unmanned aerial vehicle, so the data sources are more reliable and diverse than ordinary data.
2. The raw image data is partitioned into patches and converted into feature maps by the Swin Transformer network.
3. The Shifted Window based Self-Attention mechanism improves the computational efficiency of the Swin Transformer, making it markedly faster than conventional Transformer methods that use global self-attention.
4. CenterPoint detects the center point of each 3D object detection box and regresses the box's size, orientation, and velocity.

The above describes only preferred embodiments of the present invention. It should be noted that those skilled in the art may make several modifications and variations without departing from the technical principle of the present invention, and these modifications and variations should also be regarded as falling within the protection scope of the present invention.

Claims (10)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202210173638.XA CN114239755A (en) | 2022-02-25 | 2022-02-25 | Intelligent identification method for color steel tile buildings along railway based on deep learning |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202210173638.XA CN114239755A (en) | 2022-02-25 | 2022-02-25 | Intelligent identification method for color steel tile buildings along railway based on deep learning |

Publications (1)

| Publication Number | Publication Date |
|---|---|
| CN114239755A | 2022-03-25 |

Family

ID=80748089

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CN202210173638.XA Pending CN114239755A (en) | 2022-02-25 | 2022-02-25 | Intelligent identification method for color steel tile buildings along railway based on deep learning |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN114239755A (en) |


Citations (8)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN103605978A (en) * | 2013-11-28 | 2014-02-26 | 中国科学院深圳先进技术研究院 | Urban illegal building identification system and method based on three-dimensional live-action data |
| US20140313330A1 (en) * | 2013-04-19 | 2014-10-23 | James Carey | Video identification and analytical recognition system |
| CN107871125A (en) * | 2017-11-14 | 2018-04-03 | 深圳码隆科技有限公司 | Architecture against regulations recognition methods, device and electronic equipment |
| CN109753928A (en) * | 2019-01-03 | 2019-05-14 | 北京百度网讯科技有限公司 | Illegal building identification method and device |
| CN110765894A (en) * | 2019-09-30 | 2020-02-07 | 杭州飞步科技有限公司 | Object detection method, apparatus, device, and computer-readable storage medium |
| CN113269717A (en) * | 2021-04-09 | 2021-08-17 | 中国科学院空天信息创新研究院 | Building detection method and device based on remote sensing image |
| CN113378642A (en) * | 2021-05-12 | 2021-09-10 | 三峡大学 | Method for detecting illegal occupation buildings in rural areas |
| CN113591804A (en) * | 2021-09-27 | 2021-11-02 | 阿里巴巴达摩院(杭州)科技有限公司 | Image feature extraction method, computer-readable storage medium, and computer terminal |


2022

- 2022-02-25 CN CN202210173638.XA patent/CN114239755A/en active Pending


Non-Patent Citations (4)

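| Title |
|---|
| 3D视觉工坊 (3D Vision Workshop): "CenterPoint: 3D Object Detection and Tracking Based on Point Cloud Data", 《HTTPS://ZHUANLAN.ZHIHU.COM/P/356431540》 * |
| HAOYI XIU et al.: "Collapsed Building Detection Using 3D Point Clouds and Deep Learning", 《REMOTE SENSING》 * |
| Shi Guowu (施国武) et al.: "Automatic Building Extraction from UAV Imagery Based on Deep Learning", 《Surveying and Mapping of Geology and Mineral Resources (地矿测绘)》 * |
| 炼己者: "Key Points for Understanding Swin Transformer", 《HTTPS://EVENTS.JIANSHU.IO/P/0635969F478B》 * |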

Cited By (4)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN114842351A (en) * | 2022-04-11 | 2022-08-02 | 中国人民解放军战略支援部队航天工程大学 | Remote sensing image semantic change detection method based on twin transforms |
| CN114842351B (en) * | 2022-04-11 | 2025-05-02 | 中国人民解放军战略支援部队航天工程大学 | A Semantic Change Detection Method for Remote Sensing Images Based on Siamese Transformers |
| CN119071851A (en) * | 2024-09-25 | 2024-12-03 | 福州大学 | Slicing partitioning and collaborative offloading method for air-ground integrated network |
| CN119071851B (en) * | 2024-09-25 | 2025-10-28 | 福州大学 | Slicing dividing and collaborative unloading method for space-air-ground integrated network |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| Yang et al. | Deep learning‐based bolt loosening detection for wind turbine towers | |
| KR102507501B1 (en) | Artificial Intelligence-based Water Quality Contaminant Monitoring System and Method | |
| Bu et al. | A UAV photography–based detection method for defective road marking | |
| Chen et al. | A novel vehicle tracking and speed estimation with varying UAV altitude and video resolution | |
| CN110852164A (en) | YOLOv3-based method and system for automatically detecting illegal building | |
| CN115995058A (en) | Artificial intelligence-based online monitoring method for power transmission channel safety | |
| CN116977572B (en) | Building elevation structure extraction method for multi-scale dynamic graph convolution | |
| CN114239755A (en) | Intelligent identification method for color steel tile buildings along railway based on deep learning | |
| Shao et al. | PTZ camera-based image processing for automatic crack size measurement in expressways | |
| Yang et al. | YOLOX with CBAM for insulator detection in transmission lines | |
| Li et al. | An efficient point cloud place recognition approach based on transformer in dynamic environment | |
| CN117218524B (en) | Urban violation area identification method based on space-sky-earth multi-view image collaboration | |
| Martinelli et al. | Object detection and localisation in thermal images by means of uav/drone | |
| Meivel et al. | Remote sensing analysis of the lidar drone mapping system for detecting damages to buildings, roads, and bridges using the faster cnn method | |
| Xiao et al. | UAV video vehicle detection: Benchmark and baseline | |
| CN117036319A (en) | Visibility level detection method based on monitoring camera image | |
| Fu et al. | LiDAR-camera fusion: dual-scale correction for vehicle multi-object detection and trajectory extraction | |
| Su et al. | Local fusion attention network for semantic segmentation of building facade point clouds | |
| CN110826478A (en) | A method for identifying illegal construction in aerial photography based on adversarial network | |
| CN120411120A (en) | A method and system for power transmission image defect detection and defect deduplication based on deep learning image segmentation algorithm | |
| Han et al. | Deep learning-based multi-category disease semantic image segmentation detection for concrete structures using the Res-Unet model | |
| CN110765900B (en) | A method and system for automatically detecting illegal buildings based on DSSD | |
| Wang et al. | Deep learning–based detection of vehicle axle type with images collected via UAV | |
| CN115035507B (en) | Intelligent mobile vehicle inspection device and method based on Beidou positioning and visual SLAM | |
| Quang et al. | Enhancing pedestrian detection in urban areas using high-resolution satellite imagery and CNN Models | |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | RJ01 | Rejection of invention patent application after publication | Application publication date: 20220325 |