CN114155569B - Cosmetic progress detection method, device, equipment and storage medium - Google Patents

Cosmetic progress detection method, device, equipment and storage medium

- Publication number

- CN114155569B (application CN202111015242.4A)

- Authority

- CN

- China

- Prior art keywords

- image

- makeup

- frame image

- area

- target

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Classifications

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/22—Matching criteria, e.g. proximity measures

Landscapes

- Engineering & Computer Science (AREA)

- Data Mining & Analysis (AREA)

- Theoretical Computer Science (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Bioinformatics & Computational Biology (AREA)

- Artificial Intelligence (AREA)

- Evolutionary Biology (AREA)

- Evolutionary Computation (AREA)

- Physics & Mathematics (AREA)

- General Engineering & Computer Science (AREA)

- General Physics & Mathematics (AREA)

- Life Sciences & Earth Sciences (AREA)

- Image Processing (AREA)

- Image Analysis (AREA)

Abstract

The present application provides a makeup progress detection method, apparatus, device, and storage medium. The method includes: acquiring a real-time makeup video of a user who is currently applying a specific makeup look; and determining the user's current makeup progress for that look according to an initial frame image and a current frame image of the real-time makeup video. The application determines makeup progress by comparing the current frame image of the user's makeup process with the initial frame image. Progress is detected through image processing alone, and the detection accuracy is high; the user's progress can be detected in real time for makeup steps such as highlight, contouring, blush, foundation, concealer, eye shadow, eyeliner, and eyebrows. No deep learning model is needed, so the computation and cost are low, the processing load on the server is reduced, the efficiency of makeup progress detection is improved, and its real-time requirements can be met.

Description

Technical field

The present application belongs to the technical field of image processing, and specifically relates to a makeup progress detection method, apparatus, device, and storage medium.

Background

Makeup has become an essential part of daily life for many people, and the makeup process involves many steps. If makeup progress could be fed back to the user in real time, it would greatly reduce the effort the user spends on makeup and save the user time.

At present, some related technologies use deep learning models to provide functions such as virtual makeup try-on, skin tone detection, and personalized product recommendation. All of these functions require collecting a large number of face images in advance to train the deep learning model.

However, face images are private user data, and it is difficult to collect them in large quantities. Model training also consumes substantial computing resources and is costly. Model accuracy trades off against real-time performance: makeup progress detection must capture the user's facial information in real time to determine the current makeup progress, so its real-time requirements are high, and deep learning models that can meet those requirements are not sufficiently accurate.

Summary of the invention

The present application provides a makeup progress detection method, apparatus, device, and storage medium that determine the current makeup progress from the difference between the initial frame image and the current frame image. The detection accuracy is high, the computation and cost are low, the processing load on the server is reduced, the efficiency of makeup progress detection is improved, and its real-time requirements can be met.

An embodiment of the first aspect of the present application provides a makeup progress detection method, including:

acquiring a real-time makeup video of a user who is currently applying a specific makeup look;

determining the user's current makeup progress for the specific makeup look according to an initial frame image and a current frame image of the real-time makeup video.

In some embodiments of the present application, the specific makeup look includes highlight makeup or contouring makeup; determining the user's current makeup progress for the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

acquiring at least one target makeup area corresponding to the specific makeup look;

according to the target makeup area, acquiring a first target area image corresponding to the specific makeup look from the initial frame image, and acquiring a second target area image corresponding to the specific makeup look from the current frame image;

determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image.

In some embodiments of the present application, acquiring the first target area image corresponding to the specific makeup look from the initial frame image according to the target makeup area includes:

detecting first face key points corresponding to the initial frame image;

acquiring a face area image corresponding to the initial frame image according to the first face key points;

acquiring the first target area image corresponding to the specific makeup look from the face area image according to the first face key points and the target makeup area.

In some embodiments of the present application, extracting the first target area image corresponding to the specific makeup look from the face area image according to the first face key points and the target makeup area includes:

determining, from the first face key points, one or more target key points located on the contour of the area that corresponds to the target makeup area in the face area image;

generating a mask image corresponding to the face area image according to the target key points corresponding to the target makeup area;

performing an AND operation on the mask image and the face area image to obtain the first target area image corresponding to the specific makeup look.

In some embodiments of the present application, generating the mask image corresponding to the face area image according to the target key points corresponding to the target makeup area includes:

if a target makeup area has multiple corresponding target key points, determining each edge coordinate of that target makeup area in the face area image according to the target key points, and setting the pixel values of all pixels within the area enclosed by the edge coordinates to a preset value, to obtain the mask area corresponding to the target makeup area;

if a target makeup area has a single corresponding target key point, drawing an elliptical area of a preset size centered on that target key point, and setting the pixel values of all pixels within the elliptical area to the preset value, to obtain the mask area corresponding to the target makeup area;

setting the pixel values of all pixels outside the mask areas to zero, to obtain the mask image corresponding to the face area image.
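The mask construction and AND operation described above can be sketched with NumPy as follows. This is a minimal illustration, not the patented implementation: the names `polygon_mask`, `ellipse_mask`, `build_mask`, and `apply_mask`, the preset value 255, and the default ellipse size are all assumptions, and the polygon is filled with a plain even-odd test for simplicity.

```python
import numpy as np

PRESET_VALUE = 255  # assumed preset value for pixels inside a makeup area


def polygon_mask(shape, points):
    """Boolean mask of the area enclosed by contour key points (even-odd rule)."""
    h, w = shape
    ys, xs = np.mgrid[0:h, 0:w]
    inside = np.zeros(shape, dtype=bool)
    n = len(points)
    for i in range(n):
        x0, y0 = points[i]
        x1, y1 = points[(i + 1) % n]
        if y0 == y1:
            continue  # a horizontal edge never crosses a horizontal ray
        crosses = (y0 > ys) != (y1 > ys)
        x_at_y = x0 + (ys - y0) * (x1 - x0) / (y1 - y0)
        inside ^= crosses & (xs < x_at_y)
    return inside


def ellipse_mask(shape, center, axes):
    """Boolean mask of an ellipse with semi-axes `axes` centered on one key point."""
    h, w = shape
    ys, xs = np.mgrid[0:h, 0:w]
    cx, cy = center
    ax, ay = axes
    return ((xs - cx) / ax) ** 2 + ((ys - cy) / ay) ** 2 <= 1.0


def build_mask(shape, areas, ellipse_axes=(4, 2)):
    """One mask image for all target makeup areas; pixels outside stay zero."""
    mask = np.zeros(shape, dtype=np.uint8)
    for points in areas:
        if len(points) == 1:
            region = ellipse_mask(shape, points[0], ellipse_axes)
        else:
            region = polygon_mask(shape, points)
        mask[region] = PRESET_VALUE
    return mask


def apply_mask(face, mask):
    """Bitwise AND of an H x W x 3 uint8 face image with an H x W mask image."""
    return face & mask[..., None]
```

Each entry in `areas` is a list of `(x, y)` key points: a list with several points is filled as a polygon, and a list with one point becomes an ellipse. With a preset value of 255, the AND leaves pixels inside the mask unchanged and zeroes everything else.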

In some embodiments of the present application, the specific makeup look includes blush makeup; determining the user's current makeup progress for the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

acquiring at least one target makeup area corresponding to the specific makeup look;

generating a makeup mask map according to the target makeup area;

determining the current makeup progress corresponding to the current frame image according to the makeup mask map, the initial frame image, and the current frame image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the makeup mask map, the initial frame image, and the current frame image includes:

taking the makeup mask map as a reference, acquiring a first target area image for makeup application from the initial frame image, and acquiring a second target area image for makeup application from the current frame image;

determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image.

In some embodiments of the present application, the specific makeup look includes eyeliner makeup; determining the user's current makeup progress for the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

acquiring makeup mask maps corresponding to the initial frame image and the current frame image;

simulating, from the initial frame image, a result image showing the completed eyeliner makeup;

determining the current makeup progress corresponding to the current frame image according to the makeup mask maps, the result image, the initial frame image, and the current frame image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the makeup mask maps, the result image, the initial frame image, and the current frame image includes:

taking the makeup mask map corresponding to the initial frame image as a reference, acquiring a first target area image for makeup application from the initial frame image;

according to the makeup mask map corresponding to the current frame image, acquiring a second target area image for makeup application from the current frame image;

acquiring a third target area image for eyeliner makeup according to the result image;

determining the current makeup progress corresponding to the current frame image according to the first target area image, the second target area image, and the third target area image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the first target area image, the second target area image, and the third target area image includes:

converting the first target area image, the second target area image, and the third target area image each into an image containing the saturation channel in the HLS color space;

determining the current makeup progress corresponding to the current frame image according to the converted first, second, and third target area images.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the converted first target area image, second target area image, and third target area image includes:

calculating a first average pixel value of the converted first target area image, a second average pixel value of the converted second target area image, and a third average pixel value of the converted third target area image;

calculating a first difference between the second average pixel value and the first average pixel value, and a second difference between the third average pixel value and the first average pixel value;

calculating the ratio of the first difference to the second difference to obtain the current makeup progress corresponding to the current frame image.
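As a worked illustration of this ratio, the sketch below uses the standard-library `colorsys` module to obtain HLS saturation. The function names, the representation of each target area as a list of RGB pixels, the zero-denominator guard, and the clamping of the result to [0, 1] are assumptions added for the example; the source only specifies the means, the two differences, and their ratio.

```python
import colorsys


def mean_saturation(pixels):
    """Average HLS saturation of a list of (r, g, b) pixels with 0-255 values."""
    values = [
        colorsys.rgb_to_hls(r / 255, g / 255, b / 255)[2]  # (h, l, s) -> s
        for r, g, b in pixels
    ]
    return sum(values) / len(values) if values else 0.0


def eyeliner_progress(initial, current, simulated):
    """Ratio of (current - initial) to (simulated result - initial) saturation."""
    m1 = mean_saturation(initial)    # first average pixel value
    m2 = mean_saturation(current)    # second average pixel value
    m3 = mean_saturation(simulated)  # third average pixel value
    first_diff = m2 - m1
    second_diff = m3 - m1
    if second_diff == 0:
        return 0.0  # assumed guard: no measurable change expected
    # Clamping is an assumption so that noise cannot push progress outside 0..1.
    return min(1.0, max(0.0, first_diff / second_diff))
```

When the current frame equals the initial frame the ratio is 0, and when it matches the simulated result the ratio is 1; intermediate frames fall in between.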

In some embodiments of the present application, before determining the current makeup progress corresponding to the current frame image according to the first target area image, the second target area image, and the third target area image, the method further includes:

aligning the first target area image and the second target area image;

aligning the first target area image and the third target area image.

In some embodiments of the present application, aligning the first target area image and the second target area image includes:

binarizing the first target area image and the second target area image separately, to obtain a first binarized mask image corresponding to the first target area image and a second binarized mask image corresponding to the second target area image;

performing an AND operation on the first binarized mask image and the second binarized mask image, to obtain a second mask image corresponding to the intersection of the first target area image and the second target area image.

In some embodiments of the present application, aligning the first target area image and the second target area image further includes:

acquiring the face area image corresponding to the initial frame image and the face area image corresponding to the result image;

performing an AND operation on the second mask image and the face area image corresponding to the initial frame image, to obtain a new first target area image corresponding to the initial frame image;

performing an AND operation on the second mask image and the face area image corresponding to the result image, to obtain a new second target area image corresponding to the result image.
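The alignment steps above amount to keeping only the pixels that both target area images cover. A minimal NumPy sketch follows; the names `binarize` and `align_regions` are hypothetical, and treating any non-zero pixel as "inside the region" is an assumed binarization rule.

```python
import numpy as np


def binarize(region):
    """1 where an H x W x 3 region image has any non-zero channel, else 0."""
    return (region.max(axis=-1) > 0).astype(np.uint8)


def align_regions(region_a, region_b, face_a, face_b):
    """Re-extract both regions using only their common (intersecting) area."""
    common = binarize(region_a) & binarize(region_b)  # the second mask image
    keep = (common * 255)[..., None]                  # 255 inside, 0 outside
    return face_a & keep, face_b & keep, common
```

Here `face_a` and `face_b` are the two face area images from which the regions were originally taken; the returned images are the new target area images, which cover exactly the same pixels and can therefore be compared position by position.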

In some embodiments of the present application, acquiring the makeup mask maps corresponding to the initial frame image and the current frame image includes:

acquiring an eyeliner style map selected by the user;

if the user's eyes are open in the initial frame image, acquiring the open-eye style map corresponding to the eyeliner style map, and using the open-eye style map as the makeup mask map corresponding to the initial frame image;

if the user's eyes are closed in the initial frame image, acquiring the closed-eye style map corresponding to the eyeliner style map, and using the closed-eye style map as the makeup mask map corresponding to the initial frame image.

In some embodiments of the present application, the specific makeup look includes eye shadow makeup; determining the user's current makeup progress for the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

acquiring an eye shadow mask map;

for each target makeup area of the eye shadow makeup, splitting out from the eye shadow mask map the makeup mask map corresponding to that target makeup area;

determining the current makeup progress corresponding to the current frame image according to the initial frame image, the current frame image, and the makeup mask map corresponding to each target makeup area.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the initial frame image, the current frame image, and the makeup mask map corresponding to each target makeup area includes:

taking the makeup mask map corresponding to each target makeup area as a reference, acquiring from the initial frame image a first target area image corresponding to each target makeup area;

taking the makeup mask map corresponding to each target makeup area as a reference, acquiring from the current frame image a second target area image corresponding to each target makeup area;

determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image corresponding to each target makeup area.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image corresponding to each target makeup area includes:

converting the first target area image and the second target area image corresponding to each target makeup area into images containing a preset single-channel component in the HLS color space;

determining the current makeup progress corresponding to the current frame image according to the converted first target area image and second target area image corresponding to each target makeup area.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the converted first target area image and second target area image corresponding to each target makeup area includes:

for each target makeup area, calculating the absolute difference of the preset single-channel component between pixels at the same position in the converted first target area image and second target area image;

counting, for each target makeup area, the number of pixels whose absolute difference satisfies a preset makeup completion condition;

calculating, for each target makeup area, the ratio of that pixel count to the total number of pixels in the target makeup area, to obtain the makeup progress corresponding to each target makeup area;

calculating the current makeup progress corresponding to the current frame image according to the makeup progress and the preset weight corresponding to each target makeup area.
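The per-area counting and weighted combination can be sketched as below. The source does not state what the "preset makeup completion condition" is; this example assumes it is "absolute difference at or above a threshold", and the names `region_progress` and `overall_progress`, the threshold of 20, and the boolean area masks are likewise illustrative assumptions.

```python
import numpy as np


def region_progress(first, second, region_mask, threshold):
    """Share of pixels in one makeup area whose channel value changed enough.

    first, second: H x W uint8 single-channel images (for example, one HLS component).
    region_mask:   H x W boolean array marking the pixels of the makeup area.
    """
    diff = np.abs(first.astype(np.int16) - second.astype(np.int16))
    total = int(region_mask.sum())
    if total == 0:
        return 0.0
    done = int(((diff >= threshold) & region_mask).sum())  # assumed condition
    return done / total


def overall_progress(regions, weights, threshold=20):
    """Weighted sum of per-area progress; weights are assumed to sum to 1."""
    return sum(
        w * region_progress(first, second, mask, threshold)
        for (first, second, mask), w in zip(regions, weights)
    )
```

Casting to a signed type before subtracting avoids unsigned wrap-around in the difference. With two areas weighted 0.5 each, one half finished and one fully finished, the combined progress is 0.75.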

In some embodiments of the present application, acquiring the first target area image from the initial frame image with the makeup mask map as a reference includes:

detecting first face key points corresponding to the initial frame image;

acquiring a face area image corresponding to the initial frame image according to the first face key points;

taking the makeup mask map as a reference, acquiring the first target area image for makeup application from the face area image.

In some embodiments of the present application, acquiring the first target area image for makeup application from the face area image with the makeup mask map as a reference includes:

converting the makeup mask map and the face area image each into a binarized image;

performing an AND operation on the binarized image of the makeup mask map and the binarized image of the face area image, to obtain a first mask image corresponding to the intersection of the makeup mask map and the face area image;

performing an AND operation on the first mask image and the face area image corresponding to the initial frame image, to obtain the first target area image.

In some embodiments of the present application, before performing the AND operation on the binarized image of the makeup mask map and the binarized image of the face area image, the method further includes:

determining, according to standard face key points corresponding to the makeup mask map, one or more first anchor points located on the contour of each makeup area in the makeup mask map;

determining, from the face area image and according to the first face key points, a second anchor point corresponding to each first anchor point;

stretching the makeup mask map so that each first anchor point moves to the position of its corresponding second anchor point.
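The source does not say which geometric transform performs the stretching. As one possible reading, the sketch below fits a single affine transform to the anchor point pairs by least squares and warps the mask with nearest-neighbour sampling; an affine fit is an assumption (a piecewise or thin-plate warp would match every anchor exactly), and `fit_affine` and `warp_mask` are hypothetical names.

```python
import numpy as np


def fit_affine(src_pts, dst_pts):
    """Least-squares 2 x 3 affine matrix mapping src (x, y) points onto dst."""
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    design = np.hstack([src, np.ones((len(src), 1))])      # rows of [x, y, 1]
    coeffs, *_ = np.linalg.lstsq(design, dst, rcond=None)  # shape (3, 2)
    return coeffs.T                                        # shape (2, 3)


def warp_mask(mask, matrix, out_shape):
    """Warp a 2-D mask by looking up each output pixel through the inverse map."""
    h, w = out_shape
    full = np.vstack([matrix, [0.0, 0.0, 1.0]])
    inverse = np.linalg.inv(full)
    ys, xs = np.mgrid[0:h, 0:w]
    coords = np.stack([xs.ravel(), ys.ravel(), np.ones(h * w)])
    src_x, src_y, _ = inverse @ coords
    src_x = np.rint(src_x).astype(int)
    src_y = np.rint(src_y).astype(int)
    valid = (
        (src_x >= 0) & (src_x < mask.shape[1])
        & (src_y >= 0) & (src_y < mask.shape[0])
    )
    out = np.zeros(h * w, dtype=mask.dtype)
    out[valid] = mask[src_y[valid], src_x[valid]]  # pixels mapped from outside stay 0
    return out.reshape(h, w)
```

In this reading, `src_pts` are the first anchor points on the mask map, `dst_pts` are the matching second anchor points in the face area image, and `out_shape` is the size of the face area image, so the warped mask can be ANDed with it directly.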

In some embodiments of the present application, acquiring the first target area image for makeup application from the face area image with the makeup mask map as a reference includes:

splitting the makeup mask map into multiple sub-mask maps, each of which includes at least one target makeup area;

converting each sub-mask map and the face area image into binarized images;

performing an AND operation on the binarized image of each sub-mask map and the binarized image of the face area image, to obtain a sub-mask image corresponding to each sub-mask map;

performing an AND operation on each sub-mask image and the face area image corresponding to the initial frame image, to obtain multiple sub-target area images corresponding to the initial frame image;

merging the multiple sub-target area images into the first target area image corresponding to the initial frame image.

In some embodiments of the present application, before performing the AND operation on the binarized image of each sub-mask map and the binarized image of the face area image, the method further includes:

determining, according to standard face key points corresponding to the makeup mask map, one or more first anchor points located on the contour of the target makeup area in a first sub-mask map, the first sub-mask map being any one of the multiple sub-mask maps;

determining, from the face area image and according to the first face key points, a second anchor point corresponding to each first anchor point;

stretching the first sub-mask map so that each first anchor point moves to the position of its corresponding second anchor point.

In some embodiments of the present application, the specific makeup look includes eyebrow makeup; determining the user's current makeup progress for the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

acquiring a first target area image corresponding to the eyebrows from the initial frame image, and acquiring a second target area image corresponding to the eyebrows from the current frame image;

determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image.

In some embodiments of the present application, acquiring the first target area image corresponding to the eyebrows from the initial frame image includes:

detecting first face key points corresponding to the initial frame image;

acquiring a face area image corresponding to the initial frame image according to the first face key points;

acquiring the first target area image corresponding to the eyebrows from the face area image according to the eyebrow key points included in the first face key points.

In some embodiments of the present application, cropping the first target area image corresponding to the eyebrows from the face area image according to the eyebrow key points included in the first face key points includes:

interpolating between the eyebrow key points from the brow head to the brow peak included in the first face key points, to obtain multiple interpolation points;

cropping from the face area image the closed region formed by connecting all eyebrow key points between the brow head and the brow peak together with the interpolation points, to obtain a partial eyebrow image from the brow head to the brow peak;

cropping from the face area image the closed region formed by connecting all eyebrow key points between the brow peak and the brow tail, to obtain a partial eyebrow image from the brow peak to the brow tail;

stitching the partial eyebrow image from the brow head to the brow peak and the partial eyebrow image from the brow peak to the brow tail into the first target area image corresponding to the eyebrows.
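The interpolation step can be illustrated as follows. The source does not name the interpolation scheme, so linear interpolation between consecutive key points is an assumption here, as are the function name `interpolate_points` and the `per_segment` parameter.

```python
import numpy as np


def interpolate_points(points, per_segment=3):
    """Insert evenly spaced points between consecutive (x, y) key points.

    Returns the original key points plus `per_segment` linearly interpolated
    points on every segment, in contour order.
    """
    pts = np.asarray(points, dtype=float)
    out = []
    for start, end in zip(pts[:-1], pts[1:]):
        # t = 0 reproduces the segment start; the segment end is added by the next segment.
        for t in np.linspace(0.0, 1.0, per_segment + 1, endpoint=False):
            out.append(start + t * (end - start))
    out.append(pts[-1])
    return np.array(out)
```

The denser contour gives the closed region between brow head and brow peak a smoother outline than the sparse detector key points alone. The resulting points can then be joined into a closed polygon and used to crop the region from the face area image.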

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image includes:

converting the first target area image and the second target area image each into an image containing a preset single-channel component in the HSV color space;

determining the current makeup progress corresponding to the current frame image according to the converted first target area image and second target area image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the converted first target area image and second target area image includes:

calculating the absolute difference of the preset single-channel component between pixels at the same position in the converted first target area image and second target area image;

counting the number of pixels whose absolute difference satisfies a preset makeup completion condition;

calculating the ratio of that pixel count to the total number of pixels in all target makeup areas of the first target area image, to obtain the current makeup progress corresponding to the current frame image.

In some embodiments of the present application, before determining the current makeup progress corresponding to the current frame image according to the first target area image and the second target area image, the method further includes:

binarizing the first target area image and the second target area image, respectively, to obtain a first binarized mask image corresponding to the first target area image and a second binarized mask image corresponding to the second target area image;

performing an AND operation on the first binarized mask image and the second binarized mask image to obtain a second mask image corresponding to the intersection of the first target area image and the second target area image;

acquiring the face area image corresponding to the initial frame image and the face area image corresponding to the current frame image;

performing an AND operation on the second mask image and the face area image corresponding to the initial frame image to obtain a new first target area image corresponding to the initial frame image;

performing an AND operation on the second mask image and the face area image corresponding to the current frame image to obtain a new second target area image corresponding to the current frame image.
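A minimal sketch of the mask intersection described above, assuming that pixels outside the makeup area of a target area image are pure black (the source does not state the background value). The helper names `binarize`, `mask_and` and `apply_mask` are hypothetical.

```python
BACKGROUND = (0, 0, 0)  # assumed value of pixels outside the makeup area


def binarize(region_image):
    """1 where the target area image holds makeup-area content, else 0."""
    return [[0 if px == BACKGROUND else 1 for px in row] for row in region_image]


def mask_and(mask_a, mask_b):
    """Element-wise AND of two binary masks of the same size."""
    return [
        [1 if a and b else 0 for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(mask_a, mask_b)
    ]


def apply_mask(face_image, mask):
    """Keep face pixels inside the mask; set the rest to the background."""
    return [
        [px if m else BACKGROUND for px, m in zip(face_row, mask_row)]
        for face_row, mask_row in zip(face_image, mask)
    ]


skin = (180, 140, 120)
first_region = [[skin, skin], [BACKGROUND, BACKGROUND]]
second_region = [[BACKGROUND, skin], [BACKGROUND, skin]]
initial_face = [[skin, skin], [skin, skin]]

second_mask = mask_and(binarize(first_region), binarize(second_region))
new_first_region = apply_mask(initial_face, second_mask)
```

Restricting both frames to the intersection means the later pixel-by-pixel comparison only touches positions that belong to the makeup area in both images.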

In some embodiments of the present application, before determining the current makeup progress corresponding to the current frame image, the method further includes:

performing boundary erosion on the makeup areas in the first target area image and in the second target area image, respectively.
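Boundary erosion can be illustrated on a binary mask of the makeup area. The sketch below assumes a single pass with a 4-neighbour (cross-shaped) structuring element and treats everything outside the image as background; the source does not specify the kernel or the number of iterations, so both are assumptions.

```python
def erode(mask):
    """One pass of binary erosion with a 4-neighbour structuring element."""
    height = len(mask)
    width = len(mask[0]) if height else 0

    def is_on(y, x):
        # Positions outside the image count as background.
        return 0 <= y < height and 0 <= x < width and mask[y][x] == 1

    return [
        [
            1
            if is_on(y, x) and is_on(y - 1, x) and is_on(y + 1, x)
            and is_on(y, x - 1) and is_on(y, x + 1)
            else 0
            for x in range(width)
        ]
        for y in range(height)
    ]


area = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
eroded = erode(area)
```

Shrinking the area this way discards the outermost ring of pixels, where small key point errors are most likely to mix makeup and non-makeup skin.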

In some embodiments of the present application, the specific makeup look includes a foundation look, and determining the current makeup progress of the user applying the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

generating, by simulation from the initial frame image, a result image showing the specific makeup look completed;

acquiring the overall image brightness corresponding to each of the initial frame image, the result image and the current frame image;

acquiring the face area brightness corresponding to each of the initial frame image, the result image and the current frame image;

determining the current makeup progress corresponding to the current frame image according to the overall image brightness and the face area brightness of each of the initial frame image, the result image and the current frame image.

In some embodiments of the present application, acquiring the overall image brightness corresponding to each of the initial frame image, the result image and the current frame image includes:

converting the initial frame image, the result image and the current frame image, respectively, into grayscale images;

calculating the average gray value of the pixels in the grayscale image of each of the initial frame image, the result image and the current frame image;

taking the average gray value of each of the initial frame image, the result image and the current frame image as the overall image brightness of that image.

In some embodiments of the present application, acquiring the face area brightness corresponding to each of the initial frame image, the result image and the current frame image includes:

acquiring the face area image corresponding to each of the initial frame image, the result image and the current frame image;

converting the face area image of each of the initial frame image, the result image and the current frame image into a face grayscale image;

calculating the average gray value of the pixels in the face grayscale image of each of the initial frame image, the result image and the current frame image, to obtain the face area brightness of each image.
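Both brightness measures reduce to a mean gray value, computed once over the whole frame and once over the face area. The sketch below assumes the common ITU-R BT.601 luma weights for the grayscale conversion and an axis-aligned face box; the source names neither, and `mean_gray` and `crop` are hypothetical helpers.

```python
def mean_gray(image):
    """Average gray value of an RGB image given as rows of (r, g, b) tuples."""
    total = 0.0
    count = 0
    for row in image:
        for r, g, b in row:
            # BT.601 luma weights, an assumed choice of grayscale conversion.
            total += 0.299 * r + 0.587 * g + 0.114 * b
            count += 1
    return total / count if count else 0.0


def crop(image, box):
    """Cut out the rectangle (x0, y0, x1, y1), end-exclusive, from the image."""
    x0, y0, x1, y1 = box
    return [row[x0:x1] for row in image[y0:y1]]


frame = [
    [(100, 100, 100), (200, 200, 200)],
    [(100, 100, 100), (200, 200, 200)],
]
face_box = (1, 0, 2, 2)  # hypothetical face area: the right-hand column

overall_brightness = mean_gray(frame)
face_brightness = mean_gray(crop(frame, face_box))
```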

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the overall image brightness and the face area brightness of each of the initial frame image, the result image and the current frame image includes:

determining a first ambient brightness change corresponding to the current frame image according to the overall image brightness and face area brightness of the initial frame image and the overall image brightness and face area brightness of the current frame image;

determining a second ambient brightness change corresponding to the result image according to the overall image brightness and face area brightness of the initial frame image and the overall image brightness and face area brightness of the result image;

determining the current makeup progress corresponding to the current frame image according to the first ambient brightness change, the second ambient brightness change, the face area brightness of the initial frame image, the face area brightness of the current frame image and the face area brightness of the result image.

In some embodiments of the present application, determining the first ambient brightness change corresponding to the current frame image according to the overall image brightness and face area brightness of the initial frame image and the overall image brightness and face area brightness of the current frame image includes:

calculating the difference between the overall image brightness of the initial frame image and its face area brightness, to obtain the ambient brightness of the initial frame image;

calculating the difference between the overall image brightness of the current frame image and its face area brightness, to obtain the ambient brightness of the current frame image;

taking the absolute value of the difference between the ambient brightness of the current frame image and the ambient brightness of the initial frame image as the first ambient brightness change corresponding to the current frame image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the first ambient brightness change, the second ambient brightness change, the face area brightness of the initial frame image, the face area brightness of the current frame image and the face area brightness of the result image includes:

determining a makeup brightness change value corresponding to the current frame image according to the first ambient brightness change, the face area brightness of the initial frame image and the face area brightness of the current frame image;

determining a makeup brightness change value corresponding to the result image according to the second ambient brightness change, the face area brightness of the initial frame image and the face area brightness of the result image;

calculating the ratio of the makeup brightness change value of the current frame image to the makeup brightness change value of the result image, to obtain the current makeup progress corresponding to the current frame image.

In some embodiments of the present application, determining the makeup brightness change value corresponding to the current frame image according to the first ambient brightness change, the face area brightness of the initial frame image and the face area brightness of the current frame image includes:

calculating the difference between the face area brightness of the current frame image and the face area brightness of the initial frame image, to obtain a total brightness change value corresponding to the current frame image;

calculating the difference between the total brightness change value and the first ambient brightness change, to obtain the makeup brightness change value corresponding to the current frame image.
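The brightness arithmetic for the foundation look can be worked through end to end. In this sketch each image is summarised as a pair `(overall_brightness, face_brightness)`. Two points are assumptions rather than statements from the source: the second ambient brightness change and the result image's makeup brightness change are computed the same way as those of the current frame, and the final ratio is clamped to the range 0 to 1.

```python
def ambient(overall, face):
    """Ambient brightness: overall image brightness minus face area brightness."""
    return overall - face


def ambient_change(reference, other):
    """Absolute change in ambient brightness between two (overall, face) pairs."""
    return abs(ambient(*other) - ambient(*reference))


def makeup_change(reference, other):
    """Face brightness change with the ambient brightness change subtracted."""
    total_change = other[1] - reference[1]
    return total_change - ambient_change(reference, other)


def foundation_progress(initial, current, result):
    """Ratio of the current makeup brightness change to that of the result image."""
    target = makeup_change(initial, result)
    if target <= 0:
        return 0.0  # degenerate case: the simulated result is no brighter
    ratio = makeup_change(initial, current) / target
    return max(0.0, min(1.0, ratio))  # clamping is an assumption


initial = (120, 100)   # (overall brightness, face brightness), made-up values
current = (125, 110)
result = (130, 120)
progress = foundation_progress(initial, current, result)
```

With these made-up values the ambient brightness is 20, 15 and 10 for the three images, so the ambient changes are 5 and 10, the makeup brightness changes are 5 and 10, and the progress is 0.5.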

In some embodiments of the present application, the method further includes:

if the first ambient brightness change is greater than a preset threshold, taking the makeup progress of the previous frame image as the current makeup progress corresponding to the current frame image;

sending first prompt information to the user's terminal, the first prompt information prompting the user to return to the lighting conditions of the initial frame image before continuing to apply makeup.

In some embodiments of the present application, the specific makeup look includes a concealer look, and determining the current makeup progress of the user applying the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video includes:

acquiring the facial blemish information corresponding to each of the initial frame image and the current frame image;

calculating a facial blemish difference value between the current frame image and the initial frame image according to the facial blemish information of the initial frame image and the facial blemish information of the current frame image;

if the facial blemish difference value is greater than a preset threshold, calculating the current makeup progress corresponding to the current frame image according to the facial blemish difference value and the facial blemish information of the initial frame image;

if the facial blemish difference value is less than or equal to the preset threshold, acquiring a result image showing the user's concealer application completed, and determining the current makeup progress corresponding to the current frame image according to the initial frame image, the result image and the current frame image.

In some embodiments of the present application, the facial blemish information includes blemish categories and the number of blemishes in each category, and calculating the facial blemish difference value between the current frame image and the initial frame image according to the facial blemish information of the initial frame image and the facial blemish information of the current frame image includes:

calculating, for each blemish category, the difference between the number of blemishes in the initial frame image and the number of blemishes in the current frame image;

summing the differences over all blemish categories, and taking the sum as the facial blemish difference value between the current frame image and the initial frame image.

In some embodiments of the present application, calculating the current makeup progress corresponding to the current frame image according to the facial blemish difference value and the facial blemish information of the initial frame image includes:

summing the number of blemishes over all blemish categories in the facial blemish information of the initial frame image, to obtain a total blemish count;

calculating the ratio of the facial blemish difference value to the total blemish count, and taking the ratio as the current makeup progress corresponding to the current frame image.
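The count-based concealer progress, together with the threshold branch described earlier, can be sketched as below. Blemish information is modelled as a dictionary from category name to count; the category names, the default threshold of 0 and the use of `None` to signal "fall back to the result-image comparison" are illustrative assumptions.

```python
def blemish_difference(initial, current):
    """Sum over categories of (initial count - current count)."""
    return sum(count - current.get(category, 0) for category, count in initial.items())


def concealer_progress(initial, current, threshold=0):
    """Count-based progress, or None when the difference is too small to use.

    A None result means the caller should fall back to comparing the
    initial, result and current images instead.
    """
    difference = blemish_difference(initial, current)
    total = sum(initial.values())
    if difference <= threshold or total == 0:
        return None
    return difference / total


initial_blemishes = {"acne": 6, "spot": 4}   # hypothetical categories
current_blemishes = {"acne": 3, "spot": 2}
progress = concealer_progress(initial_blemishes, current_blemishes)
```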

In some embodiments of the present application, acquiring the result image showing the user's concealer application completed, and determining the current makeup progress corresponding to the current frame image according to the initial frame image, the result image and the current frame image, includes:

generating, by simulation from the initial frame image, a result image showing the user's concealer application completed;

acquiring the face area image corresponding to each of the initial frame image, the result image and the current frame image;

determining the current makeup progress corresponding to the current frame image according to the face area images of the initial frame image, the result image and the current frame image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the face area images of the initial frame image, the result image and the current frame image includes:

converting the face area image of each of the initial frame image, the result image and the current frame image into an image containing the saturation channel in the HLS color space;

calculating, by a preset filtering algorithm, a smoothing factor for the converted face area image of each of the initial frame image, the result image and the current frame image;

determining the current makeup progress corresponding to the current frame image according to the smoothing factors of the initial frame image, the result image and the current frame image.

In some embodiments of the present application, determining the current makeup progress corresponding to the current frame image according to the smoothing factors of the initial frame image, the result image and the current frame image includes:

calculating a first difference between the smoothing factor of the current frame image and the smoothing factor of the initial frame image;

calculating a second difference between the smoothing factor of the result image and the smoothing factor of the initial frame image;

calculating the ratio of the first difference to the second difference, and taking the ratio as the current makeup progress corresponding to the current frame image.
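The ratio of smoothing-factor differences can be written as a short function. The sketch takes the three smoothing factors as given numbers, because the source only says they come from "a preset filtering algorithm"; the guard against a zero denominator is an added assumption.

```python
def smoothing_progress(initial_factor, current_factor, result_factor):
    """(current - initial) / (result - initial), guarded against division by zero."""
    first_difference = current_factor - initial_factor
    second_difference = result_factor - initial_factor
    if second_difference == 0:
        return 0.0  # assumed behaviour when the simulated result adds no smoothing
    return first_difference / second_difference


# Made-up smoothing factors for the initial, current and result face images.
progress = smoothing_progress(0.2, 0.5, 0.8)
```

The progress is 0 when the current frame is as smooth as the initial frame and 1 when it matches the simulated result.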

In some embodiments of the present application, acquiring the facial blemish information corresponding to each of the initial frame image and the current frame image includes:

acquiring the face area image corresponding to each of the initial frame image and the current frame image;

detecting, with a preset skin detection model, the number of blemishes of each blemish category in the face area image of the initial frame image and in the face area image of the current frame image, to obtain the facial blemish information of the initial frame image and of the current frame image.

In some embodiments of the present application, acquiring the face area image corresponding to the initial frame image includes:

performing rotation correction on the initial frame image and its first face key points according to the first face key points corresponding to the initial frame image;

cropping an image containing the face area from the corrected initial frame image according to the corrected first face key points;

scaling the image containing the face area to a preset size, to obtain the face area image corresponding to the initial frame image.

In some embodiments of the present application, performing rotation correction on the initial frame image and the first face key points according to the first face key points includes:

determining the left eye center coordinates and the right eye center coordinates from the left eye key points and the right eye key points included in the first face key points;

determining the rotation angle and the rotation center coordinates of the initial frame image according to the left eye center coordinates and the right eye center coordinates;

performing rotation correction on the initial frame image and the first face key points according to the rotation angle and the rotation center coordinates.
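The rotation correction can be illustrated on key points alone. In this sketch each eye center is the mean of that eye's key points, the rotation angle is the angle of the line joining the two eye centers, and the rotation center is the midpoint between them; the midpoint choice and the helper names are assumptions, since the source only says both values are derived from the eye centers. Rotating the image itself would apply the same transform to pixel coordinates.

```python
import math


def center(points):
    """Mean of a list of (x, y) key points."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return sum(xs) / len(xs), sum(ys) / len(ys)


def rotation_params(left_eye_points, right_eye_points):
    """Angle (degrees) of the eye line and the assumed pivot (eye midpoint)."""
    lx, ly = center(left_eye_points)
    rx, ry = center(right_eye_points)
    angle = math.degrees(math.atan2(ry - ly, rx - lx))
    pivot = ((lx + rx) / 2, (ly + ry) / 2)
    return angle, pivot


def rotate_point(point, angle_degrees, pivot):
    """Rotate a point about the pivot so that the eye line becomes horizontal."""
    a = math.radians(angle_degrees)
    dx = point[0] - pivot[0]
    dy = point[1] - pivot[1]
    return (
        pivot[0] + dx * math.cos(a) + dy * math.sin(a),
        pivot[1] - dx * math.sin(a) + dy * math.cos(a),
    )


left_eye = [(0, 0), (2, 0)]     # made-up key points, center (1, 0)
right_eye = [(4, 3), (6, 3)]    # made-up key points, center (5, 3)
angle, pivot = rotation_params(left_eye, right_eye)
left_fixed = rotate_point(center(left_eye), angle, pivot)
right_fixed = rotate_point(center(right_eye), angle, pivot)
```

After correction both eye centers share the same vertical coordinate, and the distance between them is unchanged.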

In some embodiments of the present application, cropping an image containing the face area from the corrected initial frame image according to the corrected first face key points includes:

cropping the face area contained in the corrected initial frame image according to the corrected first face key points.

In some embodiments of the present application, cropping the face area contained in the corrected initial frame image according to the corrected first face key points includes:

determining the minimum abscissa, the minimum ordinate, the maximum abscissa and the maximum ordinate among the corrected first face key points;

determining a crop box for the face area in the corrected initial frame image according to the minimum abscissa, the minimum ordinate, the maximum abscissa and the maximum ordinate;

cropping the image containing the face area from the corrected initial frame image according to the crop box.

In some embodiments of the present application, the method further includes:

enlarging the crop box by a preset factor;

cropping the image containing the face area from the corrected initial frame image according to the enlarged crop box.
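A minimal sketch of the crop box construction and its enlargement. The box is the axis-aligned bounding box of the corrected key points; enlarging it about its own center and clamping it to the image bounds are assumptions, as the source specifies only "a preset factor".

```python
def crop_box(points, scale=1.0, image_size=None):
    """Bounding box (x0, y0, x1, y1) of key points, enlarged about its center.

    image_size, if given as (width, height), clamps the box to the image.
    """
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    cx = (min(xs) + max(xs)) / 2
    cy = (min(ys) + max(ys)) / 2
    half_w = (max(xs) - min(xs)) / 2 * scale
    half_h = (max(ys) - min(ys)) / 2 * scale
    x0, y0, x1, y1 = cx - half_w, cy - half_h, cx + half_w, cy + half_h
    if image_size is not None:
        width, height = image_size
        x0, y0 = max(0, x0), max(0, y0)
        x1, y1 = min(width, x1), min(height, y1)
    return x0, y0, x1, y1


key_points = [(10, 20), (30, 60), (20, 40)]   # made-up corrected key points
tight = crop_box(key_points)
enlarged = crop_box(key_points, scale=1.5)
clamped = crop_box(key_points, scale=1.5, image_size=(32, 64))
```

Enlarging the box keeps areas such as the forehead and jawline, which can lie outside the outermost key points, inside the cropped face image.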

In some embodiments of the present application, the method further includes:

scaling and translating the corrected first face key points according to the size of the image containing the face area and the preset size.
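After cropping and resizing, the key points must be moved into the coordinate system of the resized face area image. The sketch below assumes the translation is by the crop box origin and the scaling is independent per axis; `map_key_points` is a hypothetical helper name.

```python
def map_key_points(points, box, preset_size):
    """Map key points from the corrected frame into the resized face image.

    box is the crop box (x0, y0, x1, y1); preset_size is (width, height).
    """
    x0, y0, x1, y1 = box
    target_w, target_h = preset_size
    scale_x = target_w / (x1 - x0)
    scale_y = target_h / (y1 - y0)
    # Translate by the box origin, then scale to the preset size.
    return [((x - x0) * scale_x, (y - y0) * scale_y) for x, y in points]


box = (10, 20, 30, 60)         # made-up crop box
preset_size = (100, 200)       # made-up preset size
mapped = map_key_points([(10, 20), (20, 40), (30, 60)], box, preset_size)
```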

In some embodiments of the present application, the method further includes:

detecting whether the initial frame image and the current frame image each contain only the face of one and the same user;

if so, performing the operation of determining the current makeup progress of the user applying the specific makeup look;

if not, sending prompt information to the user's terminal, the prompt information prompting the user to ensure that only the face of the same user appears in the real-time makeup video.

An embodiment of the second aspect of the present application provides a makeup progress detection apparatus, including:

a video acquisition module, configured to acquire a real-time makeup video of a user currently applying a specific makeup look;

a makeup progress determination module, configured to determine the current makeup progress of the user applying the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video.

An embodiment of the third aspect of the present application provides an electronic device, including a memory, a processor, and a computer program stored in the memory and executable on the processor, wherein the processor runs the computer program to implement the method of the first aspect.

An embodiment of the fourth aspect of the present application provides a computer-readable storage medium storing a computer program, wherein the program, when executed by a processor, implements the method of the first aspect.

The technical solutions provided in the embodiments of the present application have at least the following technical effects or advantages:

In the embodiments of the present application, the current frame image of the user's makeup process is compared with the initial frame image to determine the makeup progress. The makeup progress is detected by image processing alone, with high accuracy, and the user's progress can be detected in real time for highlight, contour, blush, foundation, concealer, eye shadow, eyeliner, eyebrow and other makeup steps. No deep learning model is required, so the computational load and cost are low, the processing load on the server is reduced, the efficiency of makeup progress detection is improved, and the real-time requirement of makeup progress detection can be met.

Additional aspects and advantages of the present application will be set forth in part in the description that follows, and in part will become apparent from that description or be learned through practice of the present application.

Description of the Drawings

Various other advantages and benefits will become apparent to those of ordinary skill in the art upon reading the following detailed description of the preferred embodiments. The drawings serve only to illustrate the preferred embodiments and are not to be considered as limiting the present application. Throughout the drawings, the same reference symbols denote the same components.

In the drawings:

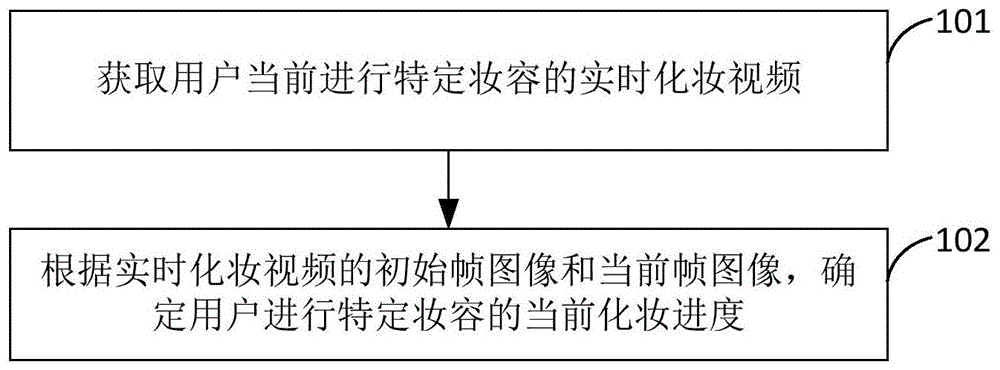

Fig. 1 shows a flowchart of a makeup progress detection method provided by an embodiment of the present application;

Fig. 2 shows a flowchart of a makeup progress detection method for highlight, contour and similar makeup provided by an embodiment of the present application;

Fig. 3 shows a schematic diagram of a display interface, shown by the client, in which the user selects target makeup areas, provided by an embodiment of the present application;

Fig. 4 shows a schematic diagram of solving for the rotation angle of an image provided by an embodiment of the present application;

Fig. 5 shows a schematic diagram of two coordinate system transformations provided by an embodiment of the present application;

Fig. 6 shows a schematic module flow diagram of the makeup progress detection method for highlight, contour and similar makeup provided by an embodiment of the present application;

Fig. 7 shows a flowchart of a makeup progress detection method for blush and similar makeup provided by an embodiment of the present application;

Fig. 8 shows another schematic diagram of a display interface, shown by the client, in which the user selects makeup areas, provided by an embodiment of the present application;

Fig. 9 shows a schematic module flow diagram of the makeup progress detection method for blush and similar makeup provided by an embodiment of the present application;

Fig. 10 shows a flowchart of a makeup progress detection method for eyeliner makeup provided by an embodiment of the present application;

Fig. 11 shows a schematic module flow diagram of the makeup progress detection method for eyeliner makeup provided by an embodiment of the present application;

Fig. 12 shows a flowchart of a makeup progress detection method for eye shadow makeup provided by an embodiment of the present application;

Fig. 13 shows a schematic module flow diagram of the makeup progress detection method for eye shadow makeup provided by an embodiment of the present application;

Fig. 14 shows a schematic structural diagram of a makeup progress detection apparatus for eye shadow makeup provided by an embodiment of the present application;

Fig. 15 shows a flowchart of a makeup progress detection method for eyebrow makeup provided by an embodiment of the present application;

Fig. 16 shows a schematic module flow diagram of the makeup progress detection method for eyebrow makeup provided by an embodiment of the present application;

Fig. 17 shows a schematic structural diagram of a makeup progress detection apparatus for eyebrow makeup provided by an embodiment of the present application;

Fig. 18 shows a flowchart of a makeup progress detection method for foundation, loose powder and similar makeup provided by an embodiment of the present application;

Fig. 19 shows another flowchart of the makeup progress detection method for foundation, loose powder and similar makeup provided by an embodiment of the present application;

Fig. 20 shows a schematic module flow diagram of the makeup progress detection method for foundation, loose powder and similar makeup provided by an embodiment of the present application;

Fig. 21 shows a flowchart of a makeup progress detection method for concealer makeup provided by an embodiment of the present application;

Fig. 22 shows a schematic module flow diagram of the makeup progress detection method for concealer makeup provided by an embodiment of the present application;

Fig. 23 shows a flowchart of a makeup color recognition method provided by an embodiment of the present application;

Fig. 24 shows a schematic structural diagram of a makeup color recognition apparatus provided by an embodiment of the present application;

Fig. 25 shows a flowchart of an image processing method for removing facial blemishes provided by an embodiment of the present application;

Fig. 26(a) shows a user's face image, and Fig. 26(b) shows the blemish texture map corresponding to the face image shown in Fig. 26(a);

Fig. 27 shows a schematic structural diagram of an image processing apparatus for removing facial blemishes provided by an embodiment of the present application;

Fig. 28 shows a schematic structural diagram of a makeup progress detection apparatus provided by an embodiment of the present application;

Fig. 29 shows a schematic structural diagram of an electronic device provided by an embodiment of the present application;

Fig. 30 shows a schematic diagram of a storage medium provided by an embodiment of the present application.

Detailed Description

Exemplary embodiments of the present application are described in more detail below with reference to the accompanying drawings. Although the drawings show exemplary embodiments of the present application, it should be understood that the application may be implemented in various forms and should not be limited by the embodiments set forth here. Rather, these embodiments are provided so that the application can be understood more thoroughly and its scope conveyed fully to those skilled in the art.

It should be noted that, unless otherwise specified, the technical or scientific terms used in this application have the ordinary meaning understood by those skilled in the art to which this application belongs.

A makeup progress detection method, apparatus, device and storage medium proposed according to the embodiments of the present application are described below with reference to the accompanying drawings.

Related technologies currently offer some virtual makeup try-on functions, which can be deployed at sales counters or in mobile phone applications. They use face recognition to provide users with virtual try-on services, combining multiple makeup looks and displaying them fitted to the face in real time. Facial skin detection services are also provided. However, these services only address the need to choose suitable cosmetics or a suitable skin care plan. They can help users pick highlight or contour products that suit them, but they cannot display the progress of makeup application, and so cannot meet the needs of users who are applying makeup in real time. Other related technologies use deep learning models to provide virtual try-on, skin tone detection, personalized product recommendation and similar functions, all of which require collecting a large number of face images in advance to train the model. Face images are private user data, however, and are difficult to collect at scale. Model training also consumes substantial computing resources and is costly. In addition, a model's accuracy is inversely related to its real-time performance. Makeup progress detection must capture the user's facial information in real time to determine the current makeup progress, so the real-time requirement is very high, and deep learning models that can meet this requirement have low detection accuracy.

On this basis, an embodiment of the present application provides a makeup progress detection method that determines the makeup progress by comparing the current frame image of the user's makeup process with the initial frame image (that is, the first frame image). The makeup progress can be detected through image processing alone, with high accuracy, and the user's progress can be detected in real time for makeup processes such as highlight, contour, blush, foundation, concealer, eye shadow, eyeliner and eyebrows. No deep learning model is needed, so the amount of computation is small and the cost is low. This reduces the processing load on the server, improves the efficiency of makeup progress detection, and meets its real-time requirements.

Referring to Fig. 1, the method includes the following steps:

Step 101: Acquire a real-time makeup video of the user currently applying a specific makeup look.

Step 102: Determine the user's current makeup progress for the specific makeup look according to the initial frame image and the current frame image of the real-time makeup video.

The specific makeup look may be highlight makeup, contour makeup, blush makeup, foundation makeup, concealer makeup, eye shadow makeup, eyeliner makeup, eyebrow makeup and so on. The process of detecting the makeup progress for each of these looks is described in detail below.

Embodiment 1

This embodiment of the present application provides a makeup progress detection method for detecting the makeup progress of highlight makeup, contour makeup, or any other makeup look that produces changes in light and shade. Referring to Fig. 2, this embodiment includes the following steps:

Step 201: Acquire at least one target makeup area corresponding to the specific makeup look, and a real-time makeup video of the user currently applying the specific makeup look.

This embodiment is executed by a server. A client adapted to the makeup progress detection service provided by the server is installed on the user's terminal, such as a mobile phone or computer. When the user wants to use the service, the user opens the client on the terminal, and the client displays all target makeup areas corresponding to the specific makeup look, which may be highlight makeup, contour makeup or the like. The target makeup areas may include the forehead area, nose bridge area, nose tip area, left cheek area, right cheek area, chin area and so on. From the displayed areas, the user selects one or more target makeup areas in which to apply the specific makeup look.

As one example, the client may display all target makeup areas corresponding to the specific makeup look as text options. In the display interface shown in Fig. 3, the interface contains a text option for each target makeup area and a submit button. The user clicks the text options of the desired target makeup areas and then clicks the submit button. When the client detects the submit instruction triggered by the button, it obtains the one or more target makeup areas selected by the user from the display interface.

As another example, the client may display a face image on which all target makeup areas corresponding to the specific makeup look are marked, and the user selects the desired areas by clicking on the image. The display interface may contain the face image and a submit button, with each target makeup area outlined on the image by a solid line. After the user clicks a target makeup area, its outline may be shown in a preset color (such as red or yellow), or the whole selected area may be filled with the preset color. After selecting the desired target makeup areas, the user clicks the submit button. When the client detects the submit instruction triggered by the button, it obtains the one or more target makeup areas selected by the user from the displayed face image.

After obtaining the one or more target makeup areas selected by the user in either of the above ways, the user's terminal sends area identification information for those areas to the server. The area identification information may include identifiers such as the name or number of each selected target makeup area. The server receives the area identification information sent by the terminal and uses it to determine the at least one target makeup area, corresponding to the specific makeup look, that the user selected.

Letting users choose the areas in which they want to apply the specific makeup look meets the individual needs of different users for looks such as highlight and contour.

In other embodiments of the present application, the target makeup areas are not selected by the user. Instead, multiple target makeup areas corresponding to the specific makeup look are configured on the server in advance. When the user needs makeup progress detection, the target makeup areas then do not have to be obtained from the user's terminal, which saves bandwidth, simplifies user operation and shortens processing time.
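The two ways of obtaining target makeup areas described above can be sketched roughly as follows. This is a minimal illustration, not the method of the application: the `TargetArea` codes, the `PRECONFIGURED_AREAS` default and the `resolve_target_areas` helper are all hypothetical names, and the text only says that the identification information may carry a name or a number for each area.

```python
from enum import Enum
from typing import Iterable, List, Optional, Union


class TargetArea(Enum):
    """Areas named in the text; the numeric codes are hypothetical."""
    FOREHEAD = 1
    NOSE_BRIDGE = 2
    NOSE_TIP = 3
    LEFT_CHEEK = 4
    RIGHT_CHEEK = 5
    CHIN = 6


# Hypothetical server-side default for embodiments where the user does not choose.
PRECONFIGURED_AREAS = [TargetArea.FOREHEAD, TargetArea.NOSE_BRIDGE, TargetArea.CHIN]


def resolve_target_areas(
    area_ids: Optional[Iterable[Union[int, str]]] = None,
) -> List[TargetArea]:
    """Turn received area identifiers (names or numbers) into TargetArea members.

    With no identifiers at all, fall back to the preconfigured areas.
    Duplicates are dropped and the order of first appearance is kept.
    """
    if area_ids is None:
        return list(PRECONFIGURED_AREAS)
    resolved: List[TargetArea] = []
    for raw in area_ids:
        try:
            if isinstance(raw, int):
                area = TargetArea(raw)  # lookup by number
            else:
                key = str(raw).strip().upper().replace(" ", "_")
                area = TargetArea[key]  # lookup by name
        except (KeyError, ValueError):
            raise ValueError(f"unknown target makeup area: {raw!r}") from None
        if area not in resolved:
            resolved.append(area)
    if not resolved:
        raise ValueError("at least one target makeup area is required")
    return resolved
```

For instance, `resolve_target_areas(["forehead", 3])` yields the forehead and nose tip areas, while calling it with no argument returns the preconfigured list.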

The client's display interface also provides a video upload interface. When the client detects that the user has clicked this interface, it invokes the terminal's camera to record the user's makeup video. During recording, the user applies the specific makeup look to the target makeup areas on their own face. The terminal transmits the recorded makeup video to the server as a video stream, and the server receives each frame image of the makeup video.

In this embodiment, the server takes the first frame image it receives as the initial frame image and uses it as the reference against which each subsequently received frame image is compared to determine the current makeup progress of the specific makeup look. Because every subsequent frame image is processed in the same way, this embodiment describes the makeup progress detection process using the current frame image received at the current moment as an example.
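The frame handling described here can be sketched as below: the first received frame is stored as the reference, and every later frame is compared against it. The class name `ProgressSession` and the `compare` callback are assumptions made for illustration, and `mean_abs_diff` is only a toy stand-in, not the comparison defined by the application.

```python
from typing import Callable, Optional, Sequence

Frame = Sequence[float]  # stand-in for an image; a real frame would be a pixel array


def mean_abs_diff(initial: Frame, current: Frame) -> float:
    """Toy comparison: mean absolute difference of two equal-length frames."""
    if len(initial) != len(current):
        raise ValueError("frames must have the same size")
    return sum(abs(c - i) for i, c in zip(initial, current)) / len(initial)


class ProgressSession:
    """Keeps the first received frame as the reference for all later frames."""

    def __init__(self, compare: Callable[[Frame, Frame], float]) -> None:
        self._compare = compare  # hypothetical (initial, current) -> score callback
        self._initial: Optional[Frame] = None

    def on_frame(self, frame: Frame) -> Optional[float]:
        """Return a score for the frame, or None for the initial frame itself."""
        if self._initial is None:
            self._initial = frame  # first frame becomes the initial frame image
            return None
        return self._compare(self._initial, frame)
```

Passing the comparison in as a callback keeps the reference-frame bookkeeping separate from whatever image comparison a given makeup look requires.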

After obtaining, through this step, the at least one target makeup area corresponding to the specific makeup look and the initial frame image and current frame image of the user's makeup video, the server determines the user's current makeup progress through steps 202 and 203 below.

Step 202: According to the target makeup area, obtain a first target area image corresponding to the specific makeup look from the initial frame image, and obtain a second target area image corresponding to the specific makeup look from the current frame image.

The server obtains the first target area image corresponding to the initial frame image through steps S1 to S3 below:

S1: Detect the first face key points corresponding to the initial frame image.

A pre-trained detection model for detecting face key points is configured on the server, and a face key point detection interface service is provided through this model. After obtaining the initial frame image of the user's makeup video, the server calls the face key point detection interface service and uses the detection model to identify all face key points of the user's face in the initial frame image. To distinguish them from the face key points of the current frame image, this embodiment refers to all face key points of the initial frame image as the first face key points, and to all face key points of the current frame image as the second face key points.

The identified face key points include key points on the contour of the user's face and key points of the mouth, nose, eyes, eyebrows and other parts. The number of identified face key points may be 106.
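A thin wrapper around the key point detection service might look like the sketch below. The `detector` callable stands in for the pre-trained detection model, whose actual interface the text does not specify, and `detect_keypoints` and `EXPECTED_KEYPOINTS` are hypothetical names. The only detail taken from the text is that the number of key points may be 106.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float]
EXPECTED_KEYPOINTS = 106  # the text says the count "may be" 106; other models differ


def detect_keypoints(
    frame: object,
    detector: Callable[[object], Sequence[Point]],
    expected: int = EXPECTED_KEYPOINTS,
) -> List[Point]:
    """Run the (assumed) detector on one frame and check the number of points."""
    points = [(float(x), float(y)) for x, y in detector(frame)]
    if len(points) != expected:
        raise ValueError(f"expected {expected} key points, got {len(points)}")
    return points
```

The same helper would be called once on the initial frame image to obtain the first face key points and once on the current frame image to obtain the second face key points.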