Image selection method and system based on deep learning SAR image classification

Technical Field

The invention relates to the technical field of image analysis, in particular to an image selection method and system based on deep learning SAR image classification.

Background

Depending on the stage of the recognition process at which fusion takes place, there are three levels of fusion: the feature layer, the matching layer, and the decision layer. Feature-layer fusion refers to extracting the corresponding feature vectors from biological feature data of different modalities and fusing them in a unified space into a new, higher-dimensional feature vector used for identification.

SAR image classification based on deep learning is widely applied in fields such as terrain surface classification, sea ice classification, and ocean monitoring. However, using more data does not necessarily maximize the useful information, because of non-idealities, mismatches, and estimation defects. Longbotham et al. confirmed this conclusion. Therefore, designing an image selection algorithm can both maximize the extracted information and reduce the amount of computation.

In the prior art, for example, Chlaily et al. proposed an image selection algorithm based on decision-layer fusion of heterogeneous sensors. Guerriro et al. proposed a SAR image selection algorithm based on fused detection using SAR images and radar sequences. Fusing data at the feature level is more efficient, because the feature set contains more information than the decision set. However, there is currently no image selection algorithm that performs fusion at the feature level.

The key to designing an image selection algorithm is to establish a model of the relationship between corresponding pixels of different images. Hedhli et al. modeled the relationship between corresponding pixels as a multi-layer Markov model when fusing SAR images with optical images. Tuia et al. modeled the relationship between corresponding pixels as a conditional random field. Kang et al. modeled the relationships between corresponding pixels using a graph network.

Disclosure of Invention

The invention mainly relates to an image selection algorithm with feature-layer fusion, in which the relationship between corresponding pixels of different images is modeled as a Markov model and a belief propagation algorithm is used, so that computing the joint statistical distribution of the sensors is avoided and the computation of the image selection algorithm is simplified. Mutual information, as an information-theoretic metric, can be used to accurately describe the correlation of the estimates. No other research currently addresses the image selection problem for feature-layer fusion.

The present invention is directed to solving at least one of the problems of the prior art. To this end, the invention discloses an image selection method based on deep learning SAR image classification, which comprises the following steps:

initializing an image to be analyzed;

introducing a factor graph to describe the relation between corresponding pixels in different images, and establishing the relation between the corresponding pixels of the different images as a Markov model;

using a belief propagation algorithm, so that computing the joint statistical distribution of the sensors is avoided and the computation of the image selection algorithm is simplified, wherein the belief propagation algorithm, serving as a message-passing mechanism, can simplify the message update rule into a linear fusion model;

determining the feature correlations between the selected image and the other images, and determining the sensor fusion capacity, to complete the image selection process.

Still further, the method further comprises: feature-layer fusion can be expressed as a maximum a posteriori (MAP) estimate,

wherein $S$ represents the labels of the corresponding pixels of the $N$ images, and $Y = [y_1, \dots, y_N]$ contains the features of the corresponding pixels of the different images.
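As an illustrative sketch only, a MAP estimate of this kind is conventionally written as shown below. The equation of the original text is not reproduced here, so the exact form and the symbol $\hat{S}$ are assumptions based on the stated roles of $S$ and $Y$.

```latex
\hat{S} = \arg\max_{S} \; p(S \mid Y), \qquad Y = [y_1, \dots, y_N]
```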

Further, a factor graph is introduced to describe the relationship between corresponding pixels in different images, and the posterior distribution $p(S \mid Y)$ is decomposed into unary and pairwise terms

wherein each unary term $\Phi_n(S_n)$ models $S_n$ in the joint distribution, and each pairwise term $\Psi_{ij}(S_i, S_j)$ represents the interdependence of $S_i$ and $S_j$, which are joined by an edge in the graph.
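The decomposition into unary and pairwise terms can be illustrated with a minimal Python sketch. It assumes an unnormalized product form, in which the posterior is proportional to the product of all unary terms and all pairwise terms, and it finds the most probable labelling by exhaustive enumeration. The function names, the potential values, and the three-image chain are hypothetical and are not taken from the invention.

```python
import itertools

import numpy as np


def unnormalized_posterior(labels, unary, pairwise, edges):
    """Product of the unary terms Phi_n(S_n) and pairwise terms Psi_ij(S_i, S_j)."""
    score = 1.0
    for n, s in enumerate(labels):
        score *= unary[n][s]
    for i, j in edges:
        score *= pairwise[labels[i], labels[j]]
    return score


def brute_force_map(unary, pairwise, edges):
    """Enumerate every labelling and return the one with the highest score."""
    n_nodes, n_labels = unary.shape
    return max(
        itertools.product(range(n_labels), repeat=n_nodes),
        key=lambda labels: unnormalized_posterior(labels, unary, pairwise, edges),
    )


# Hypothetical example: N = 3 images, two classes, chain 0 - 1 - 2.
unary = np.array([[0.9, 0.1], [0.4, 0.6], [0.8, 0.2]])
pairwise = np.array([[0.8, 0.2], [0.2, 0.8]])  # favours equal labels on an edge
edges = [(0, 1), (1, 2)]

map_labels = brute_force_map(unary, pairwise, edges)
print(map_labels)
```

Exhaustive enumeration grows exponentially with the number of images, which is why a message-passing scheme such as belief propagation is attractive for larger graphs.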

Still further, the method further comprises: the belief propagation algorithm, serving as a message-passing mechanism, can simplify the message update rule into a linear fusion model, with $L_n$ and $\theta$ defined as:

for each pixel $l$,

$\theta = A_l L_n$, (5)

wherein $A_l$ is a $1 \times N$ vector, each element $a_i$ of which represents the relationship between a given image and the other images, and $L_n$ is an $N \times 1$ vector.

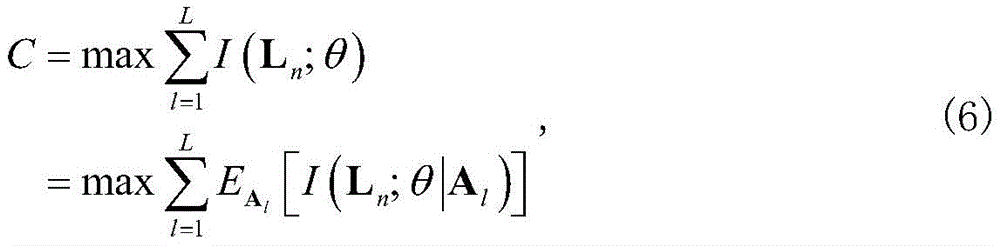

Further, the sensor fusion capacity is defined by the mutual information between $\theta$ and $L_n$:

where $l$ is the pixel index.

Further, the capacity of a multiple-input single-output (MISO) system with a per-pixel power constraint is obtained

wherein $M$ is the number of selected images and does not exceed $N$, $\sigma^2$ is the power of the additive white Gaussian noise, $a_i$ is the $i$-th element of $A_l$, and $p_i$ is the power per pixel.

Further, since $p_i$ is set to be constant, equation (7) becomes

wherein $a_i$ represents the feature correlation between image $n$ and the other images, computed using canonical correlation analysis (CCA), and the remaining term is the average signal-to-noise ratio of each image.
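The expressions of equations (7) and (8) are not reproduced in this text. As a hedged illustration, the Python sketch below assumes the well-known capacity form of a MISO channel under per-antenna power constraints, $C = \log_2\left(1 + \left(\sum_{i=1}^{M} |a_i| \sqrt{p_i}\right)^2 / \sigma^2\right)$, which may differ from the form intended by the invention. With a constant power $p$, this assumed form reduces to $\log_2\left(1 + \gamma \left(\sum_i |a_i|\right)^2\right)$, where $\gamma = p / \sigma^2$ plays the role of an average signal-to-noise ratio. Both function names are hypothetical.

```python
import math


def miso_capacity(a, p, noise_power):
    """Assumed form: log2(1 + (sum_i |a_i| * sqrt(p_i))**2 / sigma^2)."""
    if noise_power <= 0:
        raise ValueError("noise_power must be positive")
    amplitude = sum(abs(a_i) * math.sqrt(p_i) for a_i, p_i in zip(a, p))
    return math.log2(1.0 + amplitude ** 2 / noise_power)


def miso_capacity_constant_power(a, snr):
    """Same assumed form with constant per-pixel power, where snr = p / sigma^2."""
    return math.log2(1.0 + snr * sum(abs(a_i) for a_i in a) ** 2)


# Hypothetical correlations a_i for M = 3 selected images.
a = [0.9, 0.4, 0.7]
general = miso_capacity(a, p=[2.0, 2.0, 2.0], noise_power=0.5)
constant = miso_capacity_constant_power(a, snr=4.0)
print(general, constant)
```

Under this assumption the two functions agree whenever every $p_i$ equals the same constant, which mirrors the step from equation (7) to the constant-power case.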

The invention further discloses a system for selecting images based on deep learning SAR image classification, which comprises a processor and a machine-readable storage medium, wherein the machine-readable storage medium is connected with the processor and is used for storing programs, instructions or codes, and the processor is used for executing the programs, the instructions or the codes in the machine-readable storage medium so as to realize the method for selecting images based on deep learning SAR image classification.

Compared with the prior art, the invention has the following beneficial effect: the algorithm adopted by the invention greatly reduces the amount of computation while achieving optimal image classification performance.

Drawings

The invention will be further understood from the following description in conjunction with the accompanying drawings. The components in the figures are not necessarily to scale, emphasis instead being placed upon illustrating the principles of the embodiments. In the drawings, like reference numerals designate corresponding parts throughout the different views.

FIG. 1 is a logic flow diagram of the present invention.

FIG. 2 illustrates the process of the image selection method according to an embodiment of the invention.

Detailed Description

Example one

As shown in fig. 1, the present embodiment discloses an image selection method based on deep learning SAR image classification, which includes the following steps:

step 1, initializing an image to be analyzed;

step 2, introducing a factor graph to describe the relation between corresponding pixels in different images, and establishing the relation between the corresponding pixels of the different images as a Markov model;

step 3, using a belief propagation algorithm, so that computing the joint statistical distribution of the sensors is avoided and the computation of the image selection algorithm is simplified, wherein the belief propagation algorithm, serving as a message-passing mechanism, can simplify the message update rule into a linear fusion model;

step 4, determining the feature correlations between the selected image and the other images, and determining the sensor fusion capacity.
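The message-passing idea behind the belief propagation step can be sketched in Python as follows. This is a generic sum-product example on a chain-structured Markov model, not the specific update rule of the invention; the chain topology, the potentials, and the function names are assumptions made for illustration. On a chain the beliefs are exact, so they can be checked against brute-force marginals.

```python
import itertools

import numpy as np


def chain_marginals_bp(unary, pairwise):
    """Sum-product belief propagation on a chain S_0 - S_1 - ... - S_{N-1}."""
    n_nodes, n_labels = unary.shape
    fwd = np.ones((n_nodes, n_labels))  # message reaching node n from its left neighbour
    bwd = np.ones((n_nodes, n_labels))  # message reaching node n from its right neighbour
    for n in range(1, n_nodes):
        msg = pairwise.T @ (unary[n - 1] * fwd[n - 1])
        fwd[n] = msg / msg.sum()
    for n in range(n_nodes - 2, -1, -1):
        msg = pairwise @ (unary[n + 1] * bwd[n + 1])
        bwd[n] = msg / msg.sum()
    beliefs = unary * fwd * bwd
    return beliefs / beliefs.sum(axis=1, keepdims=True)


def chain_marginals_brute_force(unary, pairwise):
    """Reference marginals obtained by summing over every joint labelling."""
    n_nodes, n_labels = unary.shape
    marginals = np.zeros((n_nodes, n_labels))
    for labels in itertools.product(range(n_labels), repeat=n_nodes):
        weight = np.prod([unary[n, s] for n, s in enumerate(labels)])
        weight *= np.prod(
            [pairwise[labels[n], labels[n + 1]] for n in range(n_nodes - 1)]
        )
        for n, s in enumerate(labels):
            marginals[n, s] += weight
    return marginals / marginals.sum(axis=1, keepdims=True)


# Hypothetical potentials for N = 4 images and two classes.
unary = np.array([[0.9, 0.1], [0.4, 0.6], [0.8, 0.2], [0.3, 0.7]])
pairwise = np.array([[0.7, 0.3], [0.4, 0.6]])

bp = chain_marginals_bp(unary, pairwise)
bf = chain_marginals_brute_force(unary, pairwise)
print(np.allclose(bp, bf))
```

The message-passing version needs only two sweeps over the chain, whereas the brute-force reference visits every joint labelling.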

Still further, the step 2 further comprises: feature-layer fusion can be expressed as a maximum a posteriori (MAP) estimate,

wherein $S$ represents the labels of the corresponding pixels of the $N$ images, and $Y = [y_1, \dots, y_N]$ contains the features of the corresponding pixels of the different images.

Further, a factor graph is introduced to describe the relationship between corresponding pixels in different images, and the posterior distribution $p(S \mid Y)$ is decomposed into unary and pairwise terms

wherein each unary term $\Phi_n(S_n)$ models $S_n$ in the joint distribution, and each pairwise term $\Psi_{ij}(S_i, S_j)$ represents the interdependence of $S_i$ and $S_j$, which are joined by an edge in the graph.

Still further, the step 3 further comprises: the belief propagation algorithm, serving as a message-passing mechanism, can simplify the message update rule into a linear fusion model, with $L_n$ and $\theta$ defined as:

for each pixel $l$,

$\theta = A_l L_n$, (5)

wherein $A_l$ is a $1 \times N$ vector, each element $a_i$ of which represents the relationship between a given image and the other images, and $L_n$ is an $N \times 1$ vector.

Further, the sensor fusion capacity is defined by the mutual information between $\theta$ and $L_n$:

where $l$ is the pixel index.

Further, the capacity of a multiple-input single-output (MISO) system with a per-pixel power constraint is obtained

wherein $M$ is the number of selected images and does not exceed $N$, $\sigma^2$ is the power of the additive white Gaussian noise, $a_i$ is the $i$-th element of $A_l$, and $p_i$ is the power per pixel.

Further, since $p_i$ is set to be constant, equation (7) becomes

wherein $a_i$ represents the feature correlation between image $n$ and the other images, computed using canonical correlation analysis (CCA), and the remaining term is the average signal-to-noise ratio of each image.

The invention further discloses a system for selecting images based on deep learning SAR image classification, which comprises a processor and a machine-readable storage medium, wherein the machine-readable storage medium is connected with the processor and is used for storing programs, instructions or codes, and the processor is used for executing the programs, the instructions or the codes in the machine-readable storage medium so as to realize the method for selecting images based on deep learning SAR image classification.

Example two

The process of the image selection method of the present invention is illustrated in fig. 2.

First, let image 1 be the reference image. If $M = 2$, the CCA between image 1 and image 2 is calculated to obtain $a_i$. If $M = 3$, the CCA between image 1 and the other images is calculated to obtain $a_i$. Then, let image 2 be the reference image. If $M = 2$, the CCA between image 2 and image 3 is calculated to obtain $a_i$.
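The computation of $a_i$ for a given reference image can be sketched in Python as follows. The sketch takes $a_i$ to be the largest canonical correlation between two feature matrices, computed through QR decompositions followed by a singular value decomposition; this choice, the function names, and the synthetic features are assumptions for illustration, and the feature matrices are assumed to have full column rank. Image indices in the code are zero-based.

```python
import numpy as np


def first_canonical_correlation(x, y):
    """Largest canonical correlation between x and y (rows: samples, columns: features)."""
    x = x - x.mean(axis=0)
    y = y - y.mean(axis=0)
    qx, _ = np.linalg.qr(x)  # orthonormal basis of the centred columns of x
    qy, _ = np.linalg.qr(y)  # orthonormal basis of the centred columns of y
    singular_values = np.linalg.svd(qx.T @ qy, compute_uv=False)
    return float(np.clip(singular_values[0], 0.0, 1.0))


def correlations_for_reference(features, ref):
    """Compute a_i between the reference image and every other image."""
    return {
        i: first_canonical_correlation(features[ref], features[i])
        for i in range(len(features))
        if i != ref
    }


# Synthetic, hypothetical features for three images (2000 pixels, 3 features each).
rng = np.random.default_rng(0)
img1 = rng.normal(size=(2000, 3))
img2 = img1 @ rng.normal(size=(3, 3))  # linear function of img1, so strongly related
img3 = rng.normal(size=(2000, 3))      # generated independently of img1
features = [img1, img2, img3]

a_ref1 = correlations_for_reference(features, ref=0)  # image 1 as the reference
a_ref2 = correlations_for_reference(features, ref=1)  # image 2 as the reference
print(a_ref1, a_ref2)
```

Repeating the call with each image in turn as the reference yields the set of $a_i$ values that the selection procedure compares.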

It should also be noted that the terms "comprises," "comprising," or any other variation thereof, are intended to cover a non-exclusive inclusion, such that a process, method, article, or apparatus that comprises a list of elements does not include only those elements but may include other elements not expressly listed or inherent to such process, method, article, or apparatus. Without further limitation, an element defined by the phrase "comprising a …" does not exclude the presence of other like elements in a process, method, article, or apparatus that comprises the element.

As will be appreciated by one skilled in the art, embodiments of the present application may be provided as a method, system, or computer program product. Accordingly, the present application may take the form of an entirely hardware embodiment, an entirely software embodiment or an embodiment combining software and hardware aspects. Furthermore, the present application may take the form of a computer program product embodied on one or more computer-usable storage media (including, but not limited to, disk storage, CD-ROM, optical storage, and the like) having computer-usable program code embodied therein.

Although the invention has been described above with reference to various embodiments, it should be understood that many changes and modifications may be made without departing from the scope of the invention. It is therefore intended that the foregoing detailed description be regarded as illustrative rather than limiting, and that it be understood that it is the following claims, including all equivalents, that are intended to define the spirit and scope of this invention. The above examples are to be construed as merely illustrative and not limitative of the remainder of the disclosure. After reading the description of the invention, the skilled person can make various changes or modifications to the invention, and these equivalent changes and modifications also fall into the scope of the invention defined by the claims.