CN113499173B - Real-time instance segmentation-based terrain identification and motion prediction system for lower artificial limb - Google Patents

- Publication number

- CN113499173B (application number CN202110780493.5A)

- Authority

- CN

- China

- Prior art keywords

- lower limb

- terrain

- information

- limb prosthesis

- real

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Classifications

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61F—FILTERS IMPLANTABLE INTO BLOOD VESSELS; PROSTHESES; DEVICES PROVIDING PATENCY TO, OR PREVENTING COLLAPSING OF, TUBULAR STRUCTURES OF THE BODY, e.g. STENTS; ORTHOPAEDIC, NURSING OR CONTRACEPTIVE DEVICES; FOMENTATION; TREATMENT OR PROTECTION OF EYES OR EARS; BANDAGES, DRESSINGS OR ABSORBENT PADS; FIRST-AID KITS

- A61F2/00—Filters implantable into blood vessels; Prostheses, i.e. artificial substitutes or replacements for parts of the body; Appliances for connecting them with the body; Devices providing patency to, or preventing collapsing of, tubular structures of the body, e.g. stents

- A61F2/50—Prostheses not implantable in the body

- A61F2/60—Artificial legs or feet or parts thereof

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/22—Matching criteria, e.g. proximity measures

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

-

- A—HUMAN NECESSITIES

- A61—MEDICAL OR VETERINARY SCIENCE; HYGIENE

- A61F—FILTERS IMPLANTABLE INTO BLOOD VESSELS; PROSTHESES; DEVICES PROVIDING PATENCY TO, OR PREVENTING COLLAPSING OF, TUBULAR STRUCTURES OF THE BODY, e.g. STENTS; ORTHOPAEDIC, NURSING OR CONTRACEPTIVE DEVICES; FOMENTATION; TREATMENT OR PROTECTION OF EYES OR EARS; BANDAGES, DRESSINGS OR ABSORBENT PADS; FIRST-AID KITS

- A61F2/00—Filters implantable into blood vessels; Prostheses, i.e. artificial substitutes or replacements for parts of the body; Appliances for connecting them with the body; Devices providing patency to, or preventing collapsing of, tubular structures of the body, e.g. stents

- A61F2/50—Prostheses not implantable in the body

- A61F2/68—Operating or control means

- A61F2/70—Operating or control means electrical

- A61F2002/704—Operating or control means electrical computer-controlled, e.g. robotic control

Landscapes

- Engineering & Computer Science (AREA)

- Health & Medical Sciences (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- Life Sciences & Earth Sciences (AREA)

- Data Mining & Analysis (AREA)

- General Engineering & Computer Science (AREA)

- Artificial Intelligence (AREA)

- Biomedical Technology (AREA)

- Evolutionary Computation (AREA)

- General Health & Medical Sciences (AREA)

- General Physics & Mathematics (AREA)

- Software Systems (AREA)

- Transplantation (AREA)

- Molecular Biology (AREA)

- Mathematical Physics (AREA)

- Computational Linguistics (AREA)

- Biophysics (AREA)

- Computing Systems (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Heart & Thoracic Surgery (AREA)

- Evolutionary Biology (AREA)

- Orthopedic Medicine & Surgery (AREA)

- Bioinformatics & Computational Biology (AREA)

- Cardiology (AREA)

- Oral & Maxillofacial Surgery (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Vascular Medicine (AREA)

- Animal Behavior & Ethology (AREA)

- Public Health (AREA)

- Veterinary Medicine (AREA)

- Image Analysis (AREA)

- Image Processing (AREA)

Abstract

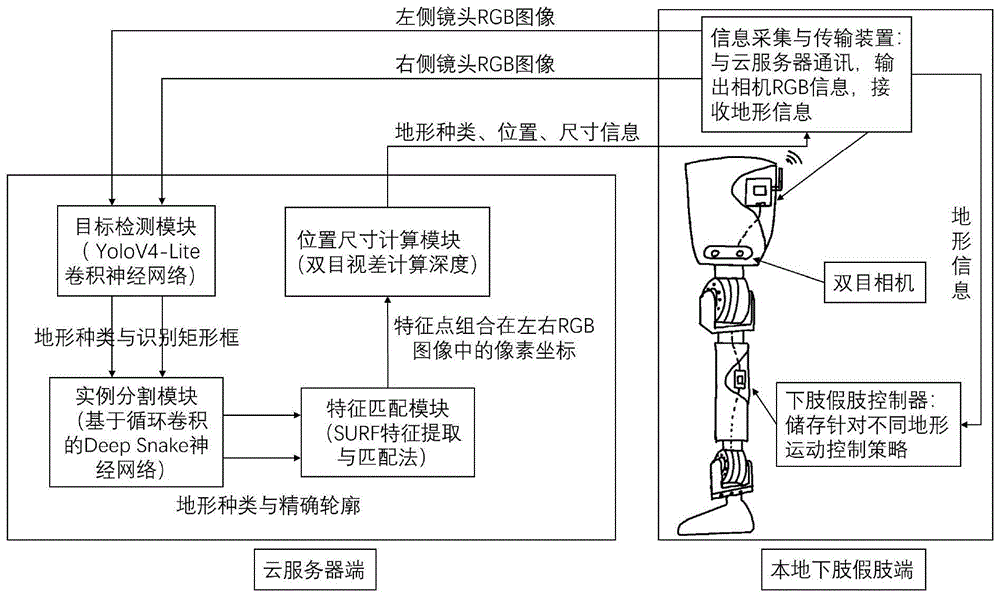

The invention provides a terrain recognition and motion prediction system for lower limb prostheses based on real-time instance segmentation. A binocular camera collects terrain information around the lower limb prosthesis. An image acquisition and information transmission hardware platform collects the information obtained by the binocular camera and uploads it to a cloud server; the platform is electrically connected to the lower limb prosthesis controller, which controls the movement mode of the prosthesis. The cloud server hosts a target terrain detection module, an instance segmentation module, a feature matching module and a position and size calculation module; the terrain information is processed by these modules in turn and then fed back to the image acquisition and information transmission hardware platform. The invention makes a multi-motion-mode lower limb prosthesis more intelligent: it can perceive the surrounding terrain distribution accurately and in real time and correct and adjust its motion control strategy promptly, which improves the wearer's mobility and wearing comfort.

Description

Technical field

The invention relates to active environment perception for lower limb prostheses in complex terrain, and in particular to a terrain recognition and motion prediction system for lower limb prostheses based on real-time instance segmentation.

Background art

A lower limb prosthesis assists people with disabilities in movement and rehabilitation training. It is an externally powered mechanical assistive device that integrates mechanical, electronic, sensor, intelligent control and transmission technologies and is designed around the ergonomic structure of the user. It provides support, protection, movement and other auxiliary functions. The level of intelligent control and the comfort of the prosthesis largely determine the wearer's mobility.

The Chinese invention patent document with announcement number CN209422174U discloses a vision-integrated environment recognition system for powered prostheses, comprising a prosthesis body, a power module, a motion sensing module, a visual detection module and a control module. The power module moves the prosthesis body, the motion sensing module obtains the state of the prosthesis body, and the visual detection module obtains information about its surroundings. From the state and surroundings information, the control module judges the road conditions and obstacles around the user, predicts the movement trend of the prosthesis and infers the user's movement intention, and then controls the power module so that the prosthesis moves appropriately, helping the patient adapt to different road conditions or step over obstacles. While the patient uses the prosthesis, the system perceives the movement intention in advance and continuously monitors the surrounding road conditions; its data feedback is real-time and stable, which makes it convenient to use.

At present, conventional lower limb prostheses suffer from a series of problems: a low degree of intelligence, a single motion mode and long strategy-switching latency; inaccurate recognition of the surrounding terrain, long terrain-recognition latency and no ability to predict terrain; and poor practicality in continuously changing, complex terrain. These problems not only prevent the wearer from regaining good mobility but also put the wearer at risk of injury.

Summary of the invention

In view of the defects in the prior art, the object of the invention is to provide a terrain recognition and motion prediction system for lower limb prostheses based on real-time instance segmentation.

In the system provided by the invention, the local end comprises a lower limb prosthesis, a lower limb prosthesis controller, a binocular camera and an image acquisition and information transmission hardware platform, and the cloud end comprises a cloud server.

The binocular camera is mounted on the lower limb prosthesis and collects terrain information around it. The image acquisition and information transmission hardware platform collects the information obtained by the binocular camera and uploads it to the cloud server. The lower limb prosthesis controller controls the movement mode of the prosthesis.

The cloud server hosts a target terrain detection module, an instance segmentation module, a feature matching module and a position and size calculation module. Terrain information is processed by these modules in turn and then fed back to the lower limb prosthesis controller.

Preferably, the lower limb prosthesis comprises a knee joint and an ankle joint; the knee joint has one degree of freedom, and the ankle joint has two active degrees of freedom: flexion/extension and external rotation/inversion.

Preferably, the binocular camera is mounted at the front of the knee joint and collects RGB images of the terrain around the prosthesis. The camera is configured for 1280×720 resolution at a sampling rate of 30 frames per second.

Preferably, the image acquisition and information transmission hardware platform is housed in a cavity inside the prosthesis structure. It receives the RGB images from the binocular camera in real time, transmits them to the cloud server over a wireless network, receives the terrain information fed back by the cloud server in real time, and outputs it to the lower limb prosthesis motion controller.

Preferably, the cloud server is equipped with a CPU and a GPU. The CPU is multi-threaded and serves multiple users simultaneously; the GPU accelerates RGB image processing.

Preferably, the lower limb prosthesis controller controls the movement of the prosthesis through the following steps:

Step S1: Using an IMU, collect motion data of the lower limbs of healthy people in different terrain environments and store the data in the lower limb prosthesis controller.

Step S2: The lower limb prosthesis controller receives from the cloud server the data on the terrain where the prosthesis is currently located.

Step S3: Based on the stored human lower limb motion data, the controller drives the prosthesis into a motion state suited to that terrain.

Step S4: For terrain or obstacles still some distance away, the controller uses the current direction and speed of movement to predict whether they will be reached and the estimated time of arrival, so that state switching is made smoother in software.

Preferably, the target terrain detection module uses a trained and optimized MobileNet-based YoloV4-Lite convolutional neural network to make an initial identification of the different terrains in the RGB image, marks each with a bounding box, and outputs the boxes to the instance segmentation module.

Preferably, the instance segmentation module uses a trained Deep Snake convolutional neural network based on circular convolution. For each bounding box from target detection it extracts an initial contour with the extreme points method, then uses the Deep Snake network to deform the initial contour into a terrain contour that fits each terrain precisely, and outputs the contour to the feature matching module.

Preferably, the feature matching module takes the pixels that lie within contours of the same terrain type, captured by the binocular camera at the same instant and processed by the instance segmentation module, and applies the Speeded Up Robust Features (SURF) method to extract and match features in each view. From the disparity of matched feature points and the binocular camera parameters it computes the depth of each feature point and then its three-dimensional coordinates.

Preferably, for recognized terrain, the position and size calculation module computes the position and the length and width range from the coordinates of feature points on the contour edge; for recognized steps, obstacles and the like, it computes the length, width and height as well as the position from the coordinates of the contour corner points.

Compared with the prior art, the invention has the following beneficial effects:

1. The terrain recognition and motion prediction system of the invention makes a multi-motion-mode lower limb prosthesis more intelligent: it can perceive the surrounding terrain distribution accurately and in real time and correct and adjust its motion control strategy promptly, which improves the wearer's mobility and wearing comfort.

Brief description of the drawings

Other features, objects and advantages of the invention will become more apparent from the detailed description of non-limiting embodiments given with reference to the following drawings:

Figure 1 is a schematic structural diagram of the terrain recognition and motion prediction system for lower limb prostheses based on real-time instance segmentation according to an embodiment of the present application;

Figure 2 is a schematic diagram of the depthwise separable convolution used in the system;

Figure 3 is a structure diagram of the YOLOV4 network used in the system;

Figure 4 is a structure diagram of the Deep Snake network based on circular convolution used in the system;

Figure 5 is a schematic diagram of the binocular vision positioning principle used in the system.

Detailed description

The invention is described in detail below with reference to specific embodiments. The following embodiments will help those skilled in the art to understand the invention further, but do not limit it in any form. It should be noted that those of ordinary skill in the art can make changes and improvements without departing from the concept of the invention, and these all fall within its scope of protection.

A terrain recognition and motion prediction system for lower limb prostheses based on real-time instance segmentation, shown in Figure 1, comprises a local end and a cloud end. The local end comprises a lower limb prosthesis, a lower limb prosthesis controller, a binocular camera and an image acquisition and information transmission hardware platform; the cloud end comprises a cloud server.

The lower limb prosthesis has multiple motion modes. Its shank and foot are made of 3D-printed ABS resin. The prosthesis comprises a knee joint and an ankle joint, both made of 1060 aluminium alloy and driven by Maxon motors. The knee joint has one degree of freedom, and the ankle has two active degrees of freedom: flexion/extension and external rotation/inversion.

The binocular camera is mounted at the front of the knee joint of the prosthesis, fixed on a motorized gimbal, and collects RGB images of the terrain around the prosthesis. The camera is configured for 1280×720 resolution at a sampling rate of 30 frames per second. The image acquisition and information transmission hardware platform is housed in a cavity inside the prosthesis structure. It receives the RGB images from the binocular camera in real time, transmits them to the cloud server over a wireless network, receives the terrain information fed back by the cloud server in real time, and outputs it to the lower limb prosthesis motion controller, which controls the movement mode of the prosthesis.
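The camera configuration above fixes the raw data rate the wireless link would have to carry. The following is a rough sizing sketch only. It assumes uncompressed 24-bit RGB and that both views of the binocular camera are uploaded; the text states neither, and a real deployment would most likely compress the stream.

```python
def raw_stereo_bitrate_mbps(width=1280, height=720, fps=30,
                            bytes_per_pixel=3, cameras=2):
    """Uncompressed bitrate in Mbit/s (1 Mbit = 10**6 bits).

    bytes_per_pixel=3 (24-bit RGB) and cameras=2 (both stereo views
    uploaded) are assumptions, not values stated in the patent text.
    """
    bytes_per_second = width * height * bytes_per_pixel * fps * cameras
    return bytes_per_second * 8 / 1e6


if __name__ == "__main__":
    print(f"{raw_stereo_bitrate_mbps():.1f} Mbit/s uncompressed")
```

Under these assumptions the figure is well above 1 Gbit/s, which suggests that compression or a reduced upload rate would be needed to keep the cloud round trip real-time.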

The lower limb prosthesis controller controls the movement of the prosthesis through the following steps:

Step S1: Using an IMU, collect motion data of the lower limbs of healthy people walking and running on sand, grass and asphalt, going up and down ramps and steps, and crossing obstacles of different widths and heights, and store the data in the lower limb prosthesis controller.

Step S2: The lower limb prosthesis controller receives from the cloud server the data on the terrain where the prosthesis is currently located.

Step S3: Based on the stored human lower limb motion data, the controller drives the prosthesis into a motion state suited to that terrain.

Step S4: For terrain or obstacles still some distance away, the controller uses the current direction and speed of movement to predict whether they will be reached and the estimated time of arrival, and prepares for the motion strategy switch. This makes state switching smoother in software and improves the comfort of the prosthesis.
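Step S4 can be illustrated with a minimal geometric sketch. The patent does not specify the prediction rule, so everything below is an assumption: the obstacle position is given in a ground-plane frame centred on the prosthesis, the velocity is constant, and an obstacle counts as "on the path" when its lateral distance from the heading line is within a hypothetical `corridor_half_width`.

```python
import math


def predict_arrival(obstacle_xy, velocity_xy, corridor_half_width=0.3):
    """Return the estimated time of arrival in seconds, or None.

    obstacle_xy: obstacle position (m) relative to the prosthesis.
    velocity_xy: current ground-plane velocity (m/s).
    corridor_half_width: hypothetical tolerance (m) for "on the path".
    """
    ox, oy = obstacle_xy
    vx, vy = velocity_xy
    speed = math.hypot(vx, vy)
    if speed < 1e-6:
        return None  # standing still: no arrival predicted
    hx, hy = vx / speed, vy / speed      # unit heading vector
    along = ox * hx + oy * hy            # distance along the heading
    across = abs(ox * hy - oy * hx)      # lateral miss distance
    if along <= 0 or across > corridor_half_width:
        return None  # behind the wearer or off to the side
    return along / speed


if __name__ == "__main__":
    print(predict_arrival((0.0, 3.0), (0.0, 1.5)))  # step 3 m ahead at 1.5 m/s
```

A controller could compare the returned time with the time it needs to switch strategy and start the transition early enough; that scheduling logic is outside this sketch.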

The cloud server hosts a target terrain detection module, an instance segmentation module, a feature matching module and a position and size calculation module. Terrain information is processed by these modules in turn and then fed back to the image acquisition and information transmission hardware platform, which issues control instructions to the lower limb prosthesis controller.

The cloud server is equipped with a CPU and a GPU. The CPU is multi-threaded and serves multiple users simultaneously; the GPU accelerates RGB image processing. To cope with a growing number of users and with failures, the server is highly scalable and has a redundant backup mechanism.

The target terrain detection module must perform fine-grained image detection. A detection framework based on a deep convolutional neural network, with good real-time performance and high accuracy, is designed and built, and the network determines the category and precise contour of each target. A detection dataset of the target terrains is collected and manually annotated, split into training, validation and test sets, and preprocessed. A deep convolutional neural network with a suitable structure and a suitable loss function is designed and trained on the dataset. The hyperparameters and network structure are tuned iteratively according to the training results, with the aim of obtaining a network with good real-time performance and high recognition accuracy. The invention uses an improved MobileNet-based YoloV4-Lite to recognize grass, sand, asphalt and steps. The main feature of MobileNet is depthwise separable convolution: this channel-by-channel convolution greatly reduces the number of parameters while preserving feature extraction quality, as shown in Figure 2.
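The parameter saving from depthwise separable convolution can be checked with simple arithmetic. The sketch below compares the weight counts of a standard convolution and of a depthwise convolution followed by a 1×1 pointwise convolution. Bias terms are ignored, and the layer sizes in the example are illustrative rather than taken from the patent.

```python
def conv_params(k, c_in, c_out):
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Weights in a k x k depthwise conv plus a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 conv that mixes channels
    return depthwise + pointwise


if __name__ == "__main__":
    k, c_in, c_out = 3, 128, 256   # illustrative layer sizes
    std = conv_params(k, c_in, c_out)
    sep = depthwise_separable_params(k, c_in, c_out)
    print(std, sep, round(std / sep, 2))
```

For a 3×3 kernel the ratio approaches 9 as the number of output channels grows, which is where most of the reduction comes from.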

Referring to Figure 3, the input of the original YOLOV4 network is a 416×416 RGB image, and features are extracted by the CSPDarknet-53 backbone. Three feature layers of different sizes are used to fuse features at multiple scales; the network regresses the offset of the target position relative to a default box and outputs a classification confidence at the same time. The original YOLOV4 structure is relatively complex and its prediction is slow, so the network structure has to be adjusted to meet the detection speed required in hand-eye coordination. As a trade-off between accuracy and speed, MobileNet is used as the backbone for feature extraction, while the input resolution is left unchanged and the same number of feature layers is used for target detection.
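To make the three feature layers concrete, the sketch below computes their grid sizes for a 416×416 input. The strides of 8, 16 and 32 are those of the standard YOLOv4 design and are an assumption here, since the patent text does not list them.

```python
def feature_grid_sizes(input_size=416, strides=(8, 16, 32)):
    """Side length of each detection feature map.

    The strides are the usual YOLOv4 values, assumed rather than
    taken from the patent text.
    """
    return [input_size // s for s in strides]


if __name__ == "__main__":
    print(feature_grid_sizes())
```

Each grid cell predicts box offsets and class confidences, so the finest grid handles small targets and the coarsest grid handles large ones.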

The instance segmentation module takes the bounding boxes and terrain class labels produced by the target terrain detection module and separates each terrain from its background to obtain a precise contour, using an algorithm with low computational complexity and good real-time performance. As shown in Figure 4, the Deep Snake algorithm represents an object's shape by its outermost contour, so the number of parameters is very small, close to that of a bounding-box representation. First, the midpoints of the four sides of a detected terrain bounding box are connected to form a diamond. The four vertices of the diamond are fed into the Deep Snake network, which predicts offsets pointing to the four extreme pixels of the target terrain in the image (topmost, bottommost, leftmost and rightmost); deforming the diamond by these offsets gives the coordinates of the four extreme points. Second, a line segment is extended through each extreme point, and the segments are joined to form an irregular octagon, which serves as the initial contour. Third, an object contour can be treated as a cycle graph in which every node has exactly two neighbours in a fixed order, so a convolution kernel can be defined on it and the iterative contour refinement can be handled by convolution. A deep network based on circular convolution therefore fuses the features of the current contour points and predicts offsets pointing to the object contour, and the contour point coordinates are deformed by these offsets. Finally, starting from the initial contour, this process is repeated until the computed contour fits the actual edge of the target well, that is, until the predicted offsets fall below a threshold. The output consists of the terrain class label and the set of coordinates of the contour points.
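The three building blocks described above (the diamond built from a bounding box, circular convolution over contour vertices, and deformation repeated until the offsets are small) can be sketched in NumPy. This is an illustration under assumptions, not the trained Deep Snake network: `predict_offsets` is a hypothetical callable standing in for the network, and the tolerance and iteration cap are arbitrary.

```python
import numpy as np


def box_to_diamond(x1, y1, x2, y2):
    """Midpoints of the four box sides: top, right, bottom, left."""
    cx, cy = (x1 + x2) / 2.0, (y1 + y2) / 2.0
    return np.array([[cx, y1], [x2, cy], [cx, y2], [x1, cy]])


def circular_conv(features, kernel):
    """Convolve along a closed contour.

    features: (N, C_in) per-vertex features; kernel: (K, C_in, C_out), K odd.
    Indices wrap around, so the first and last vertices are neighbours.
    """
    radius = kernel.shape[0] // 2
    out = np.zeros((features.shape[0], kernel.shape[2]))
    for j in range(kernel.shape[0]):
        # After the roll, row i holds the features of vertex (i + j - radius) mod N.
        out += np.roll(features, radius - j, axis=0) @ kernel[j]
    return out


def refine_contour(contour, predict_offsets, tol=1.0, max_iters=10):
    """Apply predicted offsets until they fall below tol (in pixels)."""
    for _ in range(max_iters):
        offsets = predict_offsets(contour)  # hypothetical network call
        contour = contour + offsets
        if np.abs(offsets).max() < tol:
            break
    return contour


if __name__ == "__main__":
    print(box_to_diamond(0, 0, 4, 2))
    feats = np.arange(4, dtype=float).reshape(4, 1)
    print(circular_conv(feats, np.ones((3, 1, 1))).ravel())
```

Because the contour is closed, wrapping the indices avoids the padding artefacts an ordinary 1D convolution would produce at the ends of the vertex list.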

In addition, terrain belongs to the background class of targets, and occlusion by foreground objects is very common. To handle this, a grouped detection method can be used: the discontinuous parts of a terrain that are cut off by foreground objects are assigned to separate detection bounding boxes, and a contour is then extracted in each box.

The feature matching module and the position and size calculation module take the pixels that lie within contours of the same terrain type, captured by the binocular camera at the same instant and processed by the instance segmentation module, and apply the Speeded Up Robust Features (SURF) method to extract and match features in each view. From the disparity of matched feature points and the binocular camera parameters, the depth of each feature point is computed and then its three-dimensional coordinates; the binocular vision positioning principle is shown in Figure 5. For recognized grass, sand, asphalt and the like, the position and the length and width range can be computed from the coordinates of feature points on the contour edge. For recognized steps, obstacles and the like, the length, width and height as well as the position can be computed from the coordinates of the contour corner points.
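The depth and size computation can be sketched as follows. It assumes a rectified stereo pair and a pinhole camera model, so that a matched point has the same row in both images and depth follows from Z = f·B/d. The focal length, baseline and principal point in the example are made-up values, and feature matching itself (SURF in the text) is not shown.

```python
import numpy as np


def triangulate(u_left, v_left, u_right, focal_px, baseline_m, cx, cy):
    """3D point in the left-camera frame from one matched feature.

    Assumes rectified images; all camera parameters are illustrative.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a valid match")
    z = focal_px * baseline_m / disparity   # depth from disparity
    x = (u_left - cx) * z / focal_px        # back-project the pixel column
    y = (v_left - cy) * z / focal_px        # back-project the pixel row
    return np.array([x, y, z])


def extents(points_xyz):
    """Minimum corner and axis-aligned size of a set of 3D points."""
    pts = np.asarray(points_xyz, dtype=float)
    return pts.min(axis=0), pts.max(axis=0) - pts.min(axis=0)


if __name__ == "__main__":
    p = triangulate(740, 360, 700, focal_px=800, baseline_m=0.1, cx=640, cy=360)
    print(p)
    print(extents([[0, 0, 2.0], [0.4, 0, 2.0], [0.4, 0.15, 2.3]]))
```

Applying `extents` to the triangulated contour corner points of a step gives an axis-aligned estimate of its width, height and depth; a real system would also need to transform these from the camera frame into a ground-aligned frame.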

本领域技术人员知道,除了以纯计算机可读程序代码方式实现本发明提供的系统及其各个装置、模块、单元以外,完全可以通过将方法步骤进行逻辑编程来使得本发明提供的系统及其各个装置、模块、单元以逻辑门、开关、专用集成电路、可编程逻辑控制器以及嵌入式微控制器等的形式来实现相同功能。所以,本发明提供的系统及其各项装置、模块、单元可以被认为是一种硬件部件,而对其内包括的用于实现各种功能的装置、模块、单元也可以视为硬件部件内的结构;也可以将用于实现各种功能的装置、模块、单元视为既可以是实现方法的软件模块又可以是硬件部件内的结构。Those skilled in the art know that, in addition to realizing the system provided by the present invention and its various devices, modules, and units in a purely computer-readable program code mode, the system provided by the present invention and its various devices can be completely programmed by logically programming the method steps. , modules, and units implement the same functions in the form of logic gates, switches, ASICs, programmable logic controllers, and embedded microcontrollers. Therefore, the system and its various devices, modules, and units provided by the present invention can be regarded as a hardware component, and the devices, modules, and units included in it for realizing various functions can also be regarded as hardware components. The structure; the devices, modules, and units for realizing various functions can also be regarded as not only the software modules for realizing the method, but also the structures in the hardware components.

在本申请的描述中,需要理解的是,术语“上”、“下”、“前”、“后”、“左”、“右”、“竖直”、“水平”、“顶”、“底”、“内”、“外”等指示的方位或位置关系为基于附图所示的方位或位置关系,仅是为了便于描述本申请和简化描述,而不是指示或暗示所指的装置或元件必须具有特定的方位、以特定的方位构造和操作,因此不能理解为对本申请的限制。In the description of this application, it should be understood that the terms "upper", "lower", "front", "rear", "left", "right", "vertical", "horizontal", "top", The orientation or positional relationship indicated by "bottom", "inner", "outer", etc. is based on the orientation or positional relationship shown in the drawings, and is only for the convenience of describing the application and simplifying the description, rather than indicating or implying the referred device Or elements must have a certain orientation, be constructed and operate in a certain orientation, and thus should not be construed as limiting the application.

Specific embodiments of the present invention have been described above. It should be understood that the present invention is not limited to these specific embodiments; those skilled in the art may make various changes or modifications within the scope of the claims without affecting the substance of the invention. Where no conflict arises, the embodiments of the present application and the features of those embodiments may be combined with one another in any manner.

Claims (6)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202110780493.5A CN113499173B (en) | 2021-07-09 | 2021-07-09 | Real-time instance segmentation-based terrain identification and motion prediction system for lower artificial limb |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN113499173A (en) | 2021-10-15 |
| CN113499173B (en) | 2022-10-28 |

Family

ID=78012619

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202110780493.5A Active CN113499173B (en) | 2021-07-09 | 2021-07-09 | Real-time instance segmentation-based terrain identification and motion prediction system for lower artificial limb |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN113499173B (en) |

Families Citing this family (5)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN114145890B (en) * | 2021-12-02 | 2023-03-10 | 中国科学技术大学 | Prosthetic device with terrain recognition |
| CN115844603A (en) * | 2022-12-12 | 2023-03-28 | 上海理工大学 | Hip and knee integrated intelligent artificial limb auxiliary obstacle crossing control system and method |
| CN116206358B (en) * | 2022-12-27 | 2025-12-26 | 北京通用人工智能研究院 | A method and system for predicting lower limb exoskeleton movement patterns based on VIO system |
| CN116030536B (en) * | 2023-03-27 | 2023-06-09 | 国家康复辅具研究中心 | Data collection and evaluation system for use state of upper limb prosthesis |
| CN116869713B (en) * | 2023-07-31 | 2024-11-15 | 南方科技大学 | Vision-assisted prosthetic control method, device, prosthesis and storage medium |

Citations (8)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN104408718A (en) * | 2014-11-24 | 2015-03-11 | 中国科学院自动化研究所 | Gait data processing method based on binocular vision measuring |
| CN205031391U (en) * | 2015-10-13 | 2016-02-17 | 河北工业大学 | Road condition recognition device of power type artificial limb |
| CN109446911A (en) * | 2018-09-28 | 2019-03-08 | 北京陌上花科技有限公司 | Image detecting method and system |
| CN209422174U (en) * | 2018-08-02 | 2019-09-24 | 南方科技大学 | Dynamic artificial limb environment recognition system integrating vision |
| CN110901788A (en) * | 2019-11-27 | 2020-03-24 | 佛山科学技术学院 | Biped mobile robot system with literacy ability |
| CN110974497A (en) * | 2019-12-30 | 2020-04-10 | 南方科技大学 | Electric prosthesis control system and control method |
| CN111247557A (en) * | 2019-04-23 | 2020-06-05 | 深圳市大疆创新科技有限公司 | Method, system and movable platform for moving target object detection |
| CN111658246A (en) * | 2020-05-19 | 2020-09-15 | 中国科学院计算技术研究所 | Intelligent joint prosthesis regulating and controlling method and system based on symmetry |

Family Cites Families (4)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN101453964B (en) * | 2005-09-01 | 2013-06-12 | 奥瑟Hf公司 | System and method for determining terrain transitions |
| US8229163B2 (en) * | 2007-08-22 | 2012-07-24 | American Gnc Corporation | 4D GIS based virtual reality for moving target prediction |
| CN109766864A (en) * | 2019-01-21 | 2019-05-17 | 开易(北京)科技有限公司 | Image detecting method, image detection device and computer readable storage medium |
| CN111174781B (en) * | 2019-12-31 | 2022-03-04 | 同济大学 | An Inertial Navigation Positioning Method Based on Joint Target Detection of Wearable Devices |

Non-Patent Citations (1)

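| Title |
|---|
| Lin Chuan et al., "Contour detection algorithms based on deep learning: a survey" (基于深度学习的轮廓检测算法:综述), Journal of Guangxi University of Science and Technology (《广西科技大学学报》), 2019-04-15, vol. 30, no. 02, main text pp. 1-9 * |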

Also Published As

| Publication number | Publication date |
|---|---|
| CN113499173A (en) | 2021-10-15 |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| CN113499173B (en) | | Real-time instance segmentation-based terrain identification and motion prediction system for lower artificial limb |
| CN106250867B (en) | | A kind of implementation method of the skeleton tracking system based on depth data |
| CN110441791B (en) | | Ground obstacle detection method based on forward-leaning 2D laser radar |
| Liu et al. | | Vision-assisted autonomous lower-limb exoskeleton robot |
| CN111027432B (en) | | A Vision-Following Robot Method Based on Gait Features |
| CN107423730A (en) | | A kind of body gait behavior active detecting identifying system and method folded based on semanteme |
| CN117671738B (en) | | Human body posture recognition system based on artificial intelligence |
| CN107742097B (en) | | Human behavior recognition method based on depth camera |
| CN109919137B (en) | | Pedestrian structural feature expression method |
| CN109344694A (en) | | A real-time recognition method of basic human actions based on 3D human skeleton |
| Hu et al. | | 3D Pose tracking of walker users' lower limb with a structured-light camera on a moving platform |
| CN102156994B (en) | | Joint positioning method for single-view unmarked human motion tracking |
| CN115346272A (en) | | Real-time tumble detection method based on depth image sequence |
| CN117529278A (en) | | Gait monitoring method and robot |
| CN118470902B (en) | | A method for generating a safe path to a smart toilet and detecting falls. |
| CN114548224B (en) | | 2D human body pose generation method and device for strong interaction human body motion |
| CN116206358A (en) | | Lower limb exoskeleton movement mode prediction method and system based on VIO system |
| CN116092128A (en) | | A monocular real-time human fall detection method and system based on machine vision |
| CN115797397A (en) | | A method and system for a robot to autonomously follow a target person around the clock |
| Haker et al. | | Self-organizing maps for pose estimation with a time-of-flight camera |
| CN207529394U (en) | | A kind of remote class brain three-dimensional gait identifying system towards under complicated visual scene |
| Kurmankhojayev et al. | | Monocular pose capture with a depth camera using a Sums-of-Gaussians body model |
| Lim et al. | | Depth image based gait tracking and analysis via robotic walker |
| Miao et al. | | Stereo-based Terrain Parameters Estimation for Lower Limb Exoskeleton |
| CN114494655A (en) | | Blind guiding method and device for assisting user to intelligent closestool based on artificial intelligence |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |