CN113286040A - Method for selecting image and apparatus thereof - Google Patents

Method for selecting image and apparatus thereof

- Publication number

- CN113286040A CN202110533008.4A

- Authority

- CN

- China

- Prior art keywords

- image

- user

- album

- images

- selecting

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Landscapes

- Processing Or Creating Images (AREA)

Abstract

A method for selecting an image and an apparatus thereof are provided. The method comprises the following steps: acquiring an image; determining at least one parameter associated with the image; and selecting, from a user's photo album, at least one image associated with the image in accordance with the at least one parameter. The present disclosure may help a user quickly select images in a photo album.

Description

Technical Field

The present disclosure relates to the field of electronic technology. More particularly, the present disclosure relates to a method for selecting an image and an apparatus for selecting an image.

Background

Taking photos has become a routine part of daily life and travel. Users take pictures when going out or when attending events such as parties, and users who attend an event together may share the images they have taken with one another.

At present, when image sharing is performed, a user needs to select an image by himself. For example, a user needs to manually select a photo to be sent on the social software, or needs to share the photo with the social software after manually selecting the photo in a local album. If the number of images of the user is large, it takes time and effort to perform the filtering, thereby causing inconvenience to the user.

Disclosure of Invention

Exemplary embodiments according to the present disclosure provide a method for selecting an image and an apparatus for selecting an image to solve at least the above-mentioned problems.

According to an exemplary embodiment of the present disclosure, there is provided a method for selecting an image, which may include: acquiring an image; determining at least one parameter associated with the image; and selecting, from a user's photo album, at least one image associated with the image in accordance with the at least one parameter.

Optionally, the at least one parameter may include at least one of a photographing location and a photographing time of the image.

Optionally, the step of acquiring the image may comprise: acquiring the image in response to the user's selection of the image in the photo album; or receiving the image from another user while the user is communicating with the other user; or when the user communicates with another user, receiving a first image from the another user and selecting a second image in the photo album by the user, the first image and the second image being the images.

Optionally, the step of determining at least one parameter related to the image may comprise: determining whether the user has an intention to select an image in the photo album; in the event that it is determined that the user has an intent to select an image in the album, at least one parameter associated with the image is determined.

Optionally, it may be determined that the user has an intention to select an image in the photo album by: detecting that a dwell time of the user in the photo album is greater than or equal to a predetermined time threshold; or detecting that the user has performed a sliding operation in the photo album; or detecting that the user has performed an operation to continue selecting an image.

Optionally, the step of determining at least one parameter related to the image may comprise: calculating, for each of the images, the correlation between that image and the other images; and determining the at least one parameter of the image having the greatest correlation with the other images.

Optionally, the step of determining at least one parameter related to the image may comprise: determining whether the shooting time and/or the shooting place of the first image and the second image are within a preset range; in the case where it is determined that the photographing time and/or photographing place of the first image and the second image are within a preset range, the preset range is determined as a criterion for selecting the at least one image.

Optionally, the step of selecting at least one image associated with the image from the user's photo album in accordance with the at least one parameter may comprise: determining a criterion for selecting the at least one image in dependence on the at least one parameter; selecting the at least one image from the photo album that matches the criteria and marking the at least one image in the photo album.

Optionally, the criterion may include at least one of a time range extending a predetermined time before and after the photographing time, and a distance range within a predetermined distance of the photographing place.

According to another exemplary embodiment of the present disclosure, there is provided an apparatus for selecting an image, which may include: an acquisition module configured to acquire an image; a determination module configured to determine at least one parameter related to the image; and a selection module configured to select at least one image associated with the image from a user's photo album in accordance with the at least one parameter.

Optionally, the at least one parameter may include at least one of a photographing location and a photographing time of the image.

Optionally, the acquisition module may be configured to: acquire the image in response to the user's selection of the image in the photo album; or receive the image from another user while the user is communicating with the other user; or, when the user communicates with another user, receive a first image from the other user and a selection of a second image in the photo album by the user, the first image and the second image being the images.

Optionally, the determining module may be configured to: determining whether the user has an intention to select an image in the photo album; in the event that it is determined that the user has an intent to select an image in the album, at least one parameter associated with the image is determined.

Optionally, the determination module may be configured to determine that the user has an intention to select an image in the album based on: the user's dwell time in the album is greater than or equal to a predetermined time threshold; or the user has a sliding operation in the photo album; or the user has an operation to continue selecting an image.

Optionally, the determination module may be configured to: calculate, for each of the images, the correlation between that image and the other images; and determine the at least one parameter of the image having the greatest correlation with the other images.

Optionally, the determining module may be configured to: determining whether the shooting time and/or the shooting place of the first image and the second image are within a preset range; in the case where it is determined that the photographing time and/or photographing place of the first image and the second image are within a preset range, the preset range is determined as a criterion for selecting the at least one image.

Optionally, the selection module may be configured to: determining a criterion for selecting the at least one image in dependence on the at least one parameter; selecting the at least one image from the photo album that matches the criteria and marking the at least one image in the photo album.

Optionally, the criterion may include at least one of a time range extending a predetermined time before and after the photographing time, and a distance range within a predetermined distance of the photographing place.

According to an exemplary embodiment of the present disclosure, there is provided a computer-readable storage medium having stored thereon instructions which, when executed by a processor, implement a method for selecting an image according to an exemplary embodiment of the present disclosure.

According to an exemplary embodiment of the present disclosure, there is provided a computing apparatus including: a processor; a memory storing instructions that, when executed by the processor, implement a method for selecting an image according to an exemplary embodiment of the present disclosure.

According to an exemplary embodiment of the present disclosure, a computer program product is provided, in which instructions are executed by at least one processor in an electronic device to perform the method for selecting an image as described above.

The method and the device can quickly and accurately select the image matched with the corresponding time or place for the user, save the operation time of the user and further improve the user experience.

Additional aspects and/or advantages of the present general inventive concept will be set forth in part in the description which follows and, in part, will be obvious from the description, or may be learned by practice of the general inventive concept.

Drawings

These and/or other aspects and advantages of the present disclosure will become apparent and more readily appreciated from the following description of the embodiments, taken in conjunction with the accompanying drawings of which:

Fig. 1 shows a schematic structural diagram of an electronic device according to an exemplary embodiment of the present disclosure;

Fig. 2 shows a flowchart of a method for selecting an image according to an exemplary embodiment of the present disclosure;

Fig. 3 shows a flowchart of a method for selecting an image according to another exemplary embodiment of the present disclosure;

Fig. 4 shows a flowchart of a method for selecting an image according to another exemplary embodiment of the present disclosure;

Fig. 5 shows a flowchart of a method for selecting an image according to another exemplary embodiment of the present disclosure;

Fig. 6 shows a block diagram of an apparatus for selecting an image according to an exemplary embodiment of the present disclosure;

Fig. 7 shows a schematic diagram of a computing device according to an exemplary embodiment of the present disclosure;

Figs. 8 and 9 show interface diagrams for selecting an image according to an exemplary embodiment of the present disclosure.

Detailed Description

The following detailed description is provided to assist the reader in obtaining a thorough understanding of the methods, devices, and/or systems described herein. However, various changes, modifications, and equivalents of the methods, apparatus, and/or systems described herein will be apparent to those skilled in the art after reviewing the disclosure of the present application. For example, the order of operations described herein is merely an example, and is not limited to those set forth herein, but may be changed as will become apparent after understanding the disclosure of the present application, except to the extent that operations must occur in a particular order. Moreover, descriptions of features known in the art may be omitted for clarity and conciseness.

The features described herein may be embodied in different forms and should not be construed as limited to the examples described herein. Rather, the examples described herein have been provided to illustrate only some of the many possible ways to implement the methods, devices, and/or systems described herein, which will be apparent after understanding the disclosure of the present application.

The terminology used herein is for the purpose of describing various examples only and is not intended to be limiting of the disclosure. The singular is also intended to include the plural unless the context clearly indicates otherwise. The terms "comprises," "comprising," and "having" specify the presence of stated features, quantities, operations, elements, components, and/or combinations thereof, but do not preclude the presence or addition of one or more other features, quantities, operations, components, elements, and/or combinations thereof.

Unless otherwise defined, all terms (including technical and scientific terms) used herein have the same meaning as commonly understood by one of ordinary skill in the art to which this disclosure belongs after understanding the present disclosure. Unless explicitly defined as such herein, terms (such as those defined in general dictionaries) should be interpreted as having a meaning that is consistent with their meaning in the context of the relevant art and the present disclosure, and should not be interpreted in an idealized or overly formal sense.

It should be noted that the terms "first," "second," and the like in the description and claims of the present disclosure and in the above-described drawings are used for distinguishing between similar elements and not necessarily for describing a particular sequential or chronological order. It is to be understood that the data so used is interchangeable under appropriate circumstances such that the embodiments of the disclosure described herein are capable of operation in sequences other than those illustrated or otherwise described herein. The implementations described in the exemplary embodiments below are not intended to represent all implementations consistent with the present disclosure. Rather, they are merely examples of apparatus and methods consistent with certain aspects of the present disclosure, as detailed in the appended claims.

Further, in the description of the examples, when it is considered that detailed description of well-known related structures or functions will cause a vague explanation of the present disclosure, such detailed description will be omitted.

Hereinafter, embodiments will be described in detail with reference to the accompanying drawings. However, the embodiments may be implemented in various forms and are not limited to the examples described herein.

The present disclosure provides a method for automatically selecting an image, which intelligently helps a user select an image to be transmitted/shared from an album, thereby saving the user's time and effort.

Fig. 1 shows a schematic structural diagram of an electronic device according to an exemplary embodiment of the present disclosure. The electronic device of fig. 1 can help a user quickly select an image to be transmitted/shared from an album.

The electronic device may be any electronic device capable of human-computer interaction that has functions such as voice/text reception, voice/text recognition, and command execution. For example, a user may perform human-computer interaction by using a voice assistant (e.g., Bixby of Samsung, Siri of Apple, and the like) installed in a mobile terminal device, but the present application is not limited thereto.

In an example embodiment of the present disclosure, the electronic device may include, for example, but not limited to, a portable communication device (e.g., a smartphone), a computer device, a portable multimedia device, a portable medical device, a camera, a wearable device, and the like. According to the embodiments of the present disclosure, the electronic apparatus is not limited to the above.

As shown in Fig. 1, the electronic device 100 may include a processing component 101, a communication bus 102, a network interface 103, an input-output interface 104, a memory 105, and a power component 106. The communication bus 102 enables connection and communication between these components. The input-output interface 104 may include a video display (such as a liquid crystal display), a microphone and speakers, and a user interaction interface (such as a keyboard, mouse, or touch input device); optionally, the input-output interface 104 may also include a standard wired interface and a wireless interface. The network interface 103 may optionally include a standard wired interface and a wireless interface (e.g., a wireless fidelity interface). The memory 105 may be a high-speed random access memory or a stable nonvolatile memory. The memory 105 may alternatively be a storage device separate from the processing component 101 described above.

Those skilled in the art will appreciate that the configuration shown in Fig. 1 does not constitute a limitation of the electronic device 100, which may include more or fewer components than shown, a combination of some components, or a different arrangement of components.

As shown in Fig. 1, the memory 105, which is a kind of storage medium, may include an operating system (such as a MAC operating system), a data storage module, a network communication module, a user interface module, a program for automatically selecting images, and a database.

In the electronic device 100 shown in fig. 1, the network interface 103 is mainly used for data communication with an external device/terminal; the input/output interface 104 is mainly used for data interaction with a user; the processing component 101 and the memory 105 in the electronic device 100 may be disposed in the electronic device 100, and the electronic device 100 executes the method for selecting an image provided by the embodiments of the present disclosure by the processing component 101 calling the program for selecting an image stored in the memory 105 and various APIs provided by the operating system.

The processing component 101 may include at least one processor, and the memory 105 has stored therein a set of computer-executable instructions that, when executed by the at least one processor, perform a method of selecting an image according to an embodiment of the present disclosure. Further, the processing component 101 may perform encoding operations, decoding operations, and the like. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

The input-output interface 104 may acquire images. As an example, the input-output interface 104 may obtain an image based on a user's selection of the image in an album of the electronic device. In this case, the acquired image is an image selected by the user in the photo album.

As another example, when the user communicates with another user, the input-output interface 104 may receive an image from the other user to acquire the image. For example, the acquired image is an image that the user receives from the other party.

As another example, when the user communicates with another user, the input-output interface 104 may receive a first image from the another user and receive a selection of a second image in the photo album by the user, and the first image and the second image may be taken as the acquired images. For example, the acquired image is both the image selected by the user and the image received from the other party.

The processing component 101 may determine at least one parameter associated with the acquired image. For example, the at least one parameter may include at least one of a photographing location and a photographing time of the acquired image. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

According to an embodiment of the present disclosure, prior to determining the parameters related to the acquired images, the processing component 101 may first determine whether the user has an intent to select an image in the album. That is, the processing component 101 may first determine whether the user has an intent to continue selecting images or a multiple selection intent.

For example, the processing component 101 may determine that the user has an intent to continue selecting images when it is detected that the user's dwell time in the album is greater than or equal to a predetermined time threshold. For example, upon detecting that the user has a slide operation in the album, the processing component 101 may determine that the user has an intention to continue selecting images. Alternatively, when it is detected that the user has an operation to continue selecting an image, the processing component 101 may determine that the user has an intention to continue selecting an image. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.
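The three cues above (dwell time, sliding operation, continued selection) can be combined with a simple logical OR. The following is a minimal sketch under stated assumptions, not the disclosed implementation: the `AlbumSession` type, its field names, the 3-second default threshold, and the reading of "continues selecting" as "two or more images selected" are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical value; the source only refers to "a predetermined time threshold".
DWELL_THRESHOLD_S = 3.0


@dataclass
class AlbumSession:
    """Signals observed while the user is in the album (illustrative names)."""
    dwell_seconds: float = 0.0  # how long the user has stayed in the album
    swiped: bool = False        # whether a sliding operation was detected
    selected_count: int = 0     # number of images the user has selected so far


def has_selection_intent(session: AlbumSession,
                         dwell_threshold_s: float = DWELL_THRESHOLD_S) -> bool:
    """Return True if any one of the three cues is present."""
    stayed_long_enough = session.dwell_seconds >= dwell_threshold_s
    # Assumed reading of "an operation to continue selecting an image".
    keeps_selecting = session.selected_count >= 2
    return stayed_long_enough or session.swiped or keeps_selecting
```

Any single cue is sufficient in this sketch, which mirrors the "or" wording of the description; a stricter policy could require several cues at once.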

In the event that it is determined that the user has an intent to continue selecting images in the album, the processing component 101 may determine at least one parameter associated with the acquired images.

In the case where the acquired image is one image, the processing component 101 may use the shooting time and the shooting place of the image as reference information for selecting the image.

In the case where the acquired images are two or more images selected by the user or received from the other party, the processing component 101 may take the image having the highest correlation among the acquired images as a reference. For example, the processing component 101 may calculate, for each of the acquired images, the correlation between that image and the other acquired images, and then determine at least one parameter of the acquired image having the greatest correlation with the other images. The similarity distance between the images can be used to determine the image with the highest correlation.

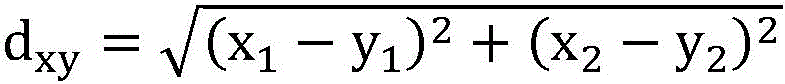

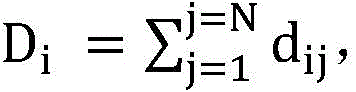

For example, the processing component 101 may use the address information and the time information of each image to form a 2-dimensional vector. Assuming that the vectors obtained for two images are $x = (x_1, x_2)$ and $y = (y_1, y_2)$, the similarity distance between the two images can be represented by a distance $d(x, y)$ between these vectors. For each image $i$, the sum $D_i$ of the similarity distances between that image and the other $N$ images is calculated, and the minimum $D_i$ is taken. The image corresponding to the minimum $D_i$ has the greatest correlation with the other images, and is therefore determined to be the image having the highest correlation. The processing component 101 may find the image with the largest correlation with the other images according to the calculation result, and use the time and address information of that image as reference information for automatically selecting images. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.
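Choosing the image whose summed similarity distance to all other images is smallest can be sketched as follows. This is an illustration under assumptions rather than the disclosed formula: the Euclidean metric, the encoding of location and time as two pre-scaled numbers, and the function names are hypothetical choices.

```python
import math
from typing import Sequence, Tuple

# (location feature, time feature); assumed to be scaled to comparable units.
Vector = Tuple[float, float]


def similarity_distance(x: Vector, y: Vector) -> float:
    """Euclidean distance between two 2-D vectors (an assumed choice of metric)."""
    return math.hypot(x[0] - y[0], x[1] - y[1])


def most_correlated_index(vectors: Sequence[Vector]) -> int:
    """Return the index i whose summed distance D_i to all other vectors is smallest."""
    if not vectors:
        raise ValueError("at least one image vector is required")
    sums = [
        sum(similarity_distance(v, w) for j, w in enumerate(vectors) if j != i)
        for i, v in enumerate(vectors)
    ]
    # On ties, the earliest image wins because min() keeps the first minimum.
    return min(range(len(vectors)), key=sums.__getitem__)
```

For instance, `most_correlated_index([(0.0, 0.0), (1.0, 0.0), (5.0, 0.0)])` returns `1`, because the middle vector has the smallest total distance to the other two (5, versus 6 and 9). In practice the two features would need a common scale, since a raw timestamp and a raw coordinate are not directly comparable.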

As another example, in the case where the acquired images include both the image selected by the user (i.e., the first image) and the image received from the other party (i.e., the second image), the processing component 101 may first determine whether there is a commonality between the first image and the second image. For example, based on the photographing time information and/or photographing place information of the first image and the second image, it may be determined whether the photographing times and/or photographing places fall within a preset range, such as whether the photographing times of the two images are no more than a predetermined length of time apart, or whether their photographing places are no more than a predetermined distance apart. If the preset range (i.e., the predetermined length of time and/or the predetermined distance) is satisfied, the preset range may be used as reference information for automatically selecting images in the photo album. Alternatively, the processing component 101 may calculate the correlation between the first image and the second image, taking the image with the highest correlation as the reference image. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

For example, the processing component 101 may calculate whether there is a commonality between the first image and the second image in terms of address information and time information, and when there is a commonality, may determine the preset range as a criterion for quickly selecting images. Alternatively, the processing component 101 may derive the parameter for determining the criterion from the preset range: taking the maximum value and the minimum value of the preset range as centers, a threshold range before and after the maximum value and the minimum value may be used as the criterion for quickly selecting images.
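The commonality check and the derived range can be illustrated for the time dimension alone. The sketch below is one possible reading, not the disclosed method: the `Shot` type, the 2-hour gap, the 30-minute margin, and the interpretation of "a threshold range before and after the maximum value and the minimum value" as a window from (minimum minus margin) to (maximum plus margin) are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional, Tuple


@dataclass(frozen=True)
class Shot:
    """Minimal image record holding only the photographing time (illustrative)."""
    taken_at: datetime


def common_time_criterion(
    first: Shot,
    second: Shot,
    max_gap: timedelta = timedelta(hours=2),    # hypothetical "predetermined length of time"
    margin: timedelta = timedelta(minutes=30),  # hypothetical threshold around min/max
) -> Optional[Tuple[datetime, datetime]]:
    """Return a (start, end) time window if the two shots are close in time, else None."""
    earliest = min(first.taken_at, second.taken_at)
    latest = max(first.taken_at, second.taken_at)
    if latest - earliest > max_gap:
        return None  # no commonality: the caller may skip quick selection
    # Widen the span by the margin before the minimum and after the maximum.
    return earliest - margin, latest + margin
```

A photographing-place check could follow the same pattern, with a distance between coordinates in place of the time gap.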

If there is no commonality, the processing component 101 may not perform quick image selection. Alternatively, a priority between the first image and the second image may be set, for example, the priority of the first image is higher than the priority of the second image, and if there is no commonality, the processing component 101 may select the first image selected by the user as the reference image for quickly selecting the image.

The processing component 101 may select the associated at least one image from the user's photo album in accordance with the determined at least one parameter. As an example, the processing component 101 may determine a criterion for selecting an image according to the determined at least one parameter. For example, the processing component 101 may use at least one of a time range a predetermined time before and after the photographing time of the reference image and a distance range a predetermined distance from the photographing place as a criterion for selecting an image in the album. Next, the processing component 101 can automatically select at least one image from the album that matches the criteria and mark the at least one image in the album.
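Matching album images against a time range and/or a distance range around the reference image might look like the sketch below. It is illustrative only: the `Photo` type, the use of latitude/longitude with the haversine formula, and the parameter names `window` and `radius_km` are assumptions not taken from the source.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class Photo:
    name: str
    taken_at: datetime
    lat: float  # degrees
    lon: float  # degrees


def haversine_km(a: Photo, b: Photo) -> float:
    """Great-circle distance in kilometres between two photos' coordinates."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(a.lat), math.radians(b.lat)
    dlon = math.radians(b.lon - a.lon)
    h = math.sin((p2 - p1) / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlon / 2) ** 2
    return 2 * r * math.asin(math.sqrt(h))


def select_matching(album: Sequence[Photo], reference: Photo,
                    window: Optional[timedelta] = None,
                    radius_km: Optional[float] = None) -> List[Photo]:
    """Return photos that satisfy every criterion that was supplied."""
    if window is None and radius_km is None:
        raise ValueError("supply a time window, a radius, or both")
    matches = []
    for p in album:
        in_time = window is None or abs(p.taken_at - reference.taken_at) <= window
        in_range = radius_km is None or haversine_km(p, reference) <= radius_km
        if in_time and in_range:
            matches.append(p)
    return matches
```

Because the description allows "at least one of" the two ranges, either argument may be omitted; when both are given, this sketch requires a photo to satisfy both. The returned list corresponds to the images that would then be marked in the album.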

The electronic device 100 may receive or output images, video, and/or audio via the input-output interface 104. For example, a user may share images, video, and/or audio to an external user via the input-output interface 104.

By way of example, the electronic device 100 may be a personal computer, tablet device, personal digital assistant, smartphone, or other device capable of executing the set of instructions described above. Here, the electronic device 100 need not be a single electronic device, but can be any collection of devices or circuits that can execute the above instructions (or sets of instructions) either individually or in combination. The electronic device 100 may also be part of an integrated control system or system manager, or may be configured as a portable electronic device that interfaces locally or remotely (e.g., via wireless transmission).

In the electronic device 100, the processing component 101 may include a Central Processing Unit (CPU), a Graphics Processing Unit (GPU), a programmable logic device, a special-purpose processor system, a microcontroller, or a microprocessor. By way of example and not limitation, processing component 101 may also include an analog processor, a digital processor, a microprocessor, a multi-core processor, a processor array, a network processor, and the like.

The processing component 101 may execute instructions or code stored in a memory, wherein the memory 105 may also store data. Instructions and data may also be sent and received over a network via network interface 103, where network interface 103 may employ any known transmission protocol.

Taking a certain social software as an example, and referring to Figs. 8 and 9, when a user clicks the album button in a dialog box of the social software (as shown in Fig. 8), the software may automatically jump to an album interface (as shown in Fig. 9) in which the corresponding photos (such as the marked photos in Fig. 9) have already been checked according to the photos sent by the other party. However, this example is merely illustrative, and the present disclosure is not limited thereto.

The electronic equipment can quickly and accurately select the image matched with the corresponding time or place for the user, so that the operation time of the user is saved, and the user experience is improved.

Fig. 2 illustrates a flowchart of a method for selecting an image according to an exemplary embodiment of the present disclosure. The method for selecting images shown in Fig. 2 may be performed in any electronic device having a photo album.

Referring to fig. 2, in step S201, an image is acquired. As an example, an image may be obtained based on a user selection of the image in an album of the electronic device. For example, the acquired image is an image selected by the user in the photo album.

As another example, when a user communicates with another user, an image may be received from the other user to acquire the image. For example, the acquired image is an image that the user receives from the other party.

As another example, when the user communicates with another user, a first image may be received from the other user and a selection of a second image in the photo album by the user may be received, and the first image and the second image may be taken as the acquired images. For example, the acquired image is both the image selected by the user and the image received from the other party.

At step S202, at least one parameter related to the acquired image is determined. For example, the at least one parameter may include at least one of a photographing location and a photographing time of the acquired image. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

According to an embodiment of the present disclosure, before determining the parameter related to the acquired image, it may be first determined whether the user has an intention to select an image in the album. That is, it may be determined first whether the user has an intention to continue selecting images or a multi-selection intention.

For example, when it is detected that the user stays in the album for a time greater than or equal to a predetermined time threshold, it may be determined that the user has an intention to continue selecting images. For example, when it is detected that the user has a slide operation in the album, it may be determined that the user has an intention to continue selecting images. Alternatively, when it is detected that the user has an operation to continue selecting an image, it may be determined that the user has an intention to continue selecting an image. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

In the event that it is determined that the user has an intent to continue selecting images in the album, at least one parameter associated with the acquired images is determined.

In the case where the acquired image is one image, the shooting time and the shooting place of the image may be used as reference information for selecting the image.

In the case where the acquired images are two or more images selected by the user or received from the other party, the image having the highest correlation among the acquired images may be taken as a reference. For example, the correlation between each of the acquired images and the other acquired images may be calculated, and then at least one parameter of the acquired image having the greatest correlation with the other images is determined. For example, a 2-dimensional vector may be formed from the address information and the time information of each acquired image, and a correlation function may then be calculated between the vectors of the acquired images. For example, the image having the largest similarity may be determined according to the similarity distance between the images. The image with the highest correlation with the other images is then found according to the calculation result, and the time and address information of that image is used as reference information for automatically selecting images. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

As another example, in the case where the acquired images include both an image selected by the user (i.e., the first image) and an image received from the other party (i.e., the second image), it is first determined whether there is a commonality between the first image and the second image. For example, it may be determined from the photographing time information and/or photographing place information of the first image and the second image whether the photographing times and/or photographing places are within a preset range, such as whether the photographing time of the first image and that of the second image are within a predetermined time period of each other, or whether the photographing place of the first image and that of the second image are within a predetermined distance of each other. If the preset range (i.e., the predetermined length of time and/or the predetermined distance) is satisfied, the preset range may be used as reference information for automatically selecting images in the photo album. Alternatively, the correlation between the first image and the second image may be calculated, and the image having the highest correlation is taken as the reference image. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

For example, it is calculated whether the first image and the second image have a commonality in terms of address information and time information, and when they do, the preset range may be determined as the criterion for quickly selecting images. Alternatively, the parameter for determining the criterion may be derived from the preset range: taking the maximum value and the minimum value of the preset range as centers, a threshold range before and after those values may be used as the criterion for quickly selecting images.

If there is no commonality, quick selection of images may not be performed. Alternatively, a priority between the first image and the second image may be set, for example, the priority of the first image is higher than that of the second image, and if there is no commonality, the first image selected by the user may be used as a reference image for quickly selecting an image.
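The commonality check and its fallback can be sketched as follows. This is an illustration under stated assumptions: places are given as planar coordinates in kilometres, commonality requires closeness in both time and place (the disclosure allows "and/or"), and the returned criterion is a time window padded by a hypothetical `margin`. The names `Photo`, `have_commonality`, and `time_criterion` are not from the disclosure.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional, Tuple


@dataclass(frozen=True)
class Photo:
    taken_at: datetime
    place_km: Tuple[float, float]  # assumed planar (x, y) position in kilometres


def have_commonality(a: Photo, b: Photo, max_gap: timedelta, max_km: float) -> bool:
    """True when the two photos are close in both time and place."""
    close_in_time = abs(a.taken_at - b.taken_at) <= max_gap
    close_in_place = math.dist(a.place_km, b.place_km) <= max_km
    return close_in_time and close_in_place


def time_criterion(
    first: Photo,
    second: Photo,
    max_gap: timedelta,
    max_km: float,
    margin: timedelta,
    first_has_priority: bool = True,
) -> Optional[Tuple[datetime, datetime]]:
    """Time window used for quick selection, or None if it is skipped."""
    if have_commonality(first, second, max_gap, max_km):
        # Span both photographing times, padded by the margin on each side.
        earliest = min(first.taken_at, second.taken_at)
        latest = max(first.taken_at, second.taken_at)
        return earliest - margin, latest + margin
    if first_has_priority:
        # No commonality: fall back to the user-selected image as reference.
        return first.taken_at - margin, first.taken_at + margin
    return None
```

Returning `None` corresponds to the case where quick selection is not performed and the user continues to choose images manually.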

In step S203, at least one associated image is selected from the user's photo album according to the determined at least one parameter.

As an example, the criterion for selecting an image may be determined according to the determined at least one parameter. For example, at least one of a time range extending a predetermined time before and after the photographing time of the reference image and a distance range within a predetermined distance of its photographing place may be used as the criterion for selecting images in the album.

Next, at least one image matching the criteria is automatically selected from the photo album and the at least one image is tagged in the photo album.
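The selection and marking step can be illustrated with the sketch below. It assumes each album image carries a photographing time and a latitude/longitude pair, applies both the time range and the distance range (the disclosure permits either one alone), and measures distance with the haversine formula on a spherical Earth of radius 6371 km. `AlbumImage`, `haversine_km`, and `mark_matching` are hypothetical names.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Tuple


@dataclass
class AlbumImage:
    name: str
    taken_at: datetime
    lat_lon: Tuple[float, float]  # degrees
    marked: bool = False


def haversine_km(a: Tuple[float, float], b: Tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def mark_matching(
    album: List[AlbumImage],
    ref_time: datetime,
    ref_place: Tuple[float, float],
    time_margin: timedelta,
    radius_km: float,
) -> List[AlbumImage]:
    """Mark and return the album images that satisfy both ranges."""
    selected = []
    for image in album:
        in_time = abs(image.taken_at - ref_time) <= time_margin
        in_range = haversine_km(image.lat_lon, ref_place) <= radius_km
        if in_time and in_range:
            image.marked = True
            selected.append(image)
    return selected
```

Marking is kept as a flag on the image so that the user interface can still let the user deselect any automatically chosen image.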

According to the embodiment of the disclosure, the images to be shared can be selected for the user automatically, quickly, and accurately, and the user can still freely change the automatically selected images as needed, so that the user experience is improved.

Fig. 3 illustrates a flowchart of a method for selecting an image according to another exemplary embodiment of the present disclosure. The method shown in fig. 3 can be applied to a scenario in which local images are quickly selected based on images transmitted by the other party.

Referring to fig. 3, in step S301, when a user opens an album from a social application, a quick image selection function may be started. Here, the quick image selection function may refer to the function, described above, of automatically selecting at least one associated image from the local album based on the acquired images. For example, when a user is conversing with another user using chat software and opens a local photo album through the chat software, this may indicate that the user intends to share images with the other party, and at this time the electronic apparatus may turn on the quick image selection function.

In step S302, it is determined whether an image transmitted by the other party within a specified time exists in the dialog box of the social software. For example, the electronic device may determine whether the other party transmitted an image within a predetermined time period in the current dialog box of the social software. If it is determined that there is an image from the other party, the method may proceed to step S303; otherwise, the image may be selected by the user. Alternatively, if it is determined that the other party has not transmitted an image, the image may be automatically selected with reference to fig. 4, which will be described in detail below.
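The check in step S302 can be sketched as a filter over the messages of the current dialog. The `ChatMessage` structure and the function name `recent_peer_images` are assumptions for illustration; the disclosure does not describe how the social software exposes its message history.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class ChatMessage:
    sender: str
    sent_at: datetime
    image_path: Optional[str] = None  # None for text-only messages


def recent_peer_images(
    messages: List[ChatMessage],
    me: str,
    now: datetime,
    window: timedelta,
) -> List[ChatMessage]:
    """Image messages sent by the other party within `window` before `now`."""
    earliest = now - window
    return [
        m for m in messages
        if m.sender != me
        and m.image_path is not None
        and earliest <= m.sent_at <= now
    ]
```

An empty result corresponds to the branch in which the user selects images manually or the method of fig. 4 is used instead.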

In step S303, a criterion for automatically selecting images from the album is determined according to the image transmitted by the other party. A specific interval range around the information (such as the photographing time and the photographing place) of the image transmitted by the other party may be used as the "selection criterion". For example, assuming that the other party transmitted one image within the predetermined period of time, that image can be used as the reference image for quickly selecting images from the photo album. When the photographing time of the image is determined to be "2020-05-08 10:54:09" and the photographing place to be "No. 185 Science Avenue, Huangpu District, Guangzhou, Guangdong Province, China", the "selection criteria" can be set as "2020-05-08 10:00:00 to 2020-05-08 12:00:00" and "within 3 km of No. 185 Science Avenue, Huangpu District, Guangzhou, Guangdong Province, China". However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

When it is determined that the other party transmitted a plurality of images within the predetermined time, the image having the highest correlation among the plurality of images may be used as the reference image. Specifically, a 2-dimensional vector may be formed for each acquired image from its address information and time information, the correlation between the vectors is then calculated using a correlation function, the image having the greatest correlation with the other images is found from the calculation result, and the time and address information of that image is used as reference information.

In step S304, an image meeting the standard is automatically selected from the photo album according to the standard, and the local image meeting the standard is marked, thereby implementing a quick image selection function. For example, the electronic device may search for images with address and time information locally, matching according to criteria. If the criteria are met, the electronic device can prompt the user or automatically check the corresponding image.

According to the embodiment of the disclosure, when the user has received a photo sent by the other party and then performs a photo selection operation, the corresponding photos are automatically selected for the user according to the information (shooting time/place) of the received photo.

Fig. 4 illustrates a flowchart of a method for selecting an image according to another exemplary embodiment of the present disclosure. The method shown in fig. 4 can be applied to a scenario in which images are selected based on an image chosen by the user and the user has a multi-selection intention.

Referring to fig. 4, in step S401, when the user performs an operation of opening the album, the quick image selection function may be started. For example, when a user opens a photo album in an electronic device, this may indicate that the user intends to select a plurality of images, and the electronic device may turn on the quick image selection function. Alternatively, when the user is conversing with another user using chat software and opens the local photo album through the chat software, this may indicate that the user intends to share images with the other party, and at this time the electronic device may turn on the quick image selection function.

In step S402, when the user selects one image from the photo album, time information and position information of the selected image may be extracted.

In step S403, it is determined whether the user has a multiple-choice intention. If the user has the multiple-selection intention, the step S404 is performed, otherwise, the subsequent operation may not be performed. Alternatively, the order of step S402 and step S403 may be interchanged.

The following method may be used to determine whether the user has a multi-selection intention. For example, when it is judged that the stay time of the user in the photo album is greater than or equal to the predetermined time threshold, it may be determined that the user has a multi-selection intention. For example, when it is judged that the user has a slide-up and down operation in the photo album, it can be determined that the user has a multi-selection intention. Alternatively, when it is judged that the user has an operation of continuing to select an image, it may be determined that the user has a multi-selection intention.

In step S404, criteria for automatically selecting an image may be determined according to the image selected by the user. For example, an image selected by the user is taken as a reference image, and a preset section range of the location information and the time information of the reference image is taken as a criterion for automatically selecting the image.

In step S405, local images in the photo album that meet the criteria are marked and selected to implement the quick select image function. Optionally, the user may be prompted to check for local images that meet the criteria, for example, in a highlighted form to prompt the user to check for associated images. However, the above examples are merely exemplary, and the present disclosure is not limited thereto.

According to the embodiment of the disclosure, when a user shares pictures, after the user selects one picture, further pictures can be recommended to the user according to the address and time information of that picture, which provides a convenient mode of operation.

Fig. 5 illustrates a flowchart of a method for selecting an image according to another exemplary embodiment of the present disclosure. The method shown in fig. 5 can be applied to a scenario in which local images are quickly selected based on both an image transmitted by the other party and a first image selected by the user.

In step S501, when the user opens the social application and performs an operation of opening the album, the quick image selection function is started. For example, when a user is conversing with another user using chat software and opens a local photo album through the chat software, this may indicate that the user intends to share images with the other party, and at this time the electronic apparatus may turn on the quick image selection function.

In step S502, one image may be selected in the photo album by the user.

In step S503, it is determined whether an image transmitted by the other party has been received within the specified time. For example, the electronic device may determine whether the other party has sent an image within a certain historical time interval in the current dialog box of the chat software. Here, the present disclosure does not limit the specified time.

If it is determined that the image transmitted from the other party is received within the designated time, step S504 is performed, otherwise the user may continue to select the image from the photo album, or the method of fig. 4 may be employed to automatically select the image.

In step S504, a criterion for selecting an image may be determined according to the image selected by the user and the image transmitted by the counterpart.

As an example, the correlation between the image selected by the user and the image transmitted by the other party may be calculated first, and the image having the greatest correlation with the other images among these images may be used as the reference image. Then, the preset section range of the photographing time and the photographing place of the reference image is used as a criterion for automatically selecting an image.

As another example, it may be determined whether there is a commonality between the image selected by the user and the image transmitted by the other party. Specifically, it may be determined whether a photographing location and/or photographing time of an image selected by a user and a photographing location and/or photographing time of an image transmitted by an opposite party are within a preset range, for example, whether a photographing time of a first image and a photographing time of a second image are separated by a predetermined time period, or whether a photographing location of a first image and a photographing location of a second image are separated by a predetermined distance. When there is commonality, a preset range may be determined as a criterion for automatically selecting an image. When there is no commonality, it is possible to use an image selected by the user himself as a reference image and then determine a corresponding criterion using reference information of the image. Alternatively, when there is no commonality, the image may be continuously selected by the user himself.

In step S505, the local images meeting the criteria are selected and marked, thereby implementing a quick image selection function.

Fig. 6 illustrates a block diagram of an apparatus for selecting an image according to an exemplary embodiment of the present disclosure. Referring to fig. 6, an apparatus 600 for selecting an image may include a data acquisition module 601, a determination module 602, and a selection module 603. Each module in the apparatus 600 may be implemented by one or more modules, and names of the corresponding modules may vary according to types of the modules. In various embodiments, some modules in apparatus 600 may be omitted, or additional modules may also be included. Furthermore, modules/elements according to various embodiments of the present disclosure may be combined to form a single entity, and the single entity may equivalently perform the functions that the respective modules/elements performed prior to the combination.

The acquisition module 601 may be configured to acquire an image.

As an example, the acquisition module 601 may be configured to acquire an image in response to a user selecting an image in an album of the electronic device. When the user communicates with another user, the acquisition module 601 may receive an image from the other user as the acquired image. Alternatively, when the user communicates with another user, the acquisition module 601 may receive a first image from the other user and obtain a second image selected by the user in the album, taking the first image and the second image as the acquired images.

The determination module 602 may be configured to determine at least one parameter related to the acquired image. The at least one parameter may include at least one of a photographing location and a photographing time of the image.

Alternatively, the determination module 602 may be configured to determine whether the user has an intention to select an image in the album. In the event that it is determined that the user has an intent to select an image in the album, the determination module 602 may determine at least one parameter associated with the captured image.

Alternatively, the determination module 602 may determine whether the user has an intention to select an image in the album according to the following condition. The determination module 602 may determine that the user has an intention to select an image in the album when it is detected that the stay time of the user in the album is greater than or equal to a predetermined time threshold, or the determination module 602 may determine that the user has an intention to select an image in the album when it is detected that the user has a sliding operation in the album, or the determination module 602 may determine that the user has an intention to select an image in the album when it is detected that the user has an operation to continue selecting images.

When determining the parameters of the acquired image, in the case where the acquired image is a single image, the determining module 602 may use the shooting time and the shooting location of the image as reference information for selecting images.

In the case where the acquired images are a plurality of images, the determining module 602 may be configured to calculate correlations between each of the acquired images and the other images, respectively, and then determine at least one parameter of an image having the greatest correlation with the other images among the acquired images as the reference information.

Alternatively, the determining module 602 may be configured to determine whether the photographing time and/or the photographing place of the first and second images are within a preset range, and in case that it is determined that the photographing time and/or the photographing place of the first and second images are within the preset range, determine the preset range as a criterion for selecting the at least one image.

The selection module 603 may be configured to select at least one image associated with the acquired image from the user's photo album in accordance with the determined at least one parameter.

Alternatively, the selection module 603 may be configured to determine a criterion for selecting at least one image in dependence on the determined at least one parameter, select at least one image from the album that matches the criterion, and mark the selected at least one image in the album. Alternatively, the criterion may include at least one of a time range from a predetermined time before and after the photographing time and a distance range from the photographing place by a predetermined distance.
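The division of the apparatus into three modules can be pictured as three pluggable callables wired together in sequence. This is a structural sketch only: the class name `ImageSelectionApparatus`, its field names, and the use of plain callables are assumptions for illustration, and the disclosure does not prescribe any particular software structure.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Sequence


@dataclass
class ImageSelectionApparatus:
    """Wires acquisition, determination, and selection together (hypothetical)."""
    acquire: Callable[[], List[Any]]                     # role of module 601
    determine: Callable[[Sequence[Any]], Any]            # role of module 602
    select: Callable[[Any, Sequence[Any]], List[Any]]    # role of module 603

    def run(self, album: Sequence[Any]) -> List[Any]:
        acquired = self.acquire()            # obtain the reference image(s)
        params = self.determine(acquired)    # derive the parameter(s)
        return self.select(params, album)    # pick matching album images
```

Because each stage is an independent callable, modules can be replaced, merged, or omitted without changing the others, in line with the statement that modules may be combined or left out in various embodiments.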

Fig. 7 shows a schematic diagram of a computing device according to an exemplary embodiment of the present disclosure.

Referring to fig. 7, a computing apparatus 700 according to an exemplary embodiment of the present disclosure includes a memory 701 and a processor 702, the memory 701 having stored thereon a computer program that, when executed by the processor 702, implements a method for selecting an image according to an exemplary embodiment of the present disclosure.

As an example, the computer program, when executed by the processor 702, may implement the steps of: acquiring an image; determining at least one parameter associated with the image; at least one image associated with the image is selected from a user's photo album in accordance with the at least one parameter.

The computing devices in the embodiments of the present disclosure may include, but are not limited to, devices such as mobile phones, notebook computers, PDAs (personal digital assistants), PADs (tablet computers), desktop computers, and the like. The computing device illustrated in fig. 7 is only one example and should not impose any limitations on the functionality or scope of use of embodiments of the disclosure.

As used herein, the term "module" may include units implemented in hardware, software, or firmware, and may be used interchangeably with other terms (e.g., "logic," "logic block," "portion," or "circuitry"). A module may be a single integrated component adapted to perform one or more functions or a minimal unit or portion of the single integrated component. For example, according to an embodiment, the modules may be implemented in the form of Application Specific Integrated Circuits (ASICs).

The various embodiments set forth herein may be implemented as software including one or more instructions stored in a storage medium readable by a machine (e.g., a mobile device). For example, a processor of the machine may invoke and execute at least one of the one or more instructions stored in the storage medium, with or without the use of one or more other components under the control of the processor. This enables the machine to be operable to perform at least one function in accordance with the invoked at least one instruction. The one or more instructions may include code generated by a compiler or code capable of being executed by an interpreter. The machine-readable storage medium may be provided in the form of a non-transitory storage medium. Here, the term "non-transitory" simply means that the storage medium is a tangible device and does not include a signal (e.g., an electromagnetic wave); the term does not distinguish between data being semi-permanently stored in the storage medium and data being temporarily stored in the storage medium.

According to embodiments, methods according to various embodiments of the present disclosure may be included and provided in a computer program product. The computer program product may be used as a product for conducting a transaction between a seller and a buyer. The computer program product may be distributed in the form of a machine-readable storage medium, such as a compact disc read only memory (CD-ROM), or may be distributed (e.g., downloaded or uploaded) online via an application store (e.g., a Play store), or may be distributed (e.g., downloaded or uploaded) directly between two user devices (e.g., smartphones). At least part of the computer program product may be temporarily generated if it is published online, or at least part of the computer program product may be at least temporarily stored in a machine readable storage medium, such as a memory of a manufacturer's server, a server of an application store, or a forwarding server.

According to various embodiments, each of the above components (e.g., modules or programs) may comprise a single entity or multiple entities (e.g., in fig. 7, memory 701 may comprise one or more memories and processor 702 may comprise one or more processors). According to various embodiments, one or more of the above-described components may be omitted, or one or more other components may be added. Alternatively or additionally, multiple components (e.g., modules or programs) may be integrated into a single component. In such a case, according to various embodiments, the integrated component may still perform one or more functions of each of the plurality of components in the same or similar manner as the corresponding one of the plurality of components performed the one or more functions prior to integration. Operations performed by a module, program, or another component may be performed sequentially, in parallel, repeatedly, or in a heuristic manner, or one or more of the operations may be performed in a different order or omitted, or one or more other operations may be added, in accordance with various embodiments.

At least one of the plurality of modules may be implemented by an AI model. The functions associated with the AI may be performed by the non-volatile memory, the volatile memory, and the processor.

The processor may include one or more processors. Here, the one or more processors may be general-purpose processors such as a Central Processing Unit (CPU) or an Application Processor (AP), graphics-only processors such as a Graphics Processing Unit (GPU) or a Vision Processing Unit (VPU), and/or AI-specific processors such as a Neural Processing Unit (NPU).

The one or more processors control the processing of the input data according to predefined operating rules or Artificial Intelligence (AI) models stored in the non-volatile memory and the volatile memory. Predefined operating rules or artificial intelligence models may be provided through training or learning. Here, the provision by learning means that a predefined operation rule or AI model having a desired characteristic is formed by applying a learning algorithm to a plurality of learning data. The learning may be performed in the device itself performing the AI according to the embodiment, and/or may be implemented by a separate server/device/system.

As an example, the artificial intelligence model may be composed of multiple neural network layers. Each layer has a plurality of weight values, and a layer operation is performed using the calculation result of the previous layer and the plurality of weight values. Examples of neural networks include, but are not limited to, Convolutional Neural Networks (CNNs), Deep Neural Networks (DNNs), Recurrent Neural Networks (RNNs), Restricted Boltzmann Machines (RBMs), Deep Belief Networks (DBNs), Bidirectional Recurrent Deep Neural Networks (BRDNNs), Generative Adversarial Networks (GANs), and deep Q networks.

A learning algorithm is a method of training a predetermined target device (e.g., a robot) using a plurality of learning data to cause, allow, or control the target device to make a determination or prediction. Examples of learning algorithms include, but are not limited to, supervised learning, unsupervised learning, semi-supervised learning, or reinforcement learning.

The method and the device can quickly and accurately select the image matched with the corresponding time or place for the user, save the operation time of the user and further improve the user experience.

While the present disclosure has been particularly shown and described with reference to exemplary embodiments thereof, it will be understood by those of ordinary skill in the art that various changes in form and details may be made therein without departing from the spirit and scope of the present disclosure as defined by the following claims.

Claims (20)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202110533008.4A CN113286040B (en) | 2021-05-17 | 2021-05-17 | Method and device for selecting images |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN113286040A (en) | 2021-08-20 |
| CN113286040B (en) | 2024-01-23 |

Family

ID=77279367

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CN202110533008.4A Active CN113286040B (en) | Method and device for selecting images | 2021-05-17 | 2021-05-17 |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN113286040B (en) |

Cited By (1)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN116010637A (en) * | 2022-12-09 | 2023-04-25 | 维沃移动通信有限公司 | Image recommendation method, device, electronic device and storage medium |

Citations (14)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JP2006178844A (en) * | 2004-12-24 | 2006-07-06 | Noritsu Koki Co Ltd | Electronic album creation apparatus and electronic album creation system |
| JP2006270940A (en) * | 2005-02-28 | 2006-10-05 | Fuji Photo Film Co Ltd | Electronic album editing system, electronic album editing method, and electronic album editing program |
| CN202923167U (en) * | 2012-12-10 | 2013-05-08 | 王锡军 | Photo album |
| CN105243060A (en) * | 2014-05-30 | 2016-01-13 | 小米科技有限责任公司 | Picture retrieval method and apparatus |
| CN105814602A (en) * | 2013-12-05 | 2016-07-27 | 脸谱公司 | Indicating User Availability for Communication |
| CN106776774A (en) * | 2016-11-11 | 2017-05-31 | 努比亚技术有限公司 | Mobile terminal chooses picture device and method |
| CN107391588A (en) * | 2017-06-26 | 2017-11-24 | 珠海格力电器股份有限公司 | Picture sharing method, picture sharing device and mobile terminal |
| CN109241314A (en) * | 2018-08-27 | 2019-01-18 | 维沃移动通信有限公司 | Method and device for selecting similar images |
| CN110147461A (en) * | 2019-04-30 | 2019-08-20 | 维沃移动通信有限公司 | Image display method, device, terminal device, and computer-readable storage medium |
| CN110543579A (en) * | 2019-07-26 | 2019-12-06 | 华为技术有限公司 | Image display method and electronic equipment |
| CN110889002A (en) * | 2019-11-26 | 2020-03-17 | 维沃移动通信有限公司 | Image display method and electronic device |
| CN111371999A (en) * | 2020-03-17 | 2020-07-03 | Oppo广东移动通信有限公司 | Image management method, device, terminal and storage medium |
| CN111368110A (en) * | 2020-02-18 | 2020-07-03 | 深圳传音控股股份有限公司 | Gallery searching method, terminal and computer storage medium |
| CN111475093A (en) * | 2019-08-02 | 2020-07-31 | 广州三星通信技术研究有限公司 | Word selection method and electronic equipment |

Also Published As

| Publication number | Publication date |
|---|---|
| CN113286040B (en) | 2024-01-23 |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |