CN113205495A - Image quality evaluation and model training method, device, equipment and storage medium - Google Patents

- Publication number

- CN113205495A, CN202110471056.5A

- Authority

- CN

- China

- Prior art keywords

- feature

- image

- score

- fusion

- output

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

- G06T7/0002: Inspection of images, e.g. flaw detection

- G06F18/241: Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches

- G06F18/2415: Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches based on parametric or probabilistic models, e.g. based on likelihood ratio or false acceptance rate versus a false rejection rate

- G06N3/045: Combinations of networks

- G06N3/047: Probabilistic or stochastic networks

- G06N3/08: Learning methods

- G06V10/44: Local feature extraction by analysis of parts of the pattern, e.g. by detecting edges, contours, loops, corners, strokes or intersections; Connectivity analysis, e.g. of connected components

- G06T2207/10004: Still image; Photographic image

- G06T2207/20016: Hierarchical, coarse-to-fine, multiscale or multiresolution image processing; Pyramid transform

- G06T2207/20076: Probabilistic image processing

- G06T2207/20081: Training; Learning

- G06T2207/20084: Artificial neural networks [ANN]

- G06T2207/30168: Image quality inspection

- Y02P90/30: Computing systems specially adapted for manufacturing

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- Theoretical Computer Science (AREA)

- Data Mining & Analysis (AREA)

- General Physics & Mathematics (AREA)

- General Engineering & Computer Science (AREA)

- Life Sciences & Earth Sciences (AREA)

- Artificial Intelligence (AREA)

- Evolutionary Computation (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Health & Medical Sciences (AREA)

- Biophysics (AREA)

- Biomedical Technology (AREA)

- General Health & Medical Sciences (AREA)

- Molecular Biology (AREA)

- Computing Systems (AREA)

- Computational Linguistics (AREA)

- Mathematical Physics (AREA)

- Software Systems (AREA)

- Bioinformatics & Computational Biology (AREA)

- Evolutionary Biology (AREA)

- Probability & Statistics with Applications (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Quality & Reliability (AREA)

- Multimedia (AREA)

- Image Analysis (AREA)

Abstract

The invention discloses an image quality evaluation and model training method, device, equipment and storage medium, and relates to the technical field of artificial intelligence, in particular to computer vision and deep learning. The image quality evaluation method comprises: extracting image features of each image in an image pair by using a feature extraction network in an image quality evaluation model, wherein the image features comprise features to be fused and output features, the image pair comprises a reference image and an image to be evaluated, and the features to be fused are subjected to spatial alignment processing to obtain the fusion feature of each image; and determining, by using a score determination network in the image quality evaluation model, the quality score of the image to be evaluated based on the fusion features and the output features. The present disclosure can improve the image quality evaluation effect.

Description

Technical Field

The present disclosure relates to the field of artificial intelligence technologies, in particular to computer vision and deep learning, and more specifically to a method, an apparatus, a device, and a storage medium for image quality evaluation and model training.

Background

Image Quality Assessment (IQA) processes an image with an image quality assessment model to obtain a quality score. Specifically, the model may be used to extract image features of the image, fuse the image features to obtain fusion features, and perform image quality evaluation based on the fusion features.

In the related art, image features are fused by direct vector concatenation.

Disclosure of Invention

The disclosure provides an image quality evaluation and model training method, device, equipment and storage medium.

According to an aspect of the present disclosure, there is provided an image quality evaluation method including: extracting image features of each image in an image pair by using a feature extraction network in an image quality evaluation model, wherein the image features comprise features to be fused and output features, the image pair comprises a reference image and an image to be evaluated, and the features to be fused are subjected to spatial alignment processing to obtain the fusion feature of each image; and determining, by using a score determination network in the image quality evaluation model, the quality score of the image to be evaluated based on the fusion features and the output features.

According to another aspect of the present disclosure, there is provided a training method of an image quality evaluation model, the image quality evaluation model including: a feature extraction network and a score determination network, the method comprising: extracting image features of each image in a sample image pair by using the feature extraction network, wherein the image features comprise to-be-fused features and output features, the sample image pair comprises a reference image and a distorted image, and the to-be-fused features are subjected to spatial alignment processing to obtain fused features of each image; determining a prediction score for the distorted image based on the fused feature and the output feature using the score determination network; determining a loss function based on the prediction score, and training the feature extraction network and the score determination network based on the loss function.

According to another aspect of the present disclosure, there is provided an image quality evaluation apparatus including: an extraction module, configured to extract image features of each image in an image pair by using a feature extraction network in an image quality evaluation model, where the image features include features to be fused and output features, the image pair includes a reference image and an image to be evaluated, and the features to be fused are subjected to spatial alignment processing to obtain the fusion feature of each image; and a determination module, configured to determine, by using a score determination network in the image quality evaluation model, the quality score of the image to be evaluated based on the fusion features and the output features.

According to another aspect of the present disclosure, there is provided a training apparatus of an image quality evaluation model, the image quality evaluation model including: a feature extraction network and a score determination network, the apparatus comprising: an extraction module, configured to extract image features of each image in a sample image pair by using the feature extraction network, where the image features include features to be fused and output features, the sample image pair includes a reference image and a distorted image, and the features to be fused are subjected to spatial alignment processing to obtain the fusion feature of each image; a determination module, configured to determine a prediction score for the distorted image based on the fusion features and the output features by using the score determination network; and a training module, configured to determine a loss function based on the prediction score and to train the feature extraction network and the score determination network based on the loss function.

According to another aspect of the present disclosure, there is provided an electronic device including: at least one processor; and a memory communicatively coupled to the at least one processor; wherein the memory stores instructions executable by the at least one processor to enable the at least one processor to perform the method of any one of the above aspects.

According to another aspect of the present disclosure, there is provided a non-transitory computer readable storage medium having stored thereon computer instructions for causing the computer to perform the method according to any one of the above aspects.

According to another aspect of the present disclosure, there is provided a computer program product comprising a computer program which, when executed by a processor, implements the method according to any one of the above aspects.

According to the technical scheme disclosed by the invention, the image quality evaluation effect can be improved.

It should be understood that the statements in this section do not necessarily identify key or critical features of the embodiments of the present disclosure, nor do they limit the scope of the present disclosure. Other features of the present disclosure will become apparent from the following description.

Drawings

The drawings are included to provide a better understanding of the present solution and are not to be construed as limiting the present disclosure. Wherein:

FIG. 1 is a schematic diagram according to a first embodiment of the present disclosure;

FIG. 2 is a schematic diagram according to a second embodiment of the present disclosure;

FIG. 3 is a schematic diagram according to a third embodiment of the present disclosure;

FIG. 4 is a schematic diagram according to a fourth embodiment of the present disclosure;

FIG. 5 is a schematic diagram according to a fifth embodiment of the present disclosure;

FIG. 6 is a schematic diagram according to a sixth embodiment of the present disclosure;

FIG. 7 is a schematic diagram according to a seventh embodiment of the present disclosure;

FIG. 8 is a schematic diagram according to an eighth embodiment of the present disclosure;

FIG. 9 is a schematic diagram according to a ninth embodiment of the present disclosure;

FIG. 10 is a schematic diagram of an electronic device for implementing any one of the image quality evaluation and model training methods according to the embodiments of the present disclosure.

Detailed Description

Exemplary embodiments of the present disclosure are described below with reference to the accompanying drawings, in which various details of the embodiments of the disclosure are included to assist understanding, and which are to be considered as merely exemplary. Accordingly, those of ordinary skill in the art will recognize that various changes and modifications of the embodiments described herein can be made without departing from the scope and spirit of the present disclosure. Also, descriptions of well-known functions and constructions are omitted in the following description for clarity and conciseness.

The purpose of IQA is to make the evaluation result obtained with the image quality evaluation model consistent with subjective quality evaluation, i.e., an image with good subjective quality should receive a higher IQA quality score. When a model is used for quality evaluation, multiple image features can be fused and the evaluation performed on the fusion features. In the related art, each image feature is converted into a vector during fusion, and the vectors are then directly concatenated. However, this approach ignores the spatial positional relationship between the image features and degrades the image quality evaluation effect.

In order to improve the image quality evaluation effect, the present disclosure shows the following embodiments.

Fig. 1 is a schematic diagram according to a first embodiment of the present disclosure. The embodiment provides an image quality evaluation method, which comprises the following steps:

101. Extracting image features of each image in an image pair by using a feature extraction network in an image quality evaluation model, wherein the image features comprise features to be fused and output features, the image pair comprises a reference image and an image to be evaluated, and the features to be fused are subjected to spatial alignment processing to obtain the fusion feature of each image.

102. Determining, by using the score determination network in the image quality evaluation model, the quality score of the image to be evaluated based on the fusion features and the output features.

An image quality evaluation model may be used for image quality evaluation. As shown in fig. 2, the image quality evaluation model may include a feature extraction network 201 and a score determination network 202. Image quality evaluation may include reference-image-based image quality evaluation; in that case, the input of the image quality evaluation model includes the reference image and the image to be evaluated, and the output is the quality score of the image to be evaluated. The quality score evaluates the quality of the image to be evaluated relative to the reference image. The reference image may be determined by the specific application scenario. For example, image quality evaluation may be used in scenarios such as image compression and image encoding and decoding. Taking image compression as an example, the reference image may be the image before compression and the image to be evaluated the compressed image; by determining the quality score of the compressed image, the compression effect can be evaluated: the higher the quality score of the compressed image, the better the compression effect.

The feature extraction network may be a Deep Convolutional Neural Network (DCNN), and its backbone network is, for example, ResNet-50.

As shown in fig. 3, the feature extraction network may include a backbone network 301 and a fusion module 302. The backbone network 301 is configured to extract image features of an input image, and includes a plurality of convolutional layers, where each convolutional layer may output image features of a corresponding layer, so that image features of different layers may be extracted. The image features of partial layers can be selected as features to be fused according to the setting, and the image features output by the last layer of the backbone network can be used as output features. The fusion module 302 is configured to perform fusion processing on the feature to be fused to obtain a fusion feature, where the specific fusion processing mode is to perform spatial alignment processing on the feature to be fused.

The feature extraction networks process the image to be evaluated and the reference image respectively, and for convenience of understanding, fig. 2 shows two feature extraction networks which process the image to be evaluated and the reference image respectively, and the two feature extraction networks share parameters. In other words, in implementation, the image to be evaluated and the reference image may be input into the same feature extraction network at different times, so as to obtain the fusion feature and the output feature corresponding to the image to be evaluated and the reference image, respectively.

As shown in fig. 3, take the processing of the image to be evaluated by the feature extraction network as an example. With block-based image processing, the image to be evaluated may be divided into a plurality of image blocks. The input of the feature extraction network is an image block, denoted $A_m$, where $m$ is the index of the block, and the output is the corresponding fusion feature and output feature of that block.

Similarly, processing the reference image with the feature extraction network yields the fusion feature and the output feature of the reference image.
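The block-based division described above can be illustrated with a minimal sketch. The function name `split_into_blocks`, the use of non-overlapping square blocks, and the dropping of incomplete edge blocks are all assumptions made for illustration; the disclosure does not specify the block size or how image borders are handled.

```python
from typing import List

Image = List[List[float]]  # single-channel image stored as rows of pixel values


def split_into_blocks(image: Image, block: int) -> List[Image]:
    """Split an image into non-overlapping block x block tiles in row-major order.

    Rows and columns that do not fill a whole block are dropped
    (an assumption of this sketch, not something the text specifies).
    """
    height, width = len(image), len(image[0])
    blocks = []
    for top in range(0, height - block + 1, block):
        for left in range(0, width - block + 1, block):
            # Take `block` rows starting at `top`, and `block` columns starting at `left`.
            blocks.append([row[left:left + block] for row in image[top:top + block]])
    return blocks
```

Each returned tile plays the role of one image block fed to the feature extraction network; the reference image would be split with the same block size so that blocks with the same index correspond.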

Further, the fusion module 302 may perform a spatial alignment process on the Feature to be fused by using a Feature Pyramid Network (FPN) to obtain a fusion Feature.

As shown in fig. 4, the features to be fused include a plurality of feature maps of different sizes: the deeper a layer is, i.e., the farther it is from the input image, the smaller the image feature (also called a feature map) of that layer. Ordering the layers from shallow to deep corresponds to going from bottom to top. With FPN processing, a 1 × 1 convolution may first be applied to the top-layer feature map as a smoothing step; the top-layer feature map is then upsampled to the size of the feature map of the layer below it, and the two same-size feature maps are merged channel by channel, i.e., corresponding elements of the maps are added. Repeating this layer by layer spatially aligns the feature maps of the different layers. For example, fig. 4 takes features to be fused that include 3 feature maps: the features from the bottom layer to the top layer are denoted C1 to C3, the corresponding smoothed features are denoted P1 to P3, and the fusion feature is obtained by upsampling and merging layer by layer.
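The top-down pass can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not the disclosed implementation: each feature map has a single channel, so the 1 × 1 convolution reduces to multiplying by one scalar weight per level (the hypothetical `weights` argument); upsampling is nearest-neighbour; and each level is an integer factor smaller than the level below it.

```python
from typing import List

FeatureMap = List[List[float]]  # single-channel feature map (assumption of this sketch)


def scale(fmap: FeatureMap, weight: float) -> FeatureMap:
    """A 1 x 1 convolution on a single channel is just a scalar multiply."""
    return [[weight * v for v in row] for row in fmap]


def upsample_nearest(fmap: FeatureMap, factor: int) -> FeatureMap:
    """Repeat every element `factor` times along both axes."""
    out = []
    for row in fmap:
        wide = [v for v in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def add_maps(a: FeatureMap, b: FeatureMap) -> FeatureMap:
    """Element-wise addition of two same-size maps."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def top_down_fuse(maps: List[FeatureMap], weights: List[float]) -> List[FeatureMap]:
    """maps = [C1, ..., Cn] from bottom (largest) to top (smallest); returns [P1, ..., Pn]."""
    fused = scale(maps[-1], weights[-1])          # start from the top layer
    pyramid = [fused]
    for fmap, weight in zip(reversed(maps[:-1]), reversed(weights[:-1])):
        factor = len(fmap) // len(fused)          # size ratio to the layer below
        fused = add_maps(scale(fmap, weight), upsample_nearest(fused, factor))
        pyramid.append(fused)
    pyramid.reverse()
    return pyramid
```

With three levels, the returned list corresponds to P1 to P3; the finest level has accumulated contributions from every coarser level at matching spatial positions, which is the alignment property the text relies on.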

Through FPN processing, the resulting fusion features retain a large amount of bottom-layer texture information and top-layer semantic information while being spatially aligned as far as possible, which improves the image quality evaluation effect. In addition, fusing the image features reduces the number of parameters involved in the computation and improves the efficiency of image quality evaluation.

Assuming that the fusion feature and the output feature corresponding to the reference image are referred to as a first fusion feature and a first output feature, and the fusion feature and the output feature corresponding to the image to be evaluated are referred to as a second fusion feature and a second output feature, the first fusion feature, the second fusion feature, the first output feature and the second output feature can be obtained through the processing of the feature extraction network. These features may then be input into a score determination network to derive a quality score for the image to be evaluated.

This specifically comprises the following steps: determining the difference feature of the first fusion feature and the second fusion feature to obtain a first difference feature; determining the difference feature of the first output feature and the second output feature to obtain a second difference feature; converting the first difference feature into a score feature using a first conversion network; converting the second difference feature into a weight feature using a second conversion network; and determining the quality score of the image to be evaluated based on the score feature and the weight feature.

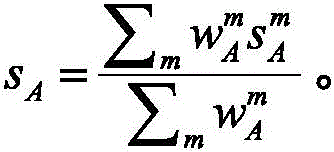

As shown in fig. 5, the score determination network may include: a difference determination module 501, a feature conversion network 502 and a score determination module 503. The difference determination module 501 is configured to determine a first difference feature and a second difference feature, where the first difference feature is the difference feature of the first fusion feature and the second fusion feature, the second difference feature is the difference feature of the first output feature and the second output feature, and a difference feature is the difference between the two corresponding features. The feature conversion network 502 is used to convert the first difference feature into a score feature and the second difference feature into a weight feature. The feature conversion network may specifically comprise two fully connected networks, denoted FC1 and FC2 in fig. 5, which convert the first difference feature into the score feature and the second difference feature into the weight feature, respectively. The score determination module 503 is configured to determine the quality score of the image to be evaluated based on the score feature and the weight feature. For example, the score determination module 503 may determine the quality score $s_A$ using the following calculation formula:

Through the above processing of the score determination network, the quality score of the image to be evaluated can be determined.
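The data flow through the score determination network can be sketched as follows. The combining formula is not reproduced in this text, so the sketch assumes a weighted average of per-block scores, which is only one plausible choice. It also assumes that each fully connected network is a single linear layer producing one scalar per block, that the difference is taken as reference minus evaluated image, and that weights are clamped to be positive; all function and parameter names are hypothetical.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]
Linear = Tuple[Sequence[float], float]  # (weights, bias) of a one-output linear layer


def difference(a: Vector, b: Vector) -> List[float]:
    """Element-wise difference of two feature vectors."""
    return [x - y for x, y in zip(a, b)]


def linear(x: Vector, weights: Sequence[float], bias: float) -> float:
    """One-output fully connected layer: dot product plus bias."""
    return sum(w * v for w, v in zip(weights, x)) + bias


def quality_score(ref_fused: List[Vector], img_fused: List[Vector],
                  ref_out: List[Vector], img_out: List[Vector],
                  fc1: Linear, fc2: Linear, eps: float = 1e-6) -> float:
    """Combine per-block score and weight features into one quality score.

    ASSUMPTION: the score is the weight-normalised sum of per-block scores.
    """
    numerator = denominator = 0.0
    for rf, xf, ro, xo in zip(ref_fused, img_fused, ref_out, img_out):
        first_diff = difference(rf, xf)    # from the fusion features
        second_diff = difference(ro, xo)   # from the output features
        score_m = linear(first_diff, *fc1)                   # FC1: score feature
        weight_m = max(0.0, linear(second_diff, *fc2)) + eps  # FC2: weight feature
        numerator += weight_m * score_m
        denominator += weight_m
    return numerator / denominator
```

The small `eps` keeps the denominator non-zero when every block weight is clamped to zero; it is a numerical convenience of this sketch rather than part of the described method.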

In this embodiment, by performing spatial alignment processing on the features to be fused, spatial position relationships among different features to be fused can be considered, and the image quality evaluation effect is improved.

Fig. 6 is a schematic diagram of a sixth embodiment of the present disclosure, which provides a training method for an image quality evaluation model, where the image quality evaluation model includes: a feature extraction network and a score determination network, the method comprising:

601. Extracting image features of each image in a sample image pair by using the feature extraction network, wherein the image features comprise features to be fused and output features, the sample image pair comprises a reference image and a distorted image, and the features to be fused are subjected to spatial alignment processing to obtain the fusion feature of each image.

602. Determining a prediction score for the distorted image based on the fused feature and the output feature using the score determination network.

603. Determining a loss function based on the prediction score, and training the feature extraction network and the score determination network based on the loss function.

The spatial alignment processing of the features to be fused and the determination of the prediction score of the distorted image follow a flow similar to the corresponding processing in image quality evaluation.

Specifically, the performing the spatial alignment processing on the feature to be fused may include: and performing spatial alignment processing on the features to be fused by adopting a feature pyramid network.

Through FPN processing, the resulting fusion features retain a large amount of bottom-layer texture information and top-layer semantic information while being spatially aligned as far as possible, which improves the image quality evaluation effect. In addition, fusing the image features reduces the number of parameters involved in the computation and improves the training efficiency of the image quality evaluation model.

Determining the prediction score of the distorted image based on the fusion features and the output features may include: determining the difference feature of the first fusion feature and the second fusion feature to obtain a first difference feature; determining the difference feature of the first output feature and the second output feature to obtain a second difference feature; converting the first difference feature into a score feature by using a first conversion network in the score determination network; converting the second difference feature into a weight feature by using a second conversion network in the score determination network; and determining the prediction score of the distorted image based on the score feature and the weight feature.

Through the above-described processing of the score determination network, a prediction score of a distorted image can be determined.

Unlike image quality evaluation, model training uses two sample image pairs and additionally includes a probability determination module.

As shown in fig. 7, during model training the training system includes an image quality evaluation model 701 and a probability determination module 702. The three feature extraction networks in the image quality evaluation model 701 share parameters, and the two score determination networks share parameters. The image quality evaluation models are divided into two groups, each corresponding to one sample image pair. Only the image quality evaluation model corresponding to the first distorted image and the reference image is shown in fig. 7; the one corresponding to the second distorted image and the reference image is similar.

Specifically, sample groups may be obtained first. There are multiple sample groups, each of which is a triplet that includes a reference image, a first distorted image and a second distorted image, where the first distorted image and the second distorted image are obtained by applying two different distortion processing modes to the reference image. After a triplet sample group is obtained, two sample image pairs may be formed, referred to as a first sample image pair and a second sample image pair: the first sample image pair includes the reference image and the first distorted image, and the second sample image pair includes the reference image and the second distorted image.

As shown in fig. 7, after each sample image pair passes through the feature extraction network and the score determination network of its image quality evaluation model, the prediction score of the distorted image in that pair is obtained. Denoting the first distorted image by A and the second distorted image by B, the corresponding prediction scores are referred to as the first prediction score and the second prediction score, written $s_A$ and $s_B$.

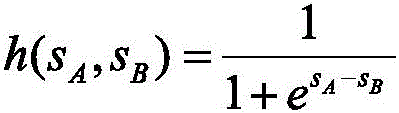

After the first prediction score and the second prediction score are obtained, they may be input into the probability determination module, which processes them and outputs the predicted preference probability. The predicted preference probability is denoted $h(s_A, s_B)$; the specific form of the function $h(\cdot)$ may be selected as needed, for example by the following calculation formula:

The preference probability indicates the preference for the first distorted image A relative to the second distorted image B; the smaller the preference probability, the closer the first distorted image A is to the reference image.

For the purpose of distinction, the preference probability can be divided into a predicted preference probability and a true preference probability. The predicted preference probability is the preference probability obtained from the two prediction scores during training. The true preference probability is the preference probability calculated from the true scores of the two distorted images; the distorted images are sample images, and their true scores can be determined by processing such as manual labeling. After the true scores are obtained, the true preference probability may be calculated with the same function $h(\cdot)$.

After the prediction preference probability and the true preference probability are obtained, a loss function may be determined based on the two preference probabilities, and an image quality evaluation model may be trained based on the loss function.

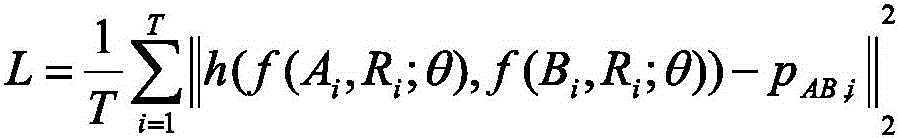

Wherein the loss function may be of the form:

When training the model based on the loss function, the training goal may be to minimize the loss function described above, i.e., the final parameters of the image quality evaluation model are expressed as:

In the above formula, $f(\cdot)$ is the function corresponding to the image quality evaluation model, $\theta$ denotes the parameters of the image quality evaluation model, $\theta^{*}$ denotes the final parameters, $i$ is the sample index, $T$ is the total number of samples, $A_i$ and $B_i$ are the first distorted image and the second distorted image, respectively, $R_i$ is the reference image, $p_{AB,i}$ is the true preference probability, and $h(\cdot)$ gives the prediction preference probability.
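The formula itself is not reproduced in this text. One form that is consistent with the symbols defined above, assuming a squared-error penalty between the predicted and true preference probabilities (the penalty type is an assumption, not confirmed by the source), would be:

$$
\theta^{*} = \arg\min_{\theta} \frac{1}{T} \sum_{i=1}^{T} \Big( h\big(f(A_i, R_i; \theta),\, f(B_i, R_i; \theta)\big) - p_{AB,i} \Big)^{2}
$$

Here $f(A_i, R_i; \theta)$ and $f(B_i, R_i; \theta)$ are the first and second prediction scores for the $i$-th sample group, so the term inside the parentheses is the gap between the prediction preference probability and the true preference probability.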

By determining the loss function based on the prediction preference probability and the true preference probability, robustness of model training may be improved.

In some embodiments, a curriculum learning approach may be adopted in the training process. That is, the sample groups may be divided into multiple categories of sample groups, where different categories correspond to different difficulty levels; the difficulty level is determined based on the absolute value of a score difference, and the score difference is the difference between the true score of the first distorted image and the true score of the second distorted image. Correspondingly, during training, the feature extraction network and the score determination network are trained in order of difficulty from easy to difficult, based on the loss function corresponding to the sample groups of each category.

Taking three categories as an example, the corresponding sample groups, in order of difficulty from easy to difficult, may be called easy sample groups, general sample groups, and difficult sample groups. The difficulty level of these three categories is determined based on the absolute value of the score difference. For example, a first threshold and a second threshold may be preset, where the first threshold is greater than the second threshold. A sample group whose absolute score difference is greater than the first threshold is regarded as an easy sample group, a sample group whose absolute score difference is less than the second threshold is regarded as a difficult sample group, and the rest are general sample groups. The first threshold is, for example, 0.5, and the second threshold is, for example, 0.1.

For example, suppose three sample groups are denoted <A1, B1, R1>, <A2, B2, R2>, and <A3, B3, R3>. If the absolute value of the difference between the true scores of A1 and B1 is greater than 0.5, that of A2 and B2 is less than 0.1, and that of A3 and B3 is between 0.1 and 0.5, then <A1, B1, R1> is an easy sample group, <A2, B2, R2> is a difficult sample group, and <A3, B3, R3> is a general sample group.
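The threshold rule can be sketched as a small function. The thresholds 0.5 and 0.1 are the example values given in the text; the function name `classify_group` and the string labels are illustrative assumptions.

```python
def classify_group(true_score_a: float, true_score_b: float,
                   first_threshold: float = 0.5,
                   second_threshold: float = 0.1) -> str:
    """Assign a sample group to a difficulty category.

    A large gap between the two true scores makes the pair easy to rank;
    a small gap makes it difficult.
    """
    gap = abs(true_score_a - true_score_b)
    if gap > first_threshold:
        return "easy"
    if gap < second_threshold:
        return "difficult"
    return "general"


# True scores for (A1, B1), (A2, B2), (A3, B3); the numbers are made up.
labels = [classify_group(0.9, 0.2), classify_group(0.50, 0.45), classify_group(0.6, 0.3)]
```

With these made-up scores the three groups fall into the easy, difficult, and general categories respectively, mirroring the example above.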

In the training process, the parameters of the model may first be adjusted using the easy sample groups; after, for example, 32 rounds of adjustment, the parameters may be further adjusted using the general sample groups; and after another 32 rounds, the parameters may be further adjusted using the difficult sample groups until a training end condition is reached. The training end condition is preset, for example, a preset number of training iterations is reached or the loss function converges. The model parameters at the time the training end condition is reached are taken as the final parameters.
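The staged schedule can be sketched as a generator that reports which category of sample groups each training round draws from. The 32-round stage length is the example value from the text; the name `curriculum_schedule` and the use of a fixed `total_rounds` as the end condition are assumptions made for illustration (convergence of the loss would be an alternative end condition).

```python
from typing import Iterator


def curriculum_schedule(total_rounds: int, rounds_per_stage: int = 32) -> Iterator[str]:
    """Yield the sample-group category to train on in each round.

    Easy groups come first, then general groups, then difficult groups
    for all remaining rounds.
    """
    stages = ["easy", "general", "difficult"]
    for round_index in range(total_rounds):
        # Clamp to the last stage once the first two stages are exhausted.
        stage_index = min(round_index // rounds_per_stage, len(stages) - 1)
        yield stages[stage_index]


schedule = list(curriculum_schedule(total_rounds=70))
```

A training loop would iterate over this schedule, pick a batch from the named category, and apply one parameter update per round.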

In the image quality evaluation stage, the image quality evaluation can be carried out on the image to be evaluated by adopting the model with the final parameters.

By introducing curriculum learning into the model training process, model convergence can be accelerated, and the model is helped to converge gradually toward an optimal solution space.

In some embodiments, due to the limited number of existing image pairs, the existing image pairs may be data enhanced to obtain extended image pairs, after which the existing image pairs and/or the extended image pairs may be used as the sample image pairs described above.

That is, the method may further include: obtaining an existing image pair, the existing image pair comprising an existing reference image and an existing distorted image; performing the same random-position erasing processing on the existing reference image and the existing distorted image to obtain an extended image pair; and constructing the sample image pair based on the existing image pair and the extended image pair.

For example, if the existing image pair includes a distorted image A and a reference image R, denoted <A, R>, the same random-position erasing processing may be performed on the distorted image A and the reference image R. For example, the elements in a certain region D of the distorted image A are replaced by 0, and the elements in the region of the reference image R at the same position as region D are also replaced by 0. If the processed images are denoted A' and R', respectively, then A' and R' form an extended image pair, denoted <A', R'>. The sample image pairs may then be composed of multiple pairs of the forms <A, R> and <A', R'>.
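The paired erasing step can be sketched as follows. Images are modeled as nested lists of numbers to keep the example dependency-free; the function name `erase_same_region`, the rectangular shape of region D, and the explicit region size parameters are assumptions, since the text does not specify how the region is chosen.

```python
import random
from typing import List, Optional, Tuple

Image = List[List[float]]


def erase_same_region(distorted: Image, reference: Image,
                      region_h: int, region_w: int,
                      rng: Optional[random.Random] = None) -> Tuple[Image, Image]:
    """Zero out one randomly placed rectangle at the same position in both images.

    Returns new images (A', R'); the inputs are left unchanged.
    """
    if rng is None:
        rng = random.Random()
    height, width = len(distorted), len(distorted[0])
    top = rng.randint(0, height - region_h)    # randint is inclusive on both ends
    left = rng.randint(0, width - region_w)

    def erase(image: Image) -> Image:
        erased = [row[:] for row in image]     # copy so the original pair survives
        for y in range(top, top + region_h):
            for x in range(left, left + region_w):
                erased[y][x] = 0
        return erased

    return erase(distorted), erase(reference)


a = [[1.0] * 4 for _ in range(4)]
r = [[2.0] * 4 for _ in range(4)]
a_ext, r_ext = erase_same_region(a, r, region_h=2, region_w=2, rng=random.Random(0))
```

Drawing the position once and applying it to both images is what keeps the extended pair spatially consistent.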

The same random position erasing processing is carried out on the existing image pairs to obtain the extended image pairs, so that the number of the sample image pairs can be extended, and the training effect is improved.

In this embodiment, by performing spatial alignment processing on the features to be fused, spatial position relationships between different features to be fused can be considered, and the training effect of the image quality evaluation model is improved.

Fig. 8 is a schematic diagram of an eighth embodiment of the present disclosure, which provides an image quality evaluation apparatus 800 including: an extraction module 801 and a determination module 802.

The extraction module 801 is configured to extract image features of each image in an image pair by using a feature extraction network in an image quality evaluation model, where the image features include features to be fused and an output feature, and the image pair includes a reference image and an image to be evaluated; and to perform spatial alignment processing on the features to be fused to obtain a fusion feature of each image. The determination module 802 is configured to determine the quality score of the image to be evaluated based on the fusion features and the output features by using the score determination network in the image quality evaluation model.

In some embodiments, the extraction module 801 is specifically configured to perform spatial alignment processing on the features to be fused by using a feature pyramid network.

In some embodiments, the fusion features include a first fusion feature and a second fusion feature, where the first fusion feature corresponds to the reference image and the second fusion feature corresponds to the image to be evaluated. The output features include a first output feature and a second output feature, where the first output feature corresponds to the reference image and the second output feature corresponds to the image to be evaluated. The determination module 802 is specifically configured to: determine a difference feature of the first fusion feature and the second fusion feature to obtain a first difference feature; determine a difference feature of the first output feature and the second output feature to obtain a second difference feature; convert the first difference feature into a score feature using a first conversion network; convert the second difference feature into a weight feature using a second conversion network; and determine the quality score of the image to be evaluated based on the score feature and the weight feature.
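The data flow through the determination module can be sketched as below. Several details are assumptions because the text does not specify them: features are modeled as flat lists of numbers, the difference feature is taken as element-wise subtraction, the two conversion networks are passed in as placeholder callables, and the score and weight features are combined by a weighted average.

```python
from typing import Callable, List, Sequence

Feature = List[float]


def difference(first: Sequence[float], second: Sequence[float]) -> Feature:
    """Element-wise difference between two features (assumed form)."""
    return [a - b for a, b in zip(first, second)]


def quality_score(ref_fused: Feature, img_fused: Feature,
                  ref_output: Feature, img_output: Feature,
                  to_scores: Callable[[Feature], Feature],
                  to_weights: Callable[[Feature], Feature]) -> float:
    """Combine score and weight features into one quality score.

    to_scores / to_weights stand in for the first and second conversion networks.
    """
    first_diff = difference(ref_fused, img_fused)      # from fusion features
    second_diff = difference(ref_output, img_output)   # from output features
    scores = to_scores(first_diff)
    weights = to_weights(second_diff)
    # Assumed combination: weighted average (requires a non-zero weight sum).
    return sum(s * w for s, w in zip(scores, weights)) / sum(weights)


# Toy stand-ins for the conversion networks, for illustration only.
result = quality_score(
    [1.0, 2.0], [0.5, 1.0], [3.0, 3.0], [1.0, 2.0],
    to_scores=lambda d: [abs(x) for x in d],
    to_weights=lambda d: [1.0 for _ in d],
)
```

In a real model the two callables would be learned sub-networks, and the features would be multi-dimensional tensors rather than flat lists.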

In the embodiment of the disclosure, by performing spatial alignment processing on the features to be fused, spatial position relations among different features to be fused can be considered, and the image quality evaluation effect is improved.

Fig. 9 is a schematic diagram of a ninth embodiment of the present disclosure, which provides a training apparatus for an image quality evaluation model, where the image quality evaluation model includes: a feature extraction network and a score determination network, the apparatus 900 comprising: an extraction module 901, a determination module 902 and a training module 903.

The extraction module 901 is configured to extract, by using the feature extraction network, image features of each image in a sample image pair, where the image features include features to be fused and an output feature, and the sample image pair includes a reference image and a distorted image; and to perform spatial alignment processing on the features to be fused to obtain a fusion feature of each image. The determination module 902 is configured to determine a prediction score of the distorted image based on the fusion features and the output features by using the score determination network.

The training module 903 is configured to determine a loss function based on the prediction score, and to train the feature extraction network and the score determination network based on the loss function.

In some embodiments, the extraction module 901 is specifically configured to perform spatial alignment processing on the features to be fused by using a feature pyramid network.

In some embodiments, the fusion features include a first fusion feature and a second fusion feature, where the first fusion feature corresponds to the reference image and the second fusion feature corresponds to the distorted image. The output features include a first output feature and a second output feature, where the first output feature corresponds to the reference image and the second output feature corresponds to the distorted image. The determination module 902 is specifically configured to: determine a difference feature of the first fusion feature and the second fusion feature to obtain a first difference feature; determine a difference feature of the first output feature and the second output feature to obtain a second difference feature; convert the first difference feature into a score feature using a first conversion network in the score determination network; convert the second difference feature into a weight feature using a second conversion network in the score determination network; and determine a prediction score of the distorted image based on the score feature and the weight feature.

In some embodiments, the sample image pairs comprise two sample image pairs that share the same reference image and contain two different distorted images, the prediction scores comprise a first prediction score and a second prediction score corresponding to the two distorted images, respectively, and the training module 903 is specifically configured to: determine a prediction preference probability based on the first prediction score and the second prediction score; and determine a loss function based on the prediction preference probability and the true preference probability.

In some embodiments, the sample image pairs are constructed from a plurality of sample groups, each sample group comprising a reference image, a first distorted image, and a second distorted image. The apparatus further comprises a grouping module, configured to divide the sample groups into multiple categories of sample groups, where different categories correspond to different difficulty levels, the difficulty level is determined based on the absolute value of a score difference, and the score difference is the difference between the true score of the first distorted image and the true score of the second distorted image. The training module 903 is specifically configured to train the feature extraction network and the score determination network in order of difficulty from easy to difficult, based on the loss function corresponding to the sample groups of each category.

In some embodiments, the apparatus further comprises an acquisition module, an expansion module, and a construction module. The acquisition module is configured to acquire an existing image pair, where the existing image pair comprises an existing reference image and an existing distorted image. The expansion module is configured to perform the same random-position erasing processing on the existing reference image and the existing distorted image to obtain an extended image pair. The construction module is configured to construct the sample image pair based on the existing image pair and the extended image pair.

In the embodiment of the disclosure, by performing spatial alignment processing on the features to be fused, spatial position relations among different features to be fused can be considered, and the training effect of the image quality evaluation model is improved.

It is to be understood that, in the embodiments of the present disclosure, the same or similar content in different embodiments may be cross-referenced.

It is to be understood that "first", "second", and the like in the embodiments of the present disclosure are used for distinction only, and do not indicate the degree of importance, the order of timing, and the like.

The present disclosure also provides an electronic device, a readable storage medium, and a computer program product according to embodiments of the present disclosure.

FIG. 10 illustrates a schematic block diagram of an example electronic device 1000 that can be used to implement embodiments of the present disclosure. Electronic devices are intended to represent various forms of digital computers, such as laptops, desktops, workstations, servers, blade servers, mainframes, and other appropriate computers. The electronic device may also represent various forms of mobile devices, such as personal digital assistants, cellular telephones, smart phones, wearable devices, and other similar computing devices. The components shown herein, their connections and relationships, and their functions, are meant to be examples only, and are not meant to limit implementations of the disclosure described and/or claimed herein.

As shown in fig. 10, the electronic device 1000 includes a computing unit 1001 that can perform various appropriate actions and processes according to a computer program stored in a Read Only Memory (ROM) 1002 or a computer program loaded from a storage unit 1008 into a Random Access Memory (RAM) 1003. In the RAM 1003, various programs and data necessary for the operation of the electronic device 1000 can also be stored. The computing unit 1001, the ROM 1002, and the RAM 1003 are connected to each other by a bus 1004. An input/output (I/O) interface 1005 is also connected to the bus 1004.

A number of components in the electronic device 1000 are connected to the I/O interface 1005, including: an input unit 1006 such as a keyboard, a mouse, and the like; an output unit 1007 such as various types of displays, speakers, and the like; a storage unit 1008 such as a magnetic disk, an optical disk, or the like; and a communication unit 1009 such as a network card, a modem, a wireless communication transceiver, or the like. The communication unit 1009 allows the electronic device 1000 to exchange information/data with other devices through a computer network such as the internet and/or various telecommunication networks.

Various implementations of the systems and techniques described above may be realized in digital electronic circuitry, integrated circuitry, Field Programmable Gate Arrays (FPGAs), Application Specific Integrated Circuits (ASICs), Application Specific Standard Products (ASSPs), systems on a chip (SOCs), complex programmable logic devices (CPLDs), computer hardware, firmware, software, and/or combinations thereof. These various implementations may include implementation in one or more computer programs that are executable and/or interpretable on a programmable system including at least one programmable processor, which may be special or general purpose, receiving data and instructions from, and transmitting data and instructions to, a storage system, at least one input device, and at least one output device.

Program code for implementing the methods of the present disclosure may be written in any combination of one or more programming languages. These program codes may be provided to a processor or controller of a general purpose computer, special purpose computer, or other programmable data processing apparatus, such that the program codes, when executed by the processor or controller, cause the functions/operations specified in the flowchart and/or block diagram to be performed. The program code may execute entirely on the machine, partly on the machine, as a stand-alone software package partly on the machine and partly on a remote machine or entirely on the remote machine or server.

In the context of this disclosure, a machine-readable medium may be a tangible medium that can contain, or store a program for use by or in connection with an instruction execution system, apparatus, or device. The machine-readable medium may be a machine-readable signal medium or a machine-readable storage medium. A machine-readable medium may include, but is not limited to, an electronic, magnetic, optical, electromagnetic, infrared, or semiconductor system, apparatus, or device, or any suitable combination of the foregoing. More specific examples of a machine-readable storage medium would include an electrical connection based on one or more wires, a portable computer diskette, a hard disk, a Random Access Memory (RAM), a read-only memory (ROM), an erasable programmable read-only memory (EPROM or flash memory), an optical fiber, a portable compact disc read-only memory (CD-ROM), an optical storage device, a magnetic storage device, or any suitable combination of the foregoing.

To provide for interaction with a user, the systems and techniques described here can be implemented on a computer having: a display device (e.g., a CRT (cathode ray tube) or LCD (liquid crystal display) monitor) for displaying information to a user; and a keyboard and a pointing device (e.g., a mouse or a trackball) by which a user can provide input to the computer. Other kinds of devices may also be used to provide for interaction with a user; for example, feedback provided to the user can be any form of sensory feedback (e.g., visual feedback, auditory feedback, or tactile feedback); and input from the user may be received in any form, including acoustic, speech, or tactile input.

The systems and techniques described here can be implemented in a computing system that includes a back-end component (e.g., as a data server), or that includes a middleware component (e.g., an application server), or that includes a front-end component (e.g., a user computer having a graphical user interface or a web browser through which a user can interact with an implementation of the systems and techniques described here), or any combination of such back-end, middleware, or front-end components. The components of the system can be interconnected by any form or medium of digital data communication (e.g., a communication network). Examples of communication networks include Local Area Networks (LANs), Wide Area Networks (WANs), and the Internet.

The computer system may include clients and servers. A client and a server are generally remote from each other and typically interact through a communication network. The relationship of client and server arises by virtue of computer programs running on the respective computers and having a client-server relationship to each other. The server may be a cloud server, also called a cloud computing server or a cloud host, which is a host product in a cloud computing service system and addresses the drawbacks of difficult management and weak service scalability found in traditional physical hosts and Virtual Private Server (VPS) services. The server may also be a server of a distributed system, or a server incorporating a blockchain.

It should be understood that various forms of the flows shown above may be used, with steps reordered, added, or deleted. For example, the steps described in the present disclosure may be executed in parallel, sequentially, or in different orders, as long as the desired results of the technical solutions disclosed in the present disclosure can be achieved, and the present disclosure is not limited herein.

The above detailed description should not be construed as limiting the scope of the disclosure. It should be understood by those skilled in the art that various modifications, combinations, sub-combinations and substitutions may be made in accordance with design requirements and other factors. Any modification, equivalent replacement, and improvement made within the spirit and principle of the present disclosure should be included in the scope of protection of the present disclosure.

Claims (21)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202110471056.5A CN113205495B (en) | 2021-04-28 | 2021-04-28 | Image quality evaluation and model training method, device, equipment and storage medium |


Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN113205495A (en) | 2021-08-03 |
| CN113205495B (en) | 2023-08-22 |

Family

ID=77027775

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202110471056.5A Active CN113205495B (en) | 2021-04-28 | 2021-04-28 | Image quality evaluation and model training method, device, equipment and storage medium |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN113205495B (en) |

Cited By (7)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN113674276A (en) * | 2021-10-21 | 2021-11-19 | 北京金山云网络技术有限公司 | Image quality difference scoring method and device, storage medium and electronic equipment |
| CN113705587A (en) * | 2021-09-30 | 2021-11-26 | 北京金山云网络技术有限公司 | Image quality scoring method, device, storage medium and electronic equipment |
| CN114462477A (en) * | 2021-12-22 | 2022-05-10 | 浙江大华技术股份有限公司 | Training method, electronic device and computer-readable storage medium |
| CN114612714A (en) * | 2022-03-08 | 2022-06-10 | 西安电子科技大学 | A no-reference image quality assessment method based on curriculum learning |
| CN115631163A (en) * | 2022-10-21 | 2023-01-20 | 阿里巴巴(中国)有限公司 | Image processing method |
| CN116129208A (en) * | 2021-11-12 | 2023-05-16 | 中国移动通信有限公司研究院 | Image quality assessment and model training method and device thereof, and electronic equipment |
| CN116823700A (en) * | 2022-03-21 | 2023-09-29 | 北京沃东天骏信息技术有限公司 | A method and device for determining image quality |

Citations (2)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN101334893A (en) * | 2008-08-01 | 2008-12-31 | 天津大学 | Comprehensive Evaluation Method of Fusion Image Quality Based on Fuzzy Neural Network |
| US20200160559A1 (en) * | 2018-11-16 | 2020-05-21 | Uatc, Llc | Multi-Task Multi-Sensor Fusion for Three-Dimensional Object Detection |



Non-Patent Citations (1)

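| Title |
|---|
| Hu Jinbin; Chai Xiongli; Shao Feng: "Blind image quality assessment based on deep feature similarity of pseudo-reference images" (基于伪参考图像深层特征相似性的盲图像质量评价), 光电子・激光, no. 11 * |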

Cited By (9)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN113705587A (en) * | 2021-09-30 | 2021-11-26 | 北京金山云网络技术有限公司 | Image quality scoring method, device, storage medium and electronic equipment |
| CN113674276A (en) * | 2021-10-21 | 2021-11-19 | 北京金山云网络技术有限公司 | Image quality difference scoring method and device, storage medium and electronic equipment |
| CN113674276B (en) * | 2021-10-21 | 2022-03-08 | 北京金山云网络技术有限公司 | Image quality difference scoring method and device, storage medium and electronic equipment |
| CN116129208A (en) * | 2021-11-12 | 2023-05-16 | 中国移动通信有限公司研究院 | Image quality assessment and model training method and device thereof, and electronic equipment |
| CN114462477A (en) * | 2021-12-22 | 2022-05-10 | 浙江大华技术股份有限公司 | Training method, electronic device and computer-readable storage medium |
| CN114612714A (en) * | 2022-03-08 | 2022-06-10 | 西安电子科技大学 | A no-reference image quality assessment method based on curriculum learning |
| CN114612714B (en) * | 2022-03-08 | 2024-09-27 | 西安电子科技大学 | Curriculum learning-based reference-free image quality evaluation method |
| CN116823700A (en) * | 2022-03-21 | 2023-09-29 | 北京沃东天骏信息技术有限公司 | A method and device for determining image quality |
| CN115631163A (en) * | 2022-10-21 | 2023-01-20 | 阿里巴巴(中国)有限公司 | Image processing method |

Also Published As

| Publication number | Publication date |
|---|---|
| CN113205495B (en) | 2023-08-22 |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| CN113205495B (en) | | Image quality evaluation and model training method, device, equipment and storage medium |
| CN114549904B (en) | | Visual processing and model training method, device, storage medium and program product |
| CN114282670B (en) | | Compression method, device and storage medium for neural network model |
| CN113379627A (en) | | Training method of image enhancement model and method for enhancing image |
| CN112862877A (en) | | Method and apparatus for training image processing network and image processing |
| CN113378911B (en) | | Image classification model training method, image classification method and related device |
| CN113989174B (en) | | Image fusion method and image fusion model training method and device |
| CN113436105A (en) | | Model training and image optimization method and device, electronic equipment and storage medium |
| CN114449343A (en) | | Video processing method, device, equipment and storage medium |
| CN113657468A (en) | | Pre-training model generation method and device, electronic equipment and storage medium |
| CN114359993A (en) | | Model training method, face recognition device, face recognition equipment, face recognition medium and product |
| CN113657466A (en) | | Method, device, electronic device and storage medium for generating pre-training model |
| CN113361535A (en) | | Image segmentation model training method, image segmentation method and related device |
| CN115019057A (en) | | Image feature extraction model determining method and device and image identification method and device |
| CN114187318B (en) | | Image segmentation method, device, electronic equipment and storage medium |
| CN114550236B (en) | | Image recognition and its model training method, device, equipment and storage medium |
| CN107507255A (en) | | Picture compression quality factor acquisition methods, system, equipment and storage medium |
| CN114037965B (en) | | Model training and lane line prediction method and equipment and automatic driving vehicle |
| CN113610731B (en) | | Method, apparatus and computer program product for generating image quality improvement model |
| CN113033408B (en) | | Data queue dynamic updating method and device, electronic equipment and storage medium |
| CN113033373A (en) | | Method and related device for training face recognition model and recognizing face |
| CN115601620B (en) | | Feature fusion method, device, electronic device and computer-readable storage medium |
| CN117459719A (en) | | Reference frame selection method, device, electronic device and storage medium |
| CN113283305B (en) | | Face recognition method, device, electronic equipment and computer readable storage medium |
| CN116311551A (en) | | Liveness detection method and system |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |