Disclosure of Invention

The invention aims to provide an automatic face replacement method based on an offline face database, which solves the problem of low replacement efficiency in the prior art.

The technical scheme adopted by the invention is that an automatic face replacement method based on an off-line face database is implemented according to the following steps:

step 1, constructing an off-line face database;

step 2, carrying out mirror image processing on each face image in the data set obtained in the step 1, then taking the original face image and the face image after mirror image as a candidate face image set, and classifying all face images in the candidate face image set according to the Euler angles of the face postures;

step 3, inputting a face image to be detected, using a dlib detection model to execute face detection to extract all faces, estimating the face postures of the face image to be detected, calculating Euler angles of all the faces in the face image to be detected, and aligning the face image in the candidate face image set and the face image to be detected to a common coordinate system;

step 4, comparing the face image to be detected with all face images in the candidate face image set to obtain an initial candidate face set;

step 5, adjusting the illumination of all face images in the initial candidate face set according to the face image to be detected to obtain an adjusted candidate face set;

step 6, calculating the Euclidean distances between the candidate face images in the adjusted candidate face set obtained in step 5 and the face image to be detected, sorting the distances in ascending order, and replacing the face to be detected with the top-ranked candidate face to produce the output.

The present invention is also characterized in that,

in step 2, classifying all the face images in the candidate face image set according to the euler angles of the face postures specifically comprises the following steps:

step 2.1, using a dlib detection model to perform feature point detection on the face images in the candidate face image set, obtaining the coordinates of 6 key points of each face image, namely the left eye corner, right eye corner, nose tip, left mouth corner, right mouth corner and chin; then using an average face model, taking the 6 key points as base points of a 3D model, and constructing the corresponding 3D model; then using the solvePnP function of OpenCV to calculate a rotation vector from the positions of the key points in the 3D model; and then calculating the yaw angle Yaw and the pitch angle Pitch of the Euler angles from the rotation vector;

step 2.2, selecting a face image with a yaw angle within +/-25 degrees and a pitch angle within +/-15 degrees as a candidate face image;

step 2.3, classifying the alternative face images, specifically:

the yaw angle range from -25 degrees to 25 degrees is evenly divided into 5 intervals as the abscissa, the pitch angle range from -15 degrees to 15 degrees is evenly divided into 3 intervals as the ordinate, and each face image is placed in the grid cell matching its abscissa and ordinate values, forming 15 pose boxes; that is, the alternative face images are divided into 15 classes.

In step 3, the Euler angles of all the faces in the face image to be detected are calculated according to the method in step 2.1, giving the yaw angle Yaw and the pitch angle Pitch.

The step 4 specifically comprises the following steps:

step 4.1, carrying out gender screening on the alternative face images: according to the gender of the face image to be detected, the face images of the same gender in the 15 pose boxes of the alternative face images are retained as the candidate face images of the next step;

step 4.2, performing age screening on all candidate face images obtained in step 4.1: according to the age interval of the face image to be detected, the faces in the same age interval among the candidate face images obtained in step 4.1 are retained as the candidate face images of the next step;

step 4.3, selecting, from the candidate face images obtained in step 4.2, the faces whose yaw angle and pitch angle each differ from those of the face image to be detected by no more than 3 degrees as the candidate face images of the next step;

step 4.4, selecting the face meeting the resolution requirement in the candidate face set in the step 4.3 as a candidate face image of the next step;

step 4.5, calculating the blur distance $d_B$ between each candidate face image obtained in step 4.4 and the face image to be detected, sorting the calculated blur distances $d_B$ in ascending order, and retaining the top 50% of the images as the candidate face images of the next step;

step 4.6, calculating the illumination distance $d_L$ between each candidate face image obtained in step 4.5 and the face image to be detected, sorting $d_L$ in ascending order, and retaining the face images ranked in the top 10% by illumination distance $d_L$ as the candidate faces of the next step.

The step 4.5 is specifically as follows:

step 4.5.1, normalizing the gray-level intensity of the eye region of each face image to zero mean and unit variance, specifically:

$$x^{*} = \frac{x - \bar{x}}{\sigma} \tag{1}$$

wherein $x$ is the gray-level intensity of a single pixel in the eye region of the candidate face image obtained in step 4.4 or of the face image to be detected, $\bar{x}$ is the mean gray-level intensity of that eye region, $\sigma$ is the standard deviation of the gray-level intensity of that eye region, and $x^{*}$ is the normalized gray-level intensity;

step 4.5.2, calculating the histograms $h^{(1)}$ and $h^{(2)}$ of the gradient magnitude of the normalized eye regions, and multiplying each histogram by a weighting function equal to the square of the histogram bin index, giving two weighted histograms $\tilde{h}^{(1)}$ and $\tilde{h}^{(2)}$, specifically:

$$\tilde{h}^{(i)}(n) = n^{2}\, h^{(i)}(n), \quad i = 1, 2 \tag{2}$$

wherein $n$ is the bin index of the histogram, $h^{(i)}$ is the histogram of the normalized eye-region gradient magnitude of the candidate face image or of the face image to be detected, and $\tilde{h}^{(i)}$ is the corresponding weighted histogram, with $i = 1$ denoting the candidate face image and $i = 2$ denoting the face image to be detected;

step 4.5.3, calculating the blur distance between the candidate face image and the face image to be detected as the histogram intersection distance (HID) between the two weighted histograms, wherein $d_B$ represents the blur distance between the two face images;

step 4.5.4, calculating the blur distance $d_B$ between every candidate face image and the face image to be detected according to steps 4.5.1 to 4.5.3, sorting the distances in ascending order, and retaining the top 50% of the images as the candidate face images of the next step.

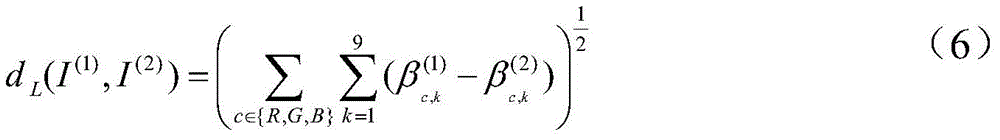

The step 4.6 is specifically as follows:

step 4.6.1, representing the candidate face image and the face image to be detected obtained in the step 4.5 into a cylindrical average face shape by using a face re-marking method;

step 4.6.2, calculating the image intensity $\tilde{I}_c(x, y)$ of the face replacement region in each RGB color channel, specifically:

$$\tilde{I}_c(x, y) = \rho_c \sum_{k=1}^{9} a_{c,k}\, H_k(n(x, y)) \tag{4}$$

wherein $\tilde{I}_c(x, y)$ is the image intensity of the face replacement region in RGB color channel $c$; $n(x, y)$ is the surface normal at image location $(x, y)$; $\rho_c$ is the constant albedo of each of the three color channels; the coefficients $a_{c,k}$ describe the lighting conditions; and $H_k(n(x, y))$ are the spherical harmonic images;

step 4.6.3, creating an orthogonal basis $\psi_k(x, y)$ by applying Gram-Schmidt orthogonalization to the harmonic basis $H_k(n(x, y))$, so that $\tilde{I}_c(x, y)$ is expressed as:

$$\tilde{I}_c(x, y) = \rho_c \sum_{k=1}^{9} \beta_{c,k}\, \psi_k(x, y) \tag{5}$$

wherein $\beta_{c,k}$ are the illumination coefficients and $\psi_k(x, y)$ are the spherical harmonic images after Gram-Schmidt orthogonalization;

step 4.6.4, calculating, according to steps 4.6.1 to 4.6.3, the image intensities $\tilde{I}_c$ of the candidate face image obtained in step 4.5 and of the face image to be detected, denoted $\tilde{I}^{(1)}_c$ and $\tilde{I}^{(2)}_c$ respectively, and then calculating the illumination distance $d_L$ between the candidate face image and the face image to be detected, specifically:

$$d_L\left(I^{(1)}, I^{(2)}\right) = \left( \sum_{c \in \{R, G, B\}} \sum_{k=1}^{9} \left( \beta^{(1)}_{c,k} - \beta^{(2)}_{c,k} \right)^{2} \right)^{1/2} \tag{6}$$

wherein $\beta^{(1)}_{c,k}$ are the illumination coefficients of the candidate face image, $\beta^{(2)}_{c,k}$ are the illumination coefficients of the face image to be detected, and $d_L(I^{(1)}, I^{(2)})$ is the illumination distance between the candidate face image $I^{(1)}$ and the face image to be detected $I^{(2)}$;

step 4.6.5, calculating the illumination distance $d_L$ between every candidate face image and the face image to be detected, sorting the calculated distances $d_L$ in ascending order, and retaining the faces ranked in the top 10% by $d_L$ as the candidate face images of the next step.

The step 5 specifically comprises the following steps:

using an image formula, the illumination of the face image to be detected $I^{(2)}$ in the replacement region is applied to the candidate face image $I^{(1)}$ obtained in step 4, giving the image intensity $\hat{I}^{(1)}_c$ of the candidate face image after application,

wherein $\tilde{I}^{(1)}_c$ is the image intensity of the face replacement region in each RGB color channel of the candidate face image $I^{(1)}$, calculated according to formula (5); $\tilde{I}^{(2)}_c$ is the image intensity of the face replacement region in each RGB color channel of the face image to be detected $I^{(2)}$, calculated according to formula (5); and $\hat{I}^{(1)}_c$ is the image intensity of the candidate face image $I^{(1)}$ after the illumination of the face image to be detected $I^{(2)}$ has been applied to it.

Step 6 is specifically: calculating the Euclidean distances between all the candidate face images processed in step 5 and the face image to be detected, sorting the calculated distances in ascending order, and replacing the face to be detected with the top-ranked candidate face to produce the output.

The invention has the beneficial effects that:

(1) Compared with traditional methods, the method fully automatically replaces faces across different poses, genders, ages, resolutions, degrees of image blur and illumination conditions, without additional manual assistance.

(2) The method uses an offline face database to perform fully automatic face swapping, unlike traditional methods that obscure identity by pixelation or by blacking out pixels, and achieves good results.

Detailed Description

The present invention will be described in detail below with reference to the accompanying drawings and specific embodiments.

The invention relates to an automatic face replacement method based on an off-line face database, which is implemented according to the following steps:

step 1, constructing an off-line face database;

step 2, carrying out mirror image processing on each face image in the data set obtained in the step 1, then taking the original face image and the face image after mirror image as a candidate face image set, and classifying all face images in the candidate face image set according to the Euler angles of the face postures; the classification of all the face images in the candidate face image set according to the euler angles of the face postures specifically comprises the following steps:

step 2.1, using a dlib detection model to perform feature point detection on the face images in the candidate face image set, obtaining 6 key point coordinates of the face images, as shown in fig. 2, namely, coordinates of a left eye corner, a right eye corner, a nose tip, a left mouth corner, a right mouth corner and a lower jaw, then using an average face model to take the 6 key points as basic points of the 3D model, constructing a corresponding 3D model, then using a solvePnP function of OpenCV, calculating a rotation vector of the face according to the positions of the key points in the 3D model, and then calculating a Yaw angle Yaw and a Pitch angle Pitch of an euler angle by using the rotation vector;

step 2.2, selecting a face image with a yaw angle within +/-25 degrees and a pitch angle within +/-15 degrees as a candidate face image;

step 2.3, classifying the alternative face images, specifically:

uniformly dividing the yaw angle range from -25 degrees to 25 degrees into 5 intervals as the abscissa, uniformly dividing the pitch angle range from -15 degrees to 15 degrees into 3 intervals as the ordinate, and placing each face image in the grid cell matching its abscissa and ordinate values, forming 15 pose boxes; that is, the alternative face images are divided into 15 classes;

step 3, inputting a face image to be detected, using a dlib detection model to execute face detection to extract all faces, estimating the face postures of the face image to be detected, calculating the Yaw angle Yaw and the Pitch angle Pitch of all the faces in the face image to be detected, and aligning the face image in the candidate face image set and the face image to be detected to a common coordinate system;

step 4, comparing the face image to be detected with all face images in the candidate face image set to obtain an initial candidate face set; the method specifically comprises the following steps:

step 4.1, carrying out gender screening on the alternative face images: according to the gender of the face image to be detected, the face images of the same gender in the 15 pose boxes of the alternative face images are retained as the candidate face images of the next step;

step 4.2, performing age screening on all candidate face images obtained in step 4.1: according to the age interval of the face image to be detected, the faces in the same age interval among the candidate face images obtained in step 4.1 are retained as the candidate face images of the next step;

step 4.3, selecting, from the candidate face images obtained in step 4.2, the faces whose yaw angle and pitch angle each differ from those of the face image to be detected by no more than 3 degrees as the candidate face images of the next step;

step 4.4, selecting the face meeting the resolution requirement in the candidate face set in the step 4.3 as a candidate face image of the next step;

step 4.5, calculating the blur distance $d_B$ between each candidate face image obtained in step 4.4 and the face image to be detected, sorting the calculated blur distances $d_B$ in ascending order, and retaining the top 50% of the images as the candidate face images of the next step;

step 4.6, calculating the illumination distance $d_L$ between each candidate face image obtained in step 4.5 and the face image to be detected, sorting $d_L$ in ascending order, and retaining the face images ranked in the top 10% by illumination distance $d_L$ as the candidate faces of the next step.

The step 4.5 is specifically as follows:

step 4.5.1, normalizing the gray-level intensity of the eye region of each face image to zero mean and unit variance, specifically:

$$x^{*} = \frac{x - \bar{x}}{\sigma} \tag{1}$$

wherein $x$ is the gray-level intensity of a single pixel in the eye region of the candidate face image obtained in step 4.4 or of the face image to be detected, $\bar{x}$ is the mean gray-level intensity of that eye region, $\sigma$ is the standard deviation of the gray-level intensity of that eye region, and $x^{*}$ is the normalized gray-level intensity;

step 4.5.2, calculating the histograms $h^{(1)}$ and $h^{(2)}$ of the gradient magnitude of the normalized eye regions, and multiplying each histogram by a weighting function equal to the square of the histogram bin index, giving two weighted histograms $\tilde{h}^{(1)}$ and $\tilde{h}^{(2)}$, specifically:

$$\tilde{h}^{(i)}(n) = n^{2}\, h^{(i)}(n), \quad i = 1, 2 \tag{2}$$

wherein $n$ is the bin index of the histogram, $h^{(i)}$ is the histogram of the normalized eye-region gradient magnitude of the candidate face image or of the face image to be detected, and $\tilde{h}^{(i)}$ is the corresponding weighted histogram, with $i = 1$ denoting the candidate face image and $i = 2$ denoting the face image to be detected;

step 4.5.3, calculating the blur distance between the candidate face image and the face image to be detected as the histogram intersection distance (HID) between the two weighted histograms, wherein $d_B$ represents the blur distance between the two face images;

step 4.5.4, calculating the blur distance $d_B$ between every candidate face image and the face image to be detected according to steps 4.5.1 to 4.5.3, sorting the distances in ascending order, and retaining the top 50% of the images as the candidate face images of the next step.

The step 4.6 is specifically as follows:

step 4.6.1, representing the candidate face image and the face image to be detected obtained in the step 4.5 into a cylindrical average face shape by using a face re-marking method;

step 4.6.2, calculating the image intensity $\tilde{I}_c(x, y)$ of the face replacement region in each RGB color channel, specifically:

$$\tilde{I}_c(x, y) = \rho_c \sum_{k=1}^{9} a_{c,k}\, H_k(n(x, y)) \tag{4}$$

wherein $\tilde{I}_c(x, y)$ is the image intensity of the face replacement region in RGB color channel $c$; $n(x, y)$ is the surface normal at image location $(x, y)$; $\rho_c$ is the constant albedo of each of the three color channels; the coefficients $a_{c,k}$ describe the lighting conditions; and $H_k(n(x, y))$ are the spherical harmonic images;

step 4.6.3, creating an orthogonal basis $\psi_k(x, y)$ by applying Gram-Schmidt orthogonalization to the harmonic basis $H_k(n(x, y))$, so that $\tilde{I}_c(x, y)$ is expressed as:

$$\tilde{I}_c(x, y) = \rho_c \sum_{k=1}^{9} \beta_{c,k}\, \psi_k(x, y) \tag{5}$$

wherein $\beta_{c,k}$ are the illumination coefficients and $\psi_k(x, y)$ are the spherical harmonic images after Gram-Schmidt orthogonalization;

step 4.6.4, calculating, according to steps 4.6.1 to 4.6.3, the image intensities $\tilde{I}_c$ of the candidate face image obtained in step 4.5 and of the face image to be detected, denoted $\tilde{I}^{(1)}_c$ and $\tilde{I}^{(2)}_c$ respectively, and then calculating the illumination distance $d_L$ between the candidate face image and the face image to be detected, specifically:

$$d_L\left(I^{(1)}, I^{(2)}\right) = \left( \sum_{c \in \{R, G, B\}} \sum_{k=1}^{9} \left( \beta^{(1)}_{c,k} - \beta^{(2)}_{c,k} \right)^{2} \right)^{1/2} \tag{6}$$

wherein $\beta^{(1)}_{c,k}$ are the illumination coefficients of the candidate face image, $\beta^{(2)}_{c,k}$ are the illumination coefficients of the face image to be detected, and $d_L(I^{(1)}, I^{(2)})$ is the illumination distance between the candidate face image $I^{(1)}$ and the face image to be detected $I^{(2)}$;

step 4.6.5, calculating the illumination distance $d_L$ between every candidate face image and the face image to be detected, sorting the calculated distances $d_L$ in ascending order, and retaining the faces ranked in the top 10% by $d_L$ as the candidate face images of the next step.

Step 5, adjusting the illumination of all face images in the initial candidate face set according to the face image to be detected to obtain an adjusted candidate face set; the method specifically comprises the following steps:

using an image formula, the illumination of the face image to be detected $I^{(2)}$ in the replacement region is applied to the candidate face image $I^{(1)}$ obtained in step 4, giving the image intensity $\hat{I}^{(1)}_c$ of the candidate face image after application,

wherein $\tilde{I}^{(1)}_c$ is the image intensity of the face replacement region in each RGB color channel of the candidate face image $I^{(1)}$, calculated according to formula (5); $\tilde{I}^{(2)}_c$ is the image intensity of the face replacement region in each RGB color channel of the face image to be detected $I^{(2)}$, calculated according to formula (5); and $\hat{I}^{(1)}_c$ is the image intensity of the candidate face image $I^{(1)}$ after the illumination of the face image to be detected $I^{(2)}$ has been applied to it.

Step 6, calculating the Euclidean distances between all the candidate face images processed in step 5 and the face image to be detected, sorting the distances in ascending order, and replacing the face to be detected with the top-ranked candidate face to produce the output.

Examples

An automatic face replacement method based on an off-line face database is implemented according to the following steps:

Step 1, constructing a face database from the widely used high-definition CelebA-HQ face data set, which contains 30000 cropped and aligned high-definition face images with a resolution of 1024 x 1024. All face images in the database are mirrored in batch using a Python function, increasing the number of candidate faces to 60000.
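The batch mirroring described above can be sketched as follows. This is a minimal illustration in Python with NumPy, assuming each image is already loaded as an H x W (x C) array; the function names `mirror_image` and `augment_with_mirrors` are hypothetical and are not taken from the described implementation.

```python
import numpy as np

def mirror_image(image):
    """Return a horizontally mirrored copy of an H x W (x C) image array."""
    image = np.asarray(image)
    # Reversing the column axis flips the image left to right.
    return image[:, ::-1].copy()

def augment_with_mirrors(images):
    """Return the original images followed by their mirrored copies."""
    images = list(images)
    return images + [mirror_image(img) for img in images]
```

Applied to 30000 source images, such a routine yields a candidate set of 60000 images, matching the count stated above.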

Step 2, grouping the face data set obtained in step 1: the Euler angles are calculated from the face key points obtained with dlib, and each face is classified into one of 15 categories according to its Euler angles.

The pose estimation problem is also known as the PnP (Perspective-n-Point) problem; face pose estimation mainly obtains the angular information of the face orientation. The face pose is represented by three Euler angles (pitch, yaw, roll). First, a 3D face model with 6 key points (left eye corner, right eye corner, nose tip, left mouth corner, right mouth corner and chin) is defined; then the corresponding 6 face key points in the picture are detected with dlib; the rotation vector is solved with the solvePnP function of OpenCV; and finally the rotation vector is converted into Euler angles. Because all faces in the CelebA-HQ face data set are aligned, only the yaw and pitch angles are considered, and each face in the database is assigned to one of 15 pose boxes according to these two angles so that faces in different orientations can be replaced.

When the system is given a test image containing a face to be replaced, it performs face detection to extract the face, estimates the pose, and aligns the face to a common coordinate system. The system then searches the face database and selects possible candidate faces for replacement. Only candidate faces in the same pose box of the library are considered; this ensures that the replacement faces are relatively similar in pose, allowing the system to use simple 2D image compositing rather than a three-dimensional model-based approach that requires precise alignment.

Step 2.1, as shown in fig. 4, feature point detection is performed on the two-dimensional face images in the face database using dlib's 68-key-point detection model shape_predictor_68_face_landmarks.dat, obtaining 68 key points in a fixed index order.

Step 2.2, from the detection result of the previous step, selecting the points at the eye corners, nose tip, mouth corners and chin, and recording the index ids of the 6 required key points as known 2D coordinates: chin: 8; nose tip: 30; left eye corner: 36; right eye corner: 45; left mouth corner: 48; right mouth corner: 54.

Step 2.3, obtaining the 3D coordinate corresponding to each 2D coordinate point. In practical applications an accurate three-dimensional model of the face is neither needed nor available, so the coordinates of an average face model are used. The following coordinates of the 6 key points are generally used: nose tip: (0.0, 0.0, 0.0); chin: (0.0, -330.0, -65.0); left corner of the left eye: (-225.0, 170.0, -135.0); right corner of the right eye: (225.0, 170.0, -135.0); left mouth corner: (-150.0, -150.0, -125.0); right mouth corner: (150.0, -150.0, -125.0). These points are expressed in an arbitrary reference coordinate system, i.e., world coordinates.
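As an illustration only, the correspondence between the dlib landmark indices of step 2.2 and the average-face coordinates of step 2.3 can be held in two parallel arrays. The sketch below is in Python with NumPy; the names `LANDMARK_IDS`, `MODEL_POINTS` and `select_image_points` are hypothetical, and the ordering of the six points is an assumption chosen so that both arrays agree.

```python
import numpy as np

# Order used in both arrays (an assumption of this sketch): nose tip, chin,
# left eye corner, right eye corner, left mouth corner, right mouth corner.
LANDMARK_IDS = [30, 8, 36, 45, 48, 54]

# Average-face 3D coordinates from step 2.3, in the same order.
MODEL_POINTS = np.array([
    [0.0, 0.0, 0.0],           # nose tip
    [0.0, -330.0, -65.0],      # chin
    [-225.0, 170.0, -135.0],   # left corner of the left eye
    [225.0, 170.0, -135.0],    # right corner of the right eye
    [-150.0, -150.0, -125.0],  # left mouth corner
    [150.0, -150.0, -125.0],   # right mouth corner
], dtype=np.float64)

def select_image_points(landmarks_68):
    """Pick the 6 required 2D points from a (68, 2) array of landmarks."""
    landmarks_68 = np.asarray(landmarks_68, dtype=np.float64)
    if landmarks_68.shape != (68, 2):
        raise ValueError("expected a (68, 2) array of landmark coordinates")
    return landmarks_68[LANDMARK_IDS]
```

Keeping the 2D and 3D points in the same order matters because a PnP solver pairs them row by row.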

Step 2.4, solving the rotation vector with the solvePnP function of OpenCV. The output of solvePnP comprises a rotation vector and a translation vector. Only the rotation information is of interest here, so the subsequent steps operate mainly on the rotation vector.

Step 2.5, the rotation vector is one representation of the rotation of an object and is the representation commonly used by OpenCV. Because Euler angles are required, the rotation vector is converted into Euler angles: it is first converted into a quaternion, and the quaternion is then converted into Euler angles.
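The conversion from rotation vector to quaternion to Euler angles can be sketched as below. This is a hedged illustration, not the implementation of the method: it assumes the rotation vector is an axis-angle vector whose norm is the rotation angle in radians, and it assumes the convention that pitch is the rotation about the x axis, yaw about the y axis and roll about the z axis. The function names are hypothetical.

```python
import math
import numpy as np

def rotation_vector_to_quaternion(rvec):
    """Convert an axis-angle rotation vector to a unit quaternion (w, x, y, z)."""
    rvec = np.asarray(rvec, dtype=float).reshape(3)
    theta = float(np.linalg.norm(rvec))  # rotation angle in radians
    if theta < 1e-12:
        return 1.0, 0.0, 0.0, 0.0        # no rotation
    axis = rvec / theta
    s = math.sin(theta / 2.0)
    return (math.cos(theta / 2.0),
            float(axis[0] * s), float(axis[1] * s), float(axis[2] * s))

def quaternion_to_euler_degrees(w, x, y, z):
    """Return (pitch, yaw, roll) in degrees under the assumed axis convention."""
    pitch = math.atan2(2.0 * (w * x + y * z), 1.0 - 2.0 * (x * x + y * y))
    # Clamp to [-1, 1] so rounding errors cannot push asin out of its domain.
    sin_yaw = max(-1.0, min(1.0, 2.0 * (w * y - z * x)))
    yaw = math.asin(sin_yaw)
    roll = math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))
    return math.degrees(pitch), math.degrees(yaw), math.degrees(roll)
```

Under these assumptions, a rotation vector pointing along the y axis with a norm of 20 degrees (in radians) yields a yaw of 20 degrees and zero pitch and roll.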

Step 2.6, dividing the face database according to the yaw and pitch angles of the Euler angles. Since extreme poses are less reliable and may lead to unreliable face replacement, only images with a yaw angle within ±25° and a pitch angle within ±15° are selected as alternatives. The yaw and pitch angles are divided into intervals spanning 10°: the yaw angle from -25° to 25° gives 5 intervals, and the pitch angle from -15° to 15° gives 3 intervals. Combining yaw and pitch, the face database is classified by pose into 15 pose boxes, as shown in fig. 3.

Step 3, when an image to be detected is input, using dlib to perform face detection and extract all faces, estimating the pose of each face to be detected using dlib key point detection, calculating the Euler angles of the face, and aligning the face to be detected and the faces in the database to a common coordinate system.
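The assignment of a face to one of the 15 pose boxes can be sketched as a small function. The sketch assumes half-open 10° intervals, with the upper limits (25° and 15°) folded into the last interval; the text does not specify how boundary values are handled, and the name `pose_bin` is hypothetical.

```python
def pose_bin(yaw_deg, pitch_deg):
    """Return (yaw_index, pitch_index) for a face, or None if the pose is out of range.

    yaw_index runs over 5 intervals of 10 degrees from -25 to 25;
    pitch_index runs over 3 intervals of 10 degrees from -15 to 15.
    """
    if not (-25.0 <= yaw_deg <= 25.0 and -15.0 <= pitch_deg <= 15.0):
        return None  # extreme poses are excluded from the candidate set
    # The min() calls fold the upper limits into the last interval.
    yaw_index = min(int((yaw_deg + 25.0) // 10.0), 4)
    pitch_index = min(int((pitch_deg + 15.0) // 10.0), 2)
    return yaw_index, pitch_index
```

For example, a frontal face with yaw 0° and pitch 0° falls in the central box `(2, 1)`, while a face with a yaw of 30° is rejected.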

Step 4, comparing the yaw and pitch angles of the face to be detected with those of the faces in all pose boxes, and selecting candidate faces in the corresponding pose box. To produce a perceptually realistic replacement image, the pose of the face to be detected and the pose of the replacement face must be very similar, more similar than membership of the same pose box guarantees. Therefore, the yaw and pitch angles of a face selected from the corresponding pose box must each differ from those of the face to be detected by no more than 3 degrees. In addition, the system requires that the selected candidate face be similar to the face to be detected in gender, age, image quality, lighting and color. The attributes used to describe the similarity of face appearance in images are mainly gender, age, resolution, blur level, lighting and color. These attributes and the corresponding criteria for selecting the set of candidate faces are described below.

Step 4.1, for the sake of realism after face replacement, the genders of the face to be detected and the candidate face must be consistent, so gender screening is applied to the candidate faces. For example, if a male face is detected in the image to be detected, male faces are searched for in the corresponding pose box of the face database and form the candidate face set of the next step.

Step 4.2, so that the replaced face blends well with the non-face part of the original image, the age of the candidate faces is restricted. Age is divided into 5 intervals: 0-10, 10-20, 20-40, 40-60 and 60-80 years old. The age interval of the detected face is determined, and the faces in the candidate face set of step 4.1 that fall in the same interval form the next candidate face set.

Step 4.3, to ensure that the poses of the face to be detected and the replacement face are very similar, a finer pose selection is applied to the candidate face set after the age screening of step 4.2: the faces whose yaw and pitch angles each differ from those of the face to be detected by no more than 3 degrees are selected as the candidate face set of the next step.

Step 4.4, ensuring that the resolution of the candidate face is consistent with that of the original image. A significant difference in resolution results in a visible mismatch between the inner and outer regions of the face after replacement. The resolution of a face image is defined as the distance between the left corner of the left eye and the right corner of the right eye. Since high-resolution images can always be downsampled, only a lower limit on the resolution of the candidate faces is needed. Therefore, the faces in the candidate face set of step 4.3 whose eye distance is at least 80% of the eye distance of the face in the image to be detected are selected as the candidate face set of the next step.
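Steps 4.1 to 4.4 amount to a chain of attribute filters, which can be sketched as follows. The sketch assumes that gender, age, pose angles and eye distance have already been estimated for every face (the text does not say how gender and age are obtained), and it treats the age intervals as half-open so that boundary ages fall in exactly one interval. The class `FaceRecord` and the function names are hypothetical.

```python
from dataclasses import dataclass

AGE_EDGES = [0, 10, 20, 40, 60, 80]  # interval boundaries from step 4.2

@dataclass
class FaceRecord:
    gender: str
    age: float
    yaw: float           # degrees
    pitch: float         # degrees
    eye_distance: float  # pixels between the outer eye corners

def age_interval(age):
    """Return the index of the age interval containing `age`, or None if outside 0-80."""
    for i in range(len(AGE_EDGES) - 1):
        if AGE_EDGES[i] <= age < AGE_EDGES[i + 1]:
            return i
    return None

def filter_candidates(query, candidates, max_angle_diff=3.0, min_resolution_ratio=0.8):
    """Keep candidates matching the query in gender, age interval, pose and resolution."""
    query_interval = age_interval(query.age)
    kept = []
    for cand in candidates:
        if cand.gender != query.gender:
            continue  # step 4.1: gender screening
        if query_interval is None or age_interval(cand.age) != query_interval:
            continue  # step 4.2: age screening
        if (abs(cand.yaw - query.yaw) > max_angle_diff
                or abs(cand.pitch - query.pitch) > max_angle_diff):
            continue  # step 4.3: pose within 3 degrees
        if cand.eye_distance < min_resolution_ratio * query.eye_distance:
            continue  # step 4.4: resolution lower limit
        kept.append(cand)
    return kept
```

Running the cheap attribute checks first keeps the later blur and illumination comparisons limited to a much smaller set of faces.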

Step 4.5, to ensure that the degree of blur of the candidate face is consistent with that of the face to be detected, a simple heuristic measure is used to compare the degree of blur in the two images. Step 4.5 is specifically as follows:

Step 4.5.1, the gray-level intensity of the eye region of each aligned face image is normalized to zero mean and unit variance, as shown in equation (1):

$$x^{*} = \frac{x - \bar{x}}{\sigma} \tag{1}$$

wherein $x$ is the gray-level intensity of a single pixel in the eye region of the face to be detected, $\bar{x}$ is the mean gray-level intensity of the eye region of the face to be detected, $\sigma$ is the standard deviation of the gray-level intensity of the eye region of the face to be detected, and $x^{*}$ is the normalized gray-level intensity.

Step 4.5.2, the histograms $h^{(1)}$ and $h^{(2)}$ of the gradient magnitude of the normalized eye regions are calculated, and each histogram is multiplied by a weighting function equal to the square of the histogram bin index, as shown in equation (2):

$$\tilde{h}^{(i)}(n) = n^{2}\, h^{(i)}(n), \quad i = 1, 2 \tag{2}$$

wherein $n$ is the bin index of the histogram, $i = 1$ denotes the candidate face image, $i = 2$ denotes the image to be detected, and $h^{(i)}$ is the histogram of the normalized eye-region gradient magnitude of the respective image.

Step 4.5.3, the blur distance is calculated as the histogram intersection distance (HID) between the two weighted histograms $\tilde{h}^{(1)}$ and $\tilde{h}^{(2)}$, as given by equation (3), wherein $d_B$ represents the blur distance between the two face images, and $\tilde{h}^{(1)}$ and $\tilde{h}^{(2)}$ represent the weighted histograms of the two images. The blur distances $d_B$ are sorted in ascending order, and the top 50% of the images are retained as the candidate face images of the next step.
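Steps 4.5.1 to 4.5.3 can be sketched in Python with NumPy as below. Several details are assumptions of this sketch and are not stated in the text: the number of histogram bins, the histogram range, bin indices starting at 1, the use of central differences for the gradient, and the particular form of the histogram intersection distance (one minus the intersection normalized by the smaller histogram mass). The function names are hypothetical.

```python
import numpy as np

def weighted_gradient_histogram(eye_region, bins=32, max_magnitude=4.0):
    """Histogram of gradient magnitude of the normalized eye region, weighted by n**2."""
    x = np.asarray(eye_region, dtype=float)
    std = x.std()
    # Step 4.5.1: zero mean, unit variance (a flat region maps to all zeros).
    x_norm = (x - x.mean()) / std if std > 0 else np.zeros_like(x)
    # Step 4.5.2: gradient magnitude and its histogram.
    gy, gx = np.gradient(x_norm)
    magnitude = np.hypot(gx, gy)
    hist, _ = np.histogram(magnitude, bins=bins, range=(0.0, max_magnitude))
    n = np.arange(1, bins + 1, dtype=float)  # assumed 1-based bin index
    return n ** 2 * hist

def blur_distance(eye_a, eye_b, bins=32):
    """Assumed form of the histogram intersection distance between two eye regions."""
    h1 = weighted_gradient_histogram(eye_a, bins)
    h2 = weighted_gradient_histogram(eye_b, bins)
    denom = min(h1.sum(), h2.sum())
    if denom == 0:
        return 0.0 if h1.sum() == h2.sum() else 1.0
    return float(1.0 - np.minimum(h1, h2).sum() / denom)
```

With this form the distance is 0 for identical regions and lies between 0 and 1 otherwise; the squared bin index gives more weight to large gradients, which are the ones that disappear when an image is blurred.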

Step 4.6, ensuring that the illumination of the candidate face is substantially consistent with that of the face to be detected. The illumination and average color in the replacement region are calculated for each face in the candidate face set of step 4.5, and, given the face to be detected, the faces whose illumination and color are similar to those of the face to be detected are selected as the candidate face set of the next step.

The step 4.6 is specifically as follows:

step 4.6.1, the human face re-labeling method is used to represent the face shape as a cylinder-like "average face shape".

Step 4.6.2, a simple orthographic projection is used to define the mapping from the surface to the face. The image intensity $I_c(x, y)$ of the face replacement region in each RGB color channel can be approximated by $\tilde{I}_c(x, y)$ using a linear combination of 9 spherical harmonics, as shown in equation (4):

$$\tilde{I}_c(x, y) = \rho_c \sum_{k=1}^{9} a_{c,k}\, H_k(n(x, y)) \tag{4}$$

wherein $\tilde{I}_c(x, y)$ is the approximate image intensity of the face replacement region in each RGB color channel, $n(x, y)$ is the surface normal at image location $(x, y)$, $\rho_c$ is the constant albedo of each of the three color channels (representing the average color within the replacement region), the coefficients $a_{c,k}$ describe the lighting conditions, and $H_k(n)$ are the spherical harmonic images.

Step 4.6.3, an orthogonal basis $\psi_k(x, y)$ is created by applying Gram-Schmidt orthogonalization to the harmonic basis $H_k(n)$, and the approximate image intensity $\tilde{I}_c(x, y)$ can be expanded in this orthogonal basis:

$$\tilde{I}_c(x, y) = \rho_c \sum_{k=1}^{9} \beta_{c,k}\, \psi_k(x, y) \tag{5}$$

wherein $\beta_{c,k}$ are the illumination coefficients and $\psi_k(x, y)$ are the spherical harmonic images after Gram-Schmidt orthogonalization. The other parameters are the same as in equation (4).
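The orthogonalization and the fitting of coefficients can be sketched as follows. The sketch assumes the harmonic images are already available as the columns of a matrix with one row per pixel of the replacement region, and it returns the combined products $\rho_c \beta_{c,k}$, since the text does not state how the albedo is factored out. The function names are hypothetical.

```python
import numpy as np

def gram_schmidt(harmonic_images):
    """Orthonormalize the columns of a (num_pixels, K) matrix of harmonic images."""
    H = np.asarray(harmonic_images, dtype=float)
    psi = np.zeros_like(H)
    for k in range(H.shape[1]):
        v = H[:, k].copy()
        # Remove the components along the basis vectors found so far.
        for j in range(k):
            v -= (psi[:, j] @ v) * psi[:, j]
        norm = np.linalg.norm(v)
        if norm < 1e-12:
            raise ValueError("harmonic images are linearly dependent")
        psi[:, k] = v / norm
    return psi

def fit_illumination_coefficients(psi, intensities):
    """Project per-channel intensities (num_pixels, 3) onto the orthonormal basis.

    Returns a (3, K) array whose entry [c, k] is the product rho_c * beta_{c,k}.
    """
    return (psi.T @ np.asarray(intensities, dtype=float)).T
```

Because the basis is orthonormal, each coefficient is a simple inner product and no linear system has to be solved per image.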

Step 4.6.4, the RGB albedo is converted to the HSV color space. For the comparison of illumination, the illumination distance $d_L$ is defined as the $L_2$ (Euclidean) distance between the corresponding illumination coefficients:

$$d_L\left(I^{(1)}, I^{(2)}\right) = \left( \sum_{c \in \{R, G, B\}} \sum_{k=1}^{9} \left( \beta^{(1)}_{c,k} - \beta^{(2)}_{c,k} \right)^{2} \right)^{1/2} \tag{6}$$

wherein $d_L$ is the illumination distance between the two images, and $\beta^{(1)}_{c,k}$ and $\beta^{(2)}_{c,k}$ are the illumination coefficients of the candidate face image and of the image to be detected. The distances $d_L$ are sorted in ascending order, and the faces ranked in the top 10% by $d_L$ are retained as the candidate face images of the next step.

Selecting candidate faces from the library using the appearance attributes described above is a nearest-neighbor search problem. To speed it up, a sequential selection method is used. Given a query face, candidates whose pose, gender, age and resolution are close to those of the face to be detected are selected first. This step reduces the number of potential replacement candidates from 60000 to a few thousand. Next, the blur distance $d_B$ and then the illumination distance $d_L$ are used to further reduce the candidate face set, and finally the top 50 faces are selected as the candidate face set of the next step.
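The illumination distance and the retention of the closest fraction of candidates can be sketched as below. The sketch assumes the illumination coefficients of each image are stored as a (3, 9) array, and it rounds the retained count up so that at least one candidate survives; the rounding rule is not given in the text. The function names are hypothetical.

```python
import math
import numpy as np

def illumination_distance(beta_a, beta_b):
    """L2 distance between two arrays of illumination coefficients."""
    diff = np.asarray(beta_a, dtype=float) - np.asarray(beta_b, dtype=float)
    return float(np.linalg.norm(diff))

def keep_closest_fraction(distances, fraction):
    """Return the indices of the smallest distances, keeping the given fraction."""
    order = np.argsort(np.asarray(distances, dtype=float), kind="stable")
    # Round up so that a non-empty candidate set never becomes empty.
    keep = max(1, math.ceil(len(order) * fraction))
    return order[:keep].tolist()
```

The same helper serves both the 50% cut on the blur distance and the 10% cut on the illumination distance.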

Step 5, adjusting the illumination of all face images in the initial candidate face set according to the face image to be detected to obtain an adjusted candidate face set; the method specifically comprises the following steps:

using an image formula, the illumination of the face image to be detected $I^{(2)}$ in the replacement region is applied to the candidate face image $I^{(1)}$ obtained in step 4, giving the image intensity $\hat{I}^{(1)}_c$ of the candidate face image after application,

wherein $\tilde{I}^{(1)}_c$ is the image intensity of the face replacement region in each RGB color channel of the candidate face image $I^{(1)}$, calculated according to formula (5); $\tilde{I}^{(2)}_c$ is the image intensity of the face replacement region in each RGB color channel of the face image to be detected $I^{(2)}$, calculated according to formula (5); and $\hat{I}^{(1)}_c$ is the image intensity of the candidate face image $I^{(1)}$ after the illumination of the face image to be detected $I^{(2)}$ has been applied to it.

Step 6, calculating the Euclidean distances between all the candidate face images processed in step 5 and the face image to be detected, sorting the distances in ascending order, and replacing the face to be detected with the top-ranked candidate face to produce the output.
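The final ranking of step 6 can be sketched as follows. The text does not say which representation the Euclidean distance is computed on; this sketch assumes it is taken over the pixel values of aligned images of identical size, which is only one possible reading. The function name is hypothetical.

```python
import numpy as np

def rank_by_euclidean_distance(query_image, candidate_images):
    """Return (order, distances): candidate indices sorted by ascending distance.

    All images are assumed to be aligned arrays of the same shape.
    """
    q = np.asarray(query_image, dtype=float).ravel()
    distances = [
        float(np.linalg.norm(np.asarray(c, dtype=float).ravel() - q))
        for c in candidate_images
    ]
    order = sorted(range(len(distances)), key=distances.__getitem__)
    return order, distances
```

The first index in `order` identifies the candidate that would be used for the replacement.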