Disclosure of Invention

The embodiment of the application provides an image identification method and device, which can achieve a better neural network acceleration effect on the basis of ensuring the accuracy of an image classification result and greatly improve the operation efficiency of a system.

The application provides an image recognition method, which can comprise the following steps:

acquiring an image to be identified;

randomly cutting out image blocks with a preset image size from the image to be recognized;

inputting the image blocks into a pre-trained neural network classification model to obtain classification results of the image blocks; the classification result refers to that the image blocks are classified into one or more preset image types;

determining a classification confidence according to the classification result; the classification confidence refers to the probability that the image block is classified into each image type;

determining whether the current classification result is used as the final image recognition result of the corresponding image to be recognized or not according to the classification confidence; and when the current classification result cannot be used as the final image recognition result, obtaining a next image block again according to the feature map and a pre-established and trained positioning strategy network in an iterative calculation mode, and obtaining a next classification confidence coefficient according to the next image block until the current classification result is determined to be used as the final image recognition result of the corresponding image to be recognized according to the obtained classification confidence coefficient.

In an exemplary embodiment of the present application, the neural network classification model may include: a feature extraction network and a full connectivity layer;

the inputting the image block into a pre-trained neural network classification model, and obtaining the classification result of the image block may include:

inputting the image blocks into a pre-established and trained feature extraction network to obtain a feature map, inputting the feature map into a pre-established and trained full-connection layer, and obtaining the classification results of the image blocks.

In an exemplary embodiment of the present application, the determining whether to use the current classification result as the final image recognition result of the corresponding image to be recognized according to the classification confidence may include:

when the classification confidence coefficient is larger than or equal to a preset threshold value, determining that the current classification result is used as a final image recognition result of the image to be recognized;

and when the classification confidence is smaller than the preset threshold, determining that the current classification result cannot be used as the final image recognition result of the image to be recognized.
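As an illustration of the threshold rule above, the following minimal Python sketch assumes that the classification result is a vector of raw scores normalized with a softmax, and that the largest probability is taken as the classification confidence. The function names `softmax` and `is_final` and the threshold value 0.7 are hypothetical and are not part of the application.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in scores]
    total = sum(exps)
    return [e / total for e in exps]


def is_final(probabilities, eta):
    """Return (accept, predicted_class_index, confidence) for one image block."""
    confidence = max(probabilities)
    predicted = probabilities.index(confidence)
    # Accept when the confidence reaches the preset threshold eta.
    return confidence >= eta, predicted, confidence


probs = softmax([2.0, 0.5, 0.1])
accept, label, conf = is_final(probs, eta=0.7)
```

With a stricter threshold the same block would be rejected, and the method would go on to crop another image block.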

In an exemplary embodiment of the present application, the obtaining a next image block again according to the feature map and a pre-established and trained positioning policy network in an iterative computation manner, and obtaining a next classification confidence according to the next image block until determining, according to the obtained classification confidence, that a current classification result is used as a final image recognition result of a corresponding image to be recognized may include:

41. inputting the feature map obtained last time into a pre-established positioning strategy network, and obtaining the position normalization coordinates of the image block to be cut in the next step; cutting the next image block according to the position normalized coordinates of the image blocks;

42. inputting the image blocks into a pre-established and trained feature extraction network to obtain a feature map, inputting the feature map into a pre-established and trained full-connection layer, and obtaining classification results of the image blocks; determining a classification confidence according to the classification result;

43. determining whether the current classification result is used as a final image recognition result or not according to the classification confidence; if yes, go to step 44; if not, returning to the step 41;

44. and outputting the current classification result.

In an exemplary embodiment of the present application, the method may further include: when the classification confidence coefficient obtained after iteration circulation is performed for N times is still smaller than the preset threshold value, taking the classification result obtained for the Nth time as the final image recognition result; n is a positive integer and is a preset iteration threshold.

In an exemplary embodiment of the present application, the feature extraction network may include: a plurality of function layers arranged according to the ResNet (residual neural network) rule or the DenseNet (densely connected neural network) rule; and/or,

the positioning policy network may include: a plurality of convolutional layers and a fully-connected layer, the convolutional layers and the fully-connected layer being sequentially arranged.

In an exemplary embodiment of the present application, the method may further include: training the parameter $\Theta_g$ of the feature extraction network and the parameter $\Theta_m$ of the fully connected layer according to the following first calculation formula:

$$\Theta_g^{*}, \Theta_m^{*} = \arg\min_{\Theta_g, \Theta_m} \sum_{i} -\log\left[ m\left(g(x_i, \Theta_g), \Theta_m\right)_{y_i} \right]$$

wherein $\log[\cdot]$ denotes the logarithm function; $\arg\min_{\Theta_g, \Theta_m}$ denotes the values of $\Theta_g$ and $\Theta_m$ at which the function attains its minimum; $g(x_i, \Theta_g)$ denotes the feature map obtained by inputting an arbitrary $i$-th image $x_i$ into the feature extraction network $g(x, \Theta_g)$ with parameter $\Theta_g$; $m\left(g(x_i, \Theta_g), \Theta_m\right)_{y_i}$ denotes the $y_i$-th element of the classification result $m\left(g(x_i, \Theta_g), \Theta_m\right)$ corresponding to the image $x_i$; $y_i$ is the category label defined for the image $x_i$; $\Theta_g^{*}, \Theta_m^{*}$ denote the finally obtained optimized parameters; and $i$ is a positive integer.

In an exemplary embodiment of the present application, training the positioning policy network may include:

acquiring image data required for training to form a training set, and marking each image $x_i$ in the training set with a corresponding category label $y_i$;

obtaining, by iterative calculation, a classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$ of the image $x_i$ according to the training set, the pre-established and trained feature extraction network, and the pre-established and trained fully connected layer;

calculating, according to the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$, the confidence increment $\Delta s_{i,t+1}$ between two adjacent iterations, wherein $\Delta s_{i,t+1} = s_{i,t+1} - s_{i,t}$;

training the parameters of the positioning strategy network according to the confidence increment $\Delta s_{i,t+1}$ and a preset second calculation formula.

In an exemplary embodiment of the present application, obtaining, by iterative calculation, the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$ of the image $x_i$ according to the training set, the pre-established and trained feature extraction network, and the pre-established and trained fully connected layer may include:

81. randomly cropping an image block of a preset image size from an image $x_i$ in the training set, wherein $i$ refers to the $i$-th image and is a positive integer not greater than the total number of images in the training set; $j$ refers to the $j$-th iteration and is an integer; $j \le N$, and $N$ is a preset iteration threshold;

82. inputting the image block into the pre-established and trained feature extraction network to obtain a feature map $f_{i,j}$, and inputting the feature map $f_{i,j}$ into the pre-established and trained fully connected layer to obtain the classification result $c_{i,j}$ of the image block; determining a classification confidence $s_{i,j}$ according to the classification result $c_{i,j}$;

83. detecting whether $j$ is equal to $N$; when $j = N$, obtaining the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$; when $j \ne N$, proceeding to step 84;

84. inputting the feature map $f_{i,j}$ obtained in the previous step into the positioning strategy network $p$ to obtain the position normalized coordinates of the image block required for the $(j+1)$-th iteration; cropping, from the original image $x_i$ according to the position normalized coordinates, the image block to be processed in the $(j+1)$-th iteration; replacing the current image block with the newly cropped image block, and returning to step 82 for the $(j+1)$-th iteration.
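The collection of a classification confidence sequence for one training image can be sketched as follows. This is only an illustrative outline: `classify`, `locate` and `crop` are hypothetical callables standing in for the trained feature extraction network plus full connection layer, the positioning strategy network, and the cropping operation, and the confidence is assumed to be the probability assigned to the true label.

```python
def confidence_sequence(image, label, first_block, classify, locate, crop, num_iters):
    """Return [s_0, ..., s_N] for one training image; label is 1-based.

    classify(block) -> (feature_map, probabilities)
    locate(feature_map) -> normalized (h, w) of the next block
    crop(image, h, w) -> image block
    """
    block = first_block
    scores = []
    for j in range(num_iters + 1):           # iterations j = 0 .. N
        features, probs = classify(block)
        scores.append(probs[label - 1])      # confidence of the true class
        if j == num_iters:                   # j == N: the sequence is complete
            break
        h, w = locate(features)              # where to look in iteration j + 1
        block = crop(image, h, w)
    return scores


# Toy stand-ins: a "block" is a number that acts as its own confidence.
def toy_classify(block):
    return block, [block, 1.0 - block]


def toy_locate(features):
    return min(features + 0.1, 1.0), 0.0


def toy_crop(image, h, w):
    return h


scores = confidence_sequence(None, 1, 0.5, toy_classify, toy_locate, toy_crop, num_iters=3)
```

The toy functions only exercise the control flow; in practice the block would be an image tensor and the callables would wrap the trained networks.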

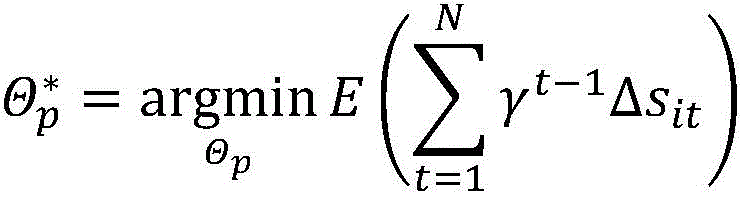

In an exemplary embodiment of the present application, the second calculation formula may include:

$$\Theta_p^{*} = \arg\min_{\Theta_p} \mathbb{E}\left[ -\sum_{t=1}^{N} \gamma^{t-1} \Delta s_{it} \right]$$

wherein $\Theta_p$ denotes the parameter of the policy network $p$; $\arg\min_{\Theta_p}$ denotes finding the value of $\Theta_p$ that minimizes the function; $\mathbb{E}(\cdot)$ denotes the mathematical expectation operation; $\gamma$ is a predefined discount rate parameter between 0 and 1; $t$ is an integer, $t \le N$, and $N$ is a preset iteration threshold; $\Delta s_{it} = s_{it} - s_{i(t-1)}$ is the difference between the $t$-th classification confidence and the $(t-1)$-th classification confidence in the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$ corresponding to the image $x_i$; and $\Theta_p^{*}$ denotes the resulting policy network parameters.

The embodiment of the application also provides an image recognition device, which may include a processor and a computer-readable storage medium, where instructions are stored in the computer-readable storage medium, and when the instructions are executed by the processor, the image recognition device implements the image recognition method described in any one of the above items.

Compared with the related art, the embodiment of the application can comprise the following steps: acquiring an image to be identified; randomly cutting out image blocks with a preset image size from the image to be recognized; inputting the image blocks into a pre-trained neural network classification model to obtain classification results of the image blocks; the classification result refers to that the image blocks are classified into one or more preset image types; determining a classification confidence according to the classification result; the classification confidence refers to the probability that the image block is classified into each image type; determining whether the current classification result is used as the final image recognition result of the corresponding image to be recognized or not according to the classification confidence; and when the current classification result cannot be used as the final image recognition result, obtaining a next image block again according to the feature map and a pre-established and trained positioning strategy network in an iterative calculation mode, and obtaining a next classification confidence coefficient according to the next image block until the current classification result is determined to be used as the final image recognition result of the corresponding image to be recognized according to the obtained classification confidence coefficient. Through the scheme of the embodiment, a better neural network acceleration effect is achieved on the basis of ensuring the accuracy of the image classification result, and the operation efficiency of the system is greatly improved.

Additional features and advantages of the application will be set forth in the description which follows, and in part will be obvious from the description, or may be learned by the practice of the application. Other advantages of the present application may be realized and attained by the instrumentalities and combinations particularly pointed out in the specification and the drawings.

Detailed Description

The present application describes embodiments, but the description is illustrative rather than limiting and it will be apparent to those of ordinary skill in the art that many more embodiments and implementations are possible within the scope of the embodiments described herein. Although many possible combinations of features are shown in the drawings and discussed in the detailed description, many other combinations of the disclosed features are possible. Any feature or element of any embodiment may be used in combination with or instead of any other feature or element in any other embodiment, unless expressly limited otherwise.

The present application includes and contemplates combinations of features and elements known to those of ordinary skill in the art. The embodiments, features and elements disclosed in this application may also be combined with any conventional features or elements to form a unique inventive concept as defined by the claims. Any feature or element of any embodiment may also be combined with features or elements from other inventive aspects to form yet another unique inventive aspect, as defined by the claims. Thus, it should be understood that any of the features shown and/or discussed in this application may be implemented alone or in any suitable combination. Accordingly, the embodiments are not limited except as by the appended claims and their equivalents. Furthermore, various modifications and changes may be made within the scope of the appended claims.

Further, in describing representative embodiments, the specification may have presented the method and/or process as a particular sequence of steps. However, to the extent that the method or process does not rely on the particular order of steps set forth herein, the method or process should not be limited to the particular sequence of steps described. Other orders of steps are possible as will be understood by those of ordinary skill in the art. Therefore, the particular order of the steps set forth in the specification should not be construed as limitations on the claims. Further, the claims directed to the method and/or process should not be limited to the performance of their steps in the order written, and one skilled in the art can readily appreciate that the sequences may be varied and still remain within the spirit and scope of the embodiments of the present application.

The present application provides an image recognition method, as shown in fig. 1, the method may include steps S101-S105:

S101, acquiring an image to be identified;

S102, randomly cutting out image blocks with preset image sizes from the image to be recognized;

S103, inputting the image blocks into a pre-trained neural network classification model to obtain classification results of the image blocks; the classification result refers to that the image blocks are classified into one or more preset image types;

S104, determining a classification confidence according to the classification result; the classification confidence refers to the probability that the image block is classified into each image type;

S105, determining whether the current classification result is used as the final image recognition result of the corresponding image to be recognized according to the classification confidence; and when the current classification result cannot be used as the final image recognition result, obtaining a next image block again according to the feature map and a pre-established and trained positioning strategy network in an iterative calculation mode, and obtaining a next classification confidence according to the next image block until the current classification result is determined to be used as the final image recognition result of the corresponding image to be recognized according to the obtained classification confidence.

In an exemplary embodiment of the application, a neural network acceleration method based on a visual attention mechanism is provided, which may include inputting an original image to be recognized into a neural network (which may include a neural network classification model and a positioning policy network) after being randomly cropped, extracting image features, and generating a classification result and a classification confidence according to the image features; determining whether the current classification result is used as a final image recognition result or not according to the classification confidence, if the current classification result cannot be used as the final image recognition result, determining the central position of the next cut image according to the feature map of the image, and iteratively generating a classification result and a classification confidence until a high-confidence classification result is obtained; finally, the neural network is deployed for image automatic recognition.

In the exemplary embodiment of the application, the scheme of the embodiment of the application effectively solves the problems of large calculation amount, high test time delay, difficulty in efficient deployment on mobile and embedded devices with limited resources and the like of an automatic image classification method based on deep learning, so that a neural network can obtain a correct image classification result with smaller calculation amount and lower time delay, the inference process of the neural network on a mobile platform is accelerated, and the operation efficiency of a system is greatly improved. Compared with the traditional self-adaptive reasoning method, the method can obtain better acceleration effect, does not modify the structure of the neural network, and has wider applicability.

In an exemplary embodiment of the present application, the neural network classification model may include: a feature extraction network and a full connectivity layer;

the inputting the image block into a pre-trained neural network classification model, and obtaining the classification result of the image block may include:

inputting the image blocks into a pre-established and trained feature extraction network to obtain a feature map, inputting the feature map into a pre-established and trained full-connection layer, and obtaining the classification results of the image blocks.

In an exemplary embodiment of the present application, the obtaining a next image block again according to the feature map and a pre-established and trained positioning policy network in an iterative computation manner, and obtaining a next classification confidence according to the next image block until determining that the current classification result is used as a final image recognition result of the corresponding image to be recognized according to the obtained classification confidence may include steps A1-D1:

A1, inputting the feature map obtained last time into a pre-established positioning strategy network, and obtaining the position normalized coordinates of the image block to be cut in the next step; cutting the next image block according to the position normalized coordinates of the image block;

B1, inputting the image block into a pre-established and trained feature extraction network to obtain a feature map, and inputting the feature map into a pre-established and trained full connection layer to obtain the classification result of the image block; determining a classification confidence according to the classification result;

C1, determining whether the current classification result is used as a final image recognition result according to the classification confidence; if yes, going to step D1; if not, returning to step A1;

D1, outputting the current classification result.

In an exemplary embodiment of the present application, the determining whether to use the current classification result as the final image recognition result of the corresponding image to be recognized according to the classification confidence may include:

when the classification confidence coefficient is larger than or equal to a preset threshold value, determining that the current classification result is used as a final image recognition result of the image to be recognized;

and when the classification confidence is smaller than the preset threshold, determining that the current classification result cannot be used as the final image recognition result of the image to be recognized.

In an exemplary embodiment of the present application, based on the above embodiment scheme, the detailed image automatic identification method may include:

1. For each test image $x$, randomly crop an image block of size $H' \times W'$, and obtain a feature map and a classification result $c_0$ through the feature extraction network $g$ and the full connection layer $m$. Let the classification confidence be $s_0 = \max c_0$, that is, the largest element of $c_0$. When $s_0 \ge \eta$, directly output $c_0$ as the recognition result, wherein $\eta$ is a preset threshold that takes a value between 0 and 1.

2. When $s_i < \eta$, wherein $i = 0, 1, \ldots, N-1$, obtain the position of the image block to be processed in step $i+1$ through the positioning strategy network $p$, crop out that image block, and obtain a classification result $c_{i+1}$ and a classification confidence $s_{i+1}$ through the feature extraction network $g$ and the full connection layer $m$. When $s_{i+1} \ge \eta$, output $c_{i+1}$ as the recognition result; otherwise, repeat step 2.

In an exemplary embodiment of the present application, the method may further include: when the classification confidence coefficient obtained after iteration circulation is performed for N times is still smaller than the preset threshold value, taking the classification result obtained for the Nth time as the final image recognition result; n is a positive integer and is a preset iteration threshold.

In an exemplary embodiment of the present application, when the classification confidence $s_N$ obtained at the $N$-th iteration satisfies $s_N < \eta$, $c_N$ is output as the recognition result.
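The recognition procedure described above, including the cap of N iterations, can be sketched in Python as follows. The callables `classify`, `locate` and `crop` are hypothetical placeholders for the trained networks and the cropping operation, and the toy functions exist only to exercise the control flow; none of these names come from the application.

```python
def recognize(image, first_block, classify, locate, crop, eta, max_iters):
    """Early-exit recognition loop.

    classify(block) -> (feature_map, probabilities)
    locate(feature_map) -> normalized (h, w) of the next block
    crop(image, h, w) -> image block
    Returns (predicted_class_index, confidence, step_at_which_it_stopped).
    """
    block = first_block
    for step in range(max_iters + 1):        # steps 0 .. N
        features, probs = classify(block)
        confidence = max(probs)
        # Stop when confident enough, or when the iteration cap N is reached.
        if confidence >= eta or step == max_iters:
            return probs.index(confidence), confidence, step
        h, w = locate(features)
        block = crop(image, h, w)


# Toy stand-ins: a "block" is a number that acts as its own confidence.
def toy_classify(block):
    return block, [block, 1.0 - block]


def toy_locate(features):
    return min(features + 0.2, 1.0), 0.0     # pretend each step finds a better region


def toy_crop(image, h, w):
    return h


label, conf, steps = recognize(None, 0.5, toy_classify, toy_locate, toy_crop,
                               eta=0.8, max_iters=5)
```

Easy images exit after the first block, so the average cost per image depends on how often the threshold is reached early.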

In the exemplary embodiment of the present application, before the image recognition by the above embodiment scheme, a neural network (a feature extraction network, a full connection layer, and a positioning strategy network) for classification may be constructed and trained in advance.

In an exemplary embodiment of the present application, image data required for training may first be acquired as a training set, wherein each image in the training set is denoted $x_i$; $x_i$ may be a three-dimensional matrix of size $A \times H \times W$, each element representing a pixel value of the image, $A$ representing the number of channels of the image, and $H$ and $W$ representing the height and width of the image, respectively; each image $x_i$ corresponds to a category label $y_i$, and $y_i$ is an integer with a value between 1 and $K$ (assuming that there are $K$ classification categories in total, namely $K$ possible recognition results) that marks the category to which $x_i$ belongs; the label $y_i$ may be given by manual labeling.

In an exemplary embodiment of the present application, a feature extraction network g and a full connectivity layer m may be established.

In an exemplary embodiment of the present application, the feature extraction network may include: a plurality of function layers arranged according to the ResNet (residual neural network) rule or the DenseNet (densely connected neural network) rule. The parameter of the feature extraction network may be denoted $\Theta_g$, and $f = g(x, \Theta_g)$ denotes the feature map obtained by inputting the image $x$ into the neural network with parameter $\Theta_g$. The feature map $f$ is a three-dimensional matrix of size $A_f \times H_f \times W_f$, wherein $A_f$ is the number of channels of the feature map, and $H_f$ and $W_f$ are the height and width of the feature map, respectively.

In an exemplary embodiment of the present application, the parameter of the full connection layer may be denoted $\Theta_m$, and $c = m(f, \Theta_m)$ denotes the output obtained by inputting the feature map $f$ into the full connection layer with parameter $\Theta_m$. The output $c$ is a $K \times 1$ vector in which each element takes a value between 0 and 1, and $K$ is the total number of classification categories defined above.

In an exemplary embodiment of the present application, an image block of size $H' \times W'$ may be randomly cropped from an image $x_i$ in the training set (the dimension of which may be $A \times H \times W$ as defined above), wherein $H' < H$ and $W' < W$; that is, random integers $h_{i0}$ and $w_{i0}$ are generated from the intervals $[0, H-H']$ and $[0, W-W']$, respectively.
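A random crop of this kind can be sketched in plain Python as follows, assuming the image is stored as nested lists indexed as channel, row, column. The function name `random_crop` and the toy image are hypothetical.

```python
import random


def random_crop(image, crop_h, crop_w, rng=random):
    """Crop a crop_h x crop_w block from an A x H x W image (nested lists)."""
    height, width = len(image[0]), len(image[0][0])
    if not (crop_h < height and crop_w < width):
        raise ValueError("the block must be smaller than the image")
    top = rng.randint(0, height - crop_h)    # h_i0 drawn from [0, H - H'], inclusive
    left = rng.randint(0, width - crop_w)    # w_i0 drawn from [0, W - W'], inclusive
    block = [[row[left:left + crop_w] for row in channel[top:top + crop_h]]
             for channel in image]
    return block, top, left


# A 3 x 6 x 8 image whose pixel value encodes its own position.
image = [[[c * 100 + r * 10 + col for col in range(8)] for r in range(6)]
         for c in range(3)]
block, top, left = random_crop(image, 4, 5, random.Random(0))
```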

In an exemplary embodiment of the present application, the original image $x_i$ may be cropped according to a cropping calculation formula to obtain an image block of size $H' \times W'$ whose upper-left corner has the coordinates $h_{ij}$ and $w_{ij}$, the values of which lie in the intervals $[0, H-H']$ and $[0, W-W']$, respectively.

In an exemplary embodiment of the present application, the method may further include: training the parameter $\Theta_g$ of the feature extraction network and the parameter $\Theta_m$ of the full connection layer according to the following first calculation formula:

$$\Theta_g^{*}, \Theta_m^{*} = \arg\min_{\Theta_g, \Theta_m} \sum_{i} -\log\left[ m\left(g(x_i, \Theta_g), \Theta_m\right)_{y_i} \right]$$

wherein $\log[\cdot]$ denotes the logarithm function; $\arg\min_{\Theta_g, \Theta_m}$ denotes the values of $\Theta_g$ and $\Theta_m$ at which the function attains its minimum; $g(x_i, \Theta_g)$ denotes the feature map obtained by inputting an arbitrary $i$-th image $x_i$ into the feature extraction network $g(x, \Theta_g)$ with parameter $\Theta_g$; $m\left(g(x_i, \Theta_g), \Theta_m\right)_{y_i}$ denotes the $y_i$-th element of the classification result $m\left(g(x_i, \Theta_g), \Theta_m\right)$ corresponding to the image $x_i$; $y_i$ is the category label defined for the image $x_i$; $\Theta_g^{*}, \Theta_m^{*}$ denote the finally obtained optimized parameters; and $i$ is a positive integer.
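The quantity being minimized can be illustrated with a small Python sketch. It assumes the loss is summed over images (the application does not say whether a sum or an average is used, and the minimizer is the same either way) and that the classification outputs are already probabilities; `cross_entropy` is a hypothetical name.

```python
import math


def cross_entropy(prob_vectors, labels):
    """Sum of -log of the probability assigned to each image's true class.

    prob_vectors[i] is the K-element classification result for image x_i;
    labels[i] is its category label y_i, an integer in 1..K.
    """
    total = 0.0
    for probs, y in zip(prob_vectors, labels):
        total += -math.log(probs[y - 1])     # labels are 1-based in the text
    return total


loss = cross_entropy([[0.7, 0.2, 0.1], [0.1, 0.1, 0.8]], [1, 3])
```

The loss shrinks toward zero as the probability of the labelled class approaches 1, which is what training the two parameter sets aims for.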

In an exemplary embodiment of the present application, the image block, i.e., a three-dimensional matrix of dimension $A \times H' \times W'$, may be input into the feature extraction network defined above to obtain the feature map $f_{i0}$ output by the feature extraction network; the feature map $f_{i0}$ is then input into the full connection layer defined above to obtain the classification result output by the full connection layer. The parameter $\Theta_g$ of the neural network $g$ and the parameter $\Theta_m$ of the full connection layer $m$ are trained according to the first calculation formula.

In an exemplary embodiment of the present application, the positioning strategy network may include: a plurality of convolutional layers and a fully-connected layer, the convolutional layers and the fully-connected layer being sequentially arranged.

In an exemplary embodiment of the present application, a positioning strategy network $p$ may be established, which may be formed by sequentially arranging a plurality of convolutional layers and a fully-connected layer, and whose parameter may be denoted $\Theta_p$. The input of the positioning strategy network $p$ is the feature map $f$ obtained by the feature extraction network defined above, with dimension $A_f \times H_f \times W_f$. The output of $p$ is the position normalized coordinates $(h, w)$ of the next image block to be cropped, a $2 \times 1$ vector in which each element takes a value between 0 and 1 and represents the position of the upper-left corner of the image block as a proportion of the whole image.
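One possible way to turn the normalized output $(h, w)$ into pixel offsets is sketched below. The scaling by $H - H'$ and $W - W'$ is an assumption made here so that the cropped block always stays inside the image; the application does not specify the conversion, and `to_pixel_offset` is a hypothetical name.

```python
def to_pixel_offset(h_norm, w_norm, height, width, crop_h, crop_w):
    """Map normalized coordinates in [0, 1] to an upper-left corner in pixels."""
    # Clamp in case the network output drifts slightly outside [0, 1].
    h_norm = min(max(h_norm, 0.0), 1.0)
    w_norm = min(max(w_norm, 0.0), 1.0)
    # Assumption: 0 maps to offset 0 and 1 maps to the largest valid offset.
    top = round(h_norm * (height - crop_h))
    left = round(w_norm * (width - crop_w))
    return top, left


top, left = to_pixel_offset(0.5, 0.25, height=224, width=224, crop_h=96, crop_w=96)
```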

In an exemplary embodiment of the present application, training the positioning strategy network may comprise steps A2-D2:

A2, acquiring image data required for training to form a training set, and marking each image $x_i$ in the training set with a corresponding category label $y_i$.

B2, obtaining, by iterative calculation, a classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$ of the image $x_i$ according to the training set, the pre-established and trained feature extraction network, and the pre-established and trained full connection layer.

In an exemplary embodiment of the present application, obtaining, by iterative calculation, the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$ of the image $x_i$ according to the training set, the pre-established and trained feature extraction network, and the pre-established and trained full connection layer may comprise steps A3-D3:

A3, randomly cropping an image block of a preset image size from an image $x_i$ in the training set, wherein $i$ refers to the $i$-th image and is a positive integer not greater than the total number of images in the training set; $j$ refers to the $j$-th iteration and is an integer; $j \le N$, and $N$ is a preset iteration threshold;

B3, inputting the image block into the pre-established and trained feature extraction network to obtain a feature map $f_{i,j}$, and inputting the feature map $f_{i,j}$ into the pre-established and trained full connection layer to obtain the classification result $c_{i,j}$ of the image block; determining a classification confidence $s_{i,j}$ according to the classification result $c_{i,j}$;

C3, detecting whether $j$ is equal to $N$; when $j = N$, obtaining the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$; when $j \ne N$, proceeding to step D3;

D3, inputting the feature map $f_{i,j}$ obtained in the previous step into the positioning strategy network $p$ to obtain the position normalized coordinates of the image block required for the $(j+1)$-th iteration; cropping, from the original image $x_i$ according to the position normalized coordinates, the image block to be processed in the $(j+1)$-th iteration; replacing the current image block with the newly cropped image block, and returning to step B3 for the $(j+1)$-th iteration.

C2, calculating, according to the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$, the confidence increment $\Delta s_{i,t+1}$ between two adjacent iterations, wherein $\Delta s_{i,t+1} = s_{i,t+1} - s_{i,t}$.

D2, training the parameters of the positioning strategy network according to the confidence increment $\Delta s_{i,t+1}$ and a preset second calculation formula.
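The confidence increments of step C2 are simple differences of neighbouring entries in the sequence, as the following short Python sketch shows. The function name and the sample values are hypothetical.

```python
def confidence_increments(scores):
    """Return [s_1 - s_0, s_2 - s_1, ..., s_N - s_(N-1)] for one image."""
    return [later - earlier for earlier, later in zip(scores, scores[1:])]


increments = confidence_increments([0.50, 0.62, 0.60, 0.81])
```

An increment is positive when the newly cropped block raised the confidence and negative when it lowered it, so the increments can serve as a per-step signal of how useful each chosen location was.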

In an exemplary embodiment of the present application, the second calculation formula may include:

$$\Theta_p^{*} = \arg\min_{\Theta_p} \mathbb{E}\left[ -\sum_{t=1}^{N} \gamma^{t-1} \Delta s_{it} \right]$$

wherein $\Theta_p$ denotes the parameter of the policy network $p$; $\arg\min_{\Theta_p}$ denotes finding the value of $\Theta_p$ that minimizes the function; $\mathbb{E}(\cdot)$ denotes the mathematical expectation operation; $\gamma$ is a predefined discount rate parameter between 0 and 1; $t$ is an integer, $t \le N$, and $N$ is a preset iteration threshold; $\Delta s_{it} = s_{it} - s_{i(t-1)}$ is the difference between the $t$-th classification confidence and the $(t-1)$-th classification confidence in the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$ corresponding to the image $x_i$; and $\Theta_p^{*}$ denotes the resulting policy network parameters.

In an exemplary embodiment of the present application, for each image $x_i$ in the training set, with corresponding category label $y_i$, steps A3 and B3 are repeated to obtain the corresponding feature map $f_{i0}$ and classification result $c_{i0}$. The classification confidence is taken to be the component of $c_{i0}$ corresponding to the category label $y_i$, i.e., $s_{i0} = [c_{i0}]_{y_i}$.

In exemplary embodiments of the present application, $f_{i,j-1}$ may denote the feature map obtained by the feature extraction network $g$ in the previous step. The feature map $f_{i,j-1}$ is input into the positioning strategy network $p$ to obtain the normalized position coordinates of the image block required in step $j$: $(h_{ij}, w_{ij}) = p(f_{i,j-1}, \Theta_p)$. Based on the normalized coordinates $(h_{ij}, w_{ij})$, the image block to be processed in step $j$ may be cropped from the original image $x_i$; its size is $H' \times W'$, and the horizontal and vertical coordinates of its upper-left corner are $h_{ij}$ and $w_{ij}$, respectively. The image block may then be input into the feature extraction network $g$ and the fully connected layer $m$ to obtain the feature map $f_{ij}$ and the classification result $c_{ij}$; the classification confidence $s_{ij}$ is the component of $c_{ij}$ corresponding to the category label $y_i$.
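The cropping step can be sketched as follows. The mapping from normalized coordinates to pixel offsets is not specified in the text, so the sketch assumes that a coordinate of 0 places the block at the top or left edge and a coordinate of 1 places it flush with the bottom or right edge; it also assumes that `h` indexes rows and `w` indexes columns. The function name and the nested-list image representation are hypothetical.

```python
from typing import List, Sequence


def crop_block(
    image: Sequence[Sequence[float]],
    h: float,
    w: float,
    block_h: int,
    block_w: int,
) -> List[List[float]]:
    """Crop a block_h x block_w block whose upper-left corner is given by
    normalized coordinates (h, w) in [0, 1]."""
    height, width = len(image), len(image[0])
    if not (0 < block_h <= height and 0 < block_w <= width):
        raise ValueError("block must be non-empty and fit inside the image")
    if not (0.0 <= h <= 1.0 and 0.0 <= w <= 1.0):
        raise ValueError("normalized coordinates must lie in [0, 1]")
    # Assumed mapping: scale by the largest offset that keeps the block inside.
    top = round(h * (height - block_h))
    left = round(w * (width - block_w))
    return [list(row[left:left + block_w]) for row in image[top:top + block_h]]
```

Scaling by `height - block_h` rather than `height` guarantees that every valid coordinate yields a full-size block, which keeps the input size of the classification model fixed.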

In an exemplary embodiment of the present application, the previous step is repeated for $N$ rounds, where $N$ is a pre-specified parameter that may generally be set to 5, so as to obtain the classification confidence sequence $\{s_{i0}, s_{i1}, \ldots, s_{i,N}\}$.
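The repetition over $N$ rounds can be sketched as a plain loop. The networks $g$, $m$ and $p$ and the cropping operation are passed in as callables, since their implementations are not given here; the function name, the argument order and the way the first image block is supplied are assumptions for illustration.

```python
from typing import Any, Callable, List, Sequence, Tuple


def confidence_sequence(
    image: Any,
    label: int,
    first_block: Any,
    g: Callable[[Any], Any],                    # feature extraction network
    m: Callable[[Any], Sequence[float]],        # fully connected layer
    p: Callable[[Any], Tuple[float, float]],    # positioning strategy network
    crop: Callable[[Any, float, float], Any],   # cropping operation
    n_rounds: int = 5,
) -> List[float]:
    """Collect s_0, s_1, ..., s_N for one training image."""
    feature = g(first_block)
    confidences = [m(feature)[label]]           # s_0 from the initial block
    for _ in range(n_rounds):                   # rounds j = 1..N
        h, w = p(feature)                       # location from previous features
        feature = g(crop(image, h, w))
        confidences.append(m(feature)[label])   # s_j at the true label
    return confidences
```

The returned list has `n_rounds + 1` entries, because the confidence of the initial block is stored before the first round.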

In an exemplary embodiment of the present application, the confidence increment between two adjacent steps may be $\Delta s_{i,t+1} = s_{i,t+1} - s_{i,t}$. The positioning strategy network $p$ is trained on the training set by solving the following problem:

wherein $\gamma$ is a predefined discount rate parameter with a value between 0 and 1; the optimal positioning strategy network parameters can be obtained by minimizing the above formula.

In the exemplary embodiment of the present application, a trained feature extraction network, fully connected layer and positioning strategy network are thus obtained; based on these trained neural networks, automatic image recognition can be realized according to steps S101 to S105.
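The recognition procedure built on the trained networks can be sketched as a loop that stops as soon as the classification is confident enough. The sketch makes several assumptions that are labeled in the code: the confidence at inference time is taken as the largest class probability, the stopping rule is a fixed `threshold`, and `max_steps` caps the number of image blocks. The function name and the callable interfaces are hypothetical.

```python
import random
from typing import Any, Callable, Optional, Sequence, Tuple


def recognize(
    image: Any,
    g: Callable[[Any], Any],                    # trained feature extraction network
    m: Callable[[Any], Sequence[float]],        # trained fully connected layer
    p: Callable[[Any], Tuple[float, float]],    # trained positioning strategy network
    crop: Callable[[Any, float, float], Any],   # cropping operation
    threshold: float = 0.9,                     # assumed stopping rule
    max_steps: int = 5,                         # assumed cap on image blocks
    rng: Optional[random.Random] = None,
) -> Tuple[int, float, int]:
    """Return (predicted label, confidence, number of image blocks used)."""
    if max_steps < 1:
        raise ValueError("max_steps must be at least 1")
    rng = rng if rng is not None else random.Random()
    h, w = rng.random(), rng.random()           # first block at a random location
    for step in range(1, max_steps + 1):
        feature = g(crop(image, h, w))
        probs = m(feature)
        label = max(range(len(probs)), key=probs.__getitem__)
        # Assumption: confidence is the probability of the predicted class.
        if probs[label] >= threshold or step == max_steps:
            return label, probs[label], step
        h, w = p(feature)                       # choose where to look next
    raise AssertionError("unreachable: the loop always returns")
```

Images that are classified confidently from the first block cost a single forward pass, while harder images consume more blocks, which is where the saving in computation comes from.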

The embodiments of the present application have at least the following advantages:

1. The scheme of the embodiments of the present application effectively addresses the problems that automatic image classification methods based on deep learning involve a large amount of computation, incur high test-time latency, and are difficult to deploy efficiently on resource-limited mobile and embedded devices. As a result, a neural network can obtain a correct image classification result with less computation and lower latency, which accelerates the inference of the neural network model on mobile platforms and greatly improves the operating efficiency of the system. In addition, compared with other adaptive inference methods, the method does not modify the structure or parameters of the neural network, is compatible with other methods such as network pruning, knowledge distillation and weight quantization, and is efficient and easy to use.

2. The huge computing resource consumption of deep convolutional neural networks hinders the deployment of network models on practical systems such as mobile devices. To address this, the embodiments of the present application use an adaptive inference method to dynamically determine the scale of network processing for each input image (that is, through the use of image blocks), and thereby adaptively determine the amount of computation spent on each input image. The same accuracy is thus achieved with lower computational overhead, which greatly reduces the cost of deploying deep convolutional neural networks on devices with limited computing resources.

3. Other adaptive inference methods for neural network acceleration tend to modify the network model directly, for example by embedding multi-stage classifiers in the network to realize adaptive inference. The embodiments of the present application introduce an adaptive inference method based on a visual attention mechanism, which takes smaller image blocks cropped from the original image as input and realizes adaptive inference by choosing the number of image blocks, which in turn determines the amount of computation the network expends, without modifying the structure of the neural network. The embodiments of the present application are also compatible with other neural network compression and acceleration methods, such as network pruning and knowledge distillation, so that the acceleration of the network model can be further improved.

The embodiment of the present application further provides an image recognition apparatus 1, as shown in fig. 2, which may include a processor 11 and a computer-readable storage medium 12, where the computer-readable storage medium 12 stores instructions, and when the instructions are executed by the processor 11, the image recognition method described in any one of the above is implemented.

It will be understood by those of ordinary skill in the art that all or some of the steps of the methods, systems, and functional modules/units in the devices disclosed above may be implemented as software, firmware, hardware, and suitable combinations thereof. In a hardware implementation, the division between functional modules/units mentioned in the above description does not necessarily correspond to the division of physical components; for example, one physical component may have multiple functions, or one function or step may be performed by several physical components in cooperation. Some or all of the components may be implemented as software executed by a processor, such as a digital signal processor or microprocessor, or as hardware, or as an integrated circuit, such as an application specific integrated circuit. Such software may be distributed on computer readable media, which may include computer storage media (or non-transitory media) and communication media (or transitory media). The term computer storage media includes volatile and nonvolatile, removable and non-removable media implemented in any method or technology for storage of information such as computer readable instructions, data structures, program modules or other data, as is well known to those of ordinary skill in the art. Computer storage media includes, but is not limited to, RAM, ROM, EEPROM, flash memory or other memory technology, CD-ROM, Digital Versatile Disks (DVD) or other optical disk storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, or any other medium which can be used to store the desired information and which can be accessed by a computer. In addition, communication media typically embodies computer readable instructions, data structures, program modules or other data in a modulated data signal such as a carrier wave or other transport mechanism and includes any information delivery media, as is known to those skilled in the art.