CN112560059B - Vertical federal model stealing defense method based on neural pathway feature extraction - Google Patents


- Publication number

- CN112560059B (application CN202011499140.XA)

- Authority

- CN

- China

- Prior art keywords

- model

- edge

- loss

- sample

- sample set


- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active


Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F21/00—Security arrangements for protecting computers, components thereof, programs or data against unauthorised activity

- G06F21/60—Protecting data

- G06F21/602—Providing cryptographic facilities or services

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/21—Design or setup of recognition systems or techniques; Extraction of features in feature space; Blind source separation

- G06F18/214—Generating training patterns; Bootstrap methods, e.g. bagging or boosting

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F21/00—Security arrangements for protecting computers, components thereof, programs or data against unauthorised activity

- G06F21/60—Protecting data

- G06F21/62—Protecting access to data via a platform, e.g. using keys or access control rules

- G06F21/6218—Protecting access to data via a platform, e.g. using keys or access control rules to a system of files or objects, e.g. local or distributed file system or database

- G06F21/6245—Protecting personal data, e.g. for financial or medical purposes

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

- G06N3/084—Backpropagation, e.g. using gradient descent

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Health & Medical Sciences (AREA)

- Health & Medical Sciences (AREA)

- General Engineering & Computer Science (AREA)

- General Physics & Mathematics (AREA)

- Software Systems (AREA)

- Data Mining & Analysis (AREA)

- Bioethics (AREA)

- Artificial Intelligence (AREA)

- Evolutionary Computation (AREA)

- Life Sciences & Earth Sciences (AREA)

- Biomedical Technology (AREA)

- Computer Hardware Design (AREA)

- Biophysics (AREA)

- Computational Linguistics (AREA)

- Computer Security & Cryptography (AREA)

- Molecular Biology (AREA)

- Computing Systems (AREA)

- Mathematical Physics (AREA)

- Bioinformatics & Computational Biology (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Evolutionary Biology (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Medical Informatics (AREA)

- Databases & Information Systems (AREA)

- Information Retrieval, Db Structures And Fs Structures Therefor (AREA)

Abstract

The invention discloses a model stealing defense method under vertical federated learning based on neural pathway feature extraction, comprising: (1) dividing each sample in a dataset equally into two parts to form sample sets $D_A$ and $D_B$, where only $D_B$ contains sample labels; (2) training the edge model $M_A$ of edge terminal $P_A$ on $D_A$ and the edge model $M_B$ of edge terminal $P_B$ on $D_B$, where $P_A$ sends the feature data produced during training to $P_B$, $P_B$ computes a loss function from the received feature data and the activated neural pathway data, and $P_A$ and $P_B$ each mask-encrypt their loss functions and upload them to the server; (3) the server decrypts and aggregates the uploaded masked loss functions, solves the aggregated loss function to obtain the gradient information of $M_A$ and $M_B$, and returns it to $P_A$ and $P_B$ to update the network parameters of the edge models. The method improves the information security of edge models in vertical federated scenarios.

Description

Technical field

The invention belongs to the field of security defense, and in particular relates to a model stealing defense method under vertical federated learning based on neural pathway feature extraction.

Background art

In recent years, deep learning models have been widely applied to real-world tasks with good results. At the same time, data silos and privacy leakage during model training and deployment have become major obstacles to the development of artificial intelligence. Federated learning has emerged as an efficient privacy-preserving approach to this problem. It is a distributed machine learning method in which each participant trains on its local data and uploads the updated parameters to a server, which aggregates them into global parameters; through local training and parameter exchange among the participants, a lossless learning model is trained.
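A minimal sketch of the aggregation step just described, assuming a FedAvg-style rule in which the server averages the participants' flattened parameter vectors weighted by their local sample counts. The function name `aggregate` is hypothetical, and the text does not prescribe a particular aggregation rule.

```python
from typing import List, Sequence


def aggregate(updates: Sequence[Sequence[float]], sizes: Sequence[int]) -> List[float]:
    """Sample-count-weighted average of participants' parameter vectors.

    updates[p] is participant p's flattened parameter vector after local
    training; sizes[p] is the number of local samples it trained on.
    """
    if not updates or len(updates) != len(sizes):
        raise ValueError("need one sample count per parameter vector")
    total = sum(sizes)
    dim = len(updates[0])
    global_params = [0.0] * dim
    for params, n in zip(updates, sizes):
        if len(params) != dim:
            raise ValueError("all parameter vectors must have the same length")
        weight = n / total
        for j, value in enumerate(params):
            global_params[j] += weight * value
    return global_params


# The participant with three times more data pulls the average toward its values.
print(aggregate([[1.0, 2.0], [5.0, 6.0]], [300, 100]))  # [2.0, 3.0]
```

This is the horizontal-style exchange of whole parameter vectors; in the vertical setting addressed below, the parties instead hold different features of the same samples and exchange intermediate results and losses.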

According to how the data are distributed, federated learning can be roughly divided into three categories: horizontal federated learning, vertical federated learning, and federated transfer learning. Horizontal federated learning applies when the datasets share many features but few users: the datasets are partitioned along the user dimension, and the portion of data with the same features but not entirely the same users is used for training. Vertical federated learning applies when the datasets share many users but few features: the datasets are partitioned along the feature dimension, and the portion of data covering the same users but with different features is used for training. Federated transfer learning applies when the datasets overlap little in both users and features: the data are not partitioned, and transfer learning is used to overcome the lack of data or labels.
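The difference between the horizontal and vertical partitions can be illustrated on a toy table. This is a sketch under the assumption that each party's data is a mapping from user ID to a feature vector; the function names and values are hypothetical.

```python
from typing import Dict, List, Set, Tuple

# Toy table: user ID -> feature vector [f0, f1, f2, f3] (illustrative values).
Table = Dict[str, List[float]]


def horizontal_split(table: Table, users_a: Set[str]) -> Tuple[Table, Table]:
    """Partition by user (rows): each party keeps every feature for its own users."""
    part_a = {u: row for u, row in table.items() if u in users_a}
    part_b = {u: row for u, row in table.items() if u not in users_a}
    return part_a, part_b


def vertical_split(table: Table, n_features_a: int) -> Tuple[Table, Table]:
    """Partition by feature (columns): both parties keep the same users;
    party A holds the first n_features_a features and party B the rest."""
    part_a = {u: row[:n_features_a] for u, row in table.items()}
    part_b = {u: row[n_features_a:] for u, row in table.items()}
    return part_a, part_b


table = {"u1": [1.0, 2.0, 3.0, 4.0], "u2": [5.0, 6.0, 7.0, 8.0]}
print(horizontal_split(table, {"u1"}))
# ({'u1': [1.0, 2.0, 3.0, 4.0]}, {'u2': [5.0, 6.0, 7.0, 8.0]})
print(vertical_split(table, 2))
# ({'u1': [1.0, 2.0], 'u2': [5.0, 6.0]}, {'u1': [3.0, 4.0], 'u2': [7.0, 8.0]})
```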

Compared with traditional machine learning, federated learning can improve learning efficiency, resolve the data-silo problem, and protect the privacy of local data. However, it also carries many security risks. The three main attack threats in federated learning are poisoning attacks, adversarial attacks, and privacy leakage. Privacy leakage is the most important of these in the federated setting: because federated learning involves exchanging model information among multiple participants, this exchange is easily targeted by malicious attacks, which poses a serious threat to the privacy and security of the federated model.

In the vertical federated setting, the main techniques proposed to protect the privacy of deep models are secure multi-party computation, homomorphic encryption, and differential privacy. Secure multi-party computation and homomorphic encryption greatly increase computational complexity, raising both time and computing costs and placing high demands on device computing power. Differential privacy protects privacy by adding noise, which degrades the model's accuracy on its original task.

Summary of the invention

To improve the information security of edge models in the vertical federated setting and to prevent edge models from being stolen by malicious attackers during information transfer, the present invention proposes a model stealing defense method under vertical federated learning based on neural pathway feature extraction.

The technical solution of the present invention is as follows:

A model stealing defense method under vertical federated learning based on neural pathway feature extraction, comprising the following steps:

(1) Each sample in the dataset is divided equally into two parts to form sample set $D_A$ and sample set $D_B$, where only $D_B$ contains sample labels; $D_A$ and $D_B$ are allocated to edge terminal $P_A$ and edge terminal $P_B$, respectively;

(2) The edge model $M_A$ of edge terminal $P_A$ is trained on sample set $D_A$, and the edge model $M_B$ of edge terminal $P_B$ is trained on sample set $D_B$. $P_A$ sends the feature data generated during training to $P_B$; $P_B$ computes a loss function from the received feature data and the activated neural pathway data; $P_A$ and $P_B$ each mask-encrypt their loss functions and upload them to the server;

(3) After decrypting the masked loss functions uploaded by $P_A$ and $P_B$, the server aggregates them, solves the aggregated loss function to obtain the gradient information of $M_A$ and $M_B$, and returns the gradients to $P_A$ and $P_B$ to update the network parameters of the edge models.

Compared with the prior art, the beneficial effects of the present invention include at least the following:

The model stealing defense method provided by the present invention fixes neural pathway features during training and encrypts the loss functions before uploading them, which prevents malicious attackers from stealing the models and thereby improves the information security of edge models in the vertical federated setting.

Description of drawings

To explain the embodiments of the present invention or the technical solutions in the prior art more clearly, the drawings used in the description are briefly introduced below. The drawings described below show only some embodiments of the present invention; a person of ordinary skill in the art can derive other drawings from them without creative effort.

Fig. 1 is a flowchart of the model stealing defense method under vertical federated learning based on neural pathway feature extraction provided by an embodiment of the present invention;

Fig. 2 is a schematic diagram of training the vertical federated model provided by an embodiment of the present invention.

Detailed description of the embodiments

To make the objectives, technical solutions, and advantages of the present invention clearer, the invention is described in further detail below with reference to the drawings and embodiments. The specific embodiments described here serve only to explain the invention and do not limit its scope of protection.

In the vertical federated setting, edge models are vulnerable to malicious attackers while model information is being exchanged: after stealing model information from an edge terminal, an attacker can steal the edge model itself by computing with the gradients and loss values. To prevent this kind of edge model theft, the embodiment provides a model stealing defense scheme under vertical federated learning based on neural pathway feature extraction. A neural pathway feature extraction step is added to the training phase of the edge model, and the transfer of model parameters during training is encrypted by fixing activated neurons. As a result, when different edge terminals exchange model parameters in the vertical federated setting, malicious participants are effectively prevented from stealing private information about the deep model. An attacker who does not know the fixed neural pathway cannot reconstruct the model's training process even after intercepting the information transmitted by the edge model, which protects the model information and defends against model stealing attacks.

Fig. 1 is a flowchart of the model stealing defense method under vertical federated learning based on neural pathway feature extraction provided by an embodiment of the present invention. As shown in Fig. 1, the method provided by the embodiment includes the following steps:

Step 1: dataset partitioning and alignment.

In the embodiment, the MNIST, CIFAR-10, and ImageNet datasets are used. The MNIST training set has ten classes with 6,000 samples per class, and its test set has ten classes with 1,000 samples per class. The CIFAR-10 training set has ten classes with 5,000 samples per class, and its test set has ten classes with 1,000 samples per class. The ImageNet dataset has 1,000 classes with 1,000 samples per class; 30% of the images in each class are randomly selected as the test set, and the remaining images form the training set.
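The per-class 30% hold-out used for ImageNet can be sketched as follows, assuming each class is given as a list of unique sample identifiers. The identifiers and the function name are hypothetical; applied to all 1,000 classes of 1,000 images, the same rule yields 700,000 training and 300,000 test images.

```python
import random
from typing import Dict, List, Tuple

Pair = Tuple[str, int]  # (sample identifier, class label)


def per_class_split(samples_by_class: Dict[int, List[str]], test_ratio: float = 0.3,
                    seed: int = 0) -> Tuple[List[Pair], List[Pair]]:
    """Randomly hold out test_ratio of each class as test data; the rest is training data."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train: List[Pair] = []
    test: List[Pair] = []
    for label, items in samples_by_class.items():
        n_test = round(len(items) * test_ratio)
        held_out = set(rng.sample(items, n_test))
        for item in items:
            (test if item in held_out else train).append((item, label))
    return train, test


# Hypothetical stand-in for three classes with 1,000 images each.
fake = {c: [f"img_{c}_{j}" for j in range(1000)] for c in range(3)}
train, test = per_class_split(fake)
print(len(train), len(test))  # 2100 900
```

Splitting within each class, rather than over the pooled dataset, keeps the 70/30 ratio exact for every class.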

In the present invention, two edge terminals $P_A$ and $P_B$ are used under vertical federated learning. In this setting the data held by $P_A$ and $P_B$ have different features, so the preprocessed datasets must be further partitioned by feature. Each sample image in the MNIST, CIFAR-10, and ImageNet datasets is divided equally into two parts, which form sample set $D_A$ and sample set $D_B$ respectively; $D_B$ contains the class labels of the sample images.
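The feature partition can be sketched as below. The text says only that each image is divided equally into two parts; this sketch assumes a left/right split of equal width and represents images as nested lists, and all names are hypothetical. Each half keeps the original sample ID so that the halves can be matched in the alignment step.

```python
from typing import List, Tuple

Image = List[List[float]]  # H x W grayscale image as nested lists (illustrative)


def split_in_half(image: Image) -> Tuple[Image, Image]:
    """Cut an image into left and right halves of equal width (assumed split axis)."""
    width = len(image[0])
    if width % 2:
        raise ValueError("image width must be even for an equal split")
    half = width // 2
    return [row[:half] for row in image], [row[half:] for row in image]


def build_party_datasets(samples: List[Tuple[str, Image, int]]):
    """samples: (sample_id, image, label) triples.

    Returns D_A as (sample_id, left_half) pairs without labels, and
    D_B as (sample_id, right_half, label) triples; only D_B keeps the label.
    """
    d_a, d_b = [], []
    for sample_id, image, label in samples:
        left, right = split_in_half(image)
        d_a.append((sample_id, left))
        d_b.append((sample_id, right, label))
    return d_a, d_b


img = [[0.0, 0.1, 0.2, 0.3],
       [1.0, 1.1, 1.2, 1.3]]
d_a, d_b = build_party_datasets([("s1", img, 7)])
print(d_a)  # [('s1', [[0.0, 0.1], [1.0, 1.1]])]
print(d_b)  # [('s1', [[0.2, 0.3], [1.2, 1.3]], 7)]
```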

In the embodiment, after the samples are divided into $D_A$ and $D_B$, the parts of $D_A$ and $D_B$ that originate from the same sample must be aligned, i.e., the partial samples fed to edge models $M_A$ and $M_B$ in the same input step must come from the same original sample.

Because the user groups of $P_A$ and $P_B$ differ in the vertical federated setting, and to ensure that neither sample set $D_A$ nor $D_B$ exposes its raw data, an encryption-based user ID alignment technique is used to align the two partial images that belong to the same sample image. This guarantees that during training on the two terminals, the partial image data used at each step come from the same sample image, and that no user of either edge terminal is exposed during data entity alignment.
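The text does not name the encrypted ID alignment technique. The sketch below is a simplified stand-in that assumes both terminals share a secret key and exchange only keyed HMAC-SHA256 tokens of their sample IDs, then iterate over the common tokens in the same sorted order. Because both parties hold the key, either one could test guessed IDs against the other's tokens; proper private set intersection protocols give stronger guarantees. The key and function names are hypothetical.

```python
import hashlib
import hmac
from typing import Dict, Iterable, List


def blind_ids(ids: Iterable[str], shared_key: bytes) -> Dict[str, str]:
    """Map an HMAC-SHA256 token of each local sample ID back to the raw ID.

    Only the tokens (dict keys) are ever sent to the other party.
    """
    return {hmac.new(shared_key, i.encode("utf-8"), hashlib.sha256).hexdigest(): i
            for i in ids}


def aligned_tokens(tokens_a: Iterable[str], tokens_b: Iterable[str]) -> List[str]:
    """Tokens held by both parties, sorted so both sides iterate in the same order."""
    return sorted(set(tokens_a) & set(tokens_b))


key = b"demo-shared-key"  # hypothetical key agreed between P_A and P_B
local_a = blind_ids(["s1", "s2", "s3"], key)
local_b = blind_ids(["s2", "s3", "s4"], key)
order = aligned_tokens(local_a, local_b)   # iterating a dict yields its keys (tokens)
batch_a = [local_a[t] for t in order]      # P_A's sample IDs, in the shared order
batch_b = [local_b[t] for t in order]      # P_B's sample IDs, in the same order
print(batch_a == batch_b, sorted(batch_a))  # True ['s2', 's3']
```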

Step 2: each edge terminal trains its own edge model on its own sample set, mask-encrypts its loss function, and uploads it to the server.

In the embodiment, the edge model $M_A$ of edge terminal $P_A$ is trained on sample set $D_A$, and the edge model $M_B$ of edge terminal $P_B$ is trained on sample set $D_B$. $P_A$ sends the feature data generated during training to $P_B$, which computes its loss function from the received feature data and the activated neural pathway data; $P_A$ and $P_B$ then mask-encrypt their respective loss functions and upload them to the server.
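The masking scheme is not specified in the text. One possible choice, sketched here as an assumption, is pairwise additive masking in fixed-point modular arithmetic (the idea behind secure aggregation): each uploaded value looks random on its own, but the masks cancel in the sum, so the server recovers exactly the sum of the plain losses, which is what the aggregated loss in Step 3 needs. The constants and function names are hypothetical.

```python
import random
from typing import Dict, List, Tuple

MOD = 2 ** 32    # size of the ring that masked values live in (assumption)
SCALE = 10 ** 6  # fixed-point scale: six decimal digits kept (assumption)


def encode(x: float) -> int:
    """Float -> fixed-point element of Z_MOD (negatives wrap around)."""
    return round(x * SCALE) % MOD


def decode(v: int) -> float:
    """Element of Z_MOD -> float, treating the upper half of the ring as negative."""
    if v >= MOD // 2:
        v -= MOD
    return v / SCALE


def pairwise_masks(n_parties: int, seed: int) -> Dict[Tuple[int, int], int]:
    """One random mask per party pair (p, q), p < q. In a deployment each pair would
    derive its mask from a shared secret; a single seeded PRNG stands in here."""
    rng = random.Random(seed)
    return {(p, q): rng.randrange(MOD)
            for p in range(n_parties) for q in range(p + 1, n_parties)}


def mask_loss(party: int, loss: float, masks: Dict[Tuple[int, int], int]) -> int:
    """Add +r for pairs where this party is first and -r where it is second."""
    v = encode(loss)
    for (p, q), r in masks.items():
        if p == party:
            v = (v + r) % MOD
        elif q == party:
            v = (v - r) % MOD
    return v


def server_sum(uploads: List[int]) -> float:
    """Masks cancel pairwise, so the modular sum decodes to the sum of plain losses."""
    return decode(sum(uploads) % MOD)


masks = pairwise_masks(2, seed=7)
upload_a = mask_loss(0, 0.8125, masks)  # P_A uploads masked loss_A
upload_b = mask_loss(1, 1.25, masks)    # P_B uploads masked loss_B + lambda*loss_topk + loss_AB
print(server_sum([upload_a, upload_b]))  # 2.0625
```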

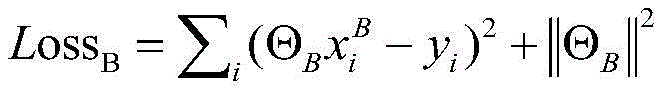

For each dataset, both edge terminals train models with the same architecture; for ImageNet, an ImageNet-pretrained model is used. Training uses uniform hyperparameters: stochastic gradient descent (SGD), the Adam optimizer, learning rate $\eta$, regularization parameter $\lambda$, and dataset $\{x_i^A\}_{i \in D_A}$, $\{x_i^B, y_i\}_{i \in D_B}$, where $i$ indexes a sample, $y_i$ is the original label of that sample, and $x_i^A$ and $x_i^B$ lie in the two feature spaces of the data. The model parameters associated with these feature spaces are denoted $\Theta_A$ and $\Theta_B$, and the model training objective is expressed as:
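The objective formula is not reproduced in this text. For orientation only, the standard two-party vertical federated objective for a linear model, written in the notation of the paragraph above (as formulated by Yang et al., 2019, "Federated Machine Learning: Concept and Applications"), is shown below; treat it as an assumption rather than the patent's exact formula. Here $\lambda$ is the regularization parameter, and $u_i^A = \Theta_A x_i^A$, $u_i^B = \Theta_B x_i^B$ (scalar outputs assumed).

$$
\min_{\Theta_A,\Theta_B}\ \sum_i \left\|\Theta_A x_i^A + \Theta_B x_i^B - y_i\right\|^2 + \frac{\lambda}{2}\left(\|\Theta_A\|^2 + \|\Theta_B\|^2\right)
$$

Expanding $(u_i^A + u_i^B - y_i)^2 = (u_i^A)^2 + (u_i^B - y_i)^2 + 2u_i^A(u_i^B - y_i)$ splits the objective into one term per party plus a cross term, matching the split into $\mathrm{loss}_A$, $\mathrm{loss}_B$, and $\mathrm{loss}_{AB}$ used below:

$$
\mathcal{L}_A = \sum_i (u_i^A)^2 + \tfrac{\lambda}{2}\|\Theta_A\|^2,\qquad
\mathcal{L}_B = \sum_i (u_i^B - y_i)^2 + \tfrac{\lambda}{2}\|\Theta_B\|^2,\qquad
\mathcal{L}_{AB} = 2\sum_i u_i^A\,(u_i^B - y_i)
$$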

Specifically, when edge model $M_A$ is trained on sample set $D_A$, its loss function $\mathrm{loss}_A$ is:

where $\Theta_A$ denotes the model parameters of $M_A$, $x_i^A$ denotes the $i$-th sample in sample set $D_A$, and $\|\cdot\|^2$ denotes the squared L2 norm.

When edge model $M_B$ is trained on sample set $D_B$, its total loss function $\mathrm{loss}_{sum}$ is:

$$\mathrm{loss}_{sum} = \mathrm{loss}_B + \lambda \cdot \mathrm{loss}_{topk} + \mathrm{loss}_{AB}$$

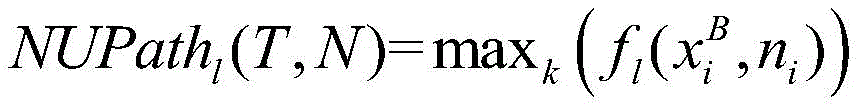

where $\mathrm{loss}_B$ is the basic loss of edge model $M_B$, $\mathrm{loss}_{topk}$ is the neural pathway loss, and $\mathrm{loss}_{AB}$ is the joint loss; $\lambda$ is an adaptive adjustment coefficient that serves as part of the neural pathway encryption; $\Theta_B$ denotes the model parameters of $M_B$; $x_i^B$ denotes the $i$-th sample in sample set $D_B$ and $y_i$ its label; $\|\cdot\|^2$ denotes the squared L2 norm; $i$ is the sample index and $N$ the number of samples; $\mathrm{NUPath}_l(T, N)$ denotes the activation values of the maximally activated neurons in layer $l$ of the edge model; $L$ is the total number of layers of the edge model; $T$ is the number of input samples per step; and $N$ is the number of neurons in each layer.
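To check that the per-party losses add up to the joint objective, the following sketch assumes the linear, scalar-output model of the standard vertical federated formulation; the actual edge models are neural networks. The value of the pathway loss and its weighting coefficient are hypothetical placeholders. The text uses $\lambda$ both for regularization and for the pathway weight, so the sketch names the latter `alpha` to keep them apart.

```python
import math
from typing import List, Sequence, Tuple

Vec = Sequence[float]


def dot(w: Vec, x: Vec) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


def party_losses(theta_a: Vec, theta_b: Vec, xs_a: List[Vec], xs_b: List[Vec],
                 ys: Vec, lam: float) -> Tuple[float, float, float]:
    """Return (loss_A, loss_B, loss_AB) for a linear model with scalar outputs."""
    u_a = [dot(theta_a, x) for x in xs_a]  # computed by P_A and sent to P_B
    u_b = [dot(theta_b, x) for x in xs_b]  # computed by P_B
    loss_a = sum(a * a for a in u_a) + lam / 2 * dot(theta_a, theta_a)
    loss_b = sum((b - y) ** 2 for b, y in zip(u_b, ys)) + lam / 2 * dot(theta_b, theta_b)
    loss_ab = 2 * sum(a * (b - y) for a, b, y in zip(u_a, u_b, ys))
    return loss_a, loss_b, loss_ab


def joint_loss(theta_a: Vec, theta_b: Vec, xs_a: List[Vec], xs_b: List[Vec],
               ys: Vec, lam: float) -> float:
    """Objective computed directly on the joined data, for comparison."""
    residual = sum((dot(theta_a, xa) + dot(theta_b, xb) - y) ** 2
                   for xa, xb, y in zip(xs_a, xs_b, ys))
    return residual + lam / 2 * (dot(theta_a, theta_a) + dot(theta_b, theta_b))


theta_a, theta_b = [0.5], [1.0, -1.0]
xs_a, xs_b, ys = [[2.0], [1.0]], [[1.0, 0.0], [0.0, 1.0]], [1.0, 0.0]
loss_a, loss_b, loss_ab = party_losses(theta_a, theta_b, xs_a, xs_b, ys, lam=0.1)
loss_topk, alpha = 2.2, 0.01  # hypothetical pathway loss and its weighting coefficient
loss_sum = loss_b + alpha * loss_topk + loss_ab  # P_B's total loss, as in the formula above
print(math.isclose(loss_a + loss_b + loss_ab,
                   joint_loss(theta_a, theta_b, xs_a, xs_b, ys, 0.1)))  # True
```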

In the embodiment, a neural pathway is defined as a connected path that starts at any neuron in the input layer of the neural network, ends at any neuron in the output layer, follows the direction of data flow, and passes through several neurons in the hidden layers. A neural pathway thus represents the connections between neurons. When an input sample activates particular neurons, the pathway formed by these activated neurons is called an activated neural pathway.

To fix the neural pathway, after each training round of the edge model, samples are randomly selected from the test set of the dataset chosen in Step 1 and fed into the model being trained to obtain its current maximally activated neural pathway. Let $N = \{n_1, n_2, \ldots, n_n\}$ be a set of neurons of the deep learning model, and let $T = \{x_1, x_2, \ldots, x_n\}$ be a set of test inputs. A function gives, for a given input sample $x$, the activation value of the neuron $n_i$ in layer $l$ corresponding to $x$, and $\max_k(\cdot)$ returns the activation values of the $k$ neurons with the largest activations in each layer. The maximally activated neural pathway is defined as follows:
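The defining formula is not reproduced in this text. One way to write it, assuming notation modelled on top-k neuron coverage (Ma et al., 2018, DeepGauge) and introducing the hypothetical symbols $\phi_l(x, n_i)$ for the activation of neuron $n_i$ in layer $l$ on input $x$ and $N_l \subseteq N$ for the neurons of layer $l$, is shown below, with the union taken as a multiset of activation values and the pathway loss accumulating them over all layers:

$$
\mathrm{NUPath}_l(T, N) = \bigcup_{x \in T} \max_k\big(\{\phi_l(x, n_i) : n_i \in N_l\}\big),
\qquad
\mathrm{loss}_{topk} = \sum_{l=1}^{L} \sum_{v \in \mathrm{NUPath}_l(T, N)} v
$$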

During training, the maximally activated neural pathway formed by the activation values of these maximally activated neurons is fixed, i.e., the activation values of these neurons are kept unchanged, and the activation values of the $k$ selected neurons in each layer are accumulated to form the pathway loss function.
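The pathway extraction and accumulation can be sketched as follows, assuming per-layer activations have already been collected for each test input (in practice, for example, through forward hooks in the training framework) and are passed in as plain lists. The text does not detail how the pathway activations are held fixed during training, so this sketch only identifies the pathway and computes the accumulated loss; all names and values are hypothetical.

```python
import heapq
import math
from typing import List, Sequence, Set, Tuple

LayerActs = Sequence[float]  # activation values of one layer for one input


def topk_pathway(acts_per_layer: Sequence[LayerActs], k: int) -> List[List[Tuple[int, float]]]:
    """For each layer, the (neuron index, activation) pairs of the k largest activations."""
    return [heapq.nlargest(k, enumerate(layer), key=lambda pair: pair[1])
            for layer in acts_per_layer]


def pathway_neurons(batch_acts: Sequence[Sequence[LayerActs]], k: int) -> Set[Tuple[int, int]]:
    """(layer, neuron) positions on the max-activation pathway for any input in T."""
    return {(layer_idx, idx) for acts in batch_acts
            for layer_idx, layer in enumerate(topk_pathway(acts, k)) for idx, _ in layer}


def pathway_loss(batch_acts: Sequence[Sequence[LayerActs]], k: int) -> float:
    """Accumulate the top-k activations over all layers and all inputs in T."""
    return sum(value for acts in batch_acts
               for layer in topk_pathway(acts, k) for _, value in layer)


# One test input, two layers (illustrative values).
batch = [[[0.1, 0.9, 0.4], [0.7, 0.2]]]
print(topk_pathway(batch[0], k=2))          # [[(1, 0.9), (2, 0.4)], [(0, 0.7), (1, 0.2)]]
print(sorted(pathway_neurons(batch, k=2)))  # [(0, 1), (0, 2), (1, 0), (1, 1)]
print(math.isclose(pathway_loss(batch, k=2), 2.2))  # True
```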

Step 3: after decrypting the masked loss functions uploaded by $P_A$ and $P_B$, the server aggregates them to obtain gradient information and returns it to $P_A$ and $P_B$ to update the network parameters of the edge models.

In this embodiment, after decrypting the masked loss functions uploaded by $P_A$ and $P_B$, the server aggregates them, solves the aggregated loss function to obtain the gradient information of $M_A$ and $M_B$, and returns it to $P_A$ and $P_B$. Specifically, the server uses stochastic gradient descent to obtain the gradients of the aggregated loss function, which is:

$$\mathrm{Loss} = \mathrm{loss}_B + \lambda \cdot \mathrm{loss}_{topk} + \mathrm{loss}_{AB} + \mathrm{loss}_A$$

The gradient information of $M_A$ and $M_B$ is $\frac{\partial \mathrm{Loss}}{\partial \Theta_A}$ and $\frac{\partial \mathrm{Loss}}{\partial \Theta_B}$, respectively.

After receiving the gradient information returned by the server, $P_A$ and $P_B$ update the network parameters of their edge models $M_A$ and $M_B$ accordingly and continue training from the updated parameters.
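For the linear, scalar-output model assumed in the earlier decomposition, the gradients of the aggregated objective have a closed form: with residual $d_i = u_i^A + u_i^B - y_i$, the gradient with respect to $\Theta_A$ is $\sum_i 2 d_i x_i^A + \lambda \Theta_A$, and likewise for $\Theta_B$. The sketch below computes these gradients and applies one plain gradient step on each terminal. It omits the pathway term, which depends on the network's activations, and the text does not spell out which party evaluates which piece, so the split between server and terminals here is an assumption. All names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec = Sequence[float]


def dot(w: Vec, x: Vec) -> float:
    return sum(wi * xi for wi, xi in zip(w, x))


def total_loss(theta_a: Vec, theta_b: Vec, xs_a, xs_b, ys: Vec, lam: float) -> float:
    """loss_A + loss_B + loss_AB for the linear model, i.e. the joint regularized squared error."""
    res = sum((dot(theta_a, xa) + dot(theta_b, xb) - y) ** 2
              for xa, xb, y in zip(xs_a, xs_b, ys))
    return res + lam / 2 * (dot(theta_a, theta_a) + dot(theta_b, theta_b))


def gradients(theta_a: Vec, theta_b: Vec, xs_a, xs_b, ys: Vec,
              lam: float) -> Tuple[List[float], List[float]]:
    """dLoss/dTheta_A and dLoss/dTheta_B from the residuals d_i."""
    d = [dot(theta_a, xa) + dot(theta_b, xb) - y for xa, xb, y in zip(xs_a, xs_b, ys)]
    grad_a = [sum(2 * di * xa[j] for di, xa in zip(d, xs_a)) + lam * theta_a[j]
              for j in range(len(theta_a))]
    grad_b = [sum(2 * di * xb[j] for di, xb in zip(d, xs_b)) + lam * theta_b[j]
              for j in range(len(theta_b))]
    return grad_a, grad_b


def sgd_step(theta: Vec, grad: Vec, lr: float) -> List[float]:
    """Edge-terminal update: theta <- theta - lr * grad."""
    return [t - lr * g for t, g in zip(theta, grad)]


theta_a, theta_b = [0.5], [1.0, -1.0]
xs_a, xs_b, ys, lam = [[2.0], [1.0]], [[1.0, 0.0], [0.0, 1.0]], [1.0, 0.0], 0.1
g_a, g_b = gradients(theta_a, theta_b, xs_a, xs_b, ys, lam)
print(g_a, g_b)  # approximately [3.05] [2.1, -1.1]
new_a, new_b = sgd_step(theta_a, g_a, 0.01), sgd_step(theta_b, g_b, 0.01)
print(total_loss(new_a, new_b, xs_a, xs_b, ys, lam)
      < total_loss(theta_a, theta_b, xs_a, xs_b, ys, lam))  # True
```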

To counter model stealing attacks in the vertical federated setting, the method provided by the embodiment fixes and encrypts neural pathway features during edge model training, which prevents malicious attackers from intercepting the gradient and loss information transmitted by the edge models and using it to steal the models. By encrypting model information from the perspective of feature extraction, the method protects model privacy while improving training efficiency.

The specific embodiments above describe the technical solutions and beneficial effects of the present invention in detail. It should be understood that they are only the most preferred embodiments of the invention and are not intended to limit it; any modification, addition, or equivalent replacement made within the principles of the invention shall fall within its scope of protection.

Claims (8)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202011499140.XA (CN112560059B) | 2020-12-17 | 2020-12-17 | Vertical federal model stealing defense method based on neural pathway feature extraction |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202011499140.XA (CN112560059B) | 2020-12-17 | 2020-12-17 | Vertical federal model stealing defense method based on neural pathway feature extraction |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN112560059A | 2021-03-26 |
| CN112560059B | 2022-04-29 |

Family

ID=75063447

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202011499140.XA (CN112560059B, Active) | 2020-12-17 | 2020-12-17 | Vertical federal model stealing defense method based on neural pathway feature extraction |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN112560059B |

Families Citing this family (3)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN113297575B * | 2021-06-11 | 2022-05-17 | 浙江工业大学 | An autoencoder-based defense method for vertical federated models of multi-channel graphs |
| CN113362216B * | 2021-07-06 | 2024-08-20 | 浙江工业大学 | Deep learning model encryption method and device based on back door watermark |
| CN113792890B * | 2021-09-29 | 2024-05-03 | 国网浙江省电力有限公司信息通信分公司 | Model training method based on federal learning and related equipment |

Citations (11)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN1806337A * | 2004-01-10 | 2006-07-19 | HVVi半导体股份有限公司 | Power semiconductor device and method thereof |
| US9614670B1 * | 2015-02-05 | 2017-04-04 | Ionic Security Inc. | Systems and methods for encryption and provision of information security using platform services |
| CN109525384A * | 2018-11-16 | 2019-03-26 | 成都信息工程大学 | The DPA attack method and system, terminal being fitted using neural network |
| CN110782042A * | 2019-10-29 | 2020-02-11 | 深圳前海微众银行股份有限公司 | Horizontal and vertical federation methods, devices, equipment and media |
| CN111401552A * | 2020-03-11 | 2020-07-10 | 浙江大学 | Federal learning method and system based on batch size adjustment and gradient compression rate adjustment |
| CN111598143A * | 2020-04-27 | 2020-08-28 | 浙江工业大学 | A Defense Method for Federated Learning Poisoning Attack Based on Credit Evaluation |
| CN111625820A * | 2020-05-29 | 2020-09-04 | 华东师范大学 | A Federal Defense Method Based on AIoT Security |
| CN111723946A * | 2020-06-19 | 2020-09-29 | 深圳前海微众银行股份有限公司 | A federated learning method and device applied to blockchain |
| CN111783853A * | 2020-06-17 | 2020-10-16 | 北京航空航天大学 | An Interpretability-Based Detection and Recovery Neural Network Adversarial Example Method |
| CN111860832A * | 2020-07-01 | 2020-10-30 | 广州大学 | A Federated Learning-Based Approach to Enhance the Defense Capability of Neural Networks |
| CN111931242A * | 2020-09-30 | 2020-11-13 | 国网浙江省电力有限公司电力科学研究院 | Data sharing method, computer equipment applying same and readable storage medium |

Family Cites Families (2)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| BRPI0513195A * | 2004-07-09 | 2008-04-29 | Matsushita Electric Industrial Co Ltd | systems for administering user authentication and authorization, and for user support, methods for administering user authentication and authorization, for accessing services from multiple networks, for the authentication controller to process an authentication request message, to select the combination of authentication controllers. search result authentication, authenticating a user, and finding the way to a domain having business relationship with the home domain, for the authorization controller to process the service authorization request message, and perform service authorization for a domain controller. authentication and authorization perform authentication and service authorization, to protect the user token, and for the user's home domain access control authority to provide the authentication controller with a limited user signature profile information, to achieve authentication and authorize fast access, and to achieve single registration to access multiple networks, and formats for subscription capability information, for a user symbol, for a domain having business relationship with a user's home domain to request authentication and authorization assertion , and for a user terminal to indicate their credentials for accessing multiple networks across multiple administrative domains. |
| US20200202243A1 * | 2019-03-05 | 2020-06-25 | Allegro Artificial Intelligence Ltd | Balanced federated learning |

2020

- 2020-12-17: CN202011499140.XA filed in CN; publication CN112560059B, status Active

Patent Citations (11)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN1806337A (en) * | 2004-01-10 | 2006-07-19 | HVVi半导体股份有限公司 | Power semiconductor device and method thereof |

| US9614670B1 (en) * | 2015-02-05 | 2017-04-04 | Ionic Security Inc. | Systems and methods for encryption and provision of information security using platform services |

| CN109525384A (en) * | 2018-11-16 | 2019-03-26 | Chengdu University of Information Technology | DPA attack method, system and terminal using neural network fitting |

| CN110782042A (en) * | 2019-10-29 | 2020-02-11 | Shenzhen Qianhai WeBank Co., Ltd. | Horizontal and vertical federation methods, devices, equipment and media |

| CN111401552A (en) * | 2020-03-11 | 2020-07-10 | Zhejiang University | Federated learning method and system based on batch size adjustment and gradient compression rate adjustment |

| CN111598143A (en) * | 2020-04-27 | 2020-08-28 | Zhejiang University of Technology | A Defense Method for Federated Learning Poisoning Attack Based on Credit Evaluation |

| CN111625820A (en) * | 2020-05-29 | 2020-09-04 | East China Normal University | A Federated Defense Method Based on AIoT Security |

| CN111783853A (en) * | 2020-06-17 | 2020-10-16 | Beihang University | An Interpretability-Based Method for Detecting and Recovering Neural Network Adversarial Examples |

| CN111723946A (en) * | 2020-06-19 | 2020-09-29 | Shenzhen Qianhai WeBank Co., Ltd. | A federated learning method and device applied to blockchain |

| CN111860832A (en) * | 2020-07-01 | 2020-10-30 | Guangzhou University | A Federated Learning-Based Approach to Enhance the Defense Capability of Neural Networks |

| CN111931242A (en) * | 2020-09-30 | 2020-11-13 | State Grid Zhejiang Electric Power Co., Ltd. Electric Power Research Institute | Data sharing method, computer equipment applying same and readable storage medium |

Non-Patent Citations (3)

| Title |

|---|

| Real-Time Systems Implications in the Blockchain-Based Vertical Integration; C. T. B. Garrocho et al.; *Computer*; 2020-09-07; full text * |

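| A scheme for a joint data utilization system supporting privacy and rights protection; Li Zheng; *Information & Computer (Theory Edition)*; 2020-07-25 (No. 14); full text * |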

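| A survey of poisoning attacks and defenses for deep learning models; Chen Jinyin et al.; *Journal of Cyber Security*; 2020-07-15 (No. 04); full text * |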

Also Published As

| Publication number | Publication date |

|---|---|

| CN112560059A (en) | 2021-03-26 |

Similar Documents

| Publication | Title | Publication Date |

|---|---|---|

| Xu et al. | FedV: Privacy-preserving federated learning over vertically partitioned data | |

| CN111600707B (en) | A method for decentralized federated machine learning under privacy protection | |

| CN113467928B (en) | Method and device for defending against membership inference attacks on federated learning based on blockchain decentralization | |

| Shen et al. | Privacy-preserving federated learning against label-flipping attacks on non-iid data | |

| CN112560059B (en) | Vertical federal model stealing defense method based on neural pathway feature extraction | |

| CN115310120B (en) | A robust federated learning aggregation method based on double trapdoor homomorphic encryption | |

| CN112487456B (en) | Federated learning model training method, system, electronic device and readable storage medium | |

| Xu et al. | Information leakage by model weights on federated learning | |

| CN112738035B (en) | A model stealing defense method under vertical federation based on blockchain technology | |

| CN116127519A (en) | A blockchain-based dynamic differential privacy federated learning system | |

| CN114363043A (en) | Asynchronous federated learning method based on verifiable aggregation and differential privacy in peer-to-peer network | |

| CN115329981A (en) | Federated learning method with efficient communication, privacy protection and attack resistance | |

| Zhang et al. | Visual object detection for privacy-preserving federated learning | |

| CN118468986A (en) | Application of federated learning in power data analysis and a privacy protection method | |

| CN116861994A (en) | Privacy-preserving federated learning method resisting Bayesian attacks | |

| Sun et al. | Privacy-preserving vertical federated logistic regression without trusted third-party coordinator | |

| CN118690406A (en) | A Collusion-Resistant Byzantine Robust Privacy-Preserving Federated Learning Optimization Method | |

| Zhou et al. | Group verifiable secure aggregate federated learning based on secret sharing | |

| CN117709407A (en) | Data-generation-based defense technique against membership inference attacks in federated distillation | |

| CN118839365A (en) | Verifiable privacy-preserving vertical federated learning method for linear models | |

| Masuda et al. | Model fragmentation, shuffle and aggregation to mitigate model inversion in federated learning | |

| Liu et al. | PPEFL: An Edge Federated Learning Architecture with Privacy‐Preserving Mechanism | |

| Wang et al. | Sgan-ra: Reconstruction attack for big model in asynchronous federated learning | |

| CN115913749A (en) | Blockchain DDoS detection method based on decentralized federated learning | |

| Chen et al. | Edge-based protection against malicious poisoning for distributed federated learning | |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| | PB01 | Publication | |

| | SE01 | Entry into force of request for substantive examination | |

| | GR01 | Patent grant | |

| | OL01 | Intention to license declared | |