CN112115953A - Optimized ORB algorithm based on RGB-D camera combined with plane detection and random sampling consistency algorithm - Google Patents


- Publication number

- CN112115953A

- Authority

- CN

- China

- Prior art keywords

- point

- feature

- feature point

- algorithm

- feature points

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

- G06V10/44—Local feature extraction by analysis of parts of the pattern, e.g. by detecting edges, contours, loops, corners, strokes or intersections; Connectivity analysis, e.g. of connected components

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y02—TECHNOLOGIES OR APPLICATIONS FOR MITIGATION OR ADAPTATION AGAINST CLIMATE CHANGE

- Y02T—CLIMATE CHANGE MITIGATION TECHNOLOGIES RELATED TO TRANSPORTATION

- Y02T10/00—Road transport of goods or passengers

- Y02T10/10—Internal combustion engine [ICE] based vehicles

- Y02T10/40—Engine management systems

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- Multimedia (AREA)

- Theoretical Computer Science (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Image Analysis (AREA)

Abstract

Description

Technical Field

The invention is an optimized ORB algorithm based on an RGB-D camera combined with plane detection and the random sample consensus (RANSAC) algorithm, and belongs to the technical field of path planning and navigation for indoor mobile robots.

Background

In recent years, intelligent mobile robot technology has developed rapidly and is widely used in industry, the military, logistics, offices and home services. With the advent of RGB-D sensors, research on mobile robot localization and SLAM using these sensors has grown quickly. Advances in related technologies such as image processing and point cloud processing, together with the sensors' rich information, non-contact measurement, ease of installation and use, and low cost, have led to RGB-D sensors being widely applied in fields such as target recognition and tracking. The first problem in robot navigation is how to determine the scene model. Over the past decade or so, many solutions have relied on two-dimensional sensors such as laser scanners and radar for map construction and robot pose estimation. With the advent of RGB-D cameras, more and more researchers have turned to them for building models of indoor environments, and many influential results have been produced.

Visual SLAM is gradually becoming a mainstream localization approach. However, monocular SLAM cannot obtain the depth of a pixel from a single image and must estimate it by triangulation or inverse-depth methods. The depth estimated by monocular SLAM also has scale ambiguity, and as localization errors accumulate, "scale drift" tends to occur. Stereo (binocular) SLAM obtains matched feature points by matching the images of the left and right cameras, and then estimates their depth from the disparity. Stereo SLAM offers a large measurement range, but it is computationally heavy, demands high camera accuracy, and usually needs GPU acceleration to run in real time. The RGB-D camera is a type of camera that has emerged in recent years and actively measures the depth of image pixels in hardware. Compared with monocular and stereo cameras, an RGB-D camera does not need to spend large computing resources on pixel depth, can measure the surrounding environment and obstacles directly in three dimensions, and can produce a dense point cloud map through RGB-D SLAM, which simplifies subsequent navigation planning.

At present, the commonly used feature point extraction and matching methods for RGB-D cameras include the ORB algorithm and the ICP algorithm. ORB (Oriented FAST and Rotated BRIEF) is an algorithm for fast feature point extraction and description. It has two parts: feature point extraction and feature point description. Feature extraction is developed from the FAST (Features from Accelerated Segment Test) algorithm, and feature description is an improvement on the BRIEF (Binary Robust Independent Elementary Features) descriptor. The ORB feature combines the FAST detector with the BRIEF descriptor and improves and optimizes both. The main strength of ORB is its speed. This comes first from detecting feature points with FAST, and second from computing descriptors with BRIEF, whose binary-string representation saves storage space and greatly shortens matching time.

The ICP algorithm was proposed by Besl and McKay (1992) in "A Method for Registration of 3-D Shapes". Its basic principle is as follows: in the target point cloud P and the source point cloud Q to be matched, find the nearest point pairs (p_i, q_i) under given constraints, then compute the optimal matching parameters R and t that minimize the error function. This method can improve the efficiency of reaching an optimal solution, but its heavy computation makes it less practical for mobile robots and increases cost.

Summary of the Invention

In view of this, the purpose of the invention is to provide an optimized ORB algorithm based on an RGB-D camera combined with plane detection and the random sample consensus algorithm, in order to reduce the amount of computation, improve the accuracy of feature point extraction and reduce mismatches, thereby meeting the accuracy and real-time requirements of mobile robots.

To solve the above technical problems, the technical solution adopted by the invention is:

An optimized ORB algorithm based on an RGB-D camera combined with plane detection and the random sample consensus algorithm, the method comprising the following steps:

S1: Use an RGB-D camera to acquire image data, which include a color image and a depth image;

S2: Use the ORB algorithm to extract feature points from the image data, and use a feature point uniformity evaluation method to judge how uniformly the feature points are distributed;

S3: For the parts of the image data where the feature points are uniformly distributed, generate a point cloud and downsample it;

S4: Perform plane detection and extraction on the downsampled point cloud, and use the random sample consensus algorithm to eliminate mismatches;

S5: For the parts of the image data where the feature points are not uniformly distributed, extract feature points with an adjusted threshold and remove overlapping feature points by non-maximum suppression;

S6: Project the point cloud remaining after mismatch elimination in S4 and the feature points remaining after overlap removal in S5 back onto the two-dimensional image plane, then reconstruct and equalize the grayscale image.

Preferably, in S1, the RGB-D camera includes a Kinect camera.

Preferably, S2 specifically includes the following steps:

S21: Use the Oriented FAST feature extraction algorithm to decide whether a candidate point x is a feature point. If it is, compute its main direction and name it a key point, so that the key point has an orientation;

The test is as follows: draw a circle centered on the candidate point x that passes through n pixels. If at least m contiguous pixels among the n pixels on the circle have distances to x that are all greater than l_x + t or all less than l_x - t, then x is judged to be a feature point;

Here, l_x is the distance between the point x and the pixels on the circle; l denotes distance; t is a threshold that adjusts the range; n = 16; 9 ≤ m ≤ 12;
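The segment test above can be sketched in a few lines. This is a minimal illustration rather than the patent's implementation: it assumes, as in standard FAST, that the quantity l is the gray-level intensity of a pixel, and the function names, the radius-3 circle offsets and the default `t=20` are choices made for the example.

```python
import numpy as np

# Offsets (dx, dy) of the 16 pixels on a radius-3 Bresenham circle,
# listed in order around the circle starting from the top.
CIRCLE = [(0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
          (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3)]


def longest_circular_run(flags):
    """Length of the longest run of True values on a circular sequence."""
    n = len(flags)
    if all(flags):
        return n
    best = run = 0
    for f in list(flags) + list(flags):  # doubling the list handles wrap-around
        run = run + 1 if f else 0
        best = max(best, run)
    return min(best, n)


def is_fast_corner(img, u, v, t=20, m=12):
    """Segment test: True if at least m contiguous circle pixels are all
    above l_x + t or all below l_x - t (l taken as intensity, an assumption)."""
    lx = int(img[v, u])
    ring = [int(img[v + dy, u + dx]) for dx, dy in CIRCLE]
    brighter = [p > lx + t for p in ring]
    darker = [p < lx - t for p in ring]
    return max(longest_circular_run(brighter), longest_circular_run(darker)) >= m
```

The caller is expected to keep `(u, v)` at least 3 pixels away from the image border. Lowering `m` from 12 to 9 accepts more candidates, which matches the range 9 ≤ m ≤ 12 given in the text.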

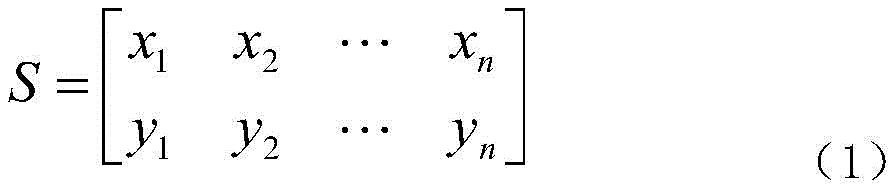

S22: Use the rBRIEF feature description algorithm. Apply Gaussian smoothing to the image data, select pixel points y in a neighborhood centered on the key point, and form n point pairs (x_i, y_i). Compare the gray values of each pair, where I denotes the gray value: take 1 if I(x) > I(y) and 0 otherwise, which yields an n-dimensional feature descriptor. Define the n point pairs (x_i, y_i) as a 2×n matrix S.

Rotate S by θ:

S_θ = R_θ S (2)

In formula (2), S_θ is the matrix S rotated by the angle θ, and θ is the angle of the main direction of the feature point;

The neighborhood is the circle centered on the key point and passing through k pixels, within which the pixel points y are selected; here 0 < k < n;
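The rotation in formula (2) can be illustrated with a short sketch. It makes several assumptions that the text does not fix: the two points of each pair are stored as two separate 2×n offset arrays (`S_x`, `S_y`, hypothetical names), offsets are rounded to the nearest pixel after rotation, and the image has already been smoothed. No bounds checking is done.

```python
import numpy as np


def steered_brief(img, keypoint, S_x, S_y, theta):
    """Binary descriptor from n intensity comparisons, steered by theta.

    S_x, S_y: 2 x n integer arrays of (du, dv) offsets for the first and
    second point of each pair, relative to the key point (illustrative layout).
    """
    c, s = np.cos(theta), np.sin(theta)
    R = np.array([[c, -s], [s, c]])      # rotation matrix R_theta
    ku, kv = keypoint
    ax = np.rint(R @ S_x).astype(int)    # S_theta = R_theta S for first points
    ay = np.rint(R @ S_y).astype(int)    # same rotation for second points
    ix = img[kv + ax[1], ku + ax[0]]     # gray values I(x_i)
    iy = img[kv + ay[1], ku + ay[0]]     # gray values I(y_i)
    return (ix > iy).astype(np.uint8)    # 1 if I(x) > I(y), else 0
```

Two such descriptors are usually compared by Hamming distance, for example `np.count_nonzero(d1 != d2)`.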

S23: Apply the feature point distribution uniformity evaluation method. The image data are segmented using different division schemes, which yields the parts of the image data where feature points are uniformly distributed and the parts where they are not.

Further preferably, the feature point distribution uniformity evaluation method is as follows: first, the image data are divided into several sub-regions S_i, and each sub-region S_i is further divided into several second-level sub-regions S_ij, namely S_i1 to S_ij. Whether the feature points in S_i are uniformly distributed is evaluated from the number of feature points in each second-level sub-region. Whether the counts in S_i1 to S_ij are similar is decided from the variance of the feature point counts over the second-level sub-regions: when the variance is less than 15, the feature points in sub-region S_i are judged to be uniformly distributed; otherwise they are judged to be non-uniform.

Further preferably, the image data segmentation method includes dividing into center and surrounding directions, dividing from upper left to lower right, or dividing from lower left to upper right.
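The variance test can be sketched as follows. The regular `rows` × `cols` grid used to form the second-level sub-regions is an assumption made for the example, since the text allows several division schemes; only the threshold of 15 comes from the text.

```python
import numpy as np


def is_uniform(points, region, rows=2, cols=2, var_threshold=15.0):
    """Judge whether feature points are evenly spread inside one sub-region.

    points: iterable of (u, v) pixel coordinates.
    region: (u0, v0, width, height) of the sub-region S_i.
    Returns (uniform, counts), where counts holds the number of feature
    points in each second-level sub-region S_ij.
    """
    u0, v0, w, h = region
    pts = np.asarray(points, dtype=float).reshape(-1, 2)
    inside = ((pts[:, 0] >= u0) & (pts[:, 0] < u0 + w) &
              (pts[:, 1] >= v0) & (pts[:, 1] < v0 + h))
    pts = pts[inside]
    counts, _, _ = np.histogram2d(
        pts[:, 0], pts[:, 1], bins=[cols, rows],
        range=[[u0, u0 + w], [v0, v0 + h]])
    # Population variance of the per-cell counts; small variance = uniform.
    return float(np.var(counts)) < var_threshold, counts
```

Regions judged uniform would go on to point cloud generation (S3, S4), and the others to threshold adjustment and non-maximum suppression (S5).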

Preferably, S3 specifically includes the following steps:

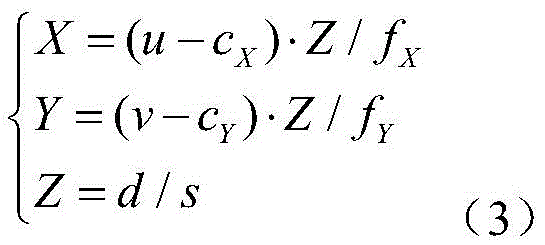

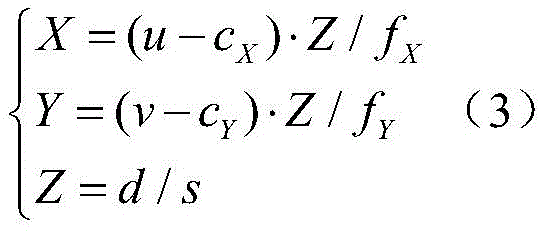

S31: From the color image and depth image of the parts with uniformly distributed feature points, obtain the point cloud using formula (3) below,

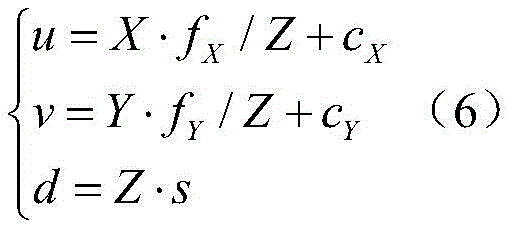

Formula (3) uses the pixel coordinate system o-u-v; c_X, c_Y, f_X, f_Y and s are the camera intrinsic parameters; u and v are the pixel coordinates of a feature point; d is its depth; (X, Y, Z) are its coordinates; the set of points defined by these coordinates forms the point cloud;
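Because formula (3) survives only as an image placeholder here, the sketch below uses the standard pinhole back-projection, which matches the listed symbols: Z = d / s, X = (u - c_X) Z / f_X, Y = (v - c_Y) Z / f_Y. Treating s as the depth scale factor and the default `s=1000.0` are assumptions, not values from the text.

```python
import numpy as np


def depth_to_points(depth, fx, fy, cx, cy, s=1000.0):
    """Back-project a depth image into an (N, 3) point cloud.

    depth: 2-D array of raw depth values d; zero means "no measurement".
    fx, fy, cx, cy: pinhole intrinsics; s: raw depth units per metre (assumed).
    """
    v, u = np.indices(depth.shape)          # pixel row (v) and column (u) grids
    z = depth.astype(np.float64) / s        # Z = d / s
    valid = z > 0                           # drop pixels without depth
    x = (u - cx) * z / fx                   # X = (u - c_X) Z / f_X
    y = (v - cy) * z / fy                   # Y = (v - c_Y) Z / f_Y
    return np.stack([x[valid], y[valid], z[valid]], axis=1)
```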

S32: Process the point cloud with a grid filter, and extract planes from the point cloud.
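One common reading of a grid filter is voxel-grid downsampling, where every occupied cell of a regular 3-D grid is replaced by the centroid of its points. The sketch below assumes that reading; the leaf size `leaf=0.05` is an illustrative value, and the input is assumed to be non-empty.

```python
import numpy as np


def voxel_downsample(points, leaf=0.05):
    """Replace all points falling in the same cubic cell by their centroid."""
    pts = np.asarray(points, dtype=float)
    keys = np.floor(pts / leaf).astype(np.int64)     # integer cell index per point
    _, inverse = np.unique(keys, axis=0, return_inverse=True)
    inverse = inverse.ravel()                        # flat labels on any NumPy version
    n_voxels = inverse.max() + 1
    sums = np.zeros((n_voxels, 3))
    np.add.at(sums, inverse, pts)                    # accumulate coordinates per cell
    counts = np.bincount(inverse, minlength=n_voxels)
    return sums / counts[:, None]                    # centroid of each occupied cell
```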

Preferably, S4 specifically includes the following steps:

S41: The plane extraction formula is as follows:

aX + bY + cZ + d = 0 (4)

In formula (4), a, b and c are constants;

S42: Use the random sample consensus method to extract planes from the noisy point cloud data. The decision condition is: while the number of remaining points is greater than a fraction g of the total number of points, or the number of extracted planes is less than the threshold h, extract feature points; after these feature points are extracted, perform plane extraction again on the remaining points, until the number of extracted planes reaches h or the number of remaining points falls below g;

Here, 20% ≤ g ≤ 40% and 3 ≤ h ≤ 5.
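The iterative extraction in S42 can be sketched with a plain NumPy RANSAC plane fit. The loop follows the stated stopping rule (stop once h planes are found or the remaining points fall to a fraction g of the total). The inlier tolerance `dist_tol`, the iteration count `iters` and the function names are assumptions made for the example.

```python
import numpy as np


def fit_plane_ransac(pts, dist_tol=0.02, iters=200, rng=None):
    """Fit one plane aX + bY + cZ + d = 0 by RANSAC; returns (model, inlier mask)."""
    rng = np.random.default_rng() if rng is None else rng
    best_inliers = np.zeros(len(pts), dtype=bool)
    best_model = None
    for _ in range(iters):
        p0, p1, p2 = pts[rng.choice(len(pts), 3, replace=False)]
        normal = np.cross(p1 - p0, p2 - p0)
        norm = np.linalg.norm(normal)
        if norm < 1e-12:                 # the three samples are collinear
            continue
        normal = normal / norm
        d = -normal @ p0
        inliers = np.abs(pts @ normal + d) < dist_tol
        if inliers.sum() > best_inliers.sum():
            best_inliers, best_model = inliers, (*normal, d)
    return best_model, best_inliers


def extract_planes(points, g=0.3, h=3, **ransac_kw):
    """Peel off planes until h planes are found or few points remain."""
    pts = np.asarray(points, dtype=float)
    total = len(pts)
    planes = []
    while len(planes) < h and len(pts) > g * total and len(pts) >= 3:
        model, inliers = fit_plane_ransac(pts, **ransac_kw)
        if model is None:
            break
        planes.append((model, pts[inliers]))
        pts = pts[~inliers]              # continue on the remaining points
    return planes, pts
```

Points left over after the loop belong to no extracted plane and can be treated as outliers, which is how this step removes mismatched feature points.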

Preferably, S5 specifically includes the following steps:

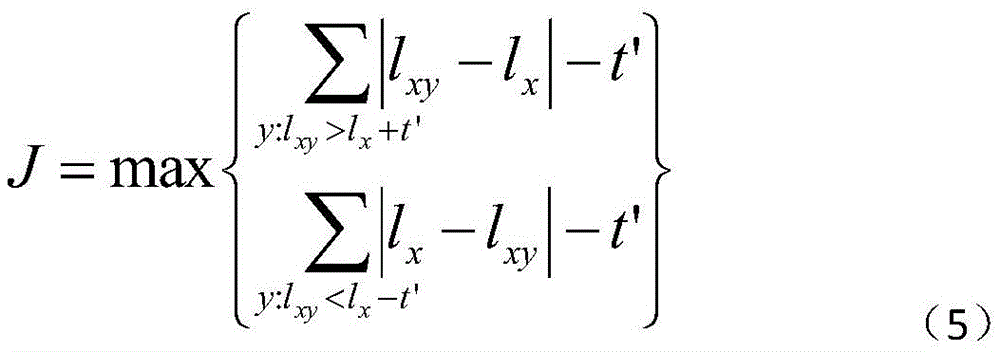

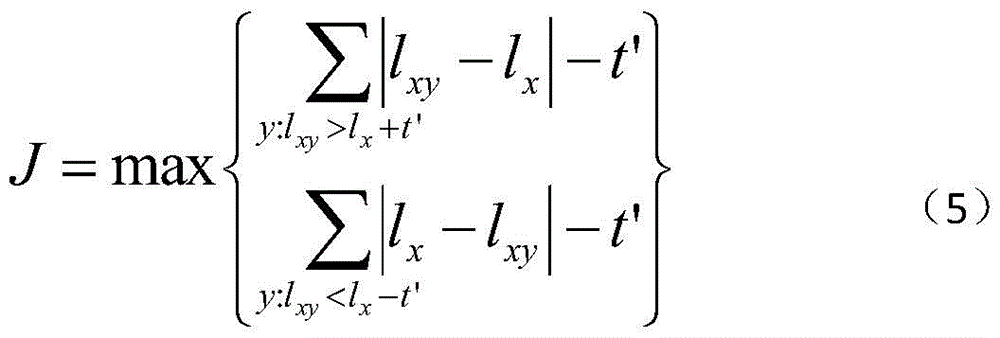

S51: Change the threshold t of the oFAST algorithm of S2 to a new threshold t', narrowing the range between l_x + t' and l_x - t'. Adjust t' according to the feature point extraction results and determine the value that gives the best result;

S52: If several key points lie within the neighborhood of a key point, compare their values J; keep the point with the largest J and delete the rest. J is defined as follows:

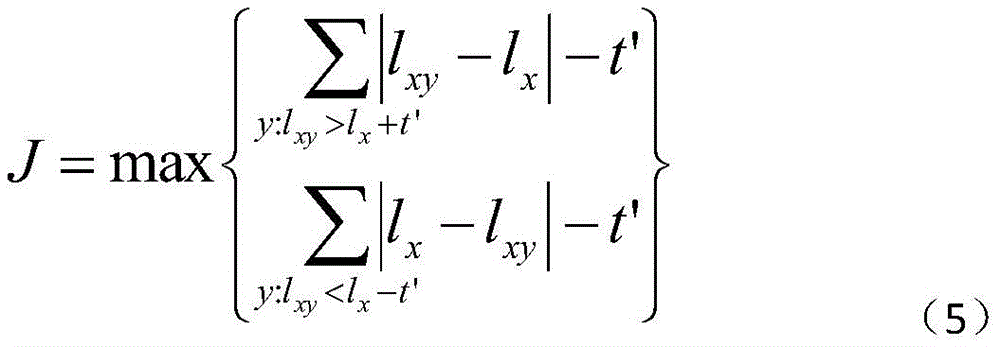

In formula (5), l_xy - l_x and l_x - l_xy both represent the distance between the key point and the known feature points.
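The suppression step in S52 can be sketched as a greedy pass over key points sorted by score. Formula (5) is not recoverable from this page, so the score J is taken as an input rather than computed; the neighborhood `radius` and the function name are assumptions made for the example.

```python
import numpy as np


def non_max_suppression(keypoints, scores, radius=3.0):
    """Keep only the key point with the largest J inside each neighborhood.

    keypoints: (N, 2) pixel coordinates; scores: N values of J.
    Returns the sorted indices of the key points that survive.
    """
    kps = np.asarray(keypoints, dtype=float)
    order = np.argsort(-np.asarray(scores, dtype=float))   # largest J first
    kept = []
    for i in order:
        # Accept a point only if no stronger kept point lies within radius.
        if all(np.hypot(*(kps[i] - kps[j])) > radius for j in kept):
            kept.append(int(i))
    return sorted(kept)
```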

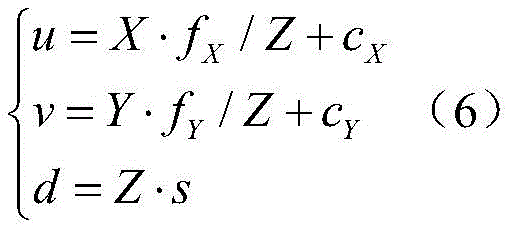

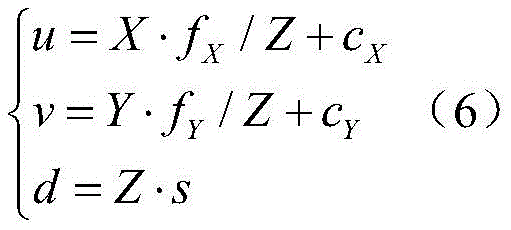

Preferably, in S6, the projection formula is:

In formula (6), s is the scale factor. After projection, the grayscale image of each plane is reconstructed. Gray-level histogram equalization is then applied to the grayscale image, which makes the image clearer and reduces noise introduced by depth.
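The equalization step can be sketched with the usual cumulative-histogram mapping for 8-bit images. This covers only the equalization, not the projection of formula (6), and the function name is chosen for the example.

```python
import numpy as np


def equalize_hist(gray):
    """Gray-level histogram equalization of an 8-bit image."""
    gray = np.asarray(gray, dtype=np.uint8)
    hist = np.bincount(gray.ravel(), minlength=256)
    cdf = hist.cumsum()
    cdf_min = cdf[cdf > 0][0]            # CDF value at the darkest level present
    if cdf[-1] == cdf_min:               # constant image: nothing to stretch
        return gray.copy()
    lut = np.round((cdf - cdf_min) / (cdf[-1] - cdf_min) * 255)
    lut = lut.clip(0, 255).astype(np.uint8)
    return lut[gray]                     # map every pixel through the lookup table
```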

Beneficial effects of the invention: whether extracting feature points from a single object or from multiple objects, the accuracy of the algorithm of the invention is clearly higher than that of the ORB algorithm or the ICP algorithm used alone. Parameters such as camera calibration error have a slight effect on the results, but the accuracy of the algorithm of the invention remains well above that of the other algorithms. In addition, because the algorithm builds on ORB and inserts a uniformity judgment of the regional feature point distribution, combined with plane extraction and random sample consensus, its running time is slightly longer than that of ORB alone, which does not affect its practicality. The ICP algorithm, by contrast, runs too long because of its heavy computation, which weakens its practicality and raises the hardware requirements of the mobile robot. The algorithm of the invention thus trades a negligible amount of extra running time for a substantial improvement in the accuracy of image feature point extraction. The invention can therefore improve extraction accuracy while keeping running time low, improving the robot's positioning accuracy and real-time performance.

Description of Drawings

Fig. 1 is the flow chart of the invention;

Fig. 2 shows the freiburg1_desk image before (left) and after (right) extraction;

Fig. 3 shows the freiburg1_room image before (left) and after (right) extraction;

Fig. 4 shows the freiburg1_teddy image before (left) and after (right) extraction.

Detailed Description

The optimized ORB algorithm based on an RGB-D camera combined with plane detection and the random sample consensus algorithm is described in further detail below with reference to the accompanying drawings and specific embodiments.

Example 1

As shown in Fig. 1, an optimized ORB algorithm based on an RGB-D camera combined with plane detection and the random sample consensus algorithm, the method comprising the following steps:

S1: Use an RGB-D camera to acquire image data, which include a color image and a depth image;

S2: Use the ORB algorithm to extract feature points from the image data, and use a feature point uniformity evaluation method to judge how uniformly the feature points are distributed;

S3: For the parts of the image data where the feature points are uniformly distributed, generate a point cloud and downsample it;

S4: Perform plane detection and extraction on the downsampled point cloud, and use the random sample consensus algorithm to eliminate mismatches;

S5: For the parts of the image data where the feature points are not uniformly distributed, extract feature points with an adjusted threshold and remove overlapping feature points by non-maximum suppression;

S6: Project the point cloud remaining after mismatch elimination in S4 and the feature points remaining after overlap removal in S5 back onto the two-dimensional image plane, then reconstruct and equalize the grayscale image.

Preferably, in S1, the RGB-D camera includes a Kinect camera.

Preferably, S2 specifically includes the following steps:

S21: Use the Oriented FAST feature extraction algorithm (the oFAST algorithm) to decide whether a candidate point x is a feature point. If it is, compute its main direction and name it a key point, so that the key point (i.e. the detector) has an orientation;

The test is as follows: draw a circle centered on the candidate point x that passes through 16 pixels. If at least 12 contiguous pixels among the 16 pixels on the circle have distances to x that are all greater than l_x + t or all less than l_x - t, then x is judged to be a feature point;

Here, l_x is the distance between the point x and each of the 12 contiguous pixels on the circle; l denotes distance; t is a threshold that adjusts the range. Setting t filters out pixels whose distance is close to that of x. If t is not set or is too small, too many pixels satisfy the condition and too many feature points result; likewise, if t is too large, too few feature points are detected;

S22: Use the rBRIEF feature description algorithm. Apply Gaussian smoothing to the image data, select pixel points y in a neighborhood centered on the key point, and form 6 randomly chosen point pairs (x_i, y_i). Compare the gray values of each pair, where I denotes the gray value: take 1 if I(x) > I(y) and 0 otherwise, which yields an n-dimensional feature descriptor. Define the n point pairs (x_i, y_i) as a 2×6 matrix S.

Rotate S by θ:

S_θ = R_θ S (2)

In formula (2), S_θ is the matrix S rotated by the angle θ, and θ is the angle of the main direction of the feature point;

The neighborhood is the circle centered on the key point and passing through k pixels, within which the pixel points y are selected; here 0 < k < n;

S23: Apply the feature point distribution uniformity evaluation method. The image data are segmented using different division schemes, which yields the parts of the image data where feature points are uniformly distributed and the parts where they are not.

Further preferably, the feature point distribution uniformity evaluation method is as follows: first, the image data are divided into several sub-regions S_i, and each sub-region S_i is further divided into several second-level sub-regions S_ij, namely S_i1 to S_ij. Whether the feature points in S_i are uniformly distributed is evaluated from the number of feature points in each second-level sub-region. Whether the counts in S_i1 to S_ij are similar is decided from the variance of the feature point counts over the second-level sub-regions: when the variance is less than 15, the feature points in sub-region S_i are judged to be uniformly distributed; otherwise they are judged to be non-uniform.

Further preferably, the image data segmentation method includes dividing into center and surrounding directions, dividing from upper left to lower right, or dividing from lower left to upper right.

In this embodiment, the image is segmented and the feature point distribution is judged for each region, so that different algorithms are applied according to the distribution.

Preferably, S3 specifically includes the following steps:

S31: From the color image and depth image of the parts with uniformly distributed feature points, obtain the point cloud using formula (3) below,

Formula (3) uses the pixel coordinate system o-u-v; c_X, c_Y, f_X, f_Y and s are the camera intrinsic parameters; u and v are the pixel coordinates of a feature point; d is its depth; (X, Y, Z) are its coordinates; the set of points defined by these coordinates forms the point cloud;

S32: Process the point cloud with a grid filter and extract planes from it, and use a z-direction interval filter to remove distant points. A distant point is one so far from the other points that, if it were not filtered out, an extracted plane could contain only that single point, which would increase the number of planes that do not meet the required feature point count.
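The z-direction interval filter can be sketched as a simple pass-through on the Z coordinate. The bounds `z_min` and `z_max` are illustrative values in metres, not figures from the text.

```python
import numpy as np


def z_passthrough(points, z_min=0.3, z_max=4.0):
    """Keep only points whose Z coordinate lies inside [z_min, z_max]."""
    pts = np.asarray(points, dtype=float)
    keep = (pts[:, 2] >= z_min) & (pts[:, 2] <= z_max)
    return pts[keep]
```

Applying this before plane extraction removes isolated far-away points that would otherwise produce degenerate single-point planes.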

In this embodiment, color information (i.e. gray level) is obtained from the color image and distance information from the depth image, so that the 3D camera coordinates of each pixel can be computed and a point cloud generated from the RGB image and the depth image.

Preferably, S4 specifically includes the following steps:

S41: The plane extraction formula is as follows:

aX + bY + cZ + d = 0 (4)

In formula (4), a, b and c are constants;

S42: Use the random sample consensus method to extract planes from the noisy point cloud data. The decision condition is: while the number of remaining points is greater than 30% of the total, or the number of extracted planes is less than 3, extract feature points; after these feature points are extracted, perform plane extraction again on the remaining points, until 3 planes have been extracted or the number of remaining points is less than 30% of the total.

Preferably, S5 specifically includes the following steps:

S51: Change the threshold t of the oFAST algorithm of S2 to a new threshold t', narrowing the range between l_x + t' and l_x - t'. Adjust t' according to the feature point extraction results and determine the value that gives the best result;

S52: If several key points lie within the neighborhood of a key point, compare their values J; keep the point with the largest J and delete the rest. J is defined as follows:

In formula (5), l_xy - l_x and l_x - l_xy both represent the distance between the key point and the known feature points.

Preferably, in S6, the projection formula is:

In formula (6), s is the scale factor, which can be chosen according to the actual situation. After projection, the grayscale image of each plane is reconstructed. Gray-level histogram equalization is then applied to the grayscale image, which makes the image clearer and reduces noise introduced by depth.

Using the values in Table 1 and Table 2, the feature point extraction accuracy and running time of this algorithm are compared with those of other algorithms.

As shown in Figs. 2 to 4, the invention extracts feature points from a desk, a room and a teddy bear respectively. After extraction, the feature points are fairly uniformly distributed, representative and accurate, which makes the subsequently reconstructed images clearer.

Table 1 compares the feature point extraction accuracy of the algorithm of the invention, the ORB algorithm and the ICP algorithm on the three images.

Table 2 compares the running time of the algorithm of the invention, the ORB algorithm and the ICP algorithm on the three images.

Table 1 shows that, whether extracting feature points from a single object or from multiple objects, the accuracy of the algorithm of the invention is clearly higher than that of the ORB algorithm or the ICP algorithm used alone. Parameters such as camera calibration error have a slight effect on the results, but the accuracy of the algorithm of the invention remains well above that of the other algorithms.

Table 2 shows that, because the algorithm of the invention builds on ORB and inserts a uniformity judgment of the regional feature point distribution, combined with plane extraction and random sample consensus, its running time is slightly longer than that of ORB alone, which does not affect its practicality. The ICP algorithm, by contrast, runs too long because of its heavy computation, which weakens its practicality and raises the hardware requirements of the mobile robot. The algorithm of the invention thus trades a negligible amount of extra running time for a substantial improvement in the accuracy of image feature point extraction.

Embodiment 2

This embodiment differs from Embodiment 1 only in that: in S21, m = 9; in S42, feature points are extracted if the number of remaining points in the point cloud is greater than 40% of the total or the number of extracted planes is less than 5.

Embodiment 3

This embodiment differs from Embodiment 1 only in that: in S21, m = 10; in S42, feature points are extracted if the number of remaining points in the point cloud is greater than 20% of the total or the number of extracted planes is less than 4.
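The parameter differences between Embodiments 2 and 3 can be captured in a small configuration sketch. The class and function names below (`EmbodimentParams`, `should_extract_features`) are hypothetical and chosen only for illustration; the numeric values are the ones stated above, and the S42 condition is written exactly as the text words it (remaining points above a fraction of the total, or plane count below a limit).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbodimentParams:
    m: int                      # value of m used in step S21
    min_remaining_ratio: float  # S42: threshold on remaining / total points
    plane_limit: int            # S42: threshold on number of extracted planes


# Values taken from the embodiment descriptions; the names are assumptions.
EMBODIMENT_2 = EmbodimentParams(m=9, min_remaining_ratio=0.40, plane_limit=5)
EMBODIMENT_3 = EmbodimentParams(m=10, min_remaining_ratio=0.20, plane_limit=4)


def should_extract_features(remaining_points, total_points,
                            planes_extracted, params):
    """S42 condition as stated in the text: extract feature points if the
    remaining point count exceeds the given fraction of the total, or if
    fewer planes than the limit have been extracted."""
    if total_points <= 0:
        raise ValueError("total_points must be positive")
    return (remaining_points > params.min_remaining_ratio * total_points
            or planes_extracted < params.plane_limit)
```

For example, with 3000 of 10000 points remaining and 5 planes extracted, the condition is not met under the Embodiment 2 values but is met under the Embodiment 3 values, because 30% exceeds the 20% threshold.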

Numerous specific details are set forth in the description provided herein. It will be understood, however, that embodiments of the invention may be practiced without these specific details. In some instances, well-known methods, structures, and techniques have not been shown in detail so as not to obscure the understanding of this description.

In the description of the present invention, it should be understood that orientations or positional relationships indicated by terms such as "upper", "lower", "front", "rear", "left", and "right" are based on the orientations or positional relationships shown in the accompanying drawings. They are used only to facilitate and simplify the description of the present invention, and do not indicate or imply that the referenced device or element must have a specific orientation or be constructed and operated in a specific orientation. They therefore should not be construed as limiting the present invention.

Similarly, it should be understood that, in order to streamline this disclosure and aid the understanding of one or more of the various inventive aspects, various features of the invention are sometimes grouped together into a single embodiment, figure, or description thereof in the above description of exemplary embodiments. This method of disclosure, however, should not be interpreted as reflecting an intention that the claimed invention requires more features than are expressly recited in each claim. Rather, as the claims reflect, inventive aspects lie in less than all features of a single foregoing disclosed embodiment. Thus, the claims following the Detailed Description are hereby expressly incorporated into this Detailed Description, with each claim standing on its own as a separate embodiment of the invention.

As used herein, unless otherwise specified, the use of the ordinal terms "first", "second", "third", and so on to describe a common object merely indicates that different instances of similar objects are being referred to, and is not intended to imply that the objects so described must be in a given order, whether temporally, spatially, in ranking, or in any other manner.

Although the invention has been described with respect to a limited number of embodiments, those skilled in the art, having the benefit of the above description, will appreciate that other embodiments can be devised within the scope of the invention as described herein. Furthermore, it should be noted that the language used in this specification has been selected principally for readability and instructional purposes, not to delineate or circumscribe the subject matter of the invention. Accordingly, many modifications and variations will be apparent to those of ordinary skill in the art without departing from the scope and spirit of the appended claims. The disclosure of the invention is intended to be illustrative, not restrictive, of the scope of the invention, which is defined by the appended claims.

The above is only a preferred embodiment of the present invention. It should be noted that those of ordinary skill in the art can make several improvements and refinements without departing from the principle of the present invention, and such improvements and refinements shall also be regarded as falling within the scope of protection of the present invention.

Claims (8)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202010985540.5A CN112115953B (en) | 2020-09-18 | 2020-09-18 | Optimized ORB algorithm based on RGB-D camera combined plane detection and random sampling coincidence algorithm |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN112115953A (en) | 2020-12-22 |
| CN112115953B (en) | 2023-07-11 |

Family

ID=73800133

Family Applications (1)

| Application Number | Status | Priority Date | Filing Date | Title |
|---|---|---|---|---|
| CN202010985540.5A CN112115953B (en) | Active | 2020-09-18 | 2020-09-18 | Optimized ORB algorithm based on RGB-D camera combined plane detection and random sampling coincidence algorithm |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN112115953B (en) |

Citations (3)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN107220995A (en) * | 2017-04-21 | 2017-09-29 | 西安交通大学 | A kind of improved method of the quick point cloud registration algorithms of ICP based on ORB characteristics of image |
| CN110414533A (en) * | 2019-06-24 | 2019-11-05 | 东南大学 | An Improved ORB Feature Extraction and Matching Method |
| US20190362178A1 (en) * | 2017-11-21 | 2019-11-28 | Jiangnan University | Object Symmetry Axis Detection Method Based on RGB-D Camera |

Non-Patent Citations (1)

| Title |
|---|
| JIANGYING QIN et al.: "Accumulative Errors Optimization for Visual Odometry of ORB-SLAM2 Based on RGB-D Cameras", ISPRS INTERNATIONAL JOURNAL OF GEO-INFORMATION * |

Cited By (41)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US11487288B2 (en) | 2017-03-23 | 2022-11-01 | Tesla, Inc. | Data synthesis for autonomous control systems |
| US12020476B2 (en) | 2017-03-23 | 2024-06-25 | Tesla, Inc. | Data synthesis for autonomous control systems |
| US12216610B2 (en) | 2017-07-24 | 2025-02-04 | Tesla, Inc. | Computational array microprocessor system using non-consecutive data formatting |
| US12536131B2 (en) | 2017-07-24 | 2026-01-27 | Tesla, Inc. | Vector computational unit |
| US11409692B2 (en) | 2017-07-24 | 2022-08-09 | Tesla, Inc. | Vector computational unit |
| US11893393B2 (en) | 2017-07-24 | 2024-02-06 | Tesla, Inc. | Computational array microprocessor system with hardware arbiter managing memory requests |
| US12086097B2 (en) | 2017-07-24 | 2024-09-10 | Tesla, Inc. | Vector computational unit |
| US11403069B2 (en) | 2017-07-24 | 2022-08-02 | Tesla, Inc. | Accelerated mathematical engine |
| US11681649B2 (en) | 2017-07-24 | 2023-06-20 | Tesla, Inc. | Computational array microprocessor system using non-consecutive data formatting |
| US12307350B2 (en) | 2018-01-04 | 2025-05-20 | Tesla, Inc. | Systems and methods for hardware-based pooling |
| US11561791B2 (en) | 2018-02-01 | 2023-01-24 | Tesla, Inc. | Vector computational unit receiving data elements in parallel from a last row of a computational array |
| US11797304B2 (en) | 2018-02-01 | 2023-10-24 | Tesla, Inc. | Instruction set architecture for a vector computational unit |
| US12455739B2 (en) | 2018-02-01 | 2025-10-28 | Tesla, Inc. | Instruction set architecture for a vector computational unit |
| US11734562B2 (en) | 2018-06-20 | 2023-08-22 | Tesla, Inc. | Data pipeline and deep learning system for autonomous driving |
| US11841434B2 (en) | 2018-07-20 | 2023-12-12 | Tesla, Inc. | Annotation cross-labeling for autonomous control systems |
| US12079723B2 (en) | 2018-07-26 | 2024-09-03 | Tesla, Inc. | Optimizing neural network structures for embedded systems |
| US11636333B2 (en) | 2018-07-26 | 2023-04-25 | Tesla, Inc. | Optimizing neural network structures for embedded systems |
| US11562231B2 (en) | 2018-09-03 | 2023-01-24 | Tesla, Inc. | Neural networks for embedded devices |
| US12346816B2 (en) | 2018-09-03 | 2025-07-01 | Tesla, Inc. | Neural networks for embedded devices |
| US11983630B2 (en) | 2018-09-03 | 2024-05-14 | Tesla, Inc. | Neural networks for embedded devices |
| US11893774B2 (en) | 2018-10-11 | 2024-02-06 | Tesla, Inc. | Systems and methods for training machine models with augmented data |
| US11665108B2 (en) | 2018-10-25 | 2023-05-30 | Tesla, Inc. | QoS manager for system on a chip communications |
| US12367405B2 (en) | 2018-12-03 | 2025-07-22 | Tesla, Inc. | Machine learning models operating at different frequencies for autonomous vehicles |
| US11816585B2 (en) | 2018-12-03 | 2023-11-14 | Tesla, Inc. | Machine learning models operating at different frequencies for autonomous vehicles |
| US12198396B2 (en) | 2018-12-04 | 2025-01-14 | Tesla, Inc. | Enhanced object detection for autonomous vehicles based on field view |
| US11908171B2 (en) | 2018-12-04 | 2024-02-20 | Tesla, Inc. | Enhanced object detection for autonomous vehicles based on field view |
| US11537811B2 (en) | 2018-12-04 | 2022-12-27 | Tesla, Inc. | Enhanced object detection for autonomous vehicles based on field view |
| US11610117B2 (en) | 2018-12-27 | 2023-03-21 | Tesla, Inc. | System and method for adapting a neural network model on a hardware platform |
| US12136030B2 (en) | 2018-12-27 | 2024-11-05 | Tesla, Inc. | System and method for adapting a neural network model on a hardware platform |
| US11748620B2 (en) | 2019-02-01 | 2023-09-05 | Tesla, Inc. | Generating ground truth for machine learning from time series elements |
| US12223428B2 (en) | 2019-02-01 | 2025-02-11 | Tesla, Inc. | Generating ground truth for machine learning from time series elements |
| US12014553B2 (en) | 2019-02-01 | 2024-06-18 | Tesla, Inc. | Predicting three-dimensional features for autonomous driving |
| US12164310B2 (en) | 2019-02-11 | 2024-12-10 | Tesla, Inc. | Autonomous and user controlled vehicle summon to a target |
| US11567514B2 (en) | 2019-02-11 | 2023-01-31 | Tesla, Inc. | Autonomous and user controlled vehicle summon to a target |
| US12236689B2 (en) | 2019-02-19 | 2025-02-25 | Tesla, Inc. | Estimating object properties using visual image data |
| US11790664B2 (en) | 2019-02-19 | 2023-10-17 | Tesla, Inc. | Estimating object properties using visual image data |
| CN112783995B (en) * | 2020-12-31 | 2022-06-03 | 杭州海康机器人技术有限公司 | V-SLAM map checking method, device and equipment |
| CN112783995A (en) * | 2020-12-31 | 2021-05-11 | 杭州海康机器人技术有限公司 | V-SLAM map checking method, device and equipment |
| CN112752028A (en) * | 2021-01-06 | 2021-05-04 | 南方科技大学 | Pose determination method, device and equipment of mobile platform and storage medium |
| US12462575B2 (en) | 2021-08-19 | 2025-11-04 | Tesla, Inc. | Vision-based machine learning model for autonomous driving with adjustable virtual camera |
| US12522243B2 (en) | 2021-08-19 | 2026-01-13 | Tesla, Inc. | Vision-based system training with simulated content |

Also Published As

| Publication number | Publication date |
|---|---|
| CN112115953B (en) | 2023-07-11 |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| CN112115953B (en) | | Optimized ORB algorithm based on RGB-D camera combined plane detection and random sampling coincidence algorithm |
| CN111462200B (en) | | A cross-video pedestrian positioning and tracking method, system and device |
| Kang et al. | | Automatic targetless camera–lidar calibration by aligning edge with gaussian mixture model |
| CN107093205B (en) | | A kind of three-dimensional space building window detection method for reconstructing based on unmanned plane image |
| CN107067415B (en) | | A target localization method based on image matching |
| CN111640157A (en) | | A Neural Network-Based Checkerboard Corner Detection Method and Its Application |
| CN111914832B (en) | | SLAM method of RGB-D camera under dynamic scene |
| CN112907610B (en) | | A Step-by-Step Inter-Frame Pose Estimation Algorithm Based on LeGO-LOAM |
| CN101216895A (en) | | An Automatic Extraction Method of Ellipse Image Feature in Complicated Background Image |
| CN109472820B (en) | | Monocular RGB-D camera real-time face reconstruction method and device |
| CN111768447A (en) | | A method and system for object pose estimation of monocular camera based on template matching |
| CN107862735B (en) | | RGBD three-dimensional scene reconstruction method based on structural information |
| CN103136525A (en) | | High-precision positioning method for special-shaped extended target by utilizing generalized Hough transformation |
| CN114331879A (en) | | Visible light and infrared image registration method for equalized second-order gradient histogram descriptor |
| CN101383046B (en) | | Three-dimensional reconstruction method on basis of image |
| CN110570473A (en) | | A Weight Adaptive Pose Estimation Method Based on Point-Line Fusion |
| Zhou et al. | | MoNet3D: Towards accurate monocular 3D object localization in real time |
| CN113409242A (en) | | Intelligent monitoring method for point cloud of rail intersection bow net |
| WO2020197495A1 (en) | | Method and system for feature matching |
| CN120147628A (en) | | A semantic SLAM method combining instance segmentation and optical flow estimation |
| CN105574875B (en) | | A kind of fish eye images dense stereo matching process based on polar geometry |
| CN120807854B (en) | | A Prefabrication Yard Layout Planning and Modeling Method and System Based on Digital-Aided Design |
| CN111179327B (en) | | A Calculation Method of Depth Map |
| Yoshisada et al. | | Indoor map generation from multiple LiDAR point clouds |
| CN114444586B (en) | | Initialization method of monocular camera visual odometry based on top viewpoint line feature fusion |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |