CN111459278A - Discrimination method of robot grasping state based on haptic array - Google Patents

- Publication number

- CN111459278A (application CN202010252733.XA)

- Authority

- CN

- China

- Prior art keywords

- layer

- robot

- model

- grasping

- grabbing

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements

- G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer

- G06F3/016—Input arrangements with force or tactile feedback as computer generated output to the user

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Physics & Mathematics (AREA)

- General Engineering & Computer Science (AREA)

- General Physics & Mathematics (AREA)

- General Health & Medical Sciences (AREA)

- Computing Systems (AREA)

- Computational Linguistics (AREA)

- Data Mining & Analysis (AREA)

- Evolutionary Computation (AREA)

- Biomedical Technology (AREA)

- Molecular Biology (AREA)

- Biophysics (AREA)

- Artificial Intelligence (AREA)

- Life Sciences & Earth Sciences (AREA)

- Mathematical Physics (AREA)

- Software Systems (AREA)

- Health & Medical Sciences (AREA)

- Human Computer Interaction (AREA)

- Manipulator (AREA)

Abstract

The present disclosure provides a method for discriminating the grasping state of a robot based on a tactile (haptic) array, comprising: step S1, constructing a tactile data set of a robot grasping objects; step S2, normalizing the data set constructed in step S1; step S3, constructing a training model based on a multilayer perceptron; step S4, initializing the parameters of the training model; step S5, training the model on the data set processed in step S2 until convergence to obtain the optimal model parameters; and step S6, performing actual grasping operations in which the grasping state of the robot is discriminated from the tactile array. The method can be applied to robotic grasping of a variety of objects: it judges whether a grasp is successful from the grasping-force distribution and adjusts the robot's grasping force in real time, achieving high-accuracy robotic grasping with a grasp success rate above 99%.

Description

Technical Field

The present disclosure relates to the technical fields of machine learning and deep learning, and in particular to a method for discriminating the grasping state of a robot based on a haptic array.

Background

There are two traditional approaches to determining a robot's grasping force. The first applies a preset force threshold, that is, a control force assumed to be sufficient to grasp the object; the threshold can be set empirically or from a closed-form grasping-force solution derived from robot dynamics. The second uses sensors to feed back the magnitude of the grasping force, enabling force-feedback control of the grasp. Sensor-based feedback itself comes in two forms. Indirect grasping-force feedback relies on a six-axis force sensor or joint torque sensors, which directly measure the six-dimensional force at the robot end effector and the joint torques; the grasping force of the end effector is then solved indirectly through robot dynamics. The resulting grasping-force data contain both sensor measurement error and dynamic-model error, so the feedback is not accurate enough for precise grasping operations. Direct grasping-force feedback uses pressure sensors or tactile sensors mounted on the robot end effector to obtain the grasping force directly, so that the success of a grasp can be judged directly from the feedback force.

A pressure sensor provides only a single-point measurement and therefore reflects only single-point contact between the robot and the object being manipulated. Since most grasps involve surface contact, a single-point sensor cannot fully capture the contact forces, so judging grasp success with it is not very accurate, and instabilities such as slipping and rotation occur easily. An array-type tactile sensor, by contrast, effectively captures the contact between the end effector and the object during grasping and reports it to the robot as a data matrix, from which grasp success can be judged by numerical analysis.

Existing grasp-discrimination methods fall into two categories: traditional machine learning methods (such as support vector machines) and deep convolutional neural network methods. However, most of them rely on single-point pressure-sensor data and small data sets, which leads to low discrimination accuracy, and the complexity of deep convolutional neural networks makes both training and inference slow, so real-time discrimination within the control cycle of a robotic grasp is difficult. Moreover, because different methods are built on different pressure sensors or tactile arrays, whose size, measurement principle, range, and accuracy all differ, the resulting discriminant models do not generalize. A real-time, accurate, and efficient grasp-discrimination method that eliminates the physical differences between tactile arrays is therefore of great significance for autonomous robotic grasping.

Summary

(1) Technical problems to be solved

In view of the above problems, the present disclosure provides a method for discriminating the grasping state of a robot based on a haptic array, to alleviate the following technical problems of the prior art: low discrimination accuracy; long training and inference times caused by the complexity of deep convolutional neural networks, which make real-time discrimination within the control cycle of a robotic grasp difficult; and discriminant models that lack generality.

(2) Technical solutions

The present disclosure provides a method for discriminating the grasping state of a robot based on a haptic array, comprising:

Step S1: construct a tactile data set of the robot grasping objects;

Step S2: normalize the data set constructed in step S1;

Step S3: construct a training model based on a multilayer perceptron;

Step S4: initialize the parameters of the training model;

Step S5: train the model on the data set processed in step S2 until convergence, obtaining the optimal model parameters; and

Step S6: perform actual grasping operations, discriminating the grasping state of the robot from the haptic array.

In an embodiment of the present disclosure, a tactile sensing array is mounted on the gripper of the robot arm.

In an embodiment of the present disclosure, the tactile sensing array comprises 16×10 sensing units; each sensing unit senses the pressure exerted on the object when the robot grasps it, so the array captures the pressure distribution of the grasp.

In an embodiment of the present disclosure, each sensing unit has a force sensing range of 0-5 N and a force resolution of 0.1 N.

In an embodiment of the present disclosure, in step S1, the grasped objects include apples, baseballs, water bottles, cups, jars, bottle openers, oranges, and tennis balls. Successful grasps of each object are taken as positive samples, and unsuccessful or insecure grasps as negative samples. Each sample consists of the grayscale image of the 16×10 sensing units and the corresponding grasp outcome, expressed as $[x_1, x_2, \ldots, x_i, \ldots, x_{160}, y]$, which is fed to the model ($x_i$ as features, $y$ as the label), where $x_i$ is the grayscale pixel value of the $i$-th sensing unit, and $y = 1$ for a successful grasp and $y = 0$ for an unsuccessful or insecure one.

In an embodiment of the present disclosure, in step S2, the data are normalized to improve the accuracy and convergence speed of the model, as shown in formula (1):

$$x_i^* = \frac{x_i - \mu}{\sigma} \tag{1}$$

where $x_i$ is the original sample data, $x_i^*$ is the normalized data, $\mu$ is the mean of the original sample data, and $\sigma$ is its standard deviation.

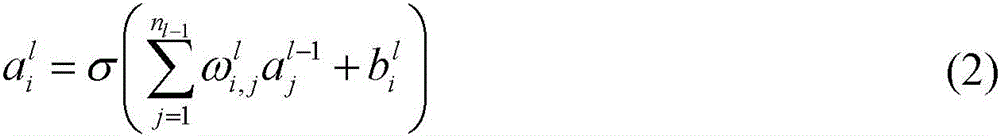

In an embodiment of the present disclosure, in step S3, the multilayer perceptron model has 4 layers: an input layer, 2 hidden layers, and an output layer. The number of neurons per layer can be written as the vector $n = (n_1\ n_2\ n_3\ n_4)^T$, where the input layer has $n_1 = 160$ neurons, the first hidden layer $n_2 = 100$ neurons, the second hidden layer $n_3 = 30$ neurons, and the output layer $n_4 = 1$ neuron.

In an embodiment of the present disclosure, in step S3, the multilayer perceptron training model is a fully connected multilayer neural network; the hidden layers and the output layer are connected to the neurons of the previous layer through linear weights, biases, and activation functions. Define $w_{ij}^{(l)}$ as the weight from the $j$-th neuron in layer $l-1$ to the $i$-th neuron in layer $l$, $b_i^{(l)}$ as the bias of the $i$-th neuron in layer $l$, and $a_i^{(l)}$ as the output of the $i$-th neuron in layer $l$. The transfer relationship between layers can then be expressed as:

$$a_i^{(l)} = \sigma\Big(\sum_{j=1}^{n_{l-1}} w_{ij}^{(l)}\, a_j^{(l-1)} + b_i^{(l)}\Big)$$

where $\sigma(\cdot)$ is the activation function, $\sigma(x) = \max(0, x)$. The output layer outputs a value between 0 and 1 through $f(x) = (1 + e^{-x})^{-1}$, representing the probability that the robot's grasp is successful; the closer the output is to 1, the higher the probability of a successful grasp predicted by the multilayer perceptron model.

In an embodiment of the present disclosure, in step S4, to avoid vanishing and exploding gradients during training, the weights and biases of each layer are initialized: the weights are drawn at random from a normal distribution with mean 0 and standard deviation $\sqrt{2/n_{l-1}}$, that is, $w_{ij}^{(l)} \sim \mathcal{N}(0,\ 2/n_{l-1})$, and the biases $b_i^{(l)}$ are initialized to 0.

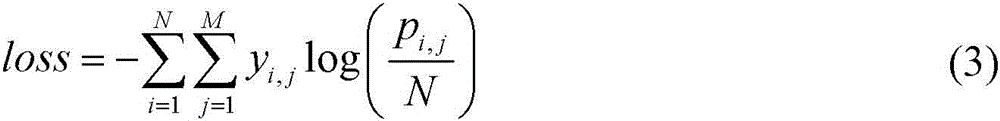

In an embodiment of the present disclosure, in step S5, the loss function of the model is set to logloss:

$$\mathrm{logloss} = -\frac{1}{N}\sum_{i=1}^{N}\sum_{j=1}^{M} y_{i,j}\,\log p_{i,j}$$

where $N$ is the number of samples, $M$ is the number of classes, $y_{i,j}$ is 1 if the $i$-th sample belongs to class $j$ and 0 otherwise, and $p_{i,j}$ is the predicted probability that the $i$-th sample belongs to class $j$;

Starting from the inputs and the initialized weights and biases, forward propagation computes the output of each layer and yields the predicted value $\hat{y}$ at the output layer; the loss measures the deviation between predicted and actual values, backpropagation computes the gradients of the loss with respect to the weights and biases, and the weights and biases are updated until the loss converges. During training the model is L2-regularized, and the loss function becomes:

$$J = \mathrm{logloss} + \frac{\lambda}{2N}\sum_{l=2}^{L}\big\lVert W^{(l)}\big\rVert_F^2$$

where $\lambda$ is the L2 regularization parameter, $L$ is the number of model layers, and $W^{(l)}$ is the weight matrix of layer $l$.

(3) Beneficial effects

As the above technical solutions show, the haptic-array-based method for discriminating a robot's grasping state of the present disclosure has at least one or some of the following beneficial effects:

(1) It can be applied to robotic grasping of a variety of objects: it judges whether a grasp is successful from the grasping-force distribution and adjusts the robot's grasping force in real time, achieving high-accuracy robotic grasping with a success rate above 99%;

(2) The training model is simple, requires only a small data set, and yields highly accurate results, while effectively avoiding vanishing gradients, exploding gradients, and overfitting during training;

(3) Converting the collected data into grayscale images effectively eliminates the physical differences between tactile array sensors, so the model can be applied to data sets collected by different tactile sensors and has a degree of generality.

Description of Drawings

FIG. 1 is a schematic flowchart of a method for discriminating the grasping state of a robot based on a haptic array according to an embodiment of the present disclosure.

FIG. 2 is a schematic architecture diagram of a method for discriminating the grasping state of a robot based on a haptic array according to an embodiment of the present disclosure.

FIG. 3 is a schematic diagram of a 16×10 haptic array according to an embodiment of the present disclosure.

FIG. 4 is a schematic diagram of the multilayer perceptron model for grasp determination according to an embodiment of the present disclosure.

Detailed Description

The present disclosure provides a method for judging the grasping state of a robot based on a tactile array. To achieve stable robotic grasping, a tactile sensing array is mounted on the robot gripper and used to acquire tactile force information during grasping, from which a data set covering different grasping states of multiple types of objects is built. Grasp discrimination is treated as a binary classification problem with tactile information as input, and a training model is constructed based on a multilayer perceptron; the model parameters are obtained by training to convergence, enabling high-precision grasp discrimination for different objects. The model trains quickly and discriminates accurately, outperforming other machine learning and deep learning models.

To make the objectives, technical solutions, and advantages of the present disclosure clearer, the disclosure is described in further detail below with reference to specific embodiments and the accompanying drawings.

An embodiment of the present disclosure provides a method for discriminating the grasping state of a robot based on a haptic array. With reference to FIGS. 1 to 4, the method comprises:

Step S1: construct a tactile data set of the robot grasping objects;

Step S2: normalize the data set constructed in step S1;

Step S3: construct a training model based on a multilayer perceptron;

Step S4: initialize the parameters of the training model;

Step S5: train the model on the data set processed in step S2 until convergence, obtaining the optimal model parameters; and

Step S6: perform actual grasping operations, discriminating the grasping state of the robot from the haptic array.

In step S1, a tactile sensing array is mounted on the two-finger gripper of the robot arm. The array acquires tactile information during grasping, and a grasp-discrimination algorithm judges whether the object has been grasped successfully. Grasp discrimination is treated as a binary classification problem whose input is the tactile information from the array and whose two classes are grasp success and grasp failure. A grasp is defined as successful if, within 10 s after the grasp is completed, the grasped object does not slip or spin and remains stable.

The tactile sensing array comprises 16×10 sensing units arranged as shown in FIG. 3; each sensing unit senses the pressure exerted on the object when the robot grasps it. Each unit has a force sensing range of 0-5 N and a force resolution of 0.1 N. During a grasp, the pressure distribution is output as an array in left-to-right, top-to-bottom order. When collecting data samples, to unify the data and allow data sets collected by different arrays to be merged later, a mapping from sensed pressure to the color of the array visualization is constructed: the force sensing range is mapped onto RGB values in the black-gray-white range. This removes the physical units from the data, so each data sample is a grayscale image of the array visualization at the moment of grasping.
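The disclosure does not give the exact force-to-grayscale mapping. The minimal Python sketch below assumes a linear mapping in which 0 N is black (0) and the 5 N full-scale reading is white (255), with out-of-range readings clipped; `force_to_gray` and `frame_to_sample` are hypothetical names. It flattens a 16×10 frame in the left-to-right, top-to-bottom order described above and appends the grasp label, producing one $[x_1, \ldots, x_{160}, y]$ sample.

```python
# Hypothetical sketch: map a 16x10 tactile frame of forces (N) to 8-bit gray
# levels and flatten it row by row (left to right, top to bottom).
# Assumptions (not from the source): the mapping is linear, 0 N -> 0 (black),
# 5 N -> 255 (white), and readings outside [0, 5] N are clipped.

ROWS, COLS = 16, 10
F_MIN, F_MAX = 0.0, 5.0  # sensing range of each unit, in newtons


def force_to_gray(force_n: float) -> int:
    """Linearly map one force reading to a gray level in [0, 255]."""
    clipped = min(max(force_n, F_MIN), F_MAX)
    return round(255 * (clipped - F_MIN) / (F_MAX - F_MIN))


def frame_to_sample(frame: list[list[float]], label: int) -> list[int]:
    """Return [x1, ..., x160, y] for one frame and its grasp label (1 or 0)."""
    if len(frame) != ROWS or any(len(row) != COLS for row in frame):
        raise ValueError("expected a 16x10 frame")
    # Row-major flattening: the first row left to right, then the next row.
    pixels = [force_to_gray(f) for row in frame for f in row]
    return pixels + [label]
```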

Eight types of objects are grasped: apples, baseballs, water bottles, cups, jars, bottle openers, oranges, and tennis balls.

Successful grasps of each object are taken as positive samples, and failed or insecure grasps as negative samples. Each sample consists of the grayscale image of the 16×10 sensing units and the corresponding grasp outcome, expressed as $[x_1, x_2, \ldots, x_i, \ldots, x_{160}, y]$, and serves as the model input. Each grasping state is recorded as 20 consecutive frames (sampling period 20 ms), and positive and negative samples are recorded for each object in 15 grasping states. The data of all objects are pooled by positive and negative samples and deduplicated, giving 1562 samples in total, of which 1085 form the training set and 477 the test set.
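Twenty consecutive frames at a 20 ms sampling period span 400 ms of a grasp. The pooling, deduplication, and train/test split can be sketched as follows; the function name, the exact-match notion of a duplicate, and the seeded random split are assumptions, since the disclosure only reports the resulting counts (1562 samples, 1085 for training, 477 for testing).

```python
import random


def dedupe_and_split(samples, n_train, seed=0):
    """Drop exact duplicate samples, shuffle the rest, and split into train/test.

    `samples` is a list of [x1, ..., x160, y] rows; a duplicate is assumed to be
    an identical row (features and label together).
    """
    # dict.fromkeys keeps the first occurrence of each row and preserves order.
    unique = list(dict.fromkeys(tuple(s) for s in samples))
    if not 0 <= n_train <= len(unique):
        raise ValueError("n_train must lie between 0 and the number of unique samples")
    rng = random.Random(seed)  # fixed seed for a reproducible split
    rng.shuffle(unique)
    return unique[:n_train], unique[n_train:]
```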

In step S2, to improve the accuracy and convergence speed of the model, the data are normalized with the z-score standardization method, as shown in formula (1): the mean is subtracted from the original data and the result is divided by the standard deviation.

$$x_i^* = \frac{x_i - \mu}{\sigma} \tag{1}$$

where $x_i$ is the original sample data, $x_i^*$ is the normalized data, $\mu$ is the mean of the original sample data, and $\sigma$ is its standard deviation.
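A per-feature z-score step might look like the sketch below. Whether $\mu$ and $\sigma$ are computed per sensing unit or over all pixels is not stated; this sketch assumes per-feature statistics fitted on the training set only (population standard deviation), and it leaves a constant feature centered but unscaled to avoid dividing by zero. `zscore_fit` and `zscore_apply` are illustrative names.

```python
import math


def zscore_fit(rows):
    """Per-feature mean and population standard deviation of the training rows."""
    n, d = len(rows), len(rows[0])
    mu = [sum(r[j] for r in rows) / n for j in range(d)]
    sigma = [math.sqrt(sum((r[j] - mu[j]) ** 2 for r in rows) / n) for j in range(d)]
    return mu, sigma


def zscore_apply(rows, mu, sigma, eps=1e-12):
    """x* = (x - mu) / sigma per feature; a zero-variance feature is only centered."""
    return [
        [(x - m) / (s if s > eps else 1.0) for x, m, s in zip(r, mu, sigma)]
        for r in rows
    ]
```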

In step S3, the multilayer perceptron model proposed by the present disclosure has 4 layers: an input layer, 2 hidden layers, and an output layer. The model structure is shown in FIG. 4. The number of neurons per layer can be written as the vector $n = (n_1\ n_2\ n_3\ n_4)^T$, where the input layer has $n_1 = 160$ neurons, the first hidden layer $n_2 = 100$ neurons, the second hidden layer $n_3 = 30$ neurons, and the output layer $n_4 = 1$ neuron.
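As a quick check on model size, a fully connected 160-100-30-1 network has $160 \cdot 100 + 100 = 16100$, $100 \cdot 30 + 30 = 3030$, and $30 \cdot 1 + 1 = 31$ weights and biases in its three weight layers, 19161 trainable parameters in total. The small helper below (a hypothetical name) reproduces that count:

```python
LAYER_SIZES = (160, 100, 30, 1)  # n = (n1, n2, n3, n4)


def count_parameters(sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes[:-1], sizes[1:]))


print(count_parameters(LAYER_SIZES))  # 19161
```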

Adjacent layers of the model are fully connected; the hidden layers and the output layer are connected to the neurons of the previous layer through linear weights, biases, and activation functions. Define $w_{ij}^{(l)}$ as the weight from the $j$-th neuron in layer $l-1$ to the $i$-th neuron in layer $l$, $b_i^{(l)}$ as the bias of the $i$-th neuron in layer $l$, and $a_i^{(l)}$ as the output of the $i$-th neuron in layer $l$. The transfer relationship between layers can then be expressed as:

$$a_i^{(l)} = \sigma\Big(\sum_{j=1}^{n_{l-1}} w_{ij}^{(l)}\, a_j^{(l-1)} + b_i^{(l)}\Big)$$

where $\sigma(\cdot)$ is the activation function; this model uses the ReLU function, $\sigma(x) = \max(0, x)$, between layers. Because grasp discrimination is treated as a binary classification problem, the output layer has a single neuron, which uses the sigmoid activation $f(x) = (1 + e^{-x})^{-1}$ to output a value between 0 and 1 representing the probability that the grasp is successful. The closer the output is to 1, the higher the probability of a successful grasp predicted by the multilayer perceptron model.
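The layer-to-layer transfer with ReLU hidden layers and a sigmoid output can be sketched in plain Python as follows. Each weight matrix is stored so that `W[i][j]` links neuron $j$ of layer $l-1$ to neuron $i$ of layer $l$, matching the definition of $w_{ij}^{(l)}$. The 0.5 decision threshold in `is_grasp_successful` is an assumption: the disclosure only states that outputs closer to 1 indicate a more likely successful grasp. All function names are illustrative.

```python
import math


def relu(x):
    return max(0.0, x)


def sigmoid(x):
    # Numerically stable logistic function 1 / (1 + e^(-x)).
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def dense(inputs, weights, biases, act):
    """One fully connected layer: a_i = act(sum_j W[i][j] * x_j + b_i)."""
    return [act(sum(w_ij * x_j for w_ij, x_j in zip(row, inputs)) + b_i)
            for row, b_i in zip(weights, biases)]


def forward(x, params):
    """params is a list of (W, b) per layer: ReLU on hidden layers, sigmoid output."""
    a = x
    for W, b in params[:-1]:
        a = dense(a, W, b, relu)
    W_out, b_out = params[-1]
    return dense(a, W_out, b_out, sigmoid)[0]  # single output neuron


def is_grasp_successful(x, params, threshold=0.5):
    # Hypothetical decision rule; the 0.5 threshold is an assumption.
    return forward(x, params) >= threshold
```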

In step S4, to avoid vanishing and exploding gradients during training, the weights and biases of each layer are initialized using He initialization: the weights are drawn at random from a normal distribution with mean 0 and standard deviation $\sqrt{2/n_{l-1}}$, that is, $w_{ij}^{(l)} \sim \mathcal{N}(0,\ 2/n_{l-1})$, and the biases $b_i^{(l)}$ are initialized to 0.
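He initialization for the 160-100-30-1 network can be sketched with the standard library alone. This assumes the usual He form, with weights drawn from $\mathcal{N}(0, 2/n_{l-1})$ where $n_{l-1}$ is the fan-in and all biases set to 0; `he_init` is an illustrative name, and the fixed seed serves only reproducibility.

```python
import math
import random


def he_init(sizes, seed=0):
    """Return [(W, b), ...] with W[i][j] ~ N(0, sqrt(2 / n_in)) and zero biases."""
    rng = random.Random(seed)
    params = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        std = math.sqrt(2.0 / n_in)  # He standard deviation for a ReLU layer
        W = [[rng.gauss(0.0, std) for _ in range(n_in)] for _ in range(n_out)]
        b = [0.0] * n_out
        params.append((W, b))
    return params
```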

In step S5, the loss function of the model is set to logloss:

$$\mathrm{logloss} = -\frac{1}{N}\sum_{i=1}^{N}\sum_{j=1}^{M} y_{i,j}\,\log p_{i,j}$$

where $N$ is the number of samples, $M$ is the number of classes, $y_{i,j}$ is 1 if the $i$-th sample belongs to class $j$ and 0 otherwise, and $p_{i,j}$ is the predicted probability that the $i$-th sample belongs to class $j$.
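For the single sigmoid output used here ($M = 2$), the logloss reduces to binary cross-entropy, $-\frac{1}{N}\sum_i [y_i \log p_i + (1 - y_i)\log(1 - p_i)]$, where $p_i$ is the predicted probability of success. A minimal sketch follows; `log_loss` is an illustrative name, and clipping probabilities to $[\epsilon, 1 - \epsilon]$ is an added safeguard, not something the disclosure specifies.

```python
import math


def log_loss(y_true, p_pred, eps=1e-15):
    """Mean binary cross-entropy for labels in {0, 1} and predicted P(success)."""
    if len(y_true) != len(p_pred) or not y_true:
        raise ValueError("need equally sized, non-empty inputs")
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip so log() never sees 0
        total += y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return -total / len(y_true)
```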

After the model parameters are initialized, forward propagation computes the output of each layer from the inputs and the initialized weights and biases, and the sigmoid function at the output layer yields the predicted value $\hat{y}$. The loss, which measures the deviation between predicted and actual values, is computed; backpropagation then computes the gradients of the loss with respect to the weights and biases, and the Adam algorithm updates the weights and biases until the loss converges. During training, the samples are shuffled in every iteration and an L2 regularization term is added to the loss function, which keeps the weights as small as possible at convergence and thereby avoids overfitting.
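One Adam update, written for a flat list of parameters, is sketched below with the hyperparameter values the disclosure lists for training ($\alpha = 0.001$, $\beta_1 = 0.9$, $\beta_2 = 0.999$, $\varepsilon = 10^{-8}$). This is the standard bias-corrected Adam rule rather than code from the disclosure; `adam_step` is an illustrative name, and the step counter `t` starts at 1.

```python
import math


def adam_step(theta, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """Return updated (theta, m, v) after one Adam step at iteration t >= 1."""
    new_theta, new_m, new_v = [], [], []
    for th, g, m_i, v_i in zip(theta, grad, m, v):
        m_i = beta1 * m_i + (1 - beta1) * g        # first-moment estimate
        v_i = beta2 * v_i + (1 - beta2) * g * g    # second-moment estimate
        m_hat = m_i / (1 - beta1 ** t)             # bias corrections
        v_hat = v_i / (1 - beta2 ** t)
        new_theta.append(th - lr * m_hat / (math.sqrt(v_hat) + eps))
        new_m.append(m_i)
        new_v.append(v_i)
    return new_theta, new_m, new_v
```

On the first step the bias corrections make $\hat{m} = g$ and $\hat{v} = g^2$, so each parameter moves by roughly $\alpha$ against the sign of its gradient, regardless of the gradient's magnitude.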

The loss function is then:

$$J = \mathrm{logloss} + \frac{\lambda}{2N}\sum_{l=2}^{L}\big\lVert W^{(l)}\big\rVert_F^2$$

where $\lambda$ is the L2 regularization parameter, $L$ is the number of model layers (here $L = 4$), and $W^{(l)}$ is the weight matrix of layer $l$. The L2 regularization parameter is set to $\lambda = 0.0001$, the optimizer batch size to 200 samples, the maximum number of iterations to 500, and the optimization tolerance to $10^{-4}$. In the Adam algorithm, the learning rate is $\alpha = 0.001$, the exponential decay rate of the first-moment estimate is $\beta_1 = 0.9$, that of the second-moment estimate is $\beta_2 = 0.999$, and the numerical-stability constant is $\varepsilon = 10^{-8}$. Training yields the optimal model parameters, and the corresponding optimized model reaches a prediction accuracy of 99.74% on the test set, enabling high-accuracy robotic grasping with a success rate above 99%.
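The regularized objective can be sketched as follows. The disclosure does not spell out how the L2 term is scaled; this sketch assumes the common $\frac{\lambda}{2N}\sum_l \lVert W^{(l)} \rVert_F^2$ convention with biases left unpenalized, and uses the stated $\lambda = 0.0001$ as the default. `regularized_log_loss` is an illustrative name.

```python
import math


def regularized_log_loss(y_true, p_pred, weight_matrices, lam=1e-4, eps=1e-15):
    """Binary logloss plus lam / (2N) times the squared Frobenius norms of all W.

    The lam / (2N) scaling is an assumed convention; biases are not penalized.
    """
    n = len(y_true)
    data_term = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # clip so log() never sees 0
        data_term -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    data_term /= n
    # Sum of ||W^(l)||_F^2 over every weight matrix (one per non-input layer).
    l2 = sum(w * w for W in weight_matrices for row in W for w in row)
    return data_term + lam / (2 * n) * l2
```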

The embodiments of the present disclosure have now been described in detail with reference to the accompanying drawings. Implementations not shown or described in the drawings or the text take forms known to those of ordinary skill in the art and are not described in detail. In addition, the above definitions of the elements and methods are not limited to the specific structures, shapes, or manners mentioned in the embodiments, which those of ordinary skill in the art may simply modify or replace.

Based on the above description, those skilled in the art should have a clear understanding of the haptic-array-based method for discriminating a robot's grasping state of the present disclosure.

In summary, the present disclosure provides a haptic-array-based method for discriminating a robot's grasping state. Tactile data from a 16×10 sensing array are converted into grayscale images, which removes the physical differences between tactile sensors; a four-layer multilayer perceptron trained on the normalized data with He initialization, L2 regularization, and the Adam optimizer classifies each grasp as successful or failed, reaching 99.74% accuracy on the test set and supporting real-time adjustment of the grasping force.

It should also be noted that directional terms used in the embodiments, such as "up", "down", "front", "rear", "left", and "right", refer only to directions in the drawings and are not intended to limit the scope of protection of the present disclosure. Throughout the drawings, the same elements are denoted by the same or similar reference numerals. Conventional structures or configurations are omitted where they might obscure the understanding of the present disclosure.

Moreover, the shapes and sizes of the components in the drawings do not reflect their actual sizes and proportions but merely illustrate the embodiments of the present disclosure. In the claims, any reference signs placed between parentheses shall not be construed as limiting the claim.

Unless indicated to the contrary, the numerical parameters set forth in this specification and the appended claims are approximations that may vary depending on the desired properties sought from the teachings of the present disclosure. Specifically, all numbers used in the specification and claims to express compositional contents, reaction conditions, and the like should be understood as being modified in all instances by the term "about". In general, this means a variation of ±10% from the stated value in some embodiments, ±5% in some embodiments, ±1% in some embodiments, and ±0.5% in some embodiments.
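The tolerance bands in the paragraph above reduce to simple percentage arithmetic. The Python sketch below is illustrative only: the helper names (`about_range`, `within_about`) and the example value of 200 are hypothetical and do not come from the disclosure; only the ±10%, ±5%, ±1%, and ±0.5% bands do.

```python
"""Illustrative arithmetic for the "about" tolerance bands (hypothetical helpers)."""

# Tolerance bands listed in the text: ±10%, ±5%, ±1%, ±0.5%.
ABOUT_TOLERANCES = (0.10, 0.05, 0.01, 0.005)


def about_range(stated: float, tolerance: float) -> tuple[float, float]:
    """Return the closed interval stated ± |stated| * tolerance."""
    if tolerance < 0:
        raise ValueError("tolerance must be non-negative")
    delta = abs(stated) * tolerance  # abs() keeps low <= high for negative values
    return stated - delta, stated + delta


def within_about(measured: float, stated: float, tolerance: float) -> bool:
    """Return True if `measured` lies inside the band around `stated`."""
    low, high = about_range(stated, tolerance)
    return low <= measured <= high


if __name__ == "__main__":
    stated = 200.0  # hypothetical stated value, e.g. a dimension
    for tol in ABOUT_TOLERANCES:
        low, high = about_range(stated, tol)
        print(f"±{tol * 100:g}%: {low:g} to {high:g}")
```

Each embodiment simply selects a narrower band; for example, a measured 199.5 counts as "about 200" under the ±0.5% band, while 195 falls outside the ±1% band.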

Furthermore, the word "comprising" does not exclude the presence of elements or steps not listed in a claim. The word "a" or "an" preceding an element does not exclude the presence of a plurality of such elements.

Ordinal terms such as "first", "second", and "third" used in the description and the claims modify the corresponding elements. They do not by themselves imply any ordinal rank of those elements, nor any order of one element relative to another or any order in a manufacturing method; they are used only to clearly distinguish one element having a certain name from another element having the same name.

Furthermore, unless specifically described or required to occur in sequence, the order of the above steps is not limited to that listed above and may be varied or rearranged according to the desired design. The above embodiments may also be mixed and matched with one another or with other embodiments based on design and reliability considerations; that is, technical features in different embodiments may be freely combined to form further embodiments.

Those skilled in the art will understand that the modules in the device of an embodiment can be adaptively changed and arranged in one or more devices different from that embodiment. The modules, units, or components in the embodiments may be combined into one module, unit, or component, and may further be divided into multiple sub-modules, sub-units, or sub-components. Except where at least some of such features and/or processes or units are mutually exclusive, all features disclosed in this specification (including the accompanying claims, abstract and drawings), and all processes or units of any method or device so disclosed, may be combined in any combination. Unless expressly stated otherwise, each feature disclosed in this specification (including the accompanying claims, abstract and drawings) may be replaced by an alternative feature serving the same, an equivalent, or a similar purpose. Furthermore, in a device claim enumerating several means, several of these means may be embodied by one and the same item of hardware.

Similarly, it should be understood that, in the above description of exemplary embodiments of the disclosure, various features of the disclosure are sometimes grouped together into a single embodiment, figure, or description thereof in order to streamline the disclosure and aid the understanding of one or more of the various disclosed aspects. This method of disclosure, however, should not be interpreted as reflecting an intention that the claimed disclosure requires more features than are expressly recited in each claim. Rather, as the following claims reflect, disclosed aspects lie in fewer than all features of a single foregoing disclosed embodiment. Thus, the claims following the Detailed Description are hereby expressly incorporated into this Detailed Description, with each claim standing on its own as a separate embodiment of the present disclosure.

The specific embodiments described above further explain the purpose, technical solutions, and beneficial effects of the present disclosure in detail. It should be understood that they are merely specific embodiments of the present disclosure and are not intended to limit it. Any modification, equivalent replacement, improvement, etc. made within the spirit and principle of the present disclosure shall fall within its scope of protection.

Claims (10)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202010252733.XA (CN111459278A) | 2020-04-01 | 2020-04-01 | Discrimination method of robot grasping state based on haptic array |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202010252733.XA (CN111459278A) | 2020-04-01 | 2020-04-01 | Discrimination method of robot grasping state based on haptic array |

Publications (1)

| Publication Number | Publication Date |
|---|---|
| CN111459278A | 2020-07-28 |

Family ID: 71678992

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CN202010252733.XA (CN111459278A), Pending | Discrimination method of robot grasping state based on haptic array | 2020-04-01 | 2020-04-01 |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN111459278A |


2020

- 2020-04-01: CN202010252733.XA (CN111459278A), CN, active, Pending

Patent Citations (10)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| WO2011014810A1 * | 2009-07-30 | 2011-02-03 | Northwestern University | Systems, methods, and apparatus for reconstruction of 3-d object morphology, position, orientation and texture using an array of tactile sensors |
| CN103049792A * | 2011-11-26 | 2013-04-17 | Microsoft Corporation | Discriminative pretraining of Deep Neural Network |
| CN105956351A * | 2016-07-05 | 2016-09-21 | Shanghai Aerospace Control Technology Institute | Tactile information classification computing and modeling method based on machine learning |
| CN110023965A * | 2016-10-10 | 2019-07-16 | DeepMind Technologies Limited | Neural network for selecting actions to be performed by robotic agents |
| CN106671112A * | 2016-12-13 | 2017-05-17 | Tsinghua University | Method for judging the grasping stability of a mechanical arm based on tactile array information |
| CN106960099A * | 2017-03-28 | 2017-07-18 | Tsinghua University | Manipulator grasp stability recognition method based on deep learning |
| WO2018236753A1 * | 2017-06-19 | 2018-12-27 | Google LLC | Robotic grasping prediction using neural networks and geometry-aware object representation |
| CN110691676A * | 2017-06-19 | 2020-01-14 | Google LLC | Robotic grasping prediction using neural networks and geometry-aware object representation |
| US20200086483A1 * | 2018-09-15 | 2020-03-19 | X Development LLC | Action prediction networks for robotic grasping |
| CN110909644A * | 2019-11-14 | 2020-03-24 | Nanjing University of Science and Technology | Method and system for adjusting the grasping pose of a robotic arm end effector based on reinforcement learning |

Non-Patent Citations (2)

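| Title |
|---|
| Li Tiejun; Liu Yingxin; Liu Jinyue; Yang Dong: "Real-time perception of operation intention based on array tactile sensors" (基于阵列式触觉传感器的操作意图实时感知) * |
| Duan Lian: "Design and algorithm research of a half-palm hand system based on FSR sensors" (基于FSR传感器的半掌手系统设计及算法研究) * |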

Cited By (5)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN114065806A * | 2021-10-28 | 2022-02-18 | Guizhou University | Manipulator tactile data classification method based on a spiking neural network |
| CN114065806B * | 2021-10-28 | 2022-12-20 | Guizhou University | Manipulator tactile data classification method based on a spiking neural network |
| CN115519579A * | 2022-10-24 | 2022-12-27 | Shenzhen Institutes of Advanced Technology | A robot grasp prediction method based on a triplet contrastive network |
| WO2024087331A1 * | 2022-10-24 | 2024-05-02 | Shenzhen Institutes of Advanced Technology | Robotic grasping prediction method based on triplet contrastive network |
| CN115519579B * | 2022-10-24 | 2025-06-10 | Shenzhen Institutes of Advanced Technology | Robot grasp prediction method based on a triplet contrastive network |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| Deepa et al. | | Ensemble of multi-stage deep convolutional neural networks for automated grading of diabetic retinopathy using image patches |
| James et al. | | Tactile Model O: Fabrication and testing of a 3d-printed, three-fingered tactile robot hand |
| CN109657708B | | Workpiece recognition device and method based on image recognition-SVM learning model |
| Spiers et al. | | Single-grasp object classification and feature extraction with simple robot hands and tactile sensors |
| CN112295933B | | A method for a robot to quickly sort goods |
| CN104751229B | | Bearing fault diagnosis method capable of recovering missing data of back propagation neural network estimation values |
| CN114998573B | | Grabbing pose detection method based on RGB-D feature depth fusion |
| CN108010078A | | Object grasping detection method based on three-level convolutional neural networks |
| CN104063719A | | Method and device for pedestrian detection based on deep convolutional network |
| CN111459278A | | Discrimination method of robot grasping state based on haptic array |
| CN115471700A | | Knowledge transfer-based image classification model training method and classification method |
| Yan et al. | | A robotic grasping state perception framework with multi-phase tactile information and ensemble learning |
| Xiong et al. | | Robotic multifinger grasping state recognition based on adaptive multikernel dictionary learning |
| Zhu et al. | | Visual-tactile sensing for real-time liquid volume estimation in grasping |
| CN111582395B | | Product quality classification system based on convolutional neural network |
| Liu et al. | | Comparison of different CNN models in tuberculosis detecting |
| Li et al. | | Frogger: Fast robust grasp generation via the min-weight metric |
| CA3002100A1 | | Unsupervised domain adaptation with similarity learning for images |
| CN116433636A | | An intelligent detection method based on deep reinforcement learning and large database |
| Parag et al. | | Learning incipient slip with GelSight sensors: Attention classification with video vision transformers |
| Li et al. | | Learning gentle grasping from human-free force control demonstration |
| Yang et al. | | Drop to transfer: Learning transferable features for robot tactile material recognition in open scene |
| CN117621145B | | A flexible robotic arm system for fruit maturity detection based on FPGA |
| Chen et al. | | Three-dimension object detection and forward-looking control strategy for non-destructive grasp of thin-skinned fruits |
| CN118133012A | | Volume prediction method based on enhanced attention bidirectional long short-term memory network |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | RJ01 | Rejection of invention patent application after publication | Application publication date: 20200728 |