CN111414846A - Group behavior identification method based on key spatiotemporal information-driven and group co-occurrence structured analysis - Google Patents


- Publication number

- CN111414846A

- Authority

- CN

- China

- Prior art keywords

- group

- network

- key

- occurrence

- stm

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V40/00—Recognition of biometric, human-related or animal-related patterns in image or video data

- G06V40/20—Movements or behaviour, e.g. gesture recognition

- G06V40/23—Recognition of whole body movements, e.g. for sport training

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/24—Classification techniques

- G06F18/241—Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/044—Recurrent networks, e.g. Hopfield networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/06—Physical realisation, i.e. hardware implementation of neural networks, neurons or parts of neurons

- G06N3/061—Physical realisation, i.e. hardware implementation of neural networks, neurons or parts of neurons using biological neurons, e.g. biological neurons connected to an integrated circuit

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V20/00—Scenes; Scene-specific elements

- G06V20/40—Scenes; Scene-specific elements in video content

- G06V20/41—Higher-level, semantic clustering, classification or understanding of video scenes, e.g. detection, labelling or Markovian modelling of sport events or news items

-

- Y—GENERAL TAGGING OF NEW TECHNOLOGICAL DEVELOPMENTS; GENERAL TAGGING OF CROSS-SECTIONAL TECHNOLOGIES SPANNING OVER SEVERAL SECTIONS OF THE IPC; TECHNICAL SUBJECTS COVERED BY FORMER USPC CROSS-REFERENCE ART COLLECTIONS [XRACs] AND DIGESTS

- Y02—TECHNOLOGIES OR APPLICATIONS FOR MITIGATION OR ADAPTATION AGAINST CLIMATE CHANGE

- Y02A—TECHNOLOGIES FOR ADAPTATION TO CLIMATE CHANGE

- Y02A90/00—Technologies having an indirect contribution to adaptation to climate change

- Y02A90/10—Information and communication technologies [ICT] supporting adaptation to climate change, e.g. for weather forecasting or climate simulation

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- Theoretical Computer Science (AREA)

- General Physics & Mathematics (AREA)

- Health & Medical Sciences (AREA)

- Life Sciences & Earth Sciences (AREA)

- Biomedical Technology (AREA)

- Biophysics (AREA)

- Data Mining & Analysis (AREA)

- Computational Linguistics (AREA)

- Molecular Biology (AREA)

- General Engineering & Computer Science (AREA)

- Evolutionary Computation (AREA)

- Software Systems (AREA)

- Artificial Intelligence (AREA)

- General Health & Medical Sciences (AREA)

- Computing Systems (AREA)

- Mathematical Physics (AREA)

- Multimedia (AREA)

- Neurology (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Psychiatry (AREA)

- Social Psychology (AREA)

- Human Computer Interaction (AREA)

- Evolutionary Biology (AREA)

- Microelectronics & Electronic Packaging (AREA)

- Bioinformatics & Computational Biology (AREA)

- Image Analysis (AREA)

Abstract

The invention discloses a group behavior recognition method driven by key spatiotemporal information and structured analysis of group co-occurrence. The method 1) obtains the importance weight of each group member from a key-person candidate sub-network; 2) feeds the individual importance weights and the bounding-box features into the main network's CNN to obtain the spatial features passed to the stacked LSTM network; 3) takes the output of 2) as input for co-occurrence feature modeling, grouping the neurons inside the stacked LSTM so that different groups learn different co-occurrence features, which yields the group feature; 4) feeds the bounding-box features into a key-time-segment candidate sub-network for feature extraction to obtain the importance weight of the current frame; 5) combines the group feature from 3) with the frame importance weight from 4) to obtain the group feature of the current frame, and feeds it to softmax to classify the group behavior. The scheme uses key spatiotemporal information to extract the features of important group members and the key scene frames, and uses co-occurrence to handle the interaction information within the group behavior, thereby improving recognition accuracy.

Description

Technical field

The invention relates to the field of group behavior recognition, and in particular to a group behavior recognition method driven by key spatiotemporal information and structured analysis of group co-occurrence.

Background

In recent years, human action recognition in video has made remarkable progress in computer vision. It is widely applied in practice, for example in intelligent video surveillance, abnormal event detection, sports analysis and the understanding of social behavior, which gives group behavior recognition scientific relevance and economic value. A group behavior is a complex activity carried out jointly by several people. The central problems in group behavior recognition are the study of individual features and how to infer the group behavior from the individuals.

"A Hierarchical Deep Temporal Model for Group Activity Recognition", published at CVPR in 2016, builds a deep model that captures dynamics with LSTM models. It proposes a deep architecture that models group activity in an LSTM network: the first stage models individual activities, and the person-level information is then combined to represent the group activity. The temporal representation is based on long short-term memory (LSTM) networks, and the goal is to exploit the discriminative information in the hierarchy between individual behaviors and group activity. However, although the method uses a two-layer LSTM network, it simply combines individual features to represent the group behavior. It cannot exploit the interactions between individuals and cannot identify the key persons in the group, so its recognition accuracy is low. Moreover, individuals differ in how much they matter for recognizing the group behavior, and modeling every person in the same simple way further reduces accuracy.

In addition, "Region based multi-stream convolutional neural networks for collective activity recognition", published in the Journal of Visual Communication and Image Representation in 2019, proposes a person-region-based multi-stream architecture for group activity recognition. Besides the whole-image information, the method analyzes multiple local regions. It accounts well for person-person and group-person interactions, but because it does not make good use of LSTM networks it cannot capture the temporal information of the video well, and it does not fully consider the optical-flow motion of individuals. Its fusion strategies, such as Sum Fusion, Max Fusion and Concatenation Fusion, are hand-designed and do not yield a good feature representation.

Summary of the invention

To recognize the behavior of each individual in a group accurately, and to infer the group behavior from the individuals and the interactions between them, the invention proposes a group behavior recognition method driven by key spatiotemporal information and structured analysis of group co-occurrence.

The invention is realized by the following technical scheme: a group behavior recognition method driven by key spatiotemporal information and structured analysis of group co-occurrence, comprising the following steps:

Step A: For the video to be recognized, track each member in the video, obtain the bounding-box images $x_t$, and feed them in temporal order to the key-person candidate sub-network, which extracts static and dynamic features, recognizes the individual behavior attributes, and yields the individual importance weights $\alpha_t$;

Step B: Feed the individual importance weights $\alpha_t$ from Step A and the individual bounding-box images $x_t$ into the main network's CNN for processing, obtaining the spatial features $X'_t = x_t * \alpha_t$ that are passed to the stacked LSTM network;

Step C: Take the output of Step B as input for co-occurrence feature modeling, and group the neurons of the stacked LSTM so that different groups learn different co-occurrence features, obtaining the group feature $Z_t$;

Step D: Feed the bounding-box images $x_t$ from Step A into the key-time-segment candidate sub-network for feature extraction, obtaining the importance weight $\beta_t$ of the current frame;

Step E: Combine the group feature $Z_t$ from Step C with the frame importance weight $\beta_t$ from Step D to obtain the group feature $Z'_t$ of the current frame, and feed $Z'_t$ to softmax to complete the group behavior recognition.

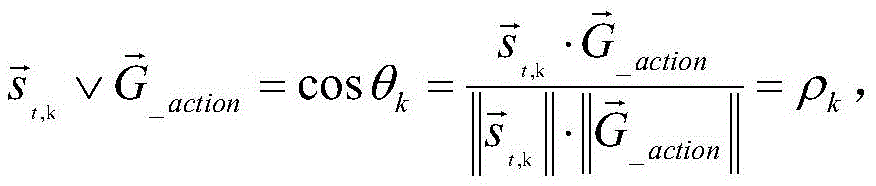

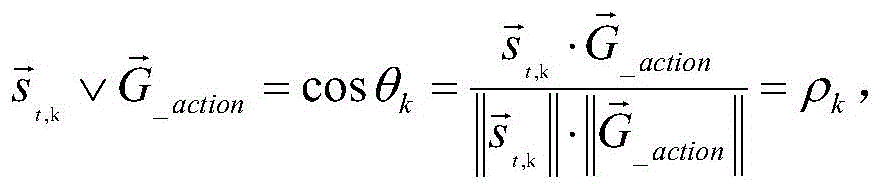

Further, in Step A the individual importance weight $\alpha_t$ is obtained as follows:

(1) First, build the key-person candidate sub-network, which consists of a CNN and LSTM layer, a fully connected layer, a tanh activation function and a fully connected layer connected in series;

(2) Second, obtain the behavior attribute scores of the group members:

At time $t$, suppose the scene contains $M$ members. The set of bounding-box features extracted by the CNN is $x_t = (x_{t,1}, \ldots, x_{t,M})^T$ and the behavior attribute scores are $s_t = (s_{t,1}, \ldots, s_{t,M})^T$. The scores express the behavior-category judgments for the $M$ members and are given by:

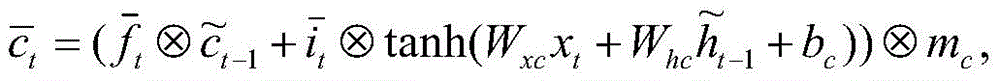

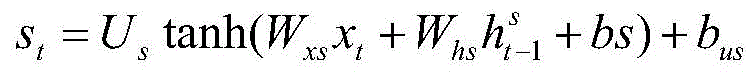

where $T$ is the length of the video sequence, $U_s$, $W_{xs}$, $W_{hs}$ are learnable parameter matrices, $b_s$, $b_{us}$ are bias vectors, and the hidden variable comes from the LSTM layer.

(3) Finally, obtain the importance weight of each member and thereby determine the key persons:

Given the set of group behavior categories $G_{action} = (A_1, A_2, \ldots, A_q)^T$, for the $k$-th person compute the projection of the behavior attribute score $s_{t,k}$ onto $G_{action}$, measured by the cosine similarity of these two multidimensional vectors:

Here $\|\cdot\|$ denotes the 2-norm;

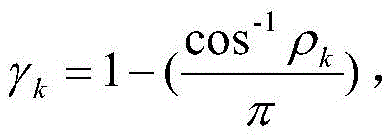

Converted into a normalized cosine-angle coefficient, this becomes:

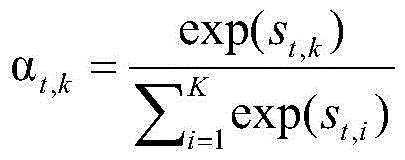

The importance weight of each person in the spatial scene is computed by the following formula:

$\alpha_{t,k}$ quantifies how much each member contributes to the group behavior recognition task.
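The weighting formula itself survives only as an image in the source, so the following is a minimal sketch of the idea rather than the patented formula. It assumes that each person's score vector and the group behavior are vectors of the same length, and that the cosine similarities are clipped at zero and divided by their sum. The names `cosine_similarity` and `importance_weights` are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def importance_weights(scores, group_action):
    """Per-person weights from cosine similarity to the group behavior vector.

    Assumption (not from the source): negative similarities are clipped to
    zero and the result is normalised to sum to one.
    """
    sims = [max(cosine_similarity(s, group_action), 0.0) for s in scores]
    total = sum(sims)
    if total == 0.0:
        # No person is aligned with the group behavior: fall back to uniform weights.
        return [1.0 / len(scores)] * len(scores)
    return [c / total for c in sims]


# Three people, three behavior categories; the group behavior is category 0.
scores = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1], [0.0, 0.0, 1.0]]
group_action = [1.0, 0.0, 0.0]
alpha = importance_weights(scores, group_action)
```

Under these assumptions the first person, whose scores point most strongly at the group behavior, receives the largest weight, and a person whose scores are orthogonal to it receives zero.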

Further, Step B is implemented as follows:

(1) First, modulate by the importance weight to obtain the spatial feature of the $k$-th person at time $t$:

$x'_{t,k} = \alpha_{t,k} \cdot x_{t,k}$

(2) Then aggregate all importance-modulated individual spatial features as the input to the stacked LSTM in the main network, which gives:

$X'_t = (x'_{t,1}, \ldots, x'_{t,K})$.
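The modulation in Step B is a per-person scaling followed by aggregation. A minimal sketch, assuming each feature is a plain list of floats and using the hypothetical helper name `modulate`:

```python
def modulate(features, alpha):
    """Scale each person's feature vector by that person's importance weight.

    features: one feature vector x_{t,k} per person
    alpha:    one importance weight alpha_{t,k} per person
    Returns the aggregated input X'_t as a list of scaled vectors.
    """
    if len(features) != len(alpha):
        raise ValueError("need exactly one weight per person")
    return [[a * x for x in feat] for feat, a in zip(features, alpha)]


# Two people with two-dimensional features.
x_t = [[2.0, 4.0], [8.0, 4.0]]
alpha_t = [0.5, 0.25]
X_prime_t = modulate(x_t, alpha_t)
```

A weight near zero suppresses a person's features before they reach the stacked LSTM, which is how the sub-network controls what the main network sees.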

Further, in Step C the co-occurrence features are learned as follows:

Step C1: First build an end-to-end fully connected deep LSTM network model for automatic learning of temporal features and for motion modeling;

LSTM layers and feedforward layers are deployed alternately to form a deep network that captures motion information. Each feedforward layer sits between two LSTM layers, so that the neurons of each layer are fully connected to the neurons of the next layer;

Step C2: Then group the neurons of the stacked LSTM and add to the objective function a constraint on the weights connecting individual members to neurons. Neurons in the same group then have larger connection weights to a subset of certain members and smaller connection weights to the other nodes, which exposes the co-occurrence of individual members.

Further, in Step C1, to ensure that the fully connected deep LSTM network model learns effective features, different types of regularization are applied to different parts of the model. Two types are used:

1) For the fully connected layers, a regularization is introduced that drives the model to learn the co-occurrence features of individuals at different layers, as well as the co-occurrence features of nodes between LSTM layers;

2) For the LSTM neurons, a new Dropout layer is derived and applied to the LSTM neurons in the last LSTM layer.

Further, in Step C2, co-occurrence is mined and exploited by adding a group sparsity constraint to the connections between each group of neurons and the individual members:

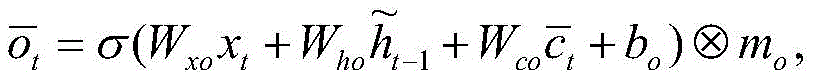

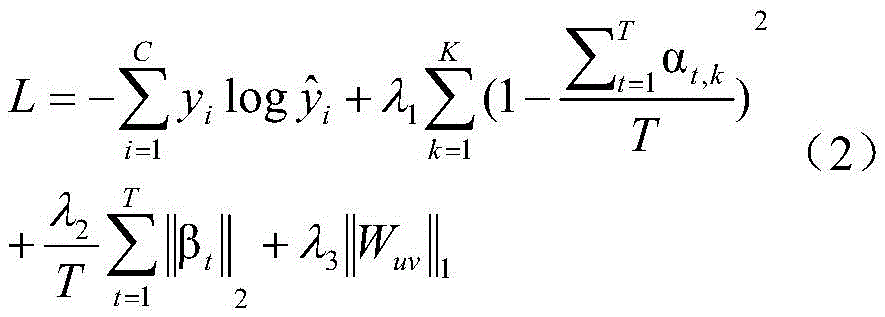

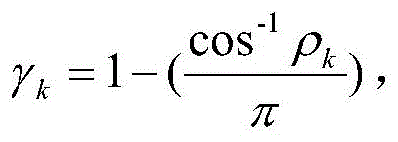

(1) Group the neurons of each LSTM layer according to the number of group behavior categories. For the $K$-th group of neurons, training lets the group distinguish different individual behaviors automatically. A co-occurrence regularization is added to the loss function:

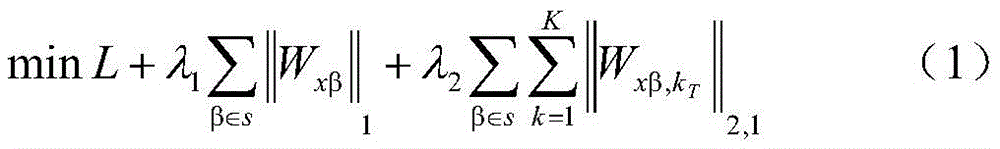

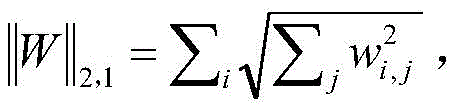

where $L$ is the maximum-likelihood loss function of the deep LSTM network and $W_{x\beta} = [W_{x,1}; \ldots; W_{x,K}]$ is the weight matrix connecting the input units to $\beta$. Let $N$ be the number of neurons, divided into $\theta$ groups with $\varepsilon = [N/\theta]$ neurons per group. For an LSTM layer, $S = \{i, f, o, c\}$ denotes the input gate, forget gate, output gate and cell unit of an LSTM neuron; for a feedforward layer, $S = \{h\}$ denotes the neuron itself;

The second term in formula (1) is an L1 regularization, used during training to determine the relatively important subset of key persons. In the third term, because the L2 norm encourages the matrix $W_{x\beta,k}$ to become sparse, an L2 norm is defined for each group of units to drive it to select features with different descriptive power as input, so that different neuron groups explore different co-occurrence features. The objective is then solved by gradient descent.
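Formula (1) appears only as an image in the source. The sketch below therefore assumes a common group-sparsity form: the base loss plus an L1 penalty on the whole weight matrix, plus, for each neuron group, the sum over input columns of the L2 norm of that column restricted to the group's rows. The coefficients `lam1` and `lam2`, the row/column layout and all function names are assumptions made for illustration.

```python
import math


def l1_penalty(W):
    """Sum of absolute values of all weights (second term, assumed form)."""
    return sum(abs(w) for row in W for w in row)


def group_l21_penalty(W, num_groups):
    """Group-sparsity term (third term, assumed form).

    W has one row per neuron and one column per input (e.g. per member).
    Rows are split into consecutive groups of ceil(N / num_groups) neurons.
    For every group and every input column, take the L2 norm of the column
    inside that group, then sum all of these norms.
    """
    n = len(W)
    size = math.ceil(n / num_groups)
    total = 0.0
    for start in range(0, n, size):
        block = W[start:start + size]
        for j in range(len(W[0])):
            total += math.sqrt(sum(row[j] ** 2 for row in block))
    return total


def regularised_loss(base_loss, W, num_groups, lam1, lam2):
    """Base loss plus the two weighted penalties."""
    return base_loss + lam1 * l1_penalty(W) + lam2 * group_l21_penalty(W, num_groups)


# Four neurons in two groups, two inputs. Group 1 only looks at input 0,
# group 2 only at input 1, which is the pattern the penalty favours.
W = [[3.0, 0.0], [4.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
penalty = group_l21_penalty(W, num_groups=2)
loss = regularised_loss(1.0, W, num_groups=2, lam1=0.1, lam2=0.5)
```

Because a column contributes nothing once all of its weights inside a group are zero, minimising this term pushes each neuron group to connect to only a subset of the inputs.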

Further, Step D is implemented as follows:

(1) First, build the key-time-segment candidate sub-network, which consists of a CNN and LSTM layer, a fully connected layer and a ReLU nonlinear unit connected in series;

(2) From the video sequence fed to this sub-network, the ReLU unit gives the behavior attribute scores of the current frame: $o_t = \mathrm{ReLU}(w_{x'} x_t + w_{h'} h'_{t-1} + b') = (o_1, o_2, \ldots, o_C)_t$, where $C$ is the total number of behavior categories and $t$ denotes the current frame. The value of $o_t$ depends on the current input $x_t$ and on the hidden state $h'_{t-1}$ of the LSTM layer at time $t-1$;

(3) Finally, from the degree of association between the behavior attribute scores of the current frame and the group behavior attributes, obtain the importance weight $\beta_t$ of the current frame within the input sequence $T$:

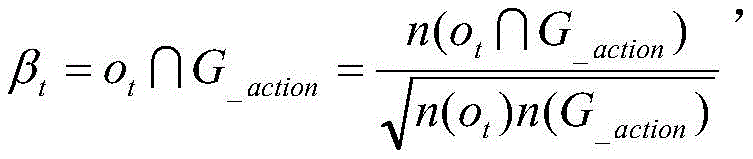

Given the set of group behavior categories $G_{action} = (A_1, A_2, \ldots, A_q)^T$, the temporal importance weight $\beta_t$ can be expressed as the intersection similarity coefficient between this set and the current-frame behavior attribute scores $o_t = (o_1, o_2, \ldots, o_C)_t$, namely:

where $\cap$ denotes set intersection and $n(\cdot)$ denotes the number of elements in a set.
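The formula for $\beta_t$ is missing from the extracted text. The sketch below shows one way the pieces could fit together, and every choice beyond the ReLU expression is an assumption: a category counts as present in the frame when its ReLU score is positive, and the intersection similarity coefficient is taken to be the intersection size divided by the union size. The behavior labels and function names are hypothetical.

```python
def relu_scores(w_x, x_t, w_h, h_prev, bias):
    """o_t = ReLU(w_x x_t + w_h h_prev + bias), with matrices given as lists of rows."""
    scores = []
    for row_x, row_h, b in zip(w_x, w_h, bias):
        pre = sum(a * v for a, v in zip(row_x, x_t))
        pre += sum(a * v for a, v in zip(row_h, h_prev))
        scores.append(max(pre + b, 0.0))
    return scores


def active_categories(scores, labels):
    """Assumption: a category is present in the frame if its score is positive."""
    return {label for label, s in zip(labels, scores) if s > 0.0}


def frame_importance(group_actions, frame_actions):
    """Assumed form of the coefficient: n(A intersect B) / n(A union B)."""
    union = group_actions | frame_actions
    if not union:
        return 0.0
    return len(group_actions & frame_actions) / len(union)


labels = ["walking", "talking", "queueing"]
w_x = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
w_h = [[0.0], [0.0], [0.0]]
o_t = relu_scores(w_x, [0.5, 0.0], w_h, [0.0], [0.0, 0.0, 0.0])
beta_t = frame_importance({"walking", "talking"}, active_categories(o_t, labels))
```

With these toy values only "walking" is active in the frame, so it shares one of the two group categories and the frame weight is one half.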

Further, in Step E the group behavior is recognized from the group feature $Z_t$ and the frame importance weight $\beta_t$ as follows:

(1) First, compute the frame-level group feature at time $t$:

$Z'_t = Z_t \cdot \beta_t$

(2) Then feed it to the softmax layer for the final group behavior recognition:

$y = \mathrm{softmax}(Z'_t)$

where $y$ is the group behavior category.
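Step E can be sketched in a few lines. This is an illustration under the assumption that $Z_t$ is a vector with one entry per group behavior category and $\beta_t$ is a scalar; the function names are hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def classify(z_t, beta_t):
    """Scale the group feature by the frame weight, then apply softmax.

    Returns (index of the most probable category, list of probabilities).
    """
    weighted = [beta_t * z for z in z_t]
    probs = softmax(weighted)
    return probs.index(max(probs)), probs


z_t = [1.0, 3.0, 2.0]
label, probs = classify(z_t, beta_t=0.5)
```

A frame with a small weight flattens the softmax output, so unimportant frames contribute a less confident prediction; at a weight of zero the distribution is uniform.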

Further, given the complexity of the model, the main network, the key-person candidate sub-network and the key-time-segment candidate sub-network are trained jointly. The joint training procedure is as follows:

Input: the numbers of training iterations $N_1$, $N_2$;

(1) Initialize the network parameters with a Gaussian function;

(2) Fix the weights of the key-person candidate sub-network and jointly train the key-time-segment candidate sub-network and the main network, with the main network having only one LSTM layer, to obtain the key-time-segment candidate model;

(3) Iterate repeatedly; after $N_1$ iterations have increased the LSTM layers to three, train the main network;

(4) Fine-tune the main network and the key-time-segment candidate sub-network for $N_2$ iterations;

(5) Fix the key-time-segment candidate sub-network and jointly train the key-person candidate sub-network and the main network, with only one LSTM layer, to obtain the key-person candidate sub-network;

(6) Iterate repeatedly; after $N_1$ iterations have increased the LSTM layers to three, train the main network;

(7) Fine-tune the main network and this key-time-segment candidate sub-network for $N_2$ iterations;

(8) Jointly train the sub-networks obtained in (4) and (7) for $N_1$ iterations;

(9) Fine-tune the whole network model for $N_2$ iterations;

Output: the converged group behavior recognition model.

Compared with the prior art, the advantages and positive effects of the invention are:

The proposed group behavior recognition method, based on spatiotemporal importance and co-occurrence, uses an importance mechanism to attend to the important individual behaviors within a group behavior and extracts the more important individual features and scene frames. It uses co-occurrence to handle the interaction information within the group behavior. Combining spatiotemporal importance with co-occurrence makes better use of individual features and of the key information among the many available cues, which improves accuracy and efficiency and gives the method scientific and economic value.

Description of the drawings

FIG. 1 is a schematic diagram of the overall network architecture according to an embodiment of the invention;

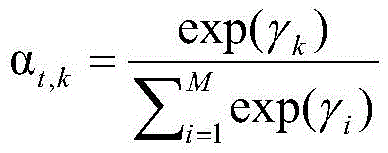

FIG. 2 shows the internal structure of the main network's stacked LSTM according to an embodiment of the invention;

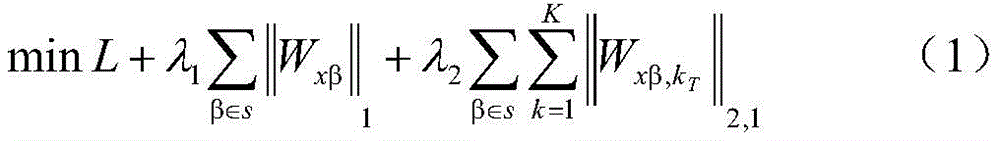

FIG. 3 is a schematic diagram of the neuron grouping within each LSTM layer according to an embodiment of the invention;

FIG. 4 is a schematic diagram of the internal structure of an LSTM neuron according to an embodiment of the invention.

Detailed description

To make the above objects, features and advantages of the invention clearer, the invention is further described below with reference to the drawings and embodiments. Many specific details are set out in the following description to aid understanding. The invention can also be implemented in ways other than those described here, so it is not limited to the specific embodiments disclosed below.

To recognize the behavior of each individual in a group accurately, and to infer the group behavior from the individuals and the interactions between them, this embodiment proposes a group behavior recognition method driven by key spatiotemporal information and structured analysis of group co-occurrence, comprising the following steps:

Step A: For the video to be recognized, track each member in the video, obtain the bounding-box images $x_t$, and feed them in temporal order to the key-person candidate sub-network, which extracts static and dynamic features, recognizes the individual behavior attributes, and yields the individual importance weights $\alpha_t$;

Step B: Feed the individual importance weights $\alpha_t$ from Step A and the individual bounding-box images $x_t$ into the main network, where they are multiplied to obtain the spatial features $X'_t = x_t * \alpha_t$ that are passed to the main network's stacked LSTM;

Step C: Take the output of Step B as input for co-occurrence feature modeling, and group the neurons of the stacked LSTM so that different groups learn different co-occurrence features, obtaining the group feature $Z_t$;

Step D: Feed the bounding-box images $x_t$ from Step A into the key-time-segment candidate sub-network for feature extraction, obtaining the importance weight $\beta_t$ of the current frame;

Step E: Combine the group feature $Z_t$ from Step C with the frame importance weight $\beta_t$ from Step D to obtain the group feature $Z'_t$ of the current frame, and feed it to softmax to complete the group behavior recognition.

To recognize group behavior accurately and efficiently, the scheme uses a main network and two sub-networks (the key-person candidate sub-network and the key-time-segment candidate sub-network). The main network extracts features, exploits spatiotemporal correlation and performs the final classification. The key-person candidate sub-network assigns an appropriate importance to each individual, and the key-time-segment candidate sub-network assigns an appropriate importance to each frame. Single-person behavior recognition is not performed in the main network. Instead, the sub-network infers each person's behavior directly to rank the key persons, and this ranking controls which useful information enters the main network.

1. In practice, although a group activity is carried out by many people, the group behavior is usually determined by a few members (the key persons), who complete it within a certain time period. The scheme therefore uses two sub-networks that attend to the key-person information, shielding the model from the useless information of other members and improving recognition accuracy. The two sub-networks are the key-person candidate sub-network and the key-time-segment candidate sub-network. The key-person candidate sub-network ranks the importance of the spatial positions of the group members according to the association between individual behavior and group behavior, and uses this ranking to control the input of the CNN spatial information in the main network. The key-time-segment candidate sub-network weighs the importance of the temporal information output by the main network's stacked LSTM according to the association between the categories of member behavior and the group behavior, attends to the useful time segments, and refines the information fed to the softmax classification layer. Together the two sub-networks distill the "key moment information" of the group behavior and shield the model from the noise of irrelevant people in the group.

2. In addition, in a complex group activity a given behavior is usually carried out cooperatively by several specific individuals within the group. The group members form structured sub-groups, and the members of such a small set interact closely. The scheme therefore concentrates on the core sub-group formed by the key persons and reduces the influence of unrelated members on recognition. The property that the members of a core sub-group interact cooperatively and thereby determine the group behavior is called co-occurrence. There are many kinds of group behavior, so there are correspondingly many relatively "stable co-occurrence sub-groups". These sub-groups vary over time, and in any given time segment only one "co-occurrence sub-group" plays the dominant role.

为了刻画组群中的这样“共现性小组”,本方案中在主网络中设计了三层联合迭代的双向LSTM层,并对每一层LSTM神经元进行分组,每个神经元小组只关注一种组群行为类别,小组内的每个神经元需要连接组群中的每个成员,(比如,若将一个LSTM内的神经元分为6组,即可以专注6种不同小组行为,且这六组关注的行为属性是不变的)。这样,经过训练会使同一组中的神经元对某一特定行为的若干个体组成的子集有着更大的权重连接,而对其他一些相关程度较小的个体有较小的权重连接,以此种方法学习共现性特征,突显了关键核心小群体的共现性时序信息,再经过关键时间片段候选子网络特征学习后,可以进一步抑制LSTM中冗长无用信息,提升了共现性时序信息信噪比,作为分类层softmax的输入,进而提高组群行为识别精度。In order to describe such a "co-occurrence group" in the group, in this scheme, a three-layer joint iterative bidirectional LSTM layer is designed in the main network, and the LSTM neurons in each layer are grouped, and each neuron group only focuses on A category of group behavior, each neuron in the group needs to connect each member of the group, (for example, if the neurons in an LSTM are divided into 6 groups, you can focus on 6 different group behaviors, and The behavioral properties of these six groups of concern are invariant). In this way, neurons in the same group are trained to have more weighted connections to a subset of several individuals for a particular behavior, and less weighted connections to some other less related individuals, so that This method learns the co-occurrence features, which highlights the co-occurrence timing information of the key core small groups, and after learning the key time segment candidate sub-network features, it can further suppress the redundant and useless information in the LSTM, and improve the co-occurrence timing information information. The noise ratio is used as the input of the classification layer softmax, thereby improving the accuracy of group behavior recognition.

The group behavior recognition method of this embodiment is described in detail below.

In step A, the personal importance weight $\alpha_t$ is obtained as follows:

(1) First, establish the key person candidate sub-network. As shown in Figure 1, it consists of CNN and LSTM layers connected in series, followed by a fully connected layer, a tanh activation function, and a second fully connected layer;

(2) Second, obtain the behavior attribute scores of the group members, specifically:

At time $t$, suppose the scene contains $M$ members whose bounding-box features, extracted by the CNN, are $x_t = (x_{t,1}, \ldots, x_{t,M})^T$. The behavior attribute scores $s_t = (s_{t,1}, \ldots, s_{t,M})^T$ represent the behavior category judgments for the $M$ members and are obtained by the following formula:

where $T$ is the video sequence length, $U_s$, $W_{xs}$, $W_{hs}$ are learnable parameter matrices, $b_s$, $b_{us}$ are bias vectors, and the hidden variable comes from the LSTM layer;
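A form of the score formula consistent with these parameters and with the fully connected, tanh, fully connected structure of step (1) is sketched below. The name $h^{s}_{t-1}$ for the LSTM hidden variable is an assumption, and the expression is a reconstruction under that reading rather than the patent's verbatim equation.

```latex
s_t = U_s \tanh\left( W_{xs}\, x_t + W_{hs}\, h^{s}_{t-1} + b_s \right) + b_{us}
```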

(3) Finally, obtain the importance weight of each member and thereby determine the key persons, specifically:

Given the set of group behavior categories $G_{action} = (A_1, A_2, \ldots, A_q)^T$, compute for the $k$-th person the projection of the behavior attribute score $s_{t,k}$ onto $G_{action}$. This projection can be measured by the cosine similarity of the two multidimensional vectors, where $\|\cdot\|$ denotes the 2-norm.

The similarity is then converted into a cosine-angle normalization coefficient, from which the importance weight of each person in the spatial scene is calculated.

The weight $\alpha_{t,k}$ determines how much each member contributes to the group behavior recognition task, and therefore controls how much of that member's information flows into the main network.
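The weighting described above can be sketched in Python as follows. This is a minimal illustration, not the patent's formula: it assumes each member's score and the group behavior set are both encoded as $q$-dimensional vectors, and it assumes a simple normalization (negative similarities clipped to zero, then divided by the sum over members), since the normalization formula is not given in the text. The function names and toy numbers are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # convention chosen here for an all-zero vector
    return dot / (norm_u * norm_v)


def importance_weights(scores, group_action):
    """Assumed normalization: clip negative similarities to 0, then
    divide by the sum over all members so the weights add up to 1."""
    sims = [max(cosine_similarity(s, group_action), 0.0) for s in scores]
    total = sum(sims)
    if total == 0.0:
        return [1.0 / len(scores)] * len(scores)  # fall back to uniform
    return [c / total for c in sims]


# Three members, q = 3 behavior categories (toy numbers).
scores = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 1.0, 0.0]]
group_action = [1.0, 0.0, 0.0]
alpha = importance_weights(scores, group_action)
```

In this toy case the member whose score is orthogonal to the group behavior receives weight 0, and the member aligned with it receives the largest weight.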

In step B, the personal importance weights $\alpha_t$ obtained in step A are multiplied with the personal bounding-box features $x_t$ input to the main-network CNN, giving the features $X'_t = x_t * \alpha_t$ that are fed to the stacked LSTM network. Specifically:

(1) First, modulate by the importance weight to obtain the spatial feature of the $k$-th person at time $t$:

$$x'_{t,k} = \alpha_{t,k} \cdot x_{t,k}$$

(2) Then, aggregate all importance-modulated individual spatial features as the input of the stacked LSTM:

$$X'_t = (x'_{t,1}, \ldots, x'_{t,M})$$
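The two operations of step B amount to a per-member scaling followed by stacking. A minimal Python sketch, with hypothetical function and variable names and toy feature vectors:

```python
def modulate_features(features, alpha):
    """Scale each member's feature vector x_{t,k} by its weight alpha_{t,k}
    and return the stacked result X'_t (one row per member)."""
    if len(features) != len(alpha):
        raise ValueError("one weight per member is required")
    return [[a * value for value in x] for x, a in zip(features, alpha)]


# Three members with 2-dimensional bounding-box features (toy numbers).
x_t = [[0.2, 0.4], [1.0, 3.0], [0.5, 0.5]]
alpha_t = [0.5, 0.0, 2.0]
X_prime_t = modulate_features(x_t, alpha_t)
```

A weight of 0 removes a member's contribution entirely, while the member keeps its row so the input shape seen by the stacked LSTM stays fixed.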

The key person candidate sub-network proposed in this embodiment determines the importance of each individual's behavior from all individuals at the current time together with the hidden variables of the LSTM layer. Its purpose is to assign an importance weight to each individual in the group activity. Because the LSTM hidden variable $h_{t-1}$ carries information from past frames, the sub-network can capture long-term dynamics.

In step C, the output of step B is used as input for co-occurrence feature modeling: the neurons of the stacked LSTM are divided into groups, different groups learn different co-occurrence features, and the group feature $Z_t$ is obtained. Specifically:

(1) First, build an end-to-end, fully connected, deep stacked LSTM network for temporal feature learning and motion modeling. To model the complex relationships between individuals reliably, LSTM layers and feedforward layers are deployed alternately to form a deep network that captures motion information. As shown in Figure 2, a feedforward layer sits between two LSTM layers so that every neuron in one layer is fully connected to the neurons of the next layer; there are no connections between neurons within the same layer and no connections that skip layers.

To ensure that the model learns effective features, different types of regularization are applied to different parts of the model to alleviate overfitting. (The model is a deep network composed of fully connected LSTM layers and feedforward layers; its relatively complex structure makes it prone to overfitting, so the regularization is designed to reduce model complexity.) Specifically, this embodiment proposes two types of regularization:

1) For the fully connected layers, regularization is introduced to drive the model to learn the co-occurrence features of individuals at different layers, as well as the co-occurrence features of the nodes between LSTM layers;

2) For the LSTM neurons, a new dropout layer is derived and applied to the LSTM neurons in the last LSTM layer, which helps the network learn complex motion dynamics.

(2) Then, group the internal neurons of the stacked LSTM and add to the objective function a constraint on the weights connecting individual members to neurons, so that neurons in the same group have larger connection weights to a subset of members and smaller connection weights to other nodes, thereby mining the co-occurrence of individual members.

As shown in Figure 3, the main-network LSTM layer consists of a number of LSTM neurons divided into $K$ groups. The neurons in one group jointly have larger connection weights to certain individuals (that is, to the subset of members closely related to a particular group behavior) and smaller connection weights to the other individuals. Different neuron groups are sensitive to different group behaviors, which shows up as different groups having different subsets of individuals with large connection weights. In implementation, this co-occurrence can be mined and exploited by adding a group sparsity constraint to the connections between each neuron group and the individual members.

1) Group the neurons of each LSTM layer according to the number of group behavior categories; for example, 10 behavior categories give 10 groups. Every neuron is fully connected to every individual, but individuals differ in importance. Through training, the neurons learn which individuals matter, which highlights the important subgroup.

This embodiment therefore designs a fully connected main network in which each neuron may connect to any individual, so that co-occurrence features within the group are explored automatically. The neurons in one layer are divided into $\theta$ groups, so that different groups can specialize in discriminating different behavior categories. Taking the $k$-th group of neurons as an example, after training this group automatically distinguishes different individual behaviors. (Regularization serves two purposes here: preventing overfitting, and injecting prior information that steers the model toward the desired behavior.) The co-occurrence regularization added to the loss function is designed as follows:

where $L$ is the maximum likelihood loss function of the deep LSTM network and $W_{x\beta} = [W_{x\beta,1}; \ldots; W_{x\beta,K}]$ is the weight matrix connected to input unit $\beta$. Let $N$ be the number of neurons; these $N$ neurons are divided into $\theta$ groups of $\varepsilon = [N/\theta]$ neurons each. For an LSTM layer, $S = \{i, f, o, c\}$ denotes the input gate, forget gate, output gate, and cell unit of an LSTM neuron; for a feedforward layer, $S = \{h\}$ denotes the neuron itself;
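An objective consistent with this description (a likelihood loss, an L1 term, and a group-wise L2 term taken over the gate set $S$ and the neuron groups) can be written as below. The coefficient names $\lambda_1$ and $\lambda_2$ and the $\ell_{2,1}$ notation are assumptions; the expression is a reconstruction of formula (1) under that reading, not the patent's verbatim equation.

```latex
\min_{W_{x\beta}} \; L
  + \lambda_1 \sum_{\beta \in S} \left\| W_{x\beta} \right\|_{1}
  + \lambda_2 \sum_{\beta \in S} \sum_{k=1}^{K} \left\| W_{x\beta,k}^{T} \right\|_{2,1}
```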

The second term in formula (1) is the L1 regularization. During training, L1 regularization drives small weights to zero quickly while large weights shrink more slowly, so the weights of the final model concentrate on the features of high importance and the weights of unimportant features quickly approach 0. The relatively important subset of key persons can be determined from this. In the third term, an L2 norm is applied to each group of units because, applied group by group, it encourages the matrix $W_{x\beta,k}$ to become sparse, which drives each group to select differently descriptive features as input. Different neuron groups thus explore different co-occurrence features and gain the ability to recognize multiple action categories. The objective is solved by gradient descent. (As $W_{x\beta,k}$ is optimized and becomes sparse, the model in effect favors some features over others when weighing their importance for the $\theta$ co-occurrence subgroups.)
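The two penalty terms can be illustrated with a short Python sketch. It assumes a weight table `weights[n][m]` connecting neuron `n` to member `m`, consecutive neurons forming a group, and hypothetical coefficients `lam1` and `lam2`; the group term sums, for each neuron group and each member, the L2 norm of that member's weights across the group. This is one common way to realize a group sparsity constraint, not necessarily the exact form used in the patent.

```python
import math


def l1_norm(weights):
    """Sum of absolute values of all connection weights."""
    return sum(abs(w) for row in weights for w in row)


def group_l21_norm(weights, group_size):
    """For each neuron group and each member, take the L2 norm of that
    member's weights across the neurons of the group, then sum."""
    total = 0.0
    n_members = len(weights[0])
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        for m in range(n_members):
            total += math.sqrt(sum(row[m] ** 2 for row in group))
    return total


def regularized_loss(data_loss, weights, group_size, lam1, lam2):
    """Data loss plus the L1 term and the group-wise L2 term."""
    return (data_loss
            + lam1 * l1_norm(weights)
            + lam2 * group_l21_norm(weights, group_size))


# Four neurons in two groups of two, connected to two members (toy numbers).
W = [[3.0, 0.0], [4.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
penalty = group_l21_norm(W, group_size=2)
```

In the toy matrix the first neuron group connects only to member 0 and the second only to member 1, which is the kind of group-level sparsity the constraint rewards.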

During training, the network randomly drops some neurons, forcing the remaining units to compensate; at test time, all neurons are used together for prediction. Extending this idea to LSTM networks, this scheme uses a new dropout algorithm that selectively drops the internal gates, cell, and output responses of the LSTM neurons, encouraging each unit to learn better parameters. Figure 4 shows an LSTM neuron in unrolled form. For an LSTM network it is undesirable to erase all the information in a unit, because the unit remembers events that happened over a long span in the past. Therefore, as shown in Figure 4, the dropout effect is allowed to flow along the dashed lines between layers but is prevented from flowing along the time axis.

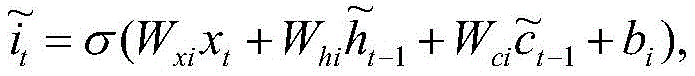

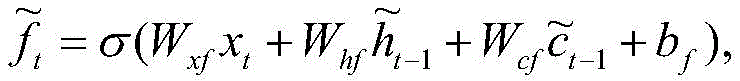

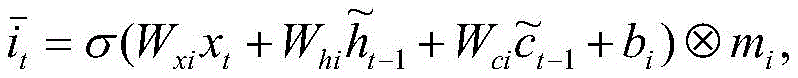

The response of a unit passed along the time direction, without dropout, is:

The response of a unit with dropout is:

where $m_i$, $m_f$, $m_c$, $m_o$, and $m_h$ are the binary dropout mask vectors for the input gate, forget gate, cell memory unit, output gate, and output response respectively; an element value of 0 indicates that the element is dropped. For the first LSTM layer, the input $x_t$ is the single-person behavior feature obtained earlier; for higher LSTM layers, the input $x_t$ is the output response of the previous layer.
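The idea that dropout acts between layers but not along the time axis can be sketched in Python. This is a deliberately simplified, single-unit LSTM with hypothetical scalar weights: only the output-response mask $m_h$ is applied, the gate and cell masks are omitted, and no rescaling of kept units is performed. It illustrates the routing of masked and unmasked responses, not the patent's full update equations.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x, h_prev, c_prev, w, m_h=1.0):
    """One step of a single-unit LSTM; w maps a gate name to (w_x, w_h, b).

    Returns (h_time, c, h_up). h_time and c continue along the time axis
    unmasked; h_up is what the next layer sees after the mask m_h.
    """
    pre = {g: w[g][0] * x + w[g][1] * h_prev + w[g][2] for g in "ifoc"}
    i, f, o = sigmoid(pre["i"]), sigmoid(pre["f"]), sigmoid(pre["o"])
    c = f * c_prev + i * math.tanh(pre["c"])
    h_time = o * math.tanh(c)
    h_up = m_h * h_time  # dropout only affects the upward path
    return h_time, c, h_up


def sample_mask(keep_prob, rng):
    """Binary mask: 1.0 keeps the response, 0.0 drops it."""
    return 1.0 if rng.random() < keep_prob else 0.0


rng = random.Random(0)
w = {g: (0.5, 0.3, 0.0) for g in "ifoc"}
h, c = 0.0, 0.0
outputs_up = []
for x in [1.0, -0.5, 0.8]:
    h, c, h_up = lstm_step(x, h, c, w, m_h=sample_mask(0.5, rng))
    outputs_up.append(h_up)
```

Because `h` and `c` are carried forward unmasked, a dropped step hides the unit from the layer above without erasing what the unit remembers.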

In step D, the bounding-box information from step A is fed into the key time segment candidate sub-network for feature extraction, yielding the importance weight $\beta_t$ of the current frame. Specifically:

(1) First, establish the key time segment candidate sub-network. As shown in Figure 1, it consists of CNN and LSTM layers connected in series, a fully connected layer, and a ReLU nonlinear unit;

(2) Then, for the video sequence input to this sub-network, use the ReLU unit to obtain the behavior attribute scores in the current frame:

$$o_t = \mathrm{ReLU}(w_{x'} x_t + w_{h'} h'_{t-1} + b') = (o_1, o_2, \ldots, o_C)_t$$

where $C$ is the total number of behavior categories and $t$ indexes the current frame. The value of $o_t$ depends on the current input $x_t$ and on the hidden state $h'_{t-1}$ of the LSTM layer at time $t-1$.
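The score computation above is an affine map of the current input and the previous hidden state followed by ReLU. A minimal Python sketch with hypothetical toy weights for $C = 3$ categories:

```python
def relu(z):
    return max(z, 0.0)


def matvec(matrix, vector):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def frame_scores(w_x, w_h, b, x_t, h_prev):
    """o_t = ReLU(w_x x_t + w_h h_prev + b), one score per behavior category."""
    from_input = matvec(w_x, x_t)
    from_state = matvec(w_h, h_prev)
    return [relu(a + r + bias) for a, r, bias in zip(from_input, from_state, b)]


# C = 3 categories, 2-dimensional input, 1-dimensional hidden state (toy numbers).
w_x = [[1.0, 0.0], [0.0, -1.0], [0.5, 0.5]]
w_h = [[0.0], [0.0], [1.0]]
b = [0.0, 0.0, -1.0]
o_t = frame_scores(w_x, w_h, b, x_t=[2.0, 3.0], h_prev=[0.5])
```

The ReLU clamps the second category's negative pre-activation to zero, so only categories with positive evidence contribute to the frame's score vector.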

In a sequence of video frames, different frames usually carry different amounts of valuable information: only some frames contain the most discriminative information, while the others provide supplementary context. For example, for the group behavior of spiking in a volleyball match, frames showing actions such as receiving the ball or jumping are less important than the frame of the spike itself.

(3) Finally, from the degree of correlation between the behavior attribute scores of the current frame and the group behavior attributes, obtain the importance weight $\beta_t$ of the current frame in the input sequence $T$, specifically:

Given the set of group behavior categories $G_{action} = (A_1, A_2, \ldots, A_q)^T$, the temporal importance weight $\beta_t$ can be expressed as the intersection similarity coefficient between this set and the current-frame behavior attribute scores $o_t = (o_1, o_2, \ldots, o_C)_t$, namely:

where $\cap$ denotes the intersection of two sets and $n(\cdot)$ denotes the number of elements in a set.
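A set-overlap coefficient of this kind can be sketched in Python. The exact denominator is not recoverable from the text, so the sketch assumes a Jaccard ratio $n(G \cap O) / n(G \cup O)$; it also assumes that a category counts as present in the frame when its score is positive. The category labels and function names are hypothetical.

```python
def active_categories(scores, labels, threshold=0.0):
    """Categories whose score in the current frame exceeds the threshold."""
    return {label for label, s in zip(labels, scores) if s > threshold}


def frame_importance(group_actions, frame_actions):
    """Assumed form: Jaccard ratio n(G & O) / n(G | O) of the two sets."""
    g, o = set(group_actions), set(frame_actions)
    union = g | o
    if not union:
        return 0.0
    return len(g & o) / len(union)


labels = ["spike", "set", "block", "wait"]
o_t = [2.0, 0.0, 1.5, 0.0]
G_action = {"spike", "block", "set"}
beta_t = frame_importance(G_action, active_categories(o_t, labels))
```

Here two of the three group-relevant categories are active in the frame, so the frame receives a weight of two thirds under the assumed ratio.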

In step E, the group feature $Z_t$ obtained in step C is combined with the current-frame importance weight $\beta_t$ obtained in step D to give the weighted group feature $Z'_t$ of the current frame, which is fed to the softmax layer for the final group behavior recognition. Specifically:

(1) First, compute the group feature of the frame at time $t$:

$$Z'_t = Z_t \cdot \beta_t$$

(2) Then feed it into the softmax layer for the final group behavior recognition:

$$y = \mathrm{softmax}(Z'_t)$$

where $y$ is the group behavior category.
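Step E can be sketched in Python as a scalar reweighting followed by a numerically stable softmax. The sketch assumes $Z_t$ already has one entry per behavior category and that the predicted category is the index of the largest probability; both are assumptions made for illustration.

```python
import math


def softmax(z):
    """Numerically stable softmax over a list of scores."""
    shift = max(z)
    exps = [math.exp(v - shift) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def classify_frame(Z_t, beta_t):
    """Weight the group feature by beta_t, then return (label, probabilities)."""
    weighted = [beta_t * v for v in Z_t]
    probs = softmax(weighted)
    return probs.index(max(probs)), probs


label, probs = classify_frame([1.0, 3.0, 0.5], beta_t=0.8)
```

A small $\beta_t$ flattens the distribution: with $\beta_t = 0$ every category becomes equally likely, so an unimportant frame contributes no preference.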

The sub-networks are designed so that the network can pay different levels of attention to different individuals and assign different importance to different frames. As shown in the network model of Figure 1, the key person candidate sub-network acts mainly on the input of the main LSTM, and the key time segment candidate sub-network acts mainly on the output of the main LSTM network.

This scheme integrates the key person candidate and key time period candidate sub-networks into a single network: the key person candidate sub-network acts on the input of the main LSTM network, and the key time period candidate sub-network acts on its output. The final objective function of the two sub-networks for a sequence is formulated as a cross-entropy loss with regularization, as follows:

where $y = (y_1, \ldots, y_C)$ denotes the ground-truth label: if the sequence belongs to the $i$-th class, then $y_i = 1$ and $y_j = 0$ for $j \neq i$. The predicted probability that the sequence belongs to the $i$-th class is a scalar. The coefficients $\lambda_1$, $\lambda_2$, and $\lambda_3$ balance the contributions of the three regularization terms.

The first regularization term encourages the key person candidate sub-network to attend dynamically to more spatial nodes in the sequence. In this embodiment the network model was found to be prone to ignoring many individuals over time even when they are valuable for determining the action type, that is, to becoming trapped in a local optimum; this term is introduced to avoid such ill-posed solutions. The second regularization term keeps the learned key time period weights under the control of an L2 norm rather than letting them grow without bound. This mitigates vanishing gradients in backpropagation, where the back-propagated gradient is proportional to $1/\beta_t$. The third regularization term, an L1 norm, reduces overfitting of the network; $W_{uv}$ denotes the connection matrix of the network.
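An objective matching the description of the cross-entropy term and the three regularizers can be written as below. The symbol $\hat{y}_i$ for the predicted class probability and the specific form of the first two regularizers are assumptions chosen to agree with the text (attention spread over the $M$ members across $T$ frames, an L2 bound on $\beta_t$, and an L1 penalty on $W_{uv}$); this is a reconstruction, not the patent's verbatim equation.

```latex
L = -\sum_{i=1}^{C} y_i \log \hat{y}_i
    + \lambda_1 \sum_{k=1}^{M} \left( 1 - \frac{1}{T} \sum_{t=1}^{T} \alpha_{t,k} \right)^{2}
    + \frac{\lambda_2}{T} \sum_{t=1}^{T} \left\| \beta_t \right\|_{2}
    + \lambda_3 \left\| W_{uv} \right\|_{1}
```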

Finally, given the complexity of the model, a strategy of jointly training the main network and the sub-networks is proposed so that the model can reach better results. The joint training procedure is as follows:

Because the three networks influence one another and two loss functions are involved, optimization is difficult. The joint training strategy proposed in this scheme trains the whole model effectively. The training procedure is:

Input: the numbers of training iterations $N_1$, $N_2$ (for example, $N_1 = 1000$, $N_2 = 500$)

1. Initialize the network parameters with a Gaussian function;

2. Fix the weights of the key person candidate sub-network at their initial values, and jointly train the key time period candidate sub-network and the main network with only one LSTM layer, to obtain the key time period candidate model;

3. Iterate: after $N_1$ iterations, increase the number of LSTM layers to three and train the main network;

4. Fine-tune the main network and the key time period candidate sub-network for $N_2$ iterations;

5. Fix the key time period candidate sub-network, and jointly train the key person candidate sub-network and the main network with only one LSTM layer, to obtain the key person candidate sub-network;

6. Iterate: after $N_1$ iterations, increase the number of LSTM layers to three and train the main network;

7. Fine-tune the main network and the key person candidate sub-network for $N_2$ iterations;

8. Jointly train the sub-networks obtained in steps 4 and 7 for $N_1$ iterations;

9. Jointly fine-tune the entire network model for $N_2$ iterations;

Output: the converged group behavior recognition model.
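The staged procedure can be written down as a schedule that a training loop would iterate over. The Python sketch below is only one reading of the steps: the network names are hypothetical, step 1 (initialization) is left out, step 7 is read as fine-tuning the key person sub-network by symmetry with step 4, and the trainable and frozen sets for steps 3, 6, and 8 are assumptions because the text does not state them. Iteration counts are `None` where the text gives no explicit number.

```python
MAIN, PERSON, PERIOD = "main", "key_person", "key_period"


def joint_training_schedule(n1=1000, n2=500):
    """Stages as (step, trainable, frozen, lstm_layers, iterations)."""
    return [
        (2, {MAIN, PERIOD}, {PERSON}, 1, n1),
        (3, {MAIN}, {PERSON}, 3, None),
        (4, {MAIN, PERIOD}, {PERSON}, 3, n2),
        (5, {MAIN, PERSON}, {PERIOD}, 1, n1),
        (6, {MAIN}, {PERIOD}, 3, None),
        (7, {MAIN, PERSON}, {PERIOD}, 3, n2),
        (8, {PERSON, PERIOD}, set(), 3, n1),
        (9, {MAIN, PERSON, PERIOD}, set(), 3, n2),
    ]


def total_counted_iterations(schedule):
    """Sum the iteration counts of all stages that specify one."""
    return sum(it for *_, it in schedule if it is not None)


schedule = joint_training_schedule()
```

Keeping the schedule as data makes the alternation visible: each sub-network is first trained against a shallow main network while the other is frozen, and only the last stage updates all three networks together.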

In the proposed group behavior recognition method, the key person candidate sub-network assigns a different importance weight to every person in the group, so no information is lost while attention goes to the individuals who contribute most to recognition. Likewise, the key time period candidate sub-network assigns a different importance weight to every frame without discarding any frame, so no data is lost. Through repeated training iterations of the model, this can improve both the efficiency and the accuracy of group behavior recognition.

In addition, existing approaches express interaction relationships by modeling the interactions between people in the group with graph models, which involves large amounts of data and makes training difficult. This scheme instead uses co-occurrence to handle the interactions within group behavior: it adopts a fully connected, stacked bidirectional LSTM and divides its neurons into groups that recognize different behaviors, further improving the accuracy of group behavior recognition.

The above is only a preferred embodiment of the present invention and does not limit the invention to this form. Any person skilled in the art may use the technical content disclosed above to change or modify it into equivalent embodiments applied in other fields. However, any simple modification, equivalent change, or adaptation made to the above embodiment according to the technical essence of the present invention, without departing from the content of its technical solution, still falls within the protection scope of the technical solution of the present invention.


Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202010192335.3A CN111414846B (en) | 2020-03-18 | 2020-03-18 | Group Behavior Recognition Method Based on Key Spatiotemporal Information Driven and Group Co-occurrence Structural Analysis |


Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN111414846A (en) | 2020-07-14 |
| CN111414846B (en) | 2023-06-02 |

Family

ID=71494765

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN202010192335.3A Active CN111414846B (en) | 2020-03-18 | 2020-03-18 | Group Behavior Recognition Method Based on Key Spatiotemporal Information Driven and Group Co-occurrence Structural Analysis |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN111414846B (en) |


Citations (3)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US8009863B1 (en) * | 2008-06-30 | 2011-08-30 | Videomining Corporation | Method and system for analyzing shopping behavior using multiple sensor tracking |
| CN108764011A (en) * | 2018-03-26 | 2018-11-06 | 青岛科技大学 | Group recognition methods based on the modeling of graphical interactive relation |
| CN109101896A (en) * | 2018-07-19 | 2018-12-28 | 电子科技大学 | A kind of video behavior recognition methods based on temporal-spatial fusion feature and attention mechanism |



Cited By (4)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN114495151A (en) * | 2021-12-07 | 2022-05-13 | 海纳云物联科技有限公司 | Group behavior identification method |
| CN114495151B (en) * | 2021-12-07 | 2024-09-27 | 海纳云物联科技有限公司 | Group behavior recognition method |
| CN119672806A (en) * | 2024-12-02 | 2025-03-21 | 合肥大学 | A group behavior recognition method based on dynamic and static features and multiple interaction networks |
| CN119672806B (en) * | 2024-12-02 | 2025-10-17 | 合肥大学 | Group behavior identification method based on dynamic and static characteristics and multiple interaction networks |

Also Published As

| Publication number | Publication date |
|---|---|
| CN111414846B (en) | 2023-06-02 |


Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |
| | TR01 | Transfer of patent right | Effective date of registration: 20240412. Address after: 509 Kangrui Times Square, Keyuan Business Building, 39 Huarong Road, Gaofeng Community, Dalang Street, Longhua District, Shenzhen, Guangdong Province, 518000. Patentee after: Shenzhen Litong Information Technology Co.,Ltd. Country or region after: China. Address before: 266000 Songling Road, Laoshan District, Qingdao, Shandong Province, No. 99. Patentee before: QINGDAO University OF SCIENCE AND TECHNOLOGY. Country or region before: China |
| | TR01 | Transfer of patent right | Effective date of registration: 20250327. Address after: Room 1002, 10th Floor, Building 7, One Hundred Million Midstream Intelligent Industry Accelerator, No. 789 Tiangu Sixth Road, High tech Zone, Xi'an City, Shaanxi Province, China 710000. Patentee after: Huayi Zhichuang Technology Development Co.,Ltd. Country or region after: China. Address before: 509 Kangrui Times Square, Keyuan Business Building, 39 Huarong Road, Gaofeng Community, Dalang Street, Longhua District, Shenzhen, Guangdong Province, 518000. Patentee before: Shenzhen Litong Information Technology Co.,Ltd. Country or region before: China |