CN111385514A - Portrait processing method and device and terminal - Google Patents


- Publication number

- CN111385514A (application CN202010100149.2A)

- Authority

- CN

- China

- Prior art keywords

- image

- user

- terminal

- portrait processing

- processor


- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Granted


Classifications

- H—ELECTRICITY
  - H04—ELECTRIC COMMUNICATION TECHNIQUE
    - H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION
      - H04N7/00—Television systems
        - H04N7/14—Systems for two-way working
          - H04N7/141—Systems for two-way working between two video terminals, e.g. videophone
            - H04N7/142—Constructional details of the terminal equipment, e.g. arrangements of the camera and the display
- G—PHYSICS
  - G06—COMPUTING OR CALCULATING; COUNTING
    - G06F—ELECTRIC DIGITAL DATA PROCESSING
      - G06F3/00—Input arrangements for transferring data to be processed into a form capable of being handled by the computer; Output arrangements for transferring data from processing unit to output unit, e.g. interface arrangements
        - G06F3/01—Input arrangements or combined input and output arrangements for interaction between user and computer
          - G06F3/011—Arrangements for interaction with the human body, e.g. for user immersion in virtual reality
- H—ELECTRICITY
  - H04—ELECTRIC COMMUNICATION TECHNIQUE
    - H04N—PICTORIAL COMMUNICATION, e.g. TELEVISION
      - H04N21/00—Selective content distribution, e.g. interactive television or video on demand [VOD]
        - H04N21/40—Client devices specifically adapted for the reception of or interaction with content, e.g. set-top-box [STB]; Operations thereof
          - H04N21/47—End-user applications
            - H04N21/478—Supplemental services, e.g. displaying phone caller identification, shopping application
              - H04N21/4788—Supplemental services, e.g. displaying phone caller identification, shopping application communicating with other users, e.g. chatting

Landscapes

- Engineering & Computer Science (AREA)

- General Engineering & Computer Science (AREA)

- Multimedia (AREA)

- Signal Processing (AREA)

- Theoretical Computer Science (AREA)

- Human Computer Interaction (AREA)

- Physics & Mathematics (AREA)

- General Physics & Mathematics (AREA)

- User Interface Of Digital Computer (AREA)

Abstract

The present application provides a portrait processing method and apparatus, and a terminal, which can restore facial features of a user that are occluded by a virtual reality device, thereby improving the user experience at the receiving end. The method includes: the terminal acquires a first image of the user, where the first image includes the user's face and a partial area of the face, including the area where the user's eyes are located, is occluded by a virtual reality (VR) device; the terminal inputs the first image into a portrait processing model to obtain a second image that includes the user's complete face; and the terminal sends the second image to the receiving end.

Description

Technical field

The present application relates to the field of terminal technology, and more specifically, to a portrait processing method and apparatus, and a terminal, in that field.

Background

With the continuous development of communication technology, high-bandwidth services available anytime and anywhere provide a broader platform for the application and development of virtual reality (VR) technology.

Among the many VR applications, immersive video calling is a particularly important service. In an immersive video call, both parties wear VR devices. A camera placed beside each caller captures the real scene around that caller during the call and transmits it to the other party's VR device for presentation, simulating a face-to-face conversation in a real setting.

However, when a user wears a VR device during a video call, the device blocks part of the user's face; for example, VR glasses cover the user's eyes. As a result, when the camera captures the user during the call, the other party cannot see facial features such as the user's eye movements and expressions, which degrades the user experience.

Summary

The present application provides a portrait processing method and apparatus, and a terminal, which can restore a user's facial features that are occluded by a VR device, thereby improving the user experience at the receiving end.

In a first aspect, the present application provides a portrait processing method. The method includes: acquiring a first image of a user, where the first image includes the user's face and a partial area of the face, including the area where the user's eyes are located, is occluded by a virtual reality (VR) device; inputting the first image into a portrait processing model to obtain a second image that includes the user's complete face; and sending the second image to a receiving end. The portrait processing model is obtained by training on a sample training data set that includes a plurality of original images and a plurality of restored images corresponding to the original images. The original images are collected from at least one sample user: a first original image among them includes a first sample user, the partial area of whose face is occluded by a VR device, and a first restored image among the restored images includes the first sample user's complete face. The at least one sample user includes the first sample user.
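The three steps of the first-aspect method can be sketched as a small pipeline. This is a minimal illustration, not the application's implementation: the function name `process_and_send`, the `Image` alias (a grayscale image as nested lists of pixel values) and the injected `acquire`, `portrait_model` and `send` callables are hypothetical stand-ins for the camera input, the trained model and the transceiver.

```python
from typing import Callable, List

# Hypothetical representation: a grayscale image as rows of 0-255 pixel values.
Image = List[List[int]]


def process_and_send(
    acquire: Callable[[], Image],
    portrait_model: Callable[[Image], Image],
    send: Callable[[Image], None],
) -> Image:
    """Run one pass of the method: acquire, restore, send.

    acquire: returns the first image (face partly occluded by the VR device).
    portrait_model: the trained model; maps the first image to the second image.
    send: transmits the second image to the receiving end.
    """
    first_image = acquire()
    second_image = portrait_model(first_image)
    send(second_image)
    return second_image


# Usage with trivial stand-ins: the "model" just adds one to every pixel.
outbox: List[Image] = []
result = process_and_send(
    acquire=lambda: [[0, 0], [0, 0]],
    portrait_model=lambda img: [[p + 1 for p in row] for row in img],
    send=outbox.append,
)
```

Injecting each stage keeps the pipeline independent of where the model comes from and of how the image is transported.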

With the portrait processing method provided in the embodiments of the present application, the user's facial features that are occluded by the VR device can be restored, thereby improving the user experience at the receiving end.

Optionally, the portrait processing apparatus may also acquire the first image in other manners, which is not limited in the embodiments of the present application.

In a first possible implementation, the portrait processing apparatus may receive a third image captured by a first camera apparatus, where the third image includes the user, and crop the first image from the third image.
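Cropping the first image out of the third image amounts to keeping the pixels inside a face bounding box. A minimal sketch, assuming the box (top, left, height, width) is supplied by a separate face detector that the application does not specify; `crop`, `Box` and `Image` are hypothetical names.

```python
from typing import List, Tuple

# Hypothetical representation: a grayscale image as rows of pixel values.
Image = List[List[int]]
# Hypothetical face box: (top, left, height, width) in pixels.
Box = Tuple[int, int, int, int]


def crop(image: Image, box: Box) -> Image:
    """Return the part of `image` inside `box` (the first image cut from the third image)."""
    top, left, height, width = box
    if (top < 0 or left < 0 or height <= 0 or width <= 0
            or top + height > len(image) or left + width > len(image[0])):
        raise ValueError("face box must lie inside the image")
    return [row[left:left + width] for row in image[top:top + height]]
```

Keeping the box around is useful later, because the same coordinates tell the compositing step where to put the restored face back.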

It should be noted that the first camera apparatus may be the camera apparatus 120 shown in FIG. 1. The first camera apparatus and the portrait processing apparatus may be separate devices, or they may be integrated in the same device, which is not limited in the embodiments of the present application.

It should be noted that the third image includes at least the user; that is, the third image may further include the environment or scene in which the user is located, which is not limited in the embodiments of the present application.

It should be noted that the embodiments of the present application are described using only the area where the eyes are located as an example of the partial area of the user's face. It should be understood that the partial area may also include other areas of the user's face, which is not limited in the embodiments of the present application.

It should also be noted that the first image includes at least the user's face, a partial area of which is occluded by the VR device.

Optionally, the first image may further include at least one of other body parts of the user and the environment in which the user is located, or may include users other than the user. The portraits of these other users are processed in a manner similar to that used for the user; to avoid repetition, details are not described here.

It should also be noted that when the scene image includes other users whose faces are partially occluded by the VR devices they wear, the portrait processing apparatus may process their portraits in a manner similar to that used for the user; to avoid repetition, details are not described here.

It should also be noted that the first image described in the embodiments of the present application may be a standalone image or a frame in a video stream, which is not limited in the embodiments of the present application.

Optionally, the first image may be an originally captured image, or a higher-definition image obtained after basic image quality processing.

Optionally, the basic image quality processing described in the embodiments of the present application may include at least one image processing step for improving image quality, such as denoising, sharpening, or brightness enhancement, which is not limited in the embodiments of the present application.
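The basic image quality steps listed above can be illustrated with elementary filters. The sketch below is an illustration under assumptions, not the application's processing chain: it uses a 3x3 mean filter for denoising, unsharp masking for sharpening and a clipped linear gain for brightness, on grayscale images stored as nested lists; all function names and default parameters are hypothetical.

```python
from typing import List

Image = List[List[float]]  # hypothetical: grayscale rows, values in 0-255


def clamp(v: float) -> float:
    """Clip a pixel value to the 8-bit range."""
    return max(0.0, min(255.0, v))


def box_blur(img: Image) -> Image:
    """3x3 mean filter (a simple denoiser); border pixels average only in-bounds neighbours."""
    h, w = len(img), len(img[0])
    out = []
    for r in range(h):
        row = []
        for c in range(w):
            vals = [img[rr][cc]
                    for rr in range(max(0, r - 1), min(h, r + 2))
                    for cc in range(max(0, c - 1), min(w, c + 2))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


def sharpen(img: Image, amount: float = 1.0) -> Image:
    """Unsharp masking: add back `amount` times the difference between the image and its blur."""
    blurred = box_blur(img)
    return [[clamp(p + amount * (p - b)) for p, b in zip(row, brow)]
            for row, brow in zip(img, blurred)]


def brighten(img: Image, gain: float = 1.2) -> Image:
    """Scale intensities by `gain`, clipping to the 8-bit range."""
    return [[clamp(p * gain) for p in row] for row in img]
```

A production pipeline would use an image library and edge-preserving denoisers; the point here is only what each named step does to pixel values.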

Optionally, the portrait processing apparatus may obtain the portrait processing model in various manners, which is not limited in the embodiments of the present application.

In a first possible implementation, the portrait processing model may be preconfigured in the portrait processing apparatus before it leaves the factory.

In a second possible implementation, the portrait processing apparatus may receive the portrait processing model from another device; that is, the portrait processing model is trained by another device.

For example, the portrait processing model may be stored on a cloud server, and the portrait processing apparatus may request it from the cloud server over a network.

In a third possible implementation, the portrait processing apparatus may train the portrait processing model itself.

Optionally, taking the case in which the area where the user's eyes are located is occluded by the VR device as an example, the portrait processing apparatus may acquire a sample training data set and train on it to obtain the portrait processing model. The sample training data set includes a plurality of original images and a plurality of restored images corresponding to the original images. The original images are collected from at least one sample user: a first original image among them includes a first sample user, the partial area of whose face is occluded by a VR device, and a first restored image among the restored images includes the first sample user's complete face. The at least one sample user includes the first sample user.
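The pairing of original and restored images in the sample training data set can be expressed as a simple record type. This is a hypothetical sketch: the `TrainingPair` and `build_training_set` names, the index-based pairing and the requirement that both images of a pair have equal dimensions are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List

Image = List[List[int]]  # hypothetical: grayscale rows of pixel values


@dataclass(frozen=True)
class TrainingPair:
    """One (original, restored) sample from the sample training data set."""
    sample_user_id: str
    original: Image  # face with the eye area occluded by a VR device
    restored: Image  # the same sample user's complete, unoccluded face


def build_training_set(originals: List[Image], restored: List[Image],
                       user_ids: List[str]) -> List[TrainingPair]:
    """Pair each original image with its corresponding restored image by index."""
    if not (len(originals) == len(restored) == len(user_ids)):
        raise ValueError("each original image needs one restored image and one user id")
    pairs = []
    for uid, orig, rest in zip(user_ids, originals, restored):
        if len(orig) != len(rest) or len(orig[0]) != len(rest[0]):
            raise ValueError(f"size mismatch for sample user {uid}")
        pairs.append(TrainingPair(uid, orig, rest))
    return pairs
```

Checking the pairing up front matters because a supervised restoration loss compares the model output against the restored image pixel by pixel.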

Optionally, the portrait processing apparatus may train on the sample training data set by various methods to obtain the portrait processing model, which is not limited in the embodiments of the present application.

In a possible implementation, the portrait processing apparatus may train a neural network model on the sample training data set to obtain the portrait processing model.

For example, the neural network model may be a generative adversarial network (GAN) model, such as a conditional GAN or a deep convolutional GAN (DCGAN).
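The application names GAN variants without giving a training objective. For orientation only, a common choice for paired image-to-image restoration is the pix2pix-style conditional GAN objective below, where x is an occluded original image, y its corresponding restored image, G the generator, D the discriminator, and λ a hypothetical weight on an L1 reconstruction term; whether the application uses this loss is an assumption.

$$
\begin{aligned}
\mathcal{L}_{\mathrm{cGAN}}(G, D) &= \mathbb{E}_{x,y}\left[\log D(x, y)\right] + \mathbb{E}_{x}\left[\log\left(1 - D\left(x, G(x)\right)\right)\right] \\
\mathcal{L}_{L1}(G) &= \mathbb{E}_{x,y}\left[\lVert y - G(x) \rVert_{1}\right] \\
G^{*} &= \arg\min_{G}\max_{D}\; \mathcal{L}_{\mathrm{cGAN}}(G, D) + \lambda\,\mathcal{L}_{L1}(G)
\end{aligned}
$$

The L1 term ties G(x) to the paired restored image from the sample training data set, while the adversarial term pushes the restored eye region toward realistic texture.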

With the portrait processing method provided in the embodiments of the present application, the portrait processing model can restore the user's facial features that are occluded by the VR device in the first image, thereby improving the user experience at the receiving end.

Optionally, the portrait processing apparatus may input both the first image and feature reference information into the portrait processing model to obtain the second image. The feature reference information includes at least one of eye feature parameters and head feature parameters. The eye feature parameters include at least one of position information, size information, and viewing angle information: the position information indicates the position of each of the user's two eyes, the size information indicates the size of each eye, and the viewing angle information indicates the gaze angle of each eyeball. The head feature parameters include the three-axis attitude angle and the acceleration of the user's head.
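One way to hand the feature reference information to a model alongside the image is to flatten it into a fixed-length conditioning vector. The sketch below is hypothetical: the class names, the units noted in the comments, the field order, and the zero-fill-plus-flag scheme for absent groups are assumptions, chosen so that "at least one of" the parameter groups can be missing without changing the vector length.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class EyeFeatures:
    position: Tuple[float, float]  # eye centre (x, y) in pixels (assumed unit)
    size: Tuple[float, float]      # eye width and height in pixels (assumed unit)
    gaze: Tuple[float, float]      # gaze angle (yaw, pitch) in degrees (assumed unit)


@dataclass
class HeadFeatures:
    attitude: Tuple[float, float, float]      # roll, pitch, yaw in degrees (assumed)
    acceleration: Tuple[float, float, float]  # m/s^2 along three axes (assumed)


@dataclass
class FeatureReference:
    eyes: Optional[Tuple[EyeFeatures, EyeFeatures]] = None  # (left, right)
    head: Optional[HeadFeatures] = None

    def to_vector(self) -> List[float]:
        """Flatten to 20 floats: 12 eye values + flag, then 6 head values + flag."""
        vec: List[float] = []
        if self.eyes is None:
            vec += [0.0] * 13  # 2 eyes x 6 values, plus a "present" flag of 0
        else:
            for eye in self.eyes:
                vec += [float(v) for v in (*eye.position, *eye.size, *eye.gaze)]
            vec.append(1.0)    # eye group present
        if self.head is None:
            vec += [0.0] * 7   # 6 values plus a "present" flag of 0
        else:
            vec += [float(v) for v in (*self.head.attitude, *self.head.acceleration)]
            vec.append(1.0)    # head group present
        return vec
```

The flags let a model distinguish "parameter is zero" from "parameter was not supplied".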

With the portrait processing method provided in the embodiments of the present application, inputting the feature reference information into the portrait processing model together with the first image supplies additional portrait-related features, which can improve the fidelity and realism of the restored occluded area.

Optionally, the portrait processing apparatus may obtain the feature reference information in various manners, which is not limited in the embodiments of the present application.

(1) Obtaining the eye feature parameters

In a first possible implementation, the portrait processing apparatus may receive a fourth image captured by a second camera apparatus, which is a camera built into the VR device. The fourth image includes the user's two eyes, and the portrait processing apparatus may extract the eye feature parameters from the fourth image.

It should be noted that the second camera apparatus may be a camera built into the VR device, for example, a built-in infrared (IR) camera.

Optionally, the embodiments of the present application use a camera built into the VR device only as an example of the second camera apparatus. The second camera apparatus may also be a camera placed elsewhere that can capture a real image of the facial area occluded by the VR device, which is not limited in the embodiments of the present application.
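As one way to obtain position and size information from the fourth image, a dark-pupil heuristic can be applied to the IR eye image. This is a hedged sketch of a common technique, not the method specified by the application: it assumes the pupil is the darkest region under IR illumination, uses a hypothetical fixed `threshold`, and takes the centroid of the dark pixels as the pupil centre and the diameter of an equal-area disc as its size. Estimating the gaze angle would need an additional calibration step that is not shown.

```python
import math
from typing import List, Optional, Tuple

Image = List[List[int]]  # hypothetical: grayscale IR eye image, 0-255


def pupil_features(ir_eye: Image, threshold: int = 40) -> Optional[Tuple[Tuple[float, float], float]]:
    """Estimate the pupil centre (x, y) and diameter in pixels from an IR eye image.

    Pixels darker than `threshold` are treated as pupil (dark-pupil assumption).
    Returns None when no pixel qualifies, for example during a blink.
    """
    xs, ys = [], []
    for y, row in enumerate(ir_eye):
        for x, value in enumerate(row):
            if value < threshold:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    area = len(xs)
    centre = (sum(xs) / area, sum(ys) / area)
    diameter = 2.0 * math.sqrt(area / math.pi)  # diameter of a disc with the same area
    return centre, diameter
```

Real eye trackers add glint removal and ellipse fitting; the centroid is the simplest estimator that still yields both position and size.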

In a second possible implementation, the portrait processing apparatus may extract the eye feature parameters from a plurality of locally stored images of the user that include the user's eyes.

It should be noted that these images may be photos containing the user's face taken in daily life, such as selfies.

In a third possible implementation, the portrait processing apparatus may build a facial feature database for the user from photos the user takes in daily life. The database includes feature parameters of each facial organ, such as the eye feature parameters, and the portrait processing apparatus may retrieve the eye feature parameters from it.
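Building such a database from many everyday photos requires merging per-photo measurements into one profile. A minimal sketch, assuming each photo has already been reduced to a dictionary of named measurements; the function name and the parameter names in the usage test (such as `eye_width`) are hypothetical, and taking the median is an illustrative choice, not the application's.

```python
from statistics import median
from typing import Dict, List


def build_feature_database(per_photo: List[Dict[str, float]]) -> Dict[str, float]:
    """Merge per-photo measurements into one profile using the median of each parameter.

    The median keeps a single badly measured photo (a squint, motion blur)
    from skewing the stored value. Photos missing a parameter are skipped for it.
    """
    keys = set().union(*per_photo)
    return {k: median(p[k] for p in per_photo if k in p) for k in sorted(keys)}
```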

In a fourth possible implementation, the portrait processing apparatus may receive the eye feature parameters from another measuring apparatus, such as an eye-tracking sensor.

Optionally, the plurality of images and the facial feature database may also be stored in the cloud, and the portrait processing apparatus may request them from the cloud over a network, which is not limited in the embodiments of the present application.

It should be noted that, because the VR device mainly occludes the user's eye area, taking the user's eye feature parameters into account when the portrait processing model restores the occluded content of the first image can improve the fidelity and realism of the restored content.

(2) Obtaining the head feature parameters

In a possible implementation, the portrait processing apparatus may receive the head feature parameters measured by an inertial measurement apparatus.

It should be noted that an inertial measurement apparatus, also called an inertial measurement unit (IMU), is a device that measures the three-axis attitude angle (or angular rate) and the acceleration of an object. Generally, an IMU contains three single-axis accelerometers and three single-axis gyroscopes: the accelerometers detect the object's acceleration along the three independent axes of the carrier coordinate system, and the gyroscopes detect the carrier's angular velocity relative to the navigation coordinate system. The attitude of the object is then computed from the measured angular velocity and acceleration in three-dimensional space.
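The attitude computation described above can be illustrated with a complementary filter, a standard way of fusing gyroscope and accelerometer readings into roll and pitch. This is an illustrative sketch rather than any particular IMU's algorithm: the function names, the blend factor `alpha = 0.98` and the axis convention (z pointing up when the head is level) are assumptions; yaw is omitted because gravity does not constrain it, and adding body rates directly to the angles is a small-angle approximation.

```python
import math
from typing import Tuple


def accel_tilt(ax: float, ay: float, az: float) -> Tuple[float, float]:
    """Roll and pitch (radians) implied by gravity alone; valid only when not accelerating."""
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch


def complementary_update(
    roll: float, pitch: float,
    gyro: Tuple[float, float, float],   # angular rate (rad/s) about x, y, z
    accel: Tuple[float, float, float],  # specific force (m/s^2) along x, y, z
    dt: float, alpha: float = 0.98,
) -> Tuple[float, float]:
    """Blend integrated gyro rates (smooth but drifting) with accelerometer tilt (noisy but drift-free)."""
    acc_roll, acc_pitch = accel_tilt(*accel)
    roll = alpha * (roll + gyro[0] * dt) + (1 - alpha) * acc_roll
    pitch = alpha * (pitch + gyro[1] * dt) + (1 - alpha) * acc_pitch
    return roll, pitch
```

Called once per IMU sample, the filter follows fast head turns through the gyroscope term while the small accelerometer share slowly cancels gyroscope drift.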

Optionally, the inertial measurement apparatus may be a standalone measuring device fixed to the user's head, or it may be integrated into the VR device, which is not limited in the embodiments of the present application.

It should be noted that the user's head may be moving, that is, it may have a moving speed and a rotation angle. Therefore, taking the user's head feature parameters into account when the portrait processing model restores the occluded content of the first image can further improve the fidelity and realism of the restored content.

Optionally, the feature reference information may further include other feature parameters that describe the user's portrait features, such as nose, face shape, and hairstyle feature parameters, which is not limited in the embodiments of the present application.

Optionally, the portrait processing apparatus may send the second image to the receiving end; or it may send a target image obtained by compositing or splicing the second image; or it may send a video stream that includes the second image or the target image. This is not limited in the embodiments of the present application.

Correspondingly, the portrait processing apparatus may send the second image, or a target image or video stream that includes the second image, to the receiving end.

Optionally, the portrait processing apparatus may generate the target image in various manners, which is not limited in the embodiments of the present application.

In a possible implementation, the sending of the second image to the receiving end by the portrait processing apparatus includes: splicing the second image and a fifth image to obtain a target image, where the fifth image is the image remaining after the first image is cropped out of the third image; and sending the target image to the receiving end.

In a possible implementation, the sending of the second image to the receiving end by the portrait processing apparatus includes: compositing the second image and the third image to obtain a target image, where the second image is overlaid on top of the first image in the third image; and sending the target image to the receiving end.
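Overlaying the second image on the third image amounts to writing the restored face back into the region it was cropped from. A minimal sketch, assuming the crop position (top, left) was kept from the cropping step and that the restored face has the same size as the cropped first image; `composite` is a hypothetical name. Splicing with the fifth image gives the same result when the fifth image is the scene with that region left empty.

```python
from typing import List

Image = List[List[int]]  # hypothetical: grayscale rows of pixel values


def composite(scene: Image, restored_face: Image, top: int, left: int) -> Image:
    """Overlay the second image on the third image at the first image's crop position.

    Returns a new image; `scene` itself is left unmodified.
    """
    h, w = len(restored_face), len(restored_face[0])
    if top < 0 or left < 0 or top + h > len(scene) or left + w > len(scene[0]):
        raise ValueError("restored face does not fit inside the scene")
    out = [row[:] for row in scene]
    for dy, face_row in enumerate(restored_face):
        out[top + dy][left:left + w] = face_row
    return out
```

The bounds check matters because Python slice assignment would otherwise silently lengthen a row instead of failing.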

Optionally, the portrait processing apparatus may generate the video stream in various manners, which is not limited in the embodiments of the present application.

In a possible implementation, the portrait processing apparatus may perform video encoding on the second image to obtain a video image, and obtain the video stream from the video image, where the video stream includes the video image.

It should be noted that the restored second image shows the user's eye area that the VR device occludes, whereas in reality the user is wearing the VR device on the face; therefore, the second image differs somewhat from the actual situation.

Therefore, the portrait processing apparatus may superimpose an eye mask layer on the user's eye area in the second image, where the eye mask layer is rendered semi-transparent with a first transparency to simulate the user wearing the VR device, which can improve the realism of the restoration.

It should also be noted that the first transparency should be chosen so that viewers can tell that the user is wearing a VR device while the occluded eye area beneath it remains visible. The specific value of the first transparency is not limited in the embodiments of the present application.
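The semi-transparent eye mask amounts to alpha blending a mask layer over the eye region of the second image. A minimal sketch on grayscale nested lists, assuming a uniform mask colour (`mask_value`) and a rectangular eye box; the function name and the default values 30.0 and 0.6 are hypothetical, and `transparency` plays the role of the first transparency.

```python
from typing import List, Tuple

Image = List[List[float]]  # hypothetical: grayscale rows, values in 0-255


def overlay_eye_mask(face: Image, box: Tuple[int, int, int, int],
                     mask_value: float = 30.0, transparency: float = 0.6) -> Image:
    """Blend a uniform eye-mask layer over the eye box (top, left, height, width).

    transparency = 1.0 leaves the restored eyes fully visible; 0.0 shows only the mask.
    """
    if not 0.0 <= transparency <= 1.0:
        raise ValueError("transparency must be in [0, 1]")
    top, left, height, width = box
    opacity = 1.0 - transparency
    out = [row[:] for row in face]
    for r in range(top, top + height):
        for c in range(left, left + width):
            out[r][c] = transparency * face[r][c] + opacity * mask_value
    return out
```

Values near the middle of the range match the stated goal: the mask is visible enough to show the headset, and the restored eyes still show through.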

In a second aspect, an embodiment of the present application provides a terminal, including a processor and a transceiver coupled to the processor.

The processor is configured to: control the transceiver to acquire a first image of a user, where the first image includes the user's face and a partial area of the user's face is occluded by a virtual reality (VR) device; input the first image into a portrait processing model to obtain a second image that includes the user's complete face, where the portrait processing model is obtained by training on a sample training data set that includes a plurality of original images and a plurality of restored images corresponding to the original images, the original images are collected from at least one sample user, a first original image among them includes a first sample user the partial area of whose face is occluded by a VR device, a first restored image among the restored images includes the first sample user's complete face, and the at least one sample user includes the first sample user; and control the transceiver to send the second image to a receiving end.

In a possible implementation, the processor is specifically configured to input the first image and feature reference information into the portrait processing model to obtain the second image, where the feature reference information includes at least one of eye feature parameters and head feature parameters; the eye feature parameters include at least one of position information, size information, and viewing angle information, where the position information indicates the position of each of the user's two eyes, the size information indicates the size of each eye, and the viewing angle information indicates the gaze angle of each eyeball; and the head feature parameters include the three-axis attitude angle and the acceleration of the user's head.

In a possible implementation, the feature reference information includes the eye feature parameters, and the processor is further configured to: before inputting the first image and the feature reference information into the portrait processing model to obtain the second image, control the transceiver to receive a third image of the user captured by a first camera, where the third image includes the user's two eyes and the first camera is a camera built into the VR device; and extract the eye feature parameters from the third image.

In a possible implementation, the feature reference information includes the head feature parameters, and the processor is further configured to control the transceiver to receive the head feature parameters measured by an inertial measurement apparatus before inputting the first image and the feature reference information into the portrait processing model to obtain the second image.

In a possible implementation, the processor is specifically configured to control the transceiver to receive the first image captured by a second camera.

In a possible implementation, the processor is specifically configured to: control the transceiver to receive a third image captured by a second camera, where the third image includes the user; and crop the first image from the third image.

In a possible implementation, the processor is further configured to: splice the second image and a fourth image to obtain a target image, where the fourth image is the image remaining after the first image is cropped out of the third image; and control the transceiver to send the target image to the receiving end.

In a possible implementation, the processor is further configured to: composite the second image and the third image to obtain a target image, where the second image is overlaid on top of the first image in the third image; and control the transceiver to send the target image to the receiving end.

In a possible implementation, the processor is further configured to superimpose an eye mask layer on the area where the user's eyes are located in the second image, where the eye mask layer is rendered semi-transparent with a first transparency.

In a possible implementation, the portrait processing model is obtained by training on the sample training data set using a generative adversarial network model.

第三方面,本申请还提供一种人像处理装置,用于执行上述第一方面或第一方面的任意可能的实现方式中的方法。具体地,人像处理装置可以包括用于执行上述第一方面或第一方面的任意可能的实现方式中的方法的单元。In a third aspect, the present application further provides a portrait processing apparatus, which is configured to execute the method in the first aspect or any possible implementation manner of the first aspect. Specifically, the portrait processing apparatus may include a unit for executing the method in the above-mentioned first aspect or any possible implementation manner of the first aspect.

第四方面,本申请实施例还提供一种芯片装置,包括:通信接口和处理器,所述通信接口和所述处理器之间通过内部连接通路互相通信,所述处理器用于实现上述第一方面或其任意可能的实现方式中的方法。In a fourth aspect, an embodiment of the present application further provides a chip device, including: a communication interface and a processor, wherein the communication interface and the processor communicate with each other through an internal connection path, and the processor is configured to implement the above-mentioned first A method in an aspect or any possible implementation thereof.

According to a fifth aspect, an embodiment of the present application further provides a computer-readable storage medium for storing a computer program, where the computer program includes instructions for implementing the method in the first aspect or any possible implementation thereof.

According to a sixth aspect, an embodiment of the present application further provides a computer program product containing instructions that, when run on a computer, cause the computer to implement the method in the first aspect or any possible implementation thereof.

Description of Drawings

FIG. 1 is a schematic diagram of an application scenario 100 according to an embodiment of the present application;

FIG. 2 is a schematic diagram of another application scenario according to an embodiment of the present application;

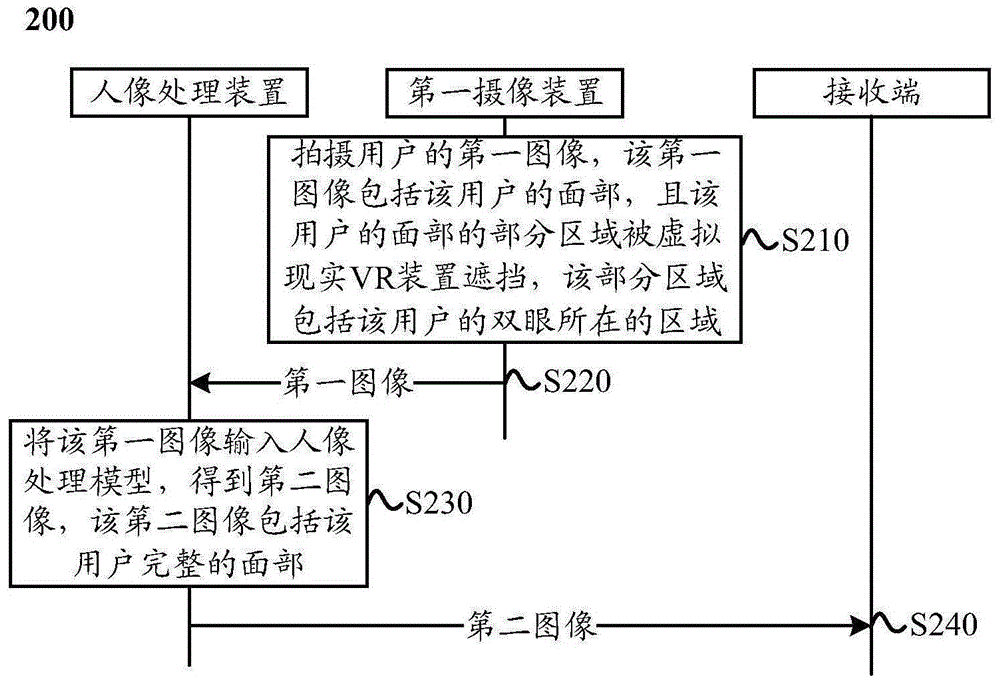

FIG. 3 is a schematic flowchart of a portrait processing method 200 according to an embodiment of the present application;

FIG. 4 is a schematic block diagram of a VR apparatus 110 according to an embodiment of the present application;

FIG. 5 is a schematic diagram of a display interface of a receiving end according to an embodiment of the present application;

FIG. 6 is a schematic block diagram of a portrait processing apparatus 300 according to an embodiment of the present application;

FIG. 7 is a schematic block diagram of a mobile phone 400 according to an embodiment of the present application;

FIG. 8 is a schematic block diagram of a portrait processing system 500 according to an embodiment of the present application.

Detailed Description of Embodiments

The technical solutions in the embodiments of the present application are described below with reference to the accompanying drawings.

FIG. 1 is a schematic diagram of an application scenario provided by an embodiment of the present application. As shown in FIG. 1, a user wears a VR apparatus 110.

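A camera apparatus 120 is configured to capture a scene image of the user and send the scene image to a portrait processing apparatus 130. The scene image includes the user's face, and part of the user's face is occluded by the VR apparatus 110.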

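The portrait processing apparatus 130 is configured to use a portrait processing method to restore the region of the user that is occluded by the VR apparatus in the scene image, obtaining a target image that includes the user's complete face.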

The portrait processing apparatus 130 is further configured to transmit the target image to a receiving end 140 for display.

It should be noted that the scene image includes at least the user's face. FIG. 1 merely illustrates the case in which the scene image includes the user's face; the embodiments of the present application are not limited thereto.

Optionally, the scene image may further include at least one of other body parts of the user and the environment in which the user is located, or may further include users other than the user; this is not limited in the embodiments of the present application. For example, FIG. 2 shows a case in which the scene image includes the user's whole body.

It should further be noted that, when the scene image also includes other users and part of each other user's face is likewise occluded by the VR apparatus that user wears, the portrait processing apparatus 130 may process the portraits of those users by a method similar to the one used for the user. To avoid repetition, details are not described here.

It should further be noted that the scene image in the embodiments of the present application may be a standalone image or a frame of a video stream; this is not limited in the embodiments of the present application.

It should further be noted that the embodiments of the present application are applicable to any situation in which a scene image of the user needs to be captured.

For example, when the user is on a video call with a remote user, the user's scene image is displayed on the remote user's display device (the receiving end).

It should be noted that the receiving end in the embodiments of the present application may be any display device capable of receiving and displaying the target image sent by the terminal; this is not limited in the embodiments of the present application.

As another example, when the user is being monitored, the user's scene image is displayed on a display device (the receiving end).

Optionally, the receiving end may also be a terminal with a display screen, such as a VR apparatus, a mobile phone, or a smart TV; this is not limited in the embodiments of the present application.

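Optionally, the camera apparatus 120 and the portrait processing apparatus 130 in FIG. 1 may be separate apparatuses, or may be integrated into the same device; this is not limited in the embodiments of the present application.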

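It should be noted that, regardless of whether the camera apparatus 120 and the portrait processing apparatus 130 are integrated into one device or are separate apparatuses, they are referred to below as the camera apparatus and the portrait processing apparatus.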

It should be noted that the VR apparatus in the embodiments of the present application may be a wearable device capable of implementing virtual reality functions.

It should further be noted that a wearable device, also called a wearable smart device, is a general term for everyday wearable items, such as glasses, helmets, and masks, that are intelligently designed and developed using wearable technology. A wearable device is a portable device that is worn directly on the body or integrated into the user's clothing or accessories. A wearable device is not merely a piece of hardware; it also achieves powerful functions through software support, data exchange, and cloud interaction. In a broad sense, wearable smart devices include full-featured, larger devices that can implement all or part of their functions without relying on a smartphone, such as smart helmets or smart glasses, as well as devices that focus on a single type of application function and must be used together with another device such as a smartphone, for example various smart bands, smart jewelry, and patches capable of portrait processing and/or image display. This is not limited in the embodiments of the present application.

Optionally, the portrait processing apparatus 130 and the receiving end 140 may communicate in a wired or wireless manner; this is not limited in the embodiments of the present application.

It should be noted that the wired manner may be communication through a data cable connection or through an internal bus connection.

It should be noted that the wireless manner may be communication through a communication network, which may be a local area network, a wide area network relayed through a relay device, or a combination of a local area network and a wide area network. When the communication network is a local area network, it may be, for example, a short-range communication network such as a Wi-Fi hotspot network, a Wi-Fi P2P network, a Bluetooth network, a Zigbee network, or a near field communication (NFC) network. When the communication network is a wide area network, it may be, for example, a third-generation mobile communication technology (3G) network, a fourth-generation mobile communication technology (4G) network, a fifth-generation mobile communication technology (5G) network, a future evolved public land mobile network (PLMN), or the Internet; this is not limited in the embodiments of the present application.

The application scenarios to which the present application applies have been described above. The portrait processing method provided by the embodiments of the present application is described in detail below.

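FIG. 3 is a schematic flowchart of a portrait processing method 200 according to an embodiment of the present application. The method 200 may be applied to the application scenario shown in FIG. 1 and executed by the portrait processing apparatus 130.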

S210: A first camera apparatus captures a first image of the user. The first image includes the user's face, part of which is occluded by a virtual reality (VR) apparatus; the occluded part includes the region where the user's eyes are located.

S220: The first camera apparatus sends the first image of the user to the portrait processing apparatus; correspondingly, the portrait processing apparatus receives the first image sent by the first camera apparatus.

Optionally, in S220, the portrait processing apparatus may also obtain the first image in other manners; this is not limited in the embodiments of the present application.

In a first possible implementation, the portrait processing apparatus may receive a third image that is captured by the first camera apparatus and includes the user, and then crop the first image out of the third image.
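As a minimal sketch of the cropping step, assume the face region is already known as a bounding box (the text does not say how it is located; a face detector is one option) and that images are NumPy arrays. The crop is then a plain array slice. `crop_first_image` and the `(top, left, height, width)` box convention are hypothetical.

```python
from typing import Tuple

import numpy as np

Box = Tuple[int, int, int, int]  # (top, left, height, width), hypothetical convention


def crop_first_image(third_image: np.ndarray, box: Box) -> np.ndarray:
    """Cut the face region (the first image) out of the full frame (the third image)."""
    top, left, height, width = box
    img_h, img_w = third_image.shape[:2]
    if top < 0 or left < 0 or top + height > img_h or left + width > img_w:
        raise ValueError("box lies outside the third image")
    # copy() so that later edits to the crop never write back into the frame
    return third_image[top:top + height, left:left + width].copy()


# Usage: a dummy 6x8 RGB frame with the face assumed at rows 1-3, columns 2-5
frame = np.arange(6 * 8 * 3, dtype=np.uint8).reshape(6, 8, 3)
first_image = crop_first_image(frame, (1, 2, 3, 4))
print(first_image.shape)  # (3, 4, 3)
```

The sketch keeps the box so the restored face can later be placed back into the same position in the frame.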

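It should be noted that the first camera apparatus may be the camera apparatus 120 shown in FIG. 1. The first camera apparatus and the portrait processing apparatus may be separate apparatuses, for example, a terminal as the portrait processing apparatus and a camera as the first camera apparatus. Alternatively, both may be integrated into the same device, such as a terminal. This is not limited in the embodiments of the present application.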

It should be noted that the third image includes at least the user; that is, the third image may further include the environment or scene in which the user is located. This is not limited in the embodiments of the present application.

It should be noted that the embodiments of the present application use, as an example, the case in which the occluded part of the user's face is the region where the eyes are located. It should be understood that the occluded part may also include other regions of the user's face; this is not limited in the embodiments of the present application.

It should further be noted that the first image includes at least the user's face, part of which is occluded by the VR apparatus.

Optionally, the first image may further include at least one of other body parts of the user and the environment in which the user is located, or may further include users other than the user. Portraits of the other users are processed in a manner similar to that of the user; to avoid repetition, details are not described here.

It should further be noted that, when the scene image also includes other users and part of each other user's face is likewise occluded by the VR apparatus that user wears, the portrait processing apparatus may process the portraits of those users by a method similar to the one used for the user. To avoid repetition, details are not described here.

It should further be noted that the first image in the embodiments of the present application may be a standalone image or a frame of a video stream; this is not limited in the embodiments of the present application.

Optionally, the first image may be the originally captured image, or a higher-definition image obtained after basic image quality processing.

Optionally, the basic image quality processing in the embodiments of the present application may include at least one image processing step for improving image quality, such as denoising, sharpening, or brightness enhancement; this is not limited in the embodiments of the present application.
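The text names denoising, sharpening, and brightness enhancement without fixing any algorithm. One minimal sketch of such a chain on an 8-bit grayscale NumPy image uses a 3x3 mean filter for denoising, an unsharp mask for sharpening, and a constant gain for brightness. These filter choices and default parameters are illustrative assumptions only.

```python
import numpy as np


def box_blur3(img: np.ndarray) -> np.ndarray:
    """3x3 mean filter with edge padding: a very simple denoising step."""
    h, w = img.shape
    padded = np.pad(img.astype(np.float64), 1, mode="edge")
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(3):
        for dx in range(3):
            out += padded[dy:dy + h, dx:dx + w]
    return out / 9.0


def basic_quality(gray: np.ndarray, sharpen_amount: float = 0.5,
                  brightness_gain: float = 1.1) -> np.ndarray:
    """Denoise, then sharpen (unsharp mask), then brighten an 8-bit grayscale image."""
    denoised = box_blur3(gray)
    # Unsharp mask: add back a fraction of the detail that a second blur removes
    sharpened = denoised + sharpen_amount * (denoised - box_blur3(denoised))
    brightened = sharpened * brightness_gain
    # Round and clip back into the valid 8-bit range
    return np.clip(np.rint(brightened), 0, 255).astype(np.uint8)
```

A real pipeline would more likely use a dedicated image library and work on colour images. The steps are kept in plain NumPy here so the order of operations is easy to follow.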

S230: The portrait processing apparatus inputs the first image into a portrait processing model to obtain a second image, where the second image includes the user's complete face. The portrait processing model is obtained by training on a sample training data set that includes multiple original images and multiple restored images corresponding to them. The original images are collected from at least one sample user. A first original image among the original images includes a first sample user, and the aforementioned part of the first sample user's face is occluded by a VR apparatus. A first restored image among the restored images includes the complete face of the first sample user. The at least one sample user includes the first sample user.

Optionally, before S230, the portrait processing apparatus may first obtain the portrait processing model.

Optionally, the portrait processing apparatus may obtain the portrait processing model in various manners; this is not limited in the embodiments of the present application.

In a first possible implementation, the portrait processing model may be preconfigured in the portrait processing apparatus at the factory.

In a second possible implementation, the portrait processing apparatus may receive the portrait processing model from another device; that is, the portrait processing model is trained by the other device.

For example, the portrait processing model may be stored on a cloud server, and the portrait processing apparatus may request the model from the cloud server over a network.

In a third possible implementation, the portrait processing apparatus may train the portrait processing model itself.

The training process of the portrait processing model is described below using the example in which the portrait processing apparatus trains the model itself. The process by which another device trains the model is similar; to avoid repetition, details are not described here.

Optionally, taking as an example the case in which the region of the user's eyes is occluded by the VR apparatus, the portrait processing apparatus may obtain a sample training data set and train on it to obtain the portrait processing model. The sample training data set includes multiple original images and multiple restored images corresponding to them. The original images are collected from at least one sample user. A first original image includes a first sample user, and the aforementioned part of that user's face is occluded by a VR apparatus. A first restored image includes the complete face of the first sample user. The at least one sample user includes the first sample user.
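The structure of the sample training data set can be pictured as a list of paired images tagged with the sample user they came from. The sketch below is a hypothetical in-memory layout, not a format defined by the text. It also carries an optional per-sample feature reference vector, since the training set may additionally include feature reference information. How the pairs are actually captured is not specified.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

import numpy as np


@dataclass
class TrainingPair:
    """One original/restored pair for one sample user (hypothetical layout)."""
    user_id: str
    original: np.ndarray   # face with the eye region covered by a VR headset
    restored: np.ndarray   # the same user's complete face
    feature_ref: Optional[np.ndarray] = None  # optional feature reference vector

    def __post_init__(self) -> None:
        # A paired image-to-image model expects input and target of equal size
        if self.original.shape != self.restored.shape:
            raise ValueError("original and restored images must have the same shape")


@dataclass
class SampleTrainingSet:
    pairs: List[TrainingPair] = field(default_factory=list)

    def add(self, pair: TrainingPair) -> None:
        self.pairs.append(pair)

    def sample_users(self) -> Set[str]:
        """The sample users from whom the original images were collected."""
        return {p.user_id for p in self.pairs}
```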

Optionally, the portrait processing apparatus may train on the sample training data set using various methods to obtain the portrait processing model; this is not limited in the embodiments of the present application.

In a possible implementation, the portrait processing apparatus may train a neural network model on the sample training data set to obtain the portrait processing model.

For example, the neural network model may be a generative adversarial network (GAN) model, such as a conditional GAN or a deep convolutional GAN (DCGAN).
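The text names GAN variants but gives no loss function. As one common illustration only, consider a pix2pix-style conditional GAN (an assumption, not the method stated here). Each original image $x$ is paired with its restored image $y$. A generator $G$ maps $x$ to a restored face, and a discriminator $D$ judges $(x, y)$ pairs:

$$
\mathcal{L}_{\mathrm{cGAN}}(G, D) = \mathbb{E}_{x,y}\left[\log D(x, y)\right] + \mathbb{E}_{x}\left[\log\left(1 - D\left(x, G(x)\right)\right)\right]
$$

$$
G^{*} = \arg\min_{G}\max_{D}\; \mathcal{L}_{\mathrm{cGAN}}(G, D) + \lambda\, \mathbb{E}_{x,y}\left[\lVert y - G(x) \rVert_{1}\right]
$$

The $L_1$ term keeps $G(x)$ close to the paired restored image $y$, while the adversarial term pushes the output toward realistic texture; $\lambda$ is a weighting hyperparameter. If feature reference information $c$ is also used, $G$ and $D$ could take it as an extra input, for example $G(x, c)$.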

With the portrait processing method provided by the embodiments of the present application, the portrait processing model can restore the user's facial features that are occluded by the VR apparatus in the first image, thereby improving the user experience at the receiving end.

Optionally, in S230, the portrait processing apparatus may input both the first image and feature reference information into the portrait processing model to obtain the second image. The feature reference information includes at least one of eye feature parameters and head feature parameters. The eye feature parameters include at least one of position information, size information, and viewing-angle information. The position information indicates the position of each of the user's two eyes, the size information indicates the size of each eye, and the viewing-angle information indicates the gaze angle of each eyeball. The head feature parameters include the three-axis attitude angles and the acceleration of the user's head.
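One way to feed this "at least one of" information to a model is as a fixed-length conditioning vector. The sketch below makes several hypothetical choices: the field names, the units, and the layout of 12 eye values, 6 head values, and 2 presence flags. Missing groups are zero-filled and flagged, so one model can accept eye parameters, head parameters, or both.

```python
from dataclasses import astuple, dataclass
from typing import Optional, Tuple

import numpy as np


@dataclass
class EyeParams:
    center_x: float      # position (pixels)
    center_y: float
    width: float         # size (pixels)
    height: float
    gaze_yaw: float      # eyeball gaze angle (degrees)
    gaze_pitch: float


@dataclass
class HeadParams:
    roll: float          # three-axis attitude angles (degrees)
    pitch: float
    yaw: float
    accel_x: float       # acceleration (m/s^2)
    accel_y: float
    accel_z: float


@dataclass
class FeatureReference:
    eyes: Optional[Tuple[EyeParams, EyeParams]] = None  # (left eye, right eye)
    head: Optional[HeadParams] = None

    def to_vector(self) -> np.ndarray:
        """Flatten to 20 values: 12 eye, 6 head, then 2 presence flags."""
        eye_part = np.zeros(12)
        head_part = np.zeros(6)
        if self.eyes is not None:
            eye_part = np.array(astuple(self.eyes[0]) + astuple(self.eyes[1]), dtype=float)
        if self.head is not None:
            head_part = np.array(astuple(self.head), dtype=float)
        flags = np.array([self.eyes is not None, self.head is not None], dtype=float)
        return np.concatenate([eye_part, head_part, flags])
```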

That is, the sample training data set used to train the portrait processing model may include multiple original images, multiple restored images corresponding to them, and at least one piece of feature reference information. The original images are collected from at least one sample user. A first original image includes a first sample user, and the aforementioned part of that user's face is occluded by a VR apparatus. A first restored image includes the complete face of the first sample user. The at least one sample user includes the first sample user, and the feature reference information includes feature reference information for each of the at least one sample user.

With the portrait processing method provided by the embodiments of the present application, inputting the first image together with the feature reference information into the portrait processing model supplies more portrait-related features. This can improve the fidelity and realism of the restored occluded region.

Optionally, the portrait processing apparatus may obtain the feature reference information in various manners; this is not limited in the embodiments of the present application.

(1) Obtaining the eye feature parameters

In a first possible implementation, the portrait processing apparatus may receive a fourth image captured by a second camera apparatus, which is a camera built into the VR apparatus. The fourth image includes both of the user's eyes, and the portrait processing apparatus may extract the eye feature parameters from it.
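The text does not specify how eye feature parameters are extracted from the fourth image. One simple way to get position and size, assuming a dark-pupil IR setup in which the pupil is the darkest blob in a single-eye crop, is to threshold the image and take the centroid and equivalent diameter of the dark pixels. `estimate_pupil` and its threshold are hypothetical. Gaze angle would additionally need a per-user calibration and is not shown.

```python
from typing import Optional, Tuple

import numpy as np


def estimate_pupil(eye_ir: np.ndarray, threshold: int = 40) -> Optional[Tuple[float, float, float]]:
    """Return (cx, cy, diameter) of the dark pupil blob in pixels, or None if not found."""
    mask = eye_ir < threshold          # pupil assumed darker than iris and skin under IR
    area = int(mask.sum())
    if area == 0:
        return None
    ys, xs = np.nonzero(mask)
    cx, cy = xs.mean(), ys.mean()      # centroid of the dark pixels = eye position
    diameter = 2.0 * np.sqrt(area / np.pi)  # diameter of a circle with the same area = size
    return float(cx), float(cy), float(diameter)
```

This approach breaks down with eyelashes, reflections, or partially closed eyes, which is one reason a learned detector or dedicated eye-tracking hardware is common in practice.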

It should be noted that the second camera apparatus may be a camera built into the VR apparatus, for example, a built-in infrared (IR) camera.

For example, as shown in FIG. 4, the second camera apparatus may be the camera apparatus 150 built into the VR apparatus 110 shown in FIG. 1.

Optionally, the embodiments are described only using the example of a second camera apparatus built into the VR apparatus. The second camera apparatus may alternatively be a camera placed elsewhere that can capture real images of the facial region occluded by the VR apparatus; this is not limited in the embodiments of the present application.

In a second possible implementation, the portrait processing apparatus may extract the eye feature parameters from multiple locally stored images of the user that include the user's eyes.

It should be noted that these images may be photos containing the user's face taken in daily life, such as selfies.

In a third possible implementation, the portrait processing apparatus may build a facial feature database for the user from photos the user takes in daily life. The database includes feature parameters of each facial feature, such as the eye feature parameters, which the portrait processing apparatus may retrieve from the database.
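A minimal in-memory stand-in for the facial feature database could look like the sketch below. It assumes that each everyday photo has already been reduced to a numeric vector of eye feature parameters and that averaging across photos gives an acceptable per-user value. The class and method names are hypothetical, and a real system might store the data on disk or in the cloud instead.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Sequence

import numpy as np


class FaceFeatureDB:
    """Hypothetical per-user store of eye feature parameters measured from photos."""

    def __init__(self) -> None:
        self._eye_samples: Dict[str, List[np.ndarray]] = defaultdict(list)

    def add_photo_measurement(self, user_id: str, eye_params: Sequence[float]) -> None:
        self._eye_samples[user_id].append(np.asarray(eye_params, dtype=float))

    def eye_params(self, user_id: str) -> Optional[np.ndarray]:
        """Average of all stored measurements, or None for an unknown user."""
        samples = self._eye_samples.get(user_id)  # .get() does not create an empty entry
        if not samples:
            return None
        return np.mean(samples, axis=0)
```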

In a fourth possible implementation, the portrait processing apparatus may receive the eye feature parameters from another measurement apparatus, such as an eye-tracking sensor.

Optionally, the multiple images and the facial feature database may also be stored in the cloud, and the portrait processing apparatus may request them from the cloud over a network; this is not limited in the embodiments of the present application.

It should be noted that the VR apparatus mainly occludes the user's eye region. Therefore, when the portrait processing model restores the occluded content of the first image, incorporating the user's eye feature parameters can improve the fidelity and realism of the restored content.

(2) Obtaining the head feature parameters

In a possible implementation, the portrait processing apparatus may receive the head feature parameters measured by an inertial measurement apparatus.

It should be noted that an inertial measurement apparatus, also called an inertial measurement unit (IMU), is a device that measures the three-axis attitude angles (or angular rates) and the acceleration of an object. Typically, an IMU contains three single-axis accelerometers and three single-axis gyroscopes. The accelerometers detect the object's acceleration along the three independent axes of the body (carrier) coordinate system, and the gyroscopes detect the body's angular velocity relative to the navigation coordinate system. The object's attitude is then computed from its measured angular velocity and acceleration in three-dimensional space.
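The text does not say how attitude is computed from the raw IMU signals. A common lightweight choice, shown here only as an assumption, is a complementary filter. It integrates the gyroscope rates for short-term accuracy and pulls roll and pitch toward the gravity direction measured by the accelerometer to cancel drift. The axis conventions and `alpha` are illustrative, and adding body rates directly to the angles is a small-angle approximation.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def complementary_filter(prev: Vec3, gyro: Vec3, accel: Vec3, dt: float,
                         alpha: float = 0.98) -> Vec3:
    """One update step.

    prev  = (roll, pitch, yaw) in radians
    gyro  = (wx, wy, wz) angular rates in rad/s
    accel = (ax, ay, az) in m/s^2, reading about +9.81 on z when level and still
    """
    roll, pitch, yaw = prev
    wx, wy, wz = gyro
    ax, ay, az = accel
    # Gravity direction gives absolute roll/pitch (valid while the head is not accelerating much)
    roll_acc = math.atan2(ay, az)
    pitch_acc = math.atan2(-ax, math.hypot(ay, az))
    # Blend integrated gyro rates (smooth but drifting) with accelerometer angles (noisy, no drift)
    roll = alpha * (roll + wx * dt) + (1.0 - alpha) * roll_acc
    pitch = alpha * (pitch + wy * dt) + (1.0 - alpha) * pitch_acc
    # Gravity carries no yaw information, so yaw is gyro-only and will drift
    yaw = yaw + wz * dt
    return roll, pitch, yaw
```

Correcting yaw drift would need a further reference, such as a magnetometer. The acceleration part of the head feature parameters can be taken directly from the accelerometer readings.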

Optionally, the inertial measurement apparatus may be a standalone measurement device fixed to the user's head, or may be integrated into the VR apparatus; this is not limited in the embodiments of the present application.

It should be noted that the user's head may be moving, that is, it may have a velocity and a rotation angle, while the portrait processing model restores the occluded content of the first image. Incorporating the user's head feature parameters can therefore further improve the fidelity and realism of the restored content.

Optionally, the feature reference information may further include other parameters that describe the user's portrait, such as nose feature parameters, face-shape feature parameters, and hairstyle feature parameters; this is not limited in the embodiments of the present application.

S240: The portrait processing apparatus sends the second image to the receiving end; correspondingly, the receiving end receives the second image sent by the portrait processing apparatus.

Optionally, in S240, the portrait processing apparatus may send the second image to the receiving end. Alternatively, it may send a target image obtained by compositing or stitching the second image, or a video stream that includes the second image or the target image. This is not limited in the embodiments of the present application.

Correspondingly, in S240, the portrait processing apparatus may send the receiving end the second image itself, or a target image or video stream containing the second image.

Optionally, before S240, the portrait processing apparatus may generate the target image in various manners; this is not limited in the embodiments of the present application.

In a first possible implementation, the portrait processing apparatus may stitch the second image and a fifth image to obtain the target image, where the fifth image is the image that remains after the first image is cropped out of the third image.

In a second possible implementation, the portrait processing apparatus may composite the second image with the third image to obtain the target image, where the second image is overlaid on top of the first image within the third image.
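The two ways of building the target image described above can be sketched with NumPy. The sketch assumes that the model output (the second image) has the same size as the cropped region and that the crop box was remembered. The function names, the box convention, and the optional feathering mask are illustrative assumptions.

```python
from typing import Optional, Tuple

import numpy as np

Box = Tuple[int, int, int, int]  # (top, left, height, width) where the first image was cropped


def stitch(fifth_image: np.ndarray, second_image: np.ndarray, box: Box) -> np.ndarray:
    """First variant: fill the region left empty by cropping with the restored face."""
    top, left, h, w = box
    if second_image.shape[:2] != (h, w):
        raise ValueError("second image must match the cropped region size")
    target = fifth_image.copy()
    target[top:top + h, left:left + w] = second_image
    return target


def overlay(third_image: np.ndarray, second_image: np.ndarray, box: Box,
            alpha: Optional[np.ndarray] = None) -> np.ndarray:
    """Second variant: draw the restored face as an upper layer over the original frame.

    alpha is an optional per-pixel opacity in [0, 1], for example to feather the
    edges; None means the upper layer fully covers the region.
    """
    top, left, h, w = box
    if second_image.shape[:2] != (h, w):
        raise ValueError("second image must match the cropped region size")
    target = third_image.astype(np.float64)
    under = target[top:top + h, left:left + w]
    a = np.ones((h, w)) if alpha is None else np.asarray(alpha, dtype=np.float64)
    if under.ndim == 3:
        a = a[..., None]  # broadcast one opacity value over the colour channels
    target[top:top + h, left:left + w] = a * second_image + (1.0 - a) * under
    return np.rint(target).astype(third_image.dtype)
```

When the upper layer fully covers the region, both variants produce the same frame. The layered form simply makes it easier to blend the seam between the restored face and its surroundings.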

Optionally, before S240, the portrait processing apparatus may generate the video stream in various manners; this is not limited in the embodiments of the present application.

In a possible implementation, the portrait processing apparatus may video-encode the second image to obtain a video image, and then obtain from the video image a video stream that includes it.

It should be noted that the restored second image shows the user's eye region that is occluded by the VR apparatus, whereas in reality the user is wearing the VR apparatus. Therefore, the second image differs to some extent from the actual situation.

In a possible implementation, as shown in FIG. 5, the portrait processing apparatus may superimpose an eye-mask layer over the user's eye region in the second image, where the eye-mask layer is rendered see-through at a first transparency.

It should further be noted that the first transparency should be chosen so that viewers can tell the user is wearing the VR apparatus while still clearly seeing the eye region occluded beneath it. The specific value of the first transparency is not limited in the embodiments of the present application.
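The eye-mask layer amounts to alpha blending over the eye region. The minimal sketch below assumes an RGB NumPy image, a known eye-region box, and a flat mask colour standing in for the headset. The text does not define the layer's appearance or how the first transparency maps to opacity, so here it is taken, as an assumption, to be the fraction of the restored eyes that stays visible.

```python
from typing import Sequence, Tuple

import numpy as np


def add_eye_mask(second_image: np.ndarray, eye_box: Tuple[int, int, int, int],
                 transparency: float, mask_color: Sequence[int] = (40, 40, 40)) -> np.ndarray:
    """Blend a flat headset-coloured layer over the eye region of an RGB image.

    transparency is the fraction of the underlying (restored) eyes that stays
    visible: 1.0 means no visible mask, 0.0 means an opaque mask.
    """
    if not 0.0 <= transparency <= 1.0:
        raise ValueError("transparency must be in [0, 1]")
    top, left, h, w = eye_box
    out = second_image.astype(np.float64)
    region = out[top:top + h, left:left + w]
    color = np.asarray(mask_color, dtype=np.float64)  # broadcasts over the region's pixels
    out[top:top + h, left:left + w] = transparency * region + (1.0 - transparency) * color
    return np.rint(out).astype(second_image.dtype)
```

A value somewhere in the middle of the range would match the stated goal: the headset remains visible, but the restored eyes can still be seen through it.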

With the portrait processing method provided by the embodiments of the present application, superimposing the see-through eye-mask layer over the user's eye region in the second image simulates the VR apparatus the user is wearing, making the restoration more realistic.

It should be noted that the portrait processing apparatus may be a terminal. To implement the foregoing functions, the terminal includes corresponding hardware and/or software modules for performing each function. In combination with the algorithm steps of the examples described in the embodiments disclosed herein, the present application can be implemented in hardware or in a combination of hardware and computer software. Whether a function is performed by hardware or by computer software driving hardware depends on the particular application and the design constraints of the technical solution. A person skilled in the art may use different methods to implement the described functions for each particular application in combination with the embodiments, but such implementations should not be considered to go beyond the scope of the present application.

In this embodiment, the terminal may be divided into functional modules according to the foregoing method examples. For example, each functional module may correspond to one function, or two or more functions may be integrated into one processing module. The integrated module may be implemented in hardware. It should be noted that the module division in this embodiment is illustrative and is merely a logical function division; other division manners may be used in actual implementations.

When each functional module corresponds to one function, FIG. 6 shows a possible schematic composition of the portrait processing apparatus 300 involved in the above embodiments. As shown in FIG. 6, the apparatus 300 may include a transceiver unit 310 and a processing unit 320.

The processing unit 320 may control the transceiver unit 310 to implement the method described in the embodiments of method 200 above, and/or other processes of the techniques described herein.
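As a rough illustration of the module division in FIG. 6, the two units can be modeled as cooperating objects. This is a sketch under stated assumptions: every class and method name is hypothetical, frames are modeled as `bytes`, and `process` is a placeholder for whatever steps of method 200 the processing unit runs; none of it is taken from the patent.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TransceiverUnit:
    """Hypothetical model of transceiver unit 310: buffers received and outgoing frames."""
    inbox: List[bytes] = field(default_factory=list)
    outbox: List[bytes] = field(default_factory=list)

    def receive(self) -> bytes:
        return self.inbox.pop(0)

    def send(self, frame: bytes) -> None:
        self.outbox.append(frame)


@dataclass
class ProcessingUnit:
    """Hypothetical model of processing unit 320: controls the transceiver unit."""
    transceiver: TransceiverUnit
    process: Callable[[bytes], bytes]  # placeholder for the steps of method 200

    def run_once(self) -> None:
        frame = self.transceiver.receive()
        self.transceiver.send(self.process(frame))


@dataclass
class PortraitProcessingApparatus:
    """Apparatus 300 built from the two functional units of FIG. 6."""
    transceiver_unit: TransceiverUnit
    processing_unit: ProcessingUnit


tx = TransceiverUnit(inbox=[b"frame-1"])
apparatus = PortraitProcessingApparatus(tx, ProcessingUnit(tx, process=bytes.upper))
apparatus.processing_unit.run_once()
print(tx.outbox)  # [b'FRAME-1']
```

Injecting `process` as a callable keeps the unit boundaries fixed while leaving the actual portrait-processing steps open, which mirrors the note that the module division is only a logical one.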

It should be noted that all relevant content of the steps involved in the above method embodiments may be cited in the functional descriptions of the corresponding functional modules, and is not repeated here.

The apparatus 300 provided in this embodiment is configured to perform the above portrait processing method, and can therefore achieve the same effects as the above method.

When integrated units are used, the apparatus 300 may include a processing module, a storage module, and a communication module. The processing module may be used to control and manage the actions of the apparatus 300; for example, it may be used to support the apparatus 300 in performing the steps performed by the units described above. The storage module may be used to support the apparatus 300 in storing program code, data, and the like. The communication module may be used for communication between the apparatus 300 and other devices.

The processing module may be a processor or a controller, which may implement or execute the various exemplary logical blocks, modules, and circuits described in connection with the disclosure of the present application. The processor may also be a combination that implements computing functions, for example, a combination of one or more microprocessors, or a combination of a digital signal processor (DSP) and a microprocessor. The storage module may be a memory. The communication module may specifically be a device that interacts with other terminals, such as a radio frequency circuit, a Bluetooth chip, or a Wi-Fi chip.

In a possible implementation, the apparatus 300 involved in the embodiments of the present application may be a terminal.

It should be noted that the terminal described in the embodiments of the present application may be mobile or fixed. For example, the terminal may be a mobile phone, a camera, a video camera, a tablet personal computer, a smart TV, a laptop computer, a personal digital assistant (PDA), a personal computer, or a wearable device such as a smart watch; this is not limited in the embodiments of the present application.

Taking a mobile phone as an example of the terminal, FIG. 7 shows a schematic structural diagram of the mobile phone 400. As shown in FIG. 7, the mobile phone 400 may include a processor 410, an external memory interface 420, an internal memory 421, a universal serial bus (USB) interface 430, a charging management module 440, a power management module 441, a battery 442, an antenna 1, an antenna 2, a mobile communication module 450, a wireless communication module 460, an audio module 470, a speaker 470A, a receiver 470B, a microphone 470C, a headset jack 470D, a sensor module 480, buttons 490, a motor 491, an indicator 492, a camera 493, a display 494, a subscriber identification module (SIM) card interface 495, and the like.

It can be understood that the structure illustrated in the embodiments of the present application does not constitute a specific limitation on the mobile phone 400. In other embodiments of the present application, the mobile phone 400 may include more or fewer components than shown, combine or split some components, or use a different component arrangement. The illustrated components may be implemented in hardware, software, or a combination of software and hardware.

The processor 410 may include one or more processing units. For example, the processor 410 may include an application processor (AP), a modem processor, a graphics processing unit (GPU), an image signal processor (ISP), a controller, a video codec, a digital signal processor (DSP), a baseband processor, and/or a neural-network processing unit (NPU). Different processing units may be independent components or may be integrated into one or more processors. In some embodiments, the mobile phone 400 may also include one or more processors 410. The controller may generate operation control signals based on instruction operation codes and timing signals, to control instruction fetching and execution. In some other embodiments, a memory may also be provided in the processor 410 to store instructions and data. For example, the memory in the processor 410 may be a cache. This memory may hold instructions or data that the processor 410 has just used or uses cyclically. If the processor 410 needs those instructions or data again, it can call them directly from this memory. This avoids repeated accesses and reduces the waiting time of the processor 410, thereby improving the efficiency with which the mobile phone 400 processes data or executes instructions.
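The benefit of keeping recently used instructions or data close to the processor can be illustrated with a toy software cache. This is an analogy under stated assumptions: least-recently-used (LRU) replacement and a small fixed number of entries are chosen for illustration, and the class name is hypothetical; the patent does not describe how the cache in the processor 410 is organized.

```python
from collections import OrderedDict
from typing import Callable, Hashable


class TinyCache:
    """Toy LRU cache placed in front of a slower backing memory."""

    def __init__(self, capacity: int, backing_read: Callable[[Hashable], object]):
        self.capacity = capacity
        self.backing_read = backing_read        # slow path, e.g. main memory
        self.lines: "OrderedDict[Hashable, object]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, address: Hashable) -> object:
        if address in self.lines:
            self.hits += 1
            self.lines.move_to_end(address)     # mark as most recently used
            return self.lines[address]
        self.misses += 1
        value = self.backing_read(address)      # fetch from backing memory
        self.lines[address] = value
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)      # evict least recently used entry
        return value


main_memory = {addr: addr * 10 for addr in range(8)}
cache = TinyCache(capacity=2, backing_read=main_memory.__getitem__)
for addr in [0, 1, 0, 0, 1]:
    cache.read(addr)
print(cache.hits, cache.misses)  # 3 2
```

Only the first access to each address goes to the backing memory; the repeated accesses are served from the cache, which is the "avoids repeated accesses" effect described above.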

In some embodiments, the processor 410 may include one or more interfaces. The interfaces may include an inter-integrated circuit (I2C) interface, an inter-integrated circuit sound (I2S) interface, a pulse code modulation (PCM) interface, a universal asynchronous receiver/transmitter (UART) interface, a mobile industry processor interface (MIPI), a general-purpose input/output (GPIO) interface, a SIM card interface, and/or a USB interface. The USB interface 430 is an interface that complies with the USB standard specification, and may specifically be a Mini USB interface, a Micro USB interface, a USB Type-C interface, or the like. The USB interface 430 may be used to connect a charger to charge the mobile phone 400, or to transfer data between the mobile phone 400 and peripheral devices. It may also be used to connect a headset and play audio through the headset.

It can be understood that the interface connection relationships between the modules illustrated in the embodiments of the present application are merely illustrative and do not constitute a structural limitation on the mobile phone 400. In other embodiments of the present application, the mobile phone 400 may also use interface connection manners different from those in the foregoing embodiments, or a combination of multiple interface connection manners.

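The charging management module 440 is configured to receive charging input from a charger, which may be a wireless charger or a wired charger. In some wired charging embodiments, the charging management module 440 may receive charging input from a wired charger through the USB interface 430. In some wireless charging embodiments, the charging management module 440 may receive wireless charging input through a wireless charging coil of the mobile phone 400. While charging the battery 442, the charging management module 440 may also supply power to the mobile phone through the power management module 441.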

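The power management module 441 is configured to connect the battery 442 and the charging management module 440 to the processor 410. The power management module 441 receives input from the battery 442 and/or the charging management module 440, and supplies power to the processor 410, the internal memory 421, the external memory, the display 494, the camera 493, the wireless communication module 460, and the like. The power management module 441 may also be used to monitor parameters such as battery capacity, battery cycle count, and battery health (leakage, impedance). In some other embodiments, the power management module 441 may be disposed in the processor 410. In still other embodiments, the power management module 441 and the charging management module 440 may be disposed in the same component.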

The wireless communication function of the mobile phone 400 may be implemented by the antenna 1, the antenna 2, the mobile communication module 450, the wireless communication module 460, the modem processor, the baseband processor, and the like.

The antenna 1 and the antenna 2 are configured to transmit and receive electromagnetic wave signals. Each antenna in the mobile phone 400 may be used to cover one or more communication frequency bands. Different antennas may also be multiplexed to improve antenna utilization. For example, the antenna 1 may be multiplexed as a diversity antenna for a wireless local area network. In some other embodiments, an antenna may be used in combination with a tuning switch.

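The mobile communication module 450 may provide wireless communication solutions applied to the mobile phone 400, including 2G/3G/4G/5G. The mobile communication module 450 may include at least one filter, a switch, a power amplifier, a low noise amplifier (LNA), and the like. The mobile communication module 450 may receive electromagnetic waves through the antenna 1, filter and amplify the received waves, and transmit them to the modem processor for demodulation. The mobile communication module 450 may also amplify a signal modulated by the modem processor and convert it into electromagnetic waves radiated through the antenna 1. In some embodiments, at least some functional modules of the mobile communication module 450 may be disposed in the processor 410. In some embodiments, at least some functional modules of the mobile communication module 450 may be disposed in the same component as at least some modules of the processor 410.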

The wireless communication module 460 may provide wireless communication solutions applied to the mobile phone 400, including wireless local area networks (WLAN) (such as wireless fidelity (Wi-Fi) networks), Bluetooth (BT), global navigation satellite system (GNSS), frequency modulation (FM), near field communication (NFC), and infrared (IR).

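Optionally, the wireless communication module 460 may be one or more components integrating at least one communication processing module. One communication processing module may correspond to one network interface, which may be set to different service function modes; a network interface set to a given mode can establish the network connection corresponding to that mode.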

For example, a network connection supporting the P2P function can be established through a network interface in P2P mode, a network connection supporting the STA function can be established through a network interface in STA mode, and a network connection supporting the AP function can be established through a network interface in AP mode.
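The mode-per-interface arrangement can be sketched as a small registry that maps each network interface to its configured service function mode. The class names and the Linux-style interface names `wlan0` and `p2p0` are hypothetical and used only for illustration; the patent does not specify how interfaces are named or selected.

```python
from enum import Enum
from typing import Dict, Optional


class InterfaceMode(Enum):
    P2P = "p2p"  # direct device-to-device link
    STA = "sta"  # station: joins an existing access point
    AP = "ap"    # access point: other devices join this device


class WirelessModule:
    """Hypothetical model: each communication processing module owns one network interface."""

    def __init__(self) -> None:
        self.interfaces: Dict[str, InterfaceMode] = {}

    def configure(self, name: str, mode: InterfaceMode) -> None:
        self.interfaces[name] = mode  # set (or switch) the interface's service function mode

    def interface_for(self, mode: InterfaceMode) -> Optional[str]:
        """Return an interface able to establish a connection of the given mode, if any."""
        for name, configured in self.interfaces.items():
            if configured is mode:
                return name
        return None


module = WirelessModule()
module.configure("wlan0", InterfaceMode.STA)
module.configure("p2p0", InterfaceMode.P2P)
print(module.interface_for(InterfaceMode.P2P))  # p2p0
print(module.interface_for(InterfaceMode.AP))   # None
```

A connection of a given kind can only be set up once some interface has been configured in the matching mode, which is why the AP lookup returns `None` here.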

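The wireless communication module 460 receives electromagnetic waves via the antenna 2, performs frequency modulation and filtering on the electromagnetic wave signals, and sends the processed signals to the processor 410. The wireless communication module 460 may also receive signals to be sent from the processor 410, perform frequency modulation and amplification on them, and convert them into electromagnetic waves radiated through the antenna 2.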

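The mobile phone 400 implements the display function through the GPU, the display 494, the application processor, and the like. The GPU is a microprocessor for image processing and connects the display 494 and the application processor. The GPU is used to perform mathematical and geometric calculations for graphics rendering. The processor 410 may include one or more GPUs that execute program instructions to generate or change display information.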

The display 494 is used to display images, videos, and the like. The display 494 includes a display panel. The display panel may use a liquid crystal display (LCD), an organic light-emitting diode (OLED), an active-matrix organic light-emitting diode (AMOLED), a flexible light-emitting diode (FLED), a Mini LED, a Micro LED, a Micro OLED, a quantum dot light-emitting diode (QLED), or the like. In some embodiments, the mobile phone 400 may include one or more displays 494.

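In some embodiments of the present application, when the display panel uses materials such as OLED, AMOLED, or FLED, the display 494 in FIG. 7 can be folded. Here, saying that the display 494 can be folded means that the display can be bent to any angle at any position and held at that angle; for example, the display 494 can be folded in half left-to-right along its middle, or top-to-bottom along its middle. In the present application, a display that can be folded is called a foldable display. The display 494, a touch display, may be a single screen or a display composed of multiple screens pieced together, which is not limited here.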

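The display 494 of the mobile phone 400 may be a flexible screen. Flexible screens currently attract wide attention for their unique characteristics and great potential. Compared with traditional screens, flexible screens are highly pliable and bendable, can offer users new interaction modes based on their bendability, and can meet more user needs for mobile phones. On a mobile phone configured with a foldable display, the display can switch at any time between a small screen in the folded state and a large screen in the unfolded state. As a result, users use the split-screen function on mobile phones with foldable displays more and more frequently.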

The mobile phone 400 may implement the shooting function through the ISP, the camera 493, the video codec, the GPU, the display 494, the application processor, and the like.

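The ISP is used to process data fed back by the camera 493. For example, when a photo is taken, the shutter opens, light is transmitted through the lens to the camera's photosensitive element, the optical signal is converted into an electrical signal, and the photosensitive element passes the electrical signal to the ISP, which converts it into an image visible to the naked eye. The ISP may also apply algorithmic optimization to image noise, brightness, and skin tone, and may optimize parameters such as the exposure and color temperature of the shooting scene. In some embodiments, the ISP may be disposed in the camera 493.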
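Color-temperature optimization in an ISP is often done through an automatic white balance step. The patent does not say which algorithm the ISP uses; the gray-world method below is one well-known choice, shown as a minimal sketch on 8-bit RGB tuples. It assumes the scene averages to neutral gray and scales each channel so the three channel means become equal.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]


def gray_world_white_balance(pixels: List[RGB]) -> List[RGB]:
    """Scale R, G, B so that their means are equal (gray-world assumption)."""
    if not pixels:
        return []
    n = len(pixels)
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3  # target mean shared by all three channels
    gains = [gray / m if m > 0 else 1.0 for m in means]
    return [tuple(min(255, round(p[c] * gains[c])) for c in range(3)) for p in pixels]


# A reddish cast: the red channel mean is twice the green and blue means.
print(gray_world_white_balance([(200, 100, 100), (100, 50, 50)]))
# [(133, 133, 133), (67, 67, 67)]
```

Real ISPs typically estimate gains on raw sensor data and combine several cues; this sketch only shows the per-channel gain idea.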

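The camera 493 is used to capture still images or videos. An object generates an optical image through the lens, which is projected onto the photosensitive element. The photosensitive element may be a charge coupled device (CCD) or a complementary metal-oxide-semiconductor (CMOS) phototransistor. The photosensitive element converts the optical signal into an electrical signal and then passes the electrical signal to the ISP to be converted into a digital image signal. The ISP outputs the digital image signal to the DSP for processing. The DSP converts the digital image signal into an image signal in a standard format such as RGB or YUV. In some embodiments, the mobile phone 400 may include one or more cameras 493.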
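The conversion into a standard format can be illustrated with the full-range BT.601 (JFIF) RGB-to-YCbCr equations, a common digital form of "YUV". Which matrix and value range a given DSP uses is an assumption here; the sketch converts a single 8-bit pixel.

```python
from typing import Tuple


def _to_byte(value: float) -> int:
    return max(0, min(255, round(value)))  # clamp to the 8-bit range


def rgb_to_ycbcr(r: int, g: int, b: int) -> Tuple[int, int, int]:
    """Full-range BT.601 (JFIF) conversion of one 8-bit RGB pixel."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return _to_byte(y), _to_byte(cb), _to_byte(cr)


print(rgb_to_ycbcr(255, 255, 255))  # (255, 128, 128)
print(rgb_to_ycbcr(0, 0, 0))        # (0, 128, 128)
```

Neutral colors map to chroma values of 128, so only the luma channel Y carries their brightness.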

The digital signal processor is used to process digital signals; in addition to digital image signals, it can process other digital signals. For example, when the mobile phone 400 performs frequency point selection, the digital signal processor is used to perform a Fourier transform on the frequency point energy.
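Frequency point selection can be sketched with a direct discrete Fourier transform that measures the energy in each frequency bin and picks the strongest one. The function names, the use of a plain O(n²) DFT rather than an FFT, and the "largest energy wins" selection rule are assumptions for illustration; the patent does not detail the DSP algorithm.

```python
import cmath
import math
from typing import List, Sequence


def bin_energies(samples: Sequence[float]) -> List[float]:
    """Energy |X[k]|^2 of each DFT bin k = 0..n/2 (direct O(n^2) DFT)."""
    n = len(samples)
    energies = []
    for k in range(n // 2 + 1):
        x_k = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        energies.append(abs(x_k) ** 2)
    return energies


def strongest_frequency(samples: Sequence[float], sample_rate: float) -> float:
    """Frequency (Hz) of the bin with the most energy."""
    energies = bin_energies(samples)
    k_best = max(range(len(energies)), key=energies.__getitem__)
    return k_best * sample_rate / len(samples)


# 16 samples at 16 Hz of a 3 Hz cosine: all energy lands in bin 3.
tone = [math.cos(2 * math.pi * 3 * t / 16) for t in range(16)]
print(strongest_frequency(tone, sample_rate=16.0))  # 3.0
```

Bin k corresponds to the frequency k × sample_rate / n, so the index of the most energetic bin converts directly to a frequency in hertz.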

The video codec is used to compress or decompress digital video. The mobile phone 400 may support one or more video codecs, so that it can play or record videos in multiple encoding formats, such as Moving Picture Experts Group (MPEG)-1, MPEG-2, and MPEG-4.

The NPU is a neural-network (NN) computing processor. By drawing on the structure of biological neural networks, for example, the way signals are transferred between neurons in the human brain, it processes input information quickly and can also learn continuously. Applications such as intelligent cognition on the mobile phone 400, for example image recognition, face recognition, speech recognition, and text understanding, can be implemented through the NPU.

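The external memory interface 420 may be used to connect an external memory card, such as a Micro SD card, to expand the storage capacity of the mobile phone 400. The external memory card communicates with the processor 410 through the external memory interface 420 to implement a data storage function, for example, saving files such as music and videos on the external memory card.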