Disclosure of Invention

The invention aims to provide a method for constructing a hierarchical colored drawing cultural relic line draft extraction model based on FDoG and a neural network, together with a corresponding line draft extraction method, so as to solve the problem that prior-art line draft extraction methods for colored drawing cultural relics yield low-quality line drafts against complex backgrounds affected by disease (deterioration such as flaking, cracks and stains).

In order to realize the task, the invention adopts the following technical scheme:

a method for constructing a hierarchical colored drawing cultural relic line draft extraction model is implemented according to the following steps:

step 1, collecting a plurality of colored drawing cultural relic images to obtain a first sample set;

performing edge extraction on the line draft image of each colored drawing cultural relic image in the first sample set by adopting an FDoG algorithm to obtain an FDoG label set;

acquiring a line draft image of each colored drawing cultural relic image in a first sample set, and acquiring a first line draft label set;

step 2, taking the first sample set as input, taking the FDoG label set and the first line draft label set as reference output, training an edge extraction network, and obtaining a coarse extraction model;

the edge extraction network is obtained by training a BDCN edge detection network by taking an edge data set as input and an edge label set as reference output;

the edge data set comprises a plurality of natural images, and the edge label set comprises an edge label image corresponding to each natural image in the edge data set and an FDoG edge label image obtained after edge extraction is carried out on each natural image in the edge data set;

step 3, collecting a plurality of color-painted cultural relic images to obtain a second sample set; wherein each painted cultural relic image in the second sample set is different from the painted cultural relic image in the first sample set;

acquiring a line draft image of each colored drawing cultural relic image in the second sample set, and acquiring a second line draft label set;

step 4, inputting the second sample set obtained in step 3 into the coarse extraction model obtained in step 2 to obtain a coarse line draft image set;

step 5, taking the coarse line draft image set as input and the second line draft label set as reference output, training a convolutional neural network to obtain a fine line draft extraction model;

step 6, obtaining a line draft extraction model, wherein the line draft extraction model comprises the coarse extraction model obtained in step 2 and the fine line draft extraction model obtained in step 5, connected in sequence.

Further, the convolutional neural network in the step 5 comprises a U-net network, a side output layer and a fusion output layer which are sequentially arranged;

the side output layer comprises a first convolution layer, an up-sampling layer and a first activation function layer which are sequentially arranged; the fusion output layer comprises a second convolution layer and a second activation function layer which are arranged in sequence.

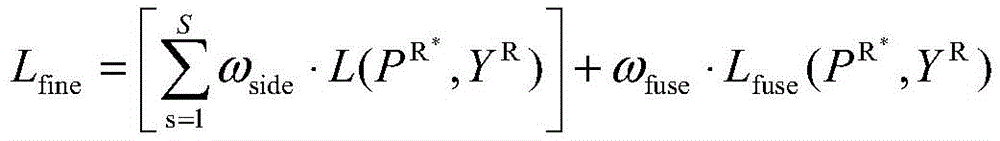

Further, when the convolutional neural network is trained in step 5, a stochastic gradient descent algorithm is adopted for training, wherein the loss function $L_{fine}$ is:

$$L_{fine} = \sum_{s=1}^{S} \omega_{side}^{s}\, L_{side}\big(\hat{Y}_{R}^{s}, Y_{R}\big) + \omega_{fuse}\, L_{fuse}\big(\hat{Y}_{R}^{fuse}, Y_{R}\big)$$

wherein $\omega_{side}^{s}$ represents the weight in the loss function of the feature at scale $s$, and $\omega_{fuse}$ represents the weight of the fusion feature in the loss function; $s$ denotes the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $L_{side}$ is the single-channel loss function and $L_{fuse}$ is the fusion loss function; $\hat{Y}_{R}$ represents the image output after a coarse line draft image is input into the fine line draft extraction model, the superscript $s$ marking the side output at scale $s$ and the superscript $fuse$ the fused output; $Y_{R}$ represents the line draft label image corresponding to the coarse line draft image, and $R$ denotes the coarse line draft image.

Further, when the BDCN edge detection network is trained with the edge data set and the edge label set in step 2, the loss function $L_{pre}$ is:

$$L_{pre} = \sum_{s=1}^{S} \Big[ \alpha\, L\big(P_{N}^{s}, Y_{N}^{edge}\big) + \beta\, L\big(P_{N}^{s}, Y_{N}^{FDoG}\big) \Big]$$

where $s$ denotes the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $\alpha$ is the weight of the edge label in the objective function and $\beta$ is the weight of the FDoG edge label; $L(\cdot,\cdot)$ is the per-scale loss between an output image and a label image; $P_{N}$ represents the output image after the natural image $N$ is input to the BDCN edge detection network, with $P_{N}^{s}$ its output at scale $s$; $Y_{N}^{edge}$ represents the edge label image corresponding to the natural image $N$, and $Y_{N}^{FDoG}$ represents the FDoG edge label image corresponding to the natural image $N$.

A method for extracting line draft of a layered colored drawing cultural relic comprises the steps of inputting a colored drawing cultural relic image to be extracted into a line draft extraction model obtained by a method for constructing the layered colored drawing cultural relic line draft extraction model, and obtaining a colored drawing cultural relic line draft image.

A device for constructing a hierarchical colored drawing cultural relic line draft extraction model comprises a first data set construction module, a coarse extraction model obtaining module, a second data set construction module, a coarse line draft extraction module, a fine extraction model obtaining module and a line draft extraction model obtaining module;

the first data set construction module is used for acquiring a plurality of colored drawing cultural relic images to obtain a first sample set;

performing edge extraction on the line draft image of each colored drawing cultural relic image in the first sample set by adopting an FDoG algorithm to obtain an FDoG label set;

acquiring a line draft image of each colored drawing cultural relic image in a first sample set, and acquiring a first line draft label set;

the coarse extraction model obtaining module is used for taking the first sample set as input and the FDoG label set and the first line draft label set as reference output, training an edge extraction network and obtaining a coarse extraction model;

the edge extraction network is obtained by training the BDCN edge detection network with an edge data set as input and an edge label set as reference output;

the edge data set comprises a plurality of natural images, and the edge label set comprises an edge label image corresponding to each natural image in the edge data set and an FDoG edge label image obtained after edge extraction of each natural image in the edge data set;

the second data set construction module is used for acquiring a plurality of colored drawing cultural relic images to obtain a second sample set; wherein each painted cultural relic image in the second sample set is different from the painted cultural relic image in the first sample set;

acquiring a line draft image of each colored drawing cultural relic image in a second sample set, and acquiring a second line draft label set;

the coarse line draft extraction module is used for inputting the obtained second sample set into the coarse extraction model to obtain a coarse line draft image set;

the fine extraction model obtaining module is used for taking the coarse line draft image set as input and the second line draft label set as reference output, training a convolutional neural network and obtaining a fine line draft extraction model;

the line draft extraction model obtaining module is used for obtaining a line draft extraction model, and the line draft extraction model comprises the coarse extraction model and the fine line draft extraction model connected in sequence.

Further, the convolutional neural network in the fine extraction model obtaining module comprises a U-net network, a side output layer and a fusion output layer which are sequentially arranged;

the side output layer comprises a first convolution layer, an up-sampling layer and a first activation function layer which are sequentially arranged; the fusion output layer comprises a second convolution layer and a second activation function layer which are arranged in sequence.

Further, when the convolutional neural network is trained in the fine extraction model obtaining module, a stochastic gradient descent algorithm is adopted for training, wherein the loss function $L_{fine}$ is:

$$L_{fine} = \sum_{s=1}^{S} \omega_{side}^{s}\, L_{side}\big(\hat{Y}_{R}^{s}, Y_{R}\big) + \omega_{fuse}\, L_{fuse}\big(\hat{Y}_{R}^{fuse}, Y_{R}\big)$$

wherein $\omega_{side}^{s}$ represents the weight in the loss function of the feature at scale $s$, and $\omega_{fuse}$ represents the weight of the fusion feature in the loss function; $s$ denotes the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $L_{side}$ is the single-channel loss function and $L_{fuse}$ is the fusion loss function; $\hat{Y}_{R}$ represents the image output after a coarse line draft image is input into the fine line draft extraction model, the superscript $s$ marking the side output at scale $s$ and the superscript $fuse$ the fused output; $Y_{R}$ represents the line draft label image corresponding to the coarse line draft image, and $R$ denotes the coarse line draft image.

Further, when the coarse extraction model obtaining module trains the BDCN edge detection network with the edge data set and the edge label set, the loss function $L_{pre}$ is:

$$L_{pre} = \sum_{s=1}^{S} \Big[ \alpha\, L\big(P_{N}^{s}, Y_{N}^{edge}\big) + \beta\, L\big(P_{N}^{s}, Y_{N}^{FDoG}\big) \Big]$$

where $s$ denotes the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $\alpha$ is the weight of the edge label in the objective function and $\beta$ is the weight of the FDoG edge label; $L(\cdot,\cdot)$ is the per-scale loss between an output image and a label image; $P_{N}$ represents the output image after the natural image $N$ is input to the BDCN edge detection network, with $P_{N}^{s}$ its output at scale $s$; $Y_{N}^{edge}$ represents the edge label image corresponding to the natural image $N$, and $Y_{N}^{FDoG}$ represents the FDoG edge label image corresponding to the natural image $N$.

A layered colored drawing cultural relic line draft extraction device is used for inputting the colored drawing cultural relic image to be extracted into a line draft extraction model obtained by a layered colored drawing cultural relic line draft extraction model construction device to obtain a colored drawing cultural relic line draft image.

Compared with the prior art, the invention has the following technical effects:

1. the invention provides a coarse line draft extraction method within the hierarchical FDoG and neural network approach, together with a method and a device for constructing the line draft extraction model. A new weighted loss function is designed that introduces the traditional FDoG algorithm into the BDCN edge detection neural network; the two are trained jointly, and transfer learning is performed from the edge detection task to the colored drawing cultural relic line draft extraction task. This combines the strength of the deep convolutional network in extracting coherent edge features with the strength of the traditional algorithm in extracting detail features, so that the coarsely extracted line draft is more complete and even lines partially broken by disease can be supplemented;

2. the invention provides a fine line draft extraction method within the hierarchical FDoG (flow-based difference of Gaussians) and neural network model. A newly designed multi-scale U-Net network up-samples the features of each decoder layer of the conventional U-Net network, which lie at different scales, to the size of the original image; a loss is computed on the feature at each scale and the features are fused, capturing rich multi-scale features of the disease. Disease noise that the traditional algorithm cannot remove is thereby effectively suppressed, and the line draft of the colored drawing cultural relic is further refined and restored;

3. the method and the device for hierarchical colored drawing cultural relic line draft extraction based on FDoG and a neural network divide line draft extraction into two steps. First, the transferability of the BDCN edge detection network is used to overcome the shortage of data, restore the complete edge and detail information of the line draft, supplement broken lines affected by disease, and extract the line draft coarsely. Then, with the coarse extraction as prior knowledge, the disease noise that the traditional algorithm introduced in the first step cannot eliminate and the line blurring caused by the BDCN model are suppressed. The two steps complement each other and combine the advantages of the BDCN edge detection network and the U-Net network, so that the extracted cultural relic line draft is coherent, complete, clear and clean, and is more comprehensive and accurate.

Detailed Description

The present invention will be described in detail below with reference to the accompanying drawings and examples, so that those skilled in the art can better understand it. Note that in the following description, detailed descriptions of known functions and designs are omitted where they would obscure the main content of the present invention.

The following definitions or conceptual connotations relating to the present invention are provided for illustration:

BDCN network: a deep-learning convolutional neural network structure used for the edge detection task.

FDoG algorithm: the flow-based difference-of-Gaussians filtering algorithm, a classic traditional edge detection operator. It preserves salient edges in the image and guides weak edges to follow the dominant direction of the salient edges in their neighborhood, thereby extracting details in the line draft and maximizing line continuity.
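To make the difference-of-Gaussians idea concrete, the following is a minimal sketch of a plain one-dimensional DoG response across a step edge. It is not the flow-based FDoG algorithm itself: the guidance along the edge flow is omitted, and the function names, the two sigma values and the factor `rho` are illustrative assumptions rather than values taken from this document.

```python
import math

def gaussian_kernel(sigma, radius):
    """Return a normalized 1-D Gaussian kernel of length 2 * radius + 1."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def convolve_clamped(signal, kernel):
    """Filter a 1-D signal with a symmetric kernel, clamping indices at the borders."""
    radius = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * signal[j]
        out.append(acc)
    return out

def dog_response(signal, sigma_c=1.0, sigma_s=1.6, rho=0.99):
    """Narrow (center) Gaussian blur minus rho times a wide (surround) blur."""
    radius = int(math.ceil(3 * sigma_s))
    center = convolve_clamped(signal, gaussian_kernel(sigma_c, radius))
    surround = convolve_clamped(signal, gaussian_kernel(sigma_s, radius))
    return [c - rho * s for c, s in zip(center, surround)]

# A dark-to-bright step: the response dips below zero on the dark side of the edge.
profile = [0.0] * 10 + [1.0] * 10
response = dog_response(profile)
edge_index = min(range(len(response)), key=lambda i: response[i])
```

In a full FDoG pipeline a response of this kind would be taken across the local edge direction and then accumulated along the edge flow; that part is deliberately left out of the sketch.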

U-net network: a deep-learning convolutional neural network structure comprising a contracting path that learns deep high-level features and an expanding path that learns positional features, in which the features from the down-sampling path are concatenated with the up-sampled features. It was originally proposed for medical image segmentation and, owing to its simple and effective structure, has since been used for feature extraction in many tasks.

Example one

The embodiment discloses a method for constructing a hierarchical colored drawing cultural relic line draft extraction model, which is implemented according to the following steps:

step 1, collecting a plurality of colored drawing cultural relic images to obtain a first sample set;

performing edge extraction on the line draft image of each colored drawing cultural relic image in the first sample set by adopting an FDoG algorithm to obtain an FDoG label set;

acquiring a line draft image of each colored drawing cultural relic image in a first sample set, and acquiring a first line draft label set;

in this step, the colored drawing cultural relic images may be captured with a camera or another device, or retrieved from a database.

In this embodiment, the acquired colored drawing cultural relic image is shown in fig. 1 (a); edge extraction with the FDoG algorithm on the image of fig. 1 (a) yields the edge label image shown in fig. 1 (b); and the line draft image obtained by hand-drawing the line draft of the image of fig. 1 (a) is shown in fig. 1 (c).

Step 2, taking the first sample set as input, taking the FDoG label set and the first line draft label set as reference output, training an edge extraction network, and obtaining a coarse extraction model;

the edge extraction network is obtained by training a BDCN edge detection network by taking an edge data set as input and an edge label set as reference output;

the edge data set comprises a plurality of natural images, and the edge label set comprises an edge label image corresponding to each natural image in the edge data set and an FDoG edge label image obtained after edge extraction of each natural image in the edge data set;

in this step, the edge label image corresponding to each natural image is the ground-truth edge image of that natural image, which may be drawn manually; the FDoG edge label image corresponding to each natural image is obtained by edge extraction with the FDoG algorithm. The two kinds of labels are combined with the natural images to form a pre-training set for the coarse extraction network in which each group contains three images. In this step, the natural images are not colored drawing cultural relic images.

In this step, the existing BDCN edge detection network serves as the basis of the coarse extraction model. The BDCN edge detection network takes VGG16 as its basic structure; features of different scales are obtained at each layer through multiple dilated convolutions and down-sampling, and the multi-scale information at different depths is finally fused.

In this embodiment, the BDCN edge detection network is the network structure for the edge detection task proposed in "Bi-Directional Cascade Network for Perceptual Edge Detection".

In this embodiment, to address the shortage of cultural relic data, several groups of public edge detection data are obtained to pre-train the coarse extraction network. The public BSDS500 data set is used, in which each group includes a natural image $X_{N}$ and a corresponding edge label $Y_{N}^{edge}$; the edge label contains a large amount of edge information but lacks detail. The traditional FDoG edge detection algorithm is used to produce a corresponding label $Y_{N}^{FDoG}$, which contains relatively rich detail information together with some noise. The natural image data is illustrated in fig. 2 (a), the corresponding edge label in fig. 2 (b), and the edge label obtained by applying the FDoG algorithm to fig. 2 (a) in fig. 2 (c). The natural image data $X_{N}$ is input into the BDCN edge detection network for training, and a multi-scale fusion feature $P_{N}$ carrying both edge and detail information is learned. The edge extraction network is obtained once training is complete, and the network model has then learned both the edge and the detail information of the natural image style;

in this step, the FDoG edge label image is used as a label to retrain the edge detection model pre-trained by BDCN, making full use of the network's strong edge detection capability. The trained edge extraction network combines the strength of the deep convolutional network in extracting edge features with the strength of the traditional algorithm in extracting detail features, so that its extraction results are more accurate;

when training the BDCN edge detection network, the loss function is optimized by a stochastic gradient descent algorithm until convergence.

Optionally, in step 2, when the BDCN edge detection network is trained with the edge data set and the edge label set, the loss function $L_{pre}$ is:

$$L_{pre} = \sum_{s=1}^{S} \Big[ \alpha\, L\big(P_{N}^{s}, Y_{N}^{edge}\big) + \beta\, L\big(P_{N}^{s}, Y_{N}^{FDoG}\big) \Big]$$

where $s$ denotes the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $\alpha$ is the weight of the edge label in the objective function and $\beta$ is the weight of the FDoG edge label; $P_{N}$ represents the output image after the natural image $N$ is input to the BDCN edge detection network, with $P_{N}^{s}$ its output at scale $s$; $Y_{N}^{edge}$ represents the edge label image corresponding to the natural image $N$, and $Y_{N}^{FDoG}$ represents the FDoG edge label image corresponding to the natural image $N$.

In this embodiment, α is the weight for learning the edge detection label in the objective function and β is the weight for learning the FDoG label; together they balance the contributions of the edge label and the detail label and directly affect the features the network finally learns. Here α = 0.9 and β = 0.1, and $s$ denotes the scale;

the function $L$ is a cross-entropy loss function defined as:

$$L(\hat{Y}, Y) = -\mu \sum_{j \in Y_{-}} \log\big(1 - \hat{y}_{j}\big) - \nu \sum_{j \in Y_{+}} \log \hat{y}_{j}$$

wherein $\hat{Y}$ denotes the predicted map and $\hat{y}_{j}$ each normalized pixel in the predicted map; $\mu = \lambda \cdot |Y_{+}| / (|Y_{+}| + |Y_{-}|)$ and $\nu = |Y_{-}| / (|Y_{+}| + |Y_{-}|)$ balance edge and non-edge pixels; $Y_{+}$ denotes the positive samples, $Y_{-}$ denotes the negative samples, and $\lambda$ controls the relative weight of the positive and negative samples.
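The class-balanced cross entropy and the weighted pre-training objective can be sketched in plain Python as follows. This is an illustration under stated assumptions, not the implementation used in the embodiment: labels are assumed to be binary, the objective is assumed to sum over scales an α-weighted edge-label term and a β-weighted FDoG-label term, the default `lam = 1.1` is an assumed value (the text does not state λ), and all function names are hypothetical.

```python
import math

def balanced_cross_entropy(pred, label, lam=1.1, eps=1e-12):
    """Class-balanced cross entropy between a prediction map and a binary label.

    pred  : flat list of probabilities in (0, 1)
    label : flat list of 0/1 values (1 = edge pixel)
    lam   : lambda, the positive/negative balance factor (assumed value)
    """
    n_pos = sum(1 for y in label if y == 1)
    n_neg = len(label) - n_pos
    mu = lam * n_pos / (n_pos + n_neg)   # weight on the non-edge (negative) term
    nu = n_neg / (n_pos + n_neg)         # weight on the edge (positive) term
    loss = 0.0
    for p, y in zip(pred, label):
        if y == 1:
            loss -= nu * math.log(p + eps)
        else:
            loss -= mu * math.log(1.0 - p + eps)
    return loss

def pretrain_loss(side_preds, edge_label, fdog_label, alpha=0.9, beta=0.1):
    """Sum over scales of alpha * L(P_s, edge label) + beta * L(P_s, FDoG label)."""
    total = 0.0
    for pred in side_preds:
        total += alpha * balanced_cross_entropy(pred, edge_label)
        total += beta * balanced_cross_entropy(pred, fdog_label)
    return total

# Toy example: one edge pixel among four, two scales, slightly different labels.
edge_label = [1, 0, 0, 0]
fdog_label = [1, 1, 0, 0]
good = [0.9, 0.1, 0.1, 0.1]
bad = [0.1, 0.9, 0.9, 0.9]
loss_good = pretrain_loss([good, good], edge_label, fdog_label)
loss_bad = pretrain_loss([bad, bad], edge_label, fdog_label)
```

Because edge pixels are rare, `nu` is close to 1 and `mu` is small, so a missed edge pixel costs far more than a false positive on the background; with α = 0.9 the human edge label dominates and the FDoG label contributes detail.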

In this step, the edge extraction network is trained with the first sample set as input and the FDoG label set and the first line draft label set as reference output, finally yielding the coarse extraction model. The purpose of this choice of input and reference output is to let the edge extraction network learn a line draft feature $P_{R}$ that carries the style characteristics of colored drawing cultural relics and contains both edge and detail information.

In this embodiment, stochastic gradient descent is likewise used when training the edge extraction network to obtain a converged coarse extraction model; the loss function at this stage has the same form as $L_{pre}$, with the colored drawing cultural relic image, its line draft label and its FDoG label taking the places of the natural image, the edge label and the FDoG edge label.

The resulting model is trained on colored drawing cultural relic data and can extract information with the style characteristics of colored drawing cultural relics, while retaining the strong edge detection performance obtained from training on a large amount of natural image data; it therefore extracts rich edges and details and supplements lines partially broken by damage. However, because of the complexity of the cultural relic background, the traditional FDoG algorithm cannot effectively suppress disease, so the coarsely extracted line draft contains some disease-induced noise.

Step 3, collecting a plurality of color-painted cultural relic images to obtain a second sample set; wherein each painted cultural relic image in the second sample set is different from the painted cultural relic image in the first sample set;

acquiring a line draft image of each colored drawing cultural relic image in a second sample set, and acquiring a second line draft label set;

in this step, images different from the colored drawing cultural relic images acquired in step 1 are used.

Other groups of colored drawing cultural relic data are acquired, each group comprising a colored drawing cultural relic image $X_{R}$ and a line draft label $Y_{R}$ of the colored drawing cultural relic made by an expert, as a test set for the coarse extraction network.

Step 4, inputting the second sample set obtained in step 3 into the coarse extraction model obtained in step 2 to obtain a coarse line draft image set;

in this step, the model obtained in step 2 is run on the colored drawing cultural relic data of the test set obtained in step 3, yielding several groups of coarse line draft image pairs, each group comprising one coarsely extracted line draft image and the label image $Y_{R}$ made by an expert.

Step 5, taking the coarse line draft image set as input and the second line draft label set as reference output, training a convolutional neural network to obtain a fine line draft extraction model;

in this step, the coarsely extracted line draft images output by step 4 form the coarse line draft image set used as input.

In this embodiment, the convolutional neural network is based on the U-Net network but differs from the prior-art U-Net network in that the features of each decoder layer are up-sampled to the original image size to form feature maps of different scales, and these feature maps are then fused into a fused feature map.

Optionally, the convolutional neural network in step 5 includes a U-net network, a side output layer, and a fusion output layer that are sequentially arranged;

the side output layer comprises a first convolution layer, an up-sampling layer and a first activation function layer which are sequentially arranged; the fusion output layer comprises a second convolution layer and a second activation function layer which are sequentially arranged.

In this embodiment, several up-sampling layers of the U-net network are each connected to a side output layer, within which the first convolution layer is connected to the up-sampling layer and the up-sampling layer is connected to the first activation function layer. The output of the up-sampling layer is also concatenated along the channel dimension with the U-net output layer and then passed to the fusion output layer, within which the second convolution layer is connected to the second activation function layer.

As shown in fig. 3, the U-net network mainly consists of max pooling (down-sampling) modules, deconvolution (up-sampling) modules and ReLU nonlinear activation functions. The overall network process is as follows:

the max pooling modules perform down-sampling; as shown in fig. 3, each max pooling module includes two convolution blocks and one max pooling layer, and each convolution block includes a 3 × 3 convolution layer, a normalization layer and an activation function layer connected in sequence;

in this embodiment, four max pooling modules are provided, and two 3 × 3 convolution blocks follow the fourth max pooling.

The deconvolution (up-sampling) modules perform up-sampling; each up-sampling module includes two convolution blocks and an up-sampling layer, and each convolution block includes a 3 × 3 convolution layer, a normalization layer and an activation function layer connected in sequence;

in this embodiment, four up-sampling modules are provided correspondingly.
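As a worked illustration of the four down-sampling and four up-sampling stages, the short sketch below tracks the feature map size through the network. It assumes that each max pooling halves and each up-sampling doubles both spatial dimensions, which the text does not state explicitly; the function name is hypothetical, and the 640 × 640 input is simply one of the image sizes used in the experiments.

```python
def unet_resolutions(height, width, depth=4):
    """Track feature-map sizes through `depth` poolings and `depth` up-samplings.

    Assumes each max pooling halves and each up-sampling doubles both
    dimensions, and that the input size is divisible by 2 ** depth.
    """
    if height % (2 ** depth) or width % (2 ** depth):
        raise ValueError("input size must be divisible by 2 ** depth")
    encoder = [(height, width)]
    for _ in range(depth):
        h, w = encoder[-1]
        encoder.append((h // 2, w // 2))
    decoder = []
    h, w = encoder[-1]
    for _ in range(depth):
        h, w = h * 2, w * 2
        decoder.append((h, w))
    return encoder, decoder

encoder_sizes, decoder_sizes = unet_resolutions(640, 640)
# Factor needed to bring each decoder stage back to the input size.
upsample_factors = [640 // h for h, _ in decoder_sizes]
```

Under these assumptions the deepest features sit at 1/16 of the input size, so a feature taken there needs a 16-fold up-sampling to reach the original image size, and the later decoder stages need 8, 4, 2 and 1 respectively.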

In this embodiment, an output 1 × 1 convolution layer and an activation function layer are connected in sequence after the last up-sampling module.

In this embodiment, as shown in fig. 3, the first convolution layer applies a 1 × 1 convolution to the output of the second stand-alone 3 × 3 convolution block and to the outputs of the first three up-sampling modules in the U-net network, giving four convolved features; the up-sampling layer of the side output layer up-samples these four features, and the four up-sampled features are input to the first activation function layer, from which 4 single-channel loss values are obtained. The output of the 1 × 1 convolution layer in the U-net output layer is input to its activation function layer, from which 1 further single-channel loss value is obtained. These 5 single-channel losses correspond to the side outputs of the multi-scale U-Net at 5 scales;

in addition, the four up-sampled features are concatenated with the output feature of the output 1 × 1 convolution layer of the U-net network and input together into the second convolution layer for a 1 × 1 convolution, giving a fusion feature. This feature is input to the second activation function layer, from which the fusion loss value is obtained, and the multi-scale fusion output is finally produced; this fusion output is the finely extracted line draft.
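The side-output and fusion mechanics described above can be illustrated with a small pure-Python sketch. It uses three toy scales on a 4 × 4 image instead of the five scales of the embodiment, and it assumes nearest-neighbor up-sampling and a sigmoid activation, neither of which is specified in the text; the helper names and the equal fusion weights are likewise illustrative.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def upsample_nearest(fmap, factor):
    """Nearest-neighbor up-sampling of a 2-D map (list of rows) by an integer factor."""
    out = []
    for row in fmap:
        wide = [v for v in row for _ in range(factor)]
        out.extend([list(wide) for _ in range(factor)])
    return out

def fuse(maps, weights, bias=0.0):
    """1x1 convolution over stacked single-channel maps: a per-pixel weighted sum."""
    height, width = len(maps[0]), len(maps[0][0])
    return [[sum(w * m[i][j] for w, m in zip(weights, maps)) + bias
             for j in range(width)] for i in range(height)]

def apply_sigmoid(fmap):
    return [[sigmoid(v) for v in row] for row in fmap]

# Toy single-channel logits at 1/4, 1/2 and full resolution of a 4x4 image,
# each paired with the factor that restores the original size.
side_logits = [
    ([[2.0]], 4),
    ([[1.0, -1.0], [-1.0, 1.0]], 2),
    ([[0.5] * 4 for _ in range(4)], 1),
]
upsampled = [upsample_nearest(m, f) for m, f in side_logits]
side_outputs = [apply_sigmoid(m) for m in upsampled]          # one map per scale
fused_output = apply_sigmoid(fuse(upsampled, [1 / 3] * 3))    # fused prediction
```

Each entry of `side_outputs` would receive its own single-channel loss, while `fused_output` would receive the fusion loss; in a trained network the fusion weights are learned rather than fixed.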

Step 6, obtaining a line draft extraction model, wherein the line draft extraction model comprises the coarse extraction model obtained in step 2 and the fine line draft extraction model obtained in step 5, connected in sequence.
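The sequential connection of the two models can be sketched as simple function composition. The two toy functions below are placeholders standing in for the trained networks and are purely hypothetical; only the chaining itself reflects the step above.

```python
from typing import Callable, List

Image = List[List[float]]

def build_line_draft_extractor(coarse_model: Callable[[Image], Image],
                               fine_model: Callable[[Image], Image]
                               ) -> Callable[[Image], Image]:
    """Chain the coarse model and the fine model into a single extractor."""
    def extract(relic_image: Image) -> Image:
        coarse_line_draft = coarse_model(relic_image)  # model from step 2
        return fine_model(coarse_line_draft)           # model from step 5
    return extract

# Placeholder stand-ins for the trained networks (hypothetical, illustration only).
def toy_coarse(img: Image) -> Image:
    return [[1.0 - v for v in row] for row in img]

def toy_fine(img: Image) -> Image:
    return [[1.0 if v > 0.5 else 0.0 for v in row] for row in img]

extractor = build_line_draft_extractor(toy_coarse, toy_fine)
result = extractor([[0.2, 0.9], [0.6, 0.1]])
```

At inference time only the composed `extractor` is needed: a colored drawing cultural relic image goes in and the finely extracted line draft comes out.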

Optionally, when the convolutional neural network is trained in step 5, a stochastic gradient descent algorithm is used for training, wherein the loss function $L_{fine}$ is:

$$L_{fine} = \sum_{s=1}^{S} \omega_{side}^{s}\, L_{side}\big(\hat{Y}_{R}^{s}, Y_{R}\big) + \omega_{fuse}\, L_{fuse}\big(\hat{Y}_{R}^{fuse}, Y_{R}\big)$$

wherein $\omega_{side}^{s}$ represents the weight in the loss function of the feature at scale $s$, and $\omega_{fuse}$ represents the weight of the fusion feature in the loss function; $s$ denotes the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $L_{side}$ is the single-channel loss function and $L_{fuse}$ is the fusion loss function; $\hat{Y}_{R}$ represents the image output after a coarse line draft image is input into the fine line draft extraction model, the superscript $s$ marking the side output at scale $s$ and the superscript $fuse$ the fused output; $Y_{R}$ represents the line draft label image corresponding to the coarse line draft image, and $R$ denotes the coarse line draft image.

In this embodiment, the single-channel loss function and the fusion loss function are both cross-entropy loss functions.

In the present embodiment, $S = 5$ and $\omega_{fuse} = 1.1$;

when $s = 1$, $\omega_{side} = 0.8$; when $s = 2$, $\omega_{side} = 0.8$; when $s = 3$, $\omega_{side} = 0.4$; when $s = 4$, $\omega_{side} = 0.4$; when $s = 5$, $\omega_{side} = 0.4$.
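With these weights, the fine-extraction loss can be sketched as a weighted sum of five side losses and one fusion loss. The weights are the ones given in this embodiment; the plain summed binary cross entropy, the flat-list image representation and the function names are assumptions made only for illustration.

```python
import math

OMEGA_SIDE = [0.8, 0.8, 0.4, 0.4, 0.4]  # weights for s = 1..5, from the embodiment
OMEGA_FUSE = 1.1                         # weight of the fusion term

def cross_entropy(pred, label, eps=1e-12):
    """Binary cross entropy summed over pixels (the exact variant is assumed)."""
    loss = 0.0
    for p, y in zip(pred, label):
        loss -= y * math.log(p + eps) + (1 - y) * math.log(1.0 - p + eps)
    return loss

def fine_loss(side_preds, fused_pred, label):
    """Weighted sum of the per-scale side losses plus the weighted fusion loss."""
    if len(side_preds) != len(OMEGA_SIDE):
        raise ValueError("expected one side prediction per scale")
    total = sum(w * cross_entropy(p, label)
                for w, p in zip(OMEGA_SIDE, side_preds))
    return total + OMEGA_FUSE * cross_entropy(fused_pred, label)

# Toy example: identical predictions at every scale for a two-pixel label.
label = [1, 0]
pred = [0.8, 0.2]
loss_value = fine_loss([pred] * 5, pred, label)
```

The fusion term carries the largest single weight, which matches its role as the final output, and the first two scales are weighted twice as heavily as the remaining three.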

The method for constructing the colored drawing cultural relic line draft extraction model provided in this embodiment first restores the complete edge and detail information of the line draft, supplements broken lines affected by disease, and extracts the line draft coarsely. It then suppresses disease on the basis of the coarse extraction and removes the noise the disease introduces, giving a finer and cleaner line draft. The two steps complement each other and combine the advantages of the BDCN edge detection network and the U-Net network, so that the extracted cultural relic line draft is more comprehensive and accurate. The embodiment provides a new weighted loss function that introduces the traditional FDoG method into the BDCN edge detection neural network and combines the two to perform transfer learning from the edge detection task to the colored drawing cultural relic line draft extraction task; this combines the strength of the deep convolutional network in extracting edge features with the strength of the traditional algorithm in extracting detail features, so that the coarsely extracted line draft is more complete and even lines partially broken by disease can be supplemented. For fine extraction, a new multi-scale U-Net network is provided that up-samples the features of each decoder layer, which lie at different scales, to the size of the original image and fuses them, effectively suppressing disease noise that the traditional algorithm cannot remove and further refining and restoring the line draft of the colored drawing cultural relic.

Example two

In this embodiment, a method for extracting line draft of a layered colored drawing cultural relic is provided, in which a to-be-extracted colored drawing cultural relic image is input into a line draft extraction model obtained by the method for constructing a layered colored drawing cultural relic line draft extraction model in the first embodiment, so as to obtain a colored drawing cultural relic line draft image.

In the embodiment, two types of line draft images of the colored drawing cultural relics are adopted to verify the effectiveness of the line draft extraction method provided by the invention; the method comprises the following steps of cleaning a colored drawing cultural relic image with a background, wherein the colored drawing cultural relic image has less disease distribution such as falling, cracks, stains and the like; colored drawing cultural relic images with complex backgrounds, which have a certain degree of cracks, shedding and noise under the influence of diseases. The clean background colored drawing cultural relics image adopted in the experiment is a copy Dunhuang wall painting image, the complex background colored drawing image is a Tang nationality prince tomb horse racing image and a Tang Wei prince tomb horse preparation image, and the sizes of the images are 480 multiplied by 1264, 180 multiplied by 336, 640 multiplied by 640 and 800 multiplied by 736 respectively.

In order to better evaluate the effectiveness of the line draft extraction method, the invention provides two experimental types, which are respectively as follows: ablation experiments for the algorithm of the present invention and comparison experiments with existing edge detection algorithms. The ablation experiment mainly verifies the effectiveness of the hierarchical extraction process, the comparison experiment is mainly compared with several existing Edge detection algorithms, including traditional Edge detection algorithms Canny, FDoG, edge-Box and the like, and meanwhile, the comparison experiment is also performed with Edge detection algorithms based on deep learning, such as HED, RCF and BDCN.

In the experiment, the PyTorch software package is used to train the networks. In the first step, coarse extraction network training, 9000 iterations are performed when training on natural image pairs, because a pre-trained edge detection model is loaded; when training on cultural relic image data, about 10500 iterations are performed and the batch size is set to 10; for the stochastic gradient descent algorithm, the initial learning rate is 1e-6, the weight decay is set to 0.0002 and the momentum to 0.9. In the second step, fine extraction network training, approximately 20000 iterations are performed and the batch size is set to 10; for the stochastic gradient descent algorithm, the initial learning rate is 1e-4, the weight decay is set to 0.0002 and the momentum to 0.9.
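The training settings listed above can be collected in a small configuration sketch. The class name `SGDStage`, the stage names and the helper `optimizer_kwargs` are illustrative assumptions and do not come from the original; only the numeric values are taken from the text. The dictionary keys are chosen to match the keyword arguments of `torch.optim.SGD`, so the sketch itself does not need PyTorch to run.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SGDStage:
    """Hyperparameters of one training stage (values from the experiment text)."""
    name: str            # hypothetical label, used only for readability
    iterations: int
    batch_size: int
    lr: float            # initial learning rate
    weight_decay: float = 0.0002
    momentum: float = 0.9

    def optimizer_kwargs(self):
        # Keys follow the keyword names of torch.optim.SGD.
        return {
            "lr": self.lr,
            "momentum": self.momentum,
            "weight_decay": self.weight_decay,
        }


# Natural-image training of the coarse network: only the iteration count is stated.
NATURAL_IMAGE_ITERATIONS = 9000

# Coarse extraction network trained on cultural relic data (about 10500 iterations).
COARSE_RELIC = SGDStage("coarse_relic", iterations=10500, batch_size=10, lr=1e-6)

# Fine extraction network (approximately 20000 iterations).
FINE = SGDStage("fine", iterations=20000, batch_size=10, lr=1e-4)
```

With PyTorch installed, a stage could be applied as `torch.optim.SGD(model.parameters(), **FINE.optimizer_kwargs())`, where `model` stands for whichever network is being trained.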

Ablation experiment analysis:

Fig. 4 shows the results of the two-step line draft extraction of colored drawing cultural relics in the ablation experiment: Fig. 4 (a) is the colored drawing cultural relic image used in the test; Fig. 4 (b) is the coarsely extracted line draft; Fig. 4 (c) is the final finely extracted line draft; Fig. 4 (d) is the ground-truth line draft label drawn by an expert. Visual comparison of the coarse and fine extraction results shows that the line draft extracted by the coarse extraction network contains more complete detail and edge information and reconnects some lines broken by disease damage, but cannot effectively suppress the noise introduced by the diseases; the line draft extracted by the fine extraction network removes most of the noise and further refines the lines.

Fig. 5 shows the results of line draft extraction of colored drawing cultural relics by the BDCN and U-Net networks respectively in the ablation experiment: Fig. 5 (a) is the colored drawing cultural relic image used in the test; Fig. 5 (b) is the line draft obtained by training only the BDCN network with colored drawing cultural relic data pairs; Fig. 5 (c) is the line draft obtained by training only the U-Net network with colored drawing cultural relic data pairs; Fig. 5 (d) is the result of hierarchical line draft extraction by the method of the invention. The comparison shows that the BDCN network, trained from a transferred edge detection model, extracts image edge information well but produces artifacts and blurring; the U-Net network produces no pixel blurring or artifacts, but loses information severely because it has no pre-trained edge detection model. The method of the invention first exploits the transferability of the BDCN network and combines it with the FDoG algorithm to extract more complete detail and edge information, then uses this result as prior knowledge for training the U-Net, which resolves the blurring and artifacts of BDCN and makes better use of the U-Net to extract a fine line draft; the final extraction result is superior to the former two in both the completeness of the line draft and the fidelity to the original style.

Comparison experiment analysis with classical edge detection algorithms:

Fig. 6 compares the results with those of existing classical edge detection algorithms: Fig. 6 (a) is the line draft extracted by the flow-based difference of Gaussians algorithm FDoG; Fig. 6 (b) is the line draft extracted by the Edge-Box edge detection algorithm; Fig. 6 (c) is the line draft extracted by the RCF network; Fig. 6 (d) is the line draft extracted by the BDCN network; Fig. 6 (e) is the line draft extracted by the algorithm of the invention. Visual observation shows that the line draft extracted by the FDoG algorithm contains more details, but noise caused by diseases such as cracks and flaking is obvious; the result of the Edge-Box algorithm is better than FDoG in noise removal, but is unclear, low in resolution and incomplete in details; the results of the RCF and BDCN edge detection networks are better in denoising and contour localization, but suffer from serious loss of details, thicker lines and artifacts; the result of the algorithm of the invention is superior to these algorithms in both noise removal and detail expression, resolves the thick lines and artifacts produced by the edge detection networks, and the extracted line draft image clearly restores the original artistic characteristics of the colored drawing cultural relic.

Visual comparison gives an intuitive impression of the line draft extraction results; in addition, objective evaluation indexes are used to evaluate the extraction results. The invention adopts three objective evaluation indexes, RMSE, SSIM and AP, to evaluate the images comprehensively: RMSE is the root mean square error, which measures the deviation between an observed value and the true value; SSIM is the structural similarity index, which evaluates the structural similarity of images in three respects, namely luminance, contrast and structure; AP is the average precision, which measures the accuracy and completeness of a classification result and is a common objective index for pixel-level classification tasks.
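As an illustration of two of these indexes, the following minimal sketch computes RMSE and a simplified SSIM on flattened grayscale pixel sequences. It is not the evaluation code used in the experiment. The function names are hypothetical, and the SSIM variant here is a single-window (global) form that uses the commonly used constants C1 = (0.01 L)^2 and C2 = (0.03 L)^2, whereas SSIM is usually averaged over local sliding windows.

```python
import math


def rmse(pred, target):
    """Root mean square error between two equal-length pixel sequences."""
    if len(pred) != len(target) or len(pred) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = sum((p - t) ** 2 for p, t in zip(pred, target))
    return math.sqrt(squared / len(pred))


def ssim_global(x, y, dynamic_range=255.0):
    """Single-window SSIM: luminance, contrast and structure over the whole image.

    Assumption: one global window and population (1/n) statistics.
    """
    n = len(x)
    if n != len(y) or n == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    numerator = (2 * mean_x * mean_y + c1) * (2 * cov_xy + c2)
    denominator = (mean_x ** 2 + mean_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

A lower RMSE and a higher SSIM both indicate that the extracted line draft is closer to the label; an image compared with itself gives an RMSE of 0 and an SSIM of 1.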

Table 1 compares the objective indexes of the line draft results extracted by the algorithm of the invention and by several classical edge detection algorithms. The first part is a comparison with classical traditional edge detection algorithms; the second part is a comparison with deep-learning-based edge detection algorithms of recent years, wherein the mark x denotes a result obtained by training with colored drawing cultural relic data pairs; the third part compares the traditional U-Net network, used as the line draft fine extraction network, with the multi-scale U-Net network of the invention. Most indexes of the method are superior to those of the other methods. In particular, the AP value of the method is far higher than that of the other methods, that is, the method greatly improves the completeness and accuracy of line draft extraction; the RMSE and SSIM values are also generally better than those of the other methods, which shows that the result of the method is structurally closer to the label and has a smaller error.

By integrating visual evaluation and objective index evaluation, the algorithm provided by the invention can well extract the line draft of the colored drawing cultural relic and more completely express the artistic characteristics of the colored drawing cultural relic.

TABLE 1 Objective index of extraction result of line draft of color-painted cultural relics

EXAMPLE III

The device comprises a first data set construction module, a coarse extraction model obtaining module, a second data set construction module, a thick line draft extraction module, a fine extraction model obtaining module and a line draft extraction model obtaining module;

the first data set construction module is used for acquiring a plurality of colored drawing cultural relic images to obtain a first sample set;

performing edge extraction on the line manuscript image of each colored drawing cultural relic image in the first sample set by adopting an FDoG algorithm to obtain an FDoG label set;

acquiring a line draft image of each colored drawing cultural relic image in a first sample set, and acquiring a first line draft label set;

the coarse extraction model obtaining module is used for taking the first sample set as input, taking the FDoG label set and the first line draft label set as reference output, training an edge extraction network and obtaining a coarse extraction model;

the edge extraction network is obtained by training a BDCN edge detection network by using an edge data set as input and an edge label set as reference output;

the edge data set comprises a plurality of natural images, and the edge tag set comprises an edge tag image corresponding to each natural image in the edge data set and an FDoG edge tag image obtained after edge extraction of each natural image in the edge data set;

the second data set construction module is used for acquiring a plurality of color-drawing cultural relic images to obtain a second sample set; wherein each painted cultural relic image in the second sample set is different from the painted cultural relic image in the first sample set;

acquiring a line draft image of each colored drawing cultural relic image in a second sample set, and acquiring a second line draft label set;

the thick line draft extraction module is used for inputting the obtained second sample set into the coarse extraction model to obtain a thick line draft image set;

the fine extraction model obtaining module is used for taking the thick line draft image set as input, taking the second line draft label set as reference output, training a convolutional neural network and obtaining a fine line draft extraction model;

the line draft extraction model obtaining module is used for obtaining a line draft extraction model, and the line draft extraction model comprises the coarse extraction model and the fine line draft extraction model which are sequentially connected.
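The sequential connection of the two models can be sketched as a simple composition of two stages. The names `build_line_draft_extractor`, `toy_coarse` and `toy_fine` are hypothetical, and the two toy stages are placeholders standing in for the trained networks; they only demonstrate the order in which the stages are applied.

```python
from typing import Callable, List

Image = List[List[float]]          # a grayscale image as rows of pixel values
Stage = Callable[[Image], Image]   # any model mapping an image to an image


def build_line_draft_extractor(coarse: Stage, fine: Stage) -> Stage:
    """Connect a coarse extraction model and a fine extraction model in sequence."""
    def extract(image: Image) -> Image:
        thick_line_draft = coarse(image)   # first stage: coarse (thick) line draft
        return fine(thick_line_draft)      # second stage: refined line draft
    return extract


# Toy stand-ins for the trained models (assumptions, for illustration only).
def toy_coarse(image: Image) -> Image:
    return [[value * 2 for value in row] for row in image]


def toy_fine(image: Image) -> Image:
    return [[value + 1 for value in row] for row in image]


extractor = build_line_draft_extractor(toy_coarse, toy_fine)
result = extractor([[1.0, 2.0], [3.0, 4.0]])
```

Keeping each stage behind the same image-to-image interface lets the coarse model be trained and frozen first, after which its outputs serve as the inputs for training the fine model.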

Optionally, the convolutional neural network in the fine extraction model obtaining module includes a U-Net network, a side output layer, and a fusion output layer, which are sequentially arranged;

the side output layer comprises a first convolution layer, an up-sampling layer and a first activation function layer which are sequentially arranged; the fusion output layer comprises a second convolution layer and a second activation function layer which are sequentially arranged.

Optionally, when the convolutional neural network is trained in the fine extraction model obtaining module, the convolutional neural network is trained by using a stochastic gradient descent algorithm, wherein the loss function $L_{fine}$ is:

$$L_{fine} = \sum_{s=1}^{S} \omega_{side}^{s} L_{side}^{s} + \omega_{fuse} L_{fuse}$$

wherein $\omega_{side}^{s}$ represents the weight of the feature at scale $s$ in the loss function, and $\omega_{fuse}$ represents the weight of the fusion feature in the loss function; $s$ represents the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $L_{side}^{s}$ is the single-channel loss function at scale $s$, and $L_{fuse}$ is the fusion loss function; $Y_R$ represents the image output after the thick line draft image is input into the fine line draft extraction model, the label is the line draft label image corresponding to the thick line draft image, and $R$ represents the thick line draft image.

Optionally, when the BDCN edge detection network is trained by using the edge data set and the edge label set in the coarse extraction model obtaining module, the loss function $L_{pre}$ is:

wherein $s$ represents the scale, $s = 1, 2, \ldots, S$, and $S$ is a positive integer; $\alpha$ is the weight of the edge label in the objective function, and $\beta$ is the weight of the FDoG edge label; $P_N$ represents the output image after the natural image $N$ is input to the BDCN edge detection network; the two label terms are the edge label image corresponding to the natural image $N$ and the FDoG edge label image corresponding to the natural image $N$.

Optionally, $S = 5$ and $\omega_{fuse} = 1.1$;

when $s = 1$, $\omega_{side} = 0.8$; when $s = 2$, $\omega_{side} = 0.8$; when $s = 3$, $\omega_{side} = 0.4$; when $s = 4$, $\omega_{side} = 0.4$; when $s = 5$, $\omega_{side} = 0.4$.
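The following minimal sketch shows how these weights could enter the fine extraction loss. It assumes that the loss is a weighted sum of one loss term per scale plus one fusion term, as the weight definitions above suggest. The function name `fine_loss` and the placeholder loss values are hypothetical; the per-scale and fusion loss functions themselves are not specified here and are passed in as already computed numbers.

```python
# Weights stated in the text: one side weight per scale s = 1..5, one fusion weight.
OMEGA_SIDE = {1: 0.8, 2: 0.8, 3: 0.4, 4: 0.4, 5: 0.4}
OMEGA_FUSE = 1.1


def fine_loss(side_losses, fuse_loss, omega_side=OMEGA_SIDE, omega_fuse=OMEGA_FUSE):
    """Weighted sum of per-scale side losses and the fusion loss (assumed form).

    side_losses maps each scale s to its already computed loss value.
    """
    if set(side_losses) != set(omega_side):
        raise ValueError("side_losses must provide exactly one value per scale")
    weighted_side = sum(omega_side[s] * side_losses[s] for s in sorted(omega_side))
    return weighted_side + omega_fuse * fuse_loss


# Placeholder loss values, for illustration only.
example = fine_loss({1: 0.5, 2: 0.5, 3: 1.0, 4: 1.0, 5: 1.0}, fuse_loss=2.0)
```

With these weights the two finest scales contribute twice as much per unit of loss as the three coarser scales, and the fused output carries the largest single weight.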

EXAMPLE IV

In this embodiment, a device for extracting line draft of a layered colored drawing cultural relic is provided, which is used to input an image of a colored drawing cultural relic to be extracted into a line draft extraction model obtained by a device for constructing a line draft extraction model of a layered colored drawing cultural relic in the third embodiment, so as to obtain a line draft image of the colored drawing cultural relic.

Through the description of the above embodiments, those skilled in the art will clearly understand that the present invention may be implemented by software plus necessary general hardware, and certainly may also be implemented by hardware, but in many cases, the former is a better embodiment. Based on such understanding, the technical solutions of the present invention may be substantially implemented or a part of the technical solutions contributing to the prior art may be embodied in the form of a software product, which is stored in a readable storage medium, such as a floppy disk, a hard disk, or an optical disk of a computer, and includes several instructions for enabling a computer device (which may be a personal computer, a server, or a network device) to execute the methods according to the embodiments of the present invention.