CN110942040B - Gesture recognition system and method based on ambient light - Google Patents

Gesture recognition system and method based on ambient light

- Publication number: CN110942040B
- Application number: CN201911203896.2A
- Authority: CN (China)
- Prior art keywords: signal, gesture recognition, data, photoelectric, photoelectric receivers
- Legal status: Active (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V40/00—Recognition of biometric, human-related or animal-related patterns in image or video data

- G06V40/10—Human or animal bodies, e.g. vehicle occupants or pedestrians; Body parts, e.g. hands

- G06V40/107—Static hand or arm

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/045—Combinations of networks

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/04—Architecture, e.g. interconnection topology

- G06N3/048—Activation functions

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06N—COMPUTING ARRANGEMENTS BASED ON SPECIFIC COMPUTATIONAL MODELS

- G06N3/00—Computing arrangements based on biological models

- G06N3/02—Neural networks

- G06N3/08—Learning methods

- G06N3/084—Backpropagation, e.g. using gradient descent

Landscapes

- Engineering & Computer Science (AREA)

- Physics & Mathematics (AREA)

- Theoretical Computer Science (AREA)

- General Physics & Mathematics (AREA)

- General Health & Medical Sciences (AREA)

- General Engineering & Computer Science (AREA)

- Biophysics (AREA)

- Computational Linguistics (AREA)

- Data Mining & Analysis (AREA)

- Evolutionary Computation (AREA)

- Artificial Intelligence (AREA)

- Molecular Biology (AREA)

- Computing Systems (AREA)

- Biomedical Technology (AREA)

- Life Sciences & Earth Sciences (AREA)

- Mathematical Physics (AREA)

- Software Systems (AREA)

- Health & Medical Sciences (AREA)

- Human Computer Interaction (AREA)

- Multimedia (AREA)

- User Interface Of Digital Computer (AREA)

- Image Analysis (AREA)

Abstract

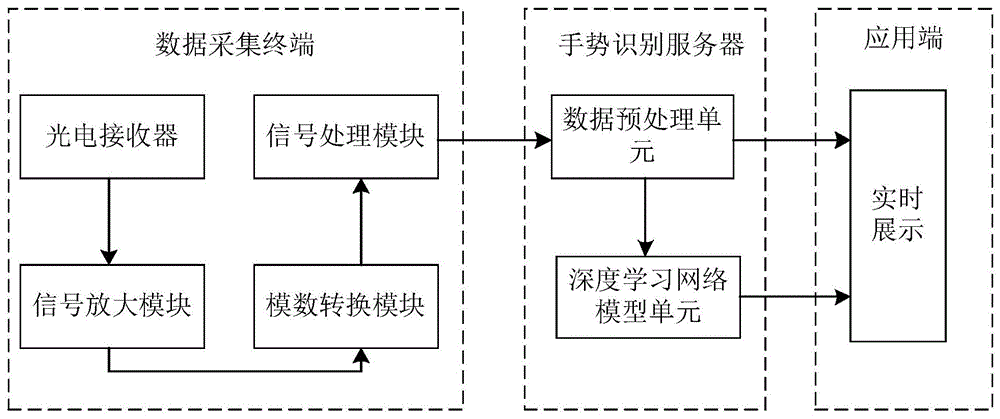

The present invention relates to the technical field of gesture recognition and aims to provide a low-cost, high-accuracy gesture recognition system and method based on ambient light that removes the light-source requirements of comparable systems and therefore supports a wider range of usage scenarios. The technical solution comprises a data acquisition terminal, a gesture recognition server, and an application end. The data acquisition terminal includes multiple photoelectric receivers, a signal amplification module, an analog-to-digital conversion module, and a signal processing module. The photoelectric receivers capture the changes in the light signal produced by gestures. The output of each photoelectric receiver is connected to the input of the signal amplification module, the output of the signal amplification module is connected to the input of the analog-to-digital conversion module, and the output of the analog-to-digital conversion module is connected to the input of the signal processing module. The data processed by the signal processing module is transmitted to the gesture recognition server for recognition; the gesture recognition server outputs the recognition information and sends it to the application end, which displays it in real time.

Description

Technical Field

The present invention relates to the technical field of gesture recognition, and in particular to a gesture recognition system and method based on ambient light.

Background

The spread of smart devices and continuing innovation in human-computer interaction reinforce each other. Traditional smart homes and smart buildings typically use Internet of Things technology to network smart devices, and users interact with them by sending commands from a smart terminal. This traditional mode of interaction is gradually giving way to natural interaction, which has a lower learning cost. Smart speakers such as Tmall Genie and device voice assistants such as Siri are already commonplace, and speech recognition, one form of natural interaction, has matured and is widely used. Gesture recognition, by contrast, is still rarely used in daily life, even though products such as Kinect and Leap are on the market.

Common approaches to gesture recognition use images, acoustics, radio frequency, or visible light. Image-based and acoustic approaches require capturing images or audio, which raises security and privacy concerns. Radio-frequency approaches require relatively complex techniques at both the transmitter and the receiver and are also susceptible to interference from electromagnetic fields. Ambient light, in contrast, has a wide spectrum, is almost ubiquitous, is safe, raises no privacy concerns, and is easy to collect.

In "Reconstructing Hand Poses Using Visible Light", Tianxing Li et al. proposed and built Aili, a method and system for reconstructing hand poses based on LED light. The system uses a modulated LED array as the light source and photodiodes as visible-light sensors to achieve 3D hand-pose reconstruction. However, this system, like many similar systems in the field of visible light communication, has the following drawbacks:

1) Additional modulation equipment must be installed at the light source, which is inconvenient and increases system cost;

2) Because modulation is required, the light source can only be an LED, while LED adoption is in fact not yet high;

3) The system focuses mainly on 3D reconstruction; applying it to gesture recognition would still require additional work.

Summary of the Invention

The purpose of the present invention is to provide a low-cost, high-accuracy gesture recognition system and method based on ambient light that removes the light-source requirements of comparable systems and supports a wider range of usage scenarios.

To achieve this purpose, the technical solution adopted by the present invention is a gesture recognition system based on ambient light, comprising a data acquisition terminal, a gesture recognition server, and an application end. The data acquisition terminal includes multiple photoelectric receivers, a signal amplification module, an analog-to-digital conversion module, and a signal processing module. The photoelectric receivers capture the changes in the light signal produced by gestures, and the multiple photoelectric receivers form a combined arrangement. The outputs of the photoelectric receivers are all connected to the input of the signal amplification module, the output of the signal amplification module is connected to the input of the analog-to-digital conversion module, and the output of the analog-to-digital conversion module is connected to the input of the signal processing module. The data processed by the signal processing module is transmitted to the gesture recognition server for recognition; the gesture recognition server outputs the recognition information and sends it to the application end, which displays it in real time.

Further, the gesture recognition server includes a data preprocessing unit and a deep learning network model unit. The data preprocessing unit decodes the data packets output by the signal processing module and restores them to multi-channel data, and the deep learning network model unit recognizes and classifies the restored multi-channel data. The multi-channel data and the classification result of the deep learning network model unit together form the recognition information output by the gesture recognition server.

Further, the combined arrangement is a rectangular array arrangement, a trapezoidal arrangement, or a dispersed arrangement.

Further, the deep learning network model unit is a gated recurrent unit.

Further, the application end is a web front end.

A gesture recognition method based on ambient light, comprising the following recognition steps:

S1: Multiple photoelectric receivers capture, in real time, the light-signal changes that a gesture produces at different hand positions, and convert each collected light signal into a current signal;

S2: The signal amplification module converts the current signals into voltage signals and amplifies them;

S3: The amplified voltage signals are converted into digital signals by the analog-to-digital conversion module;

S4: The multi-channel digital signals are merged and encoded by the signal processing module, then transmitted to the gesture recognition server;

S5: The data preprocessing unit decodes the received raw data and restores it to multi-channel data;

S6: The restored multi-channel data is fed into the deep learning network model unit, which recognizes and classifies the gesture;

S7: The restored multi-channel data and the classification result are both passed to the application end, which displays the multi-channel data and the recognized gesture in real time.

Further, the gesture recognition method includes a training step before recognition: the gesture recognition server is trained on different gestures using the backpropagation algorithm to build a deep network model.

The beneficial effects of the present invention are chiefly the following:

1. The photoelectric receivers can perform gesture recognition using ambient light alone, so there is no restriction on the light source and the range of application scenarios is wider;

2. No additional modulated light source equipment needs to be installed, which lowers system cost and simplifies use and installation;

3. The photoelectric receivers are highly sensitive and can detect small changes in light intensity, so subtly different movements can be distinguished;

4. Multiple photoelectric receivers form a combined arrangement that yields multi-channel light-sensing data, which improves gesture recognition accuracy while reducing recognition latency.

Brief Description of the Drawings

Fig. 1 is a block diagram of the system structure of the present invention;

Fig. 2 is a block diagram of the recurrent neural network model of the present invention;

Fig. 3 is a schematic diagram of the photoelectric receiver layouts of the present invention;

Fig. 4 is a schematic diagram of the deep learning gesture training framework of the present invention;

Fig. 5 is a schematic diagram of the gestures used for training.

Detailed Description

To help those skilled in the art better understand the technical solution of the present invention, the invention is described in further detail below with reference to the accompanying drawings and specific embodiments.

As shown in Fig. 1, a gesture recognition system based on ambient light comprises a data acquisition terminal, a gesture recognition server, and an application end. The data acquisition terminal includes multiple photoelectric receivers, a signal amplification module, an analog-to-digital conversion module, and a signal processing module;

The photoelectric receivers receive ambient light in any free space and capture the light-signal changes produced by gestures. The multiple photoelectric receivers form a combined arrangement. Each photoelectric receiver converts the captured light signal into a current signal, and the outputs of the photoelectric receivers are all connected to the input of the signal amplification module;

The signal amplification module converts the weak current signal into a voltage signal and then amplifies it. The output of the signal amplification module is connected to the input of the analog-to-digital conversion module;
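As a rough numeric illustration of this current-to-voltage stage, the sketch below models the amplifier as an ideal transimpedance stage followed by a plain voltage gain. The feedback resistance, the gain, and the photocurrent values are assumptions chosen for illustration only; the patent does not specify the amplifier circuit.

```python
def amplify(photocurrent_a: float,
            feedback_ohms: float = 1.0e6,          # assumed transimpedance (V/A)
            voltage_gain: float = 10.0) -> float:  # assumed second-stage gain
    """Convert a photocurrent in amperes to an amplified voltage in volts."""
    # Stage 1: ideal current-to-voltage conversion, V = I * R_f.
    voltage = photocurrent_a * feedback_ohms
    # Stage 2: plain voltage amplification.
    return voltage * voltage_gain


if __name__ == "__main__":
    # Eight hypothetical photocurrents, one per photoelectric receiver.
    currents = [50e-9 + 5e-9 * i for i in range(8)]
    print([round(amplify(i), 3) for i in currents])
```

With these assumed values, a 100 nA photocurrent maps to about 1 V, which is a convenient range for a typical microcontroller ADC.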

The analog-to-digital conversion module converts the analog voltage signal output by the signal amplification module into a digital signal. The output of the analog-to-digital conversion module is connected to the input of the signal processing module, which merges the digital signals output by the analog-to-digital conversion module and encodes them. When the gesture recognition server sends a request to the signal processing module, the signal processing module sends data packets to the gesture recognition server;
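The patent does not define the packet format used when the channels are merged and encoded. The following is a minimal sketch under an assumed layout (a 32-bit sequence number followed by eight unsigned 16-bit samples, little-endian), together with the matching decode that a server-side preprocessing step could apply. The names `encode_packet`, `decode_packet`, and `PACKET_FORMAT` are hypothetical.

```python
import struct
from typing import List, Tuple

NUM_CHANNELS = 8          # the embodiment uses eight photoelectric receivers
PACKET_FORMAT = "<I8H"    # assumed layout: uint32 sequence number + 8 uint16 samples
PACKET_SIZE = struct.calcsize(PACKET_FORMAT)


def encode_packet(seq: int, samples: List[int]) -> bytes:
    """Merge one ADC sample per channel into a single binary packet."""
    if len(samples) != NUM_CHANNELS:
        raise ValueError("expected one sample per channel")
    return struct.pack(PACKET_FORMAT, seq, *samples)


def decode_packet(packet: bytes) -> Tuple[int, List[int]]:
    """Restore the sequence number and the per-channel samples from a packet."""
    seq, *samples = struct.unpack(PACKET_FORMAT, packet)
    return seq, samples


if __name__ == "__main__":
    packet = encode_packet(1, [100, 200, 300, 400, 500, 600, 700, 800])
    print(len(packet), decode_packet(packet))
```

A fixed-size binary layout keeps each packet small and makes the decode a single `struct.unpack` call; a text format such as JSON would work equally well if bandwidth is not a concern.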

The gesture recognition server includes a data preprocessing unit and a deep learning network model unit. In this embodiment, the deep learning network model unit is a gated recurrent unit (GRU). As shown in Fig. 2, h denotes the iteration (hidden state) vector of the neural network, t is the time step, v denotes the input vector, U and W are the parameter matrices of the gated recurrent unit, σ and tanh in the boxes denote the sigmoid and tanh activation functions respectively, g is the output vector of each gate, and the element-wise product operator in the figure denotes element-wise multiplication of vectors. The gated recurrent unit contains two gates: the suffix u denotes the update gate and the suffix r denotes the reset gate. Finally, the state update operation with suffix c computes the iteration vector of the neural network for the next time step;
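For reference, one common formulation of a gated recurrent unit can be written in the notation above as follows. This is the standard textbook form, not a transcription of Fig. 2: the assignment of W to the input and U to the previous state, the omission of bias terms, the symbol \odot for element-wise multiplication, and the convention used in the final interpolation are all assumptions.

```latex
\begin{aligned}
g_u^{(t)} &= \sigma\left(W_u v^{(t)} + U_u h^{(t-1)}\right) \\
g_r^{(t)} &= \sigma\left(W_r v^{(t)} + U_r h^{(t-1)}\right) \\
c^{(t)}   &= \tanh\left(W_c v^{(t)} + U_c \left(g_r^{(t)} \odot h^{(t-1)}\right)\right) \\
h^{(t)}   &= \left(1 - g_u^{(t)}\right) \odot h^{(t-1)} + g_u^{(t)} \odot c^{(t)}
\end{aligned}
```

Here the update gate decides how much of the candidate state replaces the previous state, and the reset gate decides how much of the previous state feeds into the candidate.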

The data preprocessing unit decodes the data packets output by the signal processing module and restores them to multi-channel data. The restored multi-channel data is the input to the deep learning network model unit, which recognizes and classifies it. The gesture classes are shown in Fig. 5 and comprise seven gestures: each of the five fingers drooping naturally, the palm opened naturally, and a clenched fist.

The multi-channel data and the classification result of the deep learning network model unit together form the recognition information output by the gesture recognition server, which sends it to the application end. In this embodiment the application end is a web front end, which can show the user the voltage value of each channel as well as the final recognized gesture and related interactive applications.

In this embodiment, the photoelectric receivers are photodiodes or phototransistors, preferably eight in number. The photoelectric receivers can be arranged on the user's hand in three ways, as shown in Fig. 3. For hand a in Fig. 3, the receivers are arranged in a 2×4 rectangular array with 5 cm between adjacent receivers; the advantages of this arrangement are left-right symmetry and simple deployment;
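To make the rectangular arrangement concrete, the short sketch below generates receiver coordinates for a 2×4 grid. It assumes the 5 cm spacing applies both along rows and between rows, and it places the origin at the first receiver; the function name `rectangular_layout` is hypothetical.

```python
from typing import List, Tuple


def rectangular_layout(rows: int = 2, cols: int = 4,
                       pitch_cm: float = 5.0) -> List[Tuple[float, float]]:
    """Return (x, y) receiver centres in cm, row by row, origin at the first receiver."""
    return [(c * pitch_cm, r * pitch_cm)
            for r in range(rows)
            for c in range(cols)]


if __name__ == "__main__":
    for index, (x, y) in enumerate(rectangular_layout()):
        print(f"receiver {index}: x={x} cm, y={y} cm")
```

Under these assumptions the array spans 15 cm by 5 cm.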

For hand b in Fig. 3, the receivers are arranged in a trapezoid: three receivers on the upper side, placed at the index, middle, and ring fingers, and five on the lower side, evenly spaced horizontally, with 5 cm between the upper and lower rows. Because it is left-right symmetric, this arrangement allows switching between the left and right hand without rearranging the receivers and adapts to different hand shapes. Its aim is to capture as many of the small spatial movement changes of this embodiment's gestures as possible with a limited number of receivers;

For hand c in Fig. 3, the receivers are arranged in a dispersed layout, mounted near the fingertip of each finger and on the palm. This arrangement captures the light-signal changes of the gestures recognized in this embodiment to the greatest extent, but because it is asymmetric it can only be used to recognize gestures of one hand.

A gesture recognition method based on ambient light, comprising the following recognition steps:

S1: Multiple photoelectric receivers capture, in real time, the light-signal changes that a gesture produces at different hand positions, and convert each of the eight collected light signals into a current signal;

S2: The signal amplification module converts the current signals into voltage signals and amplifies them;

S3: The amplified voltage signals are converted into digital signals by the analog-to-digital conversion module;

S4: The eight-channel digital signals are merged and encoded by the signal processing module, then transmitted to the gesture recognition server;

S5: The data preprocessing unit decodes the received raw data and restores it to eight-channel data;

S6: The restored eight-channel data is fed into the deep learning network model unit, which recognizes and classifies the gesture;

S7: The restored eight-channel data and the classification result are both passed to the application end, which displays the eight-channel data and the recognized gesture in real time.
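Step S3 above can be illustrated with an idealized quantizer. The reference voltage (3.3 V) and resolution (12 bits) below are assumptions for the sake of the example; the patent does not state the ADC parameters.

```python
def quantize(voltage: float, v_ref: float = 3.3, bits: int = 12) -> int:
    """Map a voltage to an unsigned ADC code, clamping to the range [0, v_ref]."""
    max_code = (1 << bits) - 1                 # 4095 for a 12-bit converter
    clamped = min(max(voltage, 0.0), v_ref)    # an ideal ADC saturates at the rails
    return round(clamped / v_ref * max_code)


if __name__ == "__main__":
    # Eight hypothetical amplified voltages, one per channel.
    voltages = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.3]
    print([quantize(v) for v in voltages])
```

The resulting integer codes are what a signal processing module would merge into one multi-channel sample in step S4.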

The gesture recognition method further includes a training step before recognition: the gesture recognition server is trained on different gestures using the backpropagation algorithm to build a deep network model. Training works by iteratively updating the entries of the parameter matrices through backpropagation, with multi-class cross-entropy as the loss function. As shown in Fig. 4, each V denotes the photoelectric receiver data fed into the neural network at one time step. The neural network computes its output (the RNN Output), which passes through a fully connected layer and a softmax function to yield the gesture corresponding to the input data. The gesture output by the algorithm is compared with the correct gesture: if it is correct, the parameters of the neural network weight matrices are left unchanged; if it is wrong, the weight matrices are updated through backpropagation.
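The softmax output and multi-class cross-entropy loss named above can be sketched in a few lines of plain Python. This shows only the output layer for a single example with seven gesture classes; the logits are made-up values, the function names are hypothetical, and the recurrent network and fully connected layer that would produce the logits are omitted.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Turn raw class scores into probabilities that sum to 1."""
    peak = max(logits)                          # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs: List[float], target: int) -> float:
    """Multi-class cross-entropy for one example with a single correct class."""
    return -math.log(probs[target])


def logit_gradient(probs: List[float], target: int) -> List[float]:
    """Gradient of the loss with respect to the logits: probs minus one-hot target."""
    return [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]


if __name__ == "__main__":
    logits = [0.2, 1.5, -0.3, 0.0, 0.7, -1.0, 0.1]   # hypothetical scores, 7 gestures
    probs = softmax(logits)
    print(cross_entropy(probs, target=1), logit_gradient(probs, target=1))
```

The gradient returned by `logit_gradient` is the quantity that backpropagation would push back through the fully connected layer and the recurrent network. Note that with this loss the gradient is generally nonzero even when the most probable class is already the correct one.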

It should be noted that, for simplicity of description, each of the foregoing method embodiments is expressed as a series of combined actions. Those skilled in the art should understand, however, that this application is not limited by the order of actions described, because under this application some steps may be performed in a different order or simultaneously. Those skilled in the art should also understand that the embodiments described in the specification are preferred embodiments, and the actions and units involved are not necessarily required by this application.

Claims (7)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201911203896.2A CN110942040B (en) | 2019-11-29 | 2019-11-29 | Gesture recognition system and method based on ambient light |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN110942040A | 2020-03-31 |
| CN110942040B | 2023-04-18 |

Family

ID=69908994

Family Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201911203896.2A (Active; CN110942040B) | 2019-11-29 | 2019-11-29 | Gesture recognition system and method based on ambient light |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN110942040B (en) |

Families Citing this family (3)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN111459283A (en) * | 2020-04-07 | 2020-07-28 | 电子科技大学 | A Human-Computer Interaction Implementation Method Integrating Artificial Intelligence and Web3D |
| CN111781758A (en) * | 2020-07-03 | 2020-10-16 | 武汉华星光电技术有限公司 | Display screen and electronic equipment |
| CN113625882B (en) * | 2021-10-12 | 2022-06-14 | 四川大学 | Myoelectric gesture recognition method based on sparse multichannel correlation characteristics |

Citations (2)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN105205436A (en) * | 2014-06-03 | 2015-12-30 | 北京创思博德科技有限公司 | Gesture identification system based on multiple forearm bioelectric sensors |
| CN105786177A (en) * | 2016-01-27 | 2016-07-20 | 中国人民解放军信息工程大学 | Gesture recognition device and method |

Family Cites Families (11)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| US8432372B2 (en) * | 2007-11-30 | 2013-04-30 | Microsoft Corporation | User input using proximity sensing |
| US8947353B2 (en) * | 2012-06-12 | 2015-02-03 | Microsoft Corporation | Photosensor array gesture detection |
| EP3155560B1 (en) * | 2014-06-14 | 2020-05-20 | Magic Leap, Inc. | Methods and systems for creating virtual and augmented reality |
| US10261685B2 (en) * | 2016-12-29 | 2019-04-16 | Google Llc | Multi-task machine learning for predicted touch interpretations |
| WO2019006760A1 (en) * | 2017-07-07 | 2019-01-10 | 深圳市大疆创新科技有限公司 | Gesture recognition method and device, and movable platform |
| CN107589832A (en) * | 2017-08-01 | 2018-01-16 | 深圳市汇春科技股份有限公司 | Touchless gesture recognition method based on photoelectric sensing and its control device |
| CN108088371B (en) * | 2017-12-19 | 2020-12-01 | 厦门大学 | A Photodetector Location Layout for Large Displacement Monitoring |
| EP3767433A4 (en) * | 2018-03-12 | 2021-07-28 | Sony Group Corporation | INFORMATION PROCESSING DEVICE, INFORMATION PROCESSING METHOD AND PROGRAM |
| CN109099942A (en) * | 2018-07-11 | 2018-12-28 | 厦门中莘光电科技有限公司 | Photoelectric analog-to-digital conversion chip with an integrated silicon-based photodetector |
| CN208903277U (en) * | 2018-11-21 | 2019-05-24 | Oppo广东移动通信有限公司 | Display and electronic equipment |
| CN110046585A (en) * | 2019-04-19 | 2019-07-23 | 西北工业大学 | Gesture recognition method based on ambient light |

Also Published As

| Publication number | Publication date |
|---|---|
| CN110942040A (en) | 2020-03-31 |

Similar Documents

| Publication | Publication Date | Title |
|---|---|---|
| Zhang et al. | | Data augmentation and dense-LSTM for human activity recognition using WiFi signal |
| CN110942040B (en) | | Gesture recognition system and method based on ambient light |
| US20180186452A1 (en) | | Unmanned Aerial Vehicle Interactive Apparatus and Method Based on Deep Learning Posture Estimation |
| US20210264136A1 (en) | | Model training method and apparatus, face recognition method and apparatus, device, and storage medium |
| Akmeliawati et al. | | Real-time Malaysian sign language translation using colour segmentation and neural network |
| CN102799317B (en) | | Intelligent Interactive Projection System |
| Lian et al. | | Intelligent multi-sensor control system based on innovative technology integration via ZigBee and Wi-Fi networks |
| İnce et al. | | Human activity recognition with analysis of angles between skeletal joints using a RGB‐depth sensor |
| EP3862931A2 (en) | | Gesture feedback in distributed neural network system |
| CN113723185B (en) | | Action behavior recognition method and device, storage medium and terminal equipment |
| CN103914149A (en) | | Gesture interaction method and gesture interaction system for interactive television |
| KR102274581B1 (en) | | Method for generating personalized hrtf |
| Duan et al. | | Ambient light based hand gesture recognition enabled by recurrent neural network |
| TW201423612A (en) | | Device and method for recognizing a gesture |
| Chaudhary et al. | | Depth‐based end‐to‐end deep network for human action recognition |
| Huang et al. | | TSHNN: Temporal-spatial hybrid neural network for cognitive wireless human activity recognition |
| CN116861332A (en) | | Intelligent dynamic gesture recognition method |
| Wu et al. | | Efficient prediction of link outage in mobile optical wireless communications |
| CN110353693A (en) | | A kind of hand-written Letter Identification Method and system based on WiFi |
| CN105511619B (en) | | A human-computer interaction control system and method based on visual infrared sensing technology |
| Boner et al. | | Tiny tcn model for gesture recognition with multi-point low power tof-sensors |
| Lyu et al. | | Identifiability-guaranteed simplex-structured post-nonlinear mixture learning via autoencoder |
| CN114283798A (en) | | Radio receiving method of handheld device and handheld device |
| CN115438691B (en) | | Small sample gesture recognition method based on wireless signals |
| CN103558913A (en) | | Virtual input glove keyboard with vibration feedback function |

Legal Events

| Date | Code | Title | Description |
|---|---|---|---|
| | PB01 | Publication | |
| | SE01 | Entry into force of request for substantive examination | |
| | GR01 | Patent grant | |