CN109190508B - Multi-camera data fusion method based on space coordinate system - Google Patents


- Publication number

- CN109190508B (application CN201810917557.XA)

- Authority

- CN

- China

- Prior art keywords

- target

- coordinate system

- camera

- space coordinate

- dimensional space


- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active


Classifications

All classifications fall under G (Physics) > G06 (Computing or calculating; counting):

- G06V (Image or video recognition or understanding) > G06V20/00 (Scenes; scene-specific elements) > G06V20/50 (Context or environment of the image) > G06V20/52: Surveillance or monitoring of activities, e.g. for recognising suspicious objects

- G06F (Electric digital data processing) > G06F18/00 (Pattern recognition) > G06F18/20 (Analysing) > G06F18/24 (Classification techniques) > G06F18/241 (Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches) > G06F18/2413: Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches based on distances to training or reference patterns

- G06F (Electric digital data processing) > G06F18/00 (Pattern recognition) > G06F18/20 (Analysing) > G06F18/25: Fusion techniques

- G06T (Image data processing or generation, in general) > G06T7/00 (Image analysis) > G06T7/20 (Analysis of motion) > G06T7/292: Multi-camera tracking

- G06T (Image data processing or generation, in general) > G06T7/00 (Image analysis) > G06T7/70 (Determining position or orientation of objects or cameras) > G06T7/73: Determining position or orientation of objects or cameras using feature-based methods

- G06T (Image data processing or generation, in general) > G06T2207/00 (Indexing scheme for image analysis or image enhancement) > G06T2207/10 (Image acquisition modality) > G06T2207/10016: Video; Image sequence

- G06T (Image data processing or generation, in general) > G06T2207/00 (Indexing scheme for image analysis or image enhancement) > G06T2207/30 (Subject of image; Context of image processing) > G06T2207/30232: Surveillance

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- General Physics & Mathematics (AREA)

- Physics & Mathematics (AREA)

- Computer Vision & Pattern Recognition (AREA)

- Data Mining & Analysis (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Evolutionary Biology (AREA)

- Evolutionary Computation (AREA)

- Bioinformatics & Computational Biology (AREA)

- General Engineering & Computer Science (AREA)

- Artificial Intelligence (AREA)

- Life Sciences & Earth Sciences (AREA)

- Multimedia (AREA)

- Image Analysis (AREA)

- Length Measuring Devices By Optical Means (AREA)

Abstract

Description

Technical field

The invention relates to the field of video image processing, and specifically to a method for fusing, in a spatial coordinate system, the surveillance data collected by multiple cameras across multiple contiguous monitored scenes.

Background art

In recent years, with the spread of network cameras for security, intelligent video surveillance has rapidly become a research hotspot. Video data record what happens in a monitored scene and carry many kinds of information. Because the background is fixed in most surveillance footage, what users are really interested in are the targets that appear in it and the trajectories of those targets.

At present, most video surveillance data are stored separately by camera number, and the data collected by each camera are then analyzed with intelligent video surveillance techniques. Target detection, target recognition and target tracking are the three key stages of this analysis. Target tracking determines the successive positions of a target of interest in a video sequence; it is a fundamental computer vision technique with a wide range of applications.

Traditional target tracking records a target's historical trajectory in the two-dimensional image space. This approach is easy to implement and can record the target's trajectory within the current scene. However, it has two shortcomings: 1. it is confined to the two-dimensional image space and cannot reflect how the target's position changes in real space; 2. when the target moves across scenes, the target in the current scene must be compared with the targets in several subsequent scenes to infer its direction of movement, so the target cannot be tracked continuously across multiple scenes.

Summary of the invention

The purpose of the invention is to establish a mapping between the two-dimensional image coordinate system and the real three-dimensional spatial coordinate system, so that the image coordinates of targets obtained by running target detection and target recognition on video frames from multiple cameras can be mapped into the spatial coordinate system; on this basis, the invention provides a method for tracking targets across scenes and thereby fusing the video surveillance data collected by multiple cameras.

Building on an analysis of the camera imaging principle and camera calibration techniques, the method first establishes a coordinate mapping equation between the two-dimensional image coordinate system and the real three-dimensional spatial coordinate system; it then converts the image coordinates of targets obtained by detection and recognition into coordinates in the three-dimensional spatial coordinate system; finally, it tracks the targets using their three-dimensional coordinates, achieving multi-camera data fusion. The method recovers how target positions change in real space, tracks targets across scenes more reliably, fuses the data collected by multiple cameras, and provides richer information for higher-level analysis of target behavior.

To achieve the above purpose, the invention provides a multi-camera data fusion method based on a spatial coordinate system, comprising the following steps:

Deploy the cameras in the scene to be monitored; build a training dataset for the targets to be extracted and train a target detection and recognition model; use the model to extract, from the video captured by each camera, the category of each target and its position in the two-dimensional image coordinate system, and establish the coordinate mapping between the two-dimensional image coordinate system and the three-dimensional spatial coordinate system; for the video streams captured by cameras in multiple contiguous scenes, perform target detection and recognition and extract the category and image-coordinate position of every target appearing in each frame; using the coordinate mapping, map each target's image position to coordinates in the three-dimensional spatial coordinate system; and, from the distances between the targets at the current time node and those at the previous time node, identify the same target at adjacent time nodes and link them to obtain the targets' trajectories in the three-dimensional spatial coordinate system.

Preferably, the step of establishing the coordinate mapping between the two-dimensional image coordinate system and the three-dimensional spatial coordinate system specifically comprises:

For the scene monitored by each camera, select 50 uniformly distributed pixel coordinates in the two-dimensional image coordinate system, denoted $\{(u_1, v_1), (u_2, v_2), \ldots, (u_{50}, v_{50})\}$, and obtain the corresponding coordinates of these 50 points in the real three-dimensional spatial coordinate system, denoted $\{(X_1, Y_1, Z_1), (X_2, Y_2, Z_2), \ldots, (X_{50}, Y_{50}, Z_{50})\}$; the real-world coordinates of each point can be obtained by manual measurement or by GPS positioning;

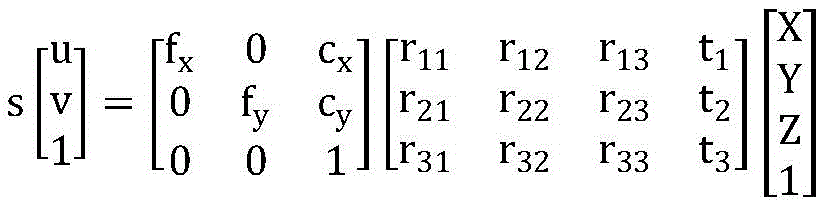

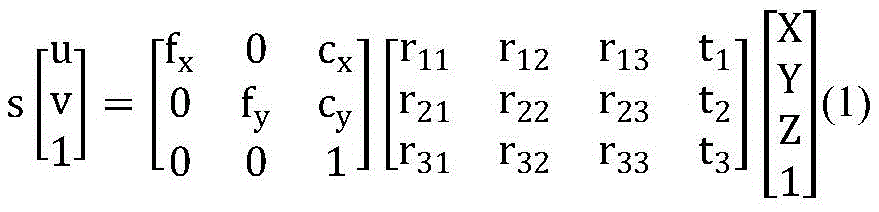

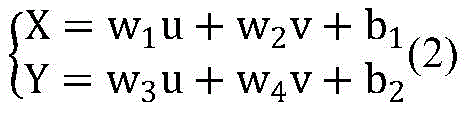

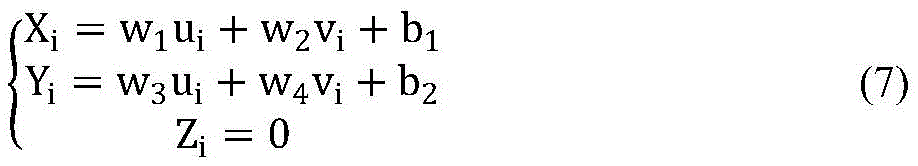

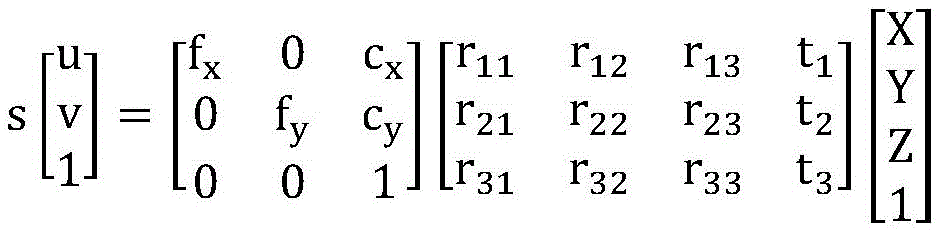

During camera imaging, a two-dimensional image point $(u, v)$ and the corresponding three-dimensional point $(X, Y, Z)$ satisfy:

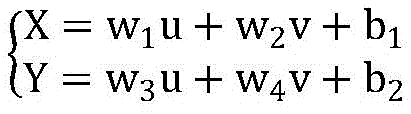

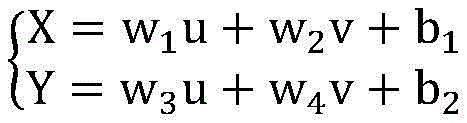

Since the targets of interest all lie on the ground plane, i.e. $Z = 0$, the above formula can be transformed into:

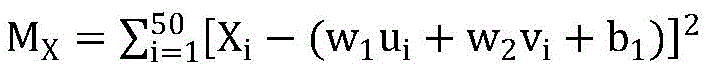

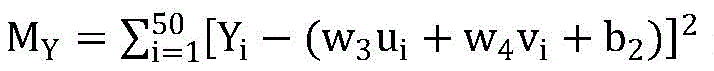

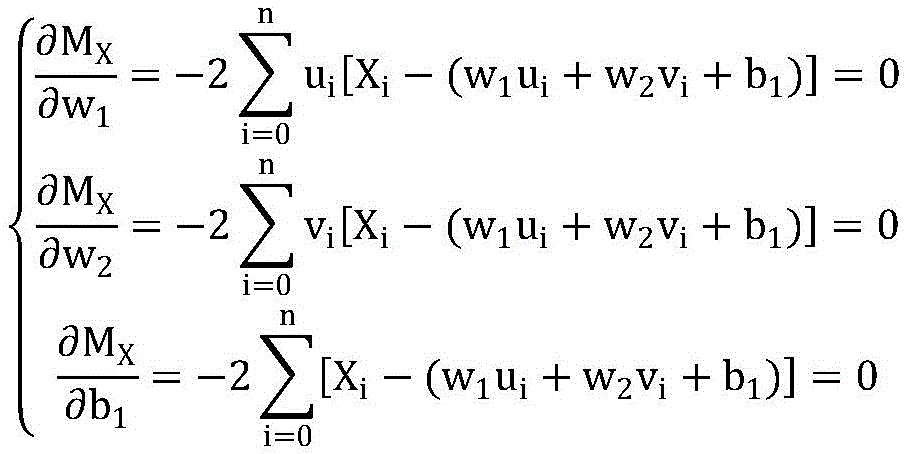

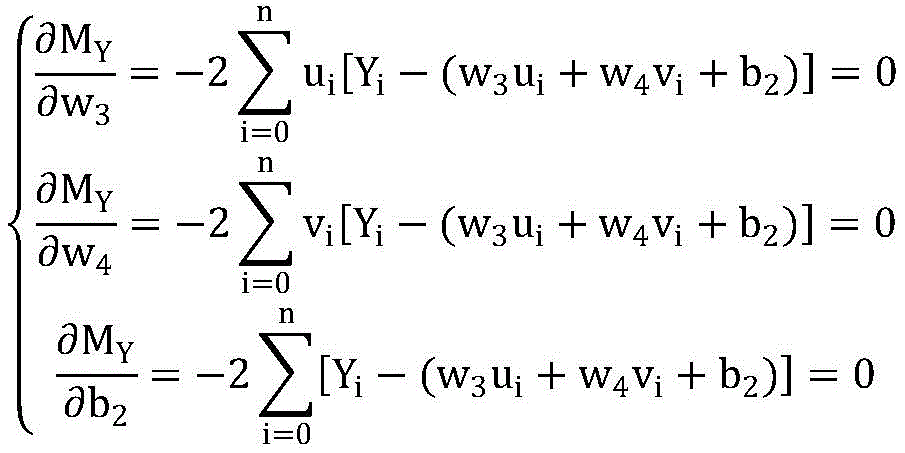

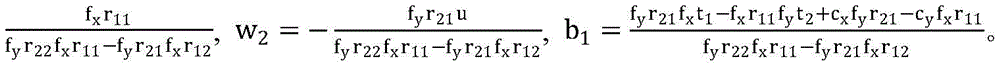

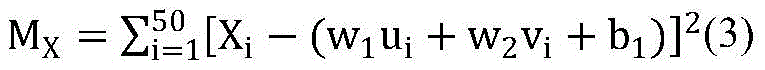

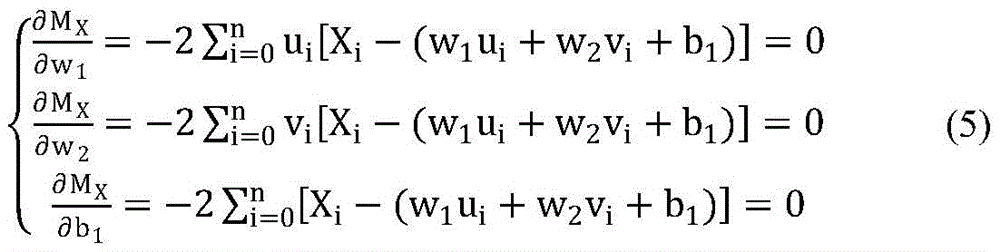

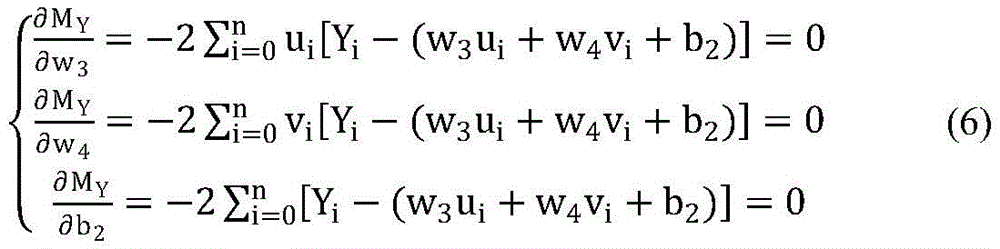

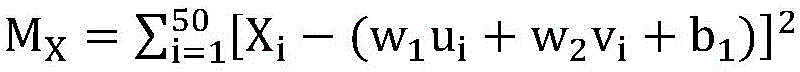

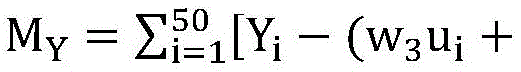

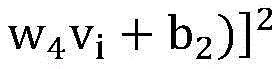

where the parameters $(w_1, w_2, b_1, w_3, w_4, b_2)$ are constants. The least-squares method is used to find the parameters $(w_1, w_2, b_1, w_3, w_4, b_2)$ that minimize the sum of squared deviations between the fitted results and the actual data, i.e. to solve the system of equations:

Preferably, the step of identifying the same target at adjacent time nodes from the distances between each target at the current time node and each target at the previous time node, and linking them to obtain the targets' trajectories in the three-dimensional spatial coordinate system, specifically comprises:

reading the coordinate data of the targets in the three-dimensional spatial coordinate system for each camera's monitored scene, and sorting the data by time of occurrence;

computing the distance, denoted $d_{xy}$ (where $x \in [1, a]$, $y \in [1, b]$), between the three-dimensional coordinates of each target at the $(i+1)$-th time node, $(i+1)_1, \ldots, (i+1)_a$, and those of each target at the $i$-th time node, $i_1, \ldots, i_b$;

for a fixed $x$, provisionally regarding $x$ and the $y$ that minimizes $d_{xy}$ as the indices of the same target at the two adjacent time nodes;

if $d_{xy}$ is less than a given threshold $T$, confirming that $x$ and $y$ are the indices of the same target at the two adjacent time nodes; otherwise, treating $x$ as the index of a new target that first appears in the monitored scene at the $(i+1)$-th time node;

linking in sequence the three-dimensional coordinates of the same target at adjacent time nodes, and recording for each coordinate segment the category of the target it belongs to and its start and end time nodes, to obtain the targets' trajectory data in the three-dimensional spatial coordinate system for the scene monitored by each camera.

Preferably, the step of training the target detection and recognition model specifically comprises:

Step 201: for each kind of target to be extracted, collect 500-1000 images containing that target; as far as possible, these images should show the target from different angles;

Step 202: add a category label to each image collected in step 201 by manual annotation;

Step 203: add the position of the target in the two-dimensional image coordinate system to each image collected in step 201 by manual annotation;

Step 204: randomly shuffle the image dataset to build the training dataset, and import it into the Caffe-based deep learning framework Faster-RCNN;

Step 205: modify the training parameters according to the categories and the number of categories in the training set, and set the number of iterations;

Step 206: train the target detection and recognition model.

Preferably, the step of using the target detection and recognition model to extract the category of each target in the video captured by each camera and its position in the two-dimensional image coordinate system specifically comprises:

for the video streams captured by cameras in multiple contiguous scenes, performing target detection and recognition with the model and extracting, once every second, the category of each target in the current frame of each camera and its position in the two-dimensional image coordinate system; at the same time, obtaining the ID of the camera the target belongs to and the acquisition time, which together with the target's position form the target information.

Addressing the practical needs of surveillance scenes, the invention trains a target detection and recognition model with a deep learning framework, which can simultaneously extract the categories and image-coordinate positions of multiple different targets from the images captured by cameras in multiple contiguous monitored scenes. Using the least-squares method, it establishes the coordinate mapping between the two-dimensional image coordinate system and the three-dimensional spatial coordinate system, so that the image position of each detected target can be converted into that target's coordinates in the three-dimensional spatial coordinate system. By computing the distances between targets at adjacent time nodes, it obtains the targets' trajectories in the three-dimensional spatial coordinate system. Compared with traditional target tracking, the method fuses the scattered surveillance data collected by individual cameras and can simultaneously obtain how the spatial positions of multiple targets change across multiple contiguous scenes; the coordinate mapping equation converts two-dimensional pixel coordinates into three-dimensional spatial coordinates, enabling targets to be tracked in three-dimensional space. Moreover, because the method stores only target categories and trajectories rather than the continuous image data stored by traditional target tracking, it greatly reduces the amount of stored data and improves computational efficiency.

Description of drawings

Figure 1 is a schematic flowchart of a multi-camera data fusion method based on a spatial coordinate system according to an embodiment of the invention;

Figure 2 is a flowchart of the training process of the target detection and recognition model.

Detailed description

The multi-camera data fusion method based on a spatial coordinate system of the invention is described in further detail below with reference to the accompanying drawings:

Figure 1 is a schematic flowchart of a multi-camera data fusion method based on a spatial coordinate system according to an embodiment of the invention. As shown in Figure 1, the method comprises steps S1-S6:

S1. Deploy multiple cameras in the scene to be monitored; the edges of the areas covered by adjacent cameras should be as close together as possible while overlapping as little as possible.

S2. Train the target detection and recognition model. The training flow is shown in Figure 2; the steps are as follows:

Step 201: for each category, select 500-1000 images that each contain at least one target of that category. As far as possible, choose images taken from different angles and images showing targets in different poses; these form the image dataset.

Step 202: add a category label to each image collected in step 201 by manual annotation; the category label is the category to which the target in the image belongs.

Step 203: add the position of the target in the two-dimensional image coordinate system to each image collected in step 201 by manual annotation. This position is the target's bounding rectangle, given as $(x_1, y_1, x_2, y_2)$, where $(x_1, y_1)$ is the top-left corner and $(x_2, y_2)$ the bottom-right corner of the box.

Step 204: randomly shuffle the image dataset to build the training data, split it into a training set, a test set and a validation set in the ratio 7:2:1, and import it into the Faster-RCNN deep learning framework.
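The shuffle-and-split of step 204 can be sketched as follows. This is a minimal illustration, assuming the dataset is a list of items (for example image paths); the function name `split_dataset` and the fixed seed are hypothetical, and how the resulting lists are handed to Faster-RCNN is not shown.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(items: Sequence[T], ratios=(7, 2, 1), seed: int = 0
                  ) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle items, then split them into train/test/validation by integer ratios."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)      # reproducible random shuffle
    total = sum(ratios)
    n_train = len(shuffled) * ratios[0] // total
    n_test = len(shuffled) * ratios[1] // total
    train = shuffled[:n_train]
    test = shuffled[n_train:n_train + n_test]
    val = shuffled[n_train + n_test:]          # any rounding remainder goes to validation
    return train, test, val
```

With 1000 annotated images, `split_dataset(paths)` yields 700, 200 and 100 items; with counts not divisible by 10, the validation set absorbs the remainder.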

Step 205: modify the training parameters according to the target categories and the number of categories in the training set, and set the number of iterations for each of the four training stages: RPN stage 1, Fast R-CNN stage 1, RPN stage 2 and Fast R-CNN stage 2.

Step 206: train the target detection and recognition model; after the configured number of iterations, a model file with the extension .caffemodel is produced.

S3. For the video streams captured by cameras in multiple contiguous scenes, run target detection and recognition on the current frame of each camera once every second. Extract the category of each target in the current frame and its two-dimensional pixel position $(u_i, v_i)$ in the image coordinate system, and also store the ID of the camera the target belongs to and the time at which the target appeared; together these form the target information.
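A minimal sketch of the per-target record built in S3. The text does not say which point of the bounding box $(x_1, y_1, x_2, y_2)$ from step 203 becomes $(u_i, v_i)$; this sketch assumes the bottom-centre of the box, since that is where a target standing on the ground plane touches it. The record fields, the helper names and the frame-index sampling are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class TargetRecord:
    """Per-target information stored in S3 (field names are hypothetical)."""
    category: str
    u: float          # pixel column of the reference point
    v: float          # pixel row of the reference point
    camera_id: str
    timestamp: float  # seconds


def reference_point(box: Tuple[float, float, float, float]) -> Tuple[float, float]:
    """Bottom-centre of an (x1, y1, x2, y2) box: an assumed ground-contact point."""
    x1, y1, x2, y2 = box
    return (x1 + x2) / 2.0, y2


def sampled_frame_indices(num_frames: int, fps: float, interval_s: float = 1.0) -> List[int]:
    """Indices of the frames processed when sampling once every interval_s seconds."""
    step = max(1, round(fps * interval_s))
    return list(range(0, num_frames, step))


def to_records(detections, camera_id: str, timestamp: float) -> List[TargetRecord]:
    """detections: iterable of (category, (x1, y1, x2, y2)) pairs from the detector."""
    records = []
    for category, box in detections:
        u, v = reference_point(box)
        records.append(TargetRecord(category, u, v, camera_id, timestamp))
    return records
```

For a 25 fps stream of 75 frames, `sampled_frame_indices(75, 25)` selects frames 0, 25 and 50.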

S4. The coordinate mapping equation between two-dimensional and three-dimensional coordinates is computed as follows. During camera imaging, a two-dimensional image point $(u, v)$ and the corresponding three-dimensional point $(X, Y, Z)$ satisfy the following relationship:
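Formula (1) itself is not reproduced in this text. For reference, the standard pinhole camera model, which it presumably expresses, is shown below together with its reduction for ground-plane points. The symbols $s$ (scale factor), $K$ (intrinsic matrix), $r_1, r_2, r_3$ (columns of the rotation matrix), $t$ (translation) and $H$ are this sketch's notation, not the patent's.

```latex
s \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}
  = K \begin{bmatrix} r_1 & r_2 & r_3 & t \end{bmatrix}
    \begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix}
\quad\xrightarrow{\;Z = 0\;}\quad
s \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}
  = \underbrace{K \begin{bmatrix} r_1 & r_2 & t \end{bmatrix}}_{H}
    \begin{bmatrix} X \\ Y \\ 1 \end{bmatrix}
```

With $Z = 0$ the column $r_3$ drops out, leaving a 3 by 3 projective transform $H$ between the ground plane and the image. If formula (2) is affine in $(u, v)$, as the six parameter names $(w_1, w_2, b_1, w_3, w_4, b_2)$ suggest, it approximates the inverse of this projective mapping; the approximation is presumably adequate when perspective distortion across the monitored area is modest.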

Since the targets of interest all lie on the ground plane, i.e. $Z = 0$, formula (1) can be transformed into:

where $(w_1, w_2, b_1, w_3, w_4, b_2)$ are constant parameters. On this basis, the coordinate mapping between the two-dimensional image coordinate system and the three-dimensional spatial coordinate system can be established by the least-squares method, as follows:

Step 401: for the scene monitored by each camera, select 50 uniformly distributed pixel coordinates in the two-dimensional image coordinate system, denoted $\{(u_1, v_1), (u_2, v_2), \ldots, (u_{50}, v_{50})\}$, and collect, by manual measurement or GPS positioning, the corresponding coordinates of these 50 points in the real three-dimensional spatial coordinate system, denoted $\{(X_1, Y_1, Z_1), (X_2, Y_2, Z_2), \ldots, (X_{50}, Y_{50}, Z_{50})\}$.
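Step 401 does not say how the 50 points are spread over the image. One simple reading of "uniformly distributed", assumed here, is the centres of a 10 by 5 grid of cells; the helper name and grid shape are hypothetical, and in practice each chosen point presumably also has to lie on the ground plane at a spot whose real-world position can be measured.

```python
from typing import List, Tuple


def grid_points(width: int, height: int, cols: int = 10, rows: int = 5) -> List[Tuple[float, float]]:
    """Return cols * rows pixel coordinates at the centres of a regular grid of cells."""
    return [((c + 0.5) * width / cols, (r + 0.5) * height / rows)
            for r in range(rows) for c in range(cols)]
```

For a 1920 by 1080 frame this gives 50 points from (96.0, 108.0) to (1824.0, 972.0).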

Step 402: use the least-squares method to choose the parameters $(w_1, w_2, b_1, w_3, w_4, b_2)$ that minimize $M_X$ and $M_Y$, the sums of squared deviations between the fitted results and the actual data; $M_X$ and $M_Y$ can be expressed as:

The values of $(w_1, w_2, b_1, w_3, w_4, b_2)$ at which $M_X$ and $M_Y$ attain their minima are found by solving the system of equations:
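The expressions for $M_X$, $M_Y$ and the resulting system are not reproduced in this text. Assuming formula (2) is the affine mapping $X = w_1 u + w_2 v + b_1$, $Y = w_3 u + w_4 v + b_2$ (suggested by the parameter names, not confirmed by the text), and writing $n$ for the number of control points ($n = 50$ here), they would take the following form. Setting the partial derivatives of $M_X$ with respect to $w_1$, $w_2$, $b_1$ to zero gives the first linear system; $M_Y$ gives the second, with the same coefficient matrix.

```latex
M_X = \sum_{k=1}^{n} \left( w_1 u_k + w_2 v_k + b_1 - X_k \right)^2, \qquad
M_Y = \sum_{k=1}^{n} \left( w_3 u_k + w_4 v_k + b_2 - Y_k \right)^2

\begin{bmatrix}
\sum u_k^2   & \sum u_k v_k & \sum u_k \\
\sum u_k v_k & \sum v_k^2   & \sum v_k \\
\sum u_k     & \sum v_k     & n
\end{bmatrix}
\begin{bmatrix} w_1 \\ w_2 \\ b_1 \end{bmatrix}
=
\begin{bmatrix} \sum u_k X_k \\ \sum v_k X_k \\ \sum X_k \end{bmatrix},
\qquad
\begin{bmatrix}
\sum u_k^2   & \sum u_k v_k & \sum u_k \\
\sum u_k v_k & \sum v_k^2   & \sum v_k \\
\sum u_k     & \sum v_k     & n
\end{bmatrix}
\begin{bmatrix} w_3 \\ w_4 \\ b_2 \end{bmatrix}
=
\begin{bmatrix} \sum u_k Y_k \\ \sum v_k Y_k \\ \sum Y_k \end{bmatrix}
```

The coefficient matrix is singular only when all control points are collinear in the image, which the uniform distribution of step 401 avoids.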

Substituting the parameters $(w_1, w_2, b_1, w_3, w_4, b_2)$ into formula (2) gives the mapping equation between the two-dimensional image coordinate system and the three-dimensional spatial coordinate system for the scene monitored by the camera.
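A minimal sketch of steps 401-402 and of the mapping applied in S5, assuming formula (2) is the affine form $X = w_1 u + w_2 v + b_1$, $Y = w_3 u + w_4 v + b_2$ (inferred from the parameter names; the formula itself is not reproduced here). It solves the 3 by 3 normal equations of the least-squares problem directly in plain Python; a library least-squares routine would give the same result. The function names are hypothetical, and $Z$ is set to 0 per the ground-plane assumption.

```python
from typing import List, Sequence, Tuple


def _det3(m: List[List[float]]) -> float:
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve the 3x3 linear system a @ x = b by Cramer's rule."""
    d = _det3(a)
    if abs(d) < 1e-12:
        raise ValueError("degenerate control points (e.g. all collinear)")
    x = []
    for col in range(3):
        m = [row[:] for row in a]
        for r in range(3):
            m[r][col] = b[r]           # replace one column with the right-hand side
        x.append(_det3(m) / d)
    return x


def fit_affine_mapping(pixels: Sequence[Tuple[float, float]],
                       world: Sequence[Tuple[float, float]]) -> Tuple[float, ...]:
    """Least-squares fit of X = w1*u + w2*v + b1 and Y = w3*u + w4*v + b2.

    pixels: [(u, v), ...]; world: [(X, Y), ...] measured on the ground plane.
    Returns (w1, w2, b1, w3, w4, b2).
    """
    n = len(pixels)
    su = sum(u for u, _ in pixels)
    sv = sum(v for _, v in pixels)
    suu = sum(u * u for u, _ in pixels)
    svv = sum(v * v for _, v in pixels)
    suv = sum(u * v for u, v in pixels)
    normal = [[suu, suv, su], [suv, svv, sv], [su, sv, n]]  # shared by the X and Y fits
    params: List[float] = []
    for k in range(2):                 # k = 0 fits X, k = 1 fits Y
        rhs = [sum(u * w[k] for (u, _), w in zip(pixels, world)),
               sum(v * w[k] for (_, v), w in zip(pixels, world)),
               sum(w[k] for w in world)]
        params.extend(_solve3(normal, rhs))
    return tuple(params)


def apply_mapping(params: Sequence[float], u: float, v: float) -> Tuple[float, float, float]:
    """Map a pixel (u, v) to ground-plane coordinates (X, Y, 0)."""
    w1, w2, b1, w3, w4, b2 = params
    return (w1 * u + w2 * v + b1, w3 * u + w4 * v + b2, 0.0)
```

The parameters are fitted once per camera from its 50 control points; each detection $(u_i, v_i)$ from S3 is then passed through `apply_mapping` with that camera's parameters.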

S5. Input the two-dimensional pixel position $(u_i, v_i)$ of each target extracted in step S3 into the mapping equation between the two-dimensional image coordinate system and the three-dimensional spatial coordinate system computed in step S4:

thereby obtaining the coordinates $(X_i, Y_i, Z_i)$, in the three-dimensional spatial coordinate system, of the targets detected in each monitored scene.

S6. Multi-camera data fusion: based on the three-dimensional coordinates of the targets in each monitored scene, extract the targets' trajectory data in the three-dimensional spatial coordinate system. The steps are as follows:

Step 601: in order of time of appearance, read the three-dimensional coordinates of the targets in each monitored scene at two adjacent time nodes.

Step 602: compute the distance $d_{xy}$ (where $x \in [1, a]$, $y \in [1, b]$) between the three-dimensional coordinates of each target at the $(i+1)$-th time node, denoted $(i+1)_1, \ldots, (i+1)_a$, and those of each target at the $i$-th time node, denoted $i_1, \ldots, i_b$.

Step 603: for a fixed $x$, select the $y$ that minimizes $d_{xy}$ and provisionally regard $x$ and $y$ as the indices of the same target at the two adjacent time nodes.

Step 604: if $d_{xy}$ is less than a given threshold $T$, confirm that $x$ and $y$ are the indices of the same target at the two adjacent time nodes; otherwise, $x$ is the index of a new target that first appears in the monitored scene at the $(i+1)$-th time node.

Step 605: link in sequence the three-dimensional coordinates of the same target at adjacent time nodes, as determined in step 604, and attach to each coordinate segment the category of the target it belongs to and its start and end time nodes; together these data form the trajectory data of the targets in the three-dimensional spatial coordinate system for the scene monitored by each camera.
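Steps 602-605 can be sketched as a nearest-neighbour association with a distance threshold. This minimal version assumes Euclidean distance for $d_{xy}$ and represents each time node as a list of $(X, Y, Z)$ points; the function names and the track dictionary are hypothetical, and the category and camera fields from S3 could be carried alongside each point but are omitted for brevity.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float, float]


def associate(prev: Sequence[Point], curr: Sequence[Point], threshold: float) -> List[int]:
    """For each current target x, return the index y of the nearest previous
    target if d_xy < threshold (steps 602-604), otherwise -1 for a new target."""
    matches = []
    for p in curr:
        best_y, best_d = -1, math.inf
        for y, q in enumerate(prev):
            d = math.dist(p, q)              # Euclidean distance d_xy
            if d < best_d:
                best_y, best_d = y, d
        matches.append(best_y if best_d < threshold else -1)
    return matches


def build_tracks(frames: Sequence[Sequence[Point]],
                 threshold: float) -> Dict[int, List[Tuple[int, Point]]]:
    """Link per-time-node points into tracks: track id -> [(time node, point), ...]."""
    tracks: Dict[int, List[Tuple[int, Point]]] = {}
    prev_ids: List[int] = []
    next_id = 0
    for t, curr in enumerate(frames):
        prev = frames[t - 1] if t > 0 else []
        ids = []
        for y in associate(prev, curr, threshold):
            if y == -1:                      # first appearance: start a new track
                ids.append(next_id)
                tracks[next_id] = []
                next_id += 1
            else:                            # same target as index y at time t - 1
                ids.append(prev_ids[y])
        for tid, point in zip(ids, curr):
            tracks[tid].append((t, point))
        prev_ids = ids
    return tracks
```

Consecutive entries of each track give the coordinate segments of step 605, with their start and end time nodes. As described, the rule is greedy per $x$, so two current targets can match the same previous one; a one-to-one assignment (for example the Hungarian algorithm) is a common alternative, but it is not part of the described method.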

The embodiment of the invention recovers how target positions change in real space, tracks targets across scenes more reliably, fuses the target information collected by multiple cameras, and provides richer information for higher-level analysis of target behavior.

While particular embodiments of the invention have been shown and described, it will be apparent to those skilled in the art that changes and modifications can be made on the basis of the teachings herein without departing from the exemplary embodiments of the invention and its broader aspects. The appended claims are therefore intended to encompass within their scope all such changes and modifications that do not depart from the true spirit and scope of the exemplary embodiments of the invention.

Claims (5)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201810917557.XA (CN109190508B) | 2018-08-13 | 2018-08-13 | Multi-camera data fusion method based on space coordinate system |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| CN201810917557.XA (CN109190508B) | 2018-08-13 | 2018-08-13 | Multi-camera data fusion method based on space coordinate system |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN109190508A | 2019-01-11 |
| CN109190508B | 2022-09-06 |

Family

ID=64921676

Family Applications (1)

| Application Number | Status | Priority Date | Filing Date | Title |
|---|---|---|---|---|
| CN201810917557.XA (CN109190508B) | Active | 2018-08-13 | 2018-08-13 | Multi-camera data fusion method based on space coordinate system |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN109190508B |

Families Citing this family (25)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| CN109840503B * | 2019-01-31 | 2021-02-26 | 深兰科技(上海)有限公司 | A method and device for determining category information |
| CN109919064B * | 2019-02-27 | 2020-12-22 | 湖南信达通信息技术有限公司 | Real-time people counting method and device in rail transit carriage |
| CN110000793B * | 2019-04-29 | 2024-07-16 | 武汉库柏特科技有限公司 | Robot motion control method and device, storage medium and robot |
| CN110398760B * | 2019-06-26 | 2024-11-12 | 杭州数尔安防科技股份有限公司 | Pedestrian coordinate capture device based on image analysis and use method thereof |
| CN110443228B * | 2019-08-20 | 2022-03-04 | 图谱未来(南京)人工智能研究院有限公司 | Pedestrian matching method and device, electronic equipment and storage medium |
| CN110738846B * | 2019-09-27 | 2022-06-17 | 同济大学 | Vehicle behavior monitoring system based on radar and video group and its realization method |
| CN110888957B * | 2019-11-22 | 2023-03-10 | 腾讯科技(深圳)有限公司 | Object positioning method and related device |
| CN111597954A * | 2020-05-12 | 2020-08-28 | 博康云信科技有限公司 | Method and system for identifying vehicle position in monitoring video |
| CN111754552A * | 2020-06-29 | 2020-10-09 | 华东师范大学 | A multi-camera cooperative target tracking method based on deep learning |
| CN111985307B * | 2020-07-07 | 2024-12-27 | 深圳市自行科技有限公司 | Driver specific action detection method |
| CN112037159B * | 2020-07-29 | 2023-06-23 | 中天智控科技控股股份有限公司 | A method and system for cross-camera road space fusion and vehicle target detection and tracking |
| CN114078084A * | 2020-08-13 | 2022-02-22 | 邯郸市金世达科技有限公司 | Method for realizing target positioning and tracking based on coordinate mapping |
| CN114170499A * | 2020-08-19 | 2022-03-11 | 北京万集科技股份有限公司 | Object detection method, tracking method, device, vision sensor and medium |
| CN114078326B * | 2020-08-19 | 2023-04-07 | 北京万集科技股份有限公司 | Collision detection method, device, visual sensor and storage medium |
| CN112307912A * | 2020-10-19 | 2021-02-02 | 科大国创云网科技有限公司 | Method and system for determining personnel track based on camera |
| CN115205325B * | 2021-04-13 | 2025-09-09 | 上海幻电信息科技有限公司 | Target tracking method and device |
| CN116469065B * | 2022-01-06 | 2025-09-09 | 云控智行科技有限公司 | Method for estimating target recognition position error by using average cross-correlation ratio |
| CN115222920B * | 2022-09-20 | 2023-01-17 | 北京智汇云舟科技有限公司 | Image-based digital twin spatio-temporal knowledge map construction method and device |
| CN116612594A * | 2023-05-11 | 2023-08-18 | 深圳市云之音科技有限公司 | Intelligent monitoring and outbound system and method based on big data |
| CN116528062B * | 2023-07-05 | 2023-09-15 | 合肥中科类脑智能技术有限公司 | Multiple target tracking methods |
| CN117528026B * | 2023-10-25 | 2025-02-14 | 北京大学鄂尔多斯能源研究院 | A device control system and method from geographic space to high-precision digital space |
| CN117692583B * | 2023-12-04 | 2025-02-18 | 中国人民解放军92941部队 | Image auxiliary guide method and device based on position information verification |
| CN117687426A * | 2024-01-31 | 2024-03-12 | 成都航空职业技术学院 | A method and system for flight control of unmanned aerial vehicle in a low-altitude environment |
| CN119399694A * | 2024-10-31 | 2025-02-07 | 中国银行股份有限公司 | A vault handover monitoring method and related device |
| CN119068056A * | 2024-11-04 | 2024-12-03 | 悉望数智科技(杭州)有限公司 | A plant and station personnel positioning method and system based on visual recognition |

Citations (3)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN104501740A (en) * | 2014-12-18 | 2015-04-08 | 杭州鼎热科技有限公司 | Handheld laser three-dimension scanning method and handheld laser three-dimension scanning equipment based on mark point trajectory tracking |

| CN106127137A (en) * | 2016-06-21 | 2016-11-16 | 长安大学 | Target detection and recognition algorithm based on 3D trajectory analysis |

| CN106952289A (en) * | 2017-03-03 | 2017-07-14 | 中国民航大学 | WiFi target localization method combined with deep video analysis |

2018

- 2018-08-13 CN CN201810917557.XA (granted as CN109190508B), Active

Also Published As

| Publication number | Publication date |

|---|---|

| CN109190508A (en) | 2019-01-11 |

Similar Documents

| Publication | Title |

|---|---|

| CN109190508B (en) | Multi-camera data fusion method based on space coordinate system |

| CN111126304B (en) | Augmented reality navigation method based on indoor natural scene image deep learning |

| US9286678B2 (en) | Camera calibration using feature identification |

| CN109598794B (en) | Construction method of three-dimensional GIS dynamic model |

| CN104061907B (en) | The most variable gait recognition method in visual angle based on the coupling synthesis of gait three-D profile |

| TWI769787B (en) | Target tracking method and apparatus, storage medium |

| CN112818925B (en) | Urban building and crown identification method |

| CN114612823B (en) | A method for monitoring personnel behavior in laboratory safety management |

| CN113256731B (en) | Target detection method and device based on monocular vision |

| CN115797408B (en) | Target tracking method and apparatus that integrates multi-view images and 3D point clouds |

| Han et al. | MMPTRACK: Large-scale densely annotated multi-camera multiple people tracking benchmark |

| CN102034267A (en) | Three-dimensional reconstruction method of target based on attention |

| CN109345568A (en) | Sports ground intelligent implementing method and system based on computer vision algorithms make |

| CN101976461A (en) | Novel outdoor augmented reality label-free tracking registration algorithm |

| CN110636248B (en) | Target tracking method and device |

| CN118334071A (en) | Multi-camera fusion-based traffic intersection vehicle multi-target tracking method |

| CN118675106A (en) | Real-time monitoring method, system, device and storage medium for falling rocks based on machine vision |

| CN105574545B (en) | The semantic cutting method of street environment image various visual angles and device |

| Shalaby et al. | Algorithms and applications of structure from motion (SFM): A survey |

| CN113628251B (en) | Smart hotel terminal monitoring method |

| CN119579772A (en) | Method, device and electronic equipment for constructing three-dimensional model of scene |

| CN119339005A (en) | A fast conversion method for large space positioning based on image recognition |

| CN116912877B (en) | Method and system for monitoring space-time contact behavior sequence of urban public space crowd |

| Wang et al. | Research and implementation of the sports analysis system based on 3D image technology |

| CN115131407B (en) | Robot target tracking method, device and equipment oriented to digital simulation environment |

Legal Events

| Code | Title |

|---|---|

| PB01 | Publication |

| SE01 | Entry into force of request for substantive examination |

| GR01 | Patent grant |