CN107862678B - Fundus image non-reference quality evaluation method - Google Patents

Fundus image non-reference quality evaluation method Download PDFInfo

- Publication number

- CN107862678B CN107862678B CN201710976518.2A CN201710976518A CN107862678B CN 107862678 B CN107862678 B CN 107862678B CN 201710976518 A CN201710976518 A CN 201710976518A CN 107862678 B CN107862678 B CN 107862678B

- Authority

- CN

- China

- Prior art keywords

- feature vector

- fundus image

- denoted

- area

- vector

- Prior art date

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Active

Links

Images

Classifications

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T7/00—Image analysis

- G06T7/0002—Inspection of images, e.g. flaw detection

- G06T7/0012—Biomedical image inspection

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/21—Design or setup of recognition systems or techniques; Extraction of features in feature space; Blind source separation

- G06F18/214—Generating training patterns; Bootstrap methods, e.g. bagging or boosting

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06F—ELECTRIC DIGITAL DATA PROCESSING

- G06F18/00—Pattern recognition

- G06F18/20—Analysing

- G06F18/24—Classification techniques

- G06F18/241—Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches

- G06F18/2411—Classification techniques relating to the classification model, e.g. parametric or non-parametric approaches based on the proximity to a decision surface, e.g. support vector machines

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06V—IMAGE OR VIDEO RECOGNITION OR UNDERSTANDING

- G06V10/00—Arrangements for image or video recognition or understanding

- G06V10/40—Extraction of image or video features

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/20—Special algorithmic details

- G06T2207/20081—Training; Learning

-

- G—PHYSICS

- G06—COMPUTING OR CALCULATING; COUNTING

- G06T—IMAGE DATA PROCESSING OR GENERATION, IN GENERAL

- G06T2207/00—Indexing scheme for image analysis or image enhancement

- G06T2207/30—Subject of image; Context of image processing

- G06T2207/30004—Biomedical image processing

- G06T2207/30041—Eye; Retina; Ophthalmic

Landscapes

- Engineering & Computer Science (AREA)

- Theoretical Computer Science (AREA)

- Data Mining & Analysis (AREA)

- General Physics & Mathematics (AREA)

- Physics & Mathematics (AREA)

- Computer Vision & Pattern Recognition (AREA)

- General Engineering & Computer Science (AREA)

- Evolutionary Biology (AREA)

- Evolutionary Computation (AREA)

- Bioinformatics & Computational Biology (AREA)

- Bioinformatics & Cheminformatics (AREA)

- Artificial Intelligence (AREA)

- Life Sciences & Earth Sciences (AREA)

- Health & Medical Sciences (AREA)

- General Health & Medical Sciences (AREA)

- Medical Informatics (AREA)

- Nuclear Medicine, Radiotherapy & Molecular Imaging (AREA)

- Radiology & Medical Imaging (AREA)

- Quality & Reliability (AREA)

- Multimedia (AREA)

- Eye Examination Apparatus (AREA)

- Image Analysis (AREA)

Abstract

本发明公开了一种眼底图像无参考质量评价方法,其包括训练阶段和测试阶段两个过程;其考虑了亮度、自然度和结构布局对眼底图像质量的影响,提取出暗通道比重特征、亮通道比重特征、非均匀亮度特征、自然度质量评价分值和结构布局指标构成特征矢量,然后利用支持向量回归对训练图像集中的所有眼底图像的特征矢量进行训练,构造质量预测模型;在测试阶段,通过计算用作测试的眼底图像的特征矢量,并根据训练阶段构造的质量预测模型,预测得到该眼底图像的质量客观评价预测值,由于获得的特征矢量信息能够较好地反映眼底图像的质量变化情况,因此有效地提高了客观评价结果与主观感知之间的相关性。

The invention discloses a no-reference quality evaluation method for a fundus image, which includes two processes: a training phase and a testing phase; it takes into account the influence of brightness, naturalness and structural layout on the quality of the fundus image, extracts dark channel specific gravity features, bright The channel proportion feature, non-uniform brightness feature, naturalness quality evaluation score and structural layout index constitute the feature vector, and then use support vector regression to train the feature vector of all fundus images in the training image set to construct a quality prediction model; in the testing phase , by calculating the feature vector of the fundus image used as a test, and according to the quality prediction model constructed in the training stage, the objective quality evaluation prediction value of the fundus image is predicted, because the obtained feature vector information can better reflect the quality of the fundus image Therefore, the correlation between objective evaluation results and subjective perceptions is effectively improved.

Description

技术领域technical field

本发明涉及一种图像质量评价方法,尤其是涉及一种眼底图像无参考质量评价方法。The invention relates to an image quality evaluation method, in particular to a reference-free quality evaluation method of fundus images.

背景技术Background technique

眼底图像由专门的眼底相机拍摄获取,眼底图像包括视网膜中视盘、黄斑和血管等主要生理结构,是医学影像中一类重要的图像。其中,视盘在正常的眼底图像中表现为近似圆形的亮色区域,与背景区域的对比度最强,为视神经和血管的起始区域;黄斑由于其含有丰富的叶黄素,因此在眼底图像中表现为暗色区域,且该区域无血管结构,在黄斑的正中央有一个向内凹陷的区域称为中央凹;血管由视盘区域开始并延伸到整个眼球内部,呈现树状分布在整个眼底图像中,在视盘区域的血管最粗、密度最大,且基本沿垂直方向延伸。The fundus image is captured by a special fundus camera. The fundus image includes the main physiological structures such as the optic disc, macula, and blood vessels in the retina, and is an important type of image in medical imaging. Among them, the optic disc appears as an approximately circular bright color area in the normal fundus image, with the strongest contrast with the background area, which is the starting area of the optic nerve and blood vessels; the macula, because of its rich lutein, is in the fundus image. It appears as a dark area with no vascular structure, and there is an inwardly concave area in the center of the macula called the fovea; the blood vessels start from the optic disc area and extend to the inside of the entire eyeball, showing a tree-like distribution in the entire fundus image , the blood vessels in the optic disc region are the thickest and densest, and extend substantially in the vertical direction.

质量优的眼底图像能够帮助眼科医生诊断各种眼底疾病,也能帮助诊断与视网膜病变相关的全身性疾病。然而在成像过程中,往往会存在光照偏亮、光照偏暗、光照不均匀、模糊、对比度低及布局不合理等问题,导致所获取的眼底图像不能用于诊断而需要重新拍摄,大大降低了效率且增加了医疗诊断成本。因此,在拍摄眼底图像的同时自动评价图像质量并推荐是否需要重拍就变得至关重要。High-quality fundus images can help ophthalmologists diagnose various fundus diseases, as well as systemic diseases related to retinopathy. However, in the imaging process, there are often problems such as bright illumination, dark illumination, uneven illumination, blurring, low contrast and unreasonable layout, resulting in the acquired fundus images that cannot be used for diagnosis and need to be re-shot, which greatly reduces the efficiency and increase the cost of medical diagnosis. Therefore, it becomes crucial to automatically evaluate the image quality and recommend whether retakes are necessary while taking the fundus image.

发明内容SUMMARY OF THE INVENTION

本发明所要解决的技术问题是提供一种眼底图像无参考质量评价方法,其能够有效地提高客观评价结果与主观感知之间的相关性,利用其能够准确地自动评价眼底图像质量以确定是否需要重新拍摄眼底图像。The technical problem to be solved by the present invention is to provide a reference-free quality evaluation method for fundus images, which can effectively improve the correlation between objective evaluation results and subjective perception, and can accurately and automatically evaluate the quality of fundus images to determine whether the need for Retake the fundus image.

本发明解决上述技术问题所采用的技术方案为:一种眼底图像无参考质量评价方法,其特征在于包括训练阶段和测试阶段两个过程;The technical solution adopted by the present invention to solve the above-mentioned technical problems is: a method for evaluating the quality of fundus images without reference, which is characterized in that it includes two processes: a training phase and a testing phase;

所述的训练阶段过程的具体步骤为:The specific steps of the training phase process are:

①_1、选取N幅眼底图像构成训练图像集,记为{Ik|1≤k≤N};其中,N为正整数,N>1,k为正整数,1≤k≤N,Ik表示{Ik|1≤k≤N}中的第k幅眼底图像,{Ik|1≤k≤N}中的每幅眼底图像的宽度为W,且高度为H;①_1. Select N fundus images to form a training image set, denoted as {I k |1≤k≤N}; among them, N is a positive integer, N>1, k is a positive integer, 1≤k≤N, I k represents The k-th fundus image in {I k |1≤k≤N}, the width of each fundus image in {I k |1≤k≤N} is W, and the height is H;

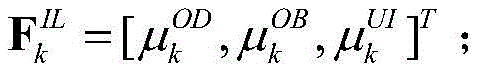

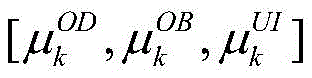

①_2、计算{Ik|1≤k≤N}中的每幅眼底图像的亮度特征矢量,将Ik的亮度特征矢量记为并计算{Ik|1≤k≤N}中的每幅眼底图像的自然度特征矢量,将Ik的自然度特征矢量记为计算{Ik|1≤k≤N}中的每幅眼底图像的结构布局特征矢量,将Ik的结构布局特征矢量记为其中,的维数为3×1,的维数为1×1,的维数为1×1;①_2. Calculate the luminance feature vector of each fundus image in {I k |1≤k≤N}, and denote the luminance feature vector of I k as and calculate the naturalness feature vector of each fundus image in {I k |1≤k≤N}, and denote the naturalness feature vector of I k as Calculate the structural layout feature vector of each fundus image in {I k |1≤k≤N}, and denote the structural layout feature vector of I k as in, The dimension of is 3 × 1, The dimension of is 1 × 1, The dimension is 1 × 1;

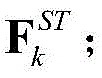

①_3、将{Ik|1≤k≤N}中的每幅眼底图像的亮度特征矢量、自然度特征矢量和结构布局特征矢量按序排列构成{Ik|1≤k≤N}中的每幅眼底图像的特征矢量,将Ik的特征矢量记为Fk,其中,Fk的维数为5×1,符号“[ ]”为矢量表示符号,表示将和连接起来形成一个特征矢量,为的转置;①_3. Arrange the luminance feature vector, naturalness feature vector and structural layout feature vector of each fundus image in {I k |1≤k≤N} in sequence to form each of {I k |1≤k≤N} is the feature vector of a fundus image, and the feature vector of I k is denoted as F k , Among them, the dimension of F k is 5 × 1, and the symbol "[ ]" is a vector representation symbol, means to and concatenated to form a feature vector, for transpose of ;

①_4、将{Ik|1≤k≤N}中的所有眼底图像各自的特征矢量和主观质量推荐值构成训练样本数据集合,训练样本数据集合中包含N个特征矢量和N个主观质量推荐值;然后采用支持向量回归作为机器学习的方法,对训练样本数据集合中的所有特征矢量进行训练,使得经过训练得到的回归函数值与主观质量推荐值之间的误差最小,拟合得到最优的权重矢量wopt和最优的偏置项bopt;接着利用最优的权重矢量wopt和最优的偏置项bopt,构造质量预测模型,记为f(F),其中,f( )为函数表示形式,F用于表示眼底图像的特征矢量,且作为质量预测模型的输入矢量,(wopt)T为wopt的转置,为F的线性函数;①_4. The respective feature vectors and subjective quality recommendation values of all fundus images in {I k |1≤k≤N} form a training sample data set, and the training sample data set contains N feature vectors and N subjective quality recommendation values. ; Then use support vector regression as a machine learning method to train all feature vectors in the training sample data set, so that the error between the regression function value obtained after training and the subjective quality recommendation value is the smallest, and the optimal fitting is obtained. The weight vector w opt and the optimal bias term b opt ; then use the optimal weight vector w opt and the optimal bias term b opt to construct a quality prediction model, denoted as f(F), Among them, f( ) is the function representation, F is used to represent the feature vector of the fundus image, and is used as the input vector of the quality prediction model, (w opt ) T is the transpose of w opt , is a linear function of F;

所述的测试阶段过程的具体步骤为:The specific steps of the test phase process are:

②对于任意一幅用作测试的眼底图像Itest,按照步骤①_2至步骤①_3的过程,以相同的操作,获取Itest的特征矢量,记为Ftest;然后根据训练阶段构造的质量预测模型对Ftest进行测试,预测得到Ftest对应的预测值,将该预测值作为Itest的质量客观评价预测值,记为Qtest,其中,Itest的宽度为W',且高度为H',Ftest的维数为5×1,为Ftest的线性函数。2. For any fundus image I test used as a test, follow the process of step ①_2 to step ①_3, with the same operation, obtain the feature vector of I test , and denote it as F test ; then according to the quality prediction model constructed in the training stage to F test is tested, and the predicted value corresponding to F test is predicted, and the predicted value is regarded as the quality objective evaluation predicted value of I test , which is denoted as Q test , Among them, the width of I test is W', and the height is H', and the dimension of F test is 5 × 1, is a linear function of F test .

所述的步骤①_2中的的获取过程为:In the mentioned steps ①_2 The acquisition process is:

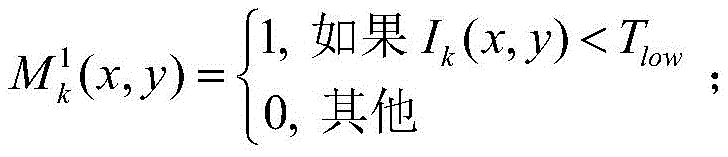

①_2a1、计算Ik的暗通道掩膜图像,记为将中坐标位置为(x,y)的像素点的像素值记为 其中,1≤x≤W,1≤y≤H,Ik(x,y)表示Ik中坐标位置为(x,y)的像素点的像素值,Tlow为暗通道阈值;①_2a1, calculate the dark channel mask image of I k , denoted as Will The pixel value of the pixel whose middle coordinate position is (x, y) is recorded as Among them, 1≤x≤W, 1≤y≤H, I k (x, y) represents the pixel value of the pixel point whose coordinate position is (x, y) in I k , and T low is the dark channel threshold;

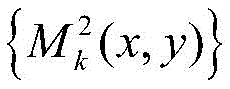

并计算Ik的亮通道掩膜图像,记为将中坐标位置为(x,y)的像素点的像素值记为 其中,Thigh为亮通道阈值;and calculate the bright channel mask image of I k , denoted as Will The pixel value of the pixel whose middle coordinate position is (x, y) is recorded as Among them, T high is the bright channel threshold;

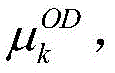

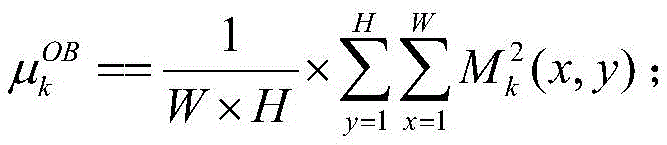

①_2b1、计算中的所有像素点的像素值的均值,作为Ik的暗通道比重特征,记为 ①_2b1, calculation The mean of the pixel values of all the pixels in , as the dark channel proportion feature of I k , is denoted as

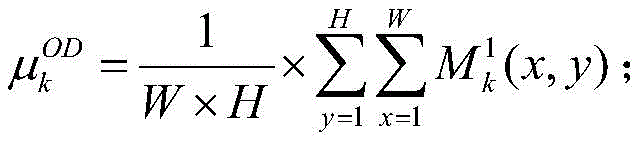

并计算中的所有像素点的像素值的均值,作为Ik的亮通道比重特征,记为 and calculate The mean of the pixel values of all the pixels in , as the bright channel proportion feature of I k , is denoted as

①_2c1、将Ik划分成多个尺寸大小为9×9像素且步长为1像素的相互重叠的子块;然后从Ik中的所有子块中随机选择M个子块;接着将选择的每个子块中的所有像素点的像素值组成列向量,将选择的第t个子块中的所有像素点的像素值组成的列向量记为yt;其中,M为正整数,1000≤M≤M*,M*表示Ik中包含的子块的总个数,t为正整数,1≤t≤M,yt的维数为81×1;①_2c1. Divide I k into multiple overlapping sub-blocks with a size of 9 × 9 pixels and a step size of 1 pixel; then randomly select M sub-blocks from all sub-blocks in I k ; The pixel values of all the pixels in the sub-blocks form a column vector, and the column vector composed of the pixel values of all the pixels in the selected t-th sub-block is denoted as y t ; wherein, M is a positive integer, 1000≤M≤M * , M * represents the total number of sub-blocks included in I k , t is a positive integer, 1≤t≤M, and the dimension of y t is 81×1;

①_2d1、计算Ik的非均匀亮度特征,记为 其中,μt表示yt中的所有元素的值的均值,也即表示选择的第t个子块中的所有像素点的像素值的均值,符号“|| ||”为求欧氏距离符号;①_2d1, calculate the non-uniform brightness feature of I k , denoted as Among them, μ t represents the mean value of the values of all elements in y t , that is, the mean value of the pixel values of all the pixels in the selected t-th sub-block, and the symbol “|| ||” is the Euclidean distance symbol;

①_2e1、将和按序排列构成的矢量作为Ik的亮度特征矢量 其中,的维数为3×1,符号“[ ]”为矢量表示符号,表示将和连接起来形成一个矢量,为的转置。①_2e1, will and The vector formed in order is used as the luminance feature vector of I k in, The dimension of is 3 × 1, the symbol "[ ]" is a vector representation symbol, means to and concatenated to form a vector, for transposition of .

所述的步骤①_2中的的获取过程为:In the mentioned steps ①_2 The acquisition process is:

①_2a2、选取N'幅主观质量推荐值为优的眼底图像构成训练集;然后采用自然图像质量预测器从训练集中提取出训练集的原始多元高斯模型,记为(μ,C);其中,N'为正整数,N'>1,μ表示(μ,C)的均值特征,C表示(μ,C)的协方差矩阵特征;①_2a2. Select N' fundus images with excellent subjective quality recommendation value to form the training set; then use the natural image quality predictor to extract the original multivariate Gaussian model of the training set from the training set, denoted as (μ, C); among them, N ' is a positive integer, N'>1, μ represents the mean feature of (μ, C), and C represents the covariance matrix feature of (μ, C);

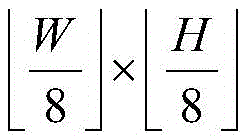

①_2b2、将Ik划分成M'个互不重叠的尺寸大小为64×64像素的子块;然后采用自然图像质量预测器从Ik中的每个子块中提取出Ik中的每个子块的原始多元高斯模型,将Ik中的第t'个子块的原始多元高斯模型记为(μt',Ct');其中,M'为正整数,符号为向下取整操作符号,t'为正整数,1≤t'≤M',μt'表示(μt',Ct')的均值特征,Ct'表示(μt',Ct')的协方差矩阵特征;①_2b2. Divide I k into M' non-overlapping sub-blocks with a size of 64×64 pixels; then use a natural image quality predictor to extract each sub-block in I k from each sub-block in I k The original multivariate Gaussian model of the symbol is the symbol of the round-down operation, t' is a positive integer, 1≤t'≤M', μ t' represents the mean feature of (μ t' ,C t' ), C t' represents (μ t' ,C t ' ) covariance matrix features;

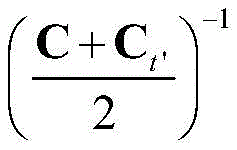

①_2c2、根据(μ,C)和Ik中的每个子块的原始多元高斯模型,计算Ik中的每个子块的自然度质量评价分值,将Ik中的第t'个子块的自然度质量评价分值记为qt',其中,(μ-μt')T为(μ-μt')的转置,为的逆;①_2c2. According to (μ, C) and the original multivariate Gaussian model of each sub-block in I k , calculate the naturalness quality evaluation score of each sub-block in I k , and calculate the naturalness of the t'th sub-block in I k The quality evaluation score is recorded as q t' , where (μ-μ t' ) T is the transpose of (μ-μ t' ), for the inverse of ;

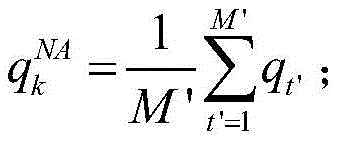

①_2d2、计算Ik的自然度质量评价分值,记为 然后直接将作为Ik的自然度特征矢量其中,的维数为1×1。①_2d2, calculate the naturalness quality evaluation score of I k , denoted as then directly Naturalness feature vector as I k in, The dimension is 1×1.

所述的步骤①_2中的的获取过程为:In the mentioned steps ①_2 The acquisition process is:

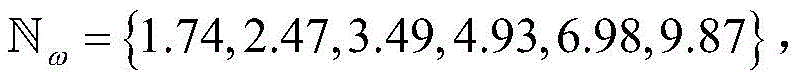

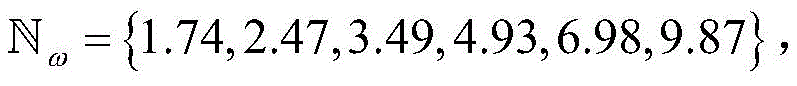

①_2a3、采用Log-Gabor滤波器对Ik进行滤波处理,得到Ik中的每个像素点在不同中心频率和不同方向因子下的频率响应,将Ik中坐标位置为(x,y)的像素点在中心频率为ω和方向因子为θ下的频率响应记为Gω,θ(x,y),Gω,θ(x,y)=eω,θ(x,y)+joω,θ(x,y);其中,1≤x≤W,1≤y≤H,ω表示Log-Gabor滤波器的中心频率, θ表示Log-Gabor滤波器的方向因子, eω,θ(x,y)为Gω,θ(x,y)的实部,oω,θ(x,y)为Gω,θ(x,y)的虚部,符号“j”为虚数表示符号;①-2a3, use Log-Gabor filter to filter I k , and obtain the frequency response of each pixel point in I k under different center frequencies and different direction factors, and set the coordinate position in I k as (x, y) The frequency response of a pixel at a center frequency of ω and a direction factor of θ is recorded as G ω, θ (x, y), G ω, θ (x, y) = e ω, θ (x, y) + jo ω ,θ (x,y); where 1≤x≤W, 1≤y≤H, ω represents the center frequency of the Log-Gabor filter, θ represents the direction factor of the Log-Gabor filter, e ω, θ (x, y) is the real part of G ω, θ (x, y), o ω, θ (x, y) is the imaginary part of G ω, θ (x, y), symbol "j" sign for imaginary numbers;

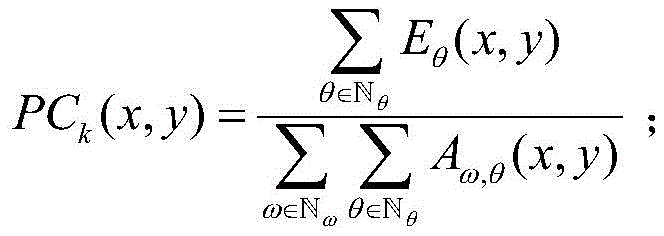

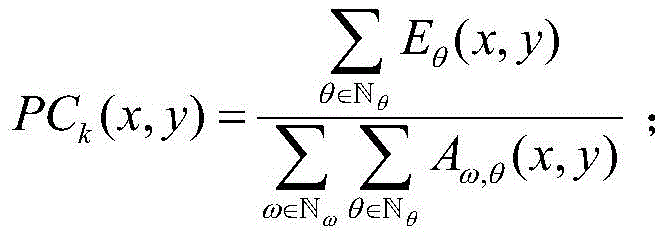

①_2b3、计算Ik的相位一致性图,记为{PCk(x,y)},将{PCk(x,y)}中坐标位置为(x,y)的像素点的像素值记为PCk(x,y),其中, ①_2b3. Calculate the phase consistency map of I k , denoted as {PC k (x, y)}, and denote the pixel value of the pixel point whose coordinate position is (x, y) in {PC k (x, y)} as PC k (x,y), in,

①_2c3、计算Ik的二值血管图,记为{Bk(x,y)},将{Bk(x,y)}中坐标位置为(x,y)的像素点的像素值记为Bk(x,y),其中,TPC为二值化阈值;①_2c3. Calculate the binary blood vessel map of I k , denoted as {B k (x, y)}, and denote the pixel value of the pixel point whose coordinate position is (x, y) in {B k (x, y)} as B k (x,y), Among them, T PC is the binarization threshold;

①_2d3、计算Ik的视盘中心位置,记为 其中,表示求取使得的值最小时的(x',y'),δ表示水平偏移位置,ε表示垂直偏移位置,表示Ik中以坐标位置(x',y')为中心、半径为100像素的圆形区域,Bk(x'+δ,y'+ε)表示{Bk(x,y)}中坐标位置为(x'+δ,y'+ε)的像素点的像素值;①_2d3, calculate the center position of the optic disc of I k , denoted as in, means to ask for (x', y') when the value of is the smallest, δ represents the horizontal offset position, ε represents the vertical offset position, Represents a circular area with a coordinate position (x', y') as the center and a radius of 100 pixels in I k , and B k (x'+δ, y'+ε) represents {B k (x, y)} in The pixel value of the pixel whose coordinate position is (x'+δ, y'+ε);

①_2e3、令Sk表示Ik的结构布局指标;然后判断是否落在规定的区域内,如果是,则令Sk=1,否则,令Sk=0;再直接将Sk作为Ik的结构布局特征矢量其中,的维数为1×1。①_2e3, let S k represent the structural layout index of I k ; then judge Whether it falls in the specified area If yes, let Sk = 1, otherwise, let Sk = 0; then directly use Sk as the structural layout feature vector of I k in, The dimension is 1×1.

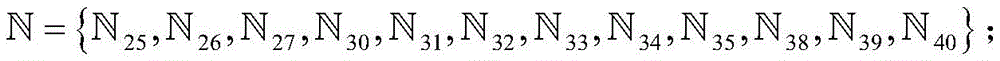

所述的步骤①_2e3中,规定的区域定义为:将Ik划分成8×8个互不重叠的尺寸大小为像素的区域,将Ik中的第p个区域记为然后水平扫描Ik,找出Ik中的第25个区域第26个区域第27个区域第30个区域第31个区域第32个区域第33个区域第34个区域第35个区域第38个区域第39个区域第40个区域再将找出的所有区域构成的集合作为规定的区域 其中,符号为向下取整操作符号,p为正整数,1≤p≤64。In the step ①_2e3 described, the specified area Defined as: dividing I k into 8 × 8 non-overlapping sizes as The area of pixels, denote the p-th area in I k as Then scan I k horizontally to find the 25th region in I k 26th area 27th area 30th area 31st area 32nd area 33rd area 34th area 35th area 38th area 39th area 40th area Then take the set of all the found areas as the specified area Among them, the symbol In order to round down the operation symbol, p is a positive integer, 1≤p≤64.

与现有技术相比,本发明的优点在于:Compared with the prior art, the advantages of the present invention are:

本发明方法考虑了亮度、自然度和结构布局对眼底图像质量的影响,提取出暗通道比重特征、亮通道比重特征、非均匀亮度特征、自然度质量评价分值和结构布局指标构成特征矢量,然后利用支持向量回归对训练图像集中的所有眼底图像的特征矢量进行训练,构造质量预测模型;在测试阶段,通过计算用作测试的眼底图像的特征矢量,并根据训练阶段构造的质量预测模型,预测得到该眼底图像的质量客观评价预测值,由于获得的特征矢量信息能够较好地反映眼底图像的质量变化情况,因此有效地提高了客观评价结果与主观感知之间的相关性。The method of the invention takes into account the influence of brightness, naturalness and structural layout on the quality of the fundus image, and extracts the dark channel proportion feature, the bright channel proportion feature, the non-uniform brightness feature, the naturalness quality evaluation score and the structural layout index to form a feature vector, Then use support vector regression to train the feature vectors of all fundus images in the training image set to construct a quality prediction model; in the testing phase, by calculating the feature vectors of the fundus images used for testing, and according to the quality prediction model constructed in the training phase, The predicted value of the objective evaluation of the quality of the fundus image is obtained by predicting, because the obtained feature vector information can better reflect the quality change of the fundus image, thus effectively improving the correlation between the objective evaluation result and the subjective perception.

附图说明Description of drawings

图1为本发明方法的总体实现框图。FIG. 1 is a block diagram of the overall implementation of the method of the present invention.

具体实施方式Detailed ways

以下结合附图实施例对本发明作进一步详细描述。The present invention will be further described in detail below with reference to the embodiments of the accompanying drawings.

本发明提出的一种眼底图像无参考质量评价方法,其总体实现框图如图1所示,其包括训练阶段和测试阶段两个过程。The overall implementation block diagram of a fundus image quality evaluation method without reference proposed by the present invention is shown in FIG. 1 , which includes two processes: a training phase and a testing phase.

所述的训练阶段过程的具体步骤为:The specific steps of the training phase process are:

①_1、选取N幅眼底图像构成训练图像集,记为{Ik|1≤k≤N};其中,N为正整数,N>1,如取N=1000,k为正整数,1≤k≤N,Ik表示{Ik|1≤k≤N}中的第k幅眼底图像,{Ik|1≤k≤N}中的每幅眼底图像的宽度为W,且高度为H。①_1. Select N fundus images to form a training image set, denoted as {I k |1≤k≤N}; among them, N is a positive integer, N>1, if N=1000, k is a positive integer, 1≤k ≤N, I k represents the k-th fundus image in {I k |1≤k≤N}, and each fundus image in {I k |1≤k≤N} has a width of W and a height of H.

在本实施例中,随机选择宁波大学建立的眼底图像数据库中的一部分眼底图像构成训练图像集。In this embodiment, a part of the fundus images in the fundus image database established by Ningbo University is randomly selected to constitute the training image set.

①_2、计算{Ik|1≤k≤N}中的每幅眼底图像的亮度特征矢量,将Ik的亮度特征矢量记为并计算{Ik|1≤k≤N}中的每幅眼底图像的自然度特征矢量,将Ik的自然度特征矢量记为计算{Ik|1≤k≤N}中的每幅眼底图像的结构布局特征矢量,将Ik的结构布局特征矢量记为其中,的维数为3×1,的维数为1×1,的维数为1×1。①_2. Calculate the luminance feature vector of each fundus image in {I k |1≤k≤N}, and denote the luminance feature vector of I k as and calculate the naturalness feature vector of each fundus image in {I k |1≤k≤N}, and denote the naturalness feature vector of I k as Calculate the structural layout feature vector of each fundus image in {I k |1≤k≤N}, and denote the structural layout feature vector of I k as in, The dimension of is 3 × 1, The dimension of is 1 × 1, The dimension is 1×1.

在本实施例中,步骤①_2中的的获取过程为:In this embodiment, in steps ①_2 The acquisition process is:

①_2a1、计算Ik的暗通道掩膜图像,记为将中坐标位置为(x,y)的像素点的像素值记为 其中,1≤x≤W,1≤y≤H,Ik(x,y)表示Ik中坐标位置为(x,y)的像素点的像素值,Tlow为暗通道阈值,在本实施例中取Tlow=50。①_2a1, calculate the dark channel mask image of I k , denoted as Will The pixel value of the pixel whose middle coordinate position is (x, y) is recorded as Among them, 1≤x≤W, 1≤y≤H, I k (x, y) represents the pixel value of the pixel point whose coordinate position is (x, y) in I k , T low is the dark channel threshold, in this implementation Take T low =50 in the example.

并计算Ik的亮通道掩膜图像,记为将中坐标位置为(x,y)的像素点的像素值记为 其中,Thigh为亮通道阈值,在本实施例中取Thigh=240。and calculate the bright channel mask image of I k , denoted as Will The pixel value of the pixel whose middle coordinate position is (x, y) is recorded as Among them, T high is the threshold value of the bright channel, and in this embodiment, T high =240 is taken.

①_2b1、计算中的所有像素点的像素值的均值,作为Ik的暗通道比重特征,记为 ①_2b1, calculation The mean of the pixel values of all the pixels in , as the dark channel proportion feature of I k , is denoted as

并计算中的所有像素点的像素值的均值,作为Ik的亮通道比重特征,记为 and calculate The mean of the pixel values of all the pixels in , as the bright channel proportion feature of I k , is denoted as

①_2c1、将Ik划分成多个尺寸大小为9×9像素且步长为1像素的相互重叠的子块;然后从Ik中的所有子块中随机选择M个子块;接着将选择的每个子块中的所有像素点的像素值组成列向量,将选择的第t个子块中的所有像素点的像素值组成的列向量记为yt;其中,M为正整数,1000≤M≤M*,M*表示Ik中包含的子块的总个数,在本实施例中取M=1000,t为正整数,1≤t≤M,yt的维数为81×1。①_2c1. Divide I k into multiple overlapping sub-blocks with a size of 9 × 9 pixels and a step size of 1 pixel; then randomly select M sub-blocks from all sub-blocks in I k ; The pixel values of all the pixels in the sub-blocks form a column vector, and the column vector composed of the pixel values of all the pixels in the selected t-th sub-block is denoted as y t ; wherein, M is a positive integer, 1000≤M≤M * , M * represents the total number of sub-blocks included in I k , in this embodiment, M=1000, t is a positive integer, 1≤t≤M, and the dimension of y t is 81×1.

①_2d1、计算Ik的非均匀亮度特征,记为 其中,μt表示yt中的所有元素的值的均值,也即表示选择的第t个子块中的所有像素点的像素值的均值,符号“|| ||”为求欧氏距离符号。①_2d1, calculate the non-uniform brightness feature of I k , denoted as Among them, μ t represents the mean of the values of all elements in y t , that is, the mean of the pixel values of all the pixels in the selected t-th sub-block, and the symbol “|| ||” is the Euclidean distance symbol.

①_2e1、将和按序排列构成的矢量作为Ik的亮度特征矢量 其中,的维数为3×1,符号“[ ]”为矢量表示符号,表示将和连接起来形成一个矢量,为的转置。①_2e1, will and The vector formed in order is used as the luminance feature vector of I k in, The dimension of is 3 × 1, the symbol "[ ]" is a vector representation symbol, means to and concatenated to form a vector, for transposition of .

在本实施例中,步骤①_2中的的获取过程为:In this embodiment, in steps ①_2 The acquisition process is:

①_2a2、选取N'幅主观质量推荐值为优的眼底图像构成训练集;然后采用现有的自然图像质量预测器(Natural Image Quality Evaluator,NIQE)从训练集中提取出训练集的原始多元高斯(Pristine Multivariate Gaussian,MVG)模型,记为(μ,C);其中,N'为正整数,N'>1,在本实施例中取N'=100,μ表示(μ,C)的均值特征,C表示(μ,C)的协方差矩阵特征。①_2a2. Select N' fundus images with excellent subjective quality recommendation value to form the training set; then use the existing Natural Image Quality Evaluator (NIQE) to extract the original multivariate Gaussian (Pristine Gaussian) of the training set from the training set Multivariate Gaussian, MVG) model, denoted as (μ, C); among them, N' is a positive integer, N'>1, in this embodiment, N'=100, μ represents the mean feature of (μ, C), C represents the covariance matrix feature of (μ, C).

①_2b2、将Ik划分成M'个互不重叠的尺寸大小为64×64像素的子块;然后采用现有的自然图像质量预测器(Natural Image Quality Evaluator,NIQE)从Ik中的每个子块中提取出Ik中的每个子块的原始多元高斯模型,将Ik中的第t'个子块的原始多元高斯模型记为(μt',Ct');其中,M'为正整数,符号为向下取整操作符号,t'为正整数,1≤t'≤M',μt'表示(μt',Ct')的均值特征,Ct'表示(μt',Ct')的协方差矩阵特征。 ①_2b2 . Divide I k into M' non-overlapping sub-blocks with a size of 64 × 64 pixels; The original multivariate Gaussian model of each sub-block in Ik is extracted from the block, and the original multivariate Gaussian model of the t'th sub-block in Ik is recorded as (μ t' ,C t' ); where, M' is positive integer, symbol is the symbol of the round-down operation, t' is a positive integer, 1≤t'≤M', μ t' represents the mean feature of (μ t' ,C t' ), C t' represents (μ t' ,C t ' ) of the covariance matrix feature.

①_2c2、根据(μ,C)和Ik中的每个子块的原始多元高斯模型,计算Ik中的每个子块的自然度质量评价分值,将Ik中的第t'个子块的自然度质量评价分值记为qt',其中,(μ-μt')T为(μ-μt')的转置,为的逆。①_2c2. According to (μ, C) and the original multivariate Gaussian model of each sub-block in I k , calculate the naturalness quality evaluation score of each sub-block in I k , and calculate the naturalness of the t'th sub-block in I k The quality evaluation score is recorded as q t' , where (μ-μ t' ) T is the transpose of (μ-μ t' ), for inverse of .

①_2d2、计算Ik的自然度质量评价分值,记为 然后直接将作为Ik的自然度特征矢量其中,的维数为1×1。①_2d2, calculate the naturalness quality evaluation score of I k , denoted as then directly Naturalness feature vector as I k in, The dimension is 1×1.

在本实施例中,步骤①_2中的的获取过程为:In this embodiment, in steps ①_2 The acquisition process is:

①_2a3、采用Log-Gabor滤波器对Ik进行滤波处理,得到Ik中的每个像素点在不同中心频率和不同方向因子下的频率响应,将Ik中坐标位置为(x,y)的像素点在中心频率为ω和方向因子为θ下的频率响应记为Gω,θ(x,y),Gω,θ(x,y)=eω,θ(x,y)+joω,θ(x,y);其中,1≤x≤W,1≤y≤H,ω表示Log-Gabor滤波器的中心频率, θ表示Log-Gabor滤波器的方向因子, eω,θ(x,y)为Gω,θ(x,y)的实部,oω,θ(x,y)为Gω,θ(x,y)的虚部,符号“j”为虚数表示符号。①-2a3, use Log-Gabor filter to filter I k , and obtain the frequency response of each pixel point in I k under different center frequencies and different direction factors, and set the coordinate position in I k as (x, y) The frequency response of a pixel at a center frequency of ω and a direction factor of θ is recorded as G ω, θ (x, y), G ω, θ (x, y) = e ω, θ (x, y) + jo ω ,θ (x,y); where 1≤x≤W, 1≤y≤H, ω represents the center frequency of the Log-Gabor filter, θ represents the direction factor of the Log-Gabor filter, e ω, θ (x, y) is the real part of G ω, θ (x, y), o ω, θ (x, y) is the imaginary part of G ω, θ (x, y), symbol "j" Signs for imaginary numbers.

①_2b3、计算Ik的相位一致性图,记为{PCk(x,y)},将{PCk(x,y)}中坐标位置为(x,y)的像素点的像素值记为PCk(x,y),其中, ①_2b3. Calculate the phase consistency map of I k , denoted as {PC k (x, y)}, and denote the pixel value of the pixel point whose coordinate position is (x, y) in {PC k (x, y)} as PC k (x,y), in,

①_2c3、计算Ik的二值血管图,记为{Bk(x,y)},将{Bk(x,y)}中坐标位置为(x,y)的像素点的像素值记为Bk(x,y),其中,TPC为二值化阈值,在本实施例中取TPC=0.45。①_2c3. Calculate the binary blood vessel map of I k , denoted as {B k (x, y)}, and denote the pixel value of the pixel point whose coordinate position is (x, y) in {B k (x, y)} as B k (x,y), Wherein, T PC is a binarization threshold, and in this embodiment, T PC =0.45.

①_2d3、计算Ik的视盘中心位置,记为 其中,表示求取使得的值最小时的(x',y'),δ表示水平偏移位置,ε表示垂直偏移位置,表示Ik中以坐标位置(x',y')为中心、半径为100像素的圆形区域,Bk(x'+δ,y'+ε)表示{Bk(x,y)}中坐标位置为(x'+δ,y'+ε)的像素点的像素值。①_2d3, calculate the center position of the optic disc of I k , denoted as in, means to ask for (x', y') when the value of is the smallest, δ represents the horizontal offset position, ε represents the vertical offset position, Represents a circular area with a coordinate position (x', y') as the center and a radius of 100 pixels in I k , and B k (x'+δ, y'+ε) represents {B k (x, y)} in The pixel value of the pixel whose coordinate position is (x'+δ, y'+ε).

①_2e3、令Sk表示Ik的结构布局指标;然后判断是否落在规定的区域内,如果是,则令Sk=1,否则,令Sk=0;再直接将Sk作为Ik的结构布局特征矢量其中,的维数为1×1。①_2e3, let S k represent the structural layout index of I k ; then judge Whether it falls in the specified area If yes, let Sk = 1, otherwise, let Sk = 0; then directly use Sk as the structural layout feature vector of I k in, The dimension is 1×1.

在本实施例中,步骤①_2e3中,规定的区域定义为:将Ik划分成8×8个互不重叠的尺寸大小为像素的区域,将Ik中的第p个区域记为然后水平扫描Ik,找出Ik中的第25个区域第26个区域第27个区域第30个区域第31个区域第32个区域第33个区域第34个区域第35个区域第38个区域第39个区域第40个区域再将找出的所有区域构成的集合作为规定的区域 其中,符号为向下取整操作符号,p为正整数,1≤p≤64。In this embodiment, in steps ①_2e3, the specified area is Defined as: dividing I k into 8 × 8 non-overlapping sizes as The area of pixels, denote the p-th area in I k as Then scan I k horizontally to find the 25th region in I k 26th area 27th area 30th area 31st area 32nd area 33rd area 34th area 35th area 38th area 39th area 40th area Then take the set of all the found areas as the specified area Among them, the symbol In order to round down the operation symbol, p is a positive integer, 1≤p≤64.

①_3、将{Ik|1≤k≤N}中的每幅眼底图像的亮度特征矢量、自然度特征矢量和结构布局特征矢量按序排列构成{Ik|1≤k≤N}中的每幅眼底图像的特征矢量,将Ik的特征矢量记为Fk,其中,Fk的维数为5×1,符号“[ ]”为矢量表示符号,表示将和连接起来形成一个特征矢量,为的转置。①_3. Arrange the luminance feature vector, naturalness feature vector and structural layout feature vector of each fundus image in {I k |1≤k≤N} in sequence to form each of {I k |1≤k≤N} is the feature vector of a fundus image, and the feature vector of I k is denoted as F k , Among them, the dimension of F k is 5 × 1, and the symbol "[ ]" is a vector representation symbol, means to and concatenated to form a feature vector, for transposition of .

①_4、将{Ik|1≤k≤N}中的所有眼底图像各自的特征矢量和主观质量推荐值构成训练样本数据集合,训练样本数据集合中包含N个特征矢量和N个主观质量推荐值;然后采用支持向量回归作为机器学习的方法,对训练样本数据集合中的所有特征矢量进行训练,使得经过训练得到的回归函数值与主观质量推荐值之间的误差最小,拟合得到最优的权重矢量wopt和最优的偏置项bopt;接着利用最优的权重矢量wopt和最优的偏置项bopt,构造质量预测模型,记为f(F),其中,f( )为函数表示形式,F用于表示眼底图像的特征矢量,且作为质量预测模型的输入矢量,(wopt)T为wopt的转置,为F的线性函数。①_4. The respective feature vectors and subjective quality recommendation values of all fundus images in {I k |1≤k≤N} form a training sample data set, and the training sample data set contains N feature vectors and N subjective quality recommendation values. ; Then use support vector regression as a machine learning method to train all feature vectors in the training sample data set, so that the error between the regression function value obtained after training and the subjective quality recommendation value is the smallest, and the optimal fitting is obtained. The weight vector w opt and the optimal bias term b opt ; then use the optimal weight vector w opt and the optimal bias term b opt to construct a quality prediction model, denoted as f(F), Among them, f( ) is the function representation, F is used to represent the feature vector of the fundus image, and is used as the input vector of the quality prediction model, (w opt ) T is the transpose of w opt , is a linear function of F.

所述的测试阶段过程的具体步骤为:The specific steps of the test phase process are:

②对于任意一幅用作测试的眼底图像Itest,按照步骤①_2至步骤①_3的过程,以相同的操作,获取Itest的特征矢量,记为Ftest;然后根据训练阶段构造的质量预测模型对Ftest进行测试,预测得到Ftest对应的预测值,将该预测值作为Itest的质量客观评价预测值,记为Qtest,其中,Itest的宽度为W',且高度为H',W'可与W相同或不相同,H'可与H相同或不相同,Ftest的维数为5×1,为Ftest的线性函数。2. For any fundus image I test used as a test, follow the process of step ①_2 to step ①_3, with the same operation, obtain the feature vector of I test , and denote it as F test ; then according to the quality prediction model constructed in the training stage to F test is tested, and the predicted value corresponding to F test is predicted, and the predicted value is regarded as the quality objective evaluation predicted value of I test , which is denoted as Q test , Among them, the width of I test is W', and the height is H', W' can be the same or different from W, H' can be the same or different from H, and the dimension of F test is 5 × 1, is a linear function of F test .

为进一步说明本发明方法的可行性和有效性,对本发明方法进行试验。In order to further illustrate the feasibility and effectiveness of the method of the present invention, the method of the present invention is tested.

在本实施例中,采用宁波大学建立的眼底图像数据库作为图像数据库,宁波大学建立的眼底图像数据库包括1000幅眼底图像,每幅眼底图像指定一个值为1或0的主观质量推荐值,1表示推荐质量为优,0表示推荐质量为劣。本发明利用评估分类质量的4个常用指标,即灵敏性(Sensitivity)、特异性(Specificity)、准确性(Accuracy)、ROC曲线下的面积(AUC),如果灵敏性、特异性、准确性和ROC曲线下的面积越接近100%,则说明本发明方法的客观评价结果与主观质量推荐值之间的相关性越好。表1给出了本发明方法得到的质量客观评价预测值与主观质量推荐值之间的相关性,从表1中可以看出,即使采用不同比例的眼底图像构成训练图像集,采用本发明方法得到的眼底图像的质量客观评价预测值与主观质量推荐值之间的相关性是很高的,足以说明本发明方法的有效性。In this embodiment, the fundus image database established by Ningbo University is used as the image database. The fundus image database established by Ningbo University includes 1000 fundus images, and each fundus image is assigned a subjective quality recommendation value of 1 or 0, where 1 represents The recommendation quality is excellent, and 0 means the recommendation quality is poor. The present invention utilizes 4 commonly used indicators to assess the quality of classification, namely sensitivity (Sensitivity), specificity (Specificity), accuracy (Accuracy), area under the ROC curve (AUC). The closer the area under the ROC curve is to 100%, the better the correlation between the objective evaluation result of the method of the present invention and the subjective quality recommendation value. Table 1 shows the correlation between the quality objective evaluation prediction value obtained by the method of the present invention and the subjective quality recommendation value. As can be seen from Table 1, even if the fundus images of different proportions are used to form the training image set, the method of the present invention is used to form the training image set. The correlation between the objective evaluation prediction value of the obtained fundus image quality and the subjective quality recommendation value is very high, which is sufficient to demonstrate the effectiveness of the method of the present invention.

表1采用本发明方法得到的质量客观评价预测值与主观质量推荐值之间的相关性Table 1 The correlation between the objective quality evaluation prediction value obtained by the method of the present invention and the subjective quality recommendation value

Claims (4)

Priority Applications (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201710976518.2A CN107862678B (en) | 2017-10-19 | 2017-10-19 | Fundus image non-reference quality evaluation method |

Applications Claiming Priority (1)

| Application Number | Priority Date | Filing Date | Title |

|---|---|---|---|

| CN201710976518.2A CN107862678B (en) | 2017-10-19 | 2017-10-19 | Fundus image non-reference quality evaluation method |

Publications (2)

| Publication Number | Publication Date |

|---|---|

| CN107862678A CN107862678A (en) | 2018-03-30 |

| CN107862678B true CN107862678B (en) | 2020-03-17 |

Family

ID=61697260

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |

|---|---|---|---|

| CN201710976518.2A Active CN107862678B (en) | 2017-10-19 | 2017-10-19 | Fundus image non-reference quality evaluation method |

Country Status (1)

| Country | Link |

|---|---|

| CN (1) | CN107862678B (en) |

Families Citing this family (14)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| CN108510446B (en) * | 2018-04-10 | 2023-03-14 | 四川和生视界医药技术开发有限公司 | Method and device for superimposing retinal images |

| CN110648303B (en) * | 2018-06-08 | 2022-07-26 | 上海市第六人民医院 | Fundus image analysis method, computer device, and storage medium |

| CN109273072A (en) * | 2018-08-23 | 2019-01-25 | 北京极视互联科技有限公司 | A kind of medical method of tele-medicine |

| CN109377472B (en) * | 2018-09-12 | 2021-08-03 | 宁波大学 | A method for evaluating the quality of fundus images |

| CN109522819B (en) * | 2018-10-29 | 2020-08-18 | 西安交通大学 | A fire image recognition method based on deep learning |

| CN114757893A (en) * | 2018-10-29 | 2022-07-15 | 上海鹰瞳医疗科技有限公司 | Fundus image normalization method and equipment |

| CN110021009B (en) * | 2019-01-18 | 2023-07-21 | 平安科技(深圳)有限公司 | Method, device and storage medium for evaluating fundus image quality |

| CN110009616B (en) * | 2019-04-01 | 2020-12-25 | 数坤(北京)网络科技有限公司 | Punctate calcification detection method |

| CN110516579B (en) * | 2019-08-21 | 2021-09-07 | 北京至真互联网技术有限公司 | Handheld fundus camera photographing method and device, equipment and storage medium |

| CN111681207B (en) * | 2020-05-09 | 2023-10-27 | 四维高景卫星遥感有限公司 | Remote sensing image fusion quality evaluation method |

| CN112770105B (en) * | 2020-12-07 | 2022-06-03 | 宁波大学 | Repositioning stereo image quality evaluation method based on structural features |

| CN113099215B (en) * | 2021-03-19 | 2022-06-21 | 宁波大学 | Cartoon image quality evaluation method |

| CN114882014B (en) * | 2022-06-16 | 2023-02-03 | 深圳大学 | Fundus image quality evaluation method, device and related medium based on dual model |

| CN117877692B (en) * | 2024-01-02 | 2024-08-02 | 珠海全一科技有限公司 | Personalized difference analysis method for retinopathy |

Citations (3)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| US6847733B2 (en) * | 2001-05-23 | 2005-01-25 | Eastman Kodak Company | Retrieval and browsing of database images based on image emphasis and appeal |

| CN104135656A (en) * | 2014-07-11 | 2014-11-05 | 华南理工大学 | Method and device for detecting autostereoscopic display quality of mobile terminal |

| CN104361593A (en) * | 2014-11-14 | 2015-02-18 | 南京大学 | Color image quality evaluation method based on HVSs and quaternions |

-

2017

- 2017-10-19 CN CN201710976518.2A patent/CN107862678B/en active Active

Patent Citations (3)

| Publication number | Priority date | Publication date | Assignee | Title |

|---|---|---|---|---|

| US6847733B2 (en) * | 2001-05-23 | 2005-01-25 | Eastman Kodak Company | Retrieval and browsing of database images based on image emphasis and appeal |

| CN104135656A (en) * | 2014-07-11 | 2014-11-05 | 华南理工大学 | Method and device for detecting autostereoscopic display quality of mobile terminal |

| CN104361593A (en) * | 2014-11-14 | 2015-02-18 | 南京大学 | Color image quality evaluation method based on HVSs and quaternions |

Non-Patent Citations (3)

| Title |

|---|

| Individual Differences in Image-Quality Estimations: Estimation Rules and Viewing Strategies;JENNI RADUN 等;《ACM Transactions on Applied Perceptions》;20160531;第13卷(第3期);第141-160页 * |

| Structural Fidelity vs. Naturalness - Objective Assessment of Tone Mapped Images;Hojatollah Yeganeh 等;《International Conference on Image Analysis and Recognition (ICIAR11)》;20110630;正文第1-10页 * |

| 一种基于人眼视觉特性的视频质量评价算法;朱宏 等;《计算机辅助设计与图形学学报》;20140531;第26卷(第5期);第776-781页 * |

Also Published As

| Publication number | Publication date |

|---|---|

| CN107862678A (en) | 2018-03-30 |

Similar Documents

| Publication | Publication Date | Title |

|---|---|---|

| CN107862678B (en) | Fundus image non-reference quality evaluation method | |

| Mvoulana et al. | Fully automated method for glaucoma screening using robust optic nerve head detection and unsupervised segmentation based cup-to-disc ratio computation in retinal fundus images | |

| Da Rocha et al. | Diabetic retinopathy classification using VGG16 neural network | |

| CN109345469B (en) | A Speckle Denoising Method in OCT Imaging Based on Conditional Generative Adversarial Networks | |

| Sivaswamy et al. | Drishti-gs: Retinal image dataset for optic nerve head (onh) segmentation | |

| CN111292338B (en) | A method and system for segmenting choroidal neovascularization from fundus OCT images | |

| CN112465772B (en) | Fundus colour photographic image blood vessel evaluation method, device, computer equipment and medium | |

| CN113284101A (en) | Methods for modifying retinal fundus images for use in deep learning models | |

| CN109671049B (en) | A medical image processing method, system, equipment and storage medium | |

| Mrad et al. | A fast and accurate method for glaucoma screening from smartphone-captured fundus images | |

| CN113768461B (en) | Fundus image analysis method, fundus image analysis system and electronic equipment | |

| CN104102899B (en) | Retinal vessel recognition methods and device | |

| CN113610842B (en) | OCT image retina detachment and splitting automatic segmentation method based on CAS-Net | |

| US12109025B2 (en) | System and method for detecting a health condition using eye images | |

| CN109377472B (en) | A method for evaluating the quality of fundus images | |

| CN120937057A (en) | Retinal image segmentation via semi-supervised learning | |

| CN117036286A (en) | A method for effusion segmentation in OCT images based on point label learning | |

| Belghith et al. | A unified framework for glaucoma progression detection using Heidelberg Retina Tomograph images | |

| Chen et al. | Automated image quality assessment for anterior segment optical coherence tomograph | |

| Hu et al. | Multi-image stitching for smartphone-based retinal fundus stitching | |

| Magister et al. | Generative image inpainting for retinal images using generative adversarial networks | |

| CN111402246A (en) | A method of fundus image classification based on joint network | |

| Suwandoko et al. | An optimized segmentation of optic disc and optic cup in retinal fundus images based on multimap localization and conventional U-Net | |

| CN110415231A (en) | A CNV Segmentation Method Based on Attention Prior Network | |

| CN111291706B (en) | A retinal image optic disc positioning method |

Legal Events

| Date | Code | Title | Description |

|---|---|---|---|

| PB01 | Publication | ||

| PB01 | Publication | ||

| SE01 | Entry into force of request for substantive examination | ||

| SE01 | Entry into force of request for substantive examination | ||

| GR01 | Patent grant | ||

| GR01 | Patent grant | ||

| TR01 | Transfer of patent right | ||

| TR01 | Transfer of patent right |

Effective date of registration: 20230315 Address after: Room 2202, 22 / F, Wantong building, No. 3002, Sungang East Road, Sungang street, Luohu District, Shenzhen City, Guangdong Province Patentee after: Shenzhen dragon totem technology achievement transformation Co.,Ltd. Address before: 315211, Fenghua Road, Jiangbei District, Zhejiang, Ningbo 818 Patentee before: Ningbo University Effective date of registration: 20230315 Address after: 200120 3rd floor, building 2, No.200, zhangheng Road, China (Shanghai) pilot Free Trade Zone, Pudong New Area, Shanghai Patentee after: Shanghai Shiquan Shimei Technology Development Co.,Ltd. Address before: Room 2202, 22 / F, Wantong building, No. 3002, Sungang East Road, Sungang street, Luohu District, Shenzhen City, Guangdong Province Patentee before: Shenzhen dragon totem technology achievement transformation Co.,Ltd. |