CN101455084A - Method and apparatus for decoding/encoding video signal - Google Patents

Method and apparatus for decoding/encoding video signal

- Publication number

- CN101455084A, CN200780019504A

- Authority

- CN

- China

- Prior art keywords

- view

- picture

- prediction

- information

- inter

- Legal status (The legal status is an assumption and is not a legal conclusion. Google has not performed a legal analysis and makes no representation as to the accuracy of the status listed.)

- Pending

Landscapes

- Compression Or Coding Systems Of Tv Signals (AREA)

Description

Technical field

The present invention relates to a method and an apparatus for decoding/encoding a video signal.

Background art

Compression coding refers to a series of signal processing techniques for transmitting digitized information over a communication circuit or storing it in a form suitable for a storage medium. Objects of compression coding include audio, video, text, and the like. In particular, the technique of performing compression coding on video sequences is called video sequence compression. Video sequences are generally characterized by spatial redundancy and temporal redundancy.

Summary of the invention

Technical objective

The object of the present invention is to improve the coding efficiency of a video signal.

Technical solution

One object of the present invention is to code a video signal efficiently by defining view information for identifying the view of a picture.

Another object of the present invention is to improve coding efficiency by coding a video signal based on inter-view reference information.

Another object of the present invention is to provide view scalability of a video signal by defining view level information.

Another object of the present invention is to code a video signal efficiently by defining an inter-view synthesis prediction identifier indicating whether a picture of a virtual view is to be obtained.

Advantageous effects

In coding a video signal, the present invention enables coding to be performed more efficiently by carrying out inter-view prediction using view information for identifying the view of a picture. By newly defining level information that describes a hierarchical structure for view scalability, the present invention can provide a view sequence suited to the user. By defining the view corresponding to the lowest level as a reference view, the present invention provides compatibility with conventional decoders. In addition, the present invention improves coding efficiency by deciding, while performing inter-view prediction, whether to predict a picture of a virtual view. When a picture of a virtual view is predicted, the present invention enables more accurate prediction and thereby reduces the number of bits to be transmitted.

Brief description of the drawings

FIG. 1 is a schematic block diagram of an apparatus for decoding a video signal according to the present invention.

FIG. 2 is a diagram of configuration information on multi-view video that can be added to a multi-view video coded bitstream according to an embodiment of the present invention.

FIG. 3 is an internal block diagram of the reference picture list construction unit 620 according to an embodiment of the present invention.

FIG. 4 is a diagram of a hierarchical structure of level information for providing view scalability of a video signal according to an embodiment of the present invention.

FIG. 5 is a diagram of a NAL unit configuration that includes level information in an extension area of the NAL header according to one embodiment of the present invention.

FIG. 6 is a diagram of the overall prediction structure of a multi-view video signal according to an embodiment of the present invention, used to explain the concept of an inter-view picture group.

FIG. 7 is a diagram of a prediction structure according to an embodiment of the present invention, used to explain the concept of a newly defined inter-view picture group.

FIG. 8 is a schematic block diagram of an apparatus for decoding multi-view video using inter-view picture group identification information according to an embodiment of the present invention.

FIG. 9 is a flowchart of a process for constructing a reference picture list according to an embodiment of the present invention.

FIG. 10 is a diagram for explaining a method of initializing a reference picture list when the current slice is a P-slice according to one embodiment of the present invention.

FIG. 11 is a diagram for explaining a method of initializing a reference picture list when the current slice is a B-slice according to one embodiment of the present invention.

FIG. 12 is an internal block diagram of the reference picture list rearrangement unit 630 according to an embodiment of the present invention.

FIG. 13 is an internal block diagram of the reference index assignment changing unit 643B or 645B according to one embodiment of the present invention.

FIG. 14 is a diagram for explaining a process of rearranging a reference picture list using view information according to one embodiment of the present invention.

FIG. 15 is an internal block diagram of the reference picture list rearrangement unit 630 according to another embodiment of the present invention.

FIG. 16 is an internal block diagram of the reference picture list rearrangement unit 970 for inter-view prediction according to an embodiment of the present invention.

FIGs. 17 and 18 are diagrams of syntax for reference picture list rearrangement according to one embodiment of the present invention.

FIG. 19 is a diagram of syntax for reference picture list rearrangement according to another embodiment of the present invention.

FIG. 20 is a diagram of a process for obtaining an illumination difference value of a current block according to one embodiment of the present invention.

FIG. 21 is a flowchart of a process for performing illumination compensation of a current block according to an embodiment of the present invention.

FIG. 22 is a diagram of a process for obtaining an illumination difference prediction value of a current block using information on neighboring blocks according to one embodiment of the present invention.

FIG. 23 is a flowchart of a process for performing illumination compensation using information on neighboring blocks according to one embodiment of the present invention.

FIG. 24 is a flowchart of a process for performing illumination compensation using information on neighboring blocks according to another embodiment of the present invention.

FIG. 25 is a diagram of a process for predicting a current picture using a picture in a virtual view according to one embodiment of the present invention.

FIG. 26 is a flowchart of a process for synthesizing a picture in a virtual view while performing inter-view prediction in MVC according to an embodiment of the present invention.

FIG. 27 is a flowchart of a method of performing weighted prediction according to slice type in video signal coding according to the present invention.

FIG. 28 is a diagram of the macroblock types allowed in each slice type in video signal coding according to the present invention.

FIGs. 29 and 30 are diagrams of syntax for performing weighted prediction according to newly defined slice types according to one embodiment of the present invention.

FIG. 31 is a flowchart of a method of performing weighted prediction using flag information indicating whether to perform inter-view weighted prediction in video signal coding according to the present invention.

FIG. 32 is a diagram for explaining a weighted prediction method based on flag information indicating whether to perform weighted prediction using information on a picture in a view different from that of the current picture according to one embodiment of the present invention.

FIG. 33 is a diagram of syntax for performing weighted prediction according to newly defined flag information according to one embodiment of the present invention.

FIG. 34 is a flowchart of a method of performing weighted prediction according to the NAL (network abstraction layer) unit type according to an embodiment of the present invention.

FIGs. 35 and 36 are diagrams of syntax for performing weighted prediction when the NAL unit type is for multi-view video coding according to one embodiment of the present invention.

FIG. 37 is a partial block diagram of a video signal decoding apparatus based on a newly defined slice type according to an embodiment of the present invention.

FIG. 38 is a flowchart for explaining a method of decoding a video signal in the apparatus shown in FIG. 37 according to the present invention.

FIG. 39 is a diagram of macroblock prediction modes according to one embodiment of the present invention.

FIGs. 40 and 41 are diagrams of syntax to which the slice type and the macroblock mode are applied according to the present invention.

FIG. 42 is a diagram of embodiments to which the slice types in FIG. 41 are applied.

FIG. 43 is a diagram of various embodiments of the slice types included in the slice types shown in FIG. 41.

FIG. 44 is a diagram of the macroblocks allowed for a mixed slice type by prediction combining two mixed predictions according to one embodiment of the present invention.

FIGs. 45 to 47 are diagrams of the macroblock types of macroblocks existing in a mixed slice predicted by combining two mixed predictions according to one embodiment of the present invention.

FIG. 48 is a partial block diagram of a video signal encoding apparatus based on a newly defined slice type according to an embodiment of the present invention.

FIG. 49 is a flowchart of a method of encoding a video signal in the apparatus shown in FIG. 48 according to the present invention.

Detailed description

To achieve these and other advantages and in accordance with the purpose of the present invention, as embodied and broadly described herein, a method of decoding a video signal includes the steps of: checking the coding scheme of the video signal; obtaining configuration information for the video signal according to the coding scheme; identifying the total number of views using the configuration information; identifying inter-view reference information based on the total number of views; and decoding the video signal based on the inter-view reference information, wherein the configuration information includes at least view information for identifying a view of the video signal.

To further achieve these and other advantages and in accordance with the purpose of the present invention, a method of decoding a video signal includes the steps of: checking the coding scheme of the video signal; obtaining configuration information for the video signal according to the coding scheme; checking, from the configuration information, a level for view scalability of the video signal; identifying inter-view reference information using the configuration information; and decoding the video signal based on the level and the inter-view reference information, wherein the configuration information includes view information for identifying the view of a picture.

Mode for the invention

Reference will now be made in detail to the preferred embodiments of the present invention, examples of which are illustrated in the accompanying drawings.

Techniques for compressing and encoding video signal data take spatial redundancy, temporal redundancy, scalable redundancy, and inter-view redundancy into account. Compression coding can also be performed by considering the mutual redundancy that exists between views. Compression coding that considers inter-view redundancy is only one embodiment of the present invention; the technical idea of the present invention is also applicable to temporal redundancy, scalable redundancy, and the like.

Looking at the bitstream configuration in H.264/AVC, a separate layer structure called the NAL (network abstraction layer) exists between the VCL (video coding layer), which handles the moving picture coding process itself, and the lower-level system that transports and stores the coded information. The output of the coding process is VCL data, which is mapped into NAL units before being transported or stored. Each NAL unit contains an RBSP (raw byte sequence payload: the result data of moving picture compression), which carries either compressed video data or data corresponding to header information.

A NAL unit basically consists of two parts: a NAL header and an RBSP. The NAL header includes flag information (nal_ref_idc) indicating whether the NAL unit contains a slice of a reference picture, and an identifier (nal_unit_type) indicating the type of the NAL unit. The compressed original data is stored in the RBSP, and RBSP trailing bits are appended to the end of the RBSP so that its length is a multiple of 8 bits. NAL unit types include the IDR (instantaneous decoding refresh) picture, the SPS (sequence parameter set), the PPS (picture parameter set), SEI (supplemental enhancement information), and the like.
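The header fields and the trailing bits described above can be sketched as follows. The bit layout (1-bit forbidden_zero_bit, 2-bit nal_ref_idc, 5-bit nal_unit_type) and the "stop bit plus zero padding" form of the trailing bits are taken from the H.264/AVC specification, not from this text; the function names are illustrative only.

```python
def parse_nal_header(first_byte: int) -> dict:
    """Split the one-byte H.264/AVC NAL unit header into its three fields."""
    return {
        "forbidden_zero_bit": (first_byte >> 7) & 0x1,
        "nal_ref_idc": (first_byte >> 5) & 0x3,   # non-zero: used for reference
        "nal_unit_type": first_byte & 0x1F,       # e.g. 5 = IDR slice, 7 = SPS, 8 = PPS
    }


def rbsp_trailing_bits(payload_bit_count: int) -> str:
    """Return the stop bit '1' followed by the zero bits that byte-align the RBSP."""
    padding = (8 - (payload_bit_count + 1) % 8) % 8
    return "1" + "0" * padding


header = parse_nal_header(0x67)  # 0x67 = 0b0_11_00111
```

With a 13-bit payload, `rbsp_trailing_bits(13)` appends three bits so that the RBSP occupies exactly two bytes.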

In the standardization, constraints for various profiles and levels are set so that target products can be implemented at an appropriate cost. A decoder should satisfy the constraints determined by the corresponding profile and level. The two concepts "profile" and "level" are thus defined to indicate the functions or parameters that express how far a decoder can handle the range of a compressed sequence. A profile identifier (profile_idc) identifies the profile on which a bitstream is based; that is, it is a flag indicating the underlying profile. For example, in H.264/AVC, a profile identifier of 66 means that the bitstream is based on the baseline profile, 77 means the main profile, and 88 means the extended profile. The profile identifier can be included in the sequence parameter set.
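As a small illustration of the profile identifier values listed above, a lookup might look like this. Only the three values named in the text are included; the table and function names are hypothetical.

```python
# profile_idc values named in the text; other profiles are deliberately omitted.
H264_PROFILE_NAMES = {66: "baseline", 77: "main", 88: "extended"}


def profile_name(profile_idc: int) -> str:
    """Map a profile_idc value to a profile name, or 'unknown' if not listed."""
    return H264_PROFILE_NAMES.get(profile_idc, "unknown")
```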

So, in order to handle multi-view video, it is necessary to identify whether the profile of an input bitstream is a multi-view profile. If it is, syntax needs to be added so that at least one piece of additional information about multi-view can be transmitted. Here, the multi-view profile denotes a profile mode for handling multi-view video as an amendment to H.264/AVC. In MVC, it may be more efficient to add syntax as additional information for the MVC mode than to add unconditional syntax. For example, coding efficiency can be improved if information about multi-view video is added only when the AVC profile identifier indicates the multi-view profile.

A sequence parameter set is header information containing information that covers the coding of the overall sequence, such as the profile and the level. An entire compressed moving picture, i.e., a sequence, should begin with a sequence header. The sequence parameter set corresponding to the header information should therefore arrive at the decoder before the data that refers to it. In other words, the sequence parameter set RBSP plays the role of header information for the result data of moving picture compression. Once a bitstream is input, the profile identifier first identifies which of a plurality of profiles the input bitstream is based on. So, by adding to the syntax a part for deciding whether the input bitstream relates to the multi-view profile (e.g., "If(profile_idc==MULTI_VIEW_PROFILE)"), it is decided whether the input bitstream relates to the multi-view profile. Various kinds of configuration information can be added only when the input bitstream is confirmed to relate to the multi-view profile. For example, it is possible to add the total number of views, the number of inter-view reference pictures (List0/1) in the case of an inter-view picture group, the number of inter-view reference pictures (List0/1) in the case of a non-inter-view picture group, and the like. Various kinds of information on views can also be used to generate and manage a reference picture list in the decoded picture buffer.
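The conditional syntax described above can be sketched as follows. This is only an illustration under stated assumptions: the numeric value of `MULTI_VIEW_PROFILE`, the field names, and the per-view ordering of the counts are hypothetical, and the already entropy-decoded syntax values are supplied as a plain iterator rather than read from a real bitstream.

```python
from typing import Iterator

MULTI_VIEW_PROFILE = 255  # hypothetical placeholder; the text gives no numeric value


def parse_sps(profile_idc: int, values: Iterator[int]) -> dict:
    """Read the multi-view configuration only when the profile calls for it."""
    sps = {"profile_idc": profile_idc}
    if profile_idc == MULTI_VIEW_PROFILE:
        num_views = next(values)
        sps["num_views"] = num_views
        sps["views"] = []
        for _ in range(num_views):
            sps["views"].append({
                # inter-view reference counts for inter-view picture groups
                "group_refs_l0": next(values),
                "group_refs_l1": next(values),
                # inter-view reference counts for non-inter-view picture groups
                "non_group_refs_l0": next(values),
                "non_group_refs_l1": next(values),
            })
    return sps


plain = parse_sps(66, iter([]))
multi = parse_sps(MULTI_VIEW_PROFILE, iter([2, 0, 0, 0, 0, 1, 0, 1, 0]))
```

A bitstream of any other profile consumes none of the multi-view values, which is the point of adding the syntax conditionally.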

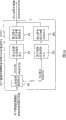

FIG. 1 is a schematic block diagram of an apparatus for decoding a video signal according to the present invention.

Referring to FIG. 1, the apparatus for decoding a video signal according to the present invention includes a NAL parser 100, an entropy decoding unit 200, an inverse quantization/inverse transform unit 300, an intra prediction unit 400, a deblocking filter unit 500, a decoded picture buffer unit 600, an inter prediction unit 700, and the like.

The decoded picture buffer unit 600 includes a reference picture storage unit 610, a reference picture list construction unit 620, a reference picture management unit 650, and the like. The reference picture list construction unit 620 includes a variable derivation unit 625, a reference picture list initialization unit 630, and a reference picture list rearrangement unit 640.

The inter prediction unit 700 includes a motion compensation unit 710, an illumination compensation unit 720, an illumination difference prediction unit 730, a view synthesis prediction unit 740, and the like.

The NAL parser 100 performs parsing by NAL unit in order to decode the received video sequence. In general, at least one sequence parameter set and at least one picture parameter set are delivered to the decoder before the slice header and slice data are decoded. Various kinds of configuration information can be included in the NAL header area or in an extension area of the NAL header. Since MVC is an amendment to the conventional AVC technique, it may be more efficient to add the configuration information only in the case of an MVC bitstream rather than unconditionally. For example, flag information for identifying the presence or absence of an MVC bitstream can be added to the NAL header area or to the extension area of the NAL header. Configuration information on multi-view video can be added only when the flag information indicates that the input bitstream is a multi-view video coded bitstream. For example, the configuration information can include temporal level information, view level information, inter-view picture group identification information, view identification information, and the like. This is explained in detail below with reference to FIG. 2.

FIG. 2 is a diagram of configuration information on multi-view video that can be added to a multi-view video coded bitstream according to one embodiment of the present invention. Details of the configuration information on multi-view video are explained in the following description.

First, the temporal level information indicates information on a hierarchical structure for providing temporal scalability of a video signal (①). Through the temporal level information, sequences on various time zones can be provided to the user.

The view level information indicates information on a hierarchical structure for providing view scalability of a video signal (②). In multi-view video, it is necessary to define levels for time as well as levels for views in order to provide the user with various temporal and view sequences. Once this level information is defined, temporal scalability and view scalability can be used. The user can therefore select a sequence at a specific time and view, or the selected sequence can be restricted by a condition.

The level information can be set in various ways according to specific conditions. For example, it can be set differently according to camera position or camera alignment. The level information can also be determined by considering view dependency. For example, the level of a view whose inter-view picture group contains an I-picture is set to 0, the level of a view whose inter-view picture group contains a P-picture is set to 1, and the level of a view whose inter-view picture group contains a B-picture is set to 2. The level information can also be set arbitrarily, without being based on any specific condition. The view level information is explained in detail later with reference to FIG. 4 and FIG. 5.
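The dependency-based level assignment in the example above, and the way a level can be used to pick a subset of views, can be sketched as follows. The function names and the dictionary-based view description are assumptions made for illustration; the text only specifies the I/P/B to 0/1/2 mapping.

```python
# Level of a view, keyed by the picture type found in its inter-view picture group.
VIEW_LEVEL_BY_PICTURE_TYPE = {"I": 0, "P": 1, "B": 2}


def view_level(group_picture_type: str) -> int:
    """Return the view level for the given inter-view picture group picture type."""
    return VIEW_LEVEL_BY_PICTURE_TYPE[group_picture_type]


def views_up_to_level(view_types: dict, max_level: int) -> list:
    """Select the view ids whose level does not exceed max_level (view scalability)."""
    return sorted(
        view_id
        for view_id, picture_type in view_types.items()
        if view_level(picture_type) <= max_level
    )


# Hypothetical five-view setup: view 0 is the base view (level 0).
example_views = {0: "I", 1: "B", 2: "P", 3: "B", 4: "P"}
```

Selecting `max_level=0` yields only the base view, which matches the idea that the lowest-level view serves as the reference view for conventional decoders.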

The inter-view picture group identification information indicates whether the coded picture of the current NAL unit is an inter-view picture group (③). Here, an inter-view picture group means a coded picture in which all slices refer only to slices having the same picture order count. For example, it is a coded picture that refers only to slices in different views and not to slices in the current view. In decoding multi-view video, inter-view random access may be required, and the inter-view picture group identification information may be necessary to realize efficient random access. Inter-view reference information may also be necessary for inter-view prediction, so the inter-view picture group identification information can be used to obtain the inter-view reference information. It can further be used to add reference pictures for inter-view prediction while a reference picture list is being constructed, and to manage the reference pictures added for inter-view prediction. For example, reference pictures can be classified into inter-view picture groups and non-inter-view picture groups, and the classified reference pictures can then be marked so that reference pictures that cannot be used for inter-view prediction are not used. The inter-view picture group identification information is also applicable to a hypothetical reference decoder. Details of the inter-view picture group identification information are explained later with reference to FIG. 6.

The view identification information is information for distinguishing a picture in the current view from a picture in a different view (④). In coding a video signal, the POC (picture order count) or "frame_num" can be used to identify each picture. In a multi-view video sequence, inter-view prediction can be performed, so identification information is needed to distinguish a picture in the current view from a picture in another view. It is therefore necessary to define view identification information for identifying the view of a picture. The view identification information can be obtained from a header area of the video signal, for example the NAL header area, the extension area of the NAL header, or the slice header area. Using the view identification information, information on a picture in a view different from that of the current picture is obtained, and the video signal can be decoded using that information. The view identification information is applicable to the overall encoding/decoding process of the video signal. It can also be applied to multi-view video coding by using a "frame_num" that takes the view into account instead of a dedicated view identifier.

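Meanwhile, the entropy decoding unit 200 performs entropy decoding on the parsed bitstream and then extracts the coefficients, motion vectors, and the like of each macroblock. The inverse quantization/inverse transform unit 300 obtains a transformed coefficient value by multiplying the received quantized value by a constant, and then inverse-transforms the coefficient value to reconstruct a pixel value. Using the reconstructed pixel values, the intra prediction unit 400 performs intra prediction from decoded samples within the current picture. The deblocking filter unit 500 is applied to each coded macroblock to reduce block distortion. The filter smooths block edges to improve the image quality of a decoded frame. The selection of the filtering process depends on the boundary strength and on the gradient of the image samples around the edge. Filtered pictures are output or stored in the decoded picture buffer unit 600 to be used as reference pictures.

The decoded picture buffer unit 600 stores or opens previously coded pictures in order to perform inter prediction. To store a picture in the decoded picture buffer unit 600 or to open it, the "frame_num" and POC (picture order count) of each picture are used. In multi-view video coding, the previously coded pictures include pictures in views different from that of the current picture, so view information for identifying the view of a picture can be used together with "frame_num" and the POC. The decoded picture buffer unit 600 includes the reference picture storage unit 610, the reference picture list construction unit 620, and the reference picture management unit 650. The reference picture storage unit 610 stores the pictures that will be referred to in coding the current picture. The reference picture list construction unit 620 constructs a list of reference pictures for inter-picture prediction. In multi-view video coding, inter-view prediction may be needed, so if the current picture refers to a picture in another view, it may be necessary to construct a reference picture list for inter-view prediction. In that case, the reference picture list construction unit 620 can use information on views when generating the reference picture list for inter-view prediction. Details of the reference picture list construction unit 620 are explained later with reference to FIG. 3.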
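A minimal sketch of a picture buffer that uses view information together with "frame_num" and the POC, as described above, might look like this. The class and method names are hypothetical, and real buffer management (marking, sliding window, output order) is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PictureKey:
    """Identifies a decoded picture by its view as well as frame_num and POC."""
    view_id: int
    frame_num: int
    poc: int


class DecodedPictureBuffer:
    def __init__(self) -> None:
        self._pictures = {}

    def store(self, key: PictureKey, picture: object) -> None:
        self._pictures[key] = picture

    def open(self, key: PictureKey) -> object:
        return self._pictures[key]

    def inter_view_candidates(self, current: PictureKey) -> list:
        """Keys of stored pictures with the same POC but a different view."""
        return sorted(
            (k for k in self._pictures
             if k.poc == current.poc and k.view_id != current.view_id),
            key=lambda k: k.view_id,
        )


dpb = DecodedPictureBuffer()
dpb.store(PictureKey(view_id=0, frame_num=3, poc=6), "view0-poc6")
dpb.store(PictureKey(view_id=1, frame_num=3, poc=6), "view1-poc6")
dpb.store(PictureKey(view_id=0, frame_num=2, poc=4), "view0-poc4")
current = PictureKey(view_id=2, frame_num=3, poc=6)
```

Without `view_id` in the key, the two pictures stored at POC 6 would be indistinguishable, which is why the view information is needed alongside "frame_num" and the POC.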

FIG. 3 is an internal block diagram of the reference picture list construction unit 620 according to an embodiment of the present invention.

The reference picture list construction unit 620 includes the variable derivation unit 625, the reference picture list initialization unit 630, and the reference picture list rearrangement unit 640.

The variable derivation unit 625 derives the variables used for reference picture list initialization. For example, the variables can be derived using "frame_num", which indicates the picture identification number. Specifically, the variables FrameNum and FrameNumWrap can be used for each short-term reference picture. First, the variable FrameNum is equal to the value of the syntax element frame_num. The variable FrameNumWrap can be used by the decoded picture buffer unit 600 to assign a small number to each reference picture, and it can be derived from the variable FrameNum. The variable PicNum can then be derived using the derived variable FrameNumWrap. Here, the variable PicNum can mean the identification number of a picture used by the decoded picture buffer unit 600. To indicate a long-term reference picture, the variable LongTermPicNum can be used.
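The derivation chain FrameNum → FrameNumWrap → PicNum can be sketched as below. The wrap rule and the frame-mode identity PicNum = FrameNumWrap follow the H.264/AVC decoding process for picture numbers rather than this text, and field decoding is not covered; `max_frame_num` stands for the standard's MaxFrameNum.

```python
def frame_num_wrap(ref_frame_num: int, current_frame_num: int, max_frame_num: int) -> int:
    """Wrap a short-term reference's FrameNum relative to the current frame_num."""
    if ref_frame_num > current_frame_num:
        # The reference was decoded before frame_num wrapped around.
        return ref_frame_num - max_frame_num
    return ref_frame_num


def pic_num(ref_frame_num: int, current_frame_num: int, max_frame_num: int) -> int:
    """For frame (non-field) decoding, PicNum equals FrameNumWrap."""
    return frame_num_wrap(ref_frame_num, current_frame_num, max_frame_num)
```

For example, with `max_frame_num=16` and a current frame_num of 2, a reference with FrameNum 14 gets PicNum -2, so it correctly sorts as older than a reference with FrameNum 1.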

为了构造用于视图间预测的参考图片列表,可以推导出第一变量(例如,ViewNum(视图号))以构造用于视图间预测的参考图片列表。例如,能够使用用于识别图片的视图的“view_id(视图标识符)”来推导第二变量(例如,ViewId(视图标识符))。首先,第二变量可以等于句法元素“view_id”的值。并且,第三变量(例如,ViewIdWrap(视图标识符换行))可以被用于解码图片缓冲器单元600以向每一个参考图片分配较小的视图识别号,并且可以从第二变量推导出。在这种情况下,第一变量ViewNum可以指解码图片缓冲器单元600所使用的图片的视图识别号。然而,因为在多视图视频编码中用于视图间预测的参考图片的数目可能相对小于用于时间预测的参考图片的数目,所以可以不定义用于指示长期参考图片的视图识别号的另一个变量。To construct a reference picture list for inter-view prediction, a first variable (eg, ViewNum) may be derived to construct a reference picture list for inter-view prediction. For example, the second variable (for example, ViewId (view identifier)) can be derived using "view_id (view identifier)" for identifying a view of a picture. First, the second variable may be equal to the value of the syntax element "view_id". Also, a third variable (eg, ViewIdWrap (view identifier wrap)) may be used in the decoded picture buffer unit 600 to assign a smaller view ID to each reference picture, and may be derived from the second variable. In this case, the first variable ViewNum may refer to a view identification number of a picture used by the decoding picture buffer unit 600 . However, since the number of reference pictures used for inter-view prediction may be relatively smaller than the number of reference pictures used for temporal prediction in multi-view video coding, another variable indicating the view identification number of a long-term reference picture may not be defined .

The reference picture list initialization unit 630 initializes the reference picture list using the variables described above. The initialization process can differ according to the slice type. For example, when a P-slice is decoded, reference indices can be assigned based on decoding order. When a B-slice is decoded, reference indices can be assigned based on picture output order. When a reference picture list for inter-view prediction is initialized, indices can be assigned to reference pictures based on the first variable, i.e., the variable derived from view information.

The reference picture list reordering unit 640 improves compression efficiency by assigning smaller indices to frequently referenced pictures in the initialized reference picture list. This is because fewer bits are allocated as the reference index to be coded becomes smaller.

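The reference picture list reordering unit 640 includes a slice type checking unit 642, a reference picture list 0 reordering unit 643, and a reference picture list 1 reordering unit 645. When an initialized reference picture list is input, the slice type checking unit 642 checks the type of the slice to be decoded and then decides whether to reorder reference picture list 0 or reference picture list 1. Accordingly, if the slice type is not an I-slice, the reference picture list 0/1 reordering units 643 and 645 reorder reference picture list 0, and if the slice type is a B-slice, they additionally reorder reference picture list 1. The reference picture list is thus fully constructed once the reordering process ends.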
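
The decision made by the slice type checking unit 642 can be sketched as follows. The function name and the string encoding of slice types are hypothetical.

```python
def lists_to_reorder(slice_type):
    """Return the reference picture list numbers that are reordered for a slice type."""
    if slice_type == "I":
        return []        # I-slices use no reference picture list
    if slice_type == "B":
        return [0, 1]    # B-slices reorder list 0 and, additionally, list 1
    return [0]           # any other non-I slice (e.g. P) reorders list 0 only
```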

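The reference picture list 0/1 reordering units 643 and 645 include identification information obtaining units 643A and 645A and reference index assignment changing units 643B and 645B, respectively. If reordering of the reference picture list is performed according to flag information indicating whether to reorder the reference picture list, the identification information obtaining units 643A and 645A receive identification information (reordering_of_pic_nums_idc) indicating the method of assigning reference indices. The reference index assignment changing units 643B and 645B then reorder the reference picture list by changing the assignment of reference indices according to the identification information.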

The reference picture list reordering unit 640 can also be operated by another method. For example, reordering can be performed by checking the NAL unit type that is passed on before the slice type checking unit 642 is reached, and then classifying the NAL unit type into an MVC NAL case and a non-MVC NAL case.

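The reference picture management unit 650 manages reference pictures so that inter prediction can be performed more flexibly. For example, a memory management control operation method and a sliding window method can be used. These methods manage the reference picture memory and the non-reference picture memory by unifying them into a single memory, and they achieve efficient memory management with a small memory. In multi-view video coding, because pictures in the view direction have the same picture order count, information identifying the view of each picture can be used to mark pictures along the view direction. The inter prediction unit 700 can use the reference pictures managed in this way.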

The inter prediction unit 700 performs inter prediction using the reference pictures stored in the decoded picture buffer unit 600. An inter-coded macroblock can be divided into macroblock partitions, and each macroblock partition can be predicted from one or two reference pictures. The inter prediction unit 700 includes a motion compensation unit 710, an illumination compensation unit 720, an illumination difference prediction unit 730, a view synthesis prediction unit 740, a weighted prediction unit 750, and the like.

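The motion compensation unit 710 compensates for the motion of the current block using information passed from the entropy decoding unit 200. The motion vectors of the blocks neighboring the current block are extracted from the video signal, and a motion vector predictor for the current block is derived from them. The motion of the current block is then compensated using the derived motion vector predictor and the differential motion vector extracted from the video signal. Motion compensation can be performed using one reference picture or a plurality of pictures. In multi-view video coding, if the current picture references pictures in a different view, motion compensation can be performed using the reference picture list information for inter-view prediction stored in the decoded picture buffer unit 600. Motion compensation can also be performed using view information that identifies the view of the reference picture. Direct mode is a coding mode that predicts the motion information of the current block from the motion information of an already coded block. Because this method saves the bits needed to code motion information, compression efficiency improves. For example, temporal direct mode predicts the motion information of the current block using the correlation of motion information in the temporal direction. Using a similar method, the present invention can predict the motion information of the current block using the correlation of motion information in the view direction.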

Meanwhile, if the input bitstream corresponds to a multi-view video, the view sequences are obtained by different cameras, so an illumination difference arises from factors internal and external to the cameras. To compensate for this, the illumination compensation unit 720 compensates for the illumination difference. In performing illumination compensation, flag information indicating whether illumination compensation is performed on a specific layer of the video signal can be used. For example, illumination compensation can be performed using flag information indicating whether illumination compensation is performed on the corresponding slice or macroblock. When flag information is used, illumination compensation can be applied to various macroblock types (e.g., inter 16x16 mode, B-skip mode, direct mode, etc.).

In performing illumination compensation, the current block can be reconstructed using information on neighboring blocks or information on a block in a view different from that of the current block. The illumination difference value of the current block can also be used. In this case, if the current block references a block in a different view, illumination compensation can be performed using the reference picture list information for inter-view prediction stored in the decoded picture buffer unit 600. Here, the illumination difference value of the current block is the difference between the average pixel value of the current block and the average pixel value of the reference block corresponding to the current block. As an example of using the illumination difference value, an illumination difference predictor for the current block is obtained from the blocks neighboring the current block, and the difference between the illumination difference value and the predictor (the illumination difference residual) is used. The decoding unit can therefore reconstruct the illumination difference value of the current block from the illumination difference residual and the illumination difference predictor. Information on neighboring blocks can be used in obtaining the predictor. For example, the illumination difference value of the current block can be predicted from the illumination difference value of a neighboring block. Before the prediction, it is checked whether the reference index of the current block is equal to the reference index of the neighboring block. Depending on the result of the check, it is then decided which neighboring block or value is used.
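
The reconstruction of the illumination difference value can be sketched as follows. The selection rule used here (take the first neighbor whose reference index matches, otherwise fall back to 0) is an assumption made for illustration; the text only states that the choice depends on the reference index check. All names are hypothetical.

```python
def predict_illum_diff(cur_ref_idx, neighbors):
    """neighbors: list of (ref_idx, illum_diff) pairs in an assumed priority order."""
    for ref_idx, illum_diff in neighbors:
        if ref_idx == cur_ref_idx:   # the reference index check described above
            return illum_diff
    return 0                         # assumed fallback when no neighbor matches

def reconstruct_illum_diff(residual, cur_ref_idx, neighbors):
    """Illumination difference value = predictor + transmitted residual."""
    return predict_illum_diff(cur_ref_idx, neighbors) + residual
```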

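The view synthesis prediction unit 740 synthesizes a picture in a virtual view using pictures in views adjacent to the view of the current picture, and predicts the current picture using the synthesized picture in the virtual view. The decoding unit can decide whether to synthesize a picture in the virtual view according to an inter-view synthesis prediction identifier passed from the encoding unit. For example, if view_synthesize_pred_flag=1 or view_syn_pred_flag=1, a slice or macroblock in the virtual view is synthesized. In this case, when the inter-view synthesis prediction identifier signals that a virtual view will be generated, the picture in the virtual view can be generated using view information that identifies the view of a picture. In predicting the current picture from the synthesized picture in the virtual view, the view information can be used so that the picture in the virtual view serves as a reference picture.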

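The weighted prediction unit 750 compensates for the considerable loss of picture quality that occurs when a sequence whose brightness changes over time is coded. In MVC, weighted prediction can be performed to compensate for a brightness difference from a sequence in a different view, as well as for a sequence whose brightness changes over time. For example, weighted prediction methods can be classified into an explicit weighted prediction method and an implicit weighted prediction method.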

Specifically, the explicit weighted prediction method can use one reference picture or two reference pictures. When one reference picture is used, the prediction signal is generated by multiplying the prediction signal corresponding to motion compensation by a weighting coefficient. When two reference pictures are used, the prediction signal is generated by adding an offset value to the value obtained by multiplying the prediction signal corresponding to motion compensation by a weighting coefficient.

Implicit weighted prediction performs weighted prediction using the distance from the reference picture. The distance from the reference picture can be obtained, for example, using the POC (picture order count), which indicates picture output order. In this case, the POC can be obtained by taking into account the identification of the view of each picture. In obtaining weighting coefficients for pictures in different views, view information that identifies the view of a picture can be used to obtain the distance between the views of the pictures.
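
Distance-based implicit weights can be sketched as follows. This is a simplified floating-point illustration under the assumption that the two weights sum to 1 and that the closer reference receives the larger weight; an actual codec would use fixed-point arithmetic, and the function name is hypothetical.

```python
def implicit_weights(poc_cur, poc_ref0, poc_ref1):
    """Return (w0, w1) for two reference pictures from their POC distances."""
    td = poc_ref1 - poc_ref0      # distance between the two references
    if td == 0:
        return 0.5, 0.5           # no distance information: average equally
    tb = poc_cur - poc_ref0       # distance from reference 0 to the current picture
    w1 = tb / td
    return 1.0 - w1, w1
```

For a current picture with POC 2 between references with POC 0 and POC 8, the nearer reference (POC 0) gets weight 0.75 and the farther one gets 0.25. The same scheme could be applied to view distances if a view-based distance is substituted for POC.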

In video signal coding, depth information can be used for a specific application or for another purpose. Here, depth information refers to information that can indicate the disparity between views. For example, a disparity vector can be obtained by inter-view prediction, and the obtained disparity vector would have to be passed to the decoding device for disparity compensation of the current block. However, if a depth map is obtained and passed to the decoding device, the disparity vector can be derived from the depth map (or disparity map) without passing the disparity vector itself to the decoding device. This has the advantage of reducing the number of bits of depth information that must be passed to the decoding device. Deriving the disparity vector from the depth map therefore provides a new disparity compensation method. When pictures in different views are used in deriving the disparity vector from the depth map, view information that identifies the view of a picture can be used.

An inter-predicted or intra-predicted picture obtained through the process explained above is selected according to the prediction mode to reconstruct the current picture. The following description explains various embodiments that provide an efficient method of decoding a video signal.

FIG. 4 is a diagram of a hierarchical structure of level information for providing view scalability of a video signal according to one embodiment of the present invention.

Referring to FIG. 4, the level information of each view can be decided by considering inter-view reference information. For example, because P-pictures and B-pictures cannot be decoded without an I-picture, "level=0" can be assigned to a base view whose inter-view picture group is an I-picture, "level=1" to a base view whose inter-view picture group is a P-picture, and "level=2" to a base view whose inter-view picture group is a B-picture. The level information can also be decided arbitrarily according to a specific criterion.

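The level information can be decided according to a specific criterion or arbitrarily without one. For example, when level information is decided based on views, view V0 as the base view can be set to view level 0, the view of pictures predicted using pictures in one view can be set to view level 1, and the view of pictures predicted using pictures in a plurality of views can be set to view level 2. In this case, at least one view sequence that is compatible with a conventional decoder (e.g., H.264/AVC, MPEG-2, MPEG-4, etc.) may be needed. This base view is the basis of multi-view coding and can correspond to a reference view for predicting another view. In MVC (multi-view video coding), the sequence corresponding to the base view can be configured as an independent bitstream by being coded with a conventional sequence coding scheme (MPEG-2, MPEG-4, H.263, H.264, etc.). The sequence corresponding to the base view may or may not be compatible with H.264/AVC. However, a sequence in a view that is compatible with H.264/AVC corresponds to the base view.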

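As can be seen in FIG. 4, view V2 of pictures predicted using pictures in view V0, view V4 of pictures predicted using pictures in view V2, view V6 of pictures predicted using pictures in view V4, and view V7 of pictures predicted using pictures in view V6 can be set to view level 1. View V1 of pictures predicted using pictures in views V0 and V2, view V3 predicted in the same manner, and view V5 predicted in the same manner can be set to view level 2. Thus, if a user's decoder cannot display a multi-view video sequence, it decodes only the sequence in the view corresponding to view level 0. If the user's decoder is restricted by profile information, it can decode only the information of the restricted view level. Here, a profile means that the technical elements of the algorithms used in the video encoding/decoding process are standardized. Specifically, a profile is the set of technical elements needed to decode the bit sequence of a compressed sequence and can be regarded as a kind of sub-standard.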
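
View scalability with these level assignments can be sketched as follows. The table reproduces the FIG. 4 assignment described above; the function name and the idea of passing a maximum supported level are hypothetical.

```python
# View levels as assigned in the FIG. 4 example.
VIEW_LEVEL = {"V0": 0, "V2": 1, "V4": 1, "V6": 1, "V7": 1, "V1": 2, "V3": 2, "V5": 2}

def decodable_views(max_level):
    """Views a decoder keeps when it supports view levels up to max_level."""
    return sorted(view for view, level in VIEW_LEVEL.items() if level <= max_level)
```

A decoder limited to level 0 keeps only the base view V0, while a decoder limited to level 1 also keeps V2, V4, V6 and V7 and drops the views predicted from several views.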

According to another embodiment of the present invention, the level information can vary with camera position. For example, assuming that views V0 and V1 are sequences obtained by cameras located at the front, views V2 and V3 are sequences obtained by cameras located at the rear, views V4 and V5 are sequences obtained by cameras located on the left, and views V6 and V7 are sequences obtained by cameras located on the right, views V0 and V1 can be set to view level 0, views V2 and V3 to view level 1, views V4 and V5 to view level 2, and views V6 and V7 to view level 3. Alternatively, the level information can vary with camera calibration. Alternatively, the level information can be decided arbitrarily, without being based on a specific criterion.

FIG. 5 is a diagram of a NAL unit configuration that includes level information in the extension area of the NAL header according to one embodiment of the present invention.

Referring to FIG. 5, a NAL unit basically includes a NAL header and an RBSP. The NAL header includes flag information (nal_ref_idc) indicating whether the NAL unit contains a slice of a reference picture, and an identifier (nal_unit_type) indicating the type of the NAL unit. The NAL header can further include level information (view_level) indicating information on the hierarchical structure that provides view scalability.
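
Reading the two standard header fields can be sketched as follows, assuming the one-byte H.264/AVC NAL header layout (1-bit forbidden_zero_bit, 2-bit nal_ref_idc, 5-bit nal_unit_type). The position of view_level inside the header extension is not given in the text, so it is not parsed here; the function name is hypothetical.

```python
def parse_nal_header_byte(byte):
    """Split the first NAL unit byte into its three H.264/AVC fields."""
    return {
        "forbidden_zero_bit": (byte >> 7) & 0x1,
        "nal_ref_idc": (byte >> 5) & 0x3,   # non-zero: carries reference picture data
        "nal_unit_type": byte & 0x1F,       # e.g. 5 = IDR slice, 7 = SPS, 8 = PPS
    }
```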

The compressed original data is stored in the RBSP, and RBSP trailing bits are appended to the end of the RBSP so that the length of the RBSP is a multiple of 8 bits. The types of NAL unit include IDR (instantaneous decoding refresh), SPS (sequence parameter set), PPS (picture parameter set), SEI (supplemental enhancement information), and the like.
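
The byte alignment performed by the RBSP trailing bits can be sketched as follows, assuming the H.264/AVC form of a single stop bit equal to 1 followed by zero bits. Representing the payload as a string of "0" and "1" characters is a simplification for illustration.

```python
def add_rbsp_trailing_bits(bits):
    """Append a stop bit and alignment zeros so the length is a multiple of 8."""
    bits += "1"                  # stop bit marks the end of the payload
    while len(bits) % 8 != 0:
        bits += "0"              # alignment zero bits
    return bits
```

A payload that is already byte aligned still receives a full extra byte, because the stop bit is always appended.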

The NAL header includes information on a view identifier. When decoding is performed according to view level, the video sequence of the corresponding view level is decoded with reference to the view identifier.

The NAL unit includes a NAL header 51 and a slice layer 53. The NAL header 51 includes a NAL header extension 52. The slice layer 53 includes a slice header 54 and slice data 55.

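The NAL header 51 includes an identifier (nal_unit_type) indicating the type of the NAL unit. For example, the identifier can indicate scalable video coding or multi-view video coding. In this case, the NAL header extension 52 can include flag information that distinguishes whether the current NAL is a NAL for scalable video coding or a NAL for multi-view video coding. Depending on the flag information, the NAL header extension 52 can include extension information on the current NAL. For example, if the flag information indicates that the current NAL is a NAL for multi-view video coding, the NAL header extension 52 can include level information (view_level) indicating information on the hierarchical structure that provides view scalability.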

FIG. 6 is a diagram of the overall prediction structure of a multi-view video signal according to one embodiment of the present invention, used to explain the concept of an inter-view picture group.

Referring to FIG. 6, T0 to T100 on the horizontal axis indicate frames according to time, and S0 to S7 on the vertical axis indicate frames according to view. For example, the pictures at T0 are frames captured by different cameras at the same time instant T0, whereas the pictures at S0 are a sequence captured by a single camera at different time instants. The arrows in the figure indicate the prediction direction and prediction order of the pictures. For example, picture P0 in view S2 at time instant T0 is predicted from I0 and serves as a reference picture for picture P0 in view S4 at time instant T0. It also serves as a reference picture for pictures B1 and B2 at time instants T4 and T2, respectively, in view S2.

In multi-view video decoding, inter-view random access may be needed. Access to an arbitrary view should therefore be possible with minimal decoding effort. The concept of an inter-view picture group may be needed to achieve efficient access. An inter-view picture group is a coded picture in which all slices reference only slices with the same picture order count. For example, an inter-view picture group is a coded picture that references only slices in different views and does not reference slices in the current view. In FIG. 6, if picture I0 in view S0 at time instant T0 is an inter-view picture group, all pictures in the other views at the same time instant T0 are also inter-view picture groups. As another example, if picture I0 in view S0 at time instant T8 is an inter-view picture group, all pictures in the other views at the same time instant T8 are also inter-view picture groups. Likewise, all pictures at T16, ..., T96, and T100 are inter-view picture groups.
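
The definition above can be sketched as a check over the references of each slice. The data layout (a picture identified by a view identifier and a POC, and a list of reference pictures per slice) is an assumption made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pic:
    view_id: int
    poc: int   # picture order count

def is_inter_view_picture_group(cur, slice_refs):
    """slice_refs: one list of reference Pics per slice of the current picture."""
    return all(
        ref.poc == cur.poc and ref.view_id != cur.view_id   # same time, other view
        for refs in slice_refs
        for ref in refs
    )
```

An I-picture with no references passes the check trivially, which matches picture I0 in view S0 being an inter-view picture group.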

FIG. 7 is a diagram of a prediction structure according to an embodiment of the present invention, used to explain the concept of a newly defined inter-view picture group.

In the overall prediction structure of MVC, a GOP can start with an I-picture, and the I-picture is compatible with H.264/AVC. All inter-view picture groups compatible with H.264/AVC can therefore always be I-pictures. However, if the I-pictures are replaced with P-pictures, more efficient coding is possible. Specifically, a prediction structure that lets a GOP start with a P-picture compatible with H.264/AVC enables more efficient coding.

In this case, if the inter-view picture group is redefined, it becomes a coded picture in which all slices can reference not only slices in frames at the same time instant but also slices in the same view at a different time instant. However, referencing slices at a different time instant in the same view can be restricted to inter-view picture groups compatible with H.264/AVC. For example, the P-picture at time T8 in view S0 in FIG. 6 can become a newly defined inter-view picture group. Likewise, the P-picture at time T96 in view S0 or the P-picture at time T100 in view S0 can become a newly defined inter-view picture group. The inter-view picture group can be defined in this way only if it is in the base view.

After an inter-view picture group has been decoded, all subsequently coded pictures are decoded without inter prediction from pictures that were decoded before the inter-view picture group in output order.

Considering the overall coding structure of the multi-view video shown in FIG. 6 and FIG. 7, the inter-view reference information of an inter-view picture group differs from that of a non-inter-view picture group, so the two must be distinguished from each other using inter-view picture group identification information.

Inter-view reference information is information that identifies the prediction structure between inter-view pictures. It can be obtained from the data area of the video signal, for example from the sequence parameter set area. Inter-view reference information can be identified using the number of reference pictures and the view information of the reference pictures. For example, the total number of views is obtained first, and the view information identifying each view can then be obtained based on the total number of views. The number of reference pictures for each reference direction of each view can also be obtained, and the view information of each reference picture can be obtained according to that number. Inter-view reference information can be obtained in this way. It can also be identified separately for inter-view picture groups and non-inter-view picture groups, using inter-view picture group identification information that indicates whether the coded slice in the current NAL belongs to an inter-view picture group. The inter-view picture group identification information is explained in detail below with reference to FIG. 8.
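
One possible in-memory form of this information is sketched below. The class, field names, and the three-view example table are hypothetical and do not reproduce any actual sequence parameter set syntax.

```python
from dataclasses import dataclass, field

@dataclass
class ViewRefInfo:
    """Reference view ids per direction (0 or 1) for one view (hypothetical layout)."""
    group_refs: dict = field(default_factory=dict)       # inter-view picture groups
    non_group_refs: dict = field(default_factory=dict)   # all other pictures

def reference_views(table, view_id, is_group, direction):
    """Look up the reference views that apply to the current picture."""
    info = table[view_id]
    refs = info.group_refs if is_group else info.non_group_refs
    return refs.get(direction, [])

# Hypothetical example: view 2 is predicted from view 0 only in inter-view
# picture groups; view 1 is always predicted from views 0 and 2.
TABLE = {
    0: ViewRefInfo(),
    2: ViewRefInfo(group_refs={0: [0]}),
    1: ViewRefInfo(group_refs={0: [0], 1: [2]}, non_group_refs={0: [0], 1: [2]}),
}
```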

FIG. 8 is a schematic block diagram of an apparatus for decoding multi-view video using inter-view picture group identification information according to one embodiment of the present invention.

Referring to FIG. 8, a decoding apparatus according to one embodiment of the present invention includes a bitstream deciding unit 81, an inter-view picture group identification information obtaining unit 82, and a multi-view video decoding unit 83.

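When a bitstream is input, the bitstream deciding unit 81 decides whether the input bitstream is a coded bitstream for scalable video coding or a coded bitstream for multi-view video coding. This can be decided from flag information included in the bitstream.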

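If the input bitstream is found to be a bitstream for multi-view video coding, the inter-view picture group identification information obtaining unit 82 can obtain inter-view picture group identification information. If the obtained information is "true", the coded slice of the current NAL belongs to an inter-view picture group. If the obtained information is "false", the coded slice of the current NAL belongs to a non-inter-view picture group. The inter-view picture group identification information can be obtained from the extension area of the NAL header or from the slice layer area.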

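The multi-view video decoding unit 83 decodes the multi-view video according to the inter-view picture group identification information. In the overall coding structure of a multi-view video sequence, the inter-view reference information of an inter-view picture group differs from that of a non-inter-view picture group. The inter-view picture group identification information can therefore be used, for example, when reference pictures for inter-view prediction are added to generate a reference picture list. It can also be used to manage the reference pictures for inter-view prediction, and it can be applied to a hypothetical reference decoder.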

As another example of using the inter-view picture group identification information, when information in a different view is used in a decoding process, the inter-view reference information included in the sequence parameter set can be used. In this case, information that distinguishes whether the current picture is an inter-view picture group or a non-inter-view picture group, i.e., the inter-view picture group identification information, may be needed. Different inter-view reference information can thus be used for each decoding process.

FIG. 9 is a flowchart of a process for generating a reference picture list according to an embodiment of the present invention.

Referring to FIG. 9, the decoded picture buffer unit 600 stores or opens previously coded pictures in order to perform inter-picture prediction.

First, pictures coded before the current picture are stored in the reference picture storage unit 610 to be used as reference pictures (S91).

In multi-view video coding, some of the previously coded pictures are in a view different from that of the current picture, so view information that identifies the view of a picture can be used to employ these pictures as reference pictures. The decoder should therefore obtain view information that identifies the view of a picture (S92). For example, the view information can include "view_id", which identifies the view of a picture.

The decoded picture buffer unit 600 needs to derive the variables it uses to generate a reference picture list. Because inter-view prediction may be needed for multi-view video coding, a reference picture list for inter-view prediction may have to be generated if the current picture references pictures in a different view. In this case, the decoded picture buffer unit 600 needs to use the obtained view information to derive the variables used to generate the reference picture list for inter-view prediction (S93).

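Depending on the slice type of the current slice, the reference picture list for temporal prediction or the reference picture list for inter-view prediction can be generated in different ways (S94). For example, if the slice type is a P/SP slice, reference picture list 0 is generated (S95). If the slice type is a B-slice, reference picture list 0 and reference picture list 1 are generated (S96). In this case, reference picture list 0 or 1 can include only the reference picture list for temporal prediction, or both the reference picture list for temporal prediction and the reference picture list for inter-view prediction. This will be explained in detail later with reference to FIG. 8 and FIG. 9.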

The initialized reference picture list undergoes a process that assigns smaller numbers to frequently referenced pictures to further raise the compression ratio (S97). This can be called the reordering process for the reference picture list and will be explained in detail later with reference to FIGS. 12 to 19. The current picture is decoded using the reordered reference picture list, and the decoded picture buffer unit 600 needs to manage the decoded reference pictures to operate the buffer more efficiently (S98). The reference pictures managed through the above process are read by the inter prediction unit 700 and used for inter prediction. In multi-view video coding, inter prediction can include inter-view prediction, in which case the reference picture list for inter-view prediction can be used.

Detailed examples of methods for generating a reference picture list according to slice type are explained below with reference to FIG. 10 and FIG. 11.

FIG. 10 is a diagram for explaining a method of initializing a reference picture list when the current slice is a P-slice according to one embodiment of the present invention.

Referring to FIG. 10, times are indicated by T0, T1, ..., TN, and views are indicated by V0, V1, ..., V4. For example, the current picture is the picture at time T3 in view V4, and the slice type of the current picture is a P-slice. "PN" is an abbreviation of the variable PicNum, "LPN" is an abbreviation of the variable LongTermPicNum, and "VN" is an abbreviation of the variable ViewNum. The number appended to the end of each variable is an index of the time (for PN or LPN) or of the view (for VN) of each picture. The same applies to FIG. 11.

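The reference picture list for temporal prediction or the reference picture list for inter-view prediction can be generated in different ways according to the slice type of the current slice. For example, the slice type in FIG. 12 is a P/SP slice, in which case reference picture list 0 is generated. Specifically, reference picture list 0 can include the reference picture list for temporal prediction and/or the reference picture list for inter-view prediction. In the present embodiment, the reference picture list is assumed to include both the reference picture list for temporal prediction and the reference picture list for inter-view prediction.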

There are various methods of ordering reference pictures. For example, reference pictures can be arranged according to decoding order or picture output order. Alternatively, they can be arranged based on a variable derived using view information. Alternatively, they can be arranged according to inter-view reference information indicating the inter-view prediction structure.

In the reference picture list for temporal prediction, short-term reference pictures and long-term reference pictures can be arranged based on decoding order. For example, they can be arranged according to the value of the variable PicNum or LongTermPicNum, which is derived from a value indicating a picture identification number (e.g., frame_num or LongTermFrameIdx). First, short-term reference pictures can be initialized before long-term reference pictures. Short-term reference pictures can be arranged from the reference picture with the highest PicNum value to the reference picture with the lowest. For example, among PN0 to PN2, the short-term reference pictures can be arranged in the order of PN1 with the highest value, PN2 with the intermediate value, and PN0 with the lowest value. Long-term reference pictures can be arranged from the reference picture with the lowest LongTermPicNum value to the reference picture with the highest. For example, the long-term reference pictures can be arranged in the order of LPN0 with the lowest value and LPN1 with the highest value.
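
The ordering rules above can be sketched as follows: short-term pictures first, by descending PicNum, then long-term pictures, by ascending LongTermPicNum. The function name and the tuple representation of list entries are hypothetical.

```python
def init_temporal_list_p(short_term_pic_nums, long_term_pic_nums):
    """Initial temporal reference order for a P-slice."""
    short_term = [("PicNum", v) for v in sorted(short_term_pic_nums, reverse=True)]
    long_term = [("LongTermPicNum", v) for v in sorted(long_term_pic_nums)]
    return short_term + long_term   # short-term entries precede long-term entries
```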

In the reference picture list for inter-view prediction, reference pictures can be arranged based on the first variable ViewNum, which is derived using view information. Specifically, they can be arranged from the reference picture with the highest value of the first variable (ViewNum) to the reference picture with the lowest. For example, among VN0, VN1, VN2, and VN3, the reference pictures can be arranged in the order of VN3 with the highest value, VN2, VN1, and VN0 with the lowest value.

The reference picture list for temporal prediction and the reference picture list for inter-view prediction can thus be managed together as one reference picture list. Alternatively, they can be managed separately as independent reference picture lists. When both are managed as one reference picture list, they can be initialized sequentially or simultaneously. For example, when they are initialized sequentially, the reference picture list for temporal prediction is initialized first, and the reference picture list for inter-view prediction is then initialized in addition. This concept also applies to FIG. 11.
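
Sequential initialization of a single combined list can be sketched as follows: the already ordered temporal entries come first, and the inter-view entries are appended in descending ViewNum order. The string labels follow the PN/LPN/VN abbreviations of FIG. 10; the function name is hypothetical.

```python
def init_combined_list0_p(temporal_refs, inter_view_view_nums):
    """Temporal references first, then inter-view references by descending ViewNum."""
    inter_view = [f"VN{v}" for v in sorted(inter_view_view_nums, reverse=True)]
    return list(temporal_refs) + inter_view
```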

The case where the slice type of the current picture is a B-slice is explained with reference to FIG. 11 as follows.

FIG. 11 is a diagram for explaining a method of initializing a reference picture list when the current slice is a B-slice, according to one embodiment of the present invention.

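Referring to FIG. 9, when the slice type is a B-slice, reference picture list 0 and reference picture list 1 are generated. In this case, reference picture list 0 or reference picture list 1 can include only a reference picture list for temporal prediction, or both a reference picture list for temporal prediction and a reference picture list for inter-view prediction.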

In the case of a reference picture list for temporal prediction, the method of arranging short-term reference pictures can differ from the method of arranging long-term reference pictures. For example, short-term reference pictures can be arranged according to picture order count (hereinafter abbreviated POC), whereas long-term reference pictures can be arranged according to the value of the variable LongtermPicNum. Short-term reference pictures can also be initialized before long-term reference pictures.

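In arranging the short-term reference pictures of reference picture list 0, the reference pictures with POC values smaller than that of the current picture are arranged first, from the one with the highest POC value to the one with the lowest. The reference pictures with POC values greater than that of the current picture are then arranged from the one with the lowest POC value to the one with the highest. For example, the reference pictures can be arranged first from PN1, which has the highest POC value among the reference pictures PN0 and PN1 whose POC values are smaller than that of the current picture, to PN0, and then from PN3, which has the lowest POC value among the reference pictures PN3 and PN4 whose POC values are greater than that of the current picture, to PN4.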

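In arranging the long-term reference pictures of reference picture list 0, the reference pictures are arranged from the one with the lowest LongtermPicNum value to the one with the highest. For example, the reference pictures are arranged from LPN0, which has the lowest value among LPN0 and LPN1, to LPN1, which has the second lowest value.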
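A minimal sketch of this temporal initialization of reference picture list 0 is given below. The function name, the tags "ST" and "LT", and the concrete POC values chosen for PN0, PN1, PN3, and PN4 are hypothetical and serve only to illustrate the ordering rule.

```python
def init_temporal_list0(cur_poc, short_term_pocs, long_term_pic_nums):
    """Initialize the temporal part of reference picture list 0 for a B-slice.

    Short-term pictures come first: those with POC below the current POC in
    descending order, then those above it in ascending order. Long-term
    pictures follow, in ascending LongtermPicNum order.
    """
    lower = sorted((p for p in short_term_pocs if p < cur_poc), reverse=True)
    higher = sorted(p for p in short_term_pocs if p > cur_poc)
    long_term = sorted(long_term_pic_nums)
    return [("ST", p) for p in lower + higher] + [("LT", n) for n in long_term]


# Assumed POC values: PN0=0, PN1=1, current picture=2, PN3=3, PN4=4.
print(init_temporal_list0(2, [0, 1, 3, 4], [1, 0]))
```

With these assumed values the short-term order is PN1, PN0, PN3, PN4, followed by LPN0 and LPN1.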

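In the case of a reference picture list for inter-view prediction, the reference pictures can be arranged based on the first variable ViewNum derived using view information. For example, in reference picture list 0 for inter-view prediction, the reference pictures whose first variable value is lower than that of the current picture are arranged first, from the one with the highest first variable value to the one with the lowest. The reference pictures whose first variable value is greater than that of the current picture are then arranged from the one with the lowest first variable value to the one with the highest. For example, the reference pictures are arranged first from VN1, which has the highest first variable value among VN0 and VN1, whose values are smaller than that of the current picture, to VN0, which has the lowest, and then from VN3, which has the lowest first variable value among VN3 and VN4, whose values are greater than that of the current picture, to VN4, which has the highest.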

In the case of reference picture list 1, the method of arranging reference picture list 0 explained above can be applied similarly.

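First, in the case of the reference picture list for temporal prediction, in arranging the short-term reference pictures of reference picture list 1, the reference pictures with POC values greater than that of the current picture are arranged first, from the one with the lowest POC value to the one with the highest. The reference pictures with POC values smaller than that of the current picture are then arranged from the one with the highest POC value to the one with the lowest. For example, the reference pictures can be arranged first from PN3, which has the lowest POC value among the reference pictures PN3 and PN4 whose POC values are greater than that of the current picture, to PN4, and then from PN1, which has the highest POC value among the reference pictures PN0 and PN1 whose POC values are smaller than that of the current picture, to PN0.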

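In arranging the long-term reference pictures of reference picture list 1, the reference pictures are arranged from the one with the lowest LongtermPicNum value to the one with the highest. For example, the reference pictures are arranged from LPN0, which has the lowest value among LPN0 and LPN1, to LPN1, which has the second lowest value.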

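In the case of a reference picture list for inter-view prediction, the reference pictures can be arranged based on the first variable ViewNum derived using view information. For example, in reference picture list 1 for inter-view prediction, the reference pictures whose first variable value is greater than that of the current picture are arranged first, from the one with the lowest first variable value to the one with the highest. The reference pictures whose first variable value is smaller than that of the current picture are then arranged from the one with the highest first variable value to the one with the lowest. For example, the reference pictures are arranged first from VN3, which has the lowest first variable value among VN3 and VN4, whose values are greater than that of the current picture, to VN4, which has the highest, and then from VN1, which has the highest first variable value among VN0 and VN1, whose values are smaller than that of the current picture, to VN0, which has the lowest.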
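The inter-view ordering for reference picture list 0 and reference picture list 1 differs only in which side of the current view comes first, which the following sketch tries to make explicit. The function name, the `list_index` parameter, and the ViewNum values assumed for VN0, VN1, VN3, and VN4 are illustrative assumptions.

```python
def init_inter_view_list(cur_view_num, ref_view_nums, list_index):
    """Initialize the inter-view part of reference picture list 0 or 1.

    Pictures with a ViewNum below the current one are taken in descending
    order, pictures above it in ascending order. List 0 puts the lower group
    first; list 1 puts the higher group first.
    """
    lower = sorted((v for v in ref_view_nums if v < cur_view_num), reverse=True)
    higher = sorted(v for v in ref_view_nums if v > cur_view_num)
    if list_index == 0:
        return lower + higher
    if list_index == 1:
        return higher + lower
    raise ValueError("list_index must be 0 or 1")


# Assumed ViewNum values: VN0=0, VN1=1, current picture=2, VN3=3, VN4=4.
print(init_inter_view_list(2, [0, 1, 3, 4], 0))
print(init_inter_view_list(2, [0, 1, 3, 4], 1))
```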

The reference picture list initialized through the above process is passed to the reference picture list rearrangement unit 640. The initialized reference picture list is then rearranged for more efficient coding. By operating the decoded picture buffer, the rearrangement process lowers the bit rate by assigning a small number to the reference picture most likely to be selected as a reference picture. Various methods of rearranging the reference picture list are explained with reference to FIGS. 12 to 19 as follows.

FIG. 12 is an internal block diagram of the reference picture list rearrangement unit 640 according to one embodiment of the present invention.

Referring to FIG. 12, the reference picture list rearrangement unit 640 basically includes a slice type checking unit 642, a reference picture list 0 rearrangement unit 643, and a reference picture list 1 rearrangement unit 645.

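Specifically, the reference picture list 0 rearrangement unit 643 includes a first identification information obtaining unit 643A and a first reference index assignment changing unit 643B. The reference picture list 1 rearrangement unit 645 includes a second identification information obtaining unit 645A and a second reference index assignment changing unit 645B.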

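The slice type checking unit 642 checks the slice type of the current slice. It is then decided according to the slice type whether to rearrange reference picture list 0 and/or reference picture list 1. For example, if the slice type of the current slice is an I-slice, neither reference picture list 0 nor reference picture list 1 is rearranged. If the slice type is a P-slice, only reference picture list 0 is rearranged. If the slice type is a B-slice, both reference picture list 0 and reference picture list 1 are rearranged.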

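If the flag information for performing rearrangement of reference picture list 0 is "true" and the slice type of the current slice is not an I-slice, the reference picture list 0 rearrangement unit 643 is activated. The first identification information obtaining unit 643A obtains identification information indicating a reference index assignment method. The first reference index assignment changing unit 643B changes the reference index assigned to each reference picture of reference picture list 0 according to the identification information.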

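Likewise, if the flag information for performing rearrangement of reference picture list 1 is "true" and the slice type of the current slice is a B-slice, the reference picture list 1 rearrangement unit 645 is activated. The second identification information obtaining unit 645A obtains identification information indicating a reference index assignment method. The second reference index assignment changing unit 645B changes the reference index assigned to each reference picture of reference picture list 1 according to the identification information.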

Thus, the reference picture list information used for actual inter prediction is generated by the reference picture list 0 rearrangement unit 643 and the reference picture list 1 rearrangement unit 645.
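The activation conditions for units 643 and 645 can be summarized in a short sketch. The function name, the string slice types, and the two boolean flag parameters are hypothetical stand-ins for the slice type and flag information described above.

```python
def lists_to_rearrange(slice_type, flag_list0, flag_list1):
    """Return the indices of the reference picture lists to be rearranged.

    List 0 is rearranged when its flag is true and the slice is not an
    I-slice; list 1 is rearranged when its flag is true and the slice is a
    B-slice.
    """
    selected = []
    if flag_list0 and slice_type != "I":
        selected.append(0)
    if flag_list1 and slice_type == "B":
        selected.append(1)
    return selected


for slice_type in ("I", "P", "B"):
    print(slice_type, lists_to_rearrange(slice_type, True, True))
```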

A method of changing the reference index assigned to each reference picture by the first or second reference index assignment changing unit 643B or 645B is explained with reference to FIG. 13 as follows.

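FIG. 13 is an internal block diagram of the reference index assignment changing unit 643B or 645B according to one embodiment of the present invention. In the following description, the reference picture list 0 rearrangement unit 643 and the reference picture list 1 rearrangement unit 645 shown in FIG. 12 are explained together.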

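Referring to FIG. 13, each of the first and second reference index assignment changing units 643B and 645B includes a reference index assignment changing unit 644A for temporal prediction, a reference index assignment changing unit 644B for long-term reference pictures, a reference index assignment changing unit 644C for inter-view prediction, and a reference index assignment change terminating unit 644D. The parts within the first and second reference index assignment changing units 643B and 645B are activated according to the identification information obtained by the first and second identification information obtaining units 643A and 645A, respectively. The rearrangement process continues until identification information for terminating the reference index assignment change is input.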

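For example, if identification information for changing the assignment of a reference index for temporal prediction is received from the first or second identification information obtaining unit 643A or 645A, the reference index assignment changing unit 644A for temporal prediction is activated. The reference index assignment changing unit 644A for temporal prediction obtains a picture number difference according to the received identification information. Here, the picture number difference is the difference between the picture number of the current picture and a predicted picture number, and the predicted picture number can be the number of the reference picture assigned immediately before. The assignment of the reference index can therefore be changed using the obtained picture number difference. In this case, the picture number difference can be added to or subtracted from the predicted picture number according to the identification information.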

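As another example, if identification information for changing the assignment of a reference index to a designated long-term reference picture is received, the reference index assignment changing unit 644B for long-term reference pictures is activated. The reference index assignment changing unit 644B for long-term reference pictures obtains the long-term reference picture number of the designated picture according to the identification number.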

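As another example, if identification information for changing the assignment of a reference index for inter-view prediction is received, the reference index assignment changing unit 644C for inter-view prediction is activated. The reference index assignment changing unit 644C for inter-view prediction obtains a view information difference according to the identification information. Here, the view information difference is the difference between the view number of the current picture and a predicted view number, and the predicted view number can be the view number of the reference picture assigned immediately before. The assignment of the reference index can therefore be changed using the obtained view information difference. In this case, the view information difference can be added to or subtracted from the predicted view number according to the identification information.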

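As a further example, if identification information for terminating the reference index assignment change is received, the reference index assignment change terminating unit 644D is activated. The reference index assignment change terminating unit 644D terminates the assignment change of the reference index according to the received identification information. The reference picture list rearrangement unit 640 thus generates the reference picture list information.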
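The dispatch among units 644A to 644D can be pictured as a loop over received identification information that runs until a termination code arrives. The sketch below is an assumption-laden illustration: the string codes ("pic_sub", "pic_add", "long_term", "view_sub", "view_add", "end") are invented here, and starting the predicted picture number at the current picture number is an assumption that mirrors the way the predicted view number is started.

```python
def resolve_rearrangement_targets(cur_pic_num, cur_view_num, commands):
    """Resolve each (code, value) command to the picture it designates.

    The loop stops at the hypothetical "end" code, which plays the role of
    the identification information handled by the terminating unit 644D.
    """
    pred_pic_num = cur_pic_num    # assumed initial prediction
    pred_view_num = cur_view_num  # initial prediction is the current view number
    targets = []
    for code, value in commands:
        if code == "end":
            break
        if code in ("pic_sub", "pic_add"):        # role of unit 644A
            pred_pic_num += value if code == "pic_add" else -value
            targets.append(("temporal", pred_pic_num))
        elif code == "long_term":                 # role of unit 644B
            targets.append(("long_term", value))
        elif code in ("view_sub", "view_add"):    # role of unit 644C
            pred_view_num += value if code == "view_add" else -value
            targets.append(("inter_view", pred_view_num))
        else:
            raise ValueError(f"unknown code: {code}")
    return targets


commands = [("pic_sub", 1), ("view_sub", 1), ("view_sub", -2),
            ("long_term", 0), ("end", None), ("pic_sub", 1)]
print(resolve_rearrangement_targets(10, 3, commands))
```

Commands after the termination code are ignored, which is why the final ("pic_sub", 1) entry has no effect.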

Accordingly, reference pictures for inter-view prediction can be managed together with reference pictures for temporal prediction. Alternatively, reference pictures for inter-view prediction can be managed independently of reference pictures for temporal prediction. For this purpose, new information for managing the reference pictures for inter-view prediction may be needed. This is explained later with reference to FIGS. 15 to 19.

Details of the reference index assignment changing unit 644C for inter-view prediction are explained with reference to FIG. 14 as follows.

FIG. 14 is a diagram for explaining a process of rearranging a reference picture list using view information, according to one embodiment of the present invention.

Referring to FIG. 14, the rearrangement process for reference picture list 0 is explained below for the case where the view number VN of the current picture is 3, the size of the decoded picture buffer DPBsize is 4, and the slice type of the current slice is a P-slice.

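First, the initially predicted view number is "3", which is the view number of the current picture, and the initial arrangement of reference picture list 0 for inter-view prediction is "4,5,6,2" (①). In this case, if identification information for changing the assignment of a reference index for inter-view prediction by subtracting a view information difference is received, "1" is obtained as the view information difference according to the received identification information. A newly predicted view number (=2) is calculated by subtracting the view information difference (=1) from the predicted view number (=3). Specifically, the first index of reference picture list 0 for inter-view prediction is assigned to the reference picture with view number 2, and the picture previously assigned to the first index can be moved to the last part of reference picture list 0. The rearranged reference picture list 0 is therefore "2,5,6,4" (②). Subsequently, if identification information for changing the assignment of a reference index for inter-view prediction by subtracting a view information difference is received, "-2" is obtained as the view information difference according to the identification information. A newly predicted view number (=4) is then calculated by subtracting the view information difference (=-2) from the predicted view number (=2). Specifically, the second index of reference picture list 0 for inter-view prediction is assigned to the reference picture with view number 4. The rearranged reference picture list 0 is therefore "2,4,6,5" (③). Subsequently, if identification information for terminating the reference index assignment change is received, reference picture list 0 is finally generated from the rearranged reference picture list 0 according to the received identification information (④). The order of the finally generated reference picture list 0 for inter-view prediction is therefore "2,4,6,5".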
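The worked example above can be replayed with a small sketch. Moving the displaced picture into the slot vacated by the newly assigned picture is modeled here as a swap, which is one reading of "moved to the last part" that reproduces the stated intermediate lists. The function name and the command codes "sub", "add", and "end" are hypothetical.

```python
def rearrange_inter_view_list0(cur_view_num, init_list, commands):
    """Rearrange an inter-view reference picture list using view differences.

    Each ("sub", d) or ("add", d) command updates the predicted view number,
    and the picture with that view number is swapped into the next index.
    """
    ref_list = list(init_list)
    pred_view = cur_view_num  # initial prediction: view number of the current picture
    index = 0
    for code, diff in commands:
        if code == "end":
            break
        pred_view = pred_view - diff if code == "sub" else pred_view + diff
        pos = ref_list.index(pred_view)
        # The picture previously at `index` takes the slot vacated by the target.
        ref_list[index], ref_list[pos] = ref_list[pos], ref_list[index]
        index += 1
    return ref_list


# Current view number 3, initial list "4,5,6,2", differences 1 and -2.
print(rearrange_inter_view_list0(3, [4, 5, 6, 2], [("sub", 1), ("sub", -2), ("end", 0)]))
```

After the first command the list is 2, 5, 6, 4, and after the second it is 2, 4, 6, 5, matching steps ② and ③.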

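As another example of rearranging the remaining pictures after the first index of reference picture list 0 for inter-view prediction has been assigned, the picture assigned to each index can be moved to the position immediately after it. Specifically, the second index is assigned to the picture with view number 4, the third index is assigned to the picture that previously had the second index (view number 5), and the fourth index is assigned to the picture that previously had the third index (view number 6). The rearranged reference picture list 0 therefore becomes "2,4,5,6". The subsequent rearrangement process can be performed in the same manner.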
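This shifting variant can be sketched as removing the designated picture and reinserting it at the target index, so that the pictures in between each move back by one position. The function name and the zero-based `index` parameter are illustrative assumptions.

```python
def assign_index_with_shift(ref_list, index, view_num):
    """Place view_num at the given zero-based index, shifting later pictures back."""
    shifted = [v for v in ref_list if v != view_num]
    shifted.insert(index, view_num)
    return shifted


# List after the first assignment is "2,5,6,4"; the second index goes to view 4.
print(assign_index_with_shift([2, 5, 6, 4], 1, 4))
```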

The reference picture list generated through the process explained above is used for inter prediction. The reference picture list for inter-view prediction and the reference picture list for temporal prediction can both be managed as one reference picture list. Alternatively, each of them can be managed as a separate reference picture list. This is explained with reference to FIGS. 15 to 19 as follows.

FIG. 15 is an internal block diagram of the reference picture list rearrangement unit 640 according to another embodiment of the present invention.

Referring to FIG. 15, new information may be needed in order to manage the reference picture list for inter-view prediction as a separate reference picture list. For example, in some cases the reference picture list for temporal prediction is rearranged, and the reference picture list for inter-view prediction is rearranged afterwards.

The reference picture list rearrangement unit 640 basically includes a reference picture list rearrangement unit 910 for temporal prediction, a NAL type checking unit 960, and a reference picture list rearrangement unit 970 for inter-view prediction.

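The reference picture list rearrangement unit 910 for temporal prediction includes a slice type checking unit 642, a third identification information obtaining unit 920, a third reference index assignment changing unit 930, a fourth identification information obtaining unit 940, and a fourth reference index assignment changing unit 950. The third reference index assignment changing unit 930 includes a reference index assignment changing unit 930A for temporal prediction, a reference index assignment changing unit 930B for long-term reference pictures, and a reference index assignment change terminating unit 930C. Likewise, the fourth reference index assignment changing unit 950 includes a reference index assignment changing unit 950A for temporal prediction, a reference index assignment changing unit 950B for long-term reference pictures, and a reference index assignment change terminating unit 950C.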

The reference picture list rearrangement unit 910 for temporal prediction rearranges the reference pictures used for temporal prediction. Except for the information on reference pictures for inter-view prediction, its operation is the same as that of the aforementioned reference picture list rearrangement unit 640 shown in FIG. 10. Details of the reference picture list rearrangement unit 910 for temporal prediction are therefore omitted from the following description.

The NAL type checking unit 960 checks the NAL type of the received bitstream. If the NAL type is a NAL for multi-view video coding, the reference pictures used for inter-view prediction are rearranged by the reference picture list rearrangement unit 970 for inter-view prediction. The generated reference picture list for inter-view prediction is used for inter prediction together with the reference picture list generated by the reference picture list rearrangement unit 910 for temporal prediction. However, if the NAL type is not a NAL for multi-view video coding, the reference picture list for inter-view prediction is not rearranged. In this case, only the reference picture list for temporal prediction is generated. The reference picture list rearrangement unit 970 for inter-view prediction rearranges the reference pictures used for inter-view prediction, as explained in detail with reference to FIG. 16 as follows.
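The gating performed by the NAL type checking unit 960 can be sketched as follows. The boolean `is_mvc_nal`, the dictionary keys, and the two callables standing in for units 910 and 970 are hypothetical; the sketch only shows that the inter-view list is produced when the NAL type indicates multi-view video coding.

```python
def rearrange_separate_lists(is_mvc_nal, temporal_list, inter_view_list,
                             rearrange_temporal, rearrange_inter_view):
    """Rearrange the temporal list always and the inter-view list only for MVC NALs."""
    result = {"temporal": rearrange_temporal(temporal_list)}
    if is_mvc_nal:
        result["inter_view"] = rearrange_inter_view(inter_view_list)
    return result


# Placeholder rearrangement functions that simply copy their input.
print(rearrange_separate_lists(True, [1, 0], [4, 5], list, list))
print(rearrange_separate_lists(False, [1, 0], [4, 5], list, list))
```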

FIG. 16 is an internal block diagram of the reference picture list rearrangement unit 970 for inter-view prediction according to one embodiment of the present invention.

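Referring to FIG. 16, the reference picture list rearrangement unit 970 for inter-view prediction includes a slice type checking unit 642, a fifth identification information obtaining unit 971, a fifth reference index assignment changing unit 972, a sixth identification information obtaining unit 973, and a sixth reference index assignment changing unit 974.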

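The slice type checking unit 642 checks the slice type of the current slice. It is then decided according to the slice type whether to rearrange reference picture list 0 and/or reference picture list 1. Details of the slice type checking unit 642 can be inferred from FIG. 10 and are omitted from the following description.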