CN101361117B - Method and apparatus for processing a media signal

Description

Technical Field

The present invention relates to an apparatus for processing a media signal and a method thereof, and more particularly to an apparatus and method for generating a surround signal by using spatial information of the media signal.

Background Art

In general, various apparatuses and methods have been widely used to generate a multi-channel media signal from spatial information of the multi-channel media signal and a downmix signal, where the downmix signal is generated by downmixing the multi-channel media signal into a mono or stereo signal.

However, these methods and apparatuses cannot be used in environments that are unsuitable for generating a multi-channel signal. For example, they cannot be used on a device that can generate only a stereo signal. In other words, there is no existing method or apparatus for generating a surround signal, i.e., a signal having multi-channel characteristics, in an environment where the multi-channel signal cannot be generated from its spatial information.

Consequently, because there is no existing method or apparatus for generating a surround signal on a device capable of generating only a mono or stereo signal, it is difficult to process the media signal efficiently.

Disclosure of the Invention

Technical Problem

Accordingly, the present invention is directed to an apparatus for processing a media signal and a method thereof that substantially obviate one or more problems due to limitations and disadvantages of the related art.

An object of the present invention is to provide an apparatus for processing a signal and a method thereof, by which a media signal can be converted into a surround signal by using spatial information of the media signal.

Additional features and advantages of the invention will be set forth in the description that follows, and in part will be apparent from the description or may be learned by practice of the invention. The objectives and other advantages of the invention will be realized and attained by the structure particularly pointed out in the written description and claims hereof as well as the appended drawings.

Technical Solution

To achieve these and other advantages and in accordance with the purpose of the present invention, a method of processing a signal according to the present invention includes: generating source mapping information corresponding to each of a plurality of sources by using spatial information indicating characteristics between the plurality of sources; generating sub-rendering information by applying filter information giving a surround effect to the source mapping information per source; generating rendering information for generating a surround signal by integrating at least one of the sub-rendering information; and generating the surround signal by applying the rendering information to a downmix signal generated by downmixing the plurality of sources.

To further achieve these and other advantages and in accordance with the purpose of the present invention, an apparatus for processing a signal includes: a source mapping unit that generates source mapping information corresponding to each of a plurality of sources by using spatial information indicating characteristics between the plurality of sources; a sub-rendering information generating unit that generates sub-rendering information by applying filter information having a surround effect to the source mapping information per source; an integrating unit that generates rendering information for generating a surround signal by integrating at least one of the sub-rendering information; and a rendering unit that generates the surround signal by applying the rendering information to a downmix signal generated by downmixing the plurality of sources.

It is to be understood that both the foregoing general description and the following detailed description are exemplary and explanatory and are intended to provide further explanation of the invention as claimed.

Advantageous Effects

A signal processing apparatus and method according to the present invention enable a decoder, which receives a bitstream including a downmix signal generated by downmixing a multi-channel signal and spatial information of the multi-channel signal, to generate a signal having a surround effect in an environment where the multi-channel signal cannot be recovered.

Brief Description of the Drawings

The accompanying drawings, which are included to provide a further understanding of the invention and are incorporated in and constitute a part of this application, illustrate embodiments of the invention and together with the description serve to explain the principles of the invention.

In the drawings:

FIG. 1 is a block diagram of an audio signal encoding apparatus and an audio signal decoding apparatus according to one embodiment of the present invention;

FIG. 2 is a structural diagram of a bitstream of an audio signal according to one embodiment of the present invention;

FIG. 3 is a detailed block diagram of a spatial information converting unit according to one embodiment of the present invention;

FIG. 4 and FIG. 5 are block diagrams of channel configurations used for a source mapping process according to one embodiment of the present invention;

FIG. 6 and FIG. 7 are detailed block diagrams of a rendering unit for a stereo downmix signal according to one embodiment of the present invention;

FIG. 8 and FIG. 9 are detailed block diagrams of a rendering unit for a mono downmix signal according to one embodiment of the present invention;

FIG. 10 and FIG. 11 are block diagrams of a smoothing unit and an expanding unit according to one embodiment of the present invention;

FIG. 12 is a graph for explaining a first smoothing method according to one embodiment of the present invention;

FIG. 13 is a graph for explaining a second smoothing method according to one embodiment of the present invention;

FIG. 14 is a graph for explaining a third smoothing method according to one embodiment of the present invention;

FIG. 15 is a graph for explaining a fourth smoothing method according to one embodiment of the present invention;

FIG. 16 is a graph for explaining a fifth smoothing method according to one embodiment of the present invention;

FIG. 17 is a diagram for explaining prototype filter information corresponding to each channel;

FIG. 18 is a block diagram of a first method of generating rendering filter information in a spatial information converting unit according to one embodiment of the present invention;

FIG. 19 is a block diagram of a second method of generating rendering filter information in a spatial information converting unit according to one embodiment of the present invention;

FIG. 20 is a block diagram of a third method of generating rendering filter information in a spatial information converting unit according to one embodiment of the present invention;

FIG. 21 is a diagram for explaining a method of generating a surround signal in a rendering unit according to one embodiment of the present invention;

FIG. 22 is a diagram of a first interpolating method according to one embodiment of the present invention;

FIG. 23 is a diagram of a second interpolating method according to one embodiment of the present invention;

FIG. 24 is a diagram of a block switching method according to one embodiment of the present invention;

FIG. 25 is a block diagram of a position to which a window length decided by a window length deciding unit is applied according to one embodiment of the present invention;

FIG. 26 is a diagram of filters having various lengths used in processing an audio signal according to one embodiment of the present invention;

FIG. 27 is a diagram of a method of processing an audio signal dividedly by using a plurality of sub-filters according to one embodiment of the present invention;

FIG. 28 is a block diagram of a method of rendering partition rendering information generated by a plurality of sub-filters to a mono downmix signal according to one embodiment of the present invention;

FIG. 29 is a block diagram of a method of rendering partition rendering information generated by a plurality of sub-filters to a stereo downmix signal according to one embodiment of the present invention;

FIG. 30 is a block diagram of a first domain converting method of a downmix signal according to one embodiment of the present invention; and

FIG. 31 is a block diagram of a second domain converting method of a downmix signal according to one embodiment of the present invention.

Best Mode for Carrying Out the Invention

Reference will now be made in detail to the preferred embodiments of the present invention, examples of which are illustrated in the accompanying drawings.

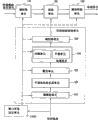

FIG. 1 is a block diagram of an audio signal encoding apparatus and an audio signal decoding apparatus according to one embodiment of the present invention.

Referring to FIG. 1, the encoding apparatus 10 includes a downmixing unit 100, a spatial information generating unit 200, a downmix signal encoding unit 300, a spatial information encoding unit 400, and a multiplexing unit 500.

If multi-source (X1, X2, ..., Xn) audio signals are input to the downmixing unit 100, the downmixing unit 100 downmixes the input signals into a downmix signal. In this case, the downmix signal includes a mono, stereo, or multi-source audio signal.

A source includes a channel and, for convenience, is referred to as a channel in the following description. In this specification, the explanation is based on a mono or stereo downmix signal. However, the present invention is not limited to a mono or stereo downmix signal.

The encoding apparatus 10 can optionally use an arbitrary downmix signal provided directly from an external environment.

The spatial information generating unit 200 generates spatial information from the multi-channel audio signal. The spatial information can be generated during the downmixing process. The generated downmix signal and spatial information are encoded by the downmix signal encoding unit 300 and the spatial information encoding unit 400, respectively, and are then transferred to the multiplexing unit 500.

In the present invention, 'spatial information' means the information required for a decoding apparatus to generate a multi-channel signal by upmixing a downmix signal, where the downmix signal is generated by an encoding apparatus by downmixing the multi-channel signal and is transferred to the decoding apparatus. The spatial information includes spatial parameters. The spatial parameters include CLD (channel level difference), which indicates an energy difference between channels; ICC (inter-channel coherence), which indicates a correlation between channels; CPC (channel prediction coefficients), which are used in generating three channels from two channels; and the like.
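As a rough illustration of the spatial parameters named above, the following Python sketch computes a CLD and an ICC for one pair of channels. The function names are hypothetical, and the use of whole-signal energies (rather than per-band, per-time-slot estimates) and of a lag-zero normalized cross-correlation are simplifying assumptions; the text does not specify an estimator.

```python
import math

def channel_level_difference(x1, x2, eps=1e-12):
    """CLD in dB: ratio of the energies of two channels (hypothetical helper)."""
    e1 = sum(v * v for v in x1)
    e2 = sum(v * v for v in x2)
    return 10.0 * math.log10((e1 + eps) / (e2 + eps))

def inter_channel_coherence(x1, x2, eps=1e-12):
    """ICC: normalized cross-correlation at lag zero (hypothetical helper)."""
    e1 = sum(v * v for v in x1)
    e2 = sum(v * v for v in x2)
    cross = sum(a * b for a, b in zip(x1, x2))
    return cross / math.sqrt(e1 * e2 + eps)

left = [1.0, -2.0, 3.0, -4.0]
right = [0.5, -1.0, 1.5, -2.0]   # same waveform at half the amplitude

cld = channel_level_difference(left, right)   # about 6.02 dB (energy ratio of 4)
icc = inter_channel_coherence(left, right)    # close to 1.0 (fully coherent)
```

Because the second channel is a scaled copy of the first, the pair is fully coherent and differs only in level, which is the situation a CLD alone can describe.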

In the present invention, a 'downmix signal encoding unit' or 'downmix signal decoding unit' means a codec that encodes or decodes an audio signal rather than spatial information. In this specification, a downmix audio signal is taken as an example of the audio signal other than spatial information. The downmix signal encoding or decoding unit may include MP3, AC-3, DTS, or AAC. It may also include codecs to be developed in the future as well as those already developed.

The multiplexing unit 500 generates a bitstream by multiplexing the downmix signal and the spatial information and then transfers the generated bitstream to the decoding apparatus 20. The structure of the bitstream is explained later with reference to FIG. 2.

The decoding apparatus 20 includes a demultiplexing unit 600, a downmix signal decoding unit 700, a spatial information decoding unit 800, a rendering unit 900, and a spatial information converting unit 1000.

The demultiplexing unit 600 receives the bitstream and then separates the encoded downmix signal and the encoded spatial information from it. Subsequently, the downmix signal decoding unit 700 decodes the encoded downmix signal, and the spatial information decoding unit 800 decodes the encoded spatial information.

The spatial information converting unit 1000 generates rendering information applicable to the downmix signal by using the decoded spatial information and filter information. The rendering information is then applied to the downmix signal to generate a surround signal.

For example, the surround signal is generated as follows. First, the process by which the encoding apparatus 10 generates a downmix signal from a multi-channel audio signal can include several steps using OTT (one-to-two) boxes or TTT (two-to-three) boxes. Spatial information can be generated at each of these steps and is transferred to the decoding apparatus 20. The decoding apparatus 20 then generates a surround signal by converting the spatial information and rendering the converted spatial information with the downmix signal. Rather than generating a multi-channel signal by upmixing the downmix signal, the present invention relates to a rendering method that includes extracting the spatial information for each upmixing step and performing rendering by using the extracted spatial information. For example, HRTF (head-related transfer function) filtering can be used in the rendering method.

In this case, the spatial information is a value that is also applicable to a hybrid domain. Rendering can therefore be classified into the following types according to the domain.

The first type performs rendering on a hybrid domain by passing the downmix signal through a hybrid filterbank. In this case, a domain conversion of the spatial information is unnecessary.

The second type performs rendering on a time domain. It exploits the fact that an HRTF filter is modeled as an FIR (finite impulse response) filter or an IIR (infinite impulse response) filter on the time domain. A process for converting the spatial information into time-domain filter coefficients is therefore needed.

The third type performs rendering on a different frequency domain, for example the DFT (discrete Fourier transform) domain. In this case, a process for transforming the spatial information into the corresponding domain is necessary. In particular, the third type enables fast operation by replacing filtering on the time domain with an operation on the frequency domain.
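The relationship between the second and third rendering types can be sketched in Python: filtering by convolution on the time domain gives the same result as a per-bin multiplication on the DFT domain, provided both sequences are zero-padded to the length of the linear convolution. The function names are hypothetical, and a naive O(N^2) DFT is used only to keep the sketch dependency-free; the speed advantage mentioned in the text would come from using an FFT instead.

```python
import cmath

def dft(x):
    """Naive discrete Fourier transform (illustration only)."""
    n = len(x)
    return [sum(x[k] * cmath.exp(-2j * cmath.pi * m * k / n) for k in range(n))
            for m in range(n)]

def idft(spectrum):
    """Naive inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[m] * cmath.exp(2j * cmath.pi * m * k / n) for m in range(n)) / n
            for k in range(n)]

def filter_time_domain(x, h):
    """Direct linear convolution of signal x with FIR coefficients h."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y

def filter_dft_domain(x, h):
    """Same filter applied as a per-bin product on the DFT domain."""
    n = len(x) + len(h) - 1                       # pad to avoid circular wrap-around
    xs = dft(list(x) + [0.0] * (n - len(x)))
    hs = dft(list(h) + [0.0] * (n - len(h)))
    return [v.real for v in idft([a * b for a, b in zip(xs, hs)])]

x = [1.0, 2.0, 3.0, 4.0]
h = [0.5, 0.25, 0.125]
y_time = filter_time_domain(x, h)
y_freq = filter_dft_domain(x, h)   # matches y_time up to rounding error
```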

In the present invention, filter information is information about the filter required for processing an audio signal and includes the filter coefficients provided to a specific filter. Examples of filter information are as follows. Prototype filter information is the original filter information of a specific filter and can be represented as GL_L and the like. Converted filter information indicates the filter coefficients after the prototype filter information has been converted and can be represented as GL_L′ and the like. Sub-rendering information is the filter information obtained by spatializing the prototype filter information to generate a surround signal and can be represented as FL_L1 and the like. Rendering information is the filter information required for executing rendering and can be represented as HL_L and the like. Interpolated/smoothed rendering information is the filter information obtained by interpolating/smoothing the rendering information and can be represented as HL_L′ and the like. This specification refers to the above filter information; however, the present invention is not restricted by the names of the filter information. In particular, HRTF is taken as an example of the filter information, but the present invention is not limited to HRTF.

The rendering unit 900 receives the decoded downmix signal and the rendering information and then generates a surround signal from them. The surround signal can be a signal that provides a surround effect to an audio system capable of generating only a stereo signal. The present invention can also be applied to various systems other than such audio systems.

FIG. 2 is a structural diagram of a bitstream of an audio signal according to one embodiment of the present invention, in which the bitstream includes an encoded downmix signal and encoded spatial information.

Referring to FIG. 2, an audio payload of one frame includes a downmix signal field and an ancillary data field. The encoded spatial information can be stored in the ancillary data field. For example, if the audio payload is 48 to 128 kbps (kilobits per second), the spatial information can have a range of 5 to 32 kbps. However, no limitation is placed on the ranges of the audio payload and the spatial information.
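The two-field frame described for FIG. 2 can be illustrated with a small Python sketch. The byte layout below (two big-endian 16-bit length fields followed by the two payloads) is purely hypothetical and is not the bitstream syntax of the patent or of any codec; it only shows how a multiplexer and demultiplexer could carry encoded spatial information alongside the encoded downmix signal.

```python
import struct
from dataclasses import dataclass

@dataclass
class AudioFrame:
    downmix: bytes     # encoded downmix signal field
    ancillary: bytes   # ancillary data field carrying encoded spatial information

def mux(frame):
    """Pack one frame using a hypothetical length-prefixed layout."""
    header = struct.pack(">HH", len(frame.downmix), len(frame.ancillary))
    return header + frame.downmix + frame.ancillary

def demux(payload):
    """Split a packed frame back into its two fields."""
    n_downmix, n_ancillary = struct.unpack(">HH", payload[:4])
    downmix = payload[4:4 + n_downmix]
    ancillary = payload[4 + n_downmix:4 + n_downmix + n_ancillary]
    return AudioFrame(downmix, ancillary)

frame = AudioFrame(downmix=b"\x01\x02\x03", ancillary=b"\xaa\xbb")
roundtrip = demux(mux(frame))   # recovers both fields unchanged
```

A decoder that does not understand the ancillary field could still read the downmix field alone, which is why carrying the spatial information as side data keeps the stream usable on stereo-only devices.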

FIG. 3 is a detailed block diagram of a spatial information converting unit according to one embodiment of the present invention.

Referring to FIG. 3, the spatial information converting unit 1000 includes a source mapping unit 1010, a sub-rendering information generating unit 1020, an integrating unit 1030, a processing unit 1040, and a domain converting unit 1050.

The source mapping unit 1010 generates source mapping information corresponding to each source of an audio signal by executing source mapping using the spatial information. Here, the source mapping information means per-source information generated, by using the spatial information and the like, so as to correspond to each source of the audio signal. A source includes a channel, in which case source mapping information corresponding to each channel is generated. The source mapping information can be represented as coefficients. The source mapping process is explained in detail later with reference to FIG. 4 and FIG. 5.

The sub-rendering information generating unit 1020 generates sub-rendering information corresponding to each source by using the source mapping information and the filter information. For example, if the rendering unit 900 is an HRTF filter, the sub-rendering information generating unit 1020 can generate the sub-rendering information by using HRTF filter information.

The integrating unit 1030 generates rendering information by integrating the sub-rendering information so that it corresponds to each source of the downmix signal. The rendering information, which is generated by using the spatial information and the filter information, is the information that generates a surround signal when applied to the downmix signal. The rendering information takes the form of filter coefficients. The integration can be omitted to reduce the amount of computation in the rendering process. The rendering information is then transferred to the processing unit 1040.

The processing unit 1040 includes an interpolating unit 1041 and/or a smoothing unit 1042. The rendering information is interpolated by the interpolating unit 1041 and/or smoothed by the smoothing unit 1042.

The domain converting unit 1050 converts the domain of the rendering information into the domain of the downmix signal used by the rendering unit 900. The domain converting unit 1050 can be placed at any of various positions, including the position shown in FIG. 3. If the rendering information is generated on the same domain as that of the rendering unit 900, the domain converting unit 1050 can be omitted. The domain-converted rendering information is then transferred to the rendering unit 900.

The spatial information converting unit 1000 can include a filter information converting unit 1060. In FIG. 3, the filter information converting unit 1060 is provided within the spatial information converting unit 1000. Alternatively, the filter information converting unit 1060 can be provided outside the spatial information converting unit 1000. The filter information converting unit 1060 converts arbitrary filter information, e.g., an HRTF, into a form suitable for generating sub-rendering information or rendering information. The conversion of the filter information can include the following steps.

First, a step of matching the domains can be included. This step is needed if the domain of the filter information does not match the domain in which rendering is performed. For example, a step of converting a time-domain HRTF into the DFT, QMF, or hybrid domain used for generating the rendering information is required.

Second, a coefficient reduction step can be included. This makes it easier to store the domain-converted HRTF and to apply it to the spatial information. For example, if the prototype filter coefficients have a response with a large number of taps (a long length), then in the 5.1-channel case the corresponding coefficients must be stored in memory sized for a total of 10 responses of that length. This increases both the memory load and the amount of computation. To avoid this problem, the filter coefficients to be stored can be reduced during the domain conversion while the filter characteristics are maintained. For example, the HRTF response can be converted into a small number of parameter values. In that case, the parameter generation process and the parameter values can differ according to the domain in which they are applied.
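The memory argument above can be made concrete with a short Python calculation. It assumes that the total of 10 responses arises from 5 channels times 2 output ears, and the tap counts (512 and 32) and the 4-byte coefficient size are hypothetical values chosen only for illustration.

```python
def hrtf_storage_bytes(taps, num_channels=5, num_ears=2, bytes_per_coeff=4):
    """Return (number of responses, bytes needed to store all of them).

    All default values are illustrative assumptions, not figures from the text.
    """
    responses = num_channels * num_ears
    return responses, responses * taps * bytes_per_coeff

responses, full_size = hrtf_storage_bytes(taps=512)   # 10 responses, 20480 bytes
_, reduced_size = hrtf_storage_bytes(taps=32)         # 1280 bytes after reduction
```

Under these assumed numbers, reducing each response from 512 to 32 coefficients cuts the storage by a factor of 16, which is the kind of saving the coefficient reduction step is aiming for.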

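The downmix signal passes through a domain converting unit 1110 and/or a decorrelating unit 1200 before being rendered with the rendering information. If the domain of the rendering information differs from that of the downmix signal, the domain converting unit 1110 converts the domain of the downmix signal so that the two domains match.

The decorrelating unit 1200 is applied to the domain-converted downmix signal. This can require a relatively large amount of computation compared with applying a decorrelator to the rendering information. However, it prevents distortion from occurring while the rendering information is generated. If the computational load permits, the decorrelating unit 1200 can include a plurality of decorrelators that differ from one another in their characteristics. If the downmix signal is a stereo signal, the decorrelating unit 1200 need not be used. In FIG. 3, if a domain-converted mono downmix signal, i.e., a mono downmix signal on a frequency, hybrid, QMF, or DFT domain, is used in the rendering process, a decorrelator is used on the corresponding domain. The present invention also includes a decorrelator used on a time domain. In that case, the mono downmix signal before the domain converting unit 1100 is input directly to the decorrelating unit 1200. A first-order or higher-order IIR filter (or an FIR filter) can be used as the decorrelator.

Subsequently, the rendering unit 900 generates a surround signal by using the downmix signal, the decorrelated downmix signal, and the rendering information. If the downmix signal is a stereo signal, the decorrelated downmix signal need not be used. Details of the rendering process are described later with reference to FIGS. 6 to 9.

The surround signal is converted to the time domain by an inverse domain converting unit 1300 and then output. A user can thereby listen to sound having a multi-channel effect through stereo earphones or the like.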

FIG. 4 and FIG. 5 are block diagrams of channel configurations used for a source mapping process according to one embodiment of the present invention. The source mapping process generates source mapping information corresponding to each source of an audio signal by using spatial information. As mentioned in the foregoing description, a source includes a channel, and the source mapping information can be generated so as to correspond to the channels shown in FIG. 4 and FIG. 5. The source mapping information is generated in a form suitable for the rendering process.

For example, if the downmix signal is a mono signal, the source mapping information can be generated by using spatial information such as CLD1 to CLD5 and ICC1 to ICC5.

The source mapping information can be represented as values such as D_L, D_R, D_C, D_LFE, D_Ls, and D_Rs. The process for generating the source mapping information can vary according to the tree structure corresponding to the spatial information, the range of spatial information to be used, and the like. In this specification the downmix signal is, for example, a mono signal, which does not limit the present invention.

The left and right channel outputs of the rendering unit 900 can be expressed as Math Figure 1.

Math Figure 1

Lo = L*GL_L′ + C*GC_L′ + R*GR_L′ + Ls*GLs_L′ + Rs*GRs_L′

Ro = L*GL_R′ + C*GC_R′ + R*GR_R′ + Ls*GLs_R′ + Rs*GRs_R′

In this case, the operator '*' indicates a product on the DFT domain and can be replaced by a convolution on the QMF or time domain.
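Math Figure 1 can be read as a per-bin sum of products on the DFT domain. The Python sketch below follows that reading; the function name, the dictionary-based data layout, and the two-bin example values are assumptions made for illustration, and the filter values are arbitrary numbers rather than real HRTF data.

```python
CHANNEL_NAMES = ["L", "C", "R", "Ls", "Rs"]

def render_two_channel(channels, filters_left, filters_right):
    """Compute Lo and Ro per DFT bin as in Math Figure 1.

    channels:      dict mapping channel name -> list of complex DFT bins
    filters_left:  dict mapping channel name -> converted filter bins (G*_L')
    filters_right: dict mapping channel name -> converted filter bins (G*_R')
    """
    n_bins = len(channels["L"])
    lo = [0j] * n_bins
    ro = [0j] * n_bins
    for name in CHANNEL_NAMES:
        for k in range(n_bins):
            lo[k] += channels[name][k] * filters_left[name][k]
            ro[k] += channels[name][k] * filters_right[name][k]
    return lo, ro

channels = {"L": [1, 1], "C": [2, 0], "R": [0, 1], "Ls": [1j, 0], "Rs": [0, 0]}
filters_left = {name: [0.5, 0.5] for name in CHANNEL_NAMES}
filters_right = {name: [0.0, 0.0] for name in CHANNEL_NAMES}
filters_right["L"] = [1.0, 1.0]   # only the L channel reaches the right output here

lo, ro = render_two_channel(channels, filters_left, filters_right)
# lo == [1.5+0.5j, 1.0], ro == [1.0, 1.0]
```

On the QMF or time domain, the inner product would be replaced by a convolution, as the text notes.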

The present invention includes a method of generating L, C, R, Ls, and Rs from source mapping information that uses spatial information, or from source mapping information that uses both spatial information and filter information. For example, the source mapping information can be generated by using only the CLD of the spatial information or by using both the CLD and the ICC of the spatial information. The method of generating the source mapping information by using the CLD only is explained as follows.

If the tree structure has the structure shown in FIG. 4, a first method of obtaining the source mapping information by using the CLD only can be expressed as Math Figure 2.

Math Figure 2

In this case, [...], and 'm' indicates the mono downmix signal.
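The equation of Math Figure 2 is not reproduced in this text, so the following Python sketch is not a reconstruction of it. It only illustrates the general idea of deriving per-channel source mapping coefficients from CLD values alone, assuming the gain convention commonly used for OTT boxes in parametric multi-channel coding (two gains whose squares sum to one) and a hypothetical five-box tree. The tree layout, the indexing of the CLD values, and all names are assumptions.

```python
import math

def ott_gains(cld_db):
    """Split one signal into two according to a CLD in dB; c1^2 + c2^2 == 1."""
    ratio = 10.0 ** (cld_db / 10.0)
    c1 = math.sqrt(ratio / (1.0 + ratio))
    c2 = math.sqrt(1.0 / (1.0 + ratio))
    return c1, c2

def source_mapping_from_cld(cld):
    """cld: five CLD values in dB, one per OTT box of the assumed tree."""
    c1_0, c2_0 = ott_gains(cld[0])   # OTT0: front group vs. surround pair
    c1_1, c2_1 = ott_gains(cld[1])   # OTT1: (L, R) pair vs. (C, LFE) pair
    c1_2, c2_2 = ott_gains(cld[2])   # OTT2: Ls vs. Rs
    c1_3, c2_3 = ott_gains(cld[3])   # OTT3: L vs. R
    c1_4, c2_4 = ott_gains(cld[4])   # OTT4: C vs. LFE
    # Each coefficient is the product of the gains along the path from m to the channel.
    return {
        "D_L": c1_3 * c1_1 * c1_0,
        "D_R": c2_3 * c1_1 * c1_0,
        "D_C": c1_4 * c2_1 * c1_0,
        "D_LFE": c2_4 * c2_1 * c1_0,
        "D_Ls": c1_2 * c2_0,
        "D_Rs": c2_2 * c2_0,
    }

mapping = source_mapping_from_cld([3.0, 6.0, 0.0, -2.0, 10.0])
total_energy = sum(d * d for d in mapping.values())   # stays at 1.0
```

Under this convention the squared coefficients always sum to one, so the mapping redistributes the energy of the mono downmix 'm' across the channels without adding or removing energy.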

If the tree structure has the structure shown in FIG. 5, a second method of obtaining the source mapping information by using the CLD only can be expressed as Math Figure 3.

Math Figure 3

If the source mapping information is generated by using the CLD only, the three-dimensional effect may be reduced. The source mapping information can therefore be generated by using the ICC and/or a decorrelator. The multi-channel signal generated by using the decorrelator output signal dx(m) can be expressed as Math Figure 4.

Math Figure 4

In this case, 'A', 'B', and 'C' are values that can be expressed by using the CLD and ICC, 'd0' to 'd3' indicate decorrelators, and 'm' indicates the mono downmix signal. However, this method cannot generate source mapping information such as D_L and D_R.

Therefore, a first method of generating the source mapping information by using the CLD, ICC, and/or decorrelators for the downmix signal regards dx(m) (x = 0, 1, 2) as independent inputs. In this case, 'dx' can be used in generating sub-rendering filter information according to Math Figure 5.

Math Figure 5

FL_L_M = d_L_M*GL_L′ (mono input → left output)

FL_R_M = d_L_M*GL_R′ (mono input → right output)

FL_L_Dx = d_L_Dx*GL_L′ (Dx output → left output)

FL_R_Dx = d_L_Dx*GL_R′ (Dx output → right output)

The rendering information can then be generated from the results of Math Figure 5 according to Math Figure 6.

Math Figure 6

HM_L = FL_L_M + FR_L_M + FC_L_M + FLS_L_M + FRS_L_M + FLFE_L_M

HM_R = FL_R_M + FR_R_M + FC_R_M + FLS_R_M + FRS_R_M + FLFE_R_M

HDx_L = FL_L_Dx + FR_L_Dx + FC_L_Dx + FLS_L_Dx + FRS_L_Dx + FLFE_L_Dx

HDx_R = FL_R_Dx + FR_R_Dx + FC_R_Dx + FLS_R_Dx + FRS_R_Dx + FLFE_R_Dx
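The pattern of Math Figures 5 and 6 (weight each channel's converted filter by its source mapping coefficient, then sum over channels) can be sketched in Python as follows. Math Figure 5 shows the L channel only; extending it to the other channels by analogy is an assumption of this sketch, as are the function names and the scalar example values, which stand in for per-band filter coefficients.

```python
CHANNELS = ["L", "R", "C", "Ls", "Rs", "LFE"]

def sub_rendering(d, g_left, g_right):
    """Math Figure 5 pattern: F[X] = d[X] * G[X]' for the left and right outputs."""
    return {x: {"left": d[x] * g_left[x], "right": d[x] * g_right[x]}
            for x in CHANNELS}

def integrate(sub):
    """Math Figure 6 pattern: sum the sub-rendering information over all channels."""
    h_left = sum(sub[x]["left"] for x in CHANNELS)
    h_right = sum(sub[x]["right"] for x in CHANNELS)
    return h_left, h_right

# Hypothetical source mapping coefficients for the mono input (d_X_M).
d_mono = {x: 0.5 for x in CHANNELS}
# Hypothetical converted filter values for one frequency band.
g_left = {"L": 1.0, "R": 0.2, "C": 0.7, "Ls": 0.8, "Rs": 0.1, "LFE": 0.5}
g_right = {"L": 0.2, "R": 1.0, "C": 0.7, "Ls": 0.1, "Rs": 0.8, "LFE": 0.5}

hm_left, hm_right = integrate(sub_rendering(d_mono, g_left, g_right))
# Both sums equal 0.5 * 3.3 = 1.65 for these symmetric example values.
```

The same two functions would be called once more per decorrelator output Dx, with d_X_Dx in place of d_X_M, to obtain HDx_L and HDx_R.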

Details of the rendering information generating process are explained later. The first method of generating the source mapping information by using the CLD, ICC, and/or decorrelators handles each dx output value, i.e., 'dx(m)', as an independent input, which can increase the amount of computation.

A second method of generating the source mapping information by using the CLD, ICC, and/or decorrelators employs decorrelators applied on the frequency domain. In this case, the source mapping information can be expressed as Math Figure 7.

Math Figure 7

In this case, by applying the decorrelators on the frequency domain, source mapping information such as D_L and D_R can be generated in the same form as before the decorrelators were applied. The method can therefore be implemented in a simple manner.

A third method of generating the source mapping information by using the CLD, ICC, and/or decorrelators uses a decorrelator having an all-pass characteristic as the decorrelator of the second method. Here, the all-pass characteristic means that the magnitude is fixed and only the phase varies. The present invention can also use a decorrelator having an all-pass characteristic as the decorrelator of the first method.
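The all-pass property described above can be checked numerically. The Python sketch below uses a standard first-order all-pass section, H(z) = (a + z^-1) / (1 + a z^-1), as a stand-in for a decorrelator; the patent does not give its decorrelator coefficients, so the structure and the value a = 0.5 are assumptions made for illustration.

```python
import cmath

def allpass_response(a, omega):
    """Frequency response of H(z) = (a + z^-1) / (1 + a z^-1) at angular frequency omega."""
    z_inv = cmath.exp(-1j * omega)
    return (a + z_inv) / (1.0 + a * z_inv)

def allpass_filter(x, a):
    """Time-domain form of the same section: y[n] = a*x[n] + x[n-1] - a*y[n-1]."""
    y = []
    x_prev = 0.0
    y_prev = 0.0
    for xn in x:
        yn = a * xn + x_prev - a * y_prev
        y.append(yn)
        x_prev, y_prev = xn, yn
    return y

a = 0.5   # hypothetical coefficient; |a| < 1 keeps the filter stable
omegas = [0.1, 0.5, 1.0, 2.0, 3.0]
magnitudes = [abs(allpass_response(a, w)) for w in omegas]        # all equal to 1
phases = [cmath.phase(allpass_response(a, w)) for w in omegas]    # vary with frequency

impulse = [1.0] + [0.0] * 199
impulse_response = allpass_filter(impulse, a)
energy = sum(v * v for v in impulse_response)                     # close to 1
```

The magnitude is 1 at every frequency while the phase changes, so the filter output has the same spectrum envelope as its input but a different waveform, which is what makes such a section usable for decorrelation.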

A fourth method of generating the source mapping information using the CLD, ICC and/or decorrelators carries out decorrelation by using decorrelators for the respective channels (e.g., L, R, C, Ls, Rs, etc.) instead of using 'd0' to 'd3' of the second method. In this case, the source mapping information can be expressed as Math Figure 8.

Math Figure 8

In this case, 'k' is the energy value of a decorrelated signal determined from the CLD and ICC values. And, 'd_L', 'd_R', 'd_C', 'd_Ls' and 'd_Rs' indicate the decorrelators applied to the respective channels.

A fifth method of generating the source mapping information using the CLD, ICC and/or decorrelators maximizes the decorrelation effect by configuring 'd_L' and 'd_R' of the fourth method to be symmetric to each other and by configuring 'd_Ls' and 'd_Rs' of the fourth method to be symmetric to each other. In particular, assuming d_R=f(d_L) and d_Rs=f(d_Ls), only 'd_L', 'd_C' and 'd_Ls' need to be designed.

A sixth method of generating the source mapping information using the CLD, ICC and/or decorrelators is to configure 'd_L' and 'd_Ls' of the fifth method to be correlated. And, 'd_L' and 'd_C' can be configured to be correlated as well.

A seventh method of generating the source mapping information using the CLD, ICC and/or decorrelators is to build the decorrelators of the third method as a serial or nested structure of all-pass filters. The seventh method relies on the fact that the all-pass characteristic is maintained even if all-pass filters are connected in a serial or nested structure. When all-pass filters are used in a serial or nested structure, more varied phase responses can be obtained. Hence, the decorrelation effect can be maximized.

An eighth method of generating the source mapping information using the CLD, ICC and/or decorrelators is to use a related-art decorrelator together with the frequency-domain decorrelator of the second method. In this case, the multi-channel signal can be expressed as Math Figure 9.

Math Figure 9

In this case, the filter coefficient generating process is the same as the process explained in the first method, except that 'A' is changed into 'A+Kd'.

A ninth method of generating the source mapping information using the CLD, ICC and/or decorrelators is, when a related-art decorrelator is used, to generate an additionally decorrelated value by applying the frequency-domain decorrelator to the output of the related-art decorrelator. Hence, the source mapping information can be generated with a small amount of computation by overcoming the limitation of the frequency-domain decorrelator.

A tenth method of generating the source mapping information using the CLD, ICC and/or decorrelators is expressed as Math Figure 10.

Math Figure 10

In this case, 'di_(m)' (i=L, R, C, Ls, Rs) is the decorrelator output value applied to channel i. And, the output value can be processed in a time domain, a frequency domain, a QMF domain, a hybrid domain or the like. If the output value is processed in a domain different from the currently processed domain, it can be converted by domain conversion. The same 'd' can be used for d_L, d_R, d_C, d_Ls and d_Rs. In this case, Math Figure 10 can be expressed in a very simple manner.

If Math Figure 10 is applied to Math Figure 1, Math Figure 1 can be expressed as Math Figure 11.

Math Figure 11

Lo=HM_L*m+HMD_L*d(m)

Ro=HM_R*m+HMD_R*d(m)

In this case, the rendering information HM_L is a value obtained by combining the spatial information and the filter information so as to generate the surround signal Lo from the input m. And, the rendering information HM_R is a value obtained by combining the spatial information and the filter information so as to generate the surround signal Ro from the input m. Moreover, 'd(m)' is a decorrelator output value generated either by converting a decorrelator output value in an arbitrary domain into a value in the current domain or by processing in the current domain. The rendering information HMD_L is a value indicating the extent to which the decorrelator output value d(m) is added to 'Lo' in rendering d(m), and is also a value obtained by combining the spatial information and the filter information. The rendering information HMD_R is a value indicating the extent to which the decorrelator output value d(m) is added to 'Ro' in rendering d(m).

Thus, in order to perform a rendering process on a mono downmix signal, the present invention proposes a method of generating a surround signal by rendering the rendering information, which is generated by combining the spatial information and the filter information (e.g., HRTF filter coefficients), to the downmix signal and the decorrelated downmix signal. This rendering process can be executed regardless of domain. If 'd(m)' is expressed as 'd*m' (a product operator) executed in the frequency domain, Math Figure 11 can be expressed as Math Figure 12.

Math Figure 12

Lo=HM_L*m+HMD_L*d*m=HMoverall_L*m

Ro=HM_R*m+HMD_R*d*m=HMoverall_R*m

Thus, when the rendering process is executed on the downmix signal in the frequency domain, the amount of computation can be minimized by representing the value obtained by combining the spatial information, the filter information and the decorrelator in product form.
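The saving described by Math Figure 12 can be illustrated for a single frequency bin. The following is only a minimal sketch: the helper name `combine_rendering` and all numeric values are assumptions made for illustration, not values from the specification.

```python
# Illustrative sketch of Math Figure 12 for one frequency bin.
# All numeric values below are made-up assumptions.

def combine_rendering(hm, hmd, d):
    """Fold the decorrelator response d into one overall coefficient."""
    return hm + hmd * d

# Hypothetical per-bin values (complex, as frequency-domain data usually is).
HM_L = 0.8 + 0.1j
HMD_L = 0.3 - 0.2j
d = 0.0 + 1.0j      # all-pass decorrelator: unit magnitude, phase change only
m = 0.5 - 0.25j     # one bin of the mono downmix signal

HMoverall_L = combine_rendering(HM_L, HMD_L, d)

# Two-step rendering (Math Figure 11 with d(m) = d*m) ...
lo_two_step = HM_L * m + HMD_L * (d * m)
# ... gives the same result as the single multiplication of Math Figure 12.
lo_one_step = HMoverall_L * m
```

Because HMoverall_L does not depend on the signal, it can be computed once per parameter update and reused for every frame until the rendering information changes.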

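FIG. 6 and FIG. 7 are detailed block diagrams of a rendering unit for a stereo downmix signal according to one embodiment of the present invention.

Referring to FIG. 6, the rendering unit 900 includes a rendering unit-A 910 and a rendering unit-B 920.

If the downmix signal is a stereo signal, the spatial information converting unit 1000 generates rendering information for the left and right channels of the downmix signal. The rendering unit-A 910 generates a surround signal by rendering the rendering information for the left channel of the downmix signal to the left channel of the downmix signal. And, the rendering unit-B 920 generates a surround signal by rendering the rendering information for the right channel of the downmix signal to the right channel of the downmix signal. The channel names are merely exemplary and do not limit the present invention.

The rendering information can include rendering information delivered to the same channel and rendering information delivered to another channel.

For instance, the spatial information converting unit 1000 can generate rendering information HL_L and HL_R input to the rendering unit for the left channel of the downmix signal, in which HL_L is delivered to the left output corresponding to the same channel and HL_R is delivered to the right output corresponding to the other channel. And, the spatial information converting unit 1000 can generate rendering information HR_R and HR_L input to the rendering unit for the right channel of the downmix signal, in which HR_R is delivered to the right output corresponding to the same channel and HR_L is delivered to the left output corresponding to the other channel.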

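Referring to FIG. 7, the rendering unit 900 includes a rendering unit-1A 911, a rendering unit-2A 912, a rendering unit-1B 921 and a rendering unit-2B 922.

The rendering unit 900 receives the stereo downmix signal and the rendering information from the spatial information converting unit 1000. Subsequently, the rendering unit 900 generates a surround signal by rendering the rendering information to the stereo downmix signal.

In particular, the rendering unit-1A 911 performs rendering using HL_L, the rendering information delivered to the same channel among the rendering information for the left channel of the downmix signal. The rendering unit-2A 912 performs rendering using HL_R, the rendering information delivered to the other channel among the rendering information for the left channel of the downmix signal. The rendering unit-1B 921 performs rendering using HR_R, the rendering information delivered to the same channel among the rendering information for the right channel of the downmix signal. And, the rendering unit-2B 922 performs rendering using HR_L, the rendering information delivered to the other channel among the rendering information for the right channel of the downmix signal.

In the following description, the rendering information delivered to another channel is named 'cross-rendering information'. The cross-rendering information HL_R or HR_L is applied to the same channel, and the result is then added to the other channel by an adder. In this case, the cross-rendering information HL_R and/or HR_L can be zero. If the cross-rendering information HL_R and/or HR_L is zero, it means that no contribution is made to the corresponding path.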
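The four rendering paths and the two adders of FIG. 7 can be sketched as follows. This is a minimal illustration that assumes frequency-domain processing with one coefficient per bin; the function name `render_stereo` and the sample values are hypothetical.

```python
def render_stereo(left, right, HL_L, HL_R, HR_L, HR_R):
    """Render one frame of a stereo downmix, bin by bin.

    HL_R and HR_L are the cross-rendering coefficients; the sums below
    play the role of the adders that merge each cross path into the
    opposite output.
    """
    lo = [hll * l + hrl * r for l, r, hll, hrl in zip(left, right, HL_L, HR_L)]
    ro = [hlr * l + hrr * r for l, r, hlr, hrr in zip(left, right, HL_R, HR_R)]
    return lo, ro

# Hypothetical two-bin frame. HR_L is zero, so the right downmix channel
# contributes nothing to the left output.
left = [1.0, 2.0]
right = [4.0, 8.0]
lo, ro = render_stereo(
    left, right,
    HL_L=[0.5, 0.5], HL_R=[0.25, 0.25],
    HR_L=[0.0, 0.0], HR_R=[1.0, 1.0],
)
```

With these values, `lo` depends only on `left`, which matches the statement that zero cross-rendering information makes no contribution to the corresponding path.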

An example of the surround signal generating method shown in FIG. 6 or FIG. 7 is explained as follows.

First of all, if the downmix signal is a stereo signal, the downmix signal defined as 'x', the source mapping information generated using the spatial information and defined as 'D', the prototype filter information defined as 'G', the multi-channel signal defined as 'p' and the surround signal defined as 'y' can be represented by the matrices shown in Math Figure 13.

Math Figure 13

In this case, if the above values are in the frequency domain, they can be developed as follows.

First of all, the multi-channel signal p, as shown in Math Figure 14, can be expressed as a product of the source mapping information D generated using the spatial information and the downmix signal x.

Math Figure 14

p=D·x

And, the surround signal y, as shown in Math Figure 15, can be generated by rendering the prototype filter information G to the multi-channel signal p.

Math Figure 15

y=G·p

In this case, if Math Figure 14 is substituted for p, Math Figure 16 is obtained.

Math Figure 16

y=GDx

In this case, if the rendering information H is defined as H=GD, the surround signal y and the downmix signal x have the relation of Math Figure 17.

Math Figure 17

y=Hx

Hence, after the rendering information H has been generated by processing the product of the filter information and the source mapping information, the downmix signal x is multiplied by the rendering information H to generate the surround signal y.

According to the definition of the rendering information H, H can be expressed as Math Figure 18.

Math Figure 18

H=GD
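The relation between the two rendering paths, y=G(Dx) and y=(GD)x, can be checked numerically for a single frequency bin. The sketch below assumes five multi-channel positions (L, R, C, Ls, Rs), so D is 5x2 and G is 2x5; these shapes and all values are illustrative assumptions, since the matrices of Math Figure 13 are not reproduced here.

```python
def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]

# Hypothetical source mapping information D (5 channels x 2 downmix channels).
D = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.75, 0.0], [0.0, 0.75]]
# Hypothetical prototype filter information G (2 outputs x 5 channels).
G = [[1.0, 0.25, 0.5, 0.5, 0.125],
     [0.25, 1.0, 0.5, 0.125, 0.5]]
# One bin of the stereo downmix signal x (2 x 1).
x = [[2.0], [4.0]]

H = matmul(G, D)                    # rendering information, 2 x 2
y_direct = matmul(H, x)             # y = H x
y_via_p = matmul(G, matmul(D, x))   # y = G p with p = D x
```

The direct path never forms the five-channel signal p, and H is only 2x2, so once H is known each bin costs four multiplications instead of twenty.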

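FIG. 8 and FIG. 9 are detailed block diagrams of a rendering unit for a mono downmix signal according to one embodiment of the present invention.

Referring to FIG. 8, the rendering unit 900 includes a rendering unit-A 930 and a rendering unit-B 940.

If the downmix signal is a mono signal, the spatial information converting unit 1000 generates rendering information HM_L and HM_R, in which HM_L is used in rendering the mono signal to the left channel and HM_R is used in rendering the mono signal to the right channel.

The rendering unit-A 930 applies the rendering information HM_L to the mono downmix signal to generate a left-channel surround signal. The rendering unit-B 940 applies the rendering information HM_R to the mono downmix signal to generate a right-channel surround signal.

The rendering unit 900 in the drawing does not use a decorrelator. Yet, if the rendering unit-A 930 and the rendering unit-B 940 perform rendering using the rendering information HMoverall_L and HMoverall_R defined in Math Figure 12, respectively, outputs to which the decorrelator is applied can be obtained.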

Meanwhile, if a stereo signal rather than a surround signal is to be output after rendering of the mono downmix signal is complete, the following two methods are possible.

The first method is to use values for stereo output instead of the rendering information for the surround effect. In this case, a stereo signal can be obtained by modifying only the rendering information in the structure shown in FIG. 3.

The second method is as follows: in a decoding process that generates a multi-channel signal using the downmix signal and the spatial information, a stereo signal can be obtained by performing the decoding process only up to the step at which the specific number of channels is obtained.

Referring to FIG. 9, the rendering unit 900 corresponds to the case in which the decorrelated signal is represented as one, i.e., Math Figure 11. The rendering unit 900 includes a rendering unit-1A 931, a rendering unit-2A 932, a rendering unit-1B 941 and a rendering unit-2B 942. The rendering unit 900 is similar to the rendering unit for the stereo downmix signal, except that it includes the rendering units 941 and 942 for the decorrelated signal.

In the case of the stereo downmix signal, one of the two channels can be regarded as a decorrelated signal. So, without employing additional decorrelators, the rendering process can be performed using the four kinds of previously defined rendering information HL_L, HL_R and the like. In particular, the rendering unit-1A 931 generates a signal to be delivered to the same channel by applying the rendering information HM_L to the mono downmix signal. The rendering unit-2A 932 generates a signal to be delivered to the other channel by applying the rendering information HM_R to the mono downmix signal. The rendering unit-1B 941 generates a signal to be delivered to the same channel by applying the rendering information HMD_R to the decorrelated signal. And, the rendering unit-2B 942 generates a signal to be delivered to the other channel by applying the rendering information HMD_L to the decorrelated signal.

If the downmix signal is a mono signal, the downmix signal defined as x, the source channel information defined as D, the prototype filter information defined as G, the multi-channel signal defined as p and the surround signal defined as y can be represented by the matrices shown in Math Figure 19.

Math Figure 19

x=[Mi],

In this case, the relation between the matrices is similar to that of the case in which the downmix signal is a stereo signal. So its details are omitted.

Meanwhile, the source mapping information described with reference to FIG. 4 and FIG. 5 and the rendering information generated using the source mapping information have values that differ per frequency band, parameter band and/or transmitted timeslot. In this case, if the value of the source mapping information and/or the rendering information differs considerably between neighboring bands or between boundary timeslots, distortion may occur in the rendering process. To prevent the distortion, a smoothing process in the frequency and/or time domain is needed. Besides frequency-domain smoothing and/or time-domain smoothing, other smoothing methods suitable for rendering can be used. And, a value obtained by multiplying the source mapping information or the rendering information by a specific gain can be used.

FIG. 10 and FIG. 11 are block diagrams of a smoothing unit and an expanding unit according to one embodiment of the present invention.

As shown in FIG. 10 and FIG. 11, the smoothing method according to the present invention is applicable to the rendering information and/or the source mapping information. Yet, the smoothing method is applicable to other types of information as well. The following description covers smoothing in the frequency domain. Yet, the present invention includes time-domain smoothing as well as frequency-domain smoothing.

Referring to FIG. 10 and FIG. 11, the smoothing unit 1042 is able to perform smoothing on the rendering information and/or the source mapping information. A detailed example of the position at which the smoothing takes place will be described later with reference to FIGS. 18 to 20.

The smoothing unit 1042 can be configured together with an expanding unit 1043, in which the rendering information and/or the source mapping information can be expanded into a range wider than that of a parameter band, for example a filter band. In particular, the source mapping information can be expanded to a frequency resolution (e.g., a filter band) corresponding to the filter information so that it can be multiplied by the filter information (e.g., HRTF filter coefficients). The smoothing according to the present invention is executed before the expansion or together with the expansion. The smoothing used together with the expansion can employ one of the methods shown in FIGS. 12 to 16.
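The expansion step can be sketched as repeating each parameter-band value over the filter bands it covers, after which the result can be multiplied by the filter information one band at a time. The band layout, the function name `expand_to_filter_bands` and all values below are assumptions chosen for illustration only.

```python
def expand_to_filter_bands(param_values, band_edges):
    """Repeat each parameter-band value over the filter bands it covers.

    band_edges[i] is the index of the first filter band of parameter
    band i; the last entry is the total number of filter bands.
    """
    expanded = []
    for value, start, stop in zip(param_values, band_edges, band_edges[1:]):
        expanded.extend([value] * (stop - start))
    return expanded

D_L = [0.9, 0.6, 0.3]      # hypothetical values for three parameter bands
edges = [0, 2, 5, 8]       # hypothetical mapping onto eight filter bands
GL_L = [1.0] * 8           # hypothetical filter coefficients, one per filter band

D_L_expanded = expand_to_filter_bands(D_L, edges)
# After expansion both sequences have the same resolution and can be multiplied.
FL_L = [d * g for d, g in zip(D_L_expanded, GL_L)]
```

This plain repetition produces a staircase with a jump at every parameter-band boundary, which is the kind of discontinuity that the smoothing step is meant to reduce.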

FIG. 12 is a graph explaining a first smoothing method according to one embodiment of the present invention.

Referring to FIG. 12, the first smoothing method uses, in each parameter band, a value having the same magnitude as the spatial information. In this case, a smoothing effect can be achieved by using a suitable smoothing function.

FIG. 13 is a graph explaining a second smoothing method according to one embodiment of the present invention.

Referring to FIG. 13, the second smoothing method obtains a smoothing effect by connecting representative positions of the parameter bands. The representative position is the exact center of each parameter band, a center position proportional to a log scale, a Bark scale or the like, the lowest frequency value, or a position determined in advance by a different method.
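One possible reading of the second smoothing method is sketched below: the representative position is taken to be the exact center of each parameter band, and neighboring centers are connected by straight lines. The choice of linear segments, the flat extension outside the first and last centers, and the name `smooth_by_centers` are all assumptions made for this sketch.

```python
def smooth_by_centers(param_values, band_edges):
    """Connect the band centers with straight lines, one value per filter band."""
    # Center of the filter-band indices start .. stop-1 of each parameter band.
    centers = [(start + stop - 1) / 2 for start, stop in zip(band_edges, band_edges[1:])]
    smoothed = []
    for k in range(band_edges[-1]):
        if k <= centers[0]:
            smoothed.append(param_values[0])       # flat below the first center
        elif k >= centers[-1]:
            smoothed.append(param_values[-1])      # flat above the last center
        else:
            # Index of the last center at or below k.
            i = max(j for j, c in enumerate(centers) if c <= k)
            t = (k - centers[i]) / (centers[i + 1] - centers[i])
            smoothed.append(param_values[i] * (1 - t) + param_values[i + 1] * t)
    return smoothed

# Two hypothetical parameter bands of four filter bands each.
curve = smooth_by_centers([1.0, 3.0], [0, 4, 8])
```

Compared with repeating each value across its band, the jump from 1.0 to 3.0 at the band boundary is replaced by a ramp between the two band centers.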

FIG. 14 is a graph explaining a third smoothing method according to one embodiment of the present invention.

Referring to FIG. 14, the third smoothing method performs smoothing in the form of a curve or straight line that smoothly connects the boundaries of the parameters. In this case, the third smoothing method uses a preset boundary smoothing curve or low-pass filtering by a first-order or higher-order IIR filter or an FIR filter.

FIG. 15 is a graph explaining a fourth smoothing method according to one embodiment of the present invention.

Referring to FIG. 15, the fourth smoothing method achieves a smoothing effect by adding a signal such as random noise to the spatial information contour. In this case, values that differ per channel or per band can be used as the random noise. When the random noise is added in the frequency domain, only the magnitude can be added while the phase is left intact. Besides the smoothing effect in the frequency domain, the fourth smoothing method can also achieve an inter-channel decorrelation effect.

FIG. 16 is a graph explaining a fifth smoothing method according to one embodiment of the present invention.

Referring to FIG. 16, the fifth smoothing method uses a combination of the second to fourth smoothing methods. For instance, after the representative positions of the respective parameter bands have been connected, random noise is added and low-pass filtering is then applied. The order of these steps can be modified. The fifth smoothing method minimizes discontinuities in the frequency domain and can enhance the inter-channel decorrelation effect.

In the first to fifth smoothing methods, the total power of the spatial information values (e.g., CLD values) of the channels in each frequency band should remain constant. For this, after the smoothing method has been performed per channel, power normalization should be performed. For instance, if the downmix signal is a mono signal, the level values of the respective channels should satisfy the relation of Math Figure 20.

Math Figure 20

D_L(pb)+D_R(pb)+D_C(pb)+D_Ls(pb)+D_Rs(pb)+D_Lfe(pb)=C

In this case, 'pb' ranges from 0 to the total number of parameter bands minus 1, and 'C' is an arbitrary constant.
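A simple way to restore the relation of Math Figure 20 after smoothing is to rescale the channel levels in every parameter band so that they sum to C again. The sketch below is only one possible normalization; the function name `normalize_power`, the choice C=1 and the sample levels are assumptions, and each band is assumed to have a non-zero total.

```python
def normalize_power(levels, C=1.0):
    """Rescale per-band channel levels so that they sum to C in every band.

    levels maps a channel name to a list of level values, one per
    parameter band. Every band is assumed to have a non-zero total.
    """
    n_bands = len(next(iter(levels.values())))
    normalized = {ch: [0.0] * n_bands for ch in levels}
    for pb in range(n_bands):
        total = sum(levels[ch][pb] for ch in levels)
        for ch in levels:
            normalized[ch][pb] = levels[ch][pb] * C / total
    return normalized

# Hypothetical smoothed levels for two parameter bands.
smoothed = {
    "L": [0.30, 0.20], "R": [0.30, 0.25], "C": [0.25, 0.20],
    "Ls": [0.10, 0.15], "Rs": [0.10, 0.15], "Lfe": [0.05, 0.10],
}
D = normalize_power(smoothed, C=1.0)
band_sums = [sum(D[ch][pb] for ch in D) for pb in range(2)]
```

The ratios between the channels in a band are preserved; only the common scale of the band changes.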

FIG. 17 is a diagram explaining the prototype filter information per channel.

Referring to FIG. 17, for rendering, the signal that has passed through the GL_L filter for the left channel source is sent to the left output, whereas the signal that has passed through the GL_R filter is sent to the right output.

Subsequently, a left final output (e.g., Lo) and a right final output (e.g., Ro) are generated by adding together all the signals received from the respective channels. In particular, the rendered left/right channel outputs can be expressed as Math Figure 21.

Math Figure 21

Lo=L*GL_L+C*GC_L+R*GR_L+Ls*GLs_L+Rs*GRs_L

Ro=L*GL_R+C*GC_R+R*GR_R+Ls*GLs_R+Rs*GRs_R

In the present invention, the rendered left/right channel outputs can be generated using L, R, C, Ls and Rs, which are generated by decoding the downmix signal into the multi-channel signal using the spatial information. And, the present invention can also generate the rendered left/right channel outputs using the rendering information without generating L, R, C, Ls and Rs, in which the rendering information is generated using the spatial information and the filter information.

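The process of generating the rendering information using the spatial information is explained with reference to FIGS. 18 to 20 as follows.

FIG. 18 is a block diagram of a first method of generating rendering information in the spatial information converting unit 1000 according to one embodiment of the present invention.

Referring to FIG. 18, as mentioned in the foregoing description, the spatial information converting unit 1000 includes a source mapping unit 1010, a sub-rendering information generating unit 1020, an integrating unit 1030, a processing unit 1040 and a domain converting unit 1050. The spatial information converting unit 1000 has the same configuration as shown in FIG. 3.

The sub-rendering information generating unit 1020 includes at least one sub-rendering information generating unit (a 1st sub-rendering information generating unit to an Nth sub-rendering information generating unit).

The sub-rendering information generating unit 1020 generates sub-rendering information using the filter information and the source mapping information.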

For instance, if the downmix signal is a mono signal, the first sub-rendering information generating unit can generate sub-rendering information corresponding to the left channel of the multi-channel. And, the sub-rendering information can be represented as Math Figure 22 using the source mapping information D_L and the converted filter information GL_L′ and GL_R′.

Math Figure 22

FL_L=D_L*GL_L′

(mono input → filter coefficient to the left output channel)

FL_R=D_L*GL_R′

(mono input → filter coefficient to the right output channel)

In this case, D_L is a value generated using the spatial information in the source mapping unit 1010. Yet, the process of generating D_L can follow the tree structure.

The second sub-rendering information generating unit can generate sub-rendering information FR_L and FR_R corresponding to the right channel of the multi-channel. And, the Nth sub-rendering information generating unit can generate sub-rendering information FRs_L and FRs_R corresponding to the right surround channel of the multi-channel.

If the downmix signal is a stereo signal, the first sub-rendering information generating unit can generate sub-rendering information corresponding to the left channel of the multi-channel. And, the sub-rendering information can be represented as Math Figure 23 using the source mapping information D_L1 and D_L2.

Math Figure 23

FL_L1=D_L1*GL_L′

(left input → filter coefficient to the left output channel)

FL_L2=D_L2*GL_L′

(right input → filter coefficient to the left output channel)

FL_R1=D_L1*GL_R′

(left input → filter coefficient to the right output channel)

FL_R2=D_L2*GL_R′

(right input → filter coefficient to the right output channel)

In Math Figure 23, FL_R1, for example, is interpreted as follows.

First of all, in FL_R1, 'L' indicates the position of the multi-channel, 'R' indicates the output channel of the surround signal, and '1' indicates the channel of the downmix signal. That is, FL_R1 indicates the sub-rendering information used in generating the right output channel of the surround signal from the left channel of the downmix signal.

Secondly, D_L1 and D_L2 are values generated using the spatial information in the source mapping unit 1010.

If the downmix signal is a stereo signal, a plurality of sub-rendering information can be generated from at least one sub-rendering information generating unit in the same manner as in the case in which the downmix signal is a mono signal. The types of sub-rendering information generated by the plurality of sub-rendering information generating units are exemplary and do not limit the present invention.

The sub-rendering information generated by the sub-rendering information generating unit 1020 is transferred to the rendering unit 900 via the integrating unit 1030, the processing unit 1040 and the domain converting unit 1050.

The integrating unit 1030 integrates the sub-rendering information generated per channel into rendering information (e.g., HL_L, HL_R, HR_L, HR_R) for the rendering process. The integrating process in the integrating unit 1030 is explained for the mono signal case and the stereo signal case as follows.

First of all, if the downmix signal is a mono signal, the rendering information can be expressed as Math Figure 24.

Math Figure 24

HM_L=FL_L+FR_L+FC_L+FLs_L+FRs_L+FLFE_L

HM_R=FL_R+FR_R+FC_R+FLs_R+FRs_R+FLFE_R

Secondly, if the downmix signal is a stereo signal, the rendering information can be expressed as Math Figure 25.

Math Figure 25

HL_L=FL_L1+FR_L1+FC_L1+FLs_L1+FRs_L1+FLFE_L1

HR_L=FL_L2+FR_L2+FC_L2+FLs_L2+FRs_L2+FLFE_L2

HL_R=FL_R1+FR_R1+FC_R1+FLs_R1+FRs_R1+FLFE_R1

HR_R=FL_R2+FR_R2+FC_R2+FLs_R2+FRs_R2+FLFE_R2
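The stereo case can be sketched for a single frequency band by first forming the sub-rendering information of Math Figure 23 for every multi-channel position and then summing it as in Math Figure 25. The dictionary layout, the function names and all coefficient values below are illustrative assumptions.

```python
def sub_rendering(D1, D2, G_to_L, G_to_R):
    """Math Figure 23 for one multi-channel position and one band."""
    return {
        "L1": D1 * G_to_L,   # left input  -> left output
        "L2": D2 * G_to_L,   # right input -> left output
        "R1": D1 * G_to_R,   # left input  -> right output
        "R2": D2 * G_to_R,   # right input -> right output
    }

def integrate(sub_infos):
    """Math Figure 25: sum the sub-rendering information over all channels."""
    return {
        "HL_L": sum(f["L1"] for f in sub_infos.values()),
        "HR_L": sum(f["L2"] for f in sub_infos.values()),
        "HL_R": sum(f["R1"] for f in sub_infos.values()),
        "HR_R": sum(f["R2"] for f in sub_infos.values()),
    }

# Hypothetical source mapping values (D_x1, D_x2) per multi-channel position.
source_map = {"L": (1.0, 0.0), "R": (0.0, 1.0), "C": (0.5, 0.5),
              "Ls": (0.5, 0.0), "Rs": (0.0, 0.5), "LFE": (0.25, 0.25)}
# Hypothetical converted filter values (Gx_L', Gx_R') per position.
filters = {"L": (1.0, 0.5), "R": (0.5, 1.0), "C": (0.5, 0.5),
           "Ls": (1.0, 0.25), "Rs": (0.25, 1.0), "LFE": (0.5, 0.5)}

subs = {ch: sub_rendering(*source_map[ch], *filters[ch]) for ch in source_map}
H = integrate(subs)
```

However many multi-channel positions are involved, the integration leaves only four coefficients per band for the stereo case, which is what the rendering unit 900 then applies to the downmix signal.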

Subsequently, the processing unit 1040 includes an interpolating unit 1041 and/or a smoothing unit 1042 and performs interpolation and/or smoothing on the rendering information. The interpolation and/or smoothing can be executed in a time domain, a frequency domain or a QMF domain. In this specification, the time domain is taken as an example, which does not limit the present invention.

If the transmitted rendering information has a wide interval in the time domain, the interpolation is performed to obtain rendering information that does not exist between the transmitted rendering information. For instance, assuming that rendering information exists in an n-th timeslot and an (n+k)-th timeslot, linear interpolation can be performed on the untransmitted timeslots using the generated rendering information (e.g., HL_L, HR_L, HL_R, HR_R).

The rendering information generated by the interpolation is explained for the case in which the downmix signal is a mono signal and the case in which the downmix signal is a stereo signal.

If the downmix signal is a mono signal, the interpolated rendering information can be expressed as Math Figure 26.

Math Figure 26

HM_L(n+j)=HM_L(n)*(1-a)+HM_L(n+k)*a

HM_R(n+j)=HM_R(n)*(1-a)+HM_R(n+k)*a

If the downmix signal is a stereo signal, the interpolated rendering information can be expressed as Math Figure 27.

Math Figure 27

HL_L(n+j)=HL_L(n)*(1-a)+HL_L(n+k)*a

HR_L(n+j)=HR_L(n)*(1-a)+HR_L(n+k)*a

HL_R(n+j)=HL_R(n)*(1-a)+HL_R(n+k)*a

HR_R(n+j)=HR_R(n)*(1-a)+HR_R(n+k)*a

In this case, 0<j<k, where 'j' and 'k' are integers. And, 'a' is a real number satisfying 0<a<1 and is expressed as Math Figure 28.

Math Figure 28

a=j/k

If so, a value corresponding to an untransmitted timeslot, lying on the straight line that connects the values of the two timeslots, can be obtained according to Math Figure 27 and Math Figure 28. Details of the interpolation will be explained later with reference to FIG. 22 and FIG. 23.
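The linear interpolation of Math Figures 26 to 28 can be sketched for one rendering coefficient as follows. The function name `interpolate` and the sample values are assumptions for illustration; the same routine would be applied to each of HM_L, HM_R or HL_L, HR_L, HL_R, HR_R.

```python
def interpolate(h_n, h_nk, k):
    """Return the values for timeslots n+1 .. n+k-1.

    h_n is the coefficient in timeslot n and h_nk the one in timeslot n+k.
    """
    filled = []
    for j in range(1, k):                 # 0 < j < k
        a = j / k                         # Math Figure 28
        filled.append(h_n * (1 - a) + h_nk * a)
    return filled

# Hypothetical coefficient that moves from 1.0 to 3.0 over k = 4 timeslots.
missing = interpolate(1.0, 3.0, 4)
```

The three filled-in values lie on the straight line between the two transmitted values, at one quarter, one half and three quarters of the way.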

If the filter coefficient value changes abruptly between two neighboring timeslots in the time domain, the smoothing unit 1042 executes smoothing to prevent the distortion caused by the discontinuity. The smoothing in the time domain can be carried out using the smoothing methods described with reference to FIGS. 12 to 16. The smoothing can be performed together with expansion. And, the smoothing may differ according to the position at which it is applied. If the downmix signal is a mono signal, the time-domain smoothing can be represented as Math Figure 29.

Math Figure 29

HM_L(n)′=HM_L(n)*b+HM_L(n-1)′*(1-b)

HM_R(n)′=HM_R(n)*b+HM_R(n-1)′*(1-b)

That is, the smoothing can be executed as a 1-pole IIR filter that multiplies the smoothed rendering information HM_L(n-1)′ or HM_R(n-1)′ of the previous timeslot n-1 by (1-b), multiplies the rendering information HM_L(n) or HM_R(n) generated in the current timeslot by b, and adds the two products together. In this case, 'b' is a constant with 0<b<1. The smaller 'b' is, the stronger the smoothing effect; the larger 'b' is, the weaker the smoothing effect. And, the remaining filters can be applied in the same manner.
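The recursion of Math Figure 29 can be sketched for one rendering coefficient as follows. Starting the filter state from the first input value is an assumption of this sketch, since the initial state is not specified here; the function name `smooth_timeslots` is hypothetical as well.

```python
def smooth_timeslots(values, b, initial=None):
    """1-pole IIR smoothing: out(n) = values(n)*b + out(n-1)*(1-b)."""
    previous = values[0] if initial is None else initial
    smoothed = []
    for value in values:
        previous = value * b + previous * (1 - b)
        smoothed.append(previous)
    return smoothed

# Hypothetical coefficient that jumps from 0 to 1 at the third timeslot.
step = [0.0, 0.0, 1.0, 1.0, 1.0]
fast = smooth_timeslots(step, b=0.5)
slow = smooth_timeslots(step, b=0.25)
```

The output for b=0.25 approaches the new value more slowly than the output for b=0.5, in line with the statement that a smaller 'b' gives a stronger smoothing effect.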

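Using Math Figure 29 for the time-domain smoothing, the interpolation and the smoothing can be represented as the single expression shown in Math Figure 30.

Math Figure 30

HM_L(n+j)′=(HM_L(n)*(1-a)+HM_L(n+k)*a)*b+HM_L(n+j-1)′*(1-b)

HM_R(n+j)′=(HM_R(n)*(1-a)+HM_R(n+k)*a)*b+HM_R(n+j-1)′*(1-b)

If the interpolation is performed by the interpolating unit 1041 and/or the smoothing is performed by the smoothing unit 1042, rendering information having an energy value different from that of the prototype rendering information may be obtained. To prevent this problem, energy normalization can be executed in addition.

Finally, the domain converting unit 1050 performs domain conversion on the rendering information into the domain in which the rendering is executed. If the domain in which the rendering is executed is the same as the domain of the rendering information, the domain conversion need not be executed. Thereafter, the domain-converted rendering information is transferred to the rendering unit 900.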

图19是根据本发明的一个实施例在空间信息转换单元中生成渲染信息的第二方法的框图。FIG. 19 is a block diagram of a second method of generating rendering information in a spatial information conversion unit according to an embodiment of the present invention.

第二方法与第一方法的类似之处在于空间信息转换单元1000包括源映射单元1010、子渲染信息生成单元1020、整合单元1030、处理单元1040、以及域转换单元1050,并在于子渲染信息生成单元1020包括至少一个子渲染信息生成单元。The second method is similar to the first method in that the spatial

参考图19,生成渲染信息的第二方法与第一方法的不同之处在于处理单元1040的位置。所以,可对在子渲染信息生成单元1020中每声道地生成的子渲染信息(例如,在单声道情形中的FL_L和FL_R或在立体声信号情形中的FL_L1、FL_L2、FL_R1、FL_R2)每声道地来执行内插和/或平滑。Referring to FIG. 19 , the second method of generating rendering information differs from the first method in the location of the

Subsequently, the integrating unit 1030 integrates the interpolated and/or smoothed sub-rendering information into rendering information.

The generated rendering information is transferred to the rendering unit 900 via the domain converting unit 1050.

FIG. 20 is a block diagram of a third method of generating rendering filter information in a spatial information converting unit according to one embodiment of the present invention.

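The third method is similar to the first or second method in that the spatial information converting unit 1000 includes a source mapping unit 1010, a sub-rendering information generating unit 1020, an integrating unit 1030, a processing unit 1040, and a domain converting unit 1050, and in that the sub-rendering information generating unit 1020 includes at least one sub-rendering information generating unit.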

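Referring to FIG. 20, the third method of generating the rendering information differs from the first or second method in that the processing unit 1040 is located next to the source mapping unit 1010. Hence, the interpolation and/or smoothing can be performed channel by channel on the source mapping information generated in the source mapping unit 1010 by using the spatial information.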

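Subsequently, the sub-rendering information generating unit 1020 generates sub-rendering information by using the interpolated and/or smoothed source mapping information and the filter information.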

The sub-rendering information is integrated into rendering information in the integrating unit 1030. The generated rendering information is then transferred to the rendering unit 900 via the domain converting unit 1050.

FIG. 21 is a diagram explaining a method of generating a surround signal in a rendering unit according to one embodiment of the present invention. FIG. 21 shows a rendering process performed in the DFT domain. However, the rendering process can be implemented in a different domain in a similar manner. FIG. 21 shows the case in which the input signal is a mono downmix signal. However, FIG. 21 applies in a similar manner to other input channels, including a stereo downmix signal and the like.

Referring to FIG. 21, windowing with an overlap interval OL is preferably performed on the time-domain mono downmix signal in the domain converting unit. FIG. 21 shows the case in which a 50% overlap is used. However, the present invention also covers cases that use other overlaps.

The window function used for the windowing can be a function that connects seamlessly, without discontinuity, in the time domain and therefore has good frequency selectivity in the DFT domain. For example, a sine-squared window function can be used as the window function.
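As a brief illustration of why a sine-squared window suits overlapped processing, the following sketch checks that two copies of the window shifted by half the window length (50% overlap) sum to one at every sample, so that consecutive blocks join without a seam. The half-sample offset in the window definition is an assumption chosen for this sketch.

```python
import math


def sine_squared_window(length):
    """Sine-squared window; the 0.5 sample offset is an assumption of this sketch."""
    return [math.sin(math.pi * (i + 0.5) / length) ** 2 for i in range(length)]


window = sine_squared_window(8)
hop = len(window) // 2  # 50% overlap
# Second half of one window plus first half of the next window.
overlap_sums = [window[i + hop] + window[i] for i in range(hop)]
print(all(abs(s - 1.0) < 1e-12 for s in overlap_sums))  # True
```

The sum is one because shifting by half the window length turns sin² into cos² at the same sample position.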

Subsequently, zero-padding ZL of the tap length of the rendering filter [precisely, (tap length)-1], taken from the rendering information converted in the domain converting unit, is performed on the mono downmix signal of length OL*2 obtained from the windowing. The domain conversion into the DFT domain is then performed. FIG. 21 shows the block-k downmix signal being domain-converted into the DFT domain.

The domain-converted downmix signal is rendered by a rendering filter that uses the rendering information. The rendering process can be expressed as the product of the downmix signal and the rendering information. The rendered downmix signal undergoes an IDFT (inverse discrete Fourier transform) in the inverse domain converting unit and is then overlapped with the previously processed downmix signal (block k-1 in FIG. 21), which is delayed by the length OL, to generate the surround signal.
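The windowing, zero-padding, DFT-domain multiplication, IDFT, and overlap steps described for the mono case can be sketched as follows. This is a minimal illustration under stated assumptions: a single real-valued filter stands in for the rendering information of one output channel, a naive O(N²) DFT is used for self-containment, the input is padded with OL zeros at both ends so that every sample is covered by two windows, and all function names are hypothetical.

```python
import cmath
import math


def dft(x, inverse=False):
    """Naive discrete Fourier transform (or its inverse) of a sequence."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [sum(x[t] * cmath.exp(sign * 2j * cmath.pi * f * t / n) for t in range(n))
           for f in range(n)]
    return [v / n for v in out] if inverse else out


def convolve(x, h):
    """Direct time-domain convolution, used as a reference."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xv in enumerate(x):
        for j, hv in enumerate(h):
            y[i + j] += xv * hv
    return y


def render_overlap_add(signal, taps, ol):
    """Filter `signal` with `taps` block by block in the DFT domain."""
    win_len = 2 * ol                       # window length OL*2 (50% overlap)
    zl = len(taps) - 1                     # zero-padding length ZL = (tap length) - 1
    n_fft = win_len + zl
    window = [math.sin(math.pi * (i + 0.5) / win_len) ** 2 for i in range(win_len)]
    h_dft = dft(list(taps) + [0.0] * (n_fft - len(taps)))
    padded = [0.0] * ol + list(signal) + [0.0] * ol
    padded += [0.0] * (-len(padded) % ol)  # make the length a multiple of OL
    out = [0.0] * (len(padded) + zl)
    for start in range(0, len(padded) - win_len + 1, ol):
        block = [padded[start + i] * window[i] for i in range(win_len)]
        spectrum = dft(block + [0.0] * zl)             # zero-pad, then DFT
        product = [s * h for s, h in zip(spectrum, h_dft)]
        rendered = dft(product, inverse=True)          # IDFT
        for i, v in enumerate(rendered):               # overlap with earlier blocks
            out[start + i] += v.real
    return out[ol:ol + len(signal) + zl]


signal = [1.0, -2.0, 3.0, 0.5, 4.0, -1.0, 2.0]
taps = [0.5, 0.25, 0.125]
block_result = render_overlap_add(signal, taps, ol=4)
direct_result = convolve(signal, taps)
print(max(abs(a - b) for a, b in zip(block_result, direct_result)) < 1e-9)  # True
```

Because the zero-padding is at least (tap length)-1, the per-block product in the DFT domain corresponds to a linear rather than circular convolution, so the overlapped blocks reproduce the directly filtered signal.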

Interpolation can be performed on each block that undergoes this rendering process. The interpolation method is explained as follows.

FIG. 22 is a diagram of a first interpolation method according to one embodiment of the present invention. Interpolation according to the present invention can be performed at various positions. For example, the interpolation can be performed at various positions in the spatial information converting unit shown in FIGS. 18 to 20, or it can be performed in the rendering unit. Spatial information, source mapping information, filter information, and the like can be used as the values to be interpolated. In this specification, spatial information is used as the example for description. However, the present invention is not limited to spatial information. The interpolation is performed before, or together with, the expansion to a wider band.

Referring to FIG. 22, the spatial information transferred from the encoding apparatus may be transferred at arbitrary positions instead of at every time slot. One spatial frame can carry a plurality of spatial information sets (e.g., parameter sets n and n+1 in FIG. 22). At a low bit rate, one spatial frame can carry a single new spatial information set. Hence, interpolation for the time slots in which nothing is transferred is carried out by using the values of the neighboring transferred spatial information sets. The interval between the windows used for rendering does not always match the time slots. Hence, as shown in FIG. 22, the interpolated value at the center of each rendering window (K-1, K, K+1, K+2, etc.) is found and used. Although FIG. 22 shows linear interpolation carried out between the time slots in which spatial information sets exist, the present invention is not limited to this interpolation method. For example, interpolation may be omitted for a time slot in which no spatial information set exists; a previous value or a preset value can be used instead.
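Finding the interpolated value at the center of each rendering window can be sketched as follows. This is only an illustration: the function name, the representation of positions in units of time slots, and the choice to hold the nearest transferred value outside the transferred range are assumptions of this sketch.

```python
def interpolate_parameter(position, slots, values):
    """Linearly interpolate a spatial parameter at `position` (in time slots).

    slots  -- increasing slot positions at which parameter sets were transferred
    values -- parameter value of each transferred set
    Outside the transferred range the nearest value is held (assumption).
    """
    if position <= slots[0]:
        return values[0]
    for i in range(1, len(slots)):
        if position <= slots[i]:
            a = (position - slots[i - 1]) / (slots[i] - slots[i - 1])
            return values[i - 1] * (1.0 - a) + values[i] * a
    return values[-1]


# Parameter sets n and n+1 arrive at slots 2 and 10; rendering windows are
# centered at slots 0, 4, 8 and 12, which do not coincide with those slots.
window_centers = [0.0, 4.0, 8.0, 12.0]
print([interpolate_parameter(c, [2, 10], [0.0, 1.0]) for c in window_centers])
# [0.0, 0.25, 0.75, 1.0]
```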

FIG. 23 is a diagram of a second interpolation method according to one embodiment of the present invention.

Referring to FIG. 23, the second interpolation method according to one embodiment of the present invention has a structure that combines an interval using a previous value, an interval using a preset default value, and the like. For example, the interpolation can be performed by using at least one of a method of maintaining a previous value, a method of using a preset default value, and a method of performing linear interpolation within the interval of one spatial frame. If at least two new spatial information sets exist in one window, distortion may occur. Block switching for preventing this distortion is explained in the following description.

FIG. 24 is a diagram of a block switching method according to one embodiment of the present invention.

Referring to FIG. 24(a), because the window length is greater than the time slot length, at least two spatial information sets (e.g., parameter sets n and n+1 in FIG. 24) may exist within one window interval. In this case, each of the spatial information sets should be applied to a different time slot. However, if a single value obtained by interpolating the at least two spatial information sets is applied, distortion may occur. That is, distortion may occur because the time resolution determined by the window length is insufficient.

To solve this problem, a switching method that changes the window size to fit the resolution of the time slots can be used. For example, as shown in FIG. 24(b), the window can be switched to a shorter window for an interval that requires high resolution. In this case, connecting windows are used at the beginning and the end of the switched windows to prevent seams from appearing in the time domain of the switched windows.

The window length need not be transferred as separate additional information; instead, it can be determined in the decoding apparatus by using the spatial information. For example, the window length can be determined by using the interval between the time slots in which the spatial information is updated. That is, if the interval at which the spatial information is updated is narrow, a short window function is used. If the interval at which the spatial information is updated is wide, a long window function is used. Using variable-length windows in the rendering in this way has the advantage that no bits are spent on sending the window length information separately. Two types of window length are shown in FIG. 24(b). However, windows of various lengths can be used depending on the transmission frequency and the relation of the spatial information. The decided window length information is applicable to various steps of generating the surround signal, which are explained in the following description.
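The idea of deriving the window length in the decoder from the update interval of the spatial information can be sketched as follows. The description does not give a concrete decision rule, so the rule used here (switch to the short window whenever the narrowest update interval is shorter than the long window) is an assumption, as are the function name, the parameter names, and the example lengths.

```python
def choose_window_length(update_slots, slot_length, long_len, short_len):
    """Pick a window length from the slots at which spatial information is updated.

    update_slots -- increasing slot indices carrying a new spatial information set
    slot_length  -- number of samples per time slot
    Assumed rule: use the short window when the narrowest update interval
    (in samples) is smaller than the long window length.
    """
    gaps = [b - a for a, b in zip(update_slots, update_slots[1:])]
    if not gaps:
        return long_len  # a single update: no need for fine time resolution
    narrowest = min(gaps) * slot_length
    return short_len if narrowest < long_len else long_len


# Hypothetical values: 64-sample slots, 1024-sample long and 256-sample short windows.
print(choose_window_length([0, 4], 64, 1024, 256))   # 256
print(choose_window_length([0, 32], 64, 1024, 256))  # 1024
```

Because the decision uses only information the decoder already has, no bits are needed to signal the window length.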

FIG. 25 is a block diagram of the positions to which the window length decided by a window length deciding unit is applied according to one embodiment of the present invention.

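Referring to FIG. 25, the window length deciding unit 1400 can decide the window length by using the spatial information. Information on the decided window length is applicable to the source mapping unit 1010, the integrating unit 1030, the processing unit 1040, the domain converting units 1050 and 1100, and the inverse domain converting unit 1300. FIG. 25 shows the case in which a stereo downmix signal is used. However, the present invention is not limited to a stereo downmix signal. As mentioned in the foregoing description, even if the window length is shortened, the zero-padding length decided by the number of filter taps is not adjustable. A solution to this problem is explained in the following description.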

FIG. 26 is a diagram of filters of various lengths used in processing an audio signal according to one embodiment of the present invention. As mentioned in the foregoing description, if the zero-padding length decided by the number of filter taps is not adjusted, an overlap amounting to that length effectively occurs, which results in insufficient time resolution. A solution to this problem is to shorten the zero-padding length by restricting the filter tap length. The zero-padding length can be shortened by truncating the tail of the response (e.g., the diffuse interval corresponding to reverberation). In this case, the rendering process may be less accurate than when the tail of the filter response is not truncated. However, the filter coefficient values in that part of the time-domain response are very small and mainly affect reverberation. Hence, the sound quality is not significantly affected by the truncation.
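The trade-off of truncating the tail of a filter response can be illustrated with a short sketch that reports the shortened zero-padding length and the fraction of the filter energy that is discarded. The exponentially decaying response and the function name are assumptions made for this illustration and do not represent an actual HRTF.

```python
def truncate_filter(taps, max_taps):
    """Keep the first `max_taps` coefficients of a filter response.

    Returns the truncated taps, the resulting zero-padding length
    ZL = (tap length) - 1, and the fraction of energy that was discarded.
    """
    kept = list(taps[:max_taps])
    total = sum(t * t for t in taps)
    dropped = sum(t * t for t in taps[max_taps:])
    fraction = dropped / total if total else 0.0
    return kept, len(kept) - 1, fraction


# Hypothetical decaying response: each tap is half the previous one.
response = [0.5 ** i for i in range(8)]
kept, zero_padding, lost = truncate_filter(response, 4)
print(zero_padding)     # 3 instead of 7 for the full response
print(round(lost, 4))   # about 0.4% of the energy is discarded
```

In this example, halving the tap count more than halves the zero-padding length while discarding well under one percent of the filter energy.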

Referring to FIG. 26, four kinds of filters are usable. The four kinds of filters are usable in the DFT domain, which does not limit the present invention.

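Filter-N1 indicates a filter having a long filter length FL and a long zero-padding length 2*OL, for which the number of filter taps is not restricted. Filter-N2 indicates a filter having the same filter length FL and a zero-padding length 2*OL shorter than that of Filter-N1, obtained by restricting the number of filter taps. Filter-N3 indicates a filter having a filter length FL shorter than that of Filter-N1 and a long zero-padding length 2*OL, for which the number of filter taps is not restricted. Filter-N4 indicates a filter having a length FL shorter than that of Filter-N1 and a short zero-padding length 2*OL, obtained by restricting the number of filter taps.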

As mentioned in the foregoing description, the problem of time resolution can be solved by using the four exemplary filters above. In addition, for the tail of the filter response, different filter coefficients can be used for each domain.

FIG. 27 is a diagram of a method of processing an audio signal dividedly by using a plurality of sub-filters according to one embodiment of the present invention. One filter can be divided into sub-filters having filter coefficients that differ from each other. After the audio signal has been processed by using the sub-filters, the processing results can be added together. When spatial information is applied to the tail of a filter response that has small energy, i.e., when rendering is performed by using a filter with a large number of taps, this method makes it possible to process the audio signal dividedly in units of a predetermined length. For example, because the tail of the filter does not vary significantly for each HRTF corresponding to each channel, the rendering can be performed by extracting coefficients common to a plurality of windows. In this specification, the case of execution in the DFT domain is described. However, the present invention is not limited to the DFT domain.

Referring to FIG. 27, after one filter FL has been divided into a plurality of sub-areas, the sub-areas can be processed by a plurality of sub-filters (Filter-A and Filter-B) having filter coefficients that differ from each other.
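The principle of dividing one filter into sub-filters and adding the processed results can be sketched in the time domain as follows. This is an illustration only: the split into exactly two parts, the time-domain convolution (instead of the DFT-domain processing described in this specification), and the function names are assumptions of this sketch.

```python
def convolve(x, h):
    """Direct time-domain convolution."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xv in enumerate(x):
        for j, hv in enumerate(h):
            y[i + j] += xv * hv
    return y


def render_with_subfilters(x, taps, split):
    """Filter `x` with two sub-filters and add the results.

    Filter-A holds taps[:split] and Filter-B holds taps[split:]. The output of
    Filter-B is delayed by `split` samples, the position of its first tap in
    the original filter.
    """
    filter_a = taps[:split]
    filter_b = taps[split:]
    out = [0.0] * (len(x) + len(taps) - 1)
    for i, v in enumerate(convolve(x, filter_a)):
        out[i] += v
    for i, v in enumerate(convolve(x, filter_b)):
        out[i + split] += v
    return out


x = [1.0, 2.0, -1.0, 0.5]
taps = [1.0, 0.5, 0.25, 0.125, 0.0625]
combined = render_with_subfilters(x, taps, split=2)
full = convolve(x, taps)
print(all(abs(a - b) < 1e-12 for a, b in zip(combined, full)))  # True
```

Since the sum of the sub-filter outputs equals the output of the full filter, each sub-filter can be handled separately, for example with a shorter zero-padding for each part or with shared tail coefficients.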