CN100551014C - Content processing device, method for processing content - Google Patents


- Publication number: CN100551014C (application number CN200680000555X)

- Authority: CN (China)

- Prior art keywords: captions, frame, scene change, caption area, image

- Legal status: Expired - Fee Related (status as listed by Google Patents; not a legal conclusion)


- Landscapes: Television Signal Processing For Recording (AREA); Information Retrieval, Db Structures And Fs Structures Therefor (AREA)


Description

Technical field

The present invention relates to a content processing device configured to perform processing, such as indexing, on video content obtained by, for example, recording a television program, and to a method and a computer program for processing content. Specifically, the present invention relates to a content processing device configured to determine scene changes in a recorded television program on the basis of the titles (that is, topics) of the program and to perform segmentation or classification of scenes, and to a method and a computer program for processing content.

More specifically, the present invention relates to a content processing device configured to detect topic changes on the basis of captions (telops) included in video content, to segment the video content on the basis of the detected topics, and to perform indexing, and to a method and a computer program for processing content. In particular, the present invention relates to a content processing device configured to detect topic changes from a relatively small amount of data by using captions included in video content, and to a method and a computer program for processing content.

Background art

In today's information society, the importance of broadcasting can hardly be overstated. Television broadcasting in particular has a great influence on viewers because it delivers sound and images directly to them. Broadcasting technology spans a wide range of techniques, such as signal processing, transmission and reception, and the processing of audio and video information.

Televisions have a very high household penetration rate, and television programs broadcast by the various stations are watched by the general public. As an alternative to watching content as it is broadcast, viewers can record it and play it back at any time they choose.

Recently, advances in digital technology have made it possible to store large amounts of audiovisual data. For example, hard disk drives (HDDs) with capacities of tens to hundreds of gigabytes can be purchased relatively cheaply, and HDD-based recorders and personal computers (PCs) capable of recording and playing back television programs are available on the market. An HDD is a random-access device. Therefore, programs recorded on an HDD need not be played back in the order in which they were recorded, as with conventional video tape; any recorded program (or any scene or segment within a program) can be played directly. A viewing mode in which a receiving device, such as a television set or a video recording and playback device, receives broadcast content, temporarily stores it in a large-capacity storage device such as a hard disk drive, and then plays back the stored content is called "server broadcasting". Unlike a conventional television system, a server broadcasting system frees viewers from having to watch a program when it is broadcast; they can watch it at any time they choose.

The increased hard disk capacity of server broadcasting systems allows viewers to record up to several tens of hours of television programs. However, it is practically impossible for viewers to watch all of the recorded content. If viewers could retrieve only the scenes that interest them and perform digest viewing, they could use the recorded content efficiently and effectively.

To enable scene retrieval and digest viewing of recorded content, the images must be indexed. One known video indexing method detects scene change points, which correspond to frames at which the video signal changes greatly, and performs indexing at those points.

For example, a known scene change detection method determines that the scene has changed when the sum of the differences between the histograms of the components constituting the image, computed for two consecutive fields or frames, exceeds a predetermined threshold (see, for example, Patent Document 1). When the histograms are formed, constants are assigned to a given level and its neighboring levels and accumulated; a new histogram is then computed by normalization, and scene changes between every two consecutive fields or frames are detected using the newly computed histograms. In this way, scene changes can be detected accurately even in fading images.

A television program contains many scene change points. For digest viewing, it is generally considered appropriate to treat the time period devoted to a particular title (that is, a topic) as a unit and to segment and classify the video content accordingly. However, scenes change frequently even while the same title continues. Therefore, a video indexing method that relies only on scene change points does not necessarily provide the indexing that users want.

A video/audio content compiling device has been proposed that detects video cut positions from the video data, clusters the sound from the audio data, and integrates the video and audio data to perform indexing, so that video content can be compiled, retrieved, and selected on the basis of the index information (see, for example, Patent Document 2). In this device, index information obtained from the audio (distinguishing speech, silence, and music) is linked with scene change points. In this way, meaningful segments of images and sound can be detected as scenes, and less meaningful scene change points can be ignored. However, because a single television program contains many scene change points, this device cannot segment the video content by topic.

In producing and editing television broadcasts such as news programs and variety shows, a common technique is to display, in a corner of the image frame, a caption that explicitly or implicitly indicates the topic of the program. Captions displayed in image frames can therefore serve as important clues for identifying or estimating the topic of the broadcast during the period in which they are displayed. Accordingly, video indexing can be performed by extracting captions from the video content and using the content of the displayed captions as indexes.

For example, a broadcast program content menu generating device has been proposed that detects captions included in image frames as characteristic image portions of the frames and automatically generates a menu representing the contents of the broadcast program by extracting the image data corresponding only to the captions (see, for example, Patent Document 3). Detecting captions in a frame generally requires edge detection, which imposes a heavy processing load; a device that performs edge detection on every image frame requires a large amount of computation. Moreover, the main purpose of that device is to automatically generate program menus for news programs from captions extracted from the video data, not to identify topic changes in news programs on the basis of the detected captions or to index images by topic. In other words, it does not solve the problem of indexing images on the basis of information about captions detected in image frames.

[Patent Document 1]

Japanese Unexamined Patent Application Publication No. 2004-282318

[Patent Document 2]

Japanese Unexamined Patent Application Publication No. 2002-271741

[Patent Document 3]

Japanese Unexamined Patent Application Publication No. 2004-364234

Summary of the invention

An object of the present invention is to provide an excellent content processing device capable of appropriately indexing recorded video content and segmenting it into scenes by determining scene changes on the basis of the titles (that is, topics) of programs, and a method and a computer program for processing content.

Another object of the present invention is to provide an excellent content processing device configured to detect topic changes in video content by using captions included in the images, to segment the content by topic, and to perform indexing, and a method and a computer program for processing content.

Another object of the present invention is to provide an excellent content processing device configured to detect topic changes from a relatively small amount of data by using captions included in video content, and a method and a computer program for processing content.

In view of the above problems, a first aspect of the present invention provides a content processing device configured to process video content data consisting of image frames in time-series order, the device including: a scene change detection unit configured to detect, in the video content to be processed, a scene change point, which is a point between two image frames at which the scene of one frame differs significantly from that of the other; a topic detection unit configured to detect, in the video content to be processed, a segment that corresponds to a topic and consists of a plurality of consecutive image frames in which the same fixed caption is displayed in the caption area; and an index storage unit configured to store index information indicating the time period corresponding to each segment detected by the topic detection unit.

It has become common for a receiving device to receive and temporarily store broadcast content, such as television programs, and to play it back later. Increases in hard disk capacity have made it possible to record tens of hours of television programs. It is therefore useful to retrieve only the scenes that interest the viewer from the recorded content and to let the viewer perform digest viewing. To enable scene retrieval and digest viewing of recorded content, the images must be indexed.

Methods of indexing video content by detecting scene change points are conventionally known. However, because a television program contains many scene change points, such indexing is not necessarily optimal for the viewer.

In broadcast television programs such as news programs and variety shows, captions representing the topic of the program are often displayed in one of the four corners of the image frame. Captions can therefore be extracted from video content and their displayed content used as indexes. However, extracting captions from video content requires edge detection processing on every image frame, which is problematic because of the large amount of computation involved.

Therefore, the content processing device according to the present invention first detects the scene change points included in the video content to be processed and then checks whether a caption is displayed in the image frames immediately before and after each scene change point. Only if a caption is detected does the device search for the segment in which the same fixed caption is displayed. This reduces the amount of edge detection processing performed to extract captions and thus the processing load of topic detection.
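The screening strategy above can be sketched as follows. This is a minimal Python illustration, not the patented implementation: `candidate_windows` and `frames_to_inspect` are hypothetical helpers, frames are addressed by index, each scene change point is taken to be the index of the first frame of the new scene, and the window of one second on each side and the 30 frames per second default are assumed values.

```python
def candidate_windows(change_points, num_frames, fps=30, seconds=1.0):
    """Return (first, last) frame-index windows around each scene change point.

    Only frames inside these windows are handed to the expensive caption
    (edge) detector, instead of every frame in the programme.
    """
    span = int(round(fps * seconds))
    windows = []
    for cp in change_points:
        # cp is assumed to be the index of the first frame of the new scene
        first = max(0, cp - span)
        last = min(num_frames - 1, cp + span - 1)
        windows.append((first, last))
    return windows


def frames_to_inspect(change_points, num_frames, fps=30, seconds=1.0):
    """Set of frame indices the caption detector actually has to examine."""
    indices = set()
    for first, last in candidate_windows(change_points, num_frames, fps, seconds):
        indices.update(range(first, last + 1))
    return indices
```

With this layout, the edge detection cost grows with the number of scene changes rather than with the length of the program.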

For example, the topic detection unit generates an average image of the image frames within a period of one second before and after a scene change point, and detects captions included in the average image. If a caption is displayed continuously across the scene change point, the caption portion remains sharp in the average image while the other portions are blurred. This improves the accuracy of caption detection. Caption detection can be performed by, for example, edge detection.
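A rough sketch of the averaging idea, assuming 8-bit grayscale frames stored as lists of rows and a caption region given as a hypothetical `(top, left, bottom, right)` tuple with exclusive bottom and right bounds. The edge detector is a simple neighbour-difference operator chosen for brevity, and the thresholds `edge_threshold` and `min_ratio` are illustrative values, not figures from the patent.

```python
def average_image(frames):
    """Pixel-wise mean of equally sized grayscale frames (lists of rows)."""
    n = len(frames)
    h, w = len(frames[0]), len(frames[0][0])
    return [[sum(f[y][x] for f in frames) / n for x in range(w)] for y in range(h)]


def edge_map(image, threshold=64):
    """Binary edge map from right-neighbour and lower-neighbour intensity differences."""
    h, w = len(image), len(image[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = abs(image[y][x] - image[y][x + 1]) if x + 1 < w else 0
            dy = abs(image[y][x] - image[y + 1][x]) if y + 1 < h else 0
            edges[y][x] = 1 if max(dx, dy) > threshold else 0
    return edges


def region_edge_ratio(edges, region):
    """Fraction of pixels inside region (top, left, bottom, right) that are edges."""
    top, left, bottom, right = region
    cells = [edges[y][x] for y in range(top, bottom) for x in range(left, right)]
    return sum(cells) / len(cells)


def caption_in_average(frames, region, edge_threshold=64, min_ratio=0.3):
    """A caption that stays put survives averaging as sharp edges; moving content blurs away."""
    avg = average_image(frames)
    return region_edge_ratio(edge_map(avg, edge_threshold), region) >= min_ratio
```

Because the caption pixels are identical in every frame, their contrast is preserved by the mean, while a moving background is smeared into a nearly uniform value with few edges.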

The topic detection unit compares the caption detected in the average image with the caption area of the image frames preceding the scene change point, and defines the point at which the caption disappears as the start point of the topic. Similarly, the topic detection unit compares the caption detected in the average image with the caption area of the image frames following the scene change point, and defines the point at which the caption disappears as the end point of the topic. Whether the caption has disappeared from the caption area can be determined with a small processing load by computing, for each compared image frame, the average value of each color component in the caption area and checking whether the Euclidean distance between the average colors of the frames exceeds a predetermined threshold. Of course, the point at which the caption disappears can be detected more accurately by using a known scene change detection method.
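The average-color test and the backward and forward scans for the start and end points might look like this. It is a sketch under stated assumptions: frames are RGB images stored as lists of rows of `(r, g, b)` tuples, `reference_color` is the mean color of the caption area in the average image, the frame at `change_point` is assumed to show the caption, and the distance threshold of 40 is a hypothetical value.

```python
import math


def mean_color(frame, region):
    """Per-channel mean (R, G, B) of an RGB frame inside region (top, left, bottom, right)."""
    top, left, bottom, right = region
    pixels = [frame[y][x] for y in range(top, bottom) for x in range(left, right)]
    n = len(pixels)
    return tuple(sum(p[c] for p in pixels) / n for c in range(3))


def caption_still_shown(reference_color, frame, region, threshold=40.0):
    """Caption is taken to persist while the Euclidean colour distance stays small."""
    return math.dist(reference_color, mean_color(frame, region)) <= threshold


def find_topic_bounds(frames, change_point, reference_color, region, threshold=40.0):
    """Scan backward for the first frame showing the caption and forward for the last."""
    start = change_point
    while start > 0 and caption_still_shown(reference_color, frames[start - 1], region, threshold):
        start -= 1
    end = change_point
    while end + 1 < len(frames) and caption_still_shown(reference_color, frames[end + 1], region, threshold):
        end += 1
    return start, end
```

Each step costs only one mean over the caption area, which is why this check is cheap compared with running edge detection on every frame.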

However, when the average color is computed over the caption area, the background colors other than the caption itself have a large influence on the result. As an alternative, therefore, a method is proposed that uses edge information to determine whether a caption is present. That is, edge images are obtained for the caption areas of the frames being compared, and the presence of a caption in the caption area is determined from the result of comparing these edge images. More specifically, when the number of pixels in the edge image detected in the caption area decreases significantly, the caption is determined to have disappeared; when the number of pixels changes only slightly, the caption is determined to be still displayed. When the number of edge pixels increases significantly, a new caption can be determined to have appeared.
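The edge-count comparison can be expressed as a small classifier. The ratios used here (a drop to half or less counts as disappearance, a doubling or more as a new caption) are hypothetical stand-ins for "significantly", and the edge images are assumed to be binary maps (lists of rows of 0/1) of the caption area.

```python
def count_edge_pixels(edges):
    """Number of edge pixels in a binary edge map of the caption area."""
    return sum(sum(row) for row in edges)


def classify_caption_change(prev_edges, cur_edges, drop_ratio=0.5, rise_ratio=2.0):
    """Compare edge-pixel counts of the caption area in two frames.

    Returns "disappeared", "appeared", "continued", or "absent".
    drop_ratio and rise_ratio are hypothetical thresholds.
    """
    prev_count = count_edge_pixels(prev_edges)
    cur_count = count_edge_pixels(cur_edges)
    if prev_count == 0:
        return "appeared" if cur_count > 0 else "absent"
    ratio = cur_count / prev_count
    if ratio <= drop_ratio:
        return "disappeared"
    if ratio >= rise_ratio:
        return "appeared"
    return "continued"
```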

When one caption is replaced by another, the number of edge pixels may not change much. Even when the change in the number of edge pixels in the caption area between frames is small, a caption change, that is, the end of one caption and the start of the next, can be detected if the number of edge pixels in the image obtained by taking the logical AND of the corresponding edge pixels of the two edge images is significantly reduced (for example, to one third or less).
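A sketch of the logical-AND test, again assuming binary edge maps of the same caption area in two frames. The one-third fraction comes from the example in the text; measuring it against the edge-pixel count of the earlier frame is an assumption.

```python
def and_edge_count(edges_a, edges_b):
    """Edge pixels present at the same position in both binary edge maps."""
    return sum(a & b for row_a, row_b in zip(edges_a, edges_b)
               for a, b in zip(row_a, row_b))


def caption_replaced(prev_edges, cur_edges, fraction=1 / 3):
    """True if the edges surviving the AND drop to `fraction` or less of the earlier frame's edges."""
    prev_count = sum(sum(row) for row in prev_edges)
    if prev_count == 0:
        return False  # no caption edges to compare against
    return and_edge_count(prev_edges, cur_edges) <= prev_count * fraction
```

Two different captions of similar length can produce the same edge count, but their edges rarely coincide pixel for pixel, so the AND exposes the change that a plain count misses.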

The topic detection unit determines the length of the segment from the start point and the end point, and determines that the segment corresponds to a topic only if its length exceeds a predetermined time. This prevents false detections.
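The duration filter is straightforward. In this sketch, segments are `(first_frame, last_frame)` pairs, and the frame rate and minimum length are hypothetical parameters, since the predetermined time is not specified.

```python
def keep_topic_segments(segments, fps=30.0, min_seconds=10.0):
    """Keep only caption segments whose duration exceeds min_seconds (hypothetical value)."""
    kept = []
    for first, last in segments:
        duration = (last - first + 1) / fps  # inclusive frame range
        if duration > min_seconds:
            kept.append((first, last))
    return kept
```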

The topic detection unit may determine whether a detected caption is a relevant caption on the basis of the size and position of the caption area in which it was detected in the frame. The position at which captions appear in the image frame and their size are governed by conventions that broadcasters commonly follow. By detecting captions with reference to the position and size expected under such conventions, false detections can be reduced.
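One way to encode such a convention is a check that the caption box lies in one of the four corner zones and has a plausible height. The zone sizes and height limits below are purely hypothetical; the default frame size of 720×480 pixels matches the frame size mentioned for FIG. 8, and boxes use a hypothetical `(top, left, bottom, right)` convention in pixels.

```python
def is_plausible_caption(box, frame_w=720, frame_h=480,
                         zone_w=0.4, zone_h=0.25, min_h=16, max_h=80):
    """Accept a caption box only if it sits in a corner zone and has a caption-like height.

    zone_w/zone_h are fractions of the frame defining each corner zone;
    min_h/max_h bound the box height in pixels. All values are assumptions.
    """
    top, left, bottom, right = box
    height = bottom - top
    if not (min_h <= height <= max_h):
        return False
    zw, zh = frame_w * zone_w, frame_h * zone_h
    in_left = right <= zw
    in_right = left >= frame_w - zw
    in_top = bottom <= zh
    in_bottom = top >= frame_h - zh
    return (in_left or in_right) and (in_top or in_bottom)
```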

A second aspect of the present invention provides a computer program written in a computer-readable format for causing a computer system to execute processing of video content consisting of image frames in time-series order, the processing including the steps of: detecting, in the video content to be processed, a scene change point, which is a point between two image frames at which the scene of one frame differs significantly from that of the other; detecting, in the video content to be processed, a segment corresponding to a plurality of consecutive image frames in which the same fixed caption is displayed in the caption area, by determining, on the basis of the image frames immediately before and after the scene change point detected in the scene change detecting step, whether a caption is displayed in the caption area of the image frames; storing index information indicating the time period corresponding to the segment detected in the segment detecting step; and, when a topic is selected from the index information stored in the storing step, playing back the segment of the video content corresponding to the start time and end time indicated by the index information.

The computer program according to the second aspect of the present invention is a computer program written in a computer-readable format for executing predetermined processing on a computer system. In other words, by installing the computer program according to the second aspect on a computer system, cooperative operations are performed on the computer system, so that the same operations as those of the content processing device according to the first aspect can be achieved.

The present invention provides an excellent content processing device configured to detect topic changes in video content on the basis of captions included in the video content, to segment the video content on the basis of the detected topics, and to perform indexing, as well as a method and a computer program for processing content.

The present invention also provides an excellent content processing device configured to detect topic changes from a relatively small amount of data by using captions included in video content, as well as a method and a computer program for processing content.

For example, according to the present invention, recorded television programs can be segmented by topic. By segmenting television programs by topic and adding indexes, users can watch them efficiently, for example through digest viewing. When playing back recorded content, a user can check the beginning of a topic and, if it does not interest them, skip to the next topic. In addition, editing operations such as saving only selected topics are easy when recorded video content is stored on a DVD.

Other objects and advantages of the present invention will become apparent from the detailed description below with reference to the accompanying drawings.

Brief description of the drawings

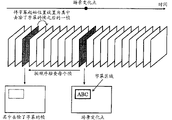

FIG. 1 is a schematic diagram of the functional structure of a video content processing device according to an embodiment of the present invention;

FIG. 2 is a schematic diagram of caption areas included in an example scene of a television program;

FIG. 3 is a flowchart of the topic detection process for detecting segments of video content in which the same fixed caption is displayed;

FIG. 4 illustrates a method of detecting a caption in an average image obtained from the images immediately before and after a scene change point;

FIG. 5 illustrates a method of detecting a caption in an average image obtained from the images immediately before and after a scene change point;

FIG. 6 illustrates a method of detecting a caption in an average image obtained from the images immediately before and after a scene change point;

FIG. 7 illustrates a method of detecting a caption in an average image obtained from the images immediately before and after a scene change point;

FIG. 8 illustrates an example configuration of caption detection areas in an image frame of 720×480 pixels;

FIG. 9 illustrates detection of the start position of a topic from a frame sequence;

FIG. 10 is a flowchart of a process of detecting the start position of a topic from a frame sequence;

FIG. 11 illustrates detection of the end position of a topic from a frame sequence;

FIG. 12 is a flowchart of a process of detecting the end position of a topic from a frame sequence.

Reference signs

10 Video content processing device

11 Image storage unit

12 Scene change detection unit

13 Topic detection unit

14 Index storage unit

15 Playback unit

Description of embodiments

Embodiments of the present invention will be described in detail with reference to the drawings.

FIG. 1 is a schematic diagram of the functional structure of a video content processing device 10 according to an embodiment of the present invention. The video content processing device 10 shown in the figure includes an image storage unit 11, a scene change detection unit 12, a topic detection unit 13, an index storage unit 14, and a playback unit 15.

The image storage unit 11 demodulates and stores broadcast waves, and also stores video content downloaded from information sources via the Internet. The image storage unit 11 may be, for example, a hard disk recorder.

The scene change detection unit 12 retrieves the video content subject to topic detection from the image storage unit 11, tracks the scenes (scenes or sets) included in consecutive images, and detects scene change points at which the scene changes significantly because of a cut between images.

For example, the scene change detection unit 12 may employ the scene change detection method disclosed in Japanese Unexamined Patent Application Publication No. 2004-282318, assigned to the present assignee. More specifically, histograms representing the components of the images in two consecutive fields or frames are generated, and a scene change is detected when the sum of the differences between the histograms exceeds a predetermined threshold. When the histograms are generated, constants are assigned to the corresponding level and its neighboring levels and accumulated, and a new histogram is then obtained by normalization. Using these newly generated histograms, a scene change can be detected between every two images on the screen. As a result, scene changes can be detected accurately even in fading images.
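A simplified sketch of the histogram-based detection described above, for grayscale frames given as flat lists of pixel values. The bin count, the `(1, 2, 1)` weights spread over a bin and its neighbors, and the threshold are assumptions chosen for illustration; the method of Japanese Unexamined Patent Application Publication No. 2004-282318 may differ in its details.

```python
def smoothed_histogram(pixels, bins=16, max_value=256, weights=(1, 2, 1)):
    """Normalised histogram in which each pixel also contributes to neighbouring bins.

    The middle weight goes to the pixel's own bin and the others to the bins
    on either side (a hypothetical choice of constants).
    """
    hist = [0.0] * bins
    bin_width = max_value / bins
    half = len(weights) // 2
    for value in pixels:
        b = min(int(value / bin_width), bins - 1)
        for offset, w in enumerate(weights):
            j = b + offset - half
            if 0 <= j < bins:  # neighbours outside the range are dropped
                hist[j] += w
    total = sum(hist)
    return [h / total for h in hist] if total else hist


def histogram_difference(prev_pixels, cur_pixels, bins=16):
    """Sum of absolute bin-wise differences between two smoothed histograms."""
    h1 = smoothed_histogram(prev_pixels, bins)
    h2 = smoothed_histogram(cur_pixels, bins)
    return sum(abs(a - b) for a, b in zip(h1, h2))


def scene_change_points(frames, threshold=0.5, bins=16):
    """Indices i such that a scene change lies between frame i-1 and frame i."""
    return [i for i in range(1, len(frames))
            if histogram_difference(frames[i - 1], frames[i], bins) > threshold]
```

Spreading each pixel over neighboring bins makes the comparison tolerant of small, gradual brightness shifts such as those in a fade, while an abrupt cut still moves mass between distant bins.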

The topic detection unit 13 detects, in the video content subject to topic detection, segments in which the same fixed caption is displayed, and outputs the detected segments as segments of the television program corresponding to specific topics.

In television programs such as news programs and variety shows, the captions displayed in image frames can serve as important clues for identifying or estimating the topic of the segment in which they are displayed. However, detecting and extracting captions requires a very large amount of computation. Therefore, in this embodiment, segments in which the same fixed caption is displayed are detected on the basis of the scene change points detected in the video content, so as to minimize the number of image frames on which edge detection must be performed. A segment in which the same fixed caption is displayed can be regarded as a segment of the television program corresponding to a specific topic, and can be handled appropriately as a single block when segmenting, indexing, and digest viewing the video content. The topic detection processing is described in detail below.

The index storage unit 14 stores time information about each segment, detected by the topic detection unit 13, in which the same fixed caption is displayed. Table 1 below shows an example structure of the time information stored in the index storage unit 14. The table contains one record per detected segment, and each record holds the title of the topic of the segment, the start time of the segment, and the end time of the segment. The index information may be written in a standard structured description language such as Extensible Markup Language (XML). The title of a topic may be the title of the video content (or television program) or the character information of the displayed caption.

Table 1
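Since the contents of Table 1 are not reproduced here, the following sketch shows one hypothetical XML layout for the index records (title, start time, end time) using Python's standard `xml.etree.ElementTree` module. The element names `index`, `topic`, `title`, `start`, and `end`, the `content` attribute, and the `HH:MM:SS` time format are assumptions, not a format defined by the patent.

```python
import xml.etree.ElementTree as ET


def build_index_xml(content_name, records):
    """Serialise (title, start, end) records for one piece of content as XML text."""
    root = ET.Element("index", {"content": content_name})
    for title, start, end in records:
        topic = ET.SubElement(root, "topic")
        ET.SubElement(topic, "title").text = title
        ET.SubElement(topic, "start").text = start
        ET.SubElement(topic, "end").text = end
    return ET.tostring(root, encoding="unicode")


def parse_index_xml(xml_text):
    """Read the records back as a list of (title, start, end) tuples."""
    root = ET.fromstring(xml_text)
    return [(t.findtext("title"), t.findtext("start"), t.findtext("end"))
            for t in root.findall("topic")]
```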

The playback unit 15 retrieves the video content designated for playback from the image storage unit 11, and decodes and demodulates it to output it as images and sound. In this embodiment, the playback unit 15 retrieves the appropriate index information from the index storage unit 14 on the basis of the content name and links the index information to the video content being played. For example, when a topic is selected from the index information managed by the index storage unit 14, the corresponding video content is retrieved from the image storage unit 11, and the segment from the start time to the end time indicated by the index information is played.
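Selecting a topic and locating its segment for playback could be sketched as below. The index is assumed to be a list of `(title, start, end)` records with times written as `HH:MM:SS` strings; the constant frame rate of 30 frames per second and the helper names are hypothetical. `next_topic` illustrates skipping to the following topic.

```python
def to_seconds(hms):
    """Convert an 'HH:MM:SS' string to seconds."""
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def frame_range_for_topic(index, title, fps=30):
    """Return (first_frame, last_frame) for the first index record whose title matches."""
    for record_title, start, end in index:
        if record_title == title:
            return to_seconds(start) * fps, to_seconds(end) * fps
    raise KeyError(title)


def next_topic(index, title):
    """Title of the record after `title`, or None if it is the last one."""
    titles = [record[0] for record in index]
    pos = titles.index(title)
    return titles[pos + 1] if pos + 1 < len(titles) else None
```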

Next, the theme detection processing performed by the theme detection unit 13 to detect segments of the video content in which the same fixed subtitle is displayed will be described in detail.

In this embodiment, the frames immediately before and after each scene change point detected by the scene change detection unit 12 are used to determine whether a subtitle is displayed. The search for a segment in which the same fixed subtitle is displayed is carried out only when a subtitle is detected at a scene change point, so the edge detection processing needed to extract subtitles is reduced. This lowers the processing load imposed by theme detection.

In television programs of various genres, such as news programs and variety shows, subtitles are displayed to aid understanding, arouse interest, or attract the viewer's attention. In many cases, as shown in FIG. 2, a fixed subtitle is displayed in one of four areas of the screen. Fixed subtitles generally have the following characteristics:

1) They represent the topic currently being broadcast (for example, a headline).

2) They are displayed continuously while the program covers the same topic.

For example, in a news program, the headline of a news item may be displayed continuously while that item is being broadcast. The theme detection unit 13 detects such sections of a program in which a fixed subtitle is displayed and adds an index to each detected section as a segment corresponding to a specific theme. The theme detection unit 13 can also generate a thumbnail of a detected fixed subtitle, or recognize the characters of the displayed subtitle to obtain character information for the title of the theme.

FIG. 3 is a flowchart of the theme detection processing performed by the theme detection unit 13 to detect sections of the video content in which the same fixed subtitle is displayed.

First, the video frame at the first scene change point is retrieved from the video content to be processed (step S1). An average image is generated from the image frames one second before and one second after the scene change point (step S2), and subtitle detection is then performed on the average image (step S3). If a subtitle is displayed continuously across the scene change point, the subtitle portion of the average image remains sharp while the rest of the image is blurred, which improves subtitle detection accuracy. The frames used to generate the average image are not limited to those one second before and after the scene change point; more than two frames may be used, as long as they are taken from points both before and after the scene change point.
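Step S2 can be sketched as a pixel-wise mean of the frames taken around the cut. This is a minimal illustration, assuming grayscale frames stored as nested lists and a known frame rate; `neighbor_indices` and `average_frames` are hypothetical helper names, and a real implementation would work on decoded frames from the stream.

```python
from typing import List, Sequence, Tuple

Frame = List[List[float]]  # grayscale frame as rows of pixel values (assumed layout)


def neighbor_indices(scene_change: int, fps: float, offset_sec: float = 1.0) -> Tuple[int, int]:
    """Frame indices offset_sec before and after a scene change (lower index clamped at 0).

    The caller is assumed to clamp the upper index to the last frame of the content.
    """
    offset = round(fps * offset_sec)
    return max(0, scene_change - offset), scene_change + offset


def average_frames(frames: Sequence[Frame]) -> Frame:
    """Pixel-wise mean of equally sized frames.

    Pixels that stay the same across the cut (a persistent subtitle) keep their
    value; pixels that differ are blended, which blurs the changing background.
    """
    if not frames:
        raise ValueError("need at least one frame")
    n = len(frames)
    h, w = len(frames[0]), len(frames[0][0])
    return [[sum(f[y][x] for f in frames) / n for x in range(w)] for y in range(h)]


before = [[0.0, 255.0]]   # dark background pixel, bright subtitle pixel
after = [[200.0, 255.0]]  # background changed by the cut, subtitle unchanged
avg = average_frames([before, after])
```

In the tiny example the subtitle pixel keeps full contrast (255) while the background pixel becomes an intermediate value, which is the effect the average image relies on.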

FIGS. 4 to 6 illustrate how a subtitle is detected from an average image generated from image frames before and after a scene change point. Because the scene changes substantially between the two frames, the frame obtained by averaging them is blurred, as if the two frames had been alpha-blended. If the same fixed subtitle is displayed continuously before and after the scene change point, as shown in FIG. 5, the subtitle portion of the average image remains sharp and stands out from the blurred background, so the subtitle can be extracted with high accuracy by edge detection. If a subtitle is displayed in only one of the frames before and after the scene change point, as shown in FIG. 6 (or if the subtitle in one frame differs from that in the other), the subtitle portion of the average image is blurred in the same way as the background. In that case, the subtitle is not falsely detected.

Subtitles are usually brighter than the background, so a method that detects subtitles using edge information can be employed. For example, the input image is converted to YUV, and edge computation is then performed on the Y (luminance) component. For the edge computation, the subtitle information processing method described in Japanese Unexamined Patent Application Publication No. 2004-343352, assigned to the present assignee, or the artificial image extraction method described in Japanese Unexamined Patent Application No. 2004-318256 may be used.
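The luminance-plus-edge idea can be illustrated with a generic stand-in. This sketch does not reproduce the methods of the cited Japanese applications; it assumes RGB frames as nested lists of `(r, g, b)` tuples, uses the ITU-R BT.601 luma weights for the Y component, and uses a simple forward-difference gradient as the edge measure.

```python
from typing import List, Tuple

RGBFrame = List[List[Tuple[float, float, float]]]
Gray = List[List[float]]


def luma(rgb_frame: RGBFrame) -> Gray:
    """Y component of YUV using ITU-R BT.601 weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in rgb_frame]


def edge_magnitude(gray: Gray) -> Gray:
    """Gradient magnitude |dY/dx| + |dY/dy| from forward differences.

    The last row and last column have no forward neighbour and are left at 0.
    """
    h, w = len(gray), len(gray[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h - 1):
        for x in range(w - 1):
            gx = gray[y][x + 1] - gray[y][x]
            gy = gray[y + 1][x] - gray[y][x]
            out[y][x] = abs(gx) + abs(gy)
    return out
```

Bright subtitle strokes on a darker background produce large values at the stroke boundaries; a Sobel or Canny operator would be a common, more robust substitute.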

If a subtitle is detected in the average image (step S4), a rectangular region that satisfies the following conditions is determined to be an actual subtitle:

1) The region is larger than a predetermined size (for example, larger than 80 × 30 pixels).

2) The region does not span more than one subtitle area (see FIG. 2).

The positions at which subtitles appear in an image frame, and their sizes, follow conventions generally established by broadcasters. Detecting subtitles with reference to these conventional positions and sizes reduces false detections. FIG. 8 shows an example layout of the subtitle detection areas in an image frame of 720 × 480 pixels.
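The two acceptance conditions can be sketched as a rectangle check. The four corner areas below are hypothetical placeholders for a 720 × 480 frame, since the actual layout of FIG. 8 is not reproduced in this text. Reading "larger than 80 × 30 pixels" as an area threshold, and requiring exactly one overlapping area rather than "at most one", are both assumptions.

```python
from typing import NamedTuple, Sequence


class Rect(NamedTuple):
    """Axis-aligned rectangle, half-open: x in [x0, x1), y in [y0, y1)."""
    x0: int
    y0: int
    x1: int
    y1: int

    def area(self) -> int:
        return max(0, self.x1 - self.x0) * max(0, self.y1 - self.y0)

    def intersects(self, other: "Rect") -> bool:
        return (self.x0 < other.x1 and other.x0 < self.x1
                and self.y0 < other.y1 and other.y0 < self.y1)


# Hypothetical corner areas for a 720x480 frame (not the layout of FIG. 8).
CORNER_AREAS_720x480 = (
    Rect(0, 0, 360, 120),      # top-left
    Rect(360, 0, 720, 120),    # top-right
    Rect(0, 360, 360, 480),    # bottom-left
    Rect(360, 360, 720, 480),  # bottom-right
)


def is_plausible_subtitle(candidate: Rect,
                          areas: Sequence[Rect] = CORNER_AREAS_720x480,
                          min_area: int = 80 * 30) -> bool:
    """Condition 1: area larger than min_area.

    Condition 2: overlaps no more than one subtitle area; additionally
    requiring at least one overlap (exactly one) is an assumption.
    """
    overlaps = sum(1 for a in areas if candidate.intersects(a))
    return candidate.area() > min_area and overlaps == 1
```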

When a subtitle is detected, its subtitle area is compared, one frame at a time, with the same area in each image frame preceding the scene change point. The frame immediately following the frame in which the subtitle disappears from the subtitle area is taken as the start point of the segment corresponding to the theme (step S5).

FIG. 9 illustrates how the start position of a theme is detected from the frame sequence in step S5. As shown, the subtitle area is compared frame by frame, moving toward earlier frames from the scene change point at which the subtitle was detected in step S3. When a frame in which the subtitle has disappeared from the subtitle area is found, the frame immediately after it is taken as the start position of the theme.

FIG. 10 is a flowchart of the process of detecting the start position of a theme from the frame sequence. First, if there is a frame preceding the current frame position (step S21), that frame is obtained (step S22), and its subtitle area is compared with the subtitle area at the scene change point (step S23). If the subtitle area has not changed ("No" in step S24), the subtitle is still being displayed, and the process returns to step S21 to examine the next earlier frame. If the subtitle area has changed ("Yes" in step S24), the subtitle has disappeared; the frame immediately following this frame is output as the start position of the theme, and the routine ends.
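The backward scan of FIG. 10 can be sketched as a loop over frame indices. The comparison itself is abstracted as a caller-supplied predicate `changed(i)` (a hypothetical name), which could be the average-color test or the edge-image test described later. Returning frame 0 when no earlier frame differs is an assumption; the flowchart as described does not cover that case.

```python
from typing import Callable


def find_theme_start(sc: int, changed: Callable[[int], bool]) -> int:
    """Walk backward from the scene change frame `sc` (FIG. 10, steps S21 to S24).

    `changed(i)` returns True when the subtitle area of frame i differs from
    the subtitle area at `sc`.
    """
    i = sc
    while i - 1 >= 0:      # S21: is there a frame before the current position?
        i -= 1             # S22: obtain that frame
        if changed(i):     # S23/S24: has the subtitle area changed?
            return i + 1   # subtitle gone here, so the next frame starts the theme
    return 0               # no change found back to the first frame (assumed handling)
```

For example, if a subtitle is visible in frames 5 through 20 and the scene change is at frame 12, `find_theme_start(12, lambda i: not 5 <= i <= 20)` stops at frame 4 and returns 5.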

Similarly, the subtitle area of the detected subtitle is compared, one frame at a time, with the same area in each image frame following the scene change point. The frame immediately preceding the frame in which the subtitle disappears from the subtitle area is taken as the end point of the segment corresponding to the theme (step S6).

FIG. 11 illustrates how the end position of a theme is detected from the frame sequence. As shown, the subtitle area is compared frame by frame, moving toward later frames from the scene change point at which the subtitle was detected in step S3. When a frame in which the subtitle has disappeared from the subtitle area is found, the frame immediately before it is taken as the end position of the theme.

FIG. 12 is a flowchart of the process of detecting the end position of a theme from the frame sequence. First, if there is a frame following the current frame position (step S31), that frame is obtained (step S32), and its subtitle area is compared with the subtitle area at the scene change point (step S33). If the subtitle area has not changed ("No" in step S34), the subtitle is still being displayed, and the process returns to step S31 to examine the next later frame. If the subtitle area has changed ("Yes" in step S34), the subtitle has disappeared; the frame immediately preceding this frame is output as the end position of the theme, and the routine ends.
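The forward scan of FIG. 12 mirrors the backward one. As before, `changed(i)` is a hypothetical caller-supplied comparison against the subtitle area at the scene change point, and returning `last_frame` when the subtitle never disappears is an assumed boundary rule.

```python
from typing import Callable


def find_theme_end(sc: int, last_frame: int, changed: Callable[[int], bool]) -> int:
    """Walk forward from the scene change frame `sc` (FIG. 12, steps S31 to S34)."""
    i = sc
    while i + 1 <= last_frame:  # S31: is there a frame after the current position?
        i += 1                  # S32: obtain that frame
        if changed(i):          # S33/S34: has the subtitle area changed?
            return i - 1        # subtitle gone here, so the previous frame ends the theme
    return last_frame           # subtitle lasts to the end of the content (assumed handling)
```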

When the position at which the subtitle disappears is detected as shown in FIGS. 9 and 11, comparing the subtitle areas of every frame, one by one, backward and forward from the scene change point gives the exact position. To reduce the processing load, the approximate position at which the subtitle disappears can instead be detected as follows:

1) Compare only the I-pictures (intra-coded pictures) of an encoded stream, such as MPEG, in which each I-picture is followed by a number of P-pictures (forward inter-frame predictive coded pictures).

2) Compare image frames at one-second intervals.
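Both shortcuts amount to comparing only every `step`-th frame. The sketch below assumes `step` equals the frame rate for one-second sampling, or the GOP length when only I-pictures are compared (assuming a fixed GOP). It returns the last unchanged and first changed sampled frames, which bracket the approximate disappearance point. `coarse_disappearance` and `changed` are hypothetical names.

```python
from typing import Callable, Optional, Tuple


def coarse_disappearance(sc: int, limit: int, step: int,
                         changed: Callable[[int], bool]) -> Tuple[int, Optional[int]]:
    """Compare only every `step`-th frame from `sc` toward `limit`.

    step > 0 scans forward (end search), step < 0 scans backward (start search).
    Returns (last_unchanged, first_changed); first_changed is None if no
    sampled frame differs before `limit` is passed.
    """
    if step == 0:
        raise ValueError("step must be non-zero")

    def in_range(i: int) -> bool:
        return i <= limit if step > 0 else i >= limit

    last_ok = sc
    i = sc + step
    while in_range(i):
        if changed(i):
            return last_ok, i
        last_ok = i
        i += step
    return last_ok, None
```

If exact boundaries are needed later, a frame-by-frame scan can be confined to the bracket that this coarse pass returns.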

Whether a subtitle has disappeared from the subtitle area can be determined, for example, by computing the average color (the mean of the R, G, and B components) of the subtitle area in each of the frames being compared and checking whether the Euclidean distance between the average colors exceeds a predetermined threshold. This determination requires only a small processing load. Specifically, the subtitle is determined to have disappeared at the n-th image frame before or after the scene change point when equation (1) below is satisfied. Here, $R_{0,avg}$, $G_{0,avg}$, and $B_{0,avg}$ denote the average color of the subtitle area in the image frame at the scene change point; $R_{n,avg}$, $G_{n,avg}$, and $B_{n,avg}$ denote the average color of the subtitle area in the n-th image frame from the scene change point; and $T$ is the threshold, for example 60.

$$\sqrt{(R_{0,avg}-R_{n,avg})^2+(G_{0,avg}-G_{n,avg})^2+(B_{0,avg}-B_{n,avg})^2} > T \qquad (1)$$
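The average-color test can be sketched as follows, assuming each subtitle area is given as rows of `(r, g, b)` tuples. The square-root (true Euclidean) form of the distance and the strict `>` comparison are assumptions about how the threshold of 60 is applied. `math.dist` requires Python 3.8 or later.

```python
import math
from typing import List, Tuple

Region = List[List[Tuple[float, float, float]]]


def average_color(region: Region) -> Tuple[float, float, float]:
    """Mean (R, G, B) over all pixels of a subtitle area."""
    pixels = [p for row in region for p in row]
    n = len(pixels)
    r, g, b = (sum(p[c] for p in pixels) / n for c in range(3))
    return r, g, b


def subtitle_disappeared(region_sc: Region, region_n: Region, threshold: float = 60.0) -> bool:
    """True when the Euclidean distance between the two average colours exceeds the threshold."""
    return math.dist(average_color(region_sc), average_color(region_n)) > threshold
```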

When a fixed subtitle disappears within a segment in which no scene change occurs, the average image contains a sharp background and a blurred subtitle, as shown in FIG. 7. This is the opposite of the result shown in FIG. 5. The same applies when a fixed subtitle appears within a segment in which no scene change occurs. To detect the point at which a subtitle disappears more precisely, the scene change detection method disclosed in Japanese Unexamined Patent Application Publication No. 2004-282318 may be applied to the subtitle area.

When the average color is computed over the subtitle area, however, the background colors in the area, as opposed to the subtitle itself, have a large influence, which lowers detection accuracy. As an alternative, a method that uses edge information to determine whether a subtitle is present is proposed. Edge images are computed for the subtitle areas of the frames being compared, and the presence of the subtitle is determined from a comparison of these edge images. More specifically, if the number of edge pixels detected in the subtitle area decreases significantly, the subtitle can be determined to have disappeared. Conversely, if the number of edge pixels changes only slightly, the subtitle can be determined to be still displayed.

For example, let SC denote a scene change point, Rect the subtitle area at SC, and EdgeImg1 the edge image of Rect at SC. EdgeImgN denotes the edge image of the subtitle area Rect in the n-th frame counted from SC (toward the beginning or the end of the time axis). The edge images are binarized with a predetermined threshold (for example, 128). In step S23 of the flowchart in FIG. 10 and step S33 of the flowchart in FIG. 12, the number of edge points (pixels) in EdgeImg1 is compared with the number in EdgeImgN. If the number of edge points decreases significantly (for example, to one third or less), the subtitle can be judged to have disappeared. Conversely, if the number of edge points increases significantly, a subtitle can be judged to have appeared.
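The edge-count comparison can be sketched as follows. The threshold of 128 and the one-third ratio come from the text. Treating values equal to the threshold as edges, and using the mirror-image rule (the count at SC is one third or less of the count in frame N) for "significant increase", are assumptions. Edge images are assumed to be nested lists of edge magnitudes.

```python
from typing import List

EdgeImage = List[List[float]]
Binary = List[List[int]]


def binarize(edge_img: EdgeImage, threshold: float = 128) -> Binary:
    """1 where the edge magnitude reaches the threshold, else 0 (>= is an assumption)."""
    return [[1 if v >= threshold else 0 for v in row] for row in edge_img]


def count_edge_points(binary: Binary) -> int:
    return sum(sum(row) for row in binary)


def compare_edge_counts(edge_img1: EdgeImage, edge_img_n: EdgeImage,
                        ratio: float = 1 / 3, threshold: float = 128) -> str:
    """Classify frame N against the scene-change frame by edge-point counts."""
    c1 = count_edge_points(binarize(edge_img1, threshold))
    cn = count_edge_points(binarize(edge_img_n, threshold))
    if c1 == 0 and cn == 0:
        return "unchanged"
    if cn <= c1 * ratio:
        return "disappeared"
    if c1 <= cn * ratio:  # symmetric "significant increase" rule (assumed)
        return "appeared"
    return "unchanged"
```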

If the numbers of edge points in EdgeImg1 and EdgeImgN do not differ much, the subtitle can be judged to be displayed continuously. However, the subtitle may have changed even though the number of edge points has not changed greatly. Therefore, the pixel-wise logical AND of EdgeImg1 and EdgeImgN is computed. If the number of edge points in the resulting image is significantly reduced (for example, to one third or less), the subtitle is judged to have changed; that is, this is a start or end point of the subtitle. This improves detection accuracy.
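The logical-AND check can be sketched on already binarized edge images (0/1 nested lists). Measuring the "one third or less" reduction against the edge-point count of EdgeImg1 is an assumption, since the text does not name the reference count.

```python
from typing import List

Binary = List[List[int]]


def subtitle_changed(bin_img1: Binary, bin_img_n: Binary, ratio: float = 1 / 3) -> bool:
    """Pixel-wise AND of two binarized edge images.

    If the overlap keeps only `ratio` or less of EdgeImg1's edge points, the
    subtitle is treated as changed, even when the two edge counts are similar.
    """
    c1 = sum(sum(row) for row in bin_img1)
    overlap = sum(a & b
                  for row1, row_n in zip(bin_img1, bin_img_n)
                  for a, b in zip(row1, row_n))
    return c1 > 0 and overlap <= c1 * ratio
```

Two different subtitles with similar amounts of text can have nearly equal edge counts, but their strokes rarely coincide pixel for pixel, so the AND image exposes the change.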

Next, the subtitle start point determined in step S5 is subtracted from the subtitle end point determined in step S6 to obtain the subtitle display time. The section is recognized as a segment corresponding to a specific theme only if the subtitle has been displayed for at least a predetermined length of time (step S7), which reduces the likelihood of false detection. Genre information on the television program can also be obtained from the electronic program guide (EPG), and the display-time threshold can be changed according to the genre. For example, because subtitles are displayed for relatively long periods in news programs, the threshold may be set to 30 seconds for news programs and to 10 seconds for variety shows.
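Step S7 with a genre-dependent threshold can be sketched as a lookup. Only the 30-second (news) and 10-second (variety) values come from the text; the genre keys and the fallback value for other genres are hypothetical. Times are in seconds, so frame indices would first be divided by the frame rate.

```python
from typing import Optional

# Only the news and variety values come from the text; the keys are hypothetical labels.
MIN_DISPLAY_SEC = {"news": 30.0, "variety": 10.0}
DEFAULT_MIN_DISPLAY_SEC = 10.0  # assumed fallback for unknown or missing genres


def is_theme_segment(start_sec: float, end_sec: float, genre: Optional[str] = None) -> bool:
    """Accept a subtitle section as a theme segment if it lasted long enough."""
    duration = end_sec - start_sec
    return duration >= MIN_DISPLAY_SEC.get(genre, DEFAULT_MIN_DISPLAY_SEC)
```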

The subtitle start point and end point of each section identified in step S7 as a segment corresponding to a specific theme are stored in the index storage unit 14 (step S8).

The theme detection unit 13 queries the scene change detection unit 12 to check whether the video content contains a scene change point after the subtitle end point detected in step S6 (step S9). If no scene change point is found after the subtitle end point, the entire processing routine ends. If one is found, the frame at the next scene change point is retrieved (step S10), the process returns to step S2, and the theme detection process described above is repeated.

If, in step S4, no subtitle is detected at the scene change point being processed, the theme detection unit 13 queries the scene change detection unit 12 to check whether the video content contains a subsequent scene change point (step S11). If it does not, the entire processing routine ends. If it does, the frame at the next scene change point is retrieved (step S10), the process returns to step S2, and the theme detection process described above is repeated.
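The overall loop of FIG. 3 can be sketched as a driver over sorted scene change frame indices, with each step passed in as a callback. All names are hypothetical. Resuming after the end point even when step S7 rejects a section is an assumption, since the text only describes the S9 path after a stored segment. The `max(k + 1, ...)` guard ensures the loop always advances.

```python
from bisect import bisect_right
from typing import Callable, List, Tuple


def detect_theme_segments(
    scene_changes: List[int],
    has_subtitle: Callable[[int], bool],      # S2 to S4: average image + subtitle detection
    find_start: Callable[[int], int],         # S5
    find_end: Callable[[int], int],           # S6
    long_enough: Callable[[int, int], bool],  # S7
) -> List[Tuple[int, int]]:
    """Return (start, end) frame pairs of segments showing one fixed subtitle."""
    points = sorted(scene_changes)
    segments: List[Tuple[int, int]] = []
    k = 0
    while k < len(points):
        sc = points[k]                        # S1 / S10: frame at the scene change
        if has_subtitle(sc):
            start, end = find_start(sc), find_end(sc)
            if long_enough(start, end):
                segments.append((start, end))  # S8: store in the index
            k = max(k + 1, bisect_right(points, end))  # S9: next scene change after end
        else:
            k += 1                             # S11: next scene change
    return segments
```

Scene change points that fall inside an already detected segment are skipped, which is where the reduction in edge-detection work comes from.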

In this embodiment, the subtitle detection process assumes that the subtitle areas are located at the four corners of the television screen, as shown in FIG. 2. However, many television programs continuously display the current time in one of these areas. To prevent false detection, the character information of a detected subtitle may be obtained, and if its characters are recognized as numerals, the detected subtitle may be determined not to be an actual subtitle.
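Once character recognition has produced text for a detected subtitle, the clock filter reduces to a character check. This sketch assumes the recognizer returns a string; the set of separators accepted alongside digits (ASCII and full-width colon, period, space) is an assumption. `str.isdigit` also accepts full-width digits.

```python
def looks_like_clock(recognized_text: str) -> bool:
    """True if the recognized subtitle text consists only of digits and clock separators."""
    text = recognized_text.strip()
    allowed_separators = set(":\uff1a. ")  # ASCII colon, full-width colon, period, space (assumed)
    return any(ch.isdigit() for ch in text) and all(
        ch.isdigit() or ch in allowed_separators for ch in text
    )
```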

In some cases, a subtitle disappears from the screen and the same subtitle reappears a few seconds later. In such cases, if the following conditions are satisfied, the display is treated as continuous (that is, the same theme continues). This prevents an extra index from being generated even though the subtitle display was interrupted:

1) When the subtitle areas before the subtitle disappears and after it reappears are compared using equation (1), their average colors match (the distance does not exceed the threshold).

2) In the subtitle areas before the subtitle disappears and after it reappears, the edge images have substantially the same number of pixels, and the pixel-wise logical AND of the two edge images also has substantially the same number of pixels.

3) The subtitle is absent for no longer than a threshold time (for example, 5 seconds).

Genre information for the television program may also be obtained from the EPG, so that the interruption-time threshold can be changed according to the genre (for example, news program or variety show).
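Merging interrupted displays can be sketched as a pass over time-sorted segments. Conditions 1) and 2) are abstracted into a caller-supplied `same_subtitle` predicate (a hypothetical name), and condition 3) is the `max_gap_sec` limit, which a caller could choose per genre. The text gives only the 5-second example, so no per-genre values are filled in here.

```python
from typing import Callable, List, Tuple

Segment = Tuple[float, float, object]  # (start_sec, end_sec, subtitle descriptor)


def merge_interrupted(segments: List[Segment],
                      same_subtitle: Callable[[object, object], bool],
                      max_gap_sec: float = 5.0) -> List[Segment]:
    """Join consecutive segments showing the same subtitle across a short gap."""
    merged: List[Segment] = []
    for start, end, desc in sorted(segments, key=lambda s: s[0]):
        if merged:
            p_start, p_end, p_desc = merged[-1]
            if start - p_end <= max_gap_sec and same_subtitle(p_desc, desc):
                merged[-1] = (p_start, max(p_end, end), p_desc)  # continue the theme
                continue
        merged.append((start, end, desc))
    return merged
```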

Industrial Applicability

The present invention has been described in detail above with reference to specific embodiments. However, it is obvious that those skilled in the art can modify or alter these embodiments without departing from the scope of the present invention.

This specification describes the case in which indexing is performed on video content obtained mainly by recording television programs, but the gist of the present invention is not limited to this case. The content processing device according to the present invention can also suitably index various kinds of video content that are produced and edited for purposes other than television broadcasting, as long as they include subtitle areas representing topics.

In short, the present invention has been disclosed by way of example, and the contents of this specification should not be interpreted restrictively. The gist of the present invention should be determined with reference to the claims.

Claims (21)

Applications Claiming Priority (3)

| Application Number | Priority Date | Filing Date | Title |
|---|---|---|---|
| JP153419/2005 | 2005-05-26 | | |
| JP2005153419 | 2005-05-26 | | |
| JP108310/2006 | 2006-04-11 | | |

Publications (2)

| Publication Number | Publication Date |
|---|---|
| CN1993989A (en) | 2007-07-04 |
| CN100551014C | 2009-10-14 |

Family ID: 38214988

Family Applications (1)

| Application Number | Title | Priority Date | Filing Date |
|---|---|---|---|
| CNB200680000555XA (CN100551014C, Expired - Fee Related) | Content processing device, method for processing content | 2005-05-26 | 2006-05-10 |

Country Status (1)

| Country | Link |
|---|---|
| CN (1) | CN100551014C (en) |

Families Citing this family (8)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JP5347279B2 (en) * | 2008-02-13 | 2013-11-20 | ソニー株式会社 | Image display device |
| CN101650958B (en) * | 2009-07-23 | 2012-05-30 | 中国科学院声学研究所 | Method for extracting scene fragments from movie videos and method for establishing indexes thereof |
| CN106020625A (en) * | 2011-08-31 | 2016-10-12 | 观致汽车有限公司 | Interactive system and method for controlling vehicle application through same |
| CN106658196A (en) * | 2017-01-11 | 2017-05-10 | 北京小度互娱科技有限公司 | Method and device for embedding advertisement based on video embedded captions |
| CN111091811B (en) * | 2019-11-22 | 2022-04-22 | 珠海格力电器股份有限公司 | Method and device for processing voice training data and storage medium |
| CN113342240B (en) * | 2021-05-31 | 2024-01-26 | 深圳市捷视飞通科技股份有限公司 | Courseware switching method and device, computer equipment and storage medium |
| WO2023019510A1 (en) * | 2021-08-19 | 2023-02-23 | 浙江吉利控股集团有限公司 | Data indexing method, apparatus and device, and storage medium |
| CN116366884A (en) * | 2023-03-29 | 2023-06-30 | 平安银行股份有限公司 | Video narration processing method, video narration processing device and computer equipment |

Citations (2)

| Publication number | Priority date | Publication date | Assignee | Title |
|---|---|---|---|---|
| JP2003298981A (en) * | 2002-04-03 | 2003-10-17 | Oojisu Soken:Kk | Digest image generating apparatus, digest image generating method, digest image generating program, and computer-readable storage medium for storing the digest image generating program |
| CN1588991A (en) * | 2004-09-13 | 2005-03-02 | 西安交通大学 | Video frequency image self adaption detail intensifying method for digital TV post processing technology |

2006

- 2006-05-10: CN application CNB200680000555XA filed (patent CN100551014C, Expired - Fee Related)

Similar Documents

| Publication | Title |
|---|---|
| US7894709B2 (en) | Video abstracting |
| KR101237229B1 (en) | Contents processing device and contents processing method |
| US6597859B1 (en) | Method and apparatus for abstracting video data |
| US8214368B2 (en) | Device, method, and computer-readable recording medium for notifying content scene appearance |
| US7676821B2 (en) | Method and related system for detecting advertising sections of video signal by integrating results based on different detecting rules |
| KR20060027826A (en) | Video processing apparatus, integrated circuit for video processing apparatus, video processing method, and video processing program |
| US7149365B2 (en) | Image information summary apparatus, image information summary method and image information summary processing program |
| US20100259688A1 (en) | method of determining a starting point of a semantic unit in an audiovisual signal |
| CN101568968B (en) | Method for creating a new summary of an audiovisual document that already includes a summary and reports and a receiver that can implement said method |
| KR100659882B1 (en) | Broadcast recording device and broadcast search device of digital broadcasting system |
| US20090196569A1 (en) | Video trailer |
| CN100551014C (en) | Content processing device, method for processing content |
| US20050264703A1 (en) | Moving image processing apparatus and method |
| JP4702577B2 (en) | Content reproduction order determination system, method and program thereof |
| JP2000023062A (en) | Digest creation system |
| CN101799823A (en) | Contents processing apparatus and method |
| JP2007266838A (en) | RECORDING / REPRODUCING DEVICE, RECORDING / REPRODUCING METHOD, AND RECORDING MEDIUM CONTAINING RECORDING / REPRODUCING PROGRAM |
| KR101401974B1 (en) | Method and apparatus for browsing recorded news programs |
| KR20060102639A (en) | Video playback system and method |
| WO2004047109A1 (en) | Video abstracting |
| JP2008085700A (en) | Video reproducing unit and program for reproduction |

Legal Events

| Code | Title | Description |
|---|---|---|
| C06 | Publication | |
| PB01 | Publication | |
| C10 | Entry into substantive examination | |
| SE01 | Entry into force of request for substantive examination | |
| C14 | Grant of patent or utility model | |
| GR01 | Patent grant | |
| CF01 | Termination of patent right due to non-payment of annual fee | Granted publication date: 20091014; Termination date: 20150510 |
| EXPY | Termination of patent right or utility model | |